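This post started from a single problem, which led to a whole chain of investigation.

The problem: the data scraped by Scrapy was already printed to the console, so why was nothing showing up in the database?

Here is what went wrong along the way. As usual, most coding problems come down to not being fluent enough with your own code.

The circled parts must not be changed arbitrarily when writing a pipeline (the pipelines for all three databases share the same structure).

Answer: my mistake was absent-mindedly typing process_spider where I meant process_item, so the data never made it into the database. I asked around and tried all sorts of fixes, none of which worked, and only found the typo when I finally went back and checked the code.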
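
To make the failure mode concrete: Scrapy finds pipeline hooks by method name, so a method called process_spider is just an ordinary method that nothing ever calls, which matches the silent failure described above. The sketch below is not Scrapy code. `has_item_hook` is a hypothetical helper (not part of Scrapy) that only checks whether a class defines the hook name a pipeline needs.

```python
class BrokenPipeline:
    # Typo: nothing in Scrapy calls a method with this name.
    def process_spider(self, item, spider):
        return item


class FixedPipeline:
    # Correct hook name: called once for every scraped item.
    def process_item(self, item, spider):
        return item


def has_item_hook(pipeline_cls):
    """Hypothetical check: does the class define a callable process_item?"""
    return callable(getattr(pipeline_cls, "process_item", None))


for cls in (BrokenPipeline, FixedPipeline):
    print(cls.__name__, has_item_hook(cls))
```

A check like this could be run in a quick unit test over every class listed in ITEM_PIPELINES, so a misspelled hook is caught before a crawl.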

The complete, correct code is below.

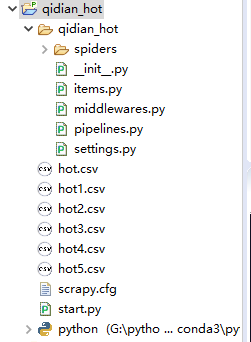

Project package structure:

items.py

import scrapy


class QidianHotItem(scrapy.Item):
    # define the fields for your item here like:
    name = scrapy.Field()     # book title
    author = scrapy.Field()   # author
    type = scrapy.Field()     # genre
    status = scrapy.Field()   # current status of the novel (ongoing or completed)
    info = scrapy.Field()     # synopsis
    up_date = scrapy.Field()  # latest update

qidian_hot_spider.py

# coding: utf-8
# Scrape novel information from Qidian (起点中文网)
from scrapy import Request
from scrapy.spiders import Spider
from qidian_hot.items import QidianHotItem
from scrapy.loader import ItemLoader


class HotSaleSpider(Spider):
    name = "hot"  # spider name

    # Browser disguise: the User-Agent is set in settings.py
    def start_requests(self):
        url = "https://www.qidian.com/rank/hotsales?style=1&page=1"
        self.current_page = 1
        # No callback is given, so the response goes to parse() by default
        yield Request(url)
        # Request(url, callback, method, headers, body, cookies, meta, encoding, priority, dont_filter, errback, flags)

    def parse(self, response):  # parse the data
        # Locate every book block with XPath
        list_selector = response.xpath("//div[@class='book-mid-info']")
        for one_selector in list_selector:
            # book title
            name = one_selector.xpath("h4/a/text()").extract()[0]
            # author
            author = one_selector.xpath("p[1]/a[1]/text()").extract()[0]
            # genre (local name avoids shadowing the built-in type)
            book_type = one_selector.xpath("p[1]/a[2]/text()").extract()[0]
            # status
            status = one_selector.xpath("p[1]/span/text()").extract()[0]
            # synopsis
            info = one_selector.xpath("p[2]/text()").extract()[0]
            # latest update
            up_date = one_selector.xpath("p[3]/a/text()").extract()[0]
            # fill in the item
            item = QidianHotItem()
            item["name"] = name
            item["author"] = author
            item["type"] = book_type
            item["status"] = status
            item["info"] = info
            item["up_date"] = up_date
            yield item
        # fetch the next page (stops after page 2)
        self.current_page += 1
        if self.current_page <= 2:
            next_url = "https://www.qidian.com/rank/hotsales?style=1&page=%d" % self.current_page
            yield Request(next_url)
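
One fragile spot in the spider is `.extract()[0]`: `extract()` returns a list, and indexing an empty list raises IndexError, so a single book entry with a missing field stops the parsing of that page. Scrapy selectors also provide `extract_first()`, which returns None instead of raising. The sketch below shows the same idea in plain Python; `first_or_default` is a hypothetical helper and the sample values are made up.

```python
def first_or_default(values, default=""):
    """Return the first element of a list, or `default` if the list is empty."""
    return values[0] if values else default


# What extract() might return when the XPath matches, and when it does not
matched = ["连载"]
unmatched = []

print(first_or_default(matched))
print(first_or_default(unmatched, default="unknown"))
```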

settings.py

USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36"

ROBOTSTXT_OBEY = False  # do not obey robots.txt rules

# Pipelines are disabled by default. The number (e.g. 300) sets the execution
# order when several pipelines are enabled: lower values run first.
ITEM_PIPELINES = {
    'qidian_hot.pipelines.QidianHotPipeline': 300,
    # 'qidian_hot.pipelines.MongoDB_Spider': 400,  # MongoDB pipeline
    'qidian_hot.pipelines.Redis_Spider': 400,  # Redis pipeline
    # 'qidian_hot.pipelines.MySQLPipeline': 500,  # MySQL pipeline
}
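
As a rough illustration of what the numbers in ITEM_PIPELINES mean, the sketch below sorts the enabled entries by their value, which is the order in which each item passes through them (lowest first). `run_order` is a hypothetical helper for illustration, not a Scrapy function.

```python
ITEM_PIPELINES = {
    "qidian_hot.pipelines.Redis_Spider": 400,
    "qidian_hot.pipelines.QidianHotPipeline": 300,
}


def run_order(pipelines):
    """Return pipeline paths sorted by their order value, lowest first."""
    return [path for path, _ in sorted(pipelines.items(), key=lambda kv: kv[1])]


for path in run_order(ITEM_PIPELINES):
    print(path)
```

Because each pipeline receives whatever the previous one returned from process_item, a pipeline that forgets to return the item affects every pipeline with a higher number.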

The connection settings for the three databases also go in settings.py:

# MySQL
MYSQL_DB_NAME = "hot_qidian"
MYSQL_HOST = "localhost"
MYSQL_USER = "root"
MYSQL_PASSWORD = "123456"

# MongoDB
MONGODB_HOST = "127.0.0.1"
MONGODB_PORT = 27017
MONGODB_NAME = "qidian"
MONGODB_COLLECTION = "hot"

# Redis
REDIS_HOST = "127.0.0.1"
REDIS_PORT = 6379
REDIS_DB_INDEX = 1
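
The pipelines read these values with `spider.settings.get(key, default)`. One thing to watch: a misspelled key does not raise an error, it quietly returns the default. The sketch below uses a plain dict as a stand-in for the Scrapy settings object (an assumption made so it runs without Scrapy); the user name and the misspelled key are made-up examples.

```python
# Plain dict standing in for spider.settings (assumption for illustration)
settings = {"MYSQL_USER": "scrapy_user"}

correct = settings.get("MYSQL_USER", "root")
misspelled = settings.get("MYSLQ_USER", "root")  # typo in the key name

print(correct)     # value from the settings
print(misspelled)  # silently falls back to the default
```

If the default happens to match the real value, the typo goes unnoticed until the setting is changed, so it is worth double-checking key names against settings.py.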

1. Using MySQL

import MySQLdb


class MySQLPipeline(object):
    # Called once, before the spider starts
    def open_spider(self, spider):
        db_name = spider.settings.get("MYSQL_DB_NAME", "hot_qidian")
        host = spider.settings.get("MYSQL_HOST", "localhost")
        user = spider.settings.get("MYSQL_USER", "root")
        password = spider.settings.get("MYSQL_PASSWORD", "123456")
        # Connect to the database
        self.db_conn = MySQLdb.connect(db=db_name,
                                       host=host,
                                       user=user,
                                       password=password,
                                       charset="utf8")
        self.db_cursor = self.db_conn.cursor()  # get a cursor

    # Called for every item
    def process_item(self, item, spider):
        values = (item["name"], item["author"], item["type"], item["status"], item["up_date"])
        # Parameterized SQL statement
        sql = 'insert into hot(name,author,type,status,up_date) values(%s,%s,%s,%s,%s)'
        self.db_cursor.execute(sql, values)
        return item

    # Called once, when the spider finishes
    def close_spider(self, spider):
        self.db_conn.commit()  # commit the inserted rows
        self.db_cursor.close()
        self.db_conn.close()

This one worked on the first try.
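
To try the open_spider / process_item / close_spider lifecycle without a MySQL server, here is a minimal sketch that swaps in the standard library's sqlite3 with an in-memory database. It is a stand-in, not the MySQL pipeline itself: the table definition is an assumption (the post never shows the real schema), the sample item is placeholder data, and sqlite3 uses `?` placeholders where MySQLdb uses `%s`.

```python
import sqlite3


class SQLitePipeline:
    """Same three hooks as the MySQL pipeline, backed by in-memory SQLite."""

    def open_spider(self, spider):
        self.db_conn = sqlite3.connect(":memory:")
        self.db_cursor = self.db_conn.cursor()
        # Assumed schema: one text column per stored field
        self.db_cursor.execute(
            "create table hot(name text, author text, type text, status text, up_date text)"
        )

    def process_item(self, item, spider):
        values = (item["name"], item["author"], item["type"], item["status"], item["up_date"])
        self.db_cursor.execute(
            "insert into hot(name, author, type, status, up_date) values(?,?,?,?,?)", values
        )
        return item

    def close_spider(self, spider):
        self.db_conn.commit()
        # Count the rows before closing so the result can be inspected afterwards
        self.row_count = self.db_cursor.execute("select count(*) from hot").fetchone()[0]
        self.db_cursor.close()
        self.db_conn.close()


# Drive the hooks by hand, the way Scrapy would during a crawl
sample_item = {"name": "示例小说", "author": "示例作者", "type": "玄幻",
               "status": "连载", "up_date": "placeholder"}
pipeline = SQLitePipeline()
pipeline.open_spider(spider=None)
returned = pipeline.process_item(sample_item, spider=None)
pipeline.close_spider(spider=None)
print(pipeline.row_count)
```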

2. MongoDB

import pymongo


class MongoDB_Spider(object):
    # Called once, before the spider starts
    def open_spider(self, spider):
        host = spider.settings.get('MONGODB_HOST')
        port = spider.settings.get('MONGODB_PORT')
        db_name = spider.settings.get('MONGODB_NAME')
        db_collection = spider.settings.get('MONGODB_COLLECTION')
        self.db_client = pymongo.MongoClient(host=host, port=port)
        # Select the database
        self.db_name = self.db_client[db_name]
        # Select the collection and keep the collection object
        self.db_collection = self.db_name[db_collection]

    # Called for every item
    def process_item(self, item, spider):
        item_hot = dict(item)
        # insert() is deprecated and removed in PyMongo 4; use insert_one()
        self.db_collection.insert_one(item_hot)
        # Return the item so later pipelines still receive it
        return item

    # Called once, when the spider finishes
    def close_spider(self, spider):
        self.db_client.close()

3. Connecting to Redis

import json

import redis


class Redis_Spider(object):
    num = 1

    def __init__(self):
        self.item_set = set()

    def open_spider(self, spider):
        host = spider.settings.get("REDIS_HOST", "localhost")
        port = spider.settings.get("REDIS_PORT", 6379)
        db_index = spider.settings.get("REDIS_DB_INDEX", 1)
        self.db_conn = redis.Redis(host=host, port=port, password='', db=db_index)

    # Store the item in the database
    def process_item(self, item, spider):
        try:
            # redis-py 3.x and later reject dict values, so serialize to a JSON string first
            self.db_conn.rpush("novel", json.dumps(dict(item), ensure_ascii=False))
        except Exception as ex:
            print(ex)
        # Return the item even after an error so later pipelines still receive it
        return item

    def close_spider(self, spider):  # close the connection
        self.db_conn.connection_pool.disconnect()
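
A note on what gets pushed to the Redis list: as far as I know, recent redis-py versions only accept bytes, strings and numbers as values, so an item has to be turned into a string before rpush. One common option is JSON. The sketch below shows the round trip with the standard library only, with no Redis connection involved; the item contents are placeholder data.

```python
import json

# Placeholder item, shaped like dict(item) in the pipeline
item_dict = {"name": "示例小说", "author": "示例作者", "status": "连载"}

# ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes
payload = json.dumps(item_dict, ensure_ascii=False)

# What a consumer would do after reading the value back from the list
restored = json.loads(payload)

print(payload)
print(restored == item_dict)
```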