
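The Concept of Online-Offline Hybrid Deployment

Take an example: traffic on Tmall's Double 11 (Singles' Day) is famously enormous, so hundreds or even thousands of servers must form a very large cluster to supply the computing power. Once Double 11 is over, however, most of those cluster resources sit idle. Suppose the workload falls into two modes, online and offline: online tasks need relatively few resources but require short response times, while offline tasks do not need a fast response but are compute-heavy and occupy a lot of resources. How, then, should we deploy both kinds of workload on a K8S cluster while keeping CPU utilization consistently high? The answer is online-offline hybrid deployment, which effectively addresses the resource utilization problem. One might still ask: if an offline job suddenly arrives with tens of terabytes of data to train on, won't it take resources away from the online tasks? This is where QoS in k8s comes in, which is covered in detail in another article (https://blog.csdn.net/qq_46595591/article/details/107620114).

The rest of this article focuses on deploying TensorFlow machine learning tasks through kubeflow on k8s.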
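To make the QoS idea concrete, here is a minimal Python sketch that approximates how Kubernetes derives a pod's QoS class from the CPU and memory requests and limits of its containers. The function name qos_class and the dictionary layout are illustrative assumptions, not a Kubernetes API; the real rules live in the kubelet and cover more cases, and quantities are compared here as plain strings.

```python
def qos_class(containers):
    """Approximate the Kubernetes QoS class of a pod.

    `containers` is a list of dicts shaped like the `resources` field of a
    container spec, e.g. {"requests": {"cpu": "100m"}, "limits": {...}}.
    Simplification: quantities are compared as strings, so "1000m" and "1"
    would be treated as different values.
    """
    any_set = False
    all_guaranteed = True
    for c in containers:
        requests = c.get("requests", {})
        limits = c.get("limits", {})
        for r in ("cpu", "memory"):
            if r in requests or r in limits:
                any_set = True
            # Guaranteed needs a limit for every resource, and the request
            # (which defaults to the limit when omitted) must equal it.
            if r not in limits or requests.get(r, limits[r]) != limits[r]:
                all_guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if all_guaranteed else "Burstable"


# Hypothetical workloads: a latency-sensitive online service and an
# offline training job that declares nothing.
online = [{"requests": {"cpu": "100m", "memory": "100Mi"},
           "limits": {"cpu": "100m", "memory": "100Mi"}}]
offline = [{}]
print(qos_class(online), qos_class(offline))
```

When a node runs short of resources, BestEffort pods are generally the first candidates for eviction and Guaranteed pods the last, which is why giving online services equal requests and limits helps protect them from a bursty offline job.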

Deploying the Latest Version of kubeflow

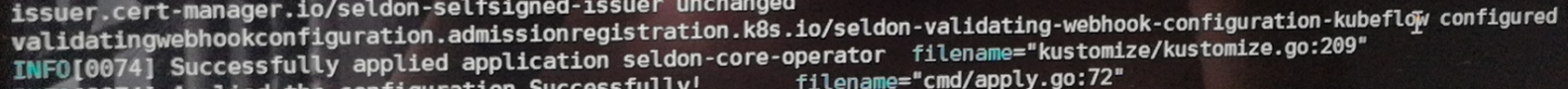

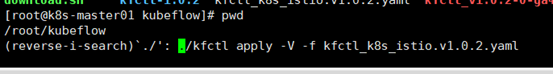

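kubeflow is relatively new, yet it iterates very quickly. Most Chinese-language material on deploying kubeflow has not kept up with the releases, which made deployment quite difficult. The full deployment process and the pitfalls I ran into are covered in a separate article; what is shown here is a deployment that already succeeded.

For details, see my other article.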

Requirements (all per the official documentation)

1. k8s: 1.14.6

2. kfctl: 1.0.2

3. At least one worker node must have 4 CPUs, 16 GB of memory, and 50 GB of disk

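Commands

1. Download the kfctl archive and unpack it: tar -xvf kfctl_v1.0.2_<platform>.tar.gz

2. export PATH=$PATH:"<path-to-kfctl>"

3. export KF_NAME=<your choice of name for the Kubeflow deployment>

4. export KF_DIR=${BASE_DIR}/${KF_NAME} (BASE_DIR must already be set to the directory that will hold your Kubeflow deployments)

5. export CONFIG_URI="https://raw.githubusercontent.com/kubeflow/manifests/v1.0-branch/kfdef/kfctl_k8s_istio.v1.0.2.yaml"

6. Download the YAML file: wget https://raw.githubusercontent.com/kubeflow/manifests/v1.0-branch/kfdef/kfctl_k8s_istio.v1.0.2.yaml

7. Edit kfctl_k8s_istio.v1.0.2.yaml, changing https://github.com/kubeflow/manifests/archive/v1.0.2.tar.gz to file:///root/kubeflow/v1.0.2.tar.gz (the archive is assumed to have been downloaded to /root/kubeflow beforehand; note that kfctl only sees this change if the -f argument points at the edited local file rather than the remote CONFIG_URI from step 5)

8. mkdir -p ${KF_DIR}

cd ${KF_DIR}

kfctl apply -V -f ${CONFIG_URI}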
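The manual edit in step 7 can also be scripted. The following Python sketch swaps the remote manifests URI for the local one in the text of the KfDef file. The helper name point_to_local_manifests and the small sample fragment are assumptions made for illustration; the fragment only stands in for the real file, whose full contents are not reproduced here.

```python
REMOTE_URI = "https://github.com/kubeflow/manifests/archive/v1.0.2.tar.gz"
LOCAL_URI = "file:///root/kubeflow/v1.0.2.tar.gz"


def point_to_local_manifests(kfdef_text, remote=REMOTE_URI, local=LOCAL_URI):
    """Return the KfDef YAML text with the remote manifests URI swapped out."""
    if remote not in kfdef_text:
        # Fail loudly instead of silently leaving the file untouched.
        raise ValueError("remote manifests URI not found; nothing to replace")
    return kfdef_text.replace(remote, local)


# Assumed stand-in for the relevant part of kfctl_k8s_istio.v1.0.2.yaml.
sample = "repos:\n- name: manifests\n  uri: " + REMOTE_URI + "\n"
patched = point_to_local_manifests(sample)
print(patched)
```

To apply it to the real file, read kfctl_k8s_istio.v1.0.2.yaml into a string, pass it through the function, and write the result back before running kfctl against that local file.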

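Deploying TensorFlow

Concepts

TensorFlow is a powerful open-source software library developed by the Google Brain team for deep neural networks (DNN). It was first released in November 2015 and is available under the Apache 2.0 license.

The open-source deep learning library TensorFlow lets you deploy deep neural network computation to any number of CPUs or GPUs on servers, PCs, or mobile devices, all through a single TensorFlow API. You may ask: there are many other deep learning libraries, such as Torch, Theano, Caffe, and MxNet, so what sets TensorFlow apart? Most deep learning libraries, TensorFlow included, offer automatic differentiation, are open source, support multiple CPUs/GPUs, provide pretrained models, and support common NN architectures such as recurrent neural networks (RNN), convolutional neural networks (CNN), and deep belief networks (DBN).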

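TensorFlow has some further strengths:

It has bindings for popular languages such as Python, C++, Java, R, and Go

It runs on many platforms, including mobile and distributed platforms

It is supported by the major cloud providers (AWS, Google, and Azure)

Keras, a high-level neural network API, is integrated with TensorFlow

Compared with Torch/Theano, TensorFlow offers better computational graph visualization

It allows models to be deployed to production and is easy to use

It has very good community support

TensorFlow is more than a single library: it is a suite that includes TensorFlow, TensorBoard, and TensorFlow Serving.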

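Deployment

This section covers deploying TensorFlow through kubeflow on k8s.

Offline

Run a pod

Note: unless you have unrestricted access to the international internet, the image pulls will almost certainly be blocked and produce a flood of errors.

I have prepared mirrored images reachable from inside China (as far as I can tell, this is currently the only such mirror):

https://github.com/Eros11on/Dockerfile-library (these cannot be pulled directly yet; be sure to read the other article for the exact steps)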

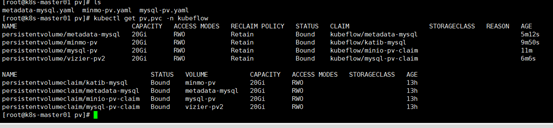

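Create the PVs, then change the image pull policy of each Deployment and StatefulSet. The pull policy is set to Always, so change the value after imagePullPolicy to IfNotPresent.

Example

# kubectl edit deploy <deployment-name> -n kubeflow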
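Editing every workload by hand gets tedious, so the change can be expressed as a small transformation over the manifest. The Python sketch below works on a Deployment or StatefulSet that has already been loaded into a plain dict (for example from kubectl get ... -o json). The function name relax_pull_policy and the sample manifest are hypothetical, and only containers that state imagePullPolicy: Always explicitly are touched.

```python
def relax_pull_policy(manifest):
    """Switch imagePullPolicy from Always to IfNotPresent, in place.

    `manifest` is a Deployment or StatefulSet as a plain dict. Returns the
    number of containers that were changed. Containers that omit the field
    are left alone, even though Kubernetes may default them to Always
    (for instance when the image tag is `latest`).
    """
    pod_spec = manifest["spec"]["template"]["spec"]
    changed = 0
    for key in ("initContainers", "containers"):
        for container in pod_spec.get(key, []):
            if container.get("imagePullPolicy") == "Always":
                container["imagePullPolicy"] = "IfNotPresent"
                changed += 1
    return changed


# Hypothetical manifest with made-up container and image names.
deploy = {"spec": {"template": {"spec": {"containers": [
    {"name": "a", "image": "example/a:1.0", "imagePullPolicy": "Always"},
    {"name": "b", "image": "example/b:1.0", "imagePullPolicy": "IfNotPresent"},
]}}}}
changed = relax_pull_policy(deploy)
print(changed)
```

With IfNotPresent, the kubelet uses an image that is already on the node instead of contacting the registry, which is what makes the pre-pulled mirror images usable.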

Online

GPUs are used for the online case.

The details are not covered here; http://c.biancheng.net/view/2003.html explains them clearly.

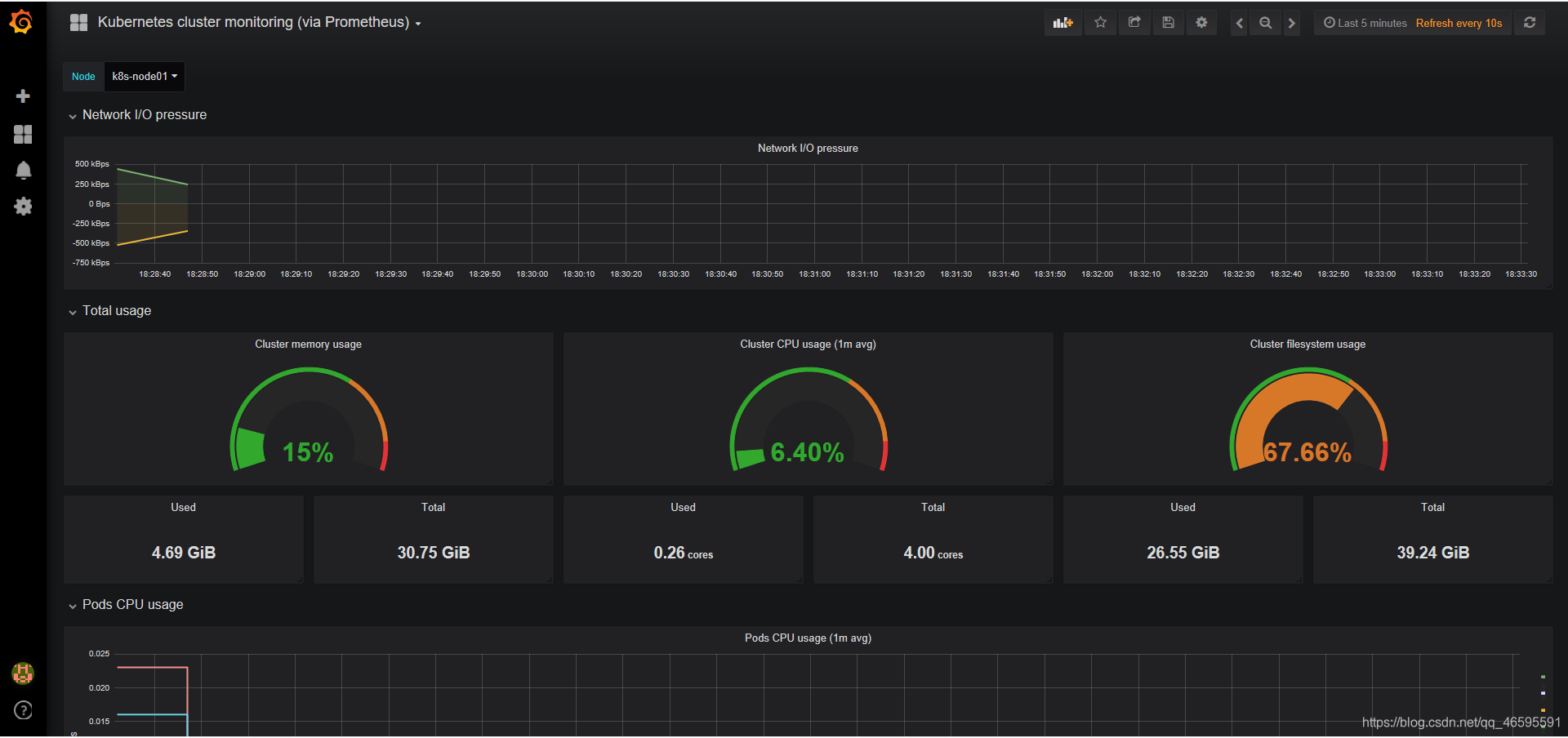

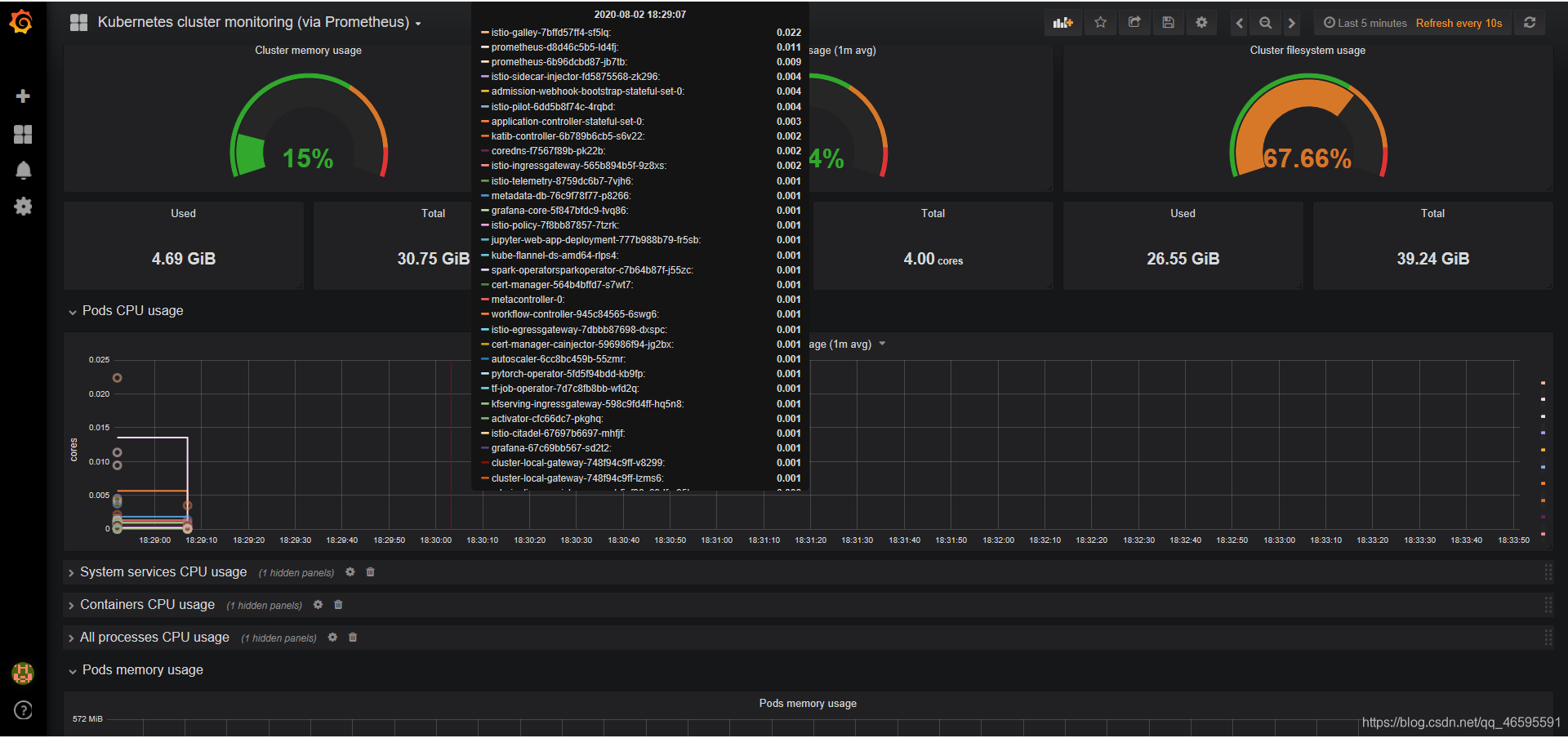

Monitoring Resources with Prometheus + Grafana on k8s

Concepts

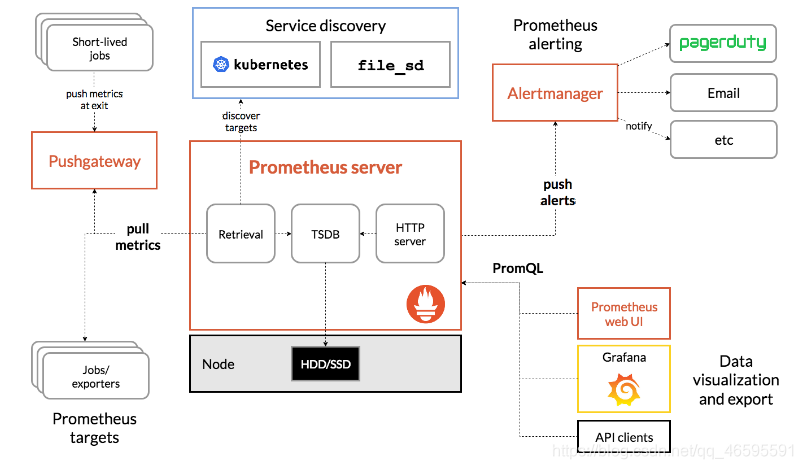

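Prometheus is an open-source monitoring and alerting system with a built-in time series database (TSDB), originally developed at SoundCloud. Since its start in 2012, many companies and organizations have adopted Prometheus, and the project has a very active developer and user community. It is now a standalone open-source project. Prometheus joined the CNCF (Cloud Native Computing Foundation) in 2016 as the second project hosted by the foundation, after Kubernetes. Its design draws on Google's internal monitoring system, which makes it a natural fit for Kubernetes, itself derived from Google's work. Compared with an InfluxDB-based setup it offers better performance, and it has alerting built in. For large-scale cluster environments it uses a pull-based collection model: an application only needs to expose a metrics endpoint and tell Prometheus where it is, and Prometheus takes care of collecting the data. The accompanying figure shows the Prometheus architecture.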
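To illustrate what "exposing a metrics endpoint" means, here is a minimal sketch using only the Python standard library. It serves a single counter at /metrics in the Prometheus text exposition format. The metric name training_steps_total, the port, and the helper names are assumptions chosen for the example; in practice the official prometheus_client library would normally be used instead of hand-rolling the format.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


def render_metrics(counters):
    """Render a dict of counter values in the Prometheus text format."""
    lines = []
    for name, value in sorted(counters.items()):
        lines.append(f"# TYPE {name} counter")
        lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


# Hypothetical application state; the training loop would increment this.
COUNTERS = {"training_steps_total": 0}


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics(COUNTERS).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Block forever, serving /metrics for Prometheus to scrape."""
    HTTPServer(("", port), MetricsHandler).serve_forever()
```

Calling serve() inside the application (typically in a background thread) makes the counter available to scrape; Prometheus then pulls the value on its own schedule rather than having the application push it.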

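Deployment

Here Prometheus + Grafana are used to monitor the deployed machine learning tasks.

Environment

Alibaba Cloud servers are used:

1 master: 4 cores, 16 GB memory

1 node: 4 cores, 32 GB memory; both machines have a 60 GB cloud disk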

k8s:1.14.6

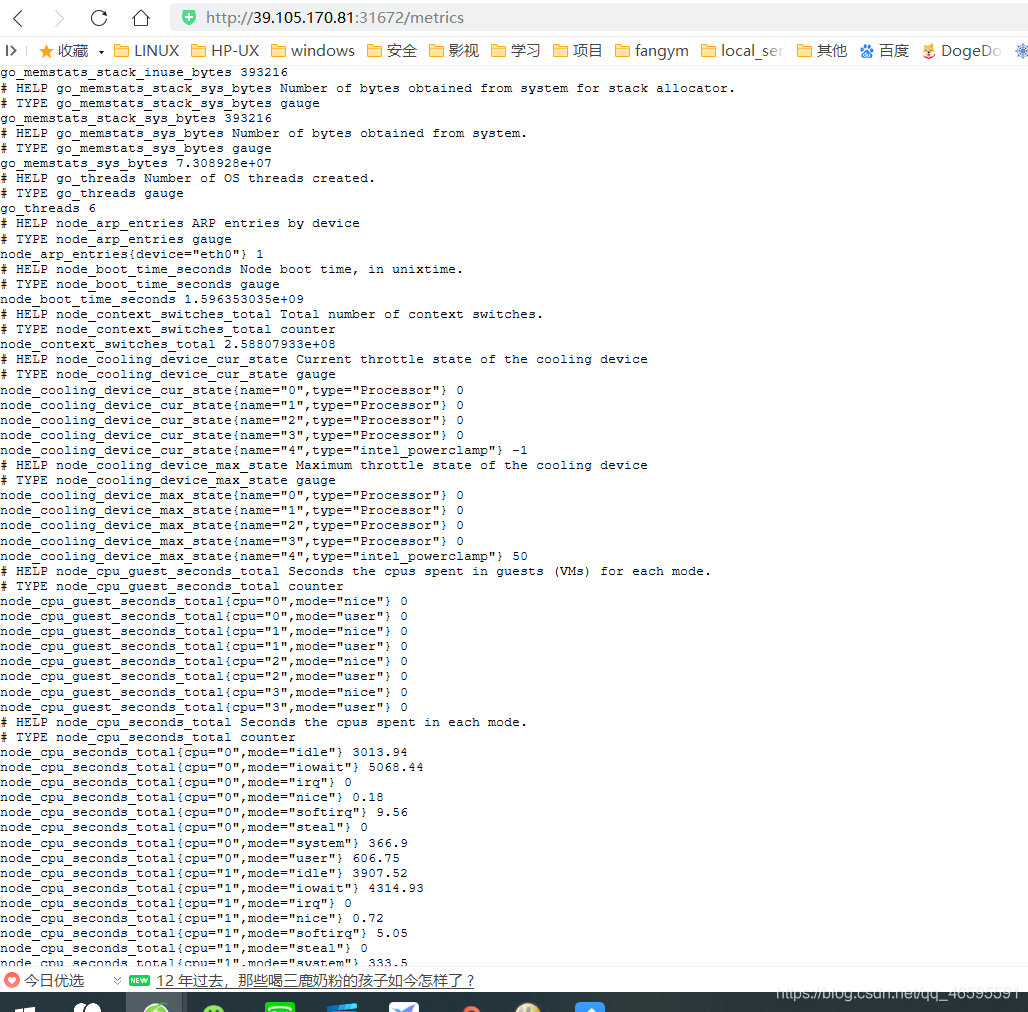

Deploy the node-exporter component as a DaemonSet

cat node-exporter.yaml

    apiVersion: extensions/v1beta1
    kind: DaemonSet
    metadata:
      name: node-exporter
      namespace: kube-system
      labels:
        k8s-app: node-exporter
    spec:
      template:
        metadata:
          labels:
            k8s-app: node-exporter
        spec:
          containers:
          - image: prom/node-exporter
            name: node-exporter
            ports:
            - containerPort: 9100
              protocol: TCP
              name: http
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        k8s-app: node-exporter
      name: node-exporter
      namespace: kube-system
    spec:
      ports:
      - name: http
        port: 9100
        nodePort: 31672
        protocol: TCP
      type: NodePort
      selector:
        k8s-app: node-exporter

Deploy the Prometheus component

1. RBAC file

cat rbac-setup.yaml

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: prometheus
    rules:
    - apiGroups: [""]
      resources:
      - nodes
      - nodes/proxy
      - services
      - endpoints
      - pods
      verbs: ["get", "list", "watch"]
    - apiGroups:
      - extensions
      resources:
      - ingresses
      verbs: ["get", "list", "watch"]
    - nonResourceURLs: ["/metrics"]
      verbs: ["get"]
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: prometheus
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: prometheus
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: prometheus
    subjects:
    - kind: ServiceAccount
      name: prometheus
      namespace: kube-system

2. Manage the Prometheus configuration file as a ConfigMap

cat configmap.yaml

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: prometheus-config
      namespace: kube-system
    data:
      prometheus.yml: |
        global:
          scrape_interval: 15s
          evaluation_interval: 15s
        scrape_configs:

        - job_name: 'kubernetes-apiservers'
          kubernetes_sd_configs:
          - role: endpoints
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
            action: keep
            regex: default;kubernetes;https

        - job_name: 'kubernetes-nodes'
          kubernetes_sd_configs:
          - role: node
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - target_label: __address__
            replacement: kubernetes.default.svc:443
          - source_labels: [__meta_kubernetes_node_name]
            regex: (.+)
            target_label: __metrics_path__
            replacement: /api/v1/nodes/${1}/proxy/metrics

        - job_name: 'kubernetes-cadvisor'
          kubernetes_sd_configs:
          - role: node
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - target_label: __address__
            replacement: kubernetes.default.svc:443
          - source_labels: [__meta_kubernetes_node_name]
            regex: (.+)
            target_label: __metrics_path__
            replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

        - job_name: 'kubernetes-service-endpoints'
          kubernetes_sd_configs:
          - role: endpoints
          relabel_configs:
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
            action: replace
            target_label: __scheme__
            regex: (https?)
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
            action: replace
            target_label: __address__
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: kubernetes_name

        - job_name: 'kubernetes-services'
          kubernetes_sd_configs:
          - role: service
          metrics_path: /probe
          params:
            module: [http_2xx]
          relabel_configs:
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
            action: keep
            regex: true
          - source_labels: [__address__]
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.example.com:9115
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            target_label: kubernetes_name

        - job_name: 'kubernetes-ingresses'
          kubernetes_sd_configs:
          - role: ingress
          relabel_configs:
          - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
            regex: (.+);(.+);(.+)
            replacement: ${1}://${2}${3}
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.example.com:9115
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_ingress_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_ingress_name]
            target_label: kubernetes_name

        - job_name: 'kubernetes-pods'
          kubernetes_sd_configs:
          - role: pod
          relabel_configs:
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
            action: replace
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
            target_label: __address__
          - action: labelmap
            regex: __meta_kubernetes_pod_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: kubernetes_pod_name
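The relabel rule that rewrites __address__ from the prometheus.io/port annotation is the least obvious part of this configuration, so here is a small Python sketch that imitates it. Prometheus joins the source label values with ";" and matches the regex against the whole string; the function name relabel_address and the sample addresses are illustrative assumptions.

```python
import re

# Same pattern as in the ConfigMap: host, optional existing port, then the
# port taken from the prometheus.io/port annotation.
ADDRESS_RE = re.compile(r"([^:]+)(?::\d+)?;(\d+)")


def relabel_address(address, port_annotation):
    """Imitate the __address__ relabel rule for annotated pods and services."""
    joined = f"{address};{port_annotation}"  # source_labels joined with ';'
    match = ADDRESS_RE.fullmatch(joined)     # Prometheus anchors the regex
    if match is None:
        return address                       # no match: label left unchanged
    return f"{match.group(1)}:{match.group(2)}"  # replacement: $1:$2


# Made-up pod addresses for illustration.
print(relabel_address("10.244.1.7:8080", "9102"))
print(relabel_address("10.244.1.7", "9102"))
print(relabel_address("10.244.1.7:8080", ""))
```

The first two calls yield 10.244.1.7:9102, since the annotated port replaces or supplies the port. The third call returns the address unchanged, because an empty annotation does not match the pattern.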

3. Prometheus Deployment file

cat prometheus.yaml

    apiVersion: apps/v1beta2
    kind: Deployment
    metadata:
      labels:
        name: prometheus-deployment
      name: prometheus
      namespace: kube-system
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: prometheus
      template:
        metadata:
          labels:
            app: prometheus
        spec:
          containers:
          - image: prom/prometheus:v2.0.0
            name: prometheus
            command:
            - "/bin/prometheus"
            args:
            - "--config.file=/etc/prometheus/prometheus.yml"
            - "--storage.tsdb.path=/prometheus"
            - "--storage.tsdb.retention=24h"
            ports:
            - containerPort: 9090
              protocol: TCP
            volumeMounts:
            - mountPath: "/prometheus"
              name: data
            - mountPath: "/etc/prometheus"
              name: config-volume
            resources:
              requests:
                cpu: 100m
                memory: 100Mi
              limits:
                cpu: 500m
                memory: 2500Mi
          serviceAccountName: prometheus
          volumes:
          - name: data
            emptyDir: {}
          - name: config-volume
            configMap:
              name: prometheus-config
    ---
    kind: Service
    apiVersion: v1
    metadata:
      labels:
        app: prometheus
      name: prometheus
      namespace: kube-system
    spec:
      type: NodePort
      ports:
      - port: 9090
        targetPort: 9090
        nodePort: 30003
      selector:
        app: prometheus
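The resources section above uses Kubernetes quantity strings such as 100m and 2500Mi. As a rough aid for reading them, the Python sketch below converts CPU quantities to cores and memory quantities to bytes. The helper names are assumptions, and only millicore and binary-suffix (Ki, Mi, Gi, Ti) forms are handled, which is narrower than what Kubernetes itself accepts.

```python
_MEMORY_UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def parse_cpu(quantity):
    """Convert a CPU quantity such as '500m' or '2' to a number of cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000  # millicores
    return float(quantity)


def parse_memory(quantity):
    """Convert a memory quantity such as '2500Mi' to bytes."""
    for suffix, factor in _MEMORY_UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[:-len(suffix)]) * factor
    return int(quantity)  # no suffix: already plain bytes


# Values taken from the Prometheus Deployment above.
request_bytes = parse_memory("100Mi")
limit_bytes = parse_memory("2500Mi")
print(parse_cpu("100m"), parse_cpu("500m"), limit_bytes // request_bytes)
```

Here the memory limit is 25 times the request, so the Prometheus pod is scheduled as if it were small but is allowed to grow considerably, which is the kind of headroom that matters when online and offline workloads share a node.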

4. Create the corresponding objects from the YAML files above

kubectl create -f node-exporter.yaml

kubectl create -f rbac-setup.yaml

kubectl create -f configmap.yaml

kubectl create -f prometheus.yaml

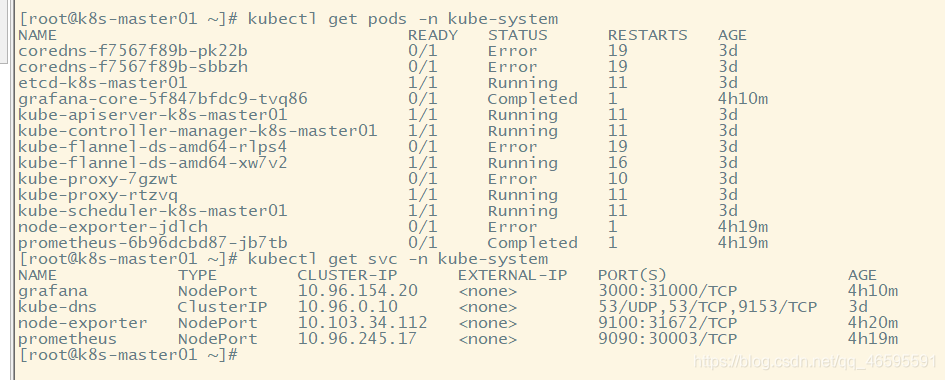

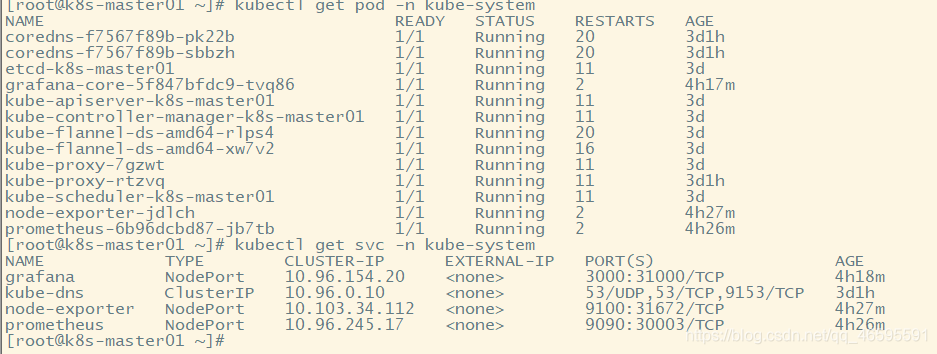

5. Check the related pods and services

kubectl get pods -n kube-system

kubectl get svc -n kube-system

6. The NodePort for node-exporter is 31672; visiting http://localhost:31672/metrics shows the metrics it exposes
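The /metrics page is plain text, one sample per line, so it is easy to inspect programmatically. The Python sketch below parses that format into a dictionary. The function name parse_metrics and the sample values are made up for illustration, and the parser assumes samples carry no trailing timestamp.

```python
def parse_metrics(text):
    """Parse Prometheus text-format samples into {series: float}.

    Lines starting with '#' (HELP/TYPE comments) and blank lines are
    skipped. Assumes each sample line is 'series value' with no timestamp.
    """
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # The value is whatever follows the last space on the line.
        series, _, value = line.rpartition(" ")
        samples[series] = float(value)
    return samples


# Made-up excerpt in the style of node-exporter output.
sample = """# HELP node_load1 1m load average.
# TYPE node_load1 gauge
node_load1 0.42
node_cpu_seconds_total{cpu="0",mode="idle"} 12345.6
"""
metrics = parse_metrics(sample)
print(metrics["node_load1"])
```

Against a live cluster, the text could be fetched with urllib.request.urlopen on the NodePort URL above and decoded before being passed to parse_metrics.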

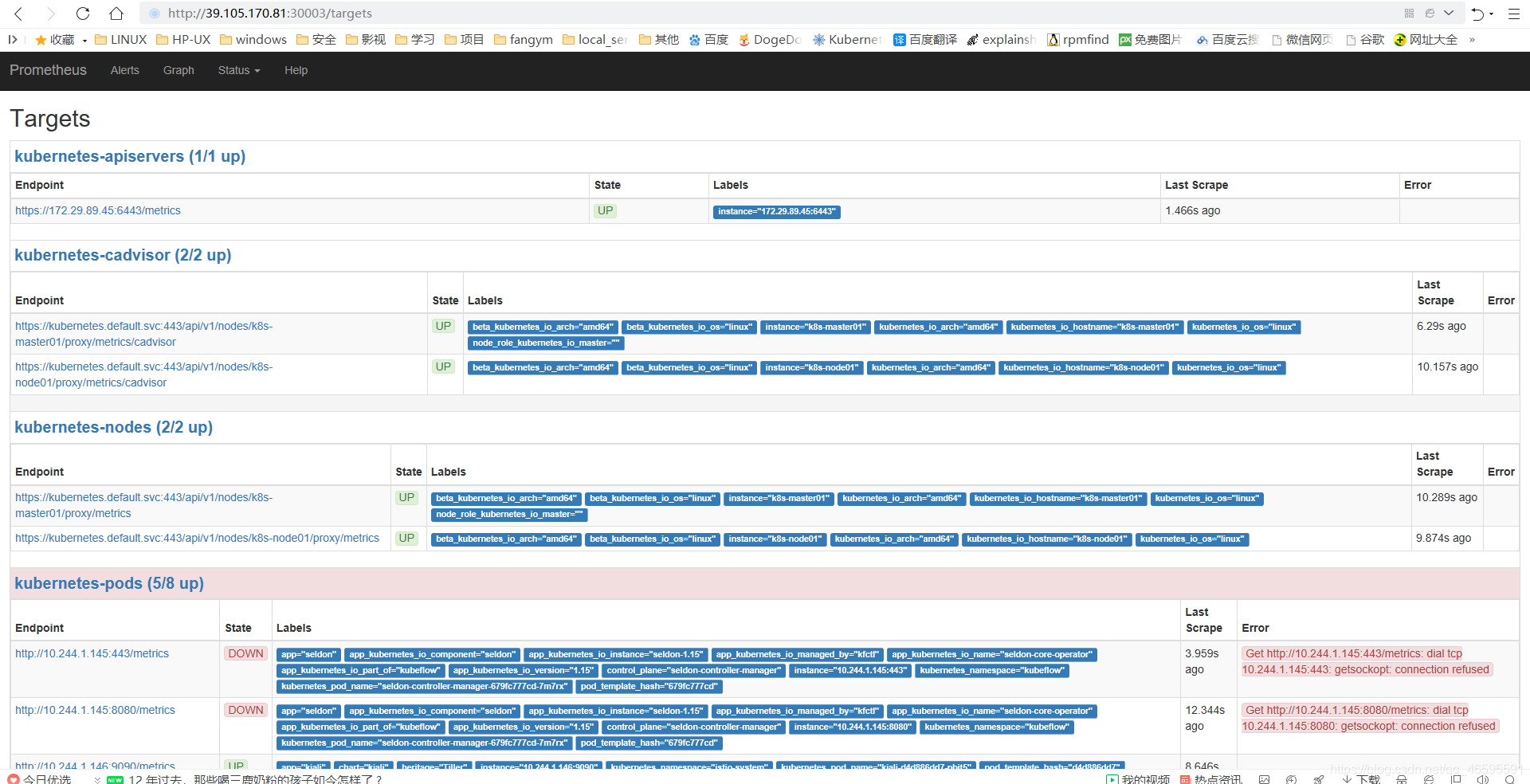

7. The NodePort for Prometheus is 30003; visiting http://localhost:30003/targets shows that Prometheus has successfully connected to the k8s apiserver

Deploy the Grafana component

1. Grafana Deployment configuration file

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: grafana-core
      namespace: kube-system
      labels:
        app: grafana
        component: core
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            app: grafana
            component: core
        spec:
          containers:
          - image: grafana/grafana:5.0.0
            name: grafana-core
            imagePullPolicy: IfNotPresent
            resources:
              limits:
                cpu: 100m
                memory: 100Mi
              requests:
                cpu: 100m
                memory: 100Mi
            env:
            - name: GF_AUTH_BASIC_ENABLED
              value: "true"
            - name: GF_AUTH_ANONYMOUS_ENABLED
              value: "false"
            readinessProbe:
              httpGet:
                path: /login
                port: 3000
            volumeMounts:
            - name: grafana-persistent-storage
              mountPath: /var
          volumes:
          - name: grafana-persistent-storage
            emptyDir: {}
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: grafana
      namespace: kube-system
      labels:
        app: grafana
        component: core
    spec:
      type: NodePort
      ports:
      - port: 3000
        nodePort: 31000
      selector:
        app: grafana

2. Deploy Grafana

kubectl create -f grafana.yaml

3. Check the Grafana pod and service

kubectl get pod -n kube-system

kubectl get svc -n kube-system

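4. The Grafana NodePort is 31000, so Grafana can be reached at node IP + NodePort, for example http://localhost:31000

5. Import the dashboard either online by entering template ID 315, or locally from the downloaded JSON template file. The dashboard template is available at https://grafana.com/dashboards/315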

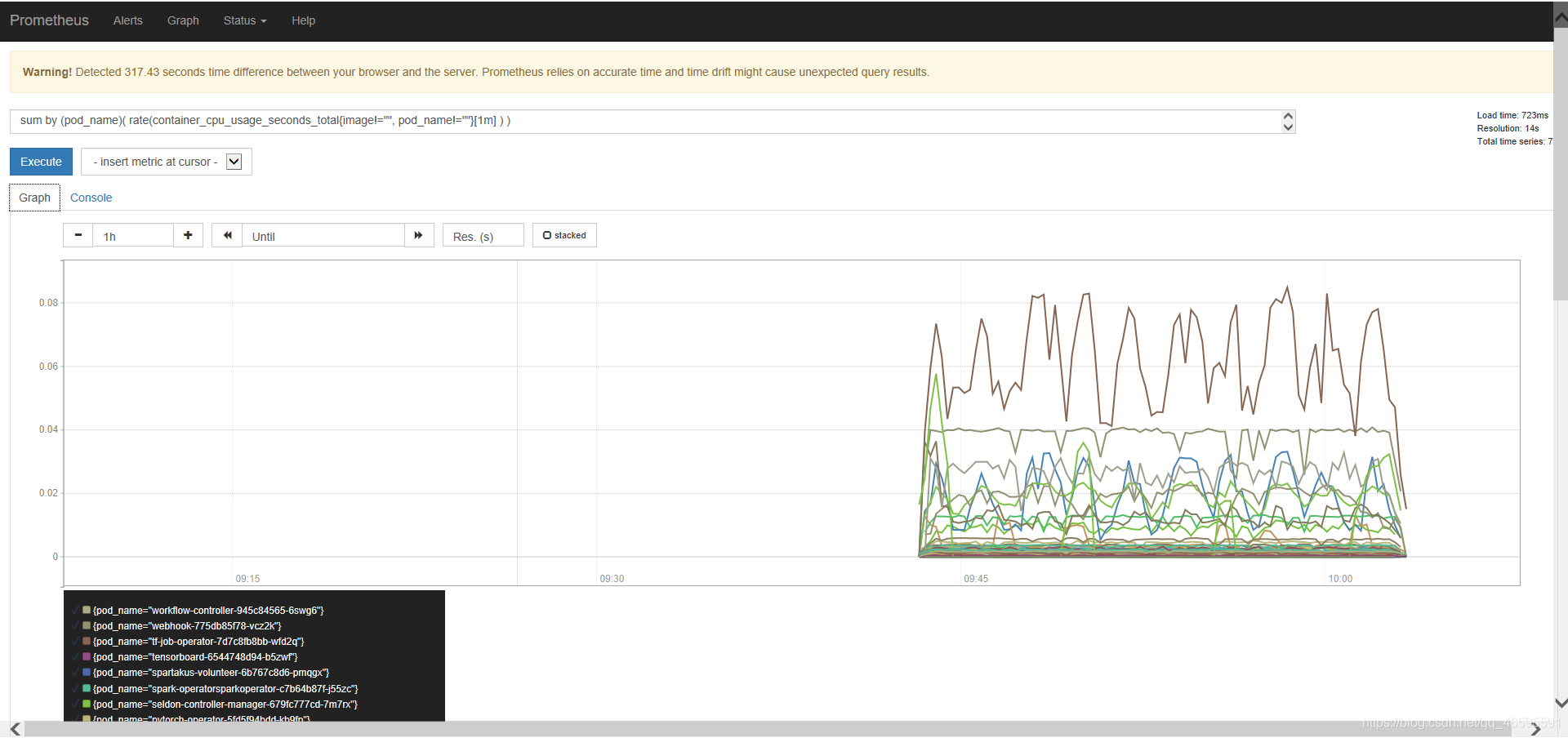

The monitored resources are now visible in the dashboard.