When I ran the CBAM attention module on Colab, training kept failing with this error:

RuntimeError: adaptive_avg_pool2d_backward_cuda does not have a deterministic implementation, but you set 'torch.use_deterministic_algorithms(True)'. You can turn off determinism just for this operation, or you can use the 'warn_only=True' option, if that's acceptable for your application. You can also file an issue at https://github.com/pytorch/pytorch/issues to help us prioritize adding deterministic support for this operation.

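After several days of trying fixes, I noticed that the same code runs without the error in PyCharm, so the problem is probably in the backward pass of the pooling layer. I then tried adding torch.use_deterministic_algorithms(False) to train.py, and training ran successfully.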

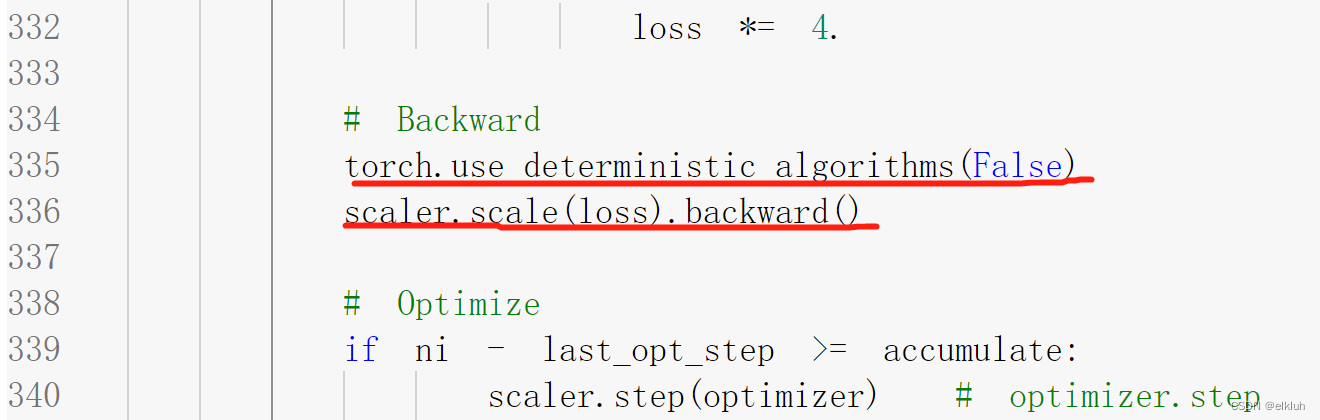

Add it at line 335 of train.py, right before scaler.scale(loss).backward().
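A possible variation on the fix, as a minimal sketch rather than the actual train.py code: rather than switching determinism off for the rest of the run, the check can be relaxed only around the backward call and then restored. The helper name `nondeterministic_ok` and the toy model are hypothetical, made up for illustration. The sketch assumes PyTorch 1.11 or newer, since it relies on the `warn_only` argument.

```python
from contextlib import contextmanager

import torch
import torch.nn as nn


@contextmanager
def nondeterministic_ok():
    """Hypothetical helper: disable the determinism check, then restore it."""
    was_enabled = torch.are_deterministic_algorithms_enabled()
    warn_only = torch.is_deterministic_algorithms_warn_only_enabled()
    torch.use_deterministic_algorithms(False)
    try:
        yield
    finally:
        # Put the previous setting back, even if backward() raised.
        torch.use_deterministic_algorithms(was_enabled, warn_only=warn_only)


device = "cuda" if torch.cuda.is_available() else "cpu"

# Toy stand-in for a CBAM-style block: the adaptive average pooling is
# the op whose CUDA backward has no deterministic implementation.
model = nn.Sequential(
    nn.Conv2d(3, 2, kernel_size=3, padding=1),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
).to(device)

torch.use_deterministic_algorithms(True)  # what the training script had set

x = torch.randn(4, 3, 16, 16, device=device)
loss = model(x).pow(2).mean()

with nondeterministic_ok():
    loss.backward()  # in train.py this would be scaler.scale(loss).backward()
```

Either way, the backward pass of the pooling layer is no longer guaranteed to be bit-for-bit reproducible on the GPU. The error message itself suggests another route, `torch.use_deterministic_algorithms(True, warn_only=True)`, which keeps the deterministic setting but emits a warning in place of raising.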