Derivatives of activation functions

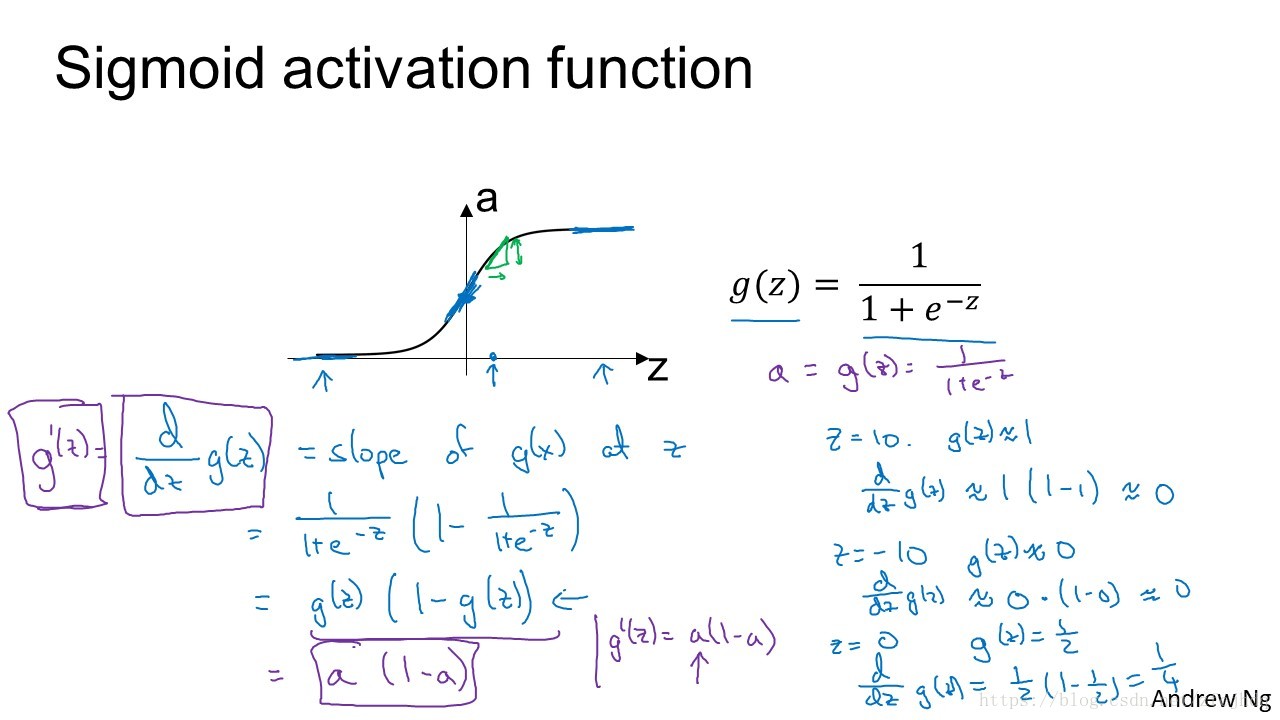

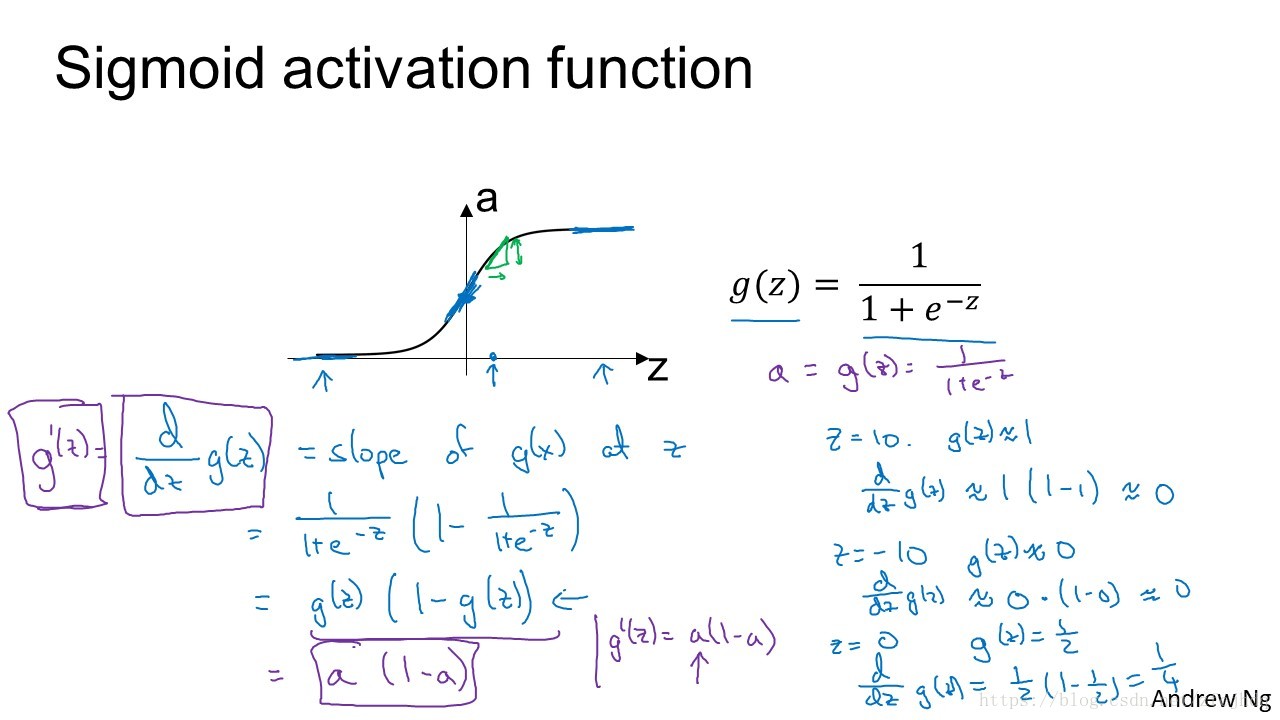

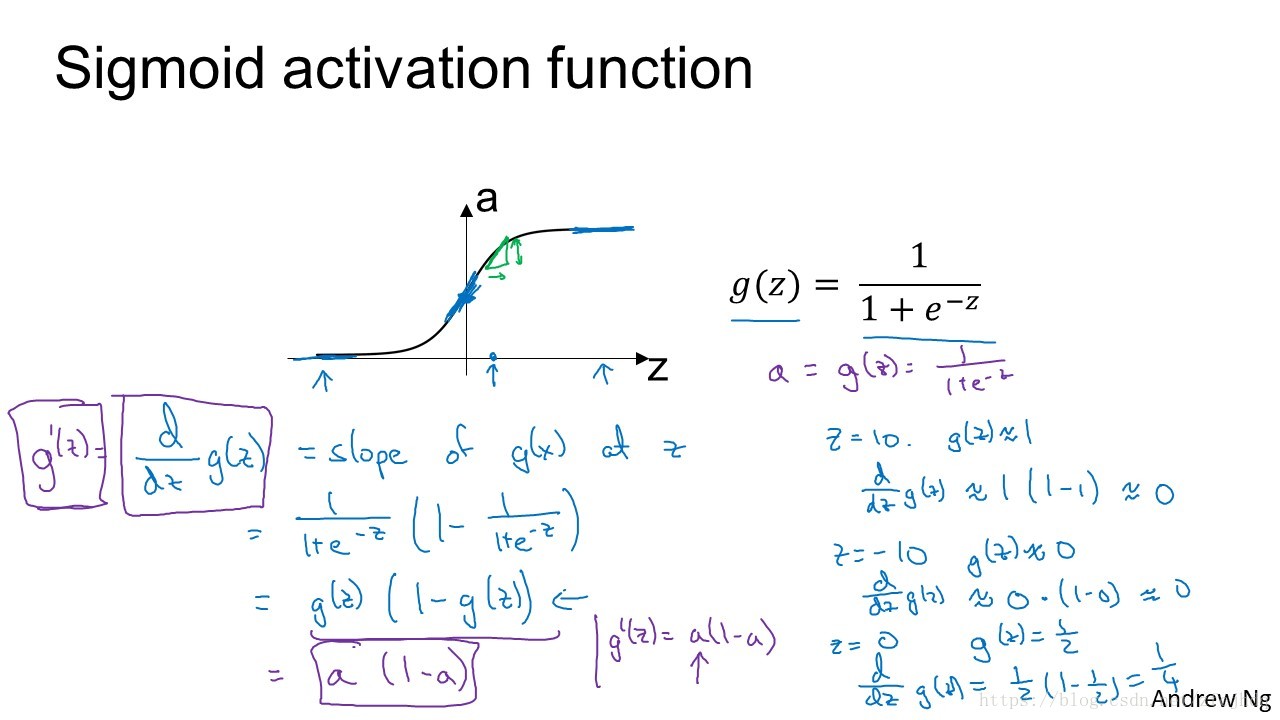

Derivatives of Sigmoid

$$
\text{sigmoid} = g(z) = \frac{1}{1+e^{-z}} \tag{1}
$$

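$$
\begin{aligned}
g'(z) &= \frac{d}{dz} g(z) \\
&= \frac{d(1+e^{-z})^{-1}}{d(1+e^{-z})} \cdot \frac{d(1+e^{-z})}{d(-z)} \cdot \frac{d(-z)}{dz} \\
&= -(1+e^{-z})^{-2} \cdot e^{-z} \cdot (-1) \\
&= \frac{1}{1+e^{-z}} \cdot \frac{e^{-z}}{1+e^{-z}} \\
&= \frac{1}{1+e^{-z}} \cdot \frac{1+e^{-z}-1}{1+e^{-z}} \\
&= \frac{1}{1+e^{-z}} \cdot \left(1 - \frac{1}{1+e^{-z}}\right) \\
&= g(z)\,\bigl(1 - g(z)\bigr)
\end{aligned}
\tag{2}
$$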
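The closed form in (2) can be sanity-checked numerically. The sketch below is a minimal illustration that uses only the Python standard library. The helper names `sigmoid`, `sigmoid_prime`, and `central_difference` are chosen here for illustration and do not come from the original notes. It compares g(z)(1 − g(z)) against a central finite-difference estimate of the slope of (1).

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid g(z) = 1 / (1 + e^(-z)), as in equation (1)."""
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_prime(z: float) -> float:
    """Closed-form derivative g'(z) = g(z) * (1 - g(z)), as in equation (2)."""
    g = sigmoid(z)
    return g * (1.0 - g)


def central_difference(f, z: float, h: float = 1e-5) -> float:
    """Numerical slope estimate (f(z + h) - f(z - h)) / (2h); h is an assumed step size."""
    return (f(z + h) - f(z - h)) / (2.0 * h)


# Compare the analytic and numerical derivatives at a few sample points.
for z in (-2.0, 0.0, 3.0):
    analytic = sigmoid_prime(z)
    numeric = central_difference(sigmoid, z)
    print(f"z={z:+.1f}  analytic={analytic:.6f}  numeric={numeric:.6f}")
```

At z = 0 the sigmoid equals 0.5, so the derivative is 0.5 · 0.5 = 0.25, its maximum value. Note that `math.exp(-z)` overflows for very large negative `z`, so this direct form is only a sketch for moderate inputs.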

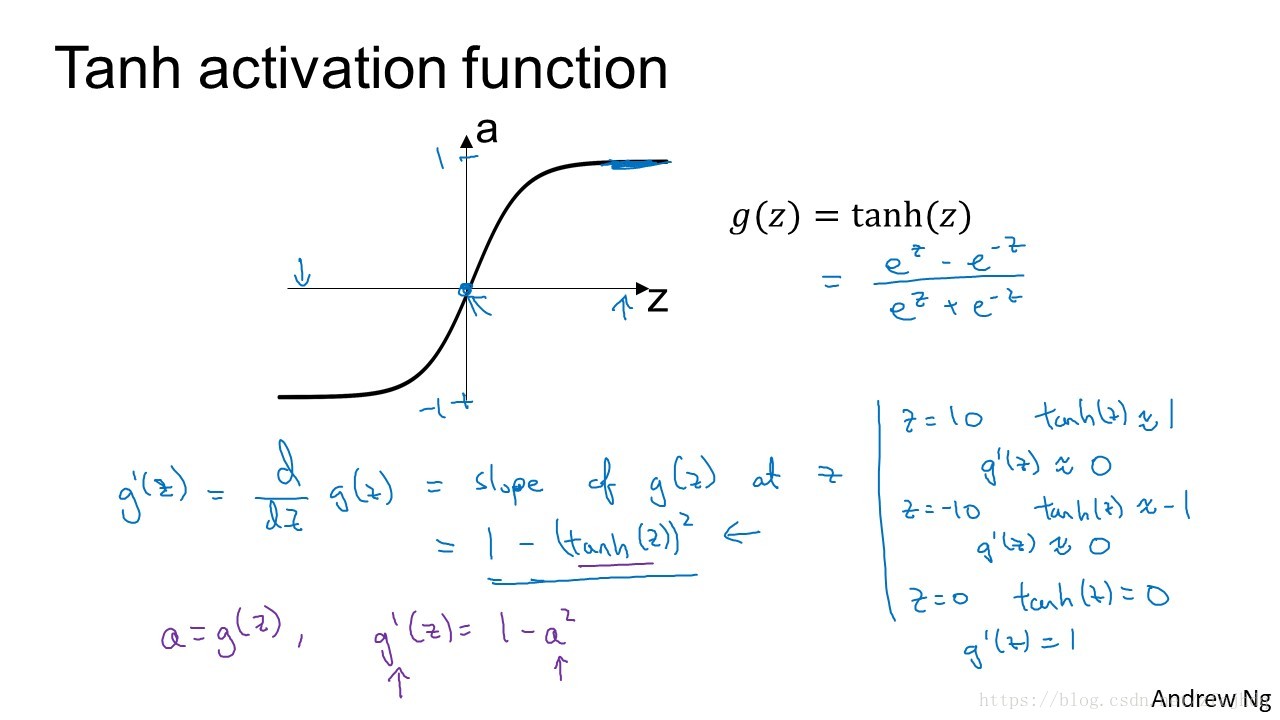

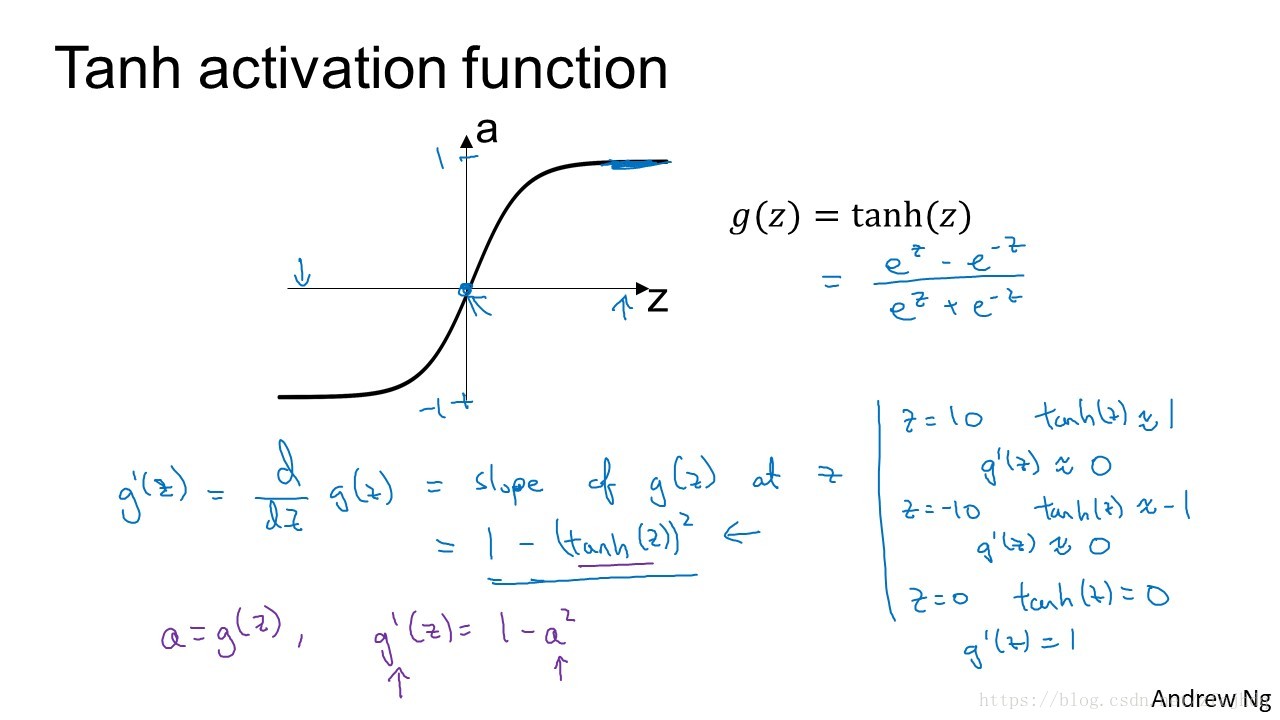

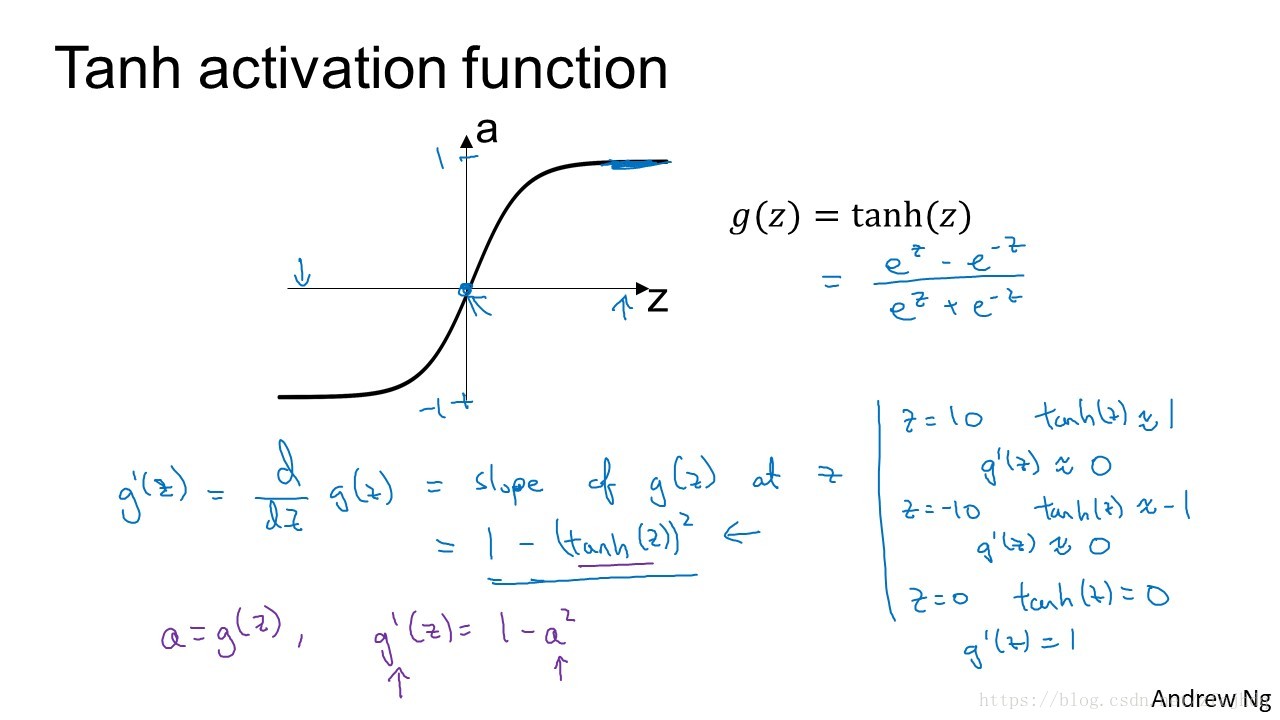

Derivatives of tanh

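$$
\sinh(z) = \frac{e^{z} - e^{-z}}{2} \tag{3}
$$

$$
\cosh(z) = \frac{e^{z} + e^{-z}}{2} \tag{4}
$$

$$
\frac{d}{dz}\sinh(z) = \frac{e^{z} + e^{-z}}{2} = \cosh(z) \tag{5}
$$

$$
\frac{d}{dz}\cosh(z) = \frac{e^{z} - e^{-z}}{2} = \sinh(z) \tag{6}
$$

$$
\tanh(z) = \frac{\sinh(z)}{\cosh(z)} = g(z) = \frac{e^{z} - e^{-z}}{e^{z} + e^{-z}} \tag{7}
$$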

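$$
\begin{aligned}
g'(z) &= \frac{d}{dz}\left(\sinh(z)\,\cosh(z)^{-1}\right) \\
&= \frac{d}{dz}\sinh(z) \cdot \cosh(z)^{-1} + \sinh(z) \cdot \frac{d\,\cosh(z)^{-1}}{d\,\cosh(z)} \cdot \frac{d}{dz}\cosh(z) \\
&= 1 + \sinh(z) \cdot \frac{-1}{\cosh(z)^{2}} \cdot \sinh(z) \\
&= 1 - \tanh(z)^{2}
\end{aligned}
\tag{8}
$$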
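Result (8) can be checked the same way. The following sketch assumes only the Python standard library. The names `tanh_from_exponentials`, `tanh_prime`, and `central_difference` are illustrative and not taken from the original notes. It builds tanh from the exponential form in (7), then compares 1 − tanh(z)² against a central finite-difference estimate.

```python
import math


def tanh_from_exponentials(z: float) -> float:
    """tanh(z) = (e^z - e^(-z)) / (e^z + e^(-z)), as in equation (7)."""
    return (math.exp(z) - math.exp(-z)) / (math.exp(z) + math.exp(-z))


def tanh_prime(z: float) -> float:
    """Closed-form derivative g'(z) = 1 - tanh(z)^2, as in equation (8)."""
    t = math.tanh(z)
    return 1.0 - t * t


def central_difference(f, z: float, h: float = 1e-5) -> float:
    """Numerical slope estimate (f(z + h) - f(z - h)) / (2h); h is an assumed step size."""
    return (f(z + h) - f(z - h)) / (2.0 * h)


# Compare the analytic and numerical derivatives at a few sample points.
for z in (-1.5, 0.0, 2.0):
    analytic = tanh_prime(z)
    numeric = central_difference(tanh_from_exponentials, z)
    print(f"z={z:+.1f}  analytic={analytic:.6f}  numeric={numeric:.6f}")
```

Because tanh(0) = 0, the derivative at the origin is exactly 1, and it decays toward 0 as |z| grows and tanh saturates at ±1.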

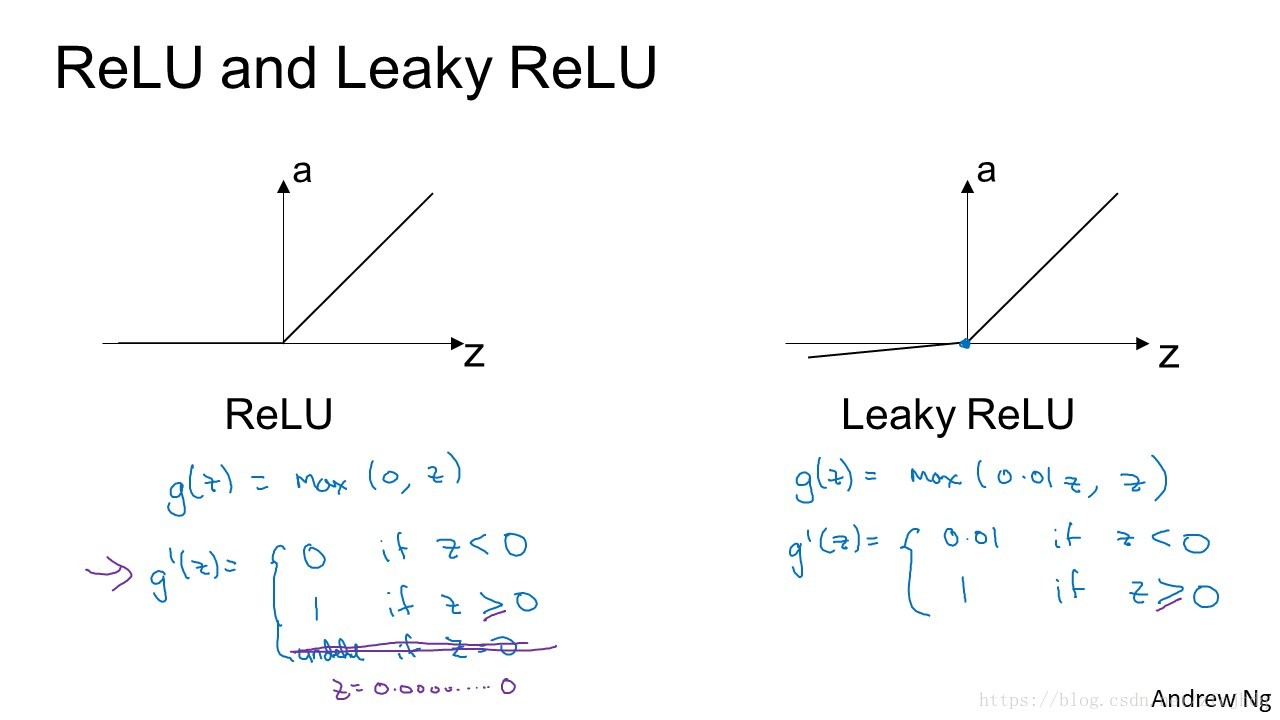

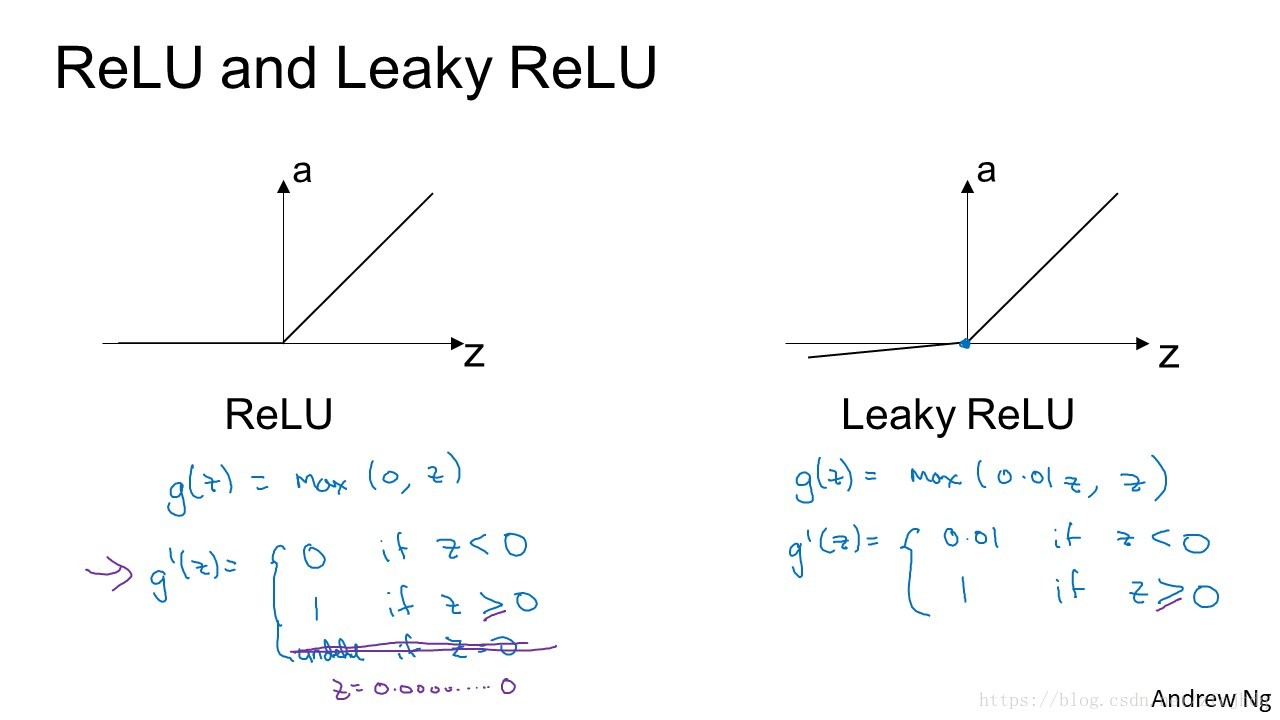

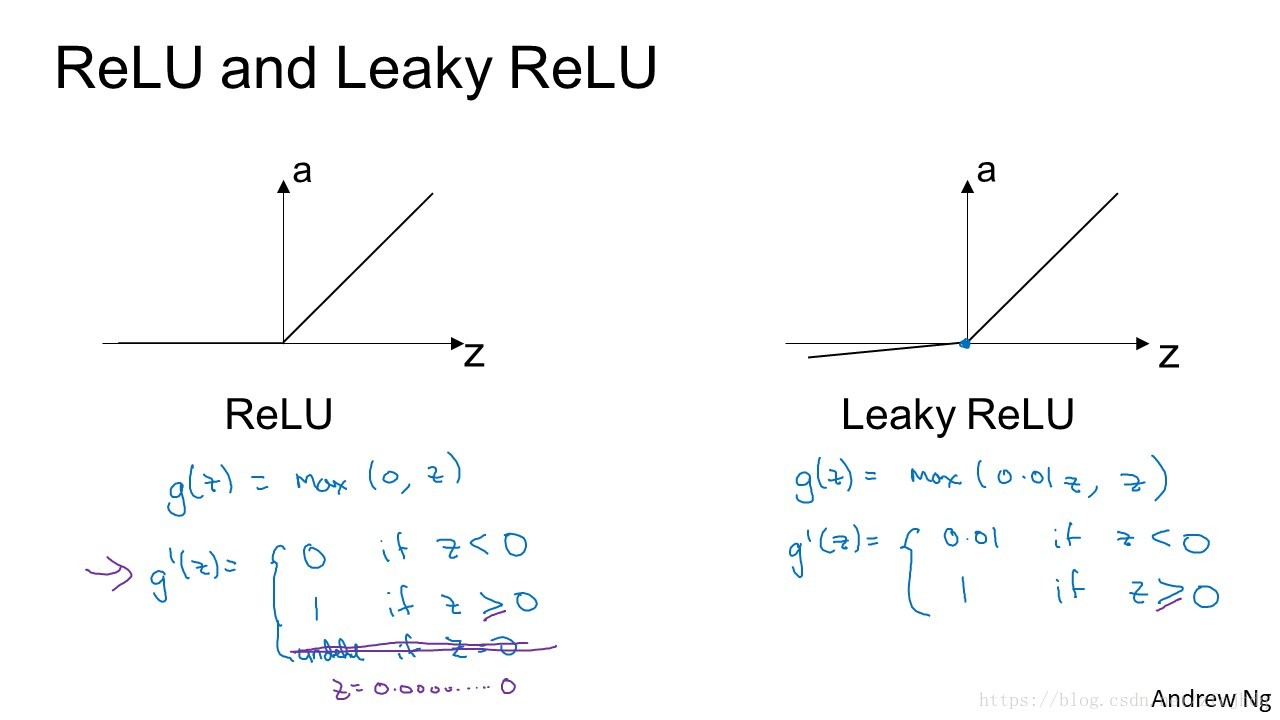

Derivatives of ReLU

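$$
\text{ReLU} = g(z) = \max(0, z) \tag{9}
$$

$$
g'(z) =
\begin{cases}
0 & \text{if } z < 0 \\
1 & \text{if } z \ge 0
\end{cases}
\tag{10}
$$

$$
\text{Leaky ReLU} = g(z) = \max(0.01z, z) \tag{11}
$$

$$
g'(z) =
\begin{cases}
0.01 & \text{if } z < 0 \\
1 & \text{if } z \ge 0
\end{cases}
\tag{12}
$$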
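The piecewise definitions (9) through (12) translate almost directly into code. The sketch below uses plain Python. The function names and the `slope` parameter are illustrative choices, with `slope` defaulting to the 0.01 used in (11). It follows the convention of (10) and (12) in assigning the derivative the value 1 at z = 0, where the true derivative is not defined.

```python
def relu(z: float) -> float:
    """ReLU g(z) = max(0, z), as in equation (9)."""
    return max(0.0, z)


def relu_prime(z: float) -> float:
    """Derivative per equation (10): 0 for z < 0, 1 for z >= 0 (convention at z = 0)."""
    return 1.0 if z >= 0 else 0.0


def leaky_relu(z: float, slope: float = 0.01) -> float:
    """Leaky ReLU g(z) = max(slope * z, z), as in equation (11); assumes 0 < slope < 1."""
    return max(slope * z, z)


def leaky_relu_prime(z: float, slope: float = 0.01) -> float:
    """Derivative per equation (12): slope for z < 0, 1 for z >= 0 (convention at z = 0)."""
    return 1.0 if z >= 0 else slope


# Evaluate both activations and their derivatives on either side of zero.
for z in (-2.0, 0.0, 3.0):
    print(
        f"z={z:+.1f}  relu={relu(z):.2f}  relu'={relu_prime(z):.2f}  "
        f"leaky={leaky_relu(z):.2f}  leaky'={leaky_relu_prime(z):.2f}"
    )
```

The only difference between the two derivatives is on the negative side: ReLU passes no gradient for z < 0, while Leaky ReLU passes a small constant gradient equal to `slope`.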