Define functions that read the data, clean it, and tokenize it. Labels are stored in target_list and texts in content_list.

Code:

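    import os

    import jieba
    import jieba.posseg as psg  # only needed for the commented-out POS-filtered variant


    # Walk the data directory: one sub-directory per class, one txt file per news item.
    def readFile(path):
        for className in os.listdir(path):  # loop over classes
            classPath = os.path.join(path, className)  # build the class directory path
            for fileName in os.listdir(classPath):  # loop over txt files, one news item each
                filePath = os.path.join(classPath, fileName)  # build the file path
                genInfo(filePath)


    # Extract the class and the text from a file path.
    def genInfo(path):
        # The class is the name of the directory that contains the file.
        classfity = os.path.basename(os.path.dirname(path))
        with open(path, 'r', encoding='utf-8') as f:
            content = f.read()  # read the text
        appToList(classfity, content)


    # Store the class in one list and the cleaned, jieba-tokenized text in another.
    # target_list and content_list are module-level lists created before readFile is called.
    def appToList(classfity, content):
        # Cleaning: keep only alphabetic characters (Chinese characters count as alphabetic).
        processed = "".join([word for word in content if word.isalpha()])
        # Variant: keep only meaningful words of length >= 3, deduplicated, joined into one string.
        # clear = " ".join(set([i.word for i in psg.cut(processed) if (len(i.word)>=3) and (i.flag=='nr' or i.flag=='n' or i.flag=='v' or i.flag=='a' or i.flag=='vn' or i.flag=='i')]))
        # Tokenize with jieba, keep words of length >= 2, and join them into one string.
        clear = " ".join([i for i in jieba.cut(processed, cut_all=True, HMM=True) if (len(i)>=2)])
        # Append to the lists.
        target_list.append(classfity)
        content_list.append(clear)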
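
The two small steps that do not depend on jieba, taking the label from the file path and dropping non-alphabetic characters, can be tried on their own. The sketch below is only an illustration: the helper names `label_from_path` and `keep_letters`, the sample path, and the sample sentence are all made up for this example.

```python
import os


def label_from_path(file_path):
    """Return the name of the directory that directly contains file_path."""
    return os.path.basename(os.path.dirname(file_path))


def keep_letters(text):
    """Drop every character for which str.isalpha() is False."""
    return "".join(ch for ch in text if ch.isalpha())


# Hypothetical path, built with os.path.join so it uses the local separator.
sample_path = os.path.join("data", "sports", "0001.txt")
print(label_from_path(sample_path))  # sports

# Digits, spaces and punctuation are removed; Latin and Chinese letters stay.
print(keep_letters("NBA 2019: 湖人队, 赢了!"))  # NBA湖人队赢了
```

Because spaces are removed as well, neighbouring English words get glued together ("hello world" becomes "helloworld"). That is harmless for Chinese text but worth keeping in mind if the corpus contains a lot of English.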

Screenshot:

Vectorize content_list, build the model, then use the model to predict and evaluate it.

Code:

    from sklearn.feature_extraction.text import TfidfVectorizer
    from sklearn.model_selection import train_test_split, cross_val_score
    from sklearn.naive_bayes import MultinomialNB
    from sklearn.metrics import classification_report

    path = r'F:\计算机\python\挖掘\data'
    target_list = []
    content_list = []
    # Read the files and append the processed data to the two lists.
    readFile(path)

    # Split into training and test sets, stratified by class.
    x_train, x_test, y_train, y_test = train_test_split(
        content_list, target_list, test_size=0.2, stratify=target_list)

    # Convert the texts to feature vectors. TfidfVectorizer is chosen because
    # word usage differs considerably between news categories.
    vectorizer = TfidfVectorizer()
    X_train = vectorizer.fit_transform(x_train)  # learn the vocabulary on the training set only
    X_test = vectorizer.transform(x_test)

    # Build the model. Multinomial naive Bayes is designed for discrete term counts;
    # fractional TF-IDF weights also work with it in practice.
    mnb = MultinomialNB()
    module = mnb.fit(X_train, y_train)
    # Predict on the test set.
    y_predict = module.predict(X_test)

    # Mean accuracy from 10-fold cross-validation on the training set.
    scores = cross_val_score(mnb, X_train, y_train, cv=10)
    print("Accuracy:%.3f" % scores.mean())
    # Evaluation report on the test set; the true labels come first.
    print("classification_report:\n", classification_report(y_test, y_predict))
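
To make the vectorization step less of a black box, here is a minimal pure-Python sketch of the weighting that `TfidfVectorizer` applies with its default settings: raw term counts, a smoothed IDF of `ln((1 + n) / (1 + df)) + 1`, then L2 normalization of each row. The function name `tfidf_rows` and the sample documents are invented for this example, and the sketch simply splits on spaces, whereas scikit-learn's default tokenizer also drops single-character tokens.

```python
import math
from collections import Counter


def tfidf_rows(docs):
    """Return one {term: weight} dict per document (space-separated tokens)."""
    tokenized = [doc.split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    # Smoothed inverse document frequency.
    idf = {term: math.log((1 + n_docs) / (1 + count)) + 1.0
           for term, count in df.items()}
    rows = []
    for tokens in tokenized:
        tf = Counter(tokens)  # raw term counts
        weights = {term: tf[term] * idf[term] for term in tf}
        # L2-normalize so every non-empty row has length 1.
        norm = math.sqrt(sum(w * w for w in weights.values()))
        rows.append({t: w / norm for t, w in weights.items()} if norm else {})
    return rows


# Hypothetical, already-tokenized documents.
docs = ["篮球 比赛 比赛", "股票 市场", "篮球 市场 股票"]
for row in tfidf_rows(docs):
    print(row)
```

Since every row is normalized to length 1, each weight lies between 0 and 1, and a weight close to 1 means that a single term carries almost all of that document's weight.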

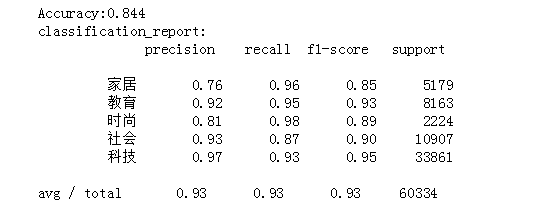
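
When reading a classification report, keep in mind that precision and recall are not symmetric in the true and predicted labels, which is why `classification_report` takes the true labels first and the predictions second. A small hand-rolled sketch shows what happens when the two are swapped. The helper `precision_recall` and the labels are made up for illustration and are not part of scikit-learn.

```python
def precision_recall(y_true, y_pred, label):
    """Precision and recall for one class, computed by hand."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
    predicted = sum(1 for p in y_pred if p == label)  # predicted as this class
    actual = sum(1 for t in y_true if t == label)     # truly this class
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


# Hypothetical labels: one sports article is misclassified as finance.
y_true = ["sports", "sports", "sports", "finance"]
y_pred = ["sports", "sports", "finance", "finance"]

print(precision_recall(y_true, y_pred, "sports"))  # precision 1.0, recall 2/3
print(precision_recall(y_pred, y_true, "sports"))  # swapped: precision 2/3, recall 1.0
```

Accuracy and the support-weighted F1 score hide this, because they stay the same when the arguments are swapped; only the per-class precision and recall columns change places.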

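Screenshot:

Extract the words with a high TF-IDF weight from the feature vectors, and compare the predicted results with the actual results (as a bar chart).

Code: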

    # Select terms by TF-IDF weight, threshold = 0.8.
    highWord = []
    cla = []
    coo = X_test.tocoo()  # iterate over the non-zero entries only
    for i, j, v in zip(coo.row, coo.col, coo.data):
        if v > 0.8:
            highWord.append(j)
            cla.append(i)

    # Look up which words these are (scikit-learn >= 1.0; older versions use get_feature_names).
    featureNames = vectorizer.get_feature_names_out()
    for i, j in zip(highWord, cla):
        print(featureNames[i], '\t', y_test[j])

    # Compare the predicted results with the actual results.
    import collections
    import matplotlib as mpl
    import matplotlib.pyplot as plt

    mpl.rcParams['font.sans-serif'] = ['FangSong']  # default font, needed if class names are Chinese
    mpl.rcParams['axes.unicode_minus'] = False  # keep the minus sign '-' from rendering as a box

    # Count the news items per class in the test labels and in the predictions.
    testCount = collections.Counter(y_test)
    predCount = collections.Counter(y_predict)
    print('Actual:', testCount, '\n', 'Predicted:', predCount)

    # Build the label list and the actual and predicted count lists in the same label order.
    nameList = list(testCount.keys())
    testList = [testCount[name] for name in nameList]
    predictList = [predCount[name] for name in nameList]
    x = list(range(len(nameList)))
    print("News classes:", nameList, '\n', "Actual:", testList, '\n', "Predicted:", predictList)

    # Plot.
    plt.figure(figsize=(7, 5))
    total_width, n = 0.6, 2
    width = total_width / n
    plt.bar(x, testList, width=width, label='Actual', fc='g')
    x = [xi + width for xi in x]  # shift the second group of bars to the right
    plt.bar(x, predictList, width=width, label='Predicted', tick_label=nameList, fc='b')
    plt.grid()
    plt.title('Actual vs. predicted', fontsize=17)
    plt.xlabel('News class', fontsize=17)
    plt.ylabel('Count', fontsize=17)
    plt.legend(fontsize=17)
    plt.tick_params(labelsize=17)
    plt.show()
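
One detail of the comparison deserves a closer look: a `Counter` keeps its keys in order of first appearance, so the `.values()` of two different counters do not necessarily line up class by class, and a class that was never predicted is missing from the prediction counter altogether. The sketch below builds both count lists from one shared label order. The helper name `aligned_counts` and the sample labels are hypothetical.

```python
from collections import Counter


def aligned_counts(y_true, y_pred):
    """Return labels plus true and predicted counts in the same label order."""
    true_count = Counter(y_true)
    pred_count = Counter(y_pred)
    # Union of both label sets, sorted for a stable order on the x axis.
    labels = sorted(set(true_count) | set(pred_count))
    # Indexing a Counter with a missing key returns 0 instead of raising KeyError.
    true_list = [true_count[label] for label in labels]
    pred_list = [pred_count[label] for label in labels]
    return labels, true_list, pred_list


# Hypothetical labels: class "c" is never predicted.
y_true = ["a", "b", "c", "a"]
y_pred = ["b", "a", "a", "a"]
print(aligned_counts(y_true, y_pred))  # (['a', 'b', 'c'], [2, 1, 1], [3, 1, 0])
```

The three returned lists can be passed straight to `plt.bar` as tick labels and bar heights, so each pair of bars is guaranteed to describe the same class.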

Screenshot: