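I. About SaltStack

1. What is SaltStack

SaltStack is a centralized management platform for server infrastructure, providing configuration management, remote execution, monitoring, and related functions.

It is implemented in Python and built on a lightweight message queue (ZeroMQ) together with third-party Python modules (PyZMQ, PyCrypto, Jinja2, python-msgpack, PyYAML, and others).

With SaltStack deployed, commands can be executed in batches across thousands or even tens of thousands of servers, and tasks such as centralized per-service configuration management, file distribution, server data collection, and operating system and package management can be handled from one place. It helps operations staff work more efficiently and standardize service configuration and procedures.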

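2. How SaltStack works:

SaltStack uses a client/server model. The server side is the Salt master and the client side is the minion; the two communicate over the ZeroMQ message queue.

When a minion comes online it first contacts the master and sends its public key. At that point the minion's key shows up on the master in the output of salt-key -L. Once the master accepts that key, the master and the minion trust each other.

The master can then send commands for the minion to execute. Salt ships many execution modules, such as the cmd module, which are installed together with the minion and normally live in the Python library path. Running locate salt | grep /usr/ shows everything that Salt installs.

These modules are Python files containing many functions, for example cmd.run. When we run salt '*' cmd.run 'uptime', the master publishes the job to the matching minions, each minion executes the module function, and the result is returned.

The master listens on ports 4505 and 4506. Port 4505 is the ZeroMQ PUB socket, used to publish messages to minions; port 4506 is the REP socket, used to receive messages from them.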

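3. The detailed steps are as follows:

- The Salt master and minions exchange messages through ZeroMQ using its publish-subscribe pattern; the supported transports include tcp and ipc.

- The salt command publishes the cmd.run ls command to the master through salt.client.LocalClient.cmd_cli and obtains a jobid, which is then used to fetch the result of the command.

- After receiving the command, the master sends it to the minions.

- The minion picks the command up from the message bus and hands it to minion._handle_aes.

- minion._handle_aes starts a local thread that calls cmdmod to run ls. When the thread finishes, it calls minion._return_pub to send the result back to the master over the message bus.

- The master receives the result returned by the minion and calls master._handle_aes to write it to a file.

- salt.client.LocalClient.cmd_cli polls for the job result and prints it to the terminal.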
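The publish, execute, return, and poll sequence described in these steps can be pictured with a toy, in-process model. This is only a sketch under stated assumptions, not Salt's implementation: the ToyMaster class, its method names, and the lambdas standing in for test.ping are hypothetical, and the real system moves these messages over ZeroMQ between separate hosts.

```python
import itertools
from fnmatch import fnmatch


class ToyMaster:
    """Hypothetical in-process stand-in for the master (not Salt code)."""

    def __init__(self, minions):
        self.minions = minions           # minion id -> {function name: callable}
        self.returns = {}                # jid -> {minion id: result}
        self._jids = itertools.count(1)  # simple increasing job ids

    def publish(self, target, fun, *args):
        """Hand the job to every matching minion and return its job id."""
        jid = next(self._jids)
        self.returns[jid] = {}
        for minion_id, modules in self.minions.items():
            if fnmatch(minion_id, target):
                # The "minion" runs the module function and returns the result.
                self.returns[jid][minion_id] = modules[fun](*args)
        return jid

    def get_returns(self, jid):
        """What a client would poll for once it holds a job id."""
        return self.returns[jid]


minions = {
    "server2": {"test.ping": lambda: True},
    "server3": {"test.ping": lambda: True},
}
master = ToyMaster(minions)
jid = master.publish("*", "test.ping")
print(jid, master.get_returns(jid))
```

The point of the model is the separation of concerns: the caller only holds a job id, and results are collected per minion under that id.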

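Lab prerequisites:

server1 172.25.58.1

server2 172.25.58.2

server3 172.25.58.3

Physical host: 172.25.58.250

On the physical host, build a local SaltStack repository and publish it through httpd's default document root, so that the three virtual machines can download packages from it.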

[root@foundation58 ~]# cd /var/www/html/

[root@foundation58 html]# ls

2019 4.0 new rhel6.5 rhel7.0 rhel7.3

[root@foundation58 html]# cd 2019/

[root@foundation58 2019]# ls

libsodium-1.0.18-1.el7.x86_64.rpm

libtomcrypt-1.17-25.el7.x86_64.rpm

libtommath-0.42.0-5.el7.x86_64.rpm

openpgm-5.2.122-2.el7.x86_64.rpm

python2-crypto-2.6.1-16.el7.x86_64.rpm

python2-futures-3.0.5-1.el7.noarch.rpm

python2-msgpack-0.5.6-5.el7.x86_64.rpm

python2-psutil-2.2.1-5.el7.x86_64.rpm

python-cherrypy-5.6.0-2.el7.noarch.rpm

python-tornado-4.2.1-1.el7.x86_64.rpm

python-zmq-15.3.0-3.el7.x86_64.rpm

PyYAML-3.11-1.el7.x86_64.rpm

repodata

salt-2019.2.0-1.el7.noarch.rpm

salt-api-2019.2.0-1.el7.noarch.rpm

salt-master-2019.2.0-1.el7.noarch.rpm

salt-minion-2019.2.0-1.el7.noarch.rpm

salt-ssh-2019.2.0-1.el7.noarch.rpm

salt-syndic-2019.2.0-1.el7.noarch.rpm

zeromq-4.1.4-7.el7.x86_64.rpm

[root@foundation58 ~]# createrepo /var/www/html/2019/

Spawning worker 0 with 5 pkgs

Spawning worker 1 with 5 pkgs

Spawning worker 2 with 5 pkgs

Spawning worker 3 with 4 pkgs

Workers Finished

Saving Primary metadata

Saving file lists metadata

Saving other metadata

Generating sqlite DBs

Sqlite DBs complete

Configure the yum repository on all three virtual machines:

[root@server1 yum.repos.d]# pwd

/etc/yum.repos.d

[root@server1 yum.repos.d]# cat salt.repo

[salt]

name=saltstack

baseurl=http://172.25.58.250/2019

gpgcheck=0
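Because a .repo file uses INI syntax, its contents can be sanity-checked before it is copied to every machine. The following is a minimal sketch using only the Python standard library; the read_repo helper is a hypothetical name, and the text is the salt.repo content shown above.

```python
import configparser

REPO_TEXT = """\
[salt]
name=saltstack
baseurl=http://172.25.58.250/2019
gpgcheck=0
"""


def read_repo(text):
    """Parse .repo text into {repo id: {option: value}} (hypothetical helper)."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    return {section: dict(parser[section]) for section in parser.sections()}


repos = read_repo(REPO_TEXT)
print(repos["salt"]["baseurl"])
```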

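1. Configure Salt

server1 acts as the master:

[root@server1 ~]# yum install -y salt-master

[root@server1 ~]# systemctl start salt-master

[root@server1 ~]# systemctl enable salt-master #start at boot

server2 and server3 act as minions, that is, the managed nodes.

#On each managed node (with the salt-minion package from the repository installed), set the master's address: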

[root@server2 ~]# cd /etc/salt/

[root@server2 salt]# ls

cloud cloud.maps.d master minion.d proxy.d

cloud.conf.d cloud.profiles.d master.d pki roster

cloud.deploy.d cloud.providers.d minion proxy

[root@server2 salt]# vim minion

# resolved, then the minion will fail to start.

master: 172.25.58.1

[root@server2 salt]# systemctl restart salt-minion

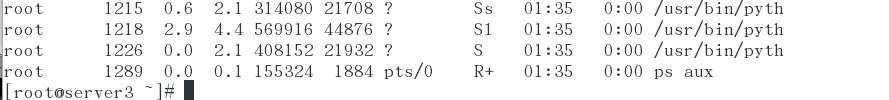

Do the same on server3.

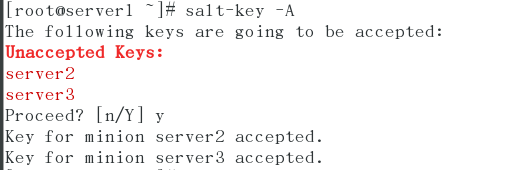

Now list the keys on server1 (both server2 and server3 have registered):

[root@server1 ~]# salt-key -L

Accepted Keys:

Denied Keys:

Unaccepted Keys:

server2

server3

Rejected Keys:

[root@server1 ~]# salt-key -A

The following keys are going to be accepted:

Unaccepted Keys:

server2

server3

Proceed? [n/Y] Y

Key for minion server2 accepted.

Key for minion server3 accepted.

Install lsof and tree:

[root@server1 ~]# yum install lsof tree -y

[root@server1 ~]# salt server2 test.ping

server2:

True

[root@server1 ~]# salt server3 test.ping

server3:

True

After the test, check ports 4505 and 4506:

[root@server1 ~]# lsof -i :4505

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

salt-mast 2285 root 15u IPv4 23602 0t0 TCP *:4505 (LISTEN)

salt-mast 2285 root 17u IPv4 27923 0t0 TCP sever1:4505->sever2:41228 (ESTABLISHED)

salt-mast 2285 root 18u IPv4 27936 0t0 TCP sever1:4505->sever3:58194 (ESTABLISHED)

[root@server1 ~]# lsof -i :4506

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

salt-mast 2291 root 23u IPv4 23610 0t0 TCP *:4506 (LISTEN)

Now test again:

The master listens on ports 4505 and 4506. Port 4505 is the ZeroMQ PUB socket, used to publish messages.

Port 4506 is the REP socket, used to receive messages.
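From a minion's point of view, the quickest check is whether both master ports accept TCP connections. The sketch below uses only the standard library; port_is_open and check_master are hypothetical helper names, and it tests reachability only, not whether Salt is actually answering on the port.

```python
import socket


def port_is_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_master(host, ports=(4505, 4506)):
    """Map each master port to whether it is reachable from this machine."""
    return {port: port_is_open(host, port) for port in ports}
```

On a minion, calling check_master("172.25.58.1") would report both ports; a False value usually points at a firewall rule or a stopped salt-master service.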

[root@server1 ~]# salt server2 test.ping

server2:

True

[root@server1 ~]# salt server3 test.ping

server3:

True

[root@server1 master]# salt server2 cmd.run hostname

server2:

server2

[root@server1 ~]# lsof -i :4505

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

salt-mast 2285 root 15u IPv4 23602 0t0 TCP *:4505 (LISTEN)

salt-mast 2285 root 17u IPv4 27923 0t0 TCP sever1:4505->sever2:41228 (ESTABLISHED)

salt-mast 2285 root 18u IPv4 27936 0t0 TCP sever1:4505->sever3:58194 (ESTABLISHED)

[root@server1 ~]# lsof -i :4506

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

salt-mast 2291 root 23u IPv4 23610 0t0 TCP *:4506 (LISTEN)

[root@server2 ~]# lsof -i :4505

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

salt-mini 2178 root 27u IPv4 21530 0t0 TCP sever2:38184->sever1:4505 (ESTABLISHED)

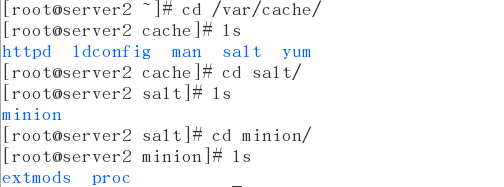

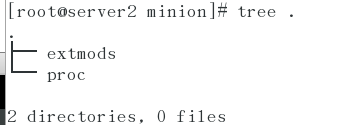

[root@server1 ~]# cd /etc/salt/pki/

[root@server1 pki]# tree .

.

├── master

│ ├── master.pem

│ ├── master.pub

│ ├── minions

│ │ ├── server2

│ │ └── server3

│ ├── minions_autosign

│ ├── minions_denied

│ ├── minions_pre

│ └── minions_rejected

└── minion

7 directories, 4 files

[root@server1 pki]# md5sum server2

0f44838afa6b0986b4ee1fe0c470a1b3 server2

[root@server1 pki]# ls

master minion

[root@server1 pki]# cd master/minions

[root@server1 minions]# ls

server2 server3

[root@server1 minions]# md5sum server2

0f44838afa6b0986b4ee1fe0c470a1b3 server2

[root@server1 master]# ls

master.pem minions minions_denied minions_rejected

master.pub minions_autosign minions_pre

[root@server1 master]# md5sum master.pub

401458b786d98f13235fe3ab5f254a57 master.pub

The MD5 checksums of the key files on server2 match those seen on server1:

[root@server2 pki]# pwd

/etc/salt/pki

[root@server2 pki]# tree .

.

├── master

└── minion

├── minion_master.pub

├── minion.pem

└── minion.pub

2 directories, 3 files

[root@server2 pki]# cd minion/

[root@server2 minion]# ls

minion_master.pub minion.pem minion.pub

[root@server2 minion]# md5sum minion.pub

0f44838afa6b0986b4ee1fe0c470a1b3 minion.pub

[root@server2 minion]# md5sum minion_master.pub

401458b786d98f13235fe3ab5f254a57 minion_master.pub
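The same comparison can be scripted. This is a minimal sketch that assumes both key files have been copied to one machine; md5_of and keys_match are hypothetical helper names. MD5 is used only to mirror md5sum here; a stronger digest such as SHA-256 would work the same way.

```python
import hashlib


def md5_of(path):
    """Return the hex MD5 digest of a file, like md5sum prints."""
    with open(path, "rb") as fh:
        return hashlib.md5(fh.read()).hexdigest()


def keys_match(path_a, path_b):
    """True when both files have identical contents (by MD5 digest)."""
    return md5_of(path_a) == md5_of(path_b)
```

For example, keys_match("minion.pub", "server2") would be expected to return True for the minion's public key and the copy accepted by the master.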

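Testing is complete; the salt-master and the minions are now configured.

Next, some practice.

As a prerequisite, create a working directory:

[root@server1 ~]# mkdir /srv/salt

II. Using SaltStack

Application 1: setting up Apache

/srv/salt is the default working directory.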

[root@server1 files]# pwd

/srv/salt/apache/files

[root@server1 files]# cd ..

[root@server1 apache]# ls

files service.sls

[root@server1 apache]# cat service.sls

apache-install:
  pkg.installed:
    - pkgs:
      - httpd
      - httpd-tools
  file.managed:
    - name: /etc/httpd/conf/httpd.conf
    - source: salt://apache/files/httpd.conf
  service.running:
    - name: httpd
    - reload: true
    - watch:
      - file: apache-install

Whether to use reload or restart depends on the service.

[root@server1 apache]# ls

files service.sls

[root@server1 apache]# scp server3:/etc/httpd/conf/httpd.conf files

[root@server1 apache]# cd files/

[root@server1 files]# ls

httpd.conf

Now apply the state; the output shows that it is deployed to server3 successfully:

[root@server1 apache]# salt server3 state.sls apache.service

#apache is the directory under /srv/salt, service is the SLS file

server3:

----------

ID: apache-install

Function: pkg.installed

Result: True

Comment: All specified packages are already installed

Started: 02:42:36.440826

Duration: 1516.674 ms

Changes:

----------

ID: apache-install

Function: file.managed

Name: /etc/httpd/conf/httpd.conf

Result: True

Comment: File /etc/httpd/conf/httpd.conf is in the correct state

Started: 02:42:38.003767

Duration: 96.749 ms

Changes:

----------

ID: apache-install

Function: service.running

Name: httpd

Result: True

Comment: Started Service httpd

Started: 02:42:38.103105

Duration: 278.095 ms

Changes:

----------

httpd:

True

Summary for server3

------------

Succeeded: 3 (changed=1)

Failed: 0

------------

Total states run: 3

Total run time: 1.892 s

Application 2: installing nginx from source

[root@server1 salt]# pwd

/srv/salt

[root@server1 salt]# mkdir nginx

[root@server1 salt]# cd nginx/

[root@server1 nginx]# vim install.sls

[root@server1 nginx]# pwd

/srv/salt/nginx

[root@server1 nginx]# ls

install.sls

[root@server1 nginx]# mkdir files

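Create the files directory and download the nginx source tarball into it. Then create the following directory and two files:

files #holds supporting files and the nginx source tarball

install.sls #state file that builds nginx from source

start.sls #state file that starts nginx

[root@server1 nginx]# ls

files install.sls start.sls

(1) First, build and install from source: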

[root@server1 nginx]# cat install.sls

nginx-install:
  pkg.installed:
    - pkgs:
      - gcc
      - openssl-devel
      - pcre-devel
  file.managed:
    - name: /mnt/nginx-1.17.4.tar.gz
    - source: salt://nginx/files/nginx-1.17.4.tar.gz
  cmd.run:
    - name: cd /mnt && tar zxf nginx-1.17.4.tar.gz && cd nginx-1.17.4 && sed -i.bak 's/CFLAGS="$CFLAGS -g"/#CFLAGS="$CFLAGS -g"/g' auto/cc/gcc && ./configure --prefix=/usr/local/nginx --with-http_ssl_module &> /dev/null && make &> /dev/null && make install &> /dev/null
    - creates: /usr/local/nginx
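The creates argument is what keeps the long build command from running again on every apply: if the named path already exists, the command is skipped. A rough model of that guard, assuming a Unix-like shell and using a hypothetical run_unless_created helper, looks like this:

```python
import os
import subprocess


def run_unless_created(command, creates):
    """Run `command` through the shell only if the path `creates` is absent.

    Returns None when the command was skipped, otherwise the
    subprocess.CompletedProcess of the run.
    """
    if os.path.exists(creates):
        return None
    return subprocess.run(command, shell=True, check=True)
```

The first call builds whatever the command produces; later calls return None as long as the path is still there, which is the idempotent behavior a state file relies on.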

(2) Create the state that starts the service:

[root@server1 nginx]# cat start.sls

include:
  - nginx.install

/usr/local/nginx/conf/nginx.conf:
  file.managed:
    - source: salt://nginx/files/nginx.conf

nginx-service:
  file.managed:
    - name: /usr/lib/systemd/system/nginx.service
    - source: salt://nginx/files/nginx.service
  service.running:
    - name: nginx
    - reload: true
    - watch:
      - file: /usr/local/nginx/conf/nginx.conf

Download a systemd unit file for a source-built nginx from the internet and adapt it:

[root@server1 files]# pwd

/srv/salt/nginx/files

[root@server1 files]# ls

nginx-1.17.4.tar.gz nginx.conf nginx.service

[root@server1 files]# cat nginx.service

[Unit]

Description=The NGINX HTTP and reverse proxy server

After=syslog.target network.target remote-fs.target nss-lookup.target

[Service]

Type=forking

PIDFile=/usr/local/nginx/logs/nginx.pid

ExecStartPre=/usr/local/nginx/sbin/nginx -t

ExecStart=/usr/local/nginx/sbin/nginx

ExecReload=/usr/local/nginx/sbin/nginx -s reload

ExecStop=/bin/kill -s QUIT $MAINPID

PrivateTmp=true

[Install]

WantedBy=multi-user.target

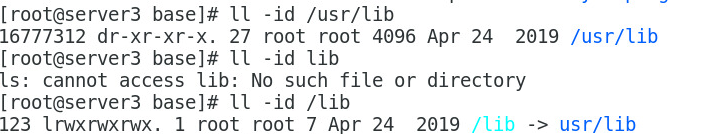

In fact /usr/lib and /lib are the same location; /lib is just a symbolic link.

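(3) The final step:

[root@server1 salt]# salt '*' state.highstate

About this command:

The master instructs all targeted minions to run state.highstate. When a minion runs a highstate, it fetches the top file, works out which entries match it, then downloads, compiles, and executes the matching states. When finished, the minion reports the result of every action and all changes made.

About salt '*' state.highstate test=True:

#This is a dry run, similar to a simulation: nothing is actually changed on the minion, which is convenient while writing and testing states.

Pushing to several nodes at once also works:

[root@server1 salt]# salt 'server[2,3]' state.highstate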
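How a target such as 'server[2,3]' and the entries of a top file select minions can be sketched with shell-style wildcard matching, which Python's fnmatch implements. The TOP mapping below is an assumption inferred from which states each minion runs in this walkthrough (the contents of top.sls are not shown in the source), and states_for and match_minions are hypothetical helper names.

```python
from fnmatch import fnmatch

# Assumed top-file layout (not taken from the actual top.sls):
# environment -> target pattern -> list of SLS names.
TOP = {
    "base": {
        "server2": ["nginx.start"],
        "server3": ["apache.service"],
    }
}


def states_for(minion_id, top=TOP, saltenv="base"):
    """Collect the SLS names whose target pattern matches this minion id."""
    states = []
    for target, sls_list in top[saltenv].items():
        if fnmatch(minion_id, target):
            states.extend(sls_list)
    return states


def match_minions(target, minion_ids):
    """Return the minion ids selected by a glob target."""
    return [m for m in minion_ids if fnmatch(m, target)]


print(match_minions("server[2,3]", ["server1", "server2", "server3"]))
print(states_for("server2"))
```

Note that inside the brackets the comma is just another character in the set, so 'server[23]' selects the same two hosts.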

[root@server1 salt]# ls

apache nginx top.sls

[root@server1 salt]# tree .

.

├── apache

│ ├── files

│ │ └── httpd.conf

│ └── service.sls

├── nginx

│ ├── files

│ │ ├── nginx-1.17.4.tar.gz

│ │ ├── nginx.conf

│ │ └── nginx.service

│ ├── install.sls

│ └── start.sls

└── top.sls

4 directories, 8 files

The push succeeds, with the following result:

[root@server1 salt]# salt '*' state.highstate

server3:

----------

ID: apache-install

Function: pkg.installed

Result: True

Comment: The following packages were installed/updated: httpd, httpd-tools

Started: 15:33:21.632120

Duration: 4788.075 ms

Changes:

----------

apr:

----------

new:

1.4.8-3.el7

old:

apr-util:

----------

new:

1.5.2-6.el7

old:

httpd:

----------

new:

2.4.6-45.el7

old:

httpd-tools:

----------

new:

2.4.6-45.el7

old:

mailcap:

----------

new:

2.1.41-2.el7

old:

----------

ID: apache-install

Function: file.managed

Name: /etc/httpd/conf/httpd.conf

Result: True

Comment: File /etc/httpd/conf/httpd.conf is in the correct state

Started: 15:33:26.463460

Duration: 108.513 ms

Changes:

----------

ID: apache-install

Function: service.running

Name: httpd

Result: True

Comment: Started Service httpd

Started: 15:33:28.299062

Duration: 127.855 ms

Changes:

----------

httpd:

True

Summary for server3

------------

Succeeded: 3 (changed=2)

Failed: 0

------------

Total states run: 3

Total run time: 5.024 s

server2:

----------

ID: nginx-install

Function: pkg.installed

Result: True

Comment: 3 targeted packages were installed/updated.

Started: 15:33:21.167920

Duration: 10206.927 ms

Changes:

----------

cpp:

----------

new:

4.8.5-11.el7

old:

gcc:

----------

new:

4.8.5-11.el7

old:

glibc-devel:

----------

new:

2.17-157.el7

old:

glibc-headers:

----------

new:

2.17-157.el7

old:

kernel-headers:

----------

new:

3.10.0-514.el7

old:

keyutils-libs-devel:

----------

new:

1.5.8-3.el7

old:

krb5-devel:

----------

new:

1.14.1-26.el7

old:

libcom_err-devel:

----------

new:

1.42.9-9.el7

old:

libkadm5:

----------

new:

1.14.1-26.el7

old:

libmpc:

----------

new:

1.0.1-3.el7

old:

libselinux-devel:

----------

new:

2.5-6.el7

old:

libsepol-devel:

----------

new:

2.5-6.el7

old:

libverto-devel:

----------

new:

0.2.5-4.el7

old:

mpfr:

----------

new:

3.1.1-4.el7

old:

openssl-devel:

----------

new:

1:1.0.1e-60.el7

old:

pcre-devel:

----------

new:

8.32-15.el7_2.1

old:

zlib-devel:

----------

new:

1.2.7-17.el7

old:

----------

ID: nginx-install

Function: file.managed

Name: /mnt/nginx-1.17.4.tar.gz

Result: True

Comment: File /mnt/nginx-1.17.4.tar.gz updated

Started: 15:33:31.401550

Duration: 209.246 ms

Changes:

----------

diff:

New file

mode:

0644

----------

ID: nginx-install

Function: cmd.run

Name: cd /mnt && tar zxf nginx-1.17.4.tar.gz && cd nginx-1.17.4 && sed -i.bak 's/CFLAGS="$CFLAGS -g"/#CFLAGS="$CFLAGS -g"/g' auto/cc/gcc && ./configure --prefix=/usr/local/nginx --with-http_ssl_module &> /dev/null && make &> /dev/null && make install &> /dev/null

Result: True

Comment: Command "cd /mnt && tar zxf nginx-1.17.4.tar.gz && cd nginx-1.17.4 && sed -i.bak 's/CFLAGS="$CFLAGS -g"/#CFLAGS="$CFLAGS -g"/g' auto/cc/gcc && ./configure --prefix=/usr/local/nginx --with-http_ssl_module &> /dev/null && make &> /dev/null && make install &> /dev/null" run

Started: 15:33:31.616297

Duration: 24555.504 ms

Changes:

----------

pid:

2469

retcode:

0

stderr:

stdout:

----------

ID: /usr/local/nginx/conf/nginx.conf

Function: file.managed

Result: True

Comment: File /usr/local/nginx/conf/nginx.conf is in the correct state

Started: 15:33:56.172085

Duration: 20.27 ms

Changes:

----------

ID: nginx-service

Function: file.managed

Name: /usr/lib/systemd/system/nginx.service

Result: True

Comment: File /usr/lib/systemd/system/nginx.service updated

Started: 15:33:56.192527

Duration: 18.645 ms

Changes:

----------

diff:

New file

mode:

0644

----------

ID: nginx-service

Function: service.running

Name: nginx

Result: True

Comment: Started Service nginx

Started: 15:33:57.271475

Duration: 71.306 ms

Changes:

----------

nginx:

True

Summary for server2

------------

Succeeded: 6 (changed=5)

Failed: 0

------------

Total states run: 6

Total run time: 35.082 s

The result shows Succeeded: 6, so the run was successful.

The nginx source was compiled and installed.

Verification:

On server2, the source-built nginx is in place:

[root@server2 ~]# ll /usr/local/nginx/

total 0

drwx------ 2 nobody root 6 Nov 26 15:33 client_body_temp

drwxr-xr-x 2 root root 333 Nov 27 10:37 conf

drwx------ 2 nobody root 6 Nov 26 15:33 fastcgi_temp

drwxr-xr-x 2 root root 40 Nov 26 15:33 html

drwxr-xr-x 2 root root 58 Nov 26 15:33 logs

drwx------ 2 nobody root 6 Nov 26 15:33 proxy_temp

drwxr-xr-x 2 root root 19 Nov 26 15:33 sbin

drwx------ 2 nobody root 6 Nov 26 15:33 scgi_temp

drwx------ 2 nobody root 6 Nov 26 15:33 uwsgi_temp

Optimizations:

1. Change the number of worker processes, then push and verify:

On server1, edit the nginx.conf copied over from server2 and change the worker process count:

[root@server1 files]# cat nginx.conf

#user nobody;

worker_processes 2;

[root@server1 nginx]# ls

files install.sls start.sls

[root@server1 nginx]# salt server2 state.sls nginx.start #apply nginx/start.sls to server2

On server2, check the worker processes:

2391 ? Ss 0:00 nginx: master process /usr/local/nginx/sbin/nginx

2392 ? S 0:00 nginx: worker process

2393 ? S 0:00 nginx: worker process

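2405 pts/0 R+ 0:00 ps ax

2. Refine the state further: start nginx only if the pid file does not exist

#the lines below are newly added

/usr/local/nginx/sbin/nginx:
  cmd.run:
    - creates: /usr/local/nginx/logs/nginx.pid

However, the nginx unit file already takes care of this, so it is not needed here.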

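3. A further refinement is to add cmd.wait to the state file

About cmd.wait:

cmd.run is executed every time the state is applied;

cmd.wait is executed only when a watched state reports a change, and is normally used together with watch.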
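The difference can be modeled in a few lines: an action that fires only when the watched content differs from what was seen last time. This is a conceptual sketch, not how Salt tracks changes internally; the WatchedCommand class is a hypothetical name, and a content hash stands in for the change report of the watched file state.

```python
import hashlib


class WatchedCommand:
    """Run an action only when the watched content changes (toy model)."""

    def __init__(self, action):
        self.action = action
        self._last_digest = None  # nothing seen yet, so the first apply fires

    def apply(self, content):
        """Return True if the content changed and the action was run."""
        digest = hashlib.sha256(content.encode()).hexdigest()
        changed = digest != self._last_digest
        self._last_digest = digest
        if changed:
            self.action()
        return changed


calls = []
reload_cmd = WatchedCommand(lambda: calls.append("reload"))
reload_cmd.apply("worker_processes 1;")  # first deployment counts as a change
reload_cmd.apply("worker_processes 1;")  # unchanged, action skipped
reload_cmd.apply("worker_processes 2;")  # changed, action runs again
print(calls)
```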

/usr/local/nginx/sbin/nginx -s reload:
  cmd.wait:
    - watch:
      - file: /usr/local/nginx/conf/nginx.conf

Application 3: setting up haproxy

1. Write the state file: install haproxy first, then copy the haproxy configuration file over with scp

[root@server1 salt]# ls

apache haproxy nginx top.sls

[root@server1 salt]# cd haproxy/

[root@server1 haproxy]# ls

files install.sls

[root@server1 haproxy]# cat install.sls

haproxy-install:
  pkg.installed:
    - pkgs:
      - haproxy

Push the state to install haproxy on server4:

[root@server1 salt]# salt server4 state.sls haproxy.install

server4:

----------

ID: haproxy-install

Function: pkg.installed

Result: True

Comment: The following packages were installed/updated: haproxy

Started: 15:09:10.089318

Duration: 3138.347 ms

Changes:

----------

haproxy:

----------

new:

1.5.18-3.el7

old:

Summary for server4

------------

Succeeded: 1 (changed=1)

Failed: 0

------------

Total states run: 1

Total run time: 3.138 s

Copy the configuration file from server4 into the files directory on server1:

[root@server1 haproxy]# scp [email protected]:/etc/haproxy/haproxy.cfg files

2. Complete install.sls by adding the file management and service states

[root@server1 salt]# cat haproxy/

files/ install.sls

[root@server1 salt]# cat haproxy/install.sls

haproxy-install:
  pkg.installed:
    - pkgs:
      - haproxy
  file.managed:
    - name: /etc/haproxy/haproxy.cfg
    - source: salt://haproxy/files/haproxy.cfg
  service.running:
    - name: haproxy
    - reload: True
    - watch:
      - file: haproxy-install

For the haproxy configuration file: first install haproxy on server4, then copy the configuration file from server4 into /srv/salt/haproxy/files and edit it as needed.

[root@server1 salt]# cat haproxy/files/haproxy.cfg

#---------------------------------------------------------------------

# Example configuration for a possible web application. See the

# full configuration options online.

#

# http://haproxy.1wt.eu/download/1.4/doc/configuration.txt

#

#---------------------------------------------------------------------

#---------------------------------------------------------------------

# Global settings

#---------------------------------------------------------------------

global

# to have these messages end up in /var/log/haproxy.log you will

# need to:

#

# 1) configure syslog to accept network log events. This is done

# by adding the '-r' option to the SYSLOGD_OPTIONS in

# /etc/sysconfig/syslog

#

# 2) configure local2 events to go to the /var/log/haproxy.log

# file. A line like the following can be added to

# /etc/sysconfig/syslog

#

# local2.* /var/log/haproxy.log

#

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

# turn on stats unix socket

stats socket /var/lib/haproxy/stats

#---------------------------------------------------------------------

# common defaults that all the 'listen' and 'backend' sections will

# use if not designated in their block

#---------------------------------------------------------------------

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

#---------------------------------------------------------------------

# main frontend which proxys to the backends

#---------------------------------------------------------------------

frontend main *:8080

default_backend app

backend app

balance roundrobin

server app1 127.0.0.2:80 check

server app2 127.0.0.3:80 check
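The balance roundrobin line in the backend means requests are handed to app1 and app2 in turn. A simplified picture of that rotation, ignoring weights and health checks (which haproxy also takes into account), can be written with itertools.cycle; the round_robin helper is a hypothetical name.

```python
from itertools import cycle


def round_robin(servers):
    """Yield servers in rotation, one per incoming request (simplified)."""
    return cycle(servers)


backends = round_robin(["app1", "app2"])
picks = [next(backends) for _ in range(4)]
print(picks)
```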

Push the state; it succeeds:

[root@server1 haproxy]# ls

files install.sls

[root@server1 haproxy]# salt server4 state.sls haproxy.install

server4:

----------

ID: haproxy-install

Function: pkg.installed

Result: True

Comment: All specified packages are already installed

Started: 15:49:23.013975

Duration: 517.771 ms

Changes:

----------

ID: haproxy-install

Function: file.managed

Name: /etc/haproxy/haproxy.cfg

Result: True

Comment: File /etc/haproxy/haproxy.cfg updated

Started: 15:49:23.533969

Duration: 31.696 ms

Changes:

----------

diff:

---

+++

@@ -60,7 +60,7 @@

#---------------------------------------------------------------------

# main frontend which proxys to the backends

#---------------------------------------------------------------------

-frontend main *:80

+frontend main *:8080

default_backend app

backend app

----------

ID: haproxy-install

Function: service.running

Name: haproxy

Result: True

Comment: Service reloaded

Started: 15:49:23.597898

Duration: 22.382 ms

Changes:

----------

haproxy:

True

Summary for server4

------------

Succeeded: 3 (changed=2)

Failed: 0

------------

Total states run: 3

Total run time: 571.849 ms

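Pay attention to the port here. During the experiment I first set haproxy on server4 to listen on port 80, but port 80 was already in use, so the service failed to start.

Application 4: keepalived + haproxy

1. Install keepalived first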

[root@server1 salt]# ls

apache haproxy keepalived nginx top.sls

[root@server1 salt]# cd keepalived/

[root@server1 keepalived]# ls

install.sls

[root@server1 salt]# salt server4 state.sls keepalived.install

server4:

----------

ID: kp-install

Function: pkg.installed

Result: True

Comment: The following packages were installed/updated: keepalived

Started: 16:57:13.608327

Duration: 3358.124 ms

Changes:

----------

keepalived:

----------

new:

1.2.13-8.el7

old:

lm_sensors-libs:

----------

new:

3.4.0-4.20160601gitf9185e5.el7

old:

net-snmp-agent-libs:

----------

new:

1:5.7.2-24.el7_2.1

old:

net-snmp-libs:

----------

new:

1:5.7.2-24.el7_2.1

old:

Summary for server4

------------

Succeeded: 1 (changed=1)

Failed: 0

------------

Total states run: 1

Total run time: 3.358 s

2. Then copy keepalived's main configuration file

[root@server1 files]# scp [email protected]:/etc/keepalived/keepalived.conf .

[email protected]'s password:

keepalived.conf 100% 3562 3.5KB/s 00:00

[root@server1 files]# ls

keepalived.conf

3. Edit the main configuration file

[root@server1 keepalived]# pwd

/srv/salt/keepalived

[root@server1 keepalived]# ls

files install.sls

[root@server1 keepalived]# cat files/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

[email protected]

[email protected]

[email protected]

}

notification_email_from [email protected]

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_instance VI_1 {

state {{STATE}}

interface eth0

virtual_router_id {{VRID}}

priority {{PRIORITY}}

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.25.58.100

}

}

4. Write the install state file

[root@server1 keepalived]# ls

files install.sls

[root@server1 keepalived]# cat install.sls

kp-install:
  pkg.installed:
    - pkgs:
      - keepalived
  file.managed:
    - name: /etc/keepalived/keepalived.conf
    - source: salt://keepalived/files/keepalived.conf
    - template: jinja
    # template variables are passed through the context argument
    - context:
      {% if grains['fqdn'] == 'server1' %}
        STATE: MASTER
        VRID: 51
        PRIORITY: 100
      {% elif grains['fqdn'] == 'server4' %}
        STATE: BACKUP
        VRID: 51
        PRIORITY: 50
      {% endif %}
  service.running:
    - name: keepalived
    - reload: True
    - watch:
      - file: kp-install
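The effect of the per-host values is easier to see in isolation: the host's fqdn selects a set of variables, and those are substituted into the {{STATE}}, {{VRID}}, and {{PRIORITY}} placeholders of keepalived.conf. The sketch below imitates that with plain string replacement as a stand-in for Jinja rendering; ROLE_BY_FQDN and render are hypothetical names, and the template is a shortened excerpt.

```python
# Values per host, mirroring the if/elif branches of the state file.
ROLE_BY_FQDN = {
    "server1": {"STATE": "MASTER", "VRID": 51, "PRIORITY": 100},
    "server4": {"STATE": "BACKUP", "VRID": 51, "PRIORITY": 50},
}

TEMPLATE = """\
vrrp_instance VI_1 {
    state {{STATE}}
    interface eth0
    virtual_router_id {{VRID}}
    priority {{PRIORITY}}
}
"""


def render(template, fqdn):
    """Substitute this host's values into the placeholders (Jinja stand-in)."""
    text = template
    for key, value in ROLE_BY_FQDN[fqdn].items():
        text = text.replace("{{" + key + "}}", str(value))
    return text


print(render(TEMPLATE, "server4"))
```

Keeping the differences in data rather than in separate configuration files means one template serves both the MASTER and the BACKUP node.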

5. Push the state

[root@server1 keepalived]# salt server4 state.sls keepalived.install

server4:

----------

ID: kp-install

Function: pkg.installed

Result: True

Comment: All specified packages are already installed

Started: 17:21:21.134990

Duration: 522.619 ms

Changes:

----------

ID: kp-install

Function: file.managed

Name: /etc/keepalived/keepalived.conf

Result: True

Comment: File /etc/keepalived/keepalived.conf updated

Started: 17:21:21.659836

Duration: 43.304 ms

Changes:

----------

diff:

---

+++

@@ -13,19 +13,17 @@

}

vrrp_instance VI_1 {

- state MASTER

+ state BACKUP

interface eth0

virtual_router_id 51

- priority 100

+ priority 50

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

- 192.168.200.16

- 192.168.200.17

- 192.168.200.18

+ 172.25.58.100

}

}

----------

ID: kp-install

Function: service.running

Name: keepalived

Result: True

Comment: Started Service keepalived

Started: 17:21:21.704058

Duration: 82.764 ms

Changes:

----------

keepalived:

True

Summary for server4

------------

Succeeded: 3 (changed=2)

Failed: 0

------------

Total states run: 3

Total run time: 648.687 ms