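Preface

My long-postponed study of the HBase API is finally on the agenda. When I opened the virtual machine I had created earlier, it turned out to have crashed, so I simply created a new one and installed a standalone HBase on top of a single-node Hadoop. The process is recorded below.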

Preparation

- One virtual machine in NAT mode (created with VMware); for the procedure see https://blog.csdn.net/qq_38586378/article/details/86996644

- JDK, download: https://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

- hadoop-2.4.0, download: http://archive.apache.org/dist/hadoop/common/hadoop-2.4.0/

- hbase-1.3.3, download: http://archive.apache.org/dist/hbase/1.3.3/ Pay attention to version compatibility between HBase and Hadoop; see https://blog.csdn.net/renzhewudi77/article/details/83826779

- One very important point: even on a single node, SSH keys must be configured for Hadoop to start properly. For SSH configuration see https://blog.csdn.net/qq_38586378/article/details/81352358

1. ssh-keygen -t rsa (press Enter at each prompt to accept the defaults)

2. cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
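The two commands above can be made repeatable with a small helper. This is only a sketch: the function name authorize_key is made up for illustration, and it assumes the default OpenSSH layout under ~/.ssh. It appends the public key only if it is not already listed, and tightens the permissions that sshd normally insists on.

```shell
# Append a public key to authorized_keys exactly once and fix permissions.
# $1 = public key file, $2 = ssh directory (defaults to ~/.ssh).
authorize_key() {
  local pub="$1" dir="${2:-$HOME/.ssh}"
  mkdir -p "$dir"
  touch "$dir/authorized_keys"
  # -x whole line, -F fixed string, -f patterns from file:
  # skip the append when the key is already present.
  grep -qxF -f "$pub" "$dir/authorized_keys" || cat "$pub" >> "$dir/authorized_keys"
  chmod 700 "$dir"
  chmod 600 "$dir/authorized_keys"
}

# On the VM (left commented out so the sketch has no side effects):
#   ssh-keygen -t rsa -N "" -f ~/.ssh/id_rsa   # -N "" means empty passphrase
#   authorize_key ~/.ssh/id_rsa.pub
#   ssh localhost hostname                     # should not ask for a password
```

If the last command still prompts for a password, the permissions on ~/.ssh and authorized_keys are the first thing to check.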

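Installation

1. Extract all the archives with tar -zxvf and move them to the installation directory /usr/local/src/ (adjust to your own needs)

2. Configure the environment variables and run source /etc/profile to apply them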
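Steps 1 and 2 might look roughly like the following. The archive file names and the HADOOP_HOME / HBASE_HOME paths are my assumptions based on the versions listed in the preparation section; only JAVA_HOME is taken from the hadoop-env.sh setting used later in this post. The helper name extract_to_install_dir is also just for illustration.

```shell
# Installation directory used in this post; override if yours differs.
INSTALL_DIR="${INSTALL_DIR:-/usr/local/src}"

# Extract one .tar.gz archive into the installation directory.
extract_to_install_dir() {
  mkdir -p "$INSTALL_DIR"
  tar -zxf "$1" -C "$INSTALL_DIR"
}

# On the VM (archive names are assumptions):
#   for a in jdk-8u201-linux-x64.tar.gz hadoop-2.4.0.tar.gz hbase-1.3.3-bin.tar.gz; do
#     extract_to_install_dir "$a"
#   done

# Lines of this kind go at the end of /etc/profile (paths are assumptions
# except JAVA_HOME), followed by: source /etc/profile
export JAVA_HOME=/usr/local/src/jdk1.8.0_201
export HADOOP_HOME=/usr/local/src/hadoop-2.4.0
export HBASE_HOME=/usr/local/src/hbase-1.3.3
export PATH="$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HBASE_HOME/bin"
```

Putting $HADOOP_HOME/sbin on the PATH is what lets start-dfs.sh and start-yarn.sh be called without a full path.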

3. Hadoop single-node configuration

3.1 Configure hadoop-2.4.0/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/local/src/jdk1.8.0_201

export HADOOP_NAMENODE_OPTS=" -Xms1024m -Xmx1024m -XX:+UseParallelGC"

export HADOOP_DATANODE_OPTS=" -Xms1024m -Xmx1024m"

3.2 Configure hadoop-2.4.0/etc/hadoop/core-site.xml, where 8020 is the port the HDFS NameNode listens on (replace ranger with your VM's hostname)

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://ranger:8020</value>

</property>

</configuration>

3.3 Configure hadoop-2.4.0/etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///usr/local/src/hadoop/hdfs/nn</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:///usr/local/src/hadoop/hdfs/dn</value>

</property>

</configuration>

3.4 Format the NameNode

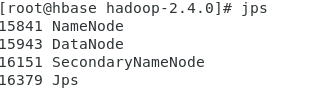
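In text form, formatting in Hadoop 2.x is normally done with hdfs namenode -format. The sketch below wraps it in a guard so that an already formatted NameNode directory is not wiped by accident. The function name is hypothetical, and the check relies on the current/VERSION file that a formatted name directory contains.

```shell
# Directory configured as dfs.namenode.name.dir in step 3.3.
NN_DIR="${NN_DIR:-/usr/local/src/hadoop/hdfs/nn}"

# Format the NameNode unless the directory already holds formatted metadata.
# $1 = NameNode metadata directory.
format_namenode_if_needed() {
  if [ -f "$1/current/VERSION" ]; then
    echo "already formatted: $1"
  else
    hdfs namenode -format
  fi
}

# On the VM (commented out so the sketch has no side effects):
#   format_namenode_if_needed "$NN_DIR"
```

Re-formatting generates a new cluster ID and discards the existing HDFS metadata, so it is worth doing only on a fresh install or when you really want to start over.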

3.5 Start Hadoop and check that it came up successfully
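One way to do this check is to run start-dfs.sh and then list the Java processes with jps (a tool shipped with the JDK). A working single-node HDFS should show NameNode, DataNode and SecondaryNameNode. The helper below is a sketch with a made-up name; it reads jps-style output and prints whichever of the three daemons is missing.

```shell
# Print the expected HDFS daemons that are absent from jps-style input
# ("<pid> <ClassName>" per line) read on stdin.
missing_daemons() {
  local listing name
  listing="$(cat)"
  for name in NameNode DataNode SecondaryNameNode; do
    # -w matches whole words, so "NameNode" does not match "SecondaryNameNode".
    printf '%s\n' "$listing" | grep -qw "$name" || echo "$name"
  done
}

# On the VM (commented out so the sketch has no side effects):
#   start-dfs.sh
#   jps | missing_daemons    # prints nothing when all three are running
```

If a daemon is reported missing, its log file under the Hadoop logs directory is usually the quickest place to find the reason.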

3.6 Check the node status in the web UI at hbase:50070/ (hbase here is the VM's hostname)

3.7 If starting Hadoop fails

Error:

Starting namenodes on [master]

ERROR: Attempting to operate on hdfs namenode as root

ERROR: but there is no HDFS_NAMENODE_USER defined. Aborting operation.

Starting datanodes

ERROR: Attempting to operate on hdfs datanode as root

ERROR: but there is no HDFS_DATANODE_USER defined. Aborting operation.

Starting secondary namenodes [slave1]

ERROR: Attempting to operate on hdfs secondarynamenode as root

ERROR: but there is no HDFS_SECONDARYNAMENODE_USER defined. Aborting operation.

Solution:

In the /hadoop/sbin directory:

Add the following parameters at the top of both start-dfs.sh and stop-dfs.sh

HDFS_DATANODE_USER=root

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

The following also needs to be added at the top of start-yarn.sh and stop-yarn.sh

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

Then repeat steps 3.4-3.5: format again and restart the Hadoop services (if it still fails, try configuring SSH).
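Editing four scripts by hand is easy to get wrong, so the change described in 3.7 could also be scripted. The sketch below is an assumption-laden convenience, not part of Hadoop: the helper name insert_after_shebang is mine, and it simply places the variable lines right after the first line (the shebang) of a script.

```shell
# Insert text right after the first line (the shebang) of a script.
# $1 = script file, $2 = text to insert (may span several lines).
insert_after_shebang() {
  local file="$1" text="$2" tmp
  tmp="$(mktemp)"
  { head -n 1 "$file"; printf '%s\n' "$text"; tail -n +2 "$file"; } > "$tmp"
  cat "$tmp" > "$file"   # overwrite in place so the file keeps its mode
  rm -f "$tmp"
}

# Variable lists exactly as given in 3.7.
HDFS_USERS='HDFS_DATANODE_USER=root
HADOOP_SECURE_DN_USER=hdfs
HDFS_NAMENODE_USER=root
HDFS_SECONDARYNAMENODE_USER=root'

YARN_USERS='YARN_RESOURCEMANAGER_USER=root
HADOOP_SECURE_DN_USER=yarn
YARN_NODEMANAGER_USER=root'

# On the VM (commented out so the sketch has no side effects):
#   cd /hadoop/sbin
#   for f in start-dfs.sh stop-dfs.sh;   do insert_after_shebang "$f" "$HDFS_USERS"; done
#   for f in start-yarn.sh stop-yarn.sh; do insert_after_shebang "$f" "$YARN_USERS"; done
```

Running it twice would insert the lines twice, so it is meant as a one-off; keeping a backup copy of the original scripts first is a good idea.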

3.8 If you also need the YARN resource manager, go on to configure mapred-site.xml and yarn-site.xml

# etc/hadoop/mapred-site.xml:

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

# etc/hadoop/yarn-site.xml:

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

4. HBase standalone configuration

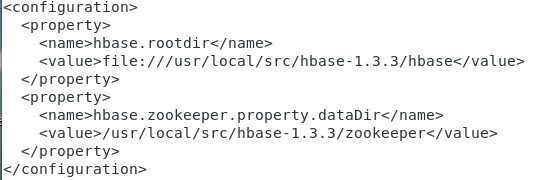

4.1 Configure hbase-1.3.3/conf/hbase-site.xml

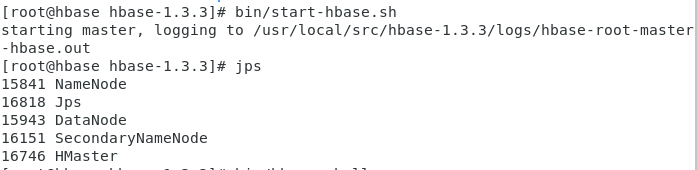
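Since the configuration above is only available as a screenshot, here is a text sketch of what a minimal hbase-site.xml for this setup might contain. Treat the values as my assumptions rather than a transcription of the image: hbase.rootdir points at the NameNode address from core-site.xml, and the ZooKeeper data directory is a hypothetical path. The helper name write_hbase_site is also made up.

```shell
# Write a minimal hbase-site.xml into the given conf directory.
# Overwrites any existing hbase-site.xml there.
# $1 = HBase conf directory, e.g. /usr/local/src/hbase-1.3.3/conf
write_hbase_site() {
  local conf_dir="$1"
  mkdir -p "$conf_dir"
  cat > "$conf_dir/hbase-site.xml" <<'EOF'
<configuration>
  <!-- Assumption: store HBase data in the HDFS instance from core-site.xml -->
  <property>
    <name>hbase.rootdir</name>
    <value>hdfs://ranger:8020/hbase</value>
  </property>
  <!-- Assumption: hypothetical local path for the embedded ZooKeeper data -->
  <property>
    <name>hbase.zookeeper.property.dataDir</name>
    <value>/usr/local/src/hbase/zookeeper</value>
  </property>
</configuration>
EOF
}

# On the VM (commented out so the sketch has no side effects):
#   write_hbase_site /usr/local/src/hbase-1.3.3/conf
```

The host and port in hbase.rootdir have to match fs.defaultFS exactly, otherwise HBase will fail to reach HDFS at startup.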

4.2 Run bin/start-hbase.sh to check that the installation succeeded

4.3 Run bin/hbase shell to check that HBase is usable
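For 4.3, a quick smoke test is to create a table, write a cell, read it back and clean up. The sketch below just prints standard HBase shell commands so they can be piped into the shell; the table name smoke_test and column family cf are arbitrary names chosen for illustration.

```shell
# Print a short sequence of HBase shell commands for a smoke test.
hbase_smoke_commands() {
  cat <<'EOF'
create 'smoke_test', 'cf'
put 'smoke_test', 'row1', 'cf:a', 'value1'
scan 'smoke_test'
disable 'smoke_test'
drop 'smoke_test'
EOF
}

# On the VM, from the HBase directory (commented out so the sketch has no
# side effects); -n runs the shell in non-interactive mode:
#   hbase_smoke_commands | bin/hbase shell -n
```

If the scan shows row1 with value1, both HBase and its storage underneath are working; the same commands can of course be typed by hand in an interactive bin/hbase shell session.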

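Summary

Learn to install the standalone, pseudo-distributed and fully distributed versions as your needs require; for initial study, the standalone version is generally enough.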