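Natural Language Processing with TensorFlow (Automatically Generating Classical Chinese Poems)

In my previous post I wrote about recognizing CAPTCHAs with a CNN, which showed how powerful neural networks can be. This post explains how an LSTM network, a kind of RNN, handles natural language: given a single character as input, it generates a whole classical Chinese poem.

The core idea of an RNN is that consecutive samples are strongly related to each other. That makes it a good fit for natural language, because each thing we say depends on what came before it. I won't go deep into RNN theory here, since plenty of material can be found with a Baidu search; this post focuses on the worked example.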

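Steps and approach

1. First we need a training set. A shallow network like this one is not suited to a broad domain, so the training set should stay within one narrow area, for example only the Three Hundred Tang Poems, or the poetry of a single period. I write a poem or two myself now and then, so I know that each period has its own style. Here I collected a general poetry collection, a set of seven-character regulated verse (七言律诗) and a set of five-character regulated verse (五言律诗), and trained on the three separately to get three different models. The poems can be crawled from websites.

2. Next the poems have to be preprocessed. Many people use word segmentation here, but when I tried it the results were poor, because jieba (结巴分词) does not segment classical poetry well. So I split the text into single characters instead; the characters inside an ordinary word are strongly related to each other anyway. After splitting, each character is converted to a number, because the machine only works with numbers.

3. Build the model. Here we build a two-layer stacked LSTM. Two layers already work quite well; three layers would also be fine.

Processing the training data

Splitting the poems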

import collections

start_token = 'B'
end_token = 'E'

def process_text(file_name):
    poems = []
    with open(file_name, "r", encoding='utf-8') as f:
        for line in f:
            try:  # some lines do not follow the "title:content" format, so catch the error
                title, content = line.strip().split(':')  # separate the title from the poem body
                content = content.replace(' ', '')
                # skip poems containing stray symbols or the marker characters;
                # the source repeated the '(' check, presumably meaning the full-width '(' as well
                if '_' in content or '(' in content or '(' in content or '《' in content or '[' in content or \
                        start_token in content or end_token in content:
                    continue
                if len(content) < 5 or len(content) > 79:
                    continue
                content = start_token + content + end_token  # mark start and end to simplify later steps
                poems.append(content)
            except ValueError:
                pass
    # split every poem into single characters
    all_word = []
    for poem in poems:
        for word in poem:
            all_word.append(word)
    # count each character
    counter = collections.Counter(all_word)
    words = sorted(counter.keys(), key=lambda x: counter[x], reverse=True)  # most frequent characters first
    words.append(' ')  # the space serves as the padding character
    L = len(words)
    word_int_map = dict(zip(words, range(L)))  # map each character to its index in the sorted list
    poems_vector = [list(map(lambda word: word_int_map.get(word, L), poem)) for poem in poems]  # each poem as a list of IDs
    print(word_int_map)
    return poems_vector, word_int_map, words

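The main logic here is:
1. Extract the body of each poem; the titles are not used.
2. Split each poem into characters and collect them in a list.
3. Count how often each character occurs and sort the characters so that the most frequent come first.
4. Give each character its own integer ID (its position in the sorted list) and store the mapping in a dictionary. Each poem then becomes a list whose elements are the IDs of its characters.

Batching

Training always works on batches, so the batching code comes next.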
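To make the mapping concrete, here is a minimal sketch of the same counting and numbering idea on a tiny corpus. The two sample strings and the helper name build_vocab are made up for illustration; they are not part of the original script.

```python
import collections

# Hypothetical toy corpus: two short lines already wrapped in the B/E markers.
poems = ["B春眠不觉晓E", "B春风吹又生E"]

def build_vocab(poems):
    """Return (words, word_int_map): characters sorted by frequency, and their IDs."""
    counter = collections.Counter(ch for poem in poems for ch in poem)
    # sorted() is stable, so characters with equal counts keep first-seen order
    words = sorted(counter.keys(), key=lambda ch: counter[ch], reverse=True)
    words.append(' ')  # padding character, as in process_text
    word_int_map = {ch: i for i, ch in enumerate(words)}
    return words, word_int_map

words, word_int_map = build_vocab(poems)
poems_vector = [[word_int_map[ch] for ch in poem] for poem in poems]
print(word_int_map)
print(poems_vector)
```

Frequent characters such as the two markers end up with the smallest IDs, and the padding space always receives the largest one.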

import numpy as np

def get_batch(batch_size, poems_vector, word_int):
    n_chunk = len(poems_vector) // batch_size  # number of full batches
    x_batches = []
    y_batches = []
    for i in range(n_chunk):
        # start and end index of this batch
        start_index = i * batch_size
        end_index = start_index + batch_size
        batches = poems_vector[start_index:end_index]  # the poems of this batch
        length = max(map(len, batches))  # length of the longest poem in the batch
        x_data = np.full((batch_size, length), word_int[' '], np.int32)  # array filled with the padding ID
        for row, batch in enumerate(batches):  # copy each poem's IDs into its row
            x_data[row, :len(batch)] = batch
        y_data = np.copy(x_data)
        y_data[:, :-1] = x_data[:, 1:]  # targets are the inputs shifted left by one position
        x_batches.append(x_data)
        y_batches.append(y_data)
    return x_batches, y_batches

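The logic of this code: the poems are first cut into batches so they are easy to fetch during training. For each batch a numpy array is created and filled with one fixed number (the ID of the padding character), and then each row is overwritten with the ID list of one poem. This array holds the features. The targets are derived from the features by shifting every row one position to the left, for example:

x_data          y_data
[6,2,4,6,9]     [2,4,6,9,9]
[1,4,2,8,5]     [4,2,8,5,5]

The model's output at each position can then be compared with y_data to check whether the predicted next character is correct.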
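The padding and the one-step shift can be reproduced without numpy. The sketch below uses plain lists; the helper name pad_and_shift and the padding ID 0 are assumptions chosen only for this example.

```python
def pad_and_shift(batch, pad_id):
    """Pad ID lists to equal length and build next-character targets."""
    length = max(len(poem) for poem in batch)
    x_data = [poem + [pad_id] * (length - len(poem)) for poem in batch]
    # Shift left by one; the last column keeps its own value, which mirrors
    # y_data[:, :-1] = x_data[:, 1:] applied to a copy of x_data.
    y_data = [row[1:] + row[-1:] for row in x_data]
    return x_data, y_data

x_data, y_data = pad_and_shift([[6, 2, 4, 6, 9], [1, 4, 2]], pad_id=0)
print(x_data)  # [[6, 2, 4, 6, 9], [1, 4, 2, 0, 0]]
print(y_data)  # [[2, 4, 6, 9, 9], [4, 2, 0, 0, 0]]
```

The first row matches the table above; the second row shows how a shorter poem is padded before it is shifted.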

Source code

import collections
import numpy as np

file = "D:\\mypathon3\\python\\RNN诗词生成\\wulv-all.txt"

# first clean up the text format of the poems
start_token = 'B'
end_token = 'E'

def process_text(file_name):
    poems = []
    with open(file_name, "r", encoding='utf-8') as f:
        for line in f:
            try:  # some lines do not follow the "title:content" format, so catch the error
                title, content = line.strip().split(':')  # separate the title from the poem body
                content = content.replace(' ', '')
                # skip poems containing stray symbols or the marker characters;
                # the source repeated the '(' check, presumably meaning the full-width '(' as well
                if '_' in content or '(' in content or '(' in content or '《' in content or '[' in content or \
                        start_token in content or end_token in content:
                    continue
                if len(content) < 5 or len(content) > 79:
                    continue
                content = start_token + content + end_token  # mark start and end to simplify later steps
                poems.append(content)
            except ValueError:
                pass
    # split every poem into single characters
    all_word = []
    for poem in poems:
        for word in poem:
            all_word.append(word)
    # count each character
    counter = collections.Counter(all_word)
    words = sorted(counter.keys(), key=lambda x: counter[x], reverse=True)  # most frequent characters first
    words.append(' ')  # the space serves as the padding character
    L = len(words)
    word_int_map = dict(zip(words, range(L)))  # map each character to its index in the sorted list
    poems_vector = [list(map(lambda word: word_int_map.get(word, L), poem)) for poem in poems]  # each poem as a list of IDs
    print(word_int_map)
    return poems_vector, word_int_map, words

# fetch the training samples in batches and turn them into features and targets
def get_batch(batch_size, poems_vector, word_int):
    n_chunk = len(poems_vector) // batch_size  # number of full batches
    x_batches = []
    y_batches = []
    for i in range(n_chunk):
        # start and end index of this batch
        start_index = i * batch_size
        end_index = start_index + batch_size
        batches = poems_vector[start_index:end_index]  # the poems of this batch
        length = max(map(len, batches))  # length of the longest poem in the batch
        x_data = np.full((batch_size, length), word_int[' '], np.int32)  # array filled with the padding ID
        for row, batch in enumerate(batches):  # copy each poem's IDs into its row
            x_data[row, :len(batch)] = batch
        y_data = np.copy(x_data)
        y_data[:, :-1] = x_data[:, 1:]  # targets are the inputs shifted left by one position
        x_batches.append(x_data)
        y_batches.append(y_data)
    return x_batches, y_batches

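Building the model

Before building the model we fix a few parameters: the number of output features (hidden units) of each LSTM cell is 128, the batch size is 64, and the network is 2 layers deep.

The function that defines the model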

def model_get():  # build one LSTM layer
    lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(num_units=128, reuse=tf.get_variable_scope().reuse)
    lstm_cell_2 = tf.nn.rnn_cell.DropoutWrapper(lstm_cell, output_keep_prob=0.6)  # dropout against overfitting
    return lstm_cell_2

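Here I use the basic LSTM cell and then drop part of its output values (dropout) to prevent overfitting. In practice it hardly matters whether dropout is applied, because for classical poems we actually want the model to overfit.

Preparing the data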
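For readers who have not used dropout before, the following is a rough sketch of what output_keep_prob=0.6 means, written in plain Python rather than TensorFlow. The function name dropout and the seeded generator are assumptions for this example; the scaling by 1 / keep_prob follows the usual "inverted dropout" convention.

```python
import random

def dropout(values, keep_prob, rng):
    """Inverted dropout: zero each value with probability 1 - keep_prob and
    scale the survivors by 1 / keep_prob so the expected sum is unchanged."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

rng = random.Random(0)  # fixed seed so the run is repeatable
outputs = [1.0] * 10
dropped = dropout(outputs, keep_prob=0.6, rng=rng)
print(dropped)
```

Each entry of the result is either 0.0 or the original value divided by 0.6. With keep_prob set to 1.0 nothing is dropped, which is the behaviour wanted when generating poems.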

layer_num = 2
hidden_size = 128
batch_size = 64
# load the encoded poems, the character-to-ID dictionary and the character list
poems_vector, word_int_map, words = process_text(file)
words_size = len(words)
batches_inputs, batches_outputs = get_batch(batch_size, poems_vector, word_int_map)
# placeholders for the features and the targets
input_data = tf.placeholder(tf.int32, [batch_size, None])
output_targets = tf.placeholder(tf.int32, [batch_size, None])
embedding = tf.get_variable('embedding', initializer=tf.random_uniform(
    [words_size + 1, hidden_size], -1.0, 1.0))
inputs = tf.nn.embedding_lookup(embedding, input_data)

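This part loads the data and converts it into the form the network expects. tf.nn.embedding_lookup(embedding, input_data) replaces every character ID with the corresponding row of the embedding matrix, so each character becomes a vector of hidden_size numbers. The poems can differ in length, so the second dimension of the placeholders is left as None and the sequence length is determined dynamically for each batch.

The shape of inputs is [batch_size, time_steps, hidden_size].

Building and optimizing the model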
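The lookup itself is only row selection. The sketch below imitates it with nested Python lists, under the assumption of a tiny vocabulary of 5 characters and 3 features per character (the post uses hidden_size = 128); embedding_lookup here is a stand-in written for this example, not the TensorFlow function.

```python
import random

def embedding_lookup(embedding, ids):
    """Replace every ID in a [batch, time] nested list with its embedding row."""
    return [[embedding[i] for i in row] for row in ids]

vocab_size, hidden_size = 5, 3  # toy sizes chosen for illustration
rng = random.Random(0)
embedding = [[rng.uniform(-1.0, 1.0) for _ in range(hidden_size)]
             for _ in range(vocab_size)]

ids = [[0, 2, 4, 1], [3, 3, 0, 2]]  # batch of 2 sequences, 4 time steps each
inputs = embedding_lookup(embedding, ids)
print(len(inputs), len(inputs[0]), len(inputs[0][0]))  # 2 4 3
```

The three printed numbers correspond to batch size, number of time steps and feature size, in that order.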

mlstm_cell = tf.nn.rnn_cell.MultiRNNCell([model_get() for _ in range(layer_num)])
init_state = mlstm_cell.zero_state(batch_size, dtype=tf.float32)
outputs, last_state = tf.nn.dynamic_rnn(mlstm_cell, inputs=inputs, initial_state=init_state)
output = tf.reshape(outputs, [-1, hidden_size])
weights = tf.Variable(tf.truncated_normal([hidden_size, words_size + 1]))
bias = tf.Variable(tf.zeros(shape=[words_size + 1]))
logits = tf.nn.bias_add(tf.matmul(output, weights), bias=bias)
# loss and optimizer
labels = tf.one_hot(tf.reshape(output_targets, [-1]), depth=words_size + 1)
loss = tf.nn.softmax_cross_entropy_with_logits(labels=labels, logits=logits)
total_loss = tf.reduce_mean(loss)
train_op = tf.train.AdamOptimizer(0.005).minimize(total_loss)
# accuracy
accuracy = tf.equal(tf.argmax(labels, 1), tf.argmax(logits, 1), name="accuracy")
accuracy = tf.cast(accuracy, tf.float32)
accuracy_mean = tf.reduce_mean(accuracy, name="accuracy_mean")

Anyone familiar with TensorFlow will find this part straightforward, so I won't explain it in detail.

Training and saving the model
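For readers less familiar with TensorFlow, here is a small worked example of what the loss and accuracy lines compute, written in plain Python. The logits and targets are made-up numbers, and the helper functions are illustrative stand-ins, not the TensorFlow ops themselves.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, target_id):
    """Loss for one position: -log(probability assigned to the target ID)."""
    return -math.log(softmax(logits)[target_id])

def argmax(values):
    return max(range(len(values)), key=lambda i: values[i])

# Toy logits for 3 positions over a 4-character vocabulary (made-up numbers).
logits = [[2.0, 0.5, 0.1, 0.1], [0.2, 0.2, 3.0, 0.1], [1.0, 1.5, 0.3, 0.2]]
targets = [0, 2, 0]

losses = [cross_entropy(l, t) for l, t in zip(logits, targets)]
total_loss = sum(losses) / len(losses)  # same role as tf.reduce_mean(loss)
accuracy_mean = sum(argmax(l) == t for l, t in zip(logits, targets)) / len(targets)
print(total_loss, accuracy_mean)
```

Two of the three positions are predicted correctly, so the accuracy is 2/3; the third position contributes the largest share of the loss.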

saver = tf.train.Saver(tf.global_variables())
init_op = tf.group(tf.global_variables_initializer(), tf.local_variables_initializer())
# open the session
with tf.Session() as sess:
    sess.run(init_op)
    start_epoch = 0
    checkpoint = tf.train.latest_checkpoint("D:\\mypathon3\\python\\RNN诗词生成\\model")
    if checkpoint:
        saver.restore(sess, checkpoint)
        print("## restore from the checkpoint {0}".format(checkpoint))
        start_epoch += int(checkpoint.split('-')[-1])
    try:
        n_chunk = len(poems_vector) // batch_size
        print(n_chunk)
        for i in range(start_epoch, 50):
            for batch in range(n_chunk):
                # index by the loop variable so every batch is used, not only batch 0
                feed = {input_data: batches_inputs[batch], output_targets: batches_outputs[batch]}
                # a single run updates the weights and fetches loss and accuracy together
                _, loss_1, accuracys = sess.run([train_op, total_loss, accuracy_mean], feed_dict=feed)
                print('Epoch: %d, batch: %d, training loss: %.6f' % (i, batch, loss_1))
                print('Epoch: %d, batch: %d, training accuracy: %.6f' % (i, batch, accuracys))
            if i % 6 == 0:
                saver.save(sess, "D:\\mypathon3\\python\\RNN诗词生成\\model\\model", global_step=i)
    except KeyboardInterrupt:
        print('## Interrupt manually, try saving checkpoint for now...')
        # save under the model path; `checkpoint` may be None on a first run
        saver.save(sess, "D:\\mypathon3\\python\\RNN诗词生成\\model\\model", global_step=i)
        print('## Last epoch was saved, next time will start from epoch {}.'.format(i))

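Training here is resumable: the model is saved periodically, and on the next start the latest checkpoint is loaded and training continues from the epoch it had reached. That way a crash of the computer does not force training to start over.

Source code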
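The resume logic relies on the epoch number that the saver appends to the checkpoint name when global_step is passed. Below is a small sketch of that parsing step on its own; the helper name epoch_from_checkpoint and the sample path are hypothetical and only serve the illustration.

```python
def epoch_from_checkpoint(checkpoint_path):
    """Return the epoch stored as the '-<number>' suffix, or 0 if there is none."""
    if not checkpoint_path:
        return 0
    suffix = checkpoint_path.rsplit('-', 1)[-1]
    return int(suffix) if suffix.isdigit() else 0

# Hypothetical checkpoint names, for illustration only.
print(epoch_from_checkpoint("model/model-12"))  # 12
print(epoch_from_checkpoint(None))              # 0

start_epoch = epoch_from_checkpoint("model/model-12")
remaining = list(range(start_epoch, 50))
print(remaining[0], len(remaining))  # 12 38
```

Returning 0 when no checkpoint exists lets the same training loop serve both a fresh start and a resumed run.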

import tensorflow as tf
from text import process_text, get_batch

file = "D:\\mypathon3\\python\\RNN诗词生成\\wulv-all.txt"

def model_get():  # build one LSTM layer
    lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(num_units=128, reuse=tf.get_variable_scope().reuse)
    lstm_cell_2 = tf.nn.rnn_cell.DropoutWrapper(lstm_cell, output_keep_prob=0.6)  # dropout against overfitting
    return lstm_cell_2

def train_run():
    layer_num = 2
    hidden_size = 128
    batch_size = 64
    # load the encoded poems, the character-to-ID dictionary and the character list
    poems_vector, word_int_map, words = process_text(file)
    words_size = len(words)
    batches_inputs, batches_outputs = get_batch(batch_size, poems_vector, word_int_map)
    # placeholders for the features and the targets
    input_data = tf.placeholder(tf.int32, [batch_size, None])
    output_targets = tf.placeholder(tf.int32, [batch_size, None])
    embedding = tf.get_variable('embedding', initializer=tf.random_uniform(
        [words_size + 1, hidden_size], -1.0, 1.0))
    inputs = tf.nn.embedding_lookup(embedding, input_data)
    # build a two-layer RNN; each layer outputs 128 features and the sequence length is dynamic
    mlstm_cell = tf.nn.rnn_cell.MultiRNNCell([model_get() for _ in range(layer_num)])
    init_state = mlstm_cell.zero_state(batch_size, dtype=tf.float32)
    outputs, last_state = tf.nn.dynamic_rnn(mlstm_cell, inputs=inputs, initial_state=init_state)
    output = tf.reshape(outputs, [-1, hidden_size])
    weights = tf.Variable(tf.truncated_normal([hidden_size, words_size + 1]))
    bias = tf.Variable(tf.zeros(shape=[words_size + 1]))
    logits = tf.nn.bias_add(tf.matmul(output, weights), bias=bias)
    # loss and optimizer
    labels = tf.one_hot(tf.reshape(output_targets, [-1]), depth=words_size + 1)
    loss = tf.nn.softmax_cross_entropy_with_logits(labels=labels, logits=logits)
    total_loss = tf.reduce_mean(loss)
    train_op = tf.train.AdamOptimizer(0.005).minimize(total_loss)
    # accuracy
    accuracy = tf.equal(tf.argmax(labels, 1), tf.argmax(logits, 1), name="accuracy")
    accuracy = tf.cast(accuracy, tf.float32)
    accuracy_mean = tf.reduce_mean(accuracy, name="accuracy_mean")
    saver = tf.train.Saver(tf.global_variables())
    init_op = tf.group(tf.global_variables_initializer(), tf.local_variables_initializer())
    # open the session
    with tf.Session() as sess:
        sess.run(init_op)
        start_epoch = 0
        checkpoint = tf.train.latest_checkpoint("D:\\mypathon3\\python\\RNN诗词生成\\model")
        if checkpoint:
            saver.restore(sess, checkpoint)
            print("## restore from the checkpoint {0}".format(checkpoint))
            start_epoch += int(checkpoint.split('-')[-1])
        try:
            n_chunk = len(poems_vector) // batch_size
            print(n_chunk)
            for i in range(start_epoch, 50):
                for batch in range(n_chunk):
                    # index by the loop variable so every batch is used, not only batch 0
                    feed = {input_data: batches_inputs[batch], output_targets: batches_outputs[batch]}
                    # a single run updates the weights and fetches loss and accuracy together
                    _, loss_1, accuracys = sess.run([train_op, total_loss, accuracy_mean], feed_dict=feed)
                    print('Epoch: %d, batch: %d, training loss: %.6f' % (i, batch, loss_1))
                    print('Epoch: %d, batch: %d, training accuracy: %.6f' % (i, batch, accuracys))
                if i % 6 == 0:
                    saver.save(sess, "D:\\mypathon3\\python\\RNN诗词生成\\model\\model", global_step=i)
        except KeyboardInterrupt:
            print('## Interrupt manually, try saving checkpoint for now...')
            # save under the model path; `checkpoint` may be None on a first run
            saver.save(sess, "D:\\mypathon3\\python\\RNN诗词生成\\model\\model", global_step=i)
            print('## Last epoch was saved, next time will start from epoch {}.'.format(i))

train_run()

Testing

Loading the model

start_token = 'B'
end_token = 'E'
file = "D:\\mypathon3\\python\\RNN诗词生成\\wulv-all.txt"
hidden_size = 128
layer_num = 2
batch_size = 1

# first rebuild the same model that was trained
def model_get():  # build one LSTM layer
    lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(num_units=128, reuse=tf.get_variable_scope().reuse)
    # lstm_cell_2 = tf.nn.rnn_cell.DropoutWrapper(lstm_cell, output_keep_prob=0.5)  # dropout, not used when generating
    return lstm_cell

Dropout is no longer needed here, so no values are dropped.

poems_vector, word_int_map, words = process_text(file)
words_size = len(words)
input_data = tf.placeholder(tf.int32, [batch_size, None])
embedding = tf.get_variable('embedding', initializer=tf.random_uniform(
    [words_size + 1, hidden_size], -1.0, 1.0))
inputs = tf.nn.embedding_lookup(embedding, input_data)
mlstm_cell = tf.nn.rnn_cell.MultiRNNCell([model_get() for _ in range(layer_num)])
init_state = mlstm_cell.zero_state(1, dtype=tf.float32)
outputs, last_state = tf.nn.dynamic_rnn(mlstm_cell, inputs, initial_state=init_state)
output = tf.reshape(outputs, [-1, hidden_size])
weights = tf.Variable(tf.truncated_normal([hidden_size, words_size + 1]))
bias = tf.Variable(tf.zeros(shape=[words_size + 1]))
logits = tf.nn.bias_add(tf.matmul(output, weights), bias=bias)
prediction = tf.nn.softmax(logits)

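One thing to note here is the shape of init_state: because a single character is fed in as the input, the batch_size of init_state is also 1. Nothing else changes.

Restoring the model and feeding it data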

# saver and init_op are created the same way as in the training script
with tf.Session() as sess:
    sess.run(init_op)
    checkpoint = tf.train.latest_checkpoint("D:\\mypathon3\\python\\RNN诗词生成\\model")
    saver.restore(sess, checkpoint)
    x = np.array([list(map(word_int_map.get, start_token))])
    # get the prediction and the LSTM state for the start token in one run
    predict, last_states = sess.run([prediction, last_state], feed_dict={input_data: x})
    print(np.argmax(predict, 1))  # use numpy here instead of adding a new op to the graph

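This is an important point, and also one of the harder parts of the whole model. We first need some value to use as the input. When preprocessing the data I added B at the start and E at the end of every poem, which makes this step easy: the start token B is fed in first.

The LSTM state obtained from this first prediction becomes the crucial parameter for everything that follows: it is what gets fed back in as init_state.

Generating one character after another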

# turn the predicted distribution into a character
def to_word(predict, vocabs):
    predict = predict[0].astype(np.float64)  # float64 avoids "probabilities do not sum to 1" errors
    predict /= np.sum(predict)
    sample = np.random.choice(np.arange(len(predict)), p=predict)
    if sample >= len(vocabs):  # the extra output slot has no character; fall back to the last entry
        return vocabs[-1]
    else:
        return vocabs[sample]

    # the lines below continue inside the `with tf.Session() as sess:` block
    begin_word = input("Enter the first character: ")
    word = begin_word or to_word(predict, words)
    poem_ = ''
    i = 0
    while word != end_token:
        poem_ += word
        i += 1
        if i > 24:
            break
        x = np.array([[word_int_map[word]]])
        # predict the next character and carry the LSTM state forward in one run
        predict, last_states = sess.run([prediction, last_state],
                                        feed_dict={input_data: x, init_state: last_states})
        word = to_word(predict, words)
    print(poem_)
    print("*" * 100)

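This is the hardest part of the whole program. First, the number of generated characters has to be limited, which is what the check if i > 24: break does.

1. Take the ID of the first character and feed it to the model as input: x = np.array([[word_int_map[word]]])

2. init_state must be updated at every step. Otherwise every step would start from the same state and the characters of the poem would not be linked to one another. By feeding the state returned for the previous character back in as init_state, each new character is tied to all the characters before it.

3. The predicted character is not taken directly with tf.argmax. Instead one character is drawn at random according to the predicted probabilities, so the more likely characters are chosen more often. After training, most of the sampled characters turn out to be the same as the tf.argmax choice. Sampling suits classical poetry because a character is not tied to one fixed successor but to several possible ones.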
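The difference between sampling and argmax in point 3 can be seen on a toy distribution. The sketch below uses Python's random module instead of numpy; the probabilities are made up, and sample_id and argmax_id are names chosen for this example.

```python
import random

def sample_id(probs, rng):
    """Draw one index at random, weighted by the predicted probabilities."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

def argmax_id(probs):
    """Always pick the single most probable index."""
    return max(range(len(probs)), key=lambda i: probs[i])

# Made-up distribution over a 4-character vocabulary.
probs = [0.70, 0.20, 0.05, 0.05]
rng = random.Random(0)  # fixed seed so the run is repeatable
draws = [sample_id(probs, rng) for _ in range(1000)]

print(argmax_id(probs))              # 0
print(draws.count(0) / len(draws))   # roughly 0.7; other IDs appear as well
```

Sampling usually agrees with argmax when one character dominates, but it still leaves room for the less likely successors, which keeps the generated poems from always repeating the same wording.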

Turning the generated characters into lines of verse

def pretty_print_poem(poem_):
    poem_sentences = poem_.split('。')
    for s in poem_sentences:
        if len(s) >= 5:  # print full sentences only; shorter leftovers are dropped
            print(s + '。')

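Even though the model's accuracy and loss are very good after training, it still produces output like this:

松門風自埽,瀑布雪難消。秋夜聞清梵,餘音逐海潮。却歸睦州

I simply delete the four characters at the end.

Source code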
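Applied to the sample above, the trimming rule can be sketched as a function that returns the kept sentences instead of printing them. The name keep_full_sentences and the min_len parameter are introduced here for illustration; the threshold of 5 comes from the length check in pretty_print_poem.

```python
def keep_full_sentences(poem, min_len=5):
    """Split on the full stop and keep only pieces of at least min_len characters."""
    return [s + '。' for s in poem.split('。') if len(s) >= min_len]

poem = "松門風自埽,瀑布雪難消。秋夜聞清梵,餘音逐海潮。却歸睦州"
for line in keep_full_sentences(poem):
    print(line)
```

The two complete couplets are kept, and the unfinished four-character fragment at the end is discarded because it is shorter than five characters.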

import tensorflow as tf
from text import process_text, get_batch
import numpy as np

start_token = 'B'
end_token = 'E'
file = "D:\\mypathon3\\python\\RNN诗词生成\\wulv-all.txt"
hidden_size = 128
layer_num = 2
batch_size = 1

# turn the predicted distribution into a character
def to_word(predict, vocabs):
    predict = predict[0].astype(np.float64)  # float64 avoids "probabilities do not sum to 1" errors
    predict /= np.sum(predict)
    sample = np.random.choice(np.arange(len(predict)), p=predict)
    if sample >= len(vocabs):  # the extra output slot has no character; fall back to the last entry
        return vocabs[-1]
    else:
        return vocabs[sample]

def pretty_print_poem(poem_):
    poem_sentences = poem_.split('。')
    for s in poem_sentences:
        if len(s) >= 5:  # print full sentences only; shorter leftovers are dropped
            print(s + '。')

# first rebuild the same model that was trained
def model_get():  # build one LSTM layer
    lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(num_units=128, reuse=tf.get_variable_scope().reuse)
    # lstm_cell_2 = tf.nn.rnn_cell.DropoutWrapper(lstm_cell, output_keep_prob=0.5)  # dropout, not used when generating
    return lstm_cell

poems_vector, word_int_map, words = process_text(file)
words_size = len(words)
input_data = tf.placeholder(tf.int32, [batch_size, None])
embedding = tf.get_variable('embedding', initializer=tf.random_uniform(
    [words_size + 1, hidden_size], -1.0, 1.0))
inputs = tf.nn.embedding_lookup(embedding, input_data)
mlstm_cell = tf.nn.rnn_cell.MultiRNNCell([model_get() for _ in range(layer_num)])
init_state = mlstm_cell.zero_state(1, dtype=tf.float32)
outputs, last_state = tf.nn.dynamic_rnn(mlstm_cell, inputs, initial_state=init_state)
output = tf.reshape(outputs, [-1, hidden_size])
weights = tf.Variable(tf.truncated_normal([hidden_size, words_size + 1]))
bias = tf.Variable(tf.zeros(shape=[words_size + 1]))
logits = tf.nn.bias_add(tf.matmul(output, weights), bias=bias)
prediction = tf.nn.softmax(logits)
saver = tf.train.Saver(tf.global_variables())
init_op = tf.group(tf.global_variables_initializer(), tf.local_variables_initializer())

# open the session
with tf.Session() as sess:
    sess.run(init_op)
    checkpoint = tf.train.latest_checkpoint("D:\\mypathon3\\python\\RNN诗词生成\\model")
    saver.restore(sess, checkpoint)
    x = np.array([list(map(word_int_map.get, start_token))])
    # get the prediction and the LSTM state for the start token in one run
    predict, last_states = sess.run([prediction, last_state], feed_dict={input_data: x})
    print(np.argmax(predict, 1))  # use numpy here instead of adding a new op to the graph
    begin_word = input("Enter the first character: ")
    word = begin_word or to_word(predict, words)
    poem_ = ''
    i = 0
    while word != end_token:
        poem_ += word
        i += 1
        if i > 24:
            break
        x = np.array([[word_int_map[word]]])
        # predict the next character and carry the LSTM state forward in one run
        predict, last_states = sess.run([prediction, last_state],
                                        feed_dict={input_data: x, init_state: last_states})
        word = to_word(predict, words)
    print(poem_)
    print("*" * 100)
    pretty_print_poem(poem_)

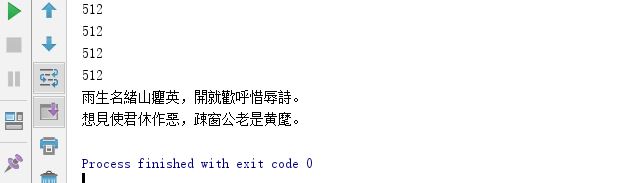

Test

First, a test with the character “梁”:

梁法惟康富貴臣,二通標格價如泥。

非容妄聖俱當輩,作主從來作壽詩。

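Next, a test with the character “雨”:

Notes

Keep the following points in mind:

1. To judge whether the model is trained well enough, look at both the accuracy and the loss. Because this is classical poetry, both have to be checked before concluding that the model is good.

2. Train incrementally: keep saving the model and reloading it to continue training.

3. Requirements on the training set: it must cover one narrow area. If the poems in the training set vary in style, the generated output will be a mess as well.

Overall, this model works much better than the one in my previous post.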