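Copy the code below into PyCharm and run it directly: it displays the result image of every processing step automatically, and each window stays open for roughly two seconds before the next one appears.

The code is commented step by step, so it is easy to read and lets beginners grasp the overall approach first. With some basic knowledge of digital image processing, it should be straightforward to follow what each step does.

Note that some of the methods below only suit specific scenarios and do not generalize. They still show a rough direction and a general way of approaching this kind of problem with digital image processing, so they are useful as a reference.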

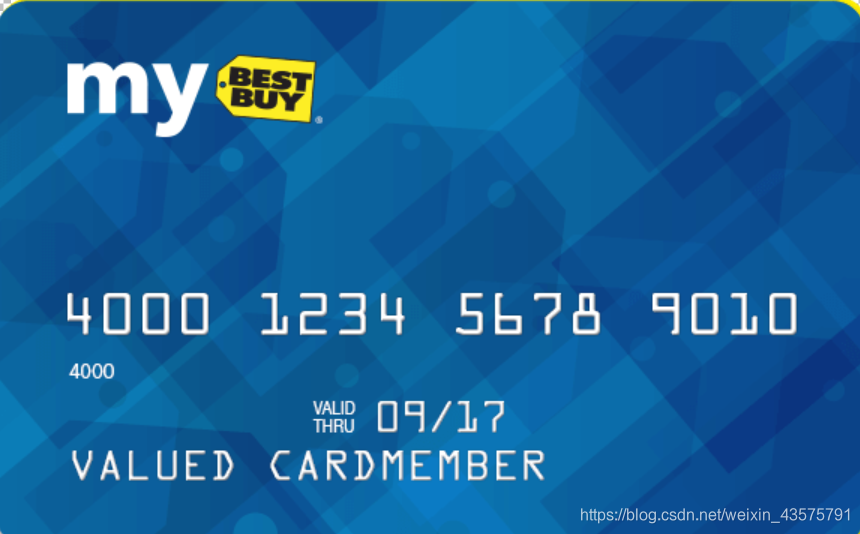

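1. Credit card digit recognition

The basic idea:

Use digital image processing and template matching: each digit cropped from the card is compared against every digit template, and the template with the highest match score gives the recognized digit.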

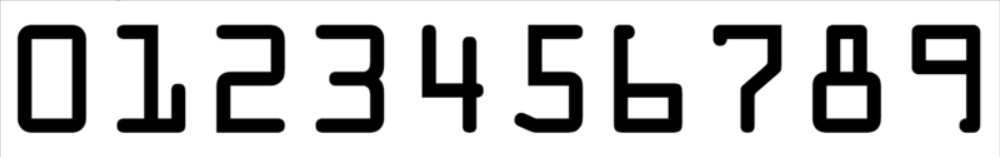
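To make the "highest match score wins" idea concrete, here is a minimal sketch in plain Python. It assumes the patch and the templates already have the same size, and it computes a correlation-coefficient style score (mean-subtracted product sum), which is the kind of score the full program obtains from `cv2.matchTemplate`. The helper names `ccoeff_score` and `best_match` and the tiny 3x3 glyphs are hypothetical, chosen only for illustration.

```python
def ccoeff_score(patch, template):
    """Correlation-coefficient style score between two equally sized grids."""
    a = [v for row in patch for v in row]
    b = [v for row in template for v in row]
    if len(a) != len(b):
        raise ValueError("patch and template must have the same size")
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    # Subtract the means so that only the shape of the pattern contributes
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))


def best_match(patch, templates):
    """Return the label of the template with the highest score."""
    scores = {label: ccoeff_score(patch, tpl) for label, tpl in templates.items()}
    return max(scores, key=scores.get)


# Hypothetical 3x3 "glyphs": a vertical bar and a horizontal bar
templates = {
    "bar_v": [[0, 255, 0], [0, 255, 0], [0, 255, 0]],
    "bar_h": [[0, 0, 0], [255, 255, 255], [0, 0, 0]],
}
# A noisy vertical bar: one background pixel is lit
patch = [[0, 255, 0], [0, 255, 0], [255, 255, 0]]

label = best_match(patch, templates)
print(label)  # bar_v
```

The noisy patch still scores higher against the vertical bar, which is why this kind of matching tolerates small defects as long as the digit and the template are resized to the same shape first.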

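1) Extract the digits from the digit template

Convert the template image to a binary image, find the outermost contours and their bounding rectangles, and crop each ROI to obtain one template per digit.

2) Process the credit card image

Convert the image to grayscale, apply edge detection and a series of morphological operations (a top-hat and closing operations in the code below), then binarize it to locate the regions that contain the digits. Extract each digit and match it against the templates from step 1; the template with the highest score is the recognized digit.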
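Locating the digit regions in step 2 comes down to filtering contour bounding boxes by shape and size. The sketch below shows that filter on its own, using plain `(x, y, w, h)` tuples instead of real contours. The thresholds are the ones used in the full program and apply to the image after it has been scaled by 0.3; the function name `filter_digit_groups` and the sample boxes are hypothetical.

```python
def filter_digit_groups(boxes, ar_range=(2.0, 4.5), w_range=(20, 55), h_range=(10, 20)):
    """Keep boxes that look like a group of four digits, sorted left to right."""
    kept = []
    for (x, y, w, h) in boxes:
        ar = w / float(h)  # aspect ratio: a four-digit group is wide and flat
        if (ar_range[0] < ar < ar_range[1]
                and w_range[0] < w < w_range[1]
                and h_range[0] < h < h_range[1]):
            kept.append((x, y, w, h))
    return sorted(kept, key=lambda box: box[0])


# Hypothetical bounding boxes found on a resized card image
boxes = [
    (150, 100, 48, 15),  # digit group
    (10, 20, 120, 30),   # too large, for example a logo
    (30, 100, 50, 16),   # digit group
    (90, 100, 49, 14),   # digit group
    (200, 150, 12, 12),  # too small and square
]

groups = filter_digit_groups(boxes)
print(groups)
```

Because the limits are absolute pixel sizes, they would probably need retuning for cards photographed at a different resolution or resized with a different factor.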

The complete code is as follows:

import cv2
import numpy as np

# Map the first digit of the card number to the card type
first_number = {
    "3": "American Express",
    "4": "Visa",
    "5": "MasterCard",
    "6": "Discover Card"
}


def img_show(name, img):
    # Show the image for 2.4 s, then close the window automatically
    cv2.imshow(name, img)
    cv2.waitKey(2400)
    cv2.destroyAllWindows()

# Sort contours by the position of their bounding boxes
def sort_contours(cnts, method="left-to-right"):
    reverse = False
    i = 0  # index into (x, y, w, h): 0 sorts by x, 1 sorts by y
    if method == "right-to-left" or method == "bottom-to-top":
        reverse = True
    if method == "top-to-bottom" or method == "bottom-to-top":
        i = 1
    boundingBoxes = [cv2.boundingRect(c) for c in cnts]
    # Sort contours and boxes together so that both come back in the same order
    (cnts, boundingBoxes) = zip(*sorted(zip(cnts, boundingBoxes),
                                        key=lambda b: b[1][i], reverse=reverse))
    return cnts, boundingBoxes

# Extract the digit templates
def template_process(template):
    img_show('original', template)
    # 1. Convert to grayscale, then to an inverted binary image (digits become white)
    temp_gray = cv2.cvtColor(template, cv2.COLOR_BGR2GRAY)
    img_show('gray', temp_gray)
    temp_thresh = cv2.threshold(temp_gray, 10, 255, cv2.THRESH_BINARY_INV)[1]
    img_show('thresh', temp_thresh)
    # 2. Find the outermost contours and sort them left to right
    # [-2:] keeps (contours, hierarchy) whether findContours returns two or three values
    refCnts, hierarchy = cv2.findContours(temp_thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2:]
    refCnts = sort_contours(refCnts, method="left-to-right")[0]
    # Visualization: draw the contours
    tempCnt = template.copy()
    cv2.drawContours(tempCnt, refCnts, -1, (0, 0, 255), 3)
    img_show('cnts', tempCnt)
    # 3. Crop each digit; the template shows 0-9 left to right, so index i is the digit
    digits = {}
    for (i, c) in enumerate(refCnts):
        (x, y, w, h) = cv2.boundingRect(c)  # upright bounding rectangle
        roi = temp_thresh[y:y + h, x:x + w]
        roi = cv2.resize(roi, (55, 88))
        digits[i] = roi
    return digits

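# Find the locations of the digit groups
def find_numlocs(threshCnts):
    locs = []
    # Iterate over the contours
    for (i, c) in enumerate(threshCnts):
        (x, y, w, h) = cv2.boundingRect(c)
        ar = w / float(h)
        # Keep boxes whose aspect ratio and size match a group of four digits
        # (the limits apply to the resized image)
        if 2 < ar < 4.5 and (20 < w < 55) and (10 < h < 20):
            locs.append((x, y, w, h))
    # Sort the matching boxes from left to right
    locs = sorted(locs, key=lambda ix: ix[0])
    return locs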

# Process the card image; return the resized image, the grayscale image and the digit-group locations
def img_process(img):
    img = cv2.resize(img, None, fx=0.3, fy=0.3, interpolation=cv2.INTER_CUBIC)
    resized_img = img.copy()
    cur_img = img.copy()
    # 1. Convert to grayscale
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    img_show('gray', gray)
    # Initialize the structuring elements
    rectKernel = cv2.getStructuringElement(cv2.MORPH_RECT, (9, 3))
    sqKernel = cv2.getStructuringElement(cv2.MORPH_RECT, (5, 5))
    # 2. Top-hat operation to highlight the brighter regions
    tophat = cv2.morphologyEx(gray, cv2.MORPH_TOPHAT, rectKernel)
    # 3. Edge detection in the x direction (ksize=-1 selects the 3x3 Scharr kernel)
    gradX = cv2.Sobel(tophat, ddepth=cv2.CV_32F, dx=1, dy=0, ksize=-1)
    gradX = np.absolute(gradX)
    # Min-max normalize to 0..255
    (minVal, maxVal) = (np.min(gradX), np.max(gradX))
    gradX = (255 * ((gradX - minVal) / (maxVal - minVal)))
    gradX = gradX.astype("uint8")
    img_show('gradX', gradX)
    # 4. Closing operation to merge the digits of a group, then binarize
    gradX = cv2.morphologyEx(gradX, cv2.MORPH_CLOSE, rectKernel)
    img_show('gradX', gradX)
    thresh = cv2.threshold(gradX, 0, 255, cv2.THRESH_BINARY | cv2.THRESH_OTSU)[1]
    img_show('thresh', thresh)
    # A second closing makes the regions cleaner and more regular
    thresh = cv2.morphologyEx(thresh, cv2.MORPH_CLOSE, sqKernel)
    img_show('thresh', thresh)
    # 5. Find the contours
    threshCnts, thresh_hierarchy = cv2.findContours(thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2:]
    cv2.drawContours(cur_img, threshCnts, -1, (0, 0, 255), 3)
    img_show('Cnts', cur_img)
    # 6. Get the locations of the digit groups
    locs = find_numlocs(threshCnts)
    return resized_img, gray, locs

# Recognize the digits; return the annotated image
def digits_recognize(img, template):
    digits = template_process(template)
    recognized_img, gray, locs = img_process(img)
    output = []
    # Iterate over the digit groups
    for (i, (gX, gY, gW, gH)) in enumerate(locs):
        groupOutput = []
        # Crop the group with a 5-pixel margin; clamp at 0 so the slice cannot wrap around
        group = gray[max(gY - 5, 0):gY + gH + 5, max(gX - 5, 0):gX + gW + 5]
        img_show('group', group)
        # Binarize with Otsu's threshold
        group = cv2.threshold(group, 0, 255, cv2.THRESH_BINARY | cv2.THRESH_OTSU)[1]
        img_show('group', group)
        # Find the contour of each digit in the group and sort them left to right
        digitCnts, hierarchy = cv2.findContours(group, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2:]
        digitCnts = sort_contours(digitCnts)[0]
        # Recognize each digit in the group
        for c in digitCnts:
            (x, y, w, h) = cv2.boundingRect(c)
            roi = group[y: y + h, x: x + w]
            roi = cv2.resize(roi, (55, 88))  # same size as the templates
            scores = []
            for (digit, digitROI) in digits.items():
                result = cv2.matchTemplate(roi, digitROI, cv2.TM_CCOEFF)  # correlation-coefficient matching
                (_, score, _, _) = cv2.minMaxLoc(result)
                scores.append(score)
            # The template with the highest score is the recognized digit
            groupOutput.append(str(np.argmax(scores)))
        cv2.rectangle(recognized_img, (gX - 5, gY - 5), (gX + gW + 5, gY + gH + 5), (0, 0, 255), 1)
        cv2.putText(recognized_img, "".join(groupOutput), (gX, gY - 15), cv2.FONT_HERSHEY_SIMPLEX, 0.65, (0, 0, 255), 2)
        # Collect the result
        output.extend(groupOutput)
    # Print the result; fall back to "Unknown" if nothing was found or the first digit is not in the table
    card_type = first_number.get(output[0], "Unknown") if output else "Unknown"
    print("Credit Card Type: {}".format(card_type))
    print("Credit Card #: {}".format("".join(output)))
    return recognized_img

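if __name__ == "__main__":
    img = cv2.imread('./opencv/data/credit/card03.jpg')
    template = cv2.imread('./opencv/data/credit/template.png')
    # cv2.imread returns None instead of raising when a file cannot be read
    if img is None or template is None:
        raise FileNotFoundError("could not read the card image or the template image")
    recognized_img = digits_recognize(img, template)
    img_show('result', recognized_img)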
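The `sort_contours` helper relies on a compact idiom: zip the contours with their bounding boxes, sort the pairs by one box coordinate, then unzip. The sketch below isolates that idiom with strings standing in for contours, so it runs without OpenCV. The name `sort_by_box` and the sample data are hypothetical, and the empty-input guard is an addition that the original helper does not have.

```python
def sort_by_box(items, boxes, axis=0, reverse=False):
    """Sort items by one coordinate of their bounding boxes (0 = x, 1 = y)."""
    if not items:
        # zip(*[]) cannot be unpacked into two names, so handle this case explicitly
        return (), ()
    pairs = sorted(zip(items, boxes), key=lambda pair: pair[1][axis], reverse=reverse)
    sorted_items, sorted_boxes = zip(*pairs)
    return sorted_items, sorted_boxes


# Strings stand in for contours; each box is (x, y, w, h)
names = ["c", "a", "b"]
boxes = [(90, 5, 10, 20), (10, 8, 10, 20), (50, 2, 10, 20)]

left_to_right, lr_boxes = sort_by_box(names, boxes)
top_to_bottom, _ = sort_by_box(names, boxes, axis=1)

print(left_to_right)  # ('a', 'b', 'c')
print(top_to_bottom)  # ('b', 'c', 'a')
```

Sorting the pairs rather than the two lists separately is what keeps each item attached to its own box.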

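Images used: the card photo (card03.jpg) and the digit template (template.png) referenced in the code above.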

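2. Answer sheet recognition

The basic idea:

1) Convert the original image to grayscale, remove noise with a Gaussian blur, run edge detection, find the contour of the answer sheet, and apply a perspective transform to obtain an image that contains only the answer sheet.

2) Binarize the answer sheet and find the contours of the answer bubbles. Using a mask for each bubble, count the non-zero pixels inside it; in each row, the bubble with the most non-zero pixels is taken as the selected answer. Compare it with the answer key to compute the final score, and mark the correct answers on the image.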
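Step 2 can be illustrated without any image library. The sketch below uses nested lists as a stand-in for the binary image and the per-bubble masks, picks the bubble that covers the most non-zero pixels, and grades the result against an answer key. The function names, the tiny 2x6 "image" and the two-question key are all hypothetical.

```python
def count_marked(thresh, mask):
    """Count pixels that are non-zero in both the binary image and the mask."""
    return sum(1 for t_row, m_row in zip(thresh, mask)
               for t, m in zip(t_row, m_row) if t and m)


def pick_bubble(thresh, masks):
    """Index of the mask that covers the most non-zero pixels."""
    totals = [count_marked(thresh, m) for m in masks]
    return totals.index(max(totals))


def grade(selected, answer_key):
    """Percentage of questions whose selected option equals the key."""
    correct = sum(1 for q, k in answer_key.items() if selected.get(q) == k)
    return 100.0 * correct / len(answer_key)


# A hypothetical row with three 2x2 bubbles; the middle one is filled in
thresh = [
    [255, 0, 255, 255, 0, 255],
    [0, 0, 255, 255, 0, 0],
]
masks = [
    [[255, 255, 0, 0, 0, 0], [255, 255, 0, 0, 0, 0]],
    [[0, 0, 255, 255, 0, 0], [0, 0, 255, 255, 0, 0]],
    [[0, 0, 0, 0, 255, 255], [0, 0, 0, 0, 255, 255]],
]

choice = pick_bubble(thresh, masks)
score = grade({0: choice, 1: 3}, {0: 1, 1: 4})
print(choice, score)  # 1 50.0
```

Taking the maximum means some option is always chosen, even on a row left blank; a minimum pixel count would be one way to detect unanswered questions, but that is not part of the approach described here.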

The code is as follows:

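import numpy as np
import cv2

# Answer key: question index -> index of the correct option
answer_key = {0: 1, 1: 4, 2: 0, 3: 3, 4: 1}


# Order four corner points; pts has shape (4, 2)
def order_points(pts):
    # Four points in total
    rect = np.zeros((4, 2), dtype="float32")
    # Indices 0, 1, 2, 3 are top-left, top-right, bottom-right, bottom-left
    # Top-left has the smallest x + y, bottom-right the largest
    s = pts.sum(axis=1)
    rect[0] = pts[np.argmin(s)]
    rect[2] = pts[np.argmax(s)]
    # Top-right has the smallest y - x, bottom-left the largest
    diff = np.diff(pts, axis=1)
    rect[1] = pts[np.argmin(diff)]
    rect[3] = pts[np.argmax(diff)]
    return rect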

# Perspective transform
def four_point_transform(image, pts):
    # Order the input points
    rect = order_points(pts)
    (tl, tr, br, bl) = rect
    # Compute the output width and height from the longer of each pair of opposite sides
    widthA = np.sqrt(((br[0] - bl[0]) ** 2) + ((br[1] - bl[1]) ** 2))
    widthB = np.sqrt(((tr[0] - tl[0]) ** 2) + ((tr[1] - tl[1]) ** 2))
    maxWidth = max(int(widthA), int(widthB))
    heightA = np.sqrt(((tr[0] - br[0]) ** 2) + ((tr[1] - br[1]) ** 2))
    heightB = np.sqrt(((tl[0] - bl[0]) ** 2) + ((tl[1] - bl[1]) ** 2))
    maxHeight = max(int(heightA), int(heightB))
    # Corner positions after the transform
    dst = np.array([
        [0, 0],
        [maxWidth - 1, 0],
        [maxWidth - 1, maxHeight - 1],
        [0, maxHeight - 1]], dtype="float32")
    # Compute the transform matrix and warp the image
    M = cv2.getPerspectiveTransform(rect, dst)
    warped = cv2.warpPerspective(image, M, (maxWidth, maxHeight))
    # Return the warped result
    return warped

# Sort contours by the position of their bounding boxes
def sort_contours(cnts, method="left-to-right"):
    reverse = False
    i = 0  # index into (x, y, w, h): 0 sorts by x, 1 sorts by y
    if method == "right-to-left" or method == "bottom-to-top":
        reverse = True
    if method == "top-to-bottom" or method == "bottom-to-top":
        i = 1
    boundingBoxes = [cv2.boundingRect(c) for c in cnts]
    (cnts, boundingBoxes) = zip(*sorted(zip(cnts, boundingBoxes),
                                        key=lambda b: b[1][i], reverse=reverse))
    return cnts, boundingBoxes


# Show an image for 2.4 s
def cv_show(name, img):
    cv2.imshow(name, img)
    cv2.waitKey(2400)
    cv2.destroyAllWindows()


# Show an image briefly (0.6 s)
def cv_quick_show(name, img):
    cv2.imshow(name, img)
    cv2.waitKey(600)
    cv2.destroyAllWindows()

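# Find the contour of the answer sheet
def find_doc_contours(edged_cnts):
    docCnt = None
    if len(edged_cnts) > 0:  # make sure something was detected
        edged_cnts = sorted(edged_cnts, key=cv2.contourArea, reverse=True)  # largest area first
        # Iterate over the contours
        for c in edged_cnts:
            # Approximate the contour with a polygon
            peri = cv2.arcLength(c, True)  # contour perimeter
            approx = cv2.approxPolyDP(c, 0.02 * peri, True)  # polygon approximation
            # The first (largest) contour with four vertices is taken as the sheet
            if len(approx) == 4:
                docCnt = approx
                break
    return docCnt


# Find the contours of the answer bubbles
def find_circle_contours(cnts):
    questionCnts = []
    # Use the width, height and aspect ratio of the bounding rectangle to pick out the bubbles
    for c in cnts:
        # Compute the size and the aspect ratio
        (x, y, w, h) = cv2.boundingRect(c)
        ar = w / float(h)
        # Adjust these criteria to the actual images
        if w >= 20 and h >= 20 and ar >= 0.9 and ar <= 1.1:
            questionCnts.append(c)
    # Sort the bubble contours from top to bottom
    questionCnts = sort_contours(questionCnts, method="top-to-bottom")[0]
    return questionCnts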

# Mark the correct answers and compute the score
def draw_compute_score(warped, questionCnts):
    # warped is grayscale; convert to BGR so the red and green markings are actually drawn in color
    draw_warped = cv2.cvtColor(warped, cv2.COLOR_GRAY2BGR)
    correct = 0
    thresh = cv2.threshold(warped, 0, 255, cv2.THRESH_BINARY_INV | cv2.THRESH_OTSU)[1]
    for (q, i) in enumerate(np.arange(0, len(questionCnts), 5)):  # 5 options per row
        # Take 5 contours at a time and sort them left to right to get one row of bubbles
        cnts = sort_contours(questionCnts[i:i + 5])[0]
        bubbled = None
        # Check every option in the row
        for (j, c) in enumerate(cnts):
            # Build a mask for this bubble
            mask = np.zeros(thresh.shape, dtype="uint8")  # all-zero image with the same size as thresh
            cv2.drawContours(mask, [c], -1, 255, -1)  # thickness -1 fills the contour
            cv_quick_show('mask', mask)
            # Count the non-zero pixels inside the bubble
            mask = cv2.bitwise_and(thresh, thresh, mask=mask)
            total = cv2.countNonZero(mask)
            # Keep the option with the largest count as the selected answer
            if bubbled is None or total > bubbled[0]:
                bubbled = (total, j)
        # Compare with the answer key
        color = (0, 0, 255)  # red: wrong
        k = answer_key[q]
        if k == bubbled[1]:
            color = (0, 255, 0)  # green: correct
            correct += 1
        # Outline the correct option
        cv2.drawContours(draw_warped, [cnts[k]], -1, color, 3)
    score = (correct / float(len(answer_key))) * 100
    cv2.putText(draw_warped, "{:.2f}%".format(score), (10, 30), cv2.FONT_HERSHEY_SIMPLEX, 0.9, (0, 0, 255), 2)
    return draw_warped, score

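# Image processing pipeline
def img_process(image):
    contours_img = image.copy()
    cv_show('original', image)
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)  # 1. grayscale
    cv_show('gray', gray)  # visualization
    blurred = cv2.GaussianBlur(gray, (5, 5), 0)  # 2. Gaussian blur to remove noise
    cv_show('blurred', blurred)
    edged = cv2.Canny(blurred, 75, 200)  # 3. edge detection
    cv_show('Canny', edged)
    # Contours are found on an edge map or a thresholded image; edge detection is
    # sensitive to noise, which is why the image is blurred first
    # [-2] picks the contour list whether findContours returns two or three values
    edged_cnts = cv2.findContours(edged.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]  # 4. find contours
    cv2.drawContours(contours_img, edged_cnts, -1, (0, 0, 255), 3)  # draw the contours
    cv_show('contours', contours_img)
    docCnt = find_doc_contours(edged_cnts)  # 5. find the contour of the answer sheet
    if docCnt is None:
        raise ValueError("no four-point contour found for the answer sheet")
    warped = four_point_transform(gray, docCnt.reshape(4, 2))  # 6. perspective transform
    cv_show('warped', warped)
    thresh = cv2.threshold(warped, 0, 255, cv2.THRESH_BINARY_INV | cv2.THRESH_OTSU)[1]  # 7. binarize
    cnts = cv2.findContours(thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]  # 8. find contours
    thresh_vis = cv2.cvtColor(thresh, cv2.COLOR_GRAY2BGR)  # color copy so the red contours are visible
    cv2.drawContours(thresh_vis, cnts, -1, (0, 0, 255), 3)  # -1 draws all contours
    cv_show('thresh_Contours', thresh_vis)
    questionCnts = find_circle_contours(cnts)  # 9. find the answer bubbles
    draw_warped, score = draw_compute_score(warped, questionCnts)  # 10. mark the answers and compute the score
    return draw_warped, score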

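if __name__ == "__main__":
    image = cv2.imread('./opencv/data/answer/card01.jpg')
    # cv2.imread returns None instead of raising when the file cannot be read
    if image is None:
        raise FileNotFoundError("could not read the answer sheet image")
    draw_warped, score = img_process(image)
    print("[INFO] score: {:.2f}%".format(score))
    cv_show("Original", image)
    cv_show("Exam", draw_warped)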
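The corner-ordering trick in `order_points` and the size computation in `four_point_transform` are easy to check by hand on a small example. The sketch below reimplements both with plain tuples, as an illustration only: the names `order_corners` and `target_size` and the four sample corners are hypothetical. It assumes the sheet is roughly upright, because the sum and difference heuristic becomes ambiguous for strongly rotated quadrilaterals.

```python
import math


def order_corners(pts):
    """Order four (x, y) points as top-left, top-right, bottom-right, bottom-left."""
    tl = min(pts, key=lambda p: p[0] + p[1])  # smallest x + y
    br = max(pts, key=lambda p: p[0] + p[1])  # largest x + y
    tr = min(pts, key=lambda p: p[1] - p[0])  # smallest y - x
    bl = max(pts, key=lambda p: p[1] - p[0])  # largest y - x
    return [tl, tr, br, bl]


def side(p, q):
    """Euclidean distance between two points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


def target_size(tl, tr, br, bl):
    """Output size: the longer of each pair of opposite sides, truncated to int."""
    width = max(int(side(br, bl)), int(side(tr, tl)))
    height = max(int(side(tr, br)), int(side(tl, bl)))
    return width, height


# Hypothetical corners of a slightly skewed sheet, in arbitrary order
corners = [(310, 420), (40, 30), (20, 400), (300, 50)]

tl, tr, br, bl = order_corners(corners)
size = target_size(tl, tr, br, bl)

print([tl, tr, br, bl])  # [(40, 30), (300, 50), (310, 420), (20, 400)]
print(size)              # (290, 370)
```

Using the longer side of each pair keeps the warped image from being squeezed along the edge that appears shorter in the photo.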

Image used: the answer sheet photo (card01.jpg) referenced in the code above.
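The grading loop depends on splitting the bubbles into rows: sort all bubbles top to bottom, take them five at a time, then sort each group left to right. A minimal sketch of that grouping with plain `(x, y, w, h)` boxes follows. The function name `group_rows` and the sample boxes are hypothetical, and the example uses three bubbles per row only to keep the data short.

```python
def group_rows(boxes, per_row=5):
    """Split bubble boxes into rows: sort by y, chunk, then sort each chunk by x."""
    by_y = sorted(boxes, key=lambda b: b[1])
    rows = []
    for start in range(0, len(by_y), per_row):
        row = sorted(by_y[start:start + per_row], key=lambda b: b[0])
        rows.append(row)
    return rows


# Hypothetical bubbles: two rows of three, with slightly uneven y coordinates
boxes = [
    (60, 12, 20, 20), (10, 10, 20, 20), (110, 11, 20, 20),
    (110, 50, 20, 20), (10, 52, 20, 20), (60, 51, 20, 20),
]

rows = group_rows(boxes, per_row=3)
xs = [[b[0] for b in row] for row in rows]
print(xs)  # [[10, 60, 110], [10, 60, 110]]
```

This grouping assumes every row yields exactly `per_row` detected bubbles. A single missed or extra contour shifts all the rows after it, so the size and aspect-ratio filter has to be reliable for the images at hand.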