1. Theoretical elements of a single-layer convolutional neural network

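1. Input is fed in batches. Every batch has the same structure (in the code below, a tensor of shape [dataNum, 28, 28, 1]) and is denoted x.

2. Convolution kernel: core

3. Convolution: cov = tf.nn.conv2d

4. Activation (the prediction, covered in the previous chapter): prediction = tf.nn.relu(cov)

5. Pooling (not covered yet; the next chapter introduces it)

6. Cost (briefly covered in the previous chapter)

7. Optimizer (not covered here; see the optimizer section in my deep learning theory notes)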
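The elements listed above can be traced end to end for a single image in plain NumPy. This is only an illustrative sketch, not the TensorFlow implementation: the helper names (conv2d_same, max_pool_2x2, softmax), the random input, the zero bias, and the label index are assumptions made for the example, and the optimizer step is left out.

```python
import numpy as np

def conv2d_same(x, w):
    """Stride-1 'SAME' convolution (cross-correlation, as tf.nn.conv2d computes).
    x: [H, W, Cin], w: [kh, kw, Cin, Cout] -> [H, W, Cout]. Assumes odd kh, kw."""
    kh, kw, _, cout = w.shape
    h, wd, _ = x.shape
    ph, pw = kh // 2, kw // 2
    xp = np.pad(x, ((ph, ph), (pw, pw), (0, 0)), mode="constant")
    out = np.zeros((h, wd, cout))
    for i in range(h):
        for j in range(wd):
            patch = xp[i:i + kh, j:j + kw, :]  # [kh, kw, Cin]
            out[i, j, :] = np.tensordot(patch, w, axes=([0, 1, 2], [0, 1, 2]))
    return out

def relu(a):
    return np.maximum(a, 0.0)

def max_pool_2x2(a):
    """2x2 max pooling with stride 2. Assumes even H and W."""
    h, wd, c = a.shape
    return a.reshape(h // 2, 2, wd // 2, 2, c).max(axis=(1, 3))

def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()

rng = np.random.default_rng(0)
x = rng.random((28, 28, 1))               # 1. one input image (random stand-in)
core = rng.normal(0, 0.1, (5, 5, 1, 32))  # 2. 32 kernels of size 5x5
bias = np.zeros(32)
cov = conv2d_same(x, core) + bias         # 3. convolution
act = relu(cov)                           # 4. activation
pool = max_pool_2x2(act)                  # 5. pooling
flat = pool.reshape(-1)                   # flatten for the fully connected layer
fc_w = rng.normal(0, 0.1, (flat.size, 10))
probs = softmax(flat @ fc_w)
label = np.zeros(10)
label[3] = 1.0                            # hypothetical one-hot label
loss = -np.sum(label * np.log(probs))     # 6. cross-entropy cost

print(cov.shape, pool.shape, flat.size, probs.shape)
```

The printed shapes show how a 28x28 image becomes 32 feature maps of 28x28, then 14x14 after pooling, and finally a 6272-element vector feeding the 10-class output.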

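2. Technical elements of a single-layer convolutional neural network

Here is the code; read through it yourself. I am not sure what else to write about it... it really is very simple.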

import MinistDataAnalyser as mal
import matplotlib.pyplot as plt
import tensorflow as tf
import numpy as np

ds = mal.getDC()  # MNIST data helper (listed further below)
dataNum = 300     # batch size

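# Input data: flattened 28x28 images and one-hot labels
xI = tf.placeholder(tf.float32,[None,784])
y = tf.placeholder(tf.float32,[None,10])

# Reshape each 784-vector into a 28x28 single-channel image (batch size fixed at dataNum)
x = tf.reshape(xI,[dataNum,28,28,1])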

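# First convolutional layer
coreNaum = 32  # number of convolution kernels
w = tf.Variable(tf.truncated_normal([5,5,1,coreNaum],stddev=0.1))
covBias = tf.Variable(tf.truncated_normal([coreNaum],stddev=0.1))
# 'SAME' padding with stride 1 keeps the 28x28 spatial size: [dataNum,28,28,coreNaum]
cov = tf.nn.conv2d(x,w,strides=[1,1,1,1],padding='SAME')+covBias
cov = tf.nn.relu(cov)
# 2x2 max pooling with stride 2 halves the spatial size: [dataNum,14,14,coreNaum]
pool = tf.nn.max_pool(cov,ksize=[1,2,2,1],strides = [1,2,2,1],padding = "SAME")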

# Optional second convolutional layer (disabled)
# cov2CoreNum = 64
# cov2Core = tf.Variable(tf.truncated_normal([5,5,coreNaum,cov2CoreNum],stddev=0.1))
# cov2Bias = tf.Variable(tf.truncated_normal([cov2CoreNum],stddev=0.1))
# cov2 = tf.nn.conv2d(pool,cov2Core,strides=[1,1,1,1],padding='SAME')+cov2Bias
# pool2 = tf.nn.max_pool(cov2,ksize=[1,2,2,1],strides = [1,2,2,1],padding = "SAME",name="pool2")

# Fully connected layer: flatten the pooled feature maps
covShape = pool.get_shape().as_list()  # static shape: [dataNum,14,14,coreNaum]
fcLen = covShape[1]*covShape[2]*covShape[3]
fcInput = tf.reshape(pool,[-1,fcLen])
fcW = tf.Variable(tf.truncated_normal([fcLen,10],stddev=0.1))
fcBias = tf.Variable(tf.truncated_normal([1,10],stddev=0.1))
#fcW = tf.Variable(np.random.random([fcLen,10]))

# Keep the raw logits: softmax_cross_entropy_with_logits applies softmax internally
logits = tf.matmul(fcInput,fcW)+fcBias
# Random dropout; its effect is similar to regularization
# logits = tf.nn.dropout(logits,0.8)
prediction = tf.nn.softmax(logits)

# Loss
Loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y,logits=logits))

# Gradient descent optimizer
Train = tf.train.GradientDescentOptimizer(0.01).minimize(Loss)

# Accuracy: fraction of samples whose predicted class matches the label
correct_prediction = tf.equal(tf.argmax(prediction,1),tf.argmax(y,1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction,tf.float32))

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    for i in range(1000):
        xd, yd = ds.getBatch(dataNum)
        sess.run(Train, feed_dict={xI: xd, y: yd})
        # Accuracy is measured on the batch that was just trained on
        acy = sess.run(accuracy,feed_dict={xI:xd,y:yd})
        print(acy)

Here, MinistDataAnalyser is an MNIST parsing class that I wrote myself:

import random

import numpy as np
import matplotlib.pyplot as plt
from tensorflow.examples.tutorials.mnist import input_data

class getDC():
    def __init__(self):
        self.dc = []
        self.label = []
        mnist = input_data.read_data_sets("MNIST_data", one_hot=True)
        self.originData = mnist
        trainSet = mnist.train.images
        labelSet = mnist.train.labels
        self.testData = []
        self.testLabel = []
        # Keep the first 50000 training images and their one-hot labels
        for i in range(50000):
            img = trainSet[i]
            img = img.reshape(1, 28*28)
            self.dc.append(img)
            self.label.append(labelSet[i])
        self.dc = np.array(self.dc)
        self.dc = self.dc.reshape([50000,784])
        self.label=np.array(self.label)
        self.label = self.label.reshape([50000,10])
        # Copy the 10000 test images and labels
        for i in range(10000):
            tempImg = mnist.test.images[i]
            tempImg = tempImg.reshape(1,28*28)
            self.testData.append(tempImg)
            self.testLabel.append(mnist.test.labels[i])
        self.testData = np.array(self.testData)
        self.testData = self.testData.reshape([10000, 784])
        self.testLabel = np.array(self.testLabel)
        self.testLabel = self.testLabel.reshape([10000, 10])
        self.index = 0
        self.oritinDataCopy = self.dc.copy()
        print(len(self.oritinDataCopy))

    def getBatchGF(self,scale):
        # Use the batching helper that ships with TensorFlow's MNIST loader
        return self.originData.train.next_batch(scale)

    def getBatch2(self,scale):
        # Unfinished attempt at sampling without replacement: a NumPy array has no
        # remove() and nothing is returned, so the training script uses getBatch instead
        dataBatch = []
        labelBatch = []
        indicator = []
        for i in range(scale):
            randomValue = random.randint(0, len(self.oritinDataCopy) - 1)
            dataBatch.append(self.dc[randomValue])
            labelBatch.append(self.label[randomValue])
            indicator.append(randomValue)
        for i in range(len(indicator)):
            self.oritinDataCopy.remove(indicator[i])

    def getBatch(self,scale):
        # Draw `scale` random training samples (with replacement)
        dataBatch = []
        labelBatch = []
        for i in range(scale):
            randomValue = random.randint(0,50000-1)
            dataBatch.append(self.dc[randomValue])
            labelBatch.append(self.label[randomValue])
        dataBatch = np.array(dataBatch).reshape([scale,784])
        labelBatch = np.array(labelBatch).reshape([scale,10])
        return dataBatch,labelBatch

# dc = getDC()
# img = dc.dc[0].reshape([28,28])
# plt.figure(0)
# plt.imshow(img)
# plt.show()
# print(img)

Of course, TensorFlow also has its own built-in parsing class. The dataset used here is MNIST.
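The getBatch method draws its samples one at a time in a Python loop. As a rough sketch of an alternative, the same kind of batch can be taken with a single NumPy index array, and a shuffled pass over the data gives sampling without replacement. The function names (sample_batch, epoch_batches) and the random stand-in arrays are assumptions for illustration; they are not part of the class above.

```python
import numpy as np

def sample_batch(data, labels, scale, rng):
    """Draw `scale` rows with replacement using one index array."""
    idx = rng.integers(0, data.shape[0], size=scale)
    return data[idx], labels[idx]

def epoch_batches(data, labels, scale, rng):
    """Yield batches that cover the data exactly once (no replacement)."""
    order = rng.permutation(data.shape[0])
    for start in range(0, len(order), scale):
        idx = order[start:start + scale]
        yield data[idx], labels[idx]

rng = np.random.default_rng(0)
# Stand-ins for self.dc and self.label: 1000 fake images with one-hot labels
fake_images = rng.random((1000, 784)).astype(np.float32)
fake_labels = np.eye(10, dtype=np.float32)[rng.integers(0, 10, size=1000)]

xd, yd = sample_batch(fake_images, fake_labels, 300, rng)
print(xd.shape, yd.shape)

batch_sizes = [len(bx) for bx, _ in epoch_batches(fake_images, fake_labels, 300, rng)]
print(batch_sizes)
```

The last batch of an epoch is smaller whenever the dataset size is not a multiple of the batch size, which matters for a graph that reshapes its input to a fixed batch size.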

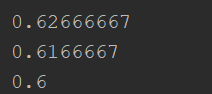

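Using gradient descent as the optimizer (learning rate = 0.01), trained for 1000 iterations, the accuracy is:

The smaller the learning rate, the slower the convergence: with a learning rate of 0.001, after 1000 iterations the accuracy is only about 0.2.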
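The effect of the learning rate on convergence speed can be seen on a much simpler problem. The sketch below runs plain gradient descent on the toy objective f(w) = (w - 3)^2, which is an assumption chosen for illustration and has nothing to do with the MNIST network itself; gradient_descent is a hypothetical helper.

```python
def gradient_descent(lr, steps, w0=0.0):
    """Minimize f(w) = (w - 3)^2 with fixed-step gradient descent."""
    w = w0
    for _ in range(steps):
        grad = 2.0 * (w - 3.0)  # derivative of (w - 3)^2
        w -= lr * grad
    return w

errors = {}
for lr in (0.01, 0.001):
    w = gradient_descent(lr, 1000)
    errors[lr] = abs(w - 3.0)  # distance from the minimum at w = 3
    print(lr, errors[lr])
```

With the same 1000 steps, the run with learning rate 0.01 ends essentially at the minimum, while the run with 0.001 is still noticeably short of it.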

Using AdamOptimizer as the optimizer (learning rate = 0.001), trained for 1000 iterations, the accuracy is:

Wow...
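One likely reason for the difference is that Adam rescales each step by a running estimate of the gradient magnitude, so a small gradient does not automatically mean a tiny step. The sketch below implements the standard Adam update in plain Python and compares it with plain gradient descent at the same learning rate. The flat toy objective f(w) = 0.01 * (w - 1)^2 and the helper names are assumptions for illustration, not the MNIST experiment above.

```python
import math

def grad(w):
    """Gradient of the toy objective f(w) = 0.01 * (w - 1)^2."""
    return 0.02 * (w - 1.0)

def sgd_minimize(w0, lr=0.001, steps=1000):
    w = w0
    for _ in range(steps):
        w -= lr * grad(w)
    return w

def adam_minimize(w0, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    w, m, v = w0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g      # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g  # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)         # bias correction
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

sgd_err = abs(sgd_minimize(0.0) - 1.0)
adam_err = abs(adam_minimize(0.0) - 1.0)
print(sgd_err, adam_err)
```

On this flat objective, gradient descent barely moves in 1000 steps at learning rate 0.001, while Adam gets much closer to the minimum at w = 1.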