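Preface

After extracting feature points, we usually need some strategy to match them. In some situations, though, the matchers provided by the library may not meet our needs, and we have to implement the matching algorithm ourselves, which means working directly with the extracted feature descriptors. The compute method extracts the descriptors of the feature points and stores them in a matrix (Mat). But when I read the source to work out how the matrix elements correspond to the feature points, I found that the documentation for compute seems somewhat problematic.

Steps for extracting feature descriptors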

Mat descriptors1;

Mat descriptors2;

ptrFeature2D->compute(image1,keypoints1,descriptors1);

ptrFeature2D->compute(image2,keypoints2,descriptors2);

Prototype of the compute function:

```cpp
CV_WRAP virtual void compute( InputArray image,
                              CV_OUT CV_IN_OUT std::vector<KeyPoint>& keypoints,
                              OutputArray descriptors );
```

Description:

@param image Image.

@param keypoints Input collection of keypoints. Keypoints for which a descriptor cannot be computed are removed. Sometimes new keypoints can be added, for example: SIFT duplicates keypoint with several dominant orientations (for each orientation).

@param descriptors Computed descriptors. In the second variant of the method descriptors[i] are descriptors computed for a keypoints[i]. Row j is the keypoints (or keypoints[i]) is the descriptor for keypoint j-th keypoint. (I honestly could not work out what this last sentence means.)

Parameter explanation:

image: the image to be described

keypoints: the detected feature points

descriptors: the output matrix of feature descriptors
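One plausible reading of the garbled doc sentence is "row j is the descriptor of the j-th keypoint". The sketch below shows what that layout means for indexing. It uses plain standard C++ with a hypothetical `DescriptorTable` struct and `descriptorOf` helper (neither is an OpenCV name) as a stand-in for the real matrix. On an actual `cv::Mat` of floats, the analogous access would be `descriptors.row(j)` or `descriptors.ptr<float>(j)`.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a descriptor matrix: N rows (one per keypoint)
// by D columns (descriptor components), stored row by row in a flat buffer.
struct DescriptorTable {
    std::size_t rows;         // number of keypoints
    std::size_t cols;         // descriptor length, e.g. 64 for SURF
    std::vector<float> data;  // rows * cols values in row-major order
};

// Returns a pointer to the first component of the descriptor of keypoint j.
// The descriptor occupies the next t.cols floats.
const float* descriptorOf(const DescriptorTable& t, std::size_t j) {
    assert(t.data.size() == t.rows * t.cols);
    assert(j < t.rows);
    return t.data.data() + j * t.cols;
}
```

Under this reading, the same index j addresses both keypoints[j] and row j of the matrix, provided both are taken after compute has run, since compute may remove or add keypoints.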

Do it yourself

Verifying the correspondence between keypoints and descriptors:

Test code (declarations omitted):

Ptr<cv::xfeatures2d::SURF> detector = cv::xfeatures2d::SURF::create(Hessian_Threshold);

vector<KeyPoint> keypoints;

detector->detect(gray_img, keypoints, Mat()); // detect the keypoints

detector->compute(gray_img, keypoints, descriptors1); // compute one descriptor per keypoint

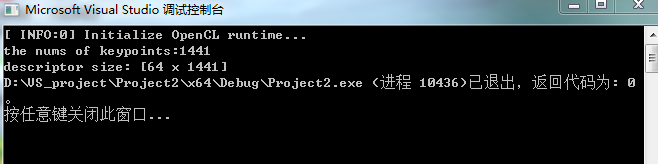

cout << "the nums of keypoints:" << keypoints.size() << '\n' << "descriptor size: " << descriptors1.size();

实验结果:
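The printed descriptor size is easy to misread, because `Mat::size()` returns a `cv::Size`, and a `cv::Size` is streamed as `[width x height]`, that is, columns first and rows second. The sketch below mimics that formatting in plain C++ so the ordering is explicit. `Shape` and `formatAsSize` are hypothetical names made up for this illustration, not OpenCV types.

```cpp
#include <cassert>
#include <sstream>
#include <string>

// Hypothetical stand-in for a matrix shape; not an OpenCV type.
struct Shape {
    int rows;  // height of the matrix
    int cols;  // width of the matrix
};

// Formats a shape the way a cv::Size is streamed: "[width x height]",
// where width is the column count and height is the row count.
std::string formatAsSize(const Shape& s) {
    std::ostringstream out;
    out << "[" << s.cols << " x " << s.rows << "]";
    return out.str();
}
```

A matrix with 1441 rows and 64 columns would therefore be shown as `[64 x 1441]`. Printing `descriptors1.rows` and `descriptors1.cols` separately avoids the ambiguity.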

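Analysis and summary

There are 1441 feature points in total, and a SURF feature vector has 64 dimensions, so:

The matrix that stores the descriptors has one row per feature point and one column per descriptor component: row j holds the 64-dimensional descriptor of keypoints[j], which gives 1441 rows and 64 columns. Note that Mat::size() returns a cv::Size, which prints as [width x height], that is [cols x rows], so this matrix is displayed as [64 x 1441] and should not be read as 64 rows. This agrees with what the awkward doc sentence is trying to say: row j is the descriptor of the j-th keypoint.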
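Once the correspondence between matrix entries and keypoints is known, writing a custom matcher is mostly a matter of comparing descriptors pairwise. The following is a minimal sketch of brute-force nearest-neighbour matching with squared Euclidean distance, written in plain standard C++. It assumes each descriptor has already been copied out into its own `std::vector<float>`, indexed the same way as its keypoint. The names `Descriptor`, `squaredDistance` and `matchNearest` are hypothetical, and this is only one possible strategy, not the library's implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

using Descriptor = std::vector<float>;

// Squared Euclidean distance between two descriptors of equal length.
float squaredDistance(const Descriptor& a, const Descriptor& b) {
    assert(a.size() == b.size());
    float sum = 0.0f;
    for (std::size_t k = 0; k < a.size(); ++k) {
        const float d = a[k] - b[k];
        sum += d * d;
    }
    return sum;
}

// For each query descriptor i, returns the index j of the closest train
// descriptor. Returns an empty vector when there is nothing to match against.
std::vector<std::size_t> matchNearest(const std::vector<Descriptor>& query,
                                      const std::vector<Descriptor>& train) {
    std::vector<std::size_t> best;
    if (train.empty()) return best;
    best.reserve(query.size());
    for (const Descriptor& q : query) {
        std::size_t bestIdx = 0;
        float bestDist = std::numeric_limits<float>::max();
        for (std::size_t j = 0; j < train.size(); ++j) {
            const float dist = squaredDistance(q, train[j]);
            if (dist < bestDist) {
                bestDist = dist;
                bestIdx = j;
            }
        }
        best.push_back(bestIdx);
    }
    return best;
}
```

The result at position i is the index of the best match for query keypoint i, so it can be used directly to look up the matched keypoint in the second image. Extra criteria such as a distance threshold or a ratio test could be added inside the inner loop if the application needs them.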