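1. Background

I have recently been learning the Python requests module and wanted to build a tool that takes a search keyword and a page count, finds all the blog posts listed on those result pages, and writes the results to an Excel file. requests fetches the pages, BeautifulSoup extracts the target data (blog title and blog URL), and xlsxwriter lays out the Excel template and writes the content into it. The same approach can later be reused to scrape other kinds of data and store it in files or a database.

2. Code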

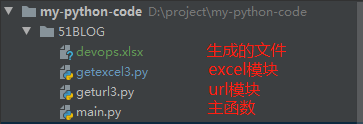

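2.1 Structure

- The getexcel module creates the Excel file and its worksheet, lays out the Excel template, and writes the given content

- The geturl module builds the page URLs from the keyword, fetches each page, and uses BeautifulSoup to extract the blog titles and URLs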
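
The URL-building step of the geturl module can be tried on its own, without any network access. The following is a minimal sketch, not the module itself: `build_search_urls` and `SEARCH_URL` are hypothetical names introduced here for illustration, and `urlencode` from the standard library is swapped in for plain string concatenation so that keywords containing spaces or non-ASCII characters are percent-encoded.

```python
from urllib.parse import urlencode

# Search endpoint as used in geturl3.py
SEARCH_URL = "http://blog.51cto.com/search/result"


def build_search_urls(keyword, pages):
    """Return one search URL per result page, from page 1 to `pages`."""
    urls = []
    for page in range(1, pages + 1):
        # urlencode percent-encodes the keyword and joins the parameters with "&"
        query = urlencode({"q": keyword, "type": "", "page": page})
        urls.append(SEARCH_URL + "?" + query)
    return urls


if __name__ == "__main__":
    for url in build_search_urls("kafka", 2):
        print(url)
```

For the keyword kafka and two pages, this yields the same URLs that the concatenation in geturl3.py produces.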

2.2 Code

- getexcel3.py

#!/usr/bin/env python
# -*- coding:utf-8 -*-
# @Author : kelly

import xlsxwriter


class create_excle:

    def __init__(self):
        # Column headers written to the second row of the sheet
        self.tag_list = ["blog_name", "blog_url"]

    def create_workbook(self, search=" "):
        # The Excel file and its worksheet are named after the search keyword
        excle_name = search + '.xlsx'
        workbook = xlsxwriter.Workbook(excle_name)
        worksheet_M = workbook.add_worksheet(search)
        print('create %s....' % excle_name)
        return workbook, worksheet_M

    def col_row(self, worksheet):
        # Height of the title row and width of the two data columns
        worksheet.set_row(0, 17)
        worksheet.set_column('A:A', 58)
        worksheet.set_column('B:B', 58)

    def shell_format(self, workbook):
        # Format for the merged title row
        merge_format = workbook.add_format({
            'bold': 1,
            'border': 1,
            'align': 'center',
            'valign': 'vcenter',
            'fg_color': '#FAEBD7'
        })
        # Format for the column headers
        name_format = workbook.add_format({
            'bold': 1,
            'border': 1,
            'align': 'center',
            'valign': 'vcenter',
            'fg_color': '#E0FFFF'
        })
        # Format for the data cells
        normal_format = workbook.add_format({
            'align': 'center',
        })
        return merge_format, name_format, normal_format

    # Write the title and the column names
    def write_title(self, worksheet, search, merge_format):
        title = search + " search results"
        worksheet.merge_range('A1:B1', title, merge_format)
        print('write title success')

    def write_tag(self, worksheet, name_format):
        tag_row = 1
        tag_col = 0
        for num in self.tag_list:
            worksheet.write(tag_row, tag_col, num, name_format)
            tag_col += 1
        print('write tag success')

    # Write the content
    def write_context(self, worksheet, con_dic, normal_format):
        # Data rows start at row index 2, below the title and header rows.
        # Every entry in con_dic gets its own row: name in column A, URL in column B.
        row = 2
        for k, v in con_dic.items():
            worksheet.write(row, 0, k, normal_format)
            worksheet.write(row, 1, v, normal_format)
            row += 1
        print('write context success')

    # Close the workbook, which saves the file to disk
    def workbook_close(self, workbook):
        workbook.close()


if __name__ == '__main__':
    print('This is create excel mode')
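
The row and column arithmetic used when writing the content can be checked without creating a workbook. The following is a minimal sketch under that assumption: `layout_cells` is a hypothetical helper, not part of getexcel3.py, that maps a `{blog_name: blog_url}` dictionary to the zero-based `(row, col, value)` positions the data cells should occupy below the title row (index 0) and the header row (index 1).

```python
def layout_cells(entries, first_row=2):
    """Map {name: url} pairs to (row, col, value) cell positions."""
    cells = []
    for offset, (name, url) in enumerate(entries.items()):
        row = first_row + offset
        cells.append((row, 0, name))  # column A: blog name
        cells.append((row, 1, url))   # column B: blog URL
    return cells


if __name__ == "__main__":
    sample = {"post one": "http://example.com/1", "post two": "http://example.com/2"}
    for cell in layout_cells(sample):
        print(cell)
```

Each tuple could then be passed on as `worksheet.write(row, col, value, normal_format)`. Because the result is keyed by blog name, two posts with the same title end up in a single row.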

- geturl3.py

#!/usr/bin/env python
# -*- coding:utf-8 -*-

import sys
from urllib.parse import quote

import requests
from bs4 import BeautifulSoup


class get_urldic:

    # Read the search keyword and page count, then build the page URLs
    def get_url(self):
        urlList = []
        first_url = 'http://blog.51cto.com/search/result?q='
        after_url = '&type=&page='
        try:
            search = input("Please input search name:")
            page = int(input("Please input page:"))
        except Exception as e:
            print('Input error:', e)
            sys.exit(1)
        for num in range(1, page + 1):
            # quote() percent-encodes spaces and non-ASCII characters in the keyword
            url = first_url + quote(search) + after_url + str(num)
            urlList.append(url)
        print("Please wait....")
        return urlList, search

    # Download the raw HTML of every page
    def get_html(self, urlList):
        response_list = []
        for r_num in urlList:
            # A timeout keeps the script from hanging on an unresponsive server
            request = requests.get(r_num, timeout=10)
            response = request.content
            response_list.append(response)
        return response_list

    # Extract blog_name and blog_url from each page
    def get_soup(self, html_doc):
        result = {}
        for g_num in html_doc:
            soup = BeautifulSoup(g_num, 'html.parser')
            context = soup.find_all('a', class_='m-1-4 fl')
            for i in context:
                title = i.get_text()
                result[title.strip()] = i['href']
        return result


if __name__ == '__main__':
    blog = get_urldic()
    urllist, search = blog.get_url()
    html_doc = blog.get_html(urllist)
    result = blog.get_soup(html_doc)
    for k, v in result.items():
        print('search blog_name is:%s,blog_url is:%s' % (k, v))

- main.py

#!/usr/bin/env python
# -*- coding:utf-8 -*-

import geturl3
import getexcel3


# Build the {blog_name: blog_url} dictionary
def get_dic():
    blog = geturl3.get_urldic()
    urllist, search = blog.get_url()
    html_doc = blog.get_html(urllist)
    result = blog.get_soup(html_doc)
    return result, search


# Write the results to Excel
def write_excle(urldic, search):
    excle = getexcel3.create_excle()
    workbook, worksheet = excle.create_workbook(search)
    excle.col_row(worksheet)
    merge_format, name_format, normal_format = excle.shell_format(workbook)
    excle.write_title(worksheet, search, merge_format)
    excle.write_tag(worksheet, name_format)
    excle.write_context(worksheet, urldic, normal_format)
    excle.workbook_close(workbook)


def main():
    url_dic, search_name = get_dic()
    write_excle(url_dic, search_name)


if __name__ == '__main__':
    main()

3. Test results

3.1 Running the program

Run main.py and enter the search keyword and the number of pages to query.
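
The two values could also be passed on the command line instead of being typed at the prompts. The following is a minimal sketch of that variation using the standard argparse module. main.py as shown does not do this; `parse_args` and the argument names `keyword` and `pages` are assumptions made for illustration.

```python
import argparse


def parse_args(argv=None):
    """Parse a keyword and a positive page count from the command line."""
    parser = argparse.ArgumentParser(
        description="Search 51CTO blogs and export the results to Excel"
    )
    parser.add_argument("keyword", help="search keyword, for example kafka")
    parser.add_argument("pages", type=int, help="number of result pages to fetch")
    args = parser.parse_args(argv)
    if args.pages < 1:
        # parser.error prints a usage message and exits with a non-zero status
        parser.error("pages must be at least 1")
    return args
```

Calling `parse_args(["kafka", "3"])` returns an object with `keyword` set to `"kafka"` and `pages` set to `3`. With `argv=None`, argparse reads the arguments from the actual command line.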

3.2 Results

With the keyword kafka and the chosen page count, a file named kafka.xlsx is generated.

Open kafka.xlsx to view the results.

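That completes the workflow of using requests, xlsxwriter, and BeautifulSoup to scrape 51CTO for a given keyword and page count. requests fetches the full HTML of each page into a list, the HTML is parsed to find all the <a> tags, and each tag's text and URL are then extracted. That last step can be a little convoluted, so it helps to step through it in a debugger and watch how the variables change.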
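
To see how the text and URL come out of each <a> tag without hitting the site, the extraction step can be reproduced on a small inline page. The following is a minimal sketch that deliberately swaps in the standard library's `html.parser` for BeautifulSoup so that it runs with no third-party packages. `LinkCollector`, `extract_links`, and the sample HTML with its example.com URLs are made up for illustration; only the class value `m-1-4 fl` comes from geturl3.py.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect {link text: href} for <a> tags with a given class attribute."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.result = {}
        self._href = None  # href of the matching <a> currently being read
        self._text = []    # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("class") == self.css_class:
            self._href = attrs.get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Same shape as get_soup: stripped link text -> href
            self.result["".join(self._text).strip()] = self._href
            self._href = None


SAMPLE = """
<div>
  <a class="m-1-4 fl" href="http://example.com/post/1"> Kafka basics </a>
  <a class="other" href="http://example.com/skip">ignored</a>
  <a class="m-1-4 fl" href="http://example.com/post/2">Kafka <em>in</em> practice</a>
</div>
"""


def extract_links(html, css_class="m-1-4 fl"):
    parser = LinkCollector(css_class)
    parser.feed(html)
    parser.close()
    return parser.result


if __name__ == "__main__":
    for name, url in extract_links(SAMPLE).items():
        print(name, url)
```

Setting a breakpoint in `handle_endtag` shows the dictionary growing by one entry per matching link, which mirrors what happens inside the inner loop of `get_soup`.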