一、 需求描述

统计硅谷影音视频网站的常规指标,各种 TopN 指标:

–统计视频观看数 Top10

–统计视频类别热度 Top10

–统计视频观看数 Top20 所属类别以及类别包含的 Top20 的视频个数

–统计视频观看数 Top50 所关联视频的所属类别 Rank

–统计每个类别中的视频热度 Top10

–统计每个类别中视频流量 Top10

–统计上传视频最多的用户 Top10 以及他们上传的观看次数在前 20 视频

–统计每个类别视频观看数 Top10

二、项目

2.1 数据结构

1、视频表

2、用户表

2.2 ETL 原始数据

通过观察原始数据形式,可以发现,视频可以有多个所属分类,每个所属分类用&符号分割,且分割的两边有空格字符,同时相关视频也是可以有多个元素,多个相关视频又用“\t”进行分割。为了分析数据时方便对存在多个子元素的数据进行操作,我们首先进行数据重组清洗操作。即:将所有的类别用“&”分割,同时去掉两边空格,多个相关视频 id 也使用“&”进行分割。

1、ETL 之 ETLUtil

/**

* 1.过滤脏数据

* 2.将类别字段中的空格

* 3.替换关联视频中的分隔符

*/

public class ETLUtil {

public static String etlStr(String line){

//切割数据

String[] split = line.split("\t");

//1. 过滤脏数据

if (split.length<9) return null;

//2. 去掉类别字段中的空格

split[3] = split[3].replaceAll(" ", "");

//3.替换关联视频的分隔符

StringBuffer sb = new StringBuffer();

for (int i=0; i < split.length; i++) {

if (i < 9) {

if (i==split.length - 1){

sb.append(split[i]);

}else {

sb.append(split[i]).append("\t");

}

}else {

if (i==split.length - 1){

sb.append(split[i]);

}else {

sb.append(split[i]).append("&");

}

}

}

return sb.toString();

}

}2、ETL 之 Mapper

package com.atlxl;

import org.apache.commons.lang.StringUtils;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @author LXL

* @create 2019-06-05 21:26

*/

public class ETlMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

private Text k = new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1.获取一行数据

String line = value.toString();

//2.清洗数据

String etlStr = ETLUtil.etlStr(line);

//3.写出数据

if (StringUtils.isBlank(etlStr)) {

return;

}

k.set(etlStr);

context.write(k, NullWritable.get());

}

}3、ETL 之 Runner

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

/**

* @author LXL

* @create 2019-06-05 23:22

*/

public class ETLDriver implements Tool{

private Configuration configuration;

public int run(String[] args) throws Exception {

//1.获取job对象

Job job = Job.getInstance(getConf());

//2.封装driver类

job.setJarByClass(ETLDriver.class);

//3.关联Mapper类

job.setMapperClass(ETlMapper.class);

//4.Mapper输出的KV类型

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(NullWritable.class);

//5.最终输出类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(NullWritable.class);

//6.输入输出路径

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

job.setNumReduceTasks(0);

//7.提交任务

boolean result = job.waitForCompletion(true);

return result ? 0 : 1;

}

public void setConf(Configuration conf) {

configuration = conf;

}

public Configuration getConf() {

return configuration;

}

public static void main(String[] args) throws Exception {

int run = ToolRunner.run(new ETLDriver(), args);

System.out.println(run);

}

}4、执行 ETL

$ bin/yarn jar ~/softwares/jars/gulivideo-0.0.1-SNAPSHOT.jar \

com.atguigu.etl.ETLVideosRunner \

/gulivideo/video/2008/0222 \

/gulivideo/output/video/2008/0222三、准备工作

上传数据到集群:

[root@node01 datas]# hadoop fs -put user /

[root@node01 datas]# hadoop fs -put video /执行jar包:

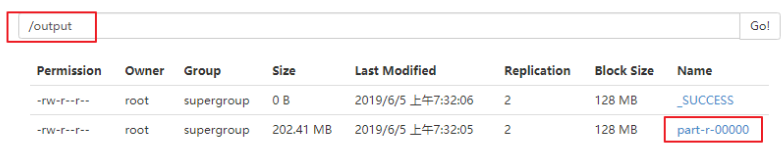

[root@node01 datas]# yarn jar etl.jar com.atlxl.ETLDriver /video/2008/0222 /output

3.1 创建表

创建表:gulivideo_ori,gulivideo_user_ori,

创建表:gulivideo_orc,gulivideo_user_orc

gulivideo_ori:

create table gulivideo_ori(

videoId string,

uploader string,

age int,

category array<string>,

length int,

views int,

rate float,

ratings int,

comments int,

relatedId array<string>)

row format delimited

fields terminated by "\t"

collection items terminated by "&"

stored as textfile;gulivideo_user_ori:

create table gulivideo_user_ori(

uploader string,

videos int,

friends int)

row format delimited

fields terminated by "\t"

stored as textfile;然后把原始数据插入到 orc 表中

gulivideo_orc:

create table gulivideo_orc(

videoId string,

uploader string,

age int,

category array<string>,

length int,

views int,

rate float,

ratings int,

comments int,

relatedId array<string>)

clustered by (uploader) into 8 buckets

row format delimited fields terminated by "\t"

collection items terminated by "&"

stored as orc;gulivideo_user_orc:

create table gulivideo_user_orc(

uploader string,

videos int,

friends int)

row format delimited

fields terminated by "\t"

stored as orc;3.2 导入ETL后的数据

gulivideo_ori:

load data inpath "/gulivideo/output/video/2008/0222" into table

gulivideo_ori;

gulivideo_user_ori:

load data inpath "/gulivideo/user/2008/0903" into table

gulivideo_user_ori;

3.3 向 ORC 表插入数据

gulivideo_orc:

insert into table gulivideo_orc select * from gulivideo_ori;gulivideo_user_orc:

insert into table gulivideo_user_orc select * from

gulivideo_user_ori;四、业务分析

4.1 统计视频观看数 Top10

思路:使用 order by 按照 views 字段做一个全局排序即可,同时我们设置只显示前 10 条。

最终代码:

select * from gulivideo_orc order by views desc limit 10;这里可能会出现内存溢出,报错可以查看一下日志。如果是内存溢出看下一章常见问题解决。

也可以用以下代码:

select

videoId,

uploader,

age,

category,

length,

views,

rate,

ratings,

comments

from

gulivideo_orc

order by

views

desc limit

10;4.2 统计视频类别热度 Top10

思路:

(1)即统计每个类别有多少个视频,显示出包含视频最多的前 10 个类别。

(2) 我们需要按照类别 group by 聚合,然后 count组内的 videoId 个数即可。

(3) 因为当前表结构为:一个视频对应一个或多个类别。所以如果要 group by 类别,需要先将类别进行列转行(展开),然后再进行 count 即可。

(4) 最后按照热度排序,显示前 10 条。

最终代码:

select

category_name as category,

count(t1.videoId) as hot

from (

select

videoId,

category_name

from

gulivideo_orc lateral view explode(category) t_catetory as category_name) t1

group by

t1.category_name

order by

hot

desc limit

10;

4.3 统计出视频观看数最高的 20 个视频的所属类别以及类别包含Top20 视频的个数

思路:

(1) 先找到观看数最高的 20 个视频所属条目的所有信息,降序排列

(2) 把这 20 条信息中的 category 分裂出来(列转行)

(3)最后查询视频分类名称和该分类下有多少个 Top20 的视频

最终代码:

select

category_name as category,

count(t2.videoId) as hot_with_views

from (

select

videoId,

category_name

from (

select

*

from

gulivideo_orc

order by

views

desc limit

20) t1 lateral view explode(category) t_catetory as category_name) t2

group by

category_name

order by

hot_with_views

desc;4.4 统计视频观看数 Top50 所关联视频的所属类别 Rank

思路:

(1) 查询出观看数最多的前 50 个视频的所有信息(当然包含了每个视频对应的关联视频),记为临时表 t1

t1:观看数前 50 的视频

select * from gulivideo_orc

order by views

desc limit 50;(2) 将找到的 50 条视频信息的相关视频 relatedId 列转行,记为临时表 t2

t2:将相关视频的 id 进行列转行操作

select

explode(relatedId) as videoId

from

t1;(3) 将相关视频的 id 和 gulivideo_orc 表进行 inner join 操作

t5:得到两列数据,一列是 category,一列是之前查询出来的相关视频 id

(select distinct(t2.videoId), t3.category

from t2

inner join

gulivideo_orc t3 on t2.videoId = t3.videoId) t4 lateral view

explode(category) t_catetory as category_name;(4) 按照视频类别进行分组,统计每组视频个数,然后排行

最终代码:

SELECT *

FROM (

SELECT category_name, COUNT(*) AS hot

FROM (

SELECT *

FROM (

SELECT *

FROM (

SELECT DISTINCT relatedId_name

FROM (

SELECT videoId, views, category, relatedId

FROM gulivideo_orc

ORDER BY views DESC

LIMIT 50

) t1

LATERAL VIEW explode(relatedId) relatedId_t AS relatedId_name

) t2

JOIN gulivideo_orc t3 ON t2.relatedId_name = t3.videoId

) t4

LATERAL VIEW explode(category) category_t AS category_name

) t5

GROUP BY category_name

) t6

ORDER BY hot DESC;

4.5 统计每个类别中的视频热度Top10,以Music为例

思路:

(1)要想统计Music类别中的视频热度Top10,需要先找到Music类别,那么就需要将category展开,所以可以创建一张表用于存放categoryId展开的数据。

(2) 向category展开的表中插入数据。

(3) 统计对应类别(Music)中的视频热度。

最终代码:

创建表类别表:

create table gulivideo_category(

videoId string,

uploader string,

age int,

categoryId string,

length int,

views int,

rate float,

ratings int,

comments int,

relatedId array<string>)

row format delimited

fields terminated by "\t"

collection items terminated by "&"

stored as orc;向类别表中插入数据:

insert into table gulivideo_category

select

videoId,

uploader,

age,

categoryId,

length,

views,

rate,

ratings,

comments,

relatedId

from

gulivideo_orc lateral view explode(category) catetory as categoryId;统计Music类别的Top10(也可以统计其他)

select

videoId,

views

from

gulivideo_category

where

categoryId = "Music"

order by

views

desc limit

10;4.6 统计每个类别中视频流量Top10,以Music为例

思路:

(1) 创建视频类别展开表(categoryId列转行后的表)

(2) 按照ratings排序即可

最终代码:

select

videoId,

views,

ratings

from

gulivideo_category

where

categoryId = "Music"

order by

ratings

desc limit

10;4.7 统计上传视频最多的用户 Top10 以及他们上传的观看次数在前 20 的视频

思路:

(1) 先找到上传视频最多的 10 个用户的用户信息

select * from gulivideo_user_orc

order by videos

desc limit 10;(2) 通过 uploader 字段与 gulivideo_orc 表进行 join,得到的信息按照 views 观看次数进行排序

即可。

最终代码:

SELECT *

FROM (

SELECT views, videoId, t1.uploader

FROM (

SELECT uploader, videos

FROM gulivideo_user_orc

ORDER BY videos DESC

LIMIT 10

) t1

JOIN gulivideo_orc t2 ON t1.uploader = t2.uploader

) t3

ORDER BY views DESC

LIMIT 20;4.8 统计每个类别视频观看数Top10

思路:

(1) 先得到categoryId展开的表数据

(2)子查询按照categoryId进行分区,然后分区内排序,并生成递增数字,该递增数字这一列起名为rank列

(3)通过子查询产生的临时表,查询rank值小于等于10的数据行即可。

最终代码:

select

t1.*

from (

select

videoId,

categoryId,

views,

row_number() over(partition by categoryId order by views desc) rank from gulivideo_category) t1

where

rank <= 10;4.9.统计视频观看数Top20所属类别

select

category_name as category,

count(t2.videoId) as hot_with_views

from (

select

videoId,

category_name

from (

select

*

from

gulivideo_orc

order by

views

desc limit

20) t1 lateral view explode(category) t_catetory as category_name) t2

group by

category_name

order by

hot_with_views

desc;