1 Dispatching between the fast path and the slow path

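Why does TCP split receive processing into a fast path and a slow path? The answer is efficiency: when a segment qualifies for the fast path, the receive code can get by with fewer checks.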

1.1 Header prediction flag: pred_flags

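Which segment does a connection most expect to receive next? There are two cases:

- If the local end has just sent data, the most expected segment is an acknowledgment of that data;

- If the local end is only receiving, the most expected segment is a data segment whose starting sequence number is rcv_nxt.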

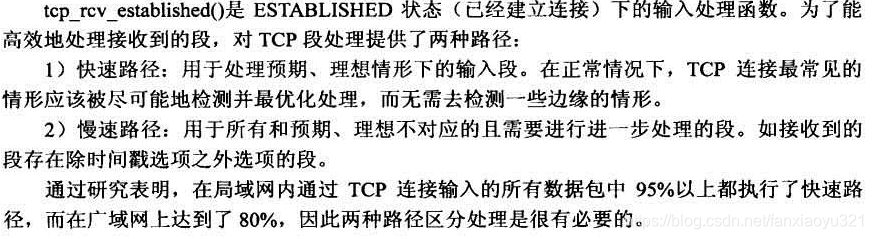

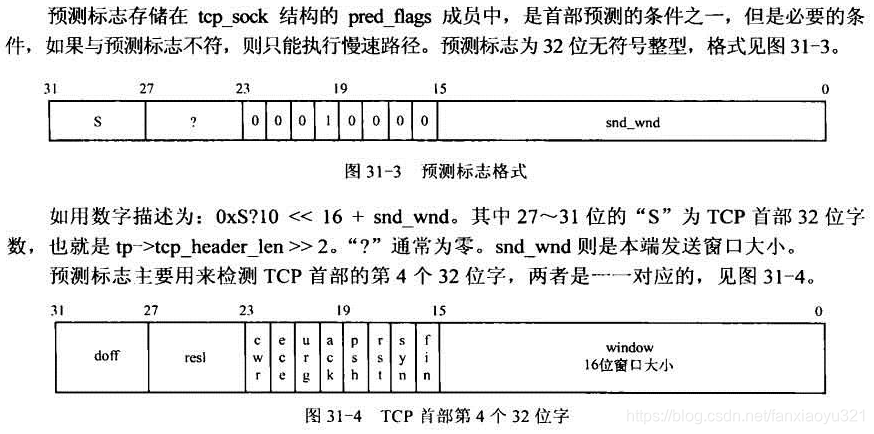

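To express this expectation, the TCB carries a pred_flags field. This 32-bit value corresponds to the fourth 32-bit word of the TCP header, which holds the data offset, the reserved bits, the flags, and the window. If the two match, the segment is considered a basic match. The figures in this section show how pred_flags maps onto the TCP header.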
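The layout can be made concrete with a small user-space sketch. It is not kernel code: the helper names are hypothetical, and all values are kept in host byte order for readability, whereas the kernel stores pred_flags in network byte order via htonl().

```c
#include <stdint.h>

/* ACK flag as it appears in the fourth header word, host byte order. */
#define SKETCH_FLAG_ACK 0x00100000u

/* Build the predicted word. header_len is in bytes and a multiple of 4,
 * so (header_len << 26) equals (header_len / 4) << 28, i.e. the data
 * offset lands in the top 4 bits. */
static uint32_t make_pred_flags(uint32_t header_len, uint16_t window)
{
    return (header_len << 26) | SKETCH_FLAG_ACK | window;
}

/* Header length in bytes, recovered from the 4-bit data offset. */
static uint32_t pred_header_len(uint32_t pred)
{
    return (pred >> 28) * 4;
}

/* The eight flag bits (CWR ECE URG ACK PSH RST SYN FIN). */
static uint32_t pred_flag_bits(uint32_t pred)
{
    return (pred >> 16) & 0xFFu;
}

/* The 16-bit window field. */
static uint32_t pred_window(uint32_t pred)
{
    return pred & 0xFFFFu;
}
```

For a 32-byte header (20 bytes plus an aligned timestamp option) and a window field of 229, this yields 0x801000E5: data offset 8, only the ACK bit set, window 229.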

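1.1.1 Setting the header prediction flag

A pred_flags value of 0 turns header prediction off, and every incoming segment is then handled on the slow path. When prediction can be enabled, the code sets the flag through tcp_fast_path_check().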

static inline void __tcp_fast_path_on(struct tcp_sock *tp, u32 snd_wnd)

{

//pred_flags has three parts: the header length, the ACK flag, and the send window (i.e. the peer's receive window)

tp->pred_flags = htonl((tp->tcp_header_len << 26) |

ntohl(TCP_FLAG_ACK) |

snd_wnd);

}

static inline void tcp_fast_path_on(struct tcp_sock *tp)

{

__tcp_fast_path_on(tp, tp->snd_wnd >> tp->rx_opt.snd_wscale);

}

//Checks whether pred_flags may be set and, if so, sets it

static inline void tcp_fast_path_check(struct sock *sk)

{

struct tcp_sock *tp = tcp_sk(sk);

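//cond1: the out-of-order queue is empty;

//cond2: the receive window is non-zero;

//cond3: receive memory usage is still below the sk_rcvbuf limit;

//cond4: no urgent data is pending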

if (skb_queue_empty(&tp->out_of_order_queue) &&

tp->rcv_wnd &&

atomic_read(&sk->sk_rmem_alloc) < sk->sk_rcvbuf &&

!tp->urg_data)

tcp_fast_path_on(tp);

}
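The four conditions can be modelled outside the kernel as follows. This is a minimal sketch under stated assumptions: struct rx_state and its fields are hypothetical stand-ins for the relevant parts of struct tcp_sock and struct sock, and values are in host byte order.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical, simplified stand-in for the socket state involved. */
struct rx_state {
    unsigned ooo_queue_len;  /* segments in the out-of-order queue */
    uint32_t rcv_wnd;        /* receive window currently advertised */
    int      rmem_alloc;     /* receive memory in use, in bytes */
    int      rcvbuf;         /* receive buffer limit, in bytes */
    bool     urg_pending;    /* urgent data outstanding */
    uint32_t tcp_header_len; /* expected header length, in bytes */
    uint32_t snd_wnd;        /* peer's window, in bytes */
    unsigned snd_wscale;     /* peer's window scale shift */
    uint32_t pred_flags;     /* 0 means header prediction is off */
};

/* The four conditions from tcp_fast_path_check(). */
static bool fast_path_allowed(const struct rx_state *s)
{
    return s->ooo_queue_len == 0 &&
           s->rcv_wnd != 0 &&
           s->rmem_alloc < s->rcvbuf &&
           !s->urg_pending;
}

/* Like the kernel function, this only ever turns prediction on;
 * turning it off (pred_flags = 0) is left to the callers. */
static void fast_path_check(struct rx_state *s)
{
    if (fast_path_allowed(s))
        s->pred_flags = (s->tcp_header_len << 26) | 0x00100000u |
                        (s->snd_wnd >> s->snd_wscale);
}
```

Note that the predicted window is the value expected in the 16-bit header field, so the byte count in snd_wnd is shifted right by the peer's scale factor before it is stored.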

1.2 Callers of __tcp_fast_path_on

1.2.1 Client handling of the SYN+ACK segment: tcp_rcv_synsent_state_process

__tcp_fast_path_on() is called directly while the client processes the SYN+ACK segment:

static int tcp_rcv_synsent_state_process(struct sock *sk, struct sk_buff *skb,

struct tcphdr *th, unsigned len)

{

...

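//Open question: why is header prediction enabled here only when the peer does not use window scaling? One plausible reason (an assumption, not confirmed by the source): the window field of a SYN+ACK is never scaled, so it cannot predict the scaled window field of later segments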

if (!tp->rx_opt.snd_wscale)

__tcp_fast_path_on(tp, tp->snd_wnd);

else

tp->pred_flags = 0;

...

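1.3 Callers of tcp_fast_path_on

1.3.1 Server handling of the handshake ACK in TCP_SYN_RECV: tcp_rcv_state_process

tcp_fast_path_on() is called directly on the server side, in the TCP_SYN_RECV case, once the ACK that completes the three-way handshake has been accepted: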

int tcp_rcv_state_process(struct sock *sk, struct sk_buff *skb,

const struct tcphdr *th, unsigned int len)

{

...

int acceptable = tcp_ack(sk, skb, FLAG_SLOWPATH |

FLAG_UPDATE_TS_RECENT) > 0;

switch (sk->sk_state) {

case TCP_SYN_RECV:

if (acceptable) {

...

tcp_fast_path_on(tp);

} else {

return 1;

}

break;

...

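1.4 Callers of tcp_fast_path_check

tcp_fast_path_check() is called directly in three places:

1.4.1 After urgent data has been consumed: tcp_recvmsg()

//First: once tcp_recvmsg() has moved past the urgent data, the fast path may need to be re-enabled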

int tcp_recvmsg(struct kiocb *iocb, struct sock *sk, struct msghdr *msg,

size_t len, int nonblock, int flags, int *addr_len)

{

...

if (tp->urg_data && after(tp->copied_seq, tp->urg_seq)) {

tp->urg_data = 0;

tcp_fast_path_check(sk);

}

...

}

1.4.2 Updating the send window on an incoming ACK: tcp_ack_update_window

//Second: an incoming ACK changed the send window, so pred_flags has to be recomputed

static int tcp_ack_update_window(struct sock *sk, struct sk_buff *skb, u32 ack,

u32 ack_seq)

{

...

if (tcp_may_update_window(tp, ack, ack_seq, nwin)) {

flag |= FLAG_WIN_UPDATE;

tcp_update_wl(tp, ack, ack_seq);

//The send window has changed

if (tp->snd_wnd != nwin) {

tp->snd_wnd = nwin;

/* Note, it is the only place, where

* fast path is recovered for sending TCP.

*/

tp->pred_flags = 0;

tcp_fast_path_check(sk);

}

}

...

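}

1.4.3 Updating the receive queue: tcp_data_queue

//Third: after incoming data is placed on the receive queue, the socket's memory usage has changed, so pred_flags has to be re-evaluated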

static void tcp_data_queue(struct sock *sk, struct sk_buff *skb)

{

...

tcp_fast_path_check(sk);

...

}
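The window-update case is the one where the predicted value itself goes stale, so it is worth sketching. The model below is hypothetical (struct snd_state, predict() and update_window() are illustrative names, host byte order), and the checks_ok argument stands in for the conditions tested by tcp_fast_path_check().

```c
#include <stdint.h>

/* Hypothetical, simplified send-side state. */
struct snd_state {
    uint32_t snd_wnd;        /* peer's window, in bytes */
    unsigned snd_wscale;     /* peer's window scale shift */
    uint32_t tcp_header_len; /* expected header length, in bytes */
    uint32_t pred_flags;     /* 0 means header prediction is off */
};

/* Predicted fourth header word for the current send window. */
static uint32_t predict(const struct snd_state *s)
{
    return (s->tcp_header_len << 26) | 0x00100000u |
           (s->snd_wnd >> s->snd_wscale);
}

/* Apply the window field of a non-SYN segment.
 * Returns 1 if the send window changed, 0 otherwise. */
static int update_window(struct snd_state *s, uint16_t window_field,
                         int checks_ok)
{
    uint32_t nwin = (uint32_t)window_field << s->snd_wscale;

    if (s->snd_wnd == nwin)
        return 0;

    s->snd_wnd = nwin;
    s->pred_flags = 0;            /* the old prediction is stale */
    if (checks_ok)
        s->pred_flags = predict(s);
    return 1;
}
```

After a change, the prediction either carries the new window field or stays at 0, in which case segments keep taking the slow path until some later call re-enables it.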

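1.5 Conditions for taking the fast path

A pred_flags match is only a precondition for taking the fast path; several other conditions must hold as well. The comment above tcp_rcv_established() spells out all of them: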

/*

* TCP receive function for the ESTABLISHED state.

*

* It is split into a fast path and a slow path. The fast path is

* disabled when:

* - A zero window was announced from us - zero window probing

* is only handled properly in the slow path.

* - Out of order segments arrived.

* - Urgent data is expected.

* - There is no buffer space left

* - Unexpected TCP flags/window values/header lengths are received

* (detected by checking the TCP header against pred_flags)

* - Data is sent in both directions. Fast path only supports pure senders

* or pure receivers (this means either the sequence number or the ack

* value must stay constant)

* - Unexpected TCP option.

*

* When these conditions are not satisfied it drops into a standard

* receive procedure patterned after RFC793 to handle all cases.

* The first three cases are guaranteed by proper pred_flags setting,

* the rest is checked inline. Fast processing is turned on in

* tcp_data_queue when everything is OK.

*/

int tcp_rcv_established(struct sock *sk, struct sk_buff *skb,

struct tcphdr *th, unsigned len)

{

...

}
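The entry test that these conditions boil down to can be sketched in user space as follows. The macro and function names are assumptions made for illustration, and values are in host byte order; the mask mirrors the idea of the kernel's TCP_HP_BITS, which ignores the reserved bits and the PSH flag.

```c
#include <stdbool.h>
#include <stdint.h>

#define SK_FLAG_PSH      0x00080000u
#define SK_RESERVED_BITS 0x0F000000u
/* Bits that take part in header prediction. */
#define SK_HP_BITS       (~(SK_RESERVED_BITS | SK_FLAG_PSH))

/* flag_word: fourth 32-bit word of the received TCP header.
 * seq:       starting sequence number of the segment.
 * A pred_flags of 0 can never match, because a valid header always
 * has a non-zero data offset. */
static bool takes_fast_path(uint32_t flag_word, uint32_t seq,
                            uint32_t pred_flags, uint32_t rcv_nxt)
{
    return (flag_word & SK_HP_BITS) == pred_flags && seq == rcv_nxt;
}
```

With pred_flags equal to 0x50101000, a segment whose header word is 0x50181000 (ACK plus PSH) still matches, while 0x50111000 (ACK plus FIN) or any different window value falls through to the slow path.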

2 Fast path execution

int tcp_rcv_established(struct sock *sk, struct sk_buff *skb,

struct tcphdr *th, unsigned len)

{

struct tcp_sock *tp = tcp_sk(sk);

/*

* Header prediction.

* The code loosely follows the one in the famous

* "30 instruction TCP receive" Van Jacobson mail.

*

* Van's trick is to deposit buffers into socket queue

* on a device interrupt, to call tcp_recv function

* on the receive process context and checksum and copy

* the buffer to user space. smart...

*

* Our current scheme is not silly either but we take the

* extra cost of the net_bh soft interrupt processing...

* We do checksum and copy also but from device to kernel.

*/

tp->rx_opt.saw_tstamp = 0;

/* pred_flags is 0xS?10 << 16 + snd_wnd

* if header_prediction is to be made

* 'S' will always be tp->tcp_header_len >> 2

* '?' will be 0 for the fast path, otherwise pred_flags is 0 to

* turn it off (when there are holes in the receive

* space for instance)

* PSH flag is ignored.

*/

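//cond1. The received segment matches the expected segment described by pred_flags

//cond2. The segment's starting sequence number is exactly the one expected next (rcv_nxt)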

if ((tcp_flag_word(th) & TCP_HP_BITS) == tp->pred_flags &&

TCP_SKB_CB(skb)->seq == tp->rcv_nxt) {

int tcp_header_len = tp->tcp_header_len;

/* Timestamp header prediction: tcp_header_len

* is automatically equal to th->doff*4 due to pred_flags

* match.

*/

//Use the timestamp option to decide whether to fall back to the slow path

if (tcp_header_len == sizeof(struct tcphdr) + TCPOLEN_TSTAMP_ALIGNED) {

...

}

//Handle segments that carry no payload, such as pure ACKs

if (len <= tcp_header_len) {

/* Bulk data transfer: sender */

if (len == tcp_header_len) {

/* Predicted packet is in window by definition.

* seq == rcv_nxt and rcv_wup <= rcv_nxt.

* Hence, check seq<=rcv_wup reduces to:

*/

//Timestamp option handling

if (tcp_header_len ==

(sizeof(struct tcphdr) + TCPOLEN_TSTAMP_ALIGNED) &&

tp->rcv_nxt == tp->rcv_wup)

tcp_store_ts_recent(tp);

/* We know that such packets are checksummed

* on entry.

*/

//ACK processing

tcp_ack(sk, skb, 0);

//Free the skb

__kfree_skb(skb);

//The ACK just processed may have changed the send window, so try to send pending data

tcp_data_snd_check(sk);

return 0;

} else { /* Header too small */

//The segment is shorter than its TCP header: invalid, discard it

TCP_INC_STATS_BH(TCP_MIB_INERRS);

goto discard;

}

} else {

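//This branch handles segments with payload and, whenever possible, copies the payload straight to user space

//(possible when conditions 1, 2, 3 and 4 below all hold)

//eaten records whether the payload has already been copied to user space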

int eaten = 0;

//DMA-related, can be ignored

int copied_early = 0;

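//1. The data just received is exactly what user space is waiting to copy next

//2. The receive buffer supplied by user space is large enough to hold this payload

//If both hold, try to copy the payload directly to the user program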

if (tp->copied_seq == tp->rcv_nxt &&

len - tcp_header_len <= tp->ucopy.len) {

#ifdef CONFIG_NET_DMA

if (tcp_dma_try_early_copy(sk, skb, tcp_header_len)) {

copied_early = 1;

eaten = 1;

}

#endif

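//3. We are running in the context of the process that is reading from this socket

//4. The socket is currently locked by the user process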

if (tp->ucopy.task == current &&

sock_owned_by_user(sk) && !copied_early) {

__set_current_state(TASK_RUNNING);

//Copy the payload into the buffer supplied by the user program; on success, set eaten

if (!tcp_copy_to_iovec(sk, skb, tcp_header_len))

eaten = 1;

}

//The payload is already in user space, so update the timestamp, RTT and rcv_nxt

if (eaten) {

/* Predicted packet is in window by definition.

* seq == rcv_nxt and rcv_wup <= rcv_nxt.

* Hence, check seq<=rcv_wup reduces to:

*/

//Timestamp option handling

if (tcp_header_len ==

(sizeof(struct tcphdr) +

TCPOLEN_TSTAMP_ALIGNED) &&

tp->rcv_nxt == tp->rcv_wup)

tcp_store_ts_recent(tp);

//Measure the RTT from the timestamp

tcp_rcv_rtt_measure_ts(sk, skb);

//Update rcv_nxt

__skb_pull(skb, tcp_header_len);

tp->rcv_nxt = TCP_SKB_CB(skb)->end_seq;

NET_INC_STATS_BH(LINUX_MIB_TCPHPHITSTOUSER);

}

//DMA-related

if (copied_early)

tcp_cleanup_rbuf(sk, skb->len);

}

//The payload was not copied to user space, so the skb has to go onto the receive queue

if (!eaten) {

//Verify the checksum

if (tcp_checksum_complete_user(sk, skb))

goto csum_error;

/* Predicted packet is in window by definition.

* seq == rcv_nxt and rcv_wup <= rcv_nxt.

* Hence, check seq<=rcv_wup reduces to:

*/

//Update the timestamp

if (tcp_header_len ==

(sizeof(struct tcphdr) + TCPOLEN_TSTAMP_ALIGNED) &&

tp->rcv_nxt == tp->rcv_wup)

tcp_store_ts_recent(tp);

//RTT measurement

tcp_rcv_rtt_measure_ts(sk, skb);

//If the skb's true size exceeds the memory already forward-allocated to the socket, fall back to the slow path

if ((int)skb->truesize > sk->sk_forward_alloc)

goto step5;

NET_INC_STATS_BH(LINUX_MIB_TCPHPHITS);

//Strip the TCP header and append the skb to the socket's receive queue for the user process to read

/* Bulk data transfer: receiver */

__skb_pull(skb, tcp_header_len);

__skb_queue_tail(&sk->sk_receive_queue, skb);

//Set the skb's owner and charge its memory to the socket

skb_set_owner_r(skb, sk);

//Update the sequence number expected next

tp->rcv_nxt = TCP_SKB_CB(skb)->end_seq;

}

//New data has arrived: run the related bookkeeping, such as updating the delayed-ACK state

tcp_event_data_recv(sk, skb);

//The segment acknowledges new data: call tcp_ack() to process it, then try to send data

if (TCP_SKB_CB(skb)->ack_seq != tp->snd_una) {

/* Well, only one small jumplet in fast path... */

tcp_ack(sk, skb, FLAG_DATA);

tcp_data_snd_check(sk);

if (!inet_csk_ack_scheduled(sk))

goto no_ack;

}

//Delayed-ACK handling

__tcp_ack_snd_check(sk, 0);

no_ack:

#ifdef CONFIG_NET_DMA

if (copied_early)

__skb_queue_tail(&sk->sk_async_wait_queue, skb);

else

#endif

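//If the payload was already copied to user space, the skb is no longer needed, so free it;

//otherwise invoke the callback to tell the application that data is ready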

if (eaten)

__kfree_skb(skb);

else

sk->sk_data_ready(sk, 0);

return 0;

}

}

slow_path:

//Slow-path processing

...

}
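The branching inside the fast path can be summarised with a small decision function. This is a simplified sketch, not the kernel logic: the enum, struct reader_state and classify() are hypothetical names, the DMA path is left out, and two fallbacks are ignored (a failed copy to user space, which ends up queueing the skb, and the truesize check, which jumps to the slow path).

```c
#include <stdbool.h>
#include <stdint.h>

enum fast_action {
    FAST_PURE_ACK,     /* no payload: process the ACK, free the skb */
    FAST_DISCARD,      /* shorter than its own header: invalid */
    FAST_COPY_TO_USER, /* payload can go straight to the reader */
    FAST_QUEUE         /* payload goes onto the receive queue */
};

/* Hypothetical view of the state consulted for the direct copy. */
struct reader_state {
    uint32_t copied_seq;        /* next sequence number the reader wants */
    uint32_t rcv_nxt;           /* next sequence number expected */
    uint32_t ucopy_len;         /* space left in the user buffer */
    bool     reader_is_current; /* models tp->ucopy.task == current */
    bool     owned_by_user;     /* models sock_owned_by_user(sk) */
};

static enum fast_action classify(uint32_t len, uint32_t tcp_header_len,
                                 const struct reader_state *r)
{
    if (len == tcp_header_len)
        return FAST_PURE_ACK;
    if (len < tcp_header_len)
        return FAST_DISCARD;

    uint32_t payload = len - tcp_header_len;

    if (r->copied_seq == r->rcv_nxt && payload <= r->ucopy_len &&
        r->reader_is_current && r->owned_by_user)
        return FAST_COPY_TO_USER;

    return FAST_QUEUE;
}
```

Read this way, the fast path has three productive outcomes (pure ACK, direct copy, queue) and one error outcome, and everything else is left to the slow path.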