
1. Installation

- dnf install rhel-system-roles.noarch -y: install the system roles on the control node

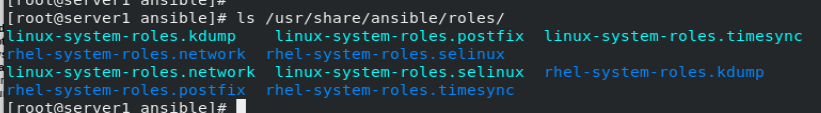

- ls /usr/share/ansible/roles/: the directory where the roles are installed

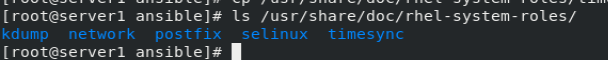

/usr/share/doc/rhel-system-roles/: the directory with the example playbooks for each role

2. Time synchronization

- cp /usr/share/doc/rhel-system-roles/timesync/example-timesync-playbook.yml /mnt/ansible/: copy the example timesync playbook

- vim ansible.cfg: set the roles search path (in the [defaults] section)

roles_path = /usr/share/ansible/roles/

vim example-timesync-playbook.yml

    ---
    - hosts: webserver
      vars:
        timesync_ntp_servers:
          - hostname: 172.25.17.250
            iburst: yes
      roles:
        - rhel-system-roles.timesync

- Run the playbook: ansible-playbook example-timesync-playbook.yml

Check on the target host:

vim /etc/chrony.conf

chronyc sources -v
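The two checks above can also be run from the control node rather than by logging in to each host. The following is a minimal sketch, assuming the same webserver group as the playbook above; the file name verify-timesync.yml is hypothetical and not part of the role.

```yaml
---
# verify-timesync.yml (hypothetical file name)
# Run with: ansible-playbook verify-timesync.yml
- hosts: webserver
  tasks:
    - name: Show the server/pool lines written to chrony.conf
      # grep exits non-zero when nothing matches, so this task fails
      # if the role did not write any NTP source
      command: grep -E '^(server|pool)' /etc/chrony.conf
      register: chrony_conf
      changed_when: false

    - name: List the sources chronyd is currently using
      command: chronyc sources
      register: chrony_sources
      changed_when: false

    - name: Print both results
      debug:
        msg:
          - "{{ chrony_conf.stdout_lines }}"
          - "{{ chrony_sources.stdout_lines }}"
```

If the role worked, the first list should contain a line for 172.25.17.250 with the iburst option.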

3. Changing the SELinux mode

cp /usr/share/doc/rhel-system-roles/selinux/example-selinux-playbook.yml /mnt/ansible/selinux-playbook.yml

3.1 SELinux changes that do not require a reboot

- Switching between enforcing and permissive

vim selinux-playbook.yml

    ---
    - hosts: server2
      vars:
        selinux_policy: targeted
        selinux_state: permissive
      roles:
        - rhel-system-roles.selinux

Test on the target host:
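One way to run this test from the control node is a short follow-up play that calls getenforce and compares the result with the requested mode. This is a sketch under the assumption that the role was applied to server2 with selinux_state: permissive as above; the file name is hypothetical.

```yaml
---
# verify-selinux-mode.yml (hypothetical file name)
- hosts: server2
  tasks:
    - name: Read the current SELinux mode
      command: getenforce
      register: selinux_mode
      changed_when: false

    - name: Fail unless the running mode is Permissive
      assert:
        that:
          # getenforce prints Enforcing, Permissive, or Disabled
          - selinux_mode.stdout == 'Permissive'
```

Running getenforce directly on the target host gives the same information.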

3.2 SELinux changes that require a reboot

- Switching to or from disabled

vim selinux-playbook.yml

    ---
    - hosts: test
      vars:
        selinux_policy: targeted
        selinux_state: disabled
      tasks:
        - name: execute the role and catch errors
          block:
            - include_role:
                name: rhel-system-roles.selinux
          rescue:
            # Fail if failed for a different reason than selinux_reboot_required.
            - name: handle errors
              fail:
                msg: "role failed"
              when: not selinux_reboot_required

            - name: restart managed host
              shell: sleep 2 && shutdown -r now "Ansible updates triggered"
              async: 1
              poll: 0
              ignore_errors: true

            - name: wait for managed host to come back
              wait_for_connection:
                delay: 10
                timeout: 300

            - name: reapply the role
              include_role:
                name: rhel-system-roles.selinux

Test on the target host:
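After the reboot, the result can be checked from the control node through the facts Ansible gathers. This is a minimal sketch, assuming the SELinux Python bindings are present on the managed host so that fact gathering can report a status; the file name is hypothetical.

```yaml
---
# verify-selinux-disabled.yml (hypothetical file name)
- hosts: test
  tasks:
    - name: Show what fact gathering reports for SELinux
      debug:
        var: ansible_facts['selinux']

    - name: Fail unless SELinux is reported as disabled
      assert:
        that:
          - ansible_facts['selinux']['status'] == 'disabled'
```

On the target host itself, getenforce should print Disabled.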

4. Changing booleans, file contexts, and ports (in enforcing mode)

vim selinux-playbook.yml

    ---
    - hosts: test
      vars:
        selinux_policy: targeted
        selinux_state: enforcing
        selinux_booleans:
          - { name: 'samba_enable_home_dirs', state: 'on' }
        selinux_fcontexts:
          - { target: '/samba(/.*)?', setype: 'samba_share_t', ftype: 'd' }
        selinux_ports:
          - { ports: '82', proto: 'tcp', setype: 'http_port_t', state: 'present' }
      tasks:
        - name: Creates directory
          file:
            path: /samba
            state: directory

        - name: execute the role and catch errors
          block:
            - include_role:
                name: rhel-system-roles.selinux
          rescue:
            # Fail if failed for a different reason than selinux_reboot_required.
            - name: handle errors
              fail:
                msg: "role failed"
              when: not selinux_reboot_required

            - name: restart managed host
              shell: sleep 2 && shutdown -r now "Ansible updates triggered"
              async: 1
              poll: 0
              ignore_errors: true

            - name: wait for managed host to come back
              wait_for_connection:
                delay: 10
                timeout: 300

            - name: reapply the role
              include_role:
                name: rhel-system-roles.selinux

Check on the target host:

- getsebool -a | grep samba: check the boolean value

- ll -Zd /samba/: check the security context

- semanage port -l | grep http: confirm that port 82 was added
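The same three checks can be collected in one play and run from the control node. This is a sketch only; the file name is hypothetical, and it asserts only the boolean because that output format is fixed, while the context and port results are printed for inspection.

```yaml
---
# verify-selinux-settings.yml (hypothetical file name)
- hosts: test
  tasks:
    - name: Read the Samba home directory boolean
      command: getsebool samba_enable_home_dirs
      register: bool_out
      changed_when: false

    - name: Read the security context of /samba
      command: ls -Zd /samba
      register: ctx_out
      changed_when: false

    - name: Read the ports labelled http_port_t
      shell: semanage port -l | grep '^http_port_t'
      register: port_out
      changed_when: false

    - name: Fail unless the boolean is on
      assert:
        that:
          # getsebool prints "samba_enable_home_dirs --> on"
          - "'--> on' in bool_out.stdout"

    - name: Print the context and port lines
      debug:
        msg:
          - "{{ ctx_out.stdout }}"
          - "{{ port_out.stdout_lines }}"
```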

5. Storage management

Add a disk to the target host and confirm it with fdisk -l /dev/vdb

The playbook below is adapted from /usr/share/doc/rhel-system-roles/storage/README.md

vim storage.yml

    ---
    - hosts: test
      roles:
        - name: rhel-system-roles.storage
          storage_pools:
            - name: app
              disks:
                - vdb  # disk name; depends on the disk type (the disk added above is vdb)
              volumes:
                - name: shared
                  size: "4 GiB"
                  mount_point: "/mnt/app/shared"
                  fs_type: xfs
                  state: present
                  #state: absent  # removes the volume
                - name: users
                  size: "4 GiB"
                  mount_point: "/mnt/app/users"
                  fs_type: ext4
                  state: present

Check on the target host:

cat /etc/fstab

df

lvs

pvs

vgs

To remove the volumes from the target host:

- Change present to absent in the playbook and run it again

vgremove app

pvremove /dev/vdb
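For reference, the removal variant of storage.yml might look like the sketch below. It assumes the disk is vdb, as in the fdisk and pvremove commands above, and only flips the state of the two volumes; the volume group and physical volume are still removed by hand with the commands above.

```yaml
---
# storage.yml with both volumes marked for removal
- hosts: test
  roles:
    - name: rhel-system-roles.storage
      storage_pools:
        - name: app
          disks:
            - vdb  # assumed disk name, matching pvremove /dev/vdb
          volumes:
            - name: shared
              size: "4 GiB"
              mount_point: "/mnt/app/shared"
              fs_type: xfs
              state: absent  # was: present
            - name: users
              size: "4 GiB"
              mount_point: "/mnt/app/users"
              fs_type: ext4
              state: absent  # was: present
```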

6. Creating an LVM volume with a playbook

vim lvs.yml

    ---
    - hosts: test
      tasks:
        - name: create vg
          lvg:
            vg: demovg
            pvs: /dev/vdb

        - name: create lv
          lvol:
            vg: demovg
            lv: "{{ item }}"
            size: 100%FREE
          loop:
            - demolv
          when: item not in ansible_lvm['lvs']

        - name: create xfs filesystem
          filesystem:
            fstype: xfs
            dev: /dev/demovg/demolv
            force: yes

        - name: mount lv
          mount:
            path: /mnt/app
            src: /dev/demovg/demolv
            fstype: xfs
            opts: noatime
            state: mounted  # the mount module requires state

Check on the target host:

lvs

vgs

pvs

cat /etc/fstab

Removal:

lvremove /dev/demovg/demolv

vgremove demovg

pvremove /dev/vdb
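The removal steps can also be written as a play that mirrors lvs.yml in reverse order. This is a minimal sketch with a hypothetical file name; it unmounts the volume first, which the manual commands above assume has already been done. The final pvremove task is a plain command and is not idempotent, so it fails if run a second time.

```yaml
---
# remove-lvs.yml (hypothetical file name)
- hosts: test
  tasks:
    - name: unmount /mnt/app and drop its fstab entry
      mount:
        path: /mnt/app
        state: absent

    - name: remove the logical volume
      lvol:
        vg: demovg
        lv: demolv
        state: absent
        force: yes  # lvol refuses to remove a volume without force

    - name: remove the volume group
      lvg:
        vg: demovg
        state: absent

    - name: wipe the LVM label from the disk
      command: pvremove /dev/vdb
```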

7. Partitioning based on the detected partition layout

vim lvs.yml

    ---
    - hosts: test
      vars_files:
        - partlist.yml
      tasks:
        - name: Create a new primary
          parted:
            device: /dev/vdb
            number: "{{ item.num }}"
            state: present
            part_start: "{{ item.start }}"
            part_end: "{{ item.end }}"
          loop: "{{ partlist }}"
          when: item.name not in ansible_devices['vdb']['partitions']

        - name: create xfs filesystem
          filesystem:
            fstype: xfs
            dev: "/dev/{{ item.name }}"
          loop: "{{ partlist }}"

        - name: create mount dir
          file:
            path: "/mnt/{{ item.dir }}"
            state: directory
          loop: "{{ partlist }}"

        - name: mount partitions
          mount:
            path: "/mnt/{{ item.dir }}"
            src: "/dev/{{ item.name }}"
            fstype: xfs
            opts: noatime
            state: mounted
          loop: "{{ partlist }}"

vim partlist.yml

    ---
    partlist:
      - name: vdb1
        num: 1
        start: 0
        end: 1GiB
        dir: dir1
      - name: vdb2
        num: 2
        start: 1GiB
        end: 2GiB
        dir: dir2
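The when condition in the partitioning task compares each item.name with the partition names that fact gathering found on vdb. To see what that comparison is made against, a short play such as the following sketch can be run first; the file name is hypothetical, and it assumes the disk appears as vdb in the gathered facts.

```yaml
---
# show-partitions.yml (hypothetical file name)
- hosts: test
  tasks:
    - name: List the partitions that facts report on vdb
      debug:
        # partitions is a dictionary keyed by partition name (vdb1, vdb2, ...)
        msg: "{{ ansible_devices['vdb']['partitions'].keys() | list }}"
```

An empty list means no partitions exist yet, so every entry in partlist would be created.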