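1. This code uses only basic web-scraping techniques and no browser-automation libraries.

Because it is a simple crawl, it does not handle many edge cases.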

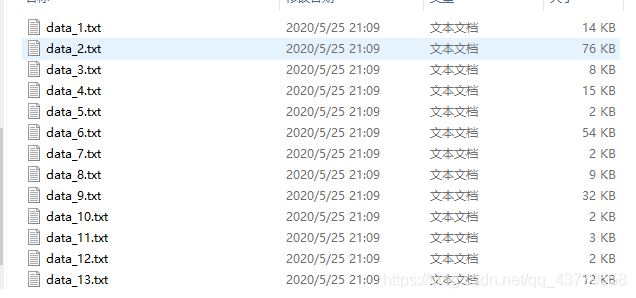

2. It crawls People's Daily (人民日报) reports on the COVID-19 outbreak and saves only the text.

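3. Anti-scraping measures are not handled, so the script may raise an error when it runs; running it a few more times usually gets through. Also, because of anti-scraping and because the HTML tags that hold the article body were not analyzed for every article, some saved files contain little text or jumbled content.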
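Rather than rerunning the whole script by hand after a failure, each request could be retried a few times. The following is a minimal sketch under that assumption, not part of the original script: the function name `fetch_with_retry` and the parameters `retries`, `delay`, and `opener` are hypothetical and introduced only for illustration.

```python
import time
from urllib import request


def fetch_with_retry(url, headers, retries=3, delay=1.0, opener=request.urlopen):
    """Return the decoded page body, retrying when the request fails.

    `retries`, `delay`, and `opener` are illustrative parameters;
    `opener` exists so the function can be exercised without a network.
    """
    if retries < 1:
        raise ValueError("retries must be at least 1")
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            req = request.Request(url=url, headers=headers)
            resp = opener(req)
            return resp.read().decode("utf-8", "ignore")
        except OSError as err:
            # urllib.error.URLError and HTTPError are subclasses of OSError,
            # as are timeouts and connection resets.
            last_error = err
            if attempt < retries:
                time.sleep(delay)
    raise last_error
```

With a helper like this, a blocked or dropped request is retried after a short pause, and the error is raised only once every attempt has failed.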

from urllib import request
from urllib import parse
import urllib
import re
import time

MAX_NUM = 30  # number of articles to save
package = 1  # current search-result page number
save_path = r"C:\Users\pc\Desktop\python学习\课堂作业\NLP作业\data"
# Punctuation marks that are written out as a space
punctuation = [',', '“', "。", "?", "!", ":", ";", "‘", "’", "”"]
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.61 Safari/537.36 Edg/83.0.478.37'}
url = "https://news.sogou.com/news?mode=1&sort=0&fixrank=1&"
params = {
    'query': '新冠肺炎报道人民日报'}

qs = parse.urlencode(params)
url = url + qs + "&shid=hb1" + "&page=" + str(package)
print("Fetching page: ", package, "\n", url)
package += 1
req = urllib.request.Request(url=url, headers=headers)
resp = request.urlopen(req)
info = resp.read()
info = info.decode('utf-8', "ignore")
# HTTP status code
print(resp.getcode())
# Collect the result links that end in "html"
urls = re.findall('<a href="http.*?html"', info, re.I)

num = 0  # number of articles saved so far
while num < MAX_NUM:
    for u in urls:
        if num >= MAX_NUM:
            break
        # Strip the leading '<a href="' and the trailing quote
        u = u.replace("<a href=\"", "")[:-1]
        print(u)
        req = urllib.request.Request(url=u, headers=headers)
        resp = request.urlopen(req)
        if resp.getcode() == 200:
            info = resp.read()
            info = info.decode('utf-8', "ignore")
            # Skip short pages, which are unlikely to hold a full article
            if len(info) > 30000:
                word = re.findall('<p.*?</p>', info, re.S)
                for i in re.findall('<div.*?</div>', info, re.S):
                    word.append(i)
                if len(word) > 5:
                    num += 1
                    with open(save_path + r"\data_" + str(num) + '.txt', 'w', encoding="utf-8") as f:
                        for w in word:
                            for i in w:
                                # Keep Chinese characters; write punctuation as a space
                                if '\u4e00' <= i <= '\u9fff':
                                    f.write(str(i))
                                elif i in punctuation:
                                    f.write(" ")
                    print("over" + str(num))
        time.sleep(1)
    # Fetch the next page of search results
    url = "https://news.sogou.com/news?mode=1&sort=0&fixrank=1&"
    params = {
        'query': '新冠肺炎报道人民日报'}
    qs = parse.urlencode(params)
    url = url + qs + "&shid=hb1" + "&page=" + str(package)
    print("Fetching page: ", package, "\n", url)
    package += 1
    req = urllib.request.Request(url=url, headers=headers)
    resp = request.urlopen(req)
    info = resp.read()
    info = info.decode('utf-8', "ignore")
    # HTTP status code
    print(resp.getcode())
    urls = re.findall('<a href="http.*?html"', info, re.I)
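
The text-filtering step in the script can also be pulled out into a small pure function, which makes it easy to try on a snippet of HTML without any network access. This is a sketch, not the author's code: the names `extract_chinese_text` and `PUNCTUATION` are hypothetical, and unlike the script it reads only `<p>` blocks, on the assumption that this avoids writing the same text twice when a `<p>` sits inside a matched `<div>`.

```python
import re

# Punctuation marks that are replaced by a space (assumed set, mirroring the script)
PUNCTUATION = set(",“”。?!:;‘’")


def extract_chinese_text(html, punctuation=PUNCTUATION):
    """Keep CJK characters found inside <p> blocks; punctuation becomes a space."""
    paragraphs = re.findall(r"<p.*?</p>", html, re.S)
    out = []
    for block in paragraphs:
        for ch in block:
            if "\u4e00" <= ch <= "\u9fff":
                out.append(ch)
            elif ch in punctuation:
                out.append(" ")
    return "".join(out)


if __name__ == "__main__":
    sample = '<div><p class="a">新冠肺炎,报道。</p><span>忽略</span></div>'
    print(extract_chinese_text(sample))
```

Text outside `<p>` tags, such as the `<span>` in the sample, is dropped, so pages that keep their article body in other tags would still need per-site handling.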