- Preface: ResNet

- Contents

0 More Details: HomeLink

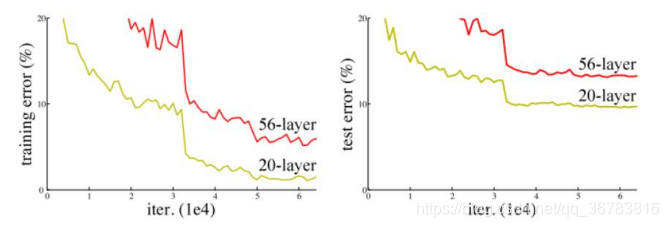

1 Why ResNet

The deeper the network,

The more parameters,

The worse the results we get

WEIRD!!!

WHY???

Too deep: accuracy saturates

Gradients vanish

How???

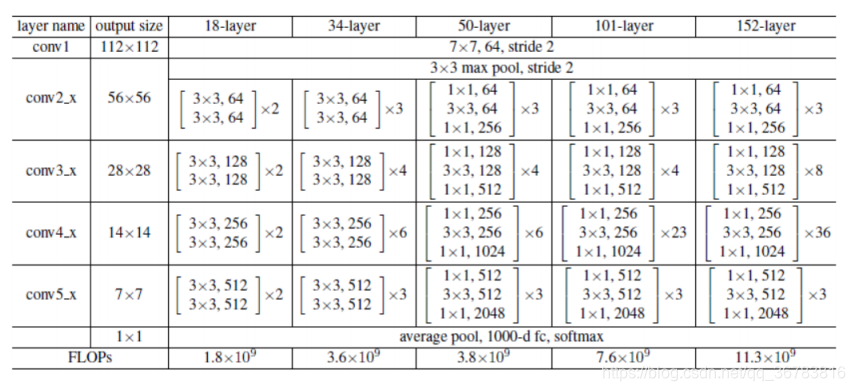

1.1 Structure: 2Qs

Structure

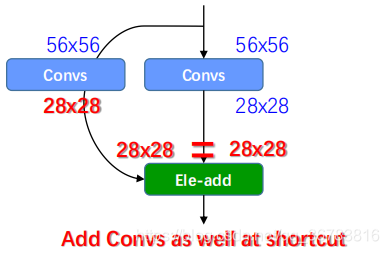

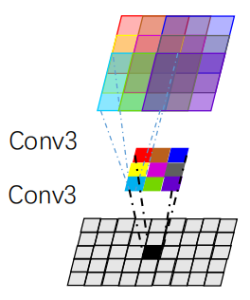

We can use conv/pool to reduce the size,

What about the shortcut?

How can we add two parts that do not have the same resolution?

- Get a rich set of primary features


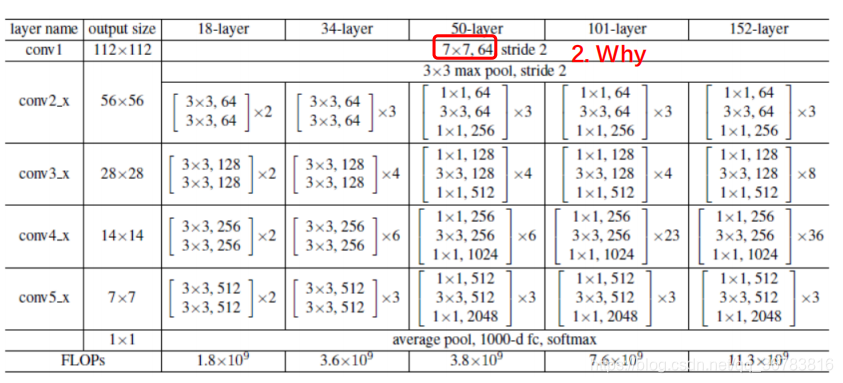

- The input layer has few channels, so a big kernel does not necessarily mean a large number of parameters

- Computational reason:

ResNet: 7 * 7 * 3 * 64 * 112 * 112 ≈ 120M

VGG: 3 * 3 * 3 * 64 * 224 * 224 +
3 * 3 * 64 * 64 * 224 * 224 +
3 * 3 * 64 * 128 * 112 * 112 +
3 * 3 * 128 * 128 * 56 * 56
≈ 3.3G ≫ 2 * 120M
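The two points above (few input channels keep a big kernel cheap, and the single 7 * 7 stem costs far less than the stacked 3 * 3 layers) can be checked with a few lines of arithmetic. This is a minimal sketch: the helper names `conv_params` and `conv_mults` are made up for illustration, biases are ignored, and the VGG terms are copied from the slide as written.

```python
def conv_params(kernel, c_in, c_out):
    """Weights in a square conv layer (bias ignored)."""
    return kernel * kernel * c_in * c_out


def conv_mults(kernel, c_in, c_out, out_h, out_w):
    """Multiplications: every weight is used once per output position."""
    return conv_params(kernel, c_in, c_out) * out_h * out_w


# Parameter count: a 7 * 7 kernel on 3 input channels vs. a 3 * 3 kernel
# between two 64-channel feature maps.
stem_params = conv_params(7, 3, 64)
mid_params = conv_params(3, 64, 64)

# Computation: the ResNet stem vs. the four VGG terms listed on the slide.
resnet_stem = conv_mults(7, 3, 64, 112, 112)
vgg_terms = [
    conv_mults(3, 3, 64, 224, 224),
    conv_mults(3, 64, 64, 224, 224),
    conv_mults(3, 64, 128, 112, 112),
    conv_mults(3, 128, 128, 56, 56),
]
vgg_total = sum(vgg_terms)

print(f"params: 7*7 stem = {stem_params}, 3*3 64->64 = {mid_params}")
print(f"mults:  ResNet stem = {resnet_stem:,}, VGG terms = {vgg_total:,}")
```

Under these assumptions the 7 * 7 stem has fewer weights than one 3 * 3 layer between 64-channel maps, and the VGG sum is well over twice the stem's cost.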

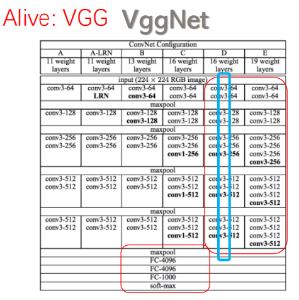

1.2 VggNet & Small kernel

Are small kernels always better?

NO

They sacrifice more of the real detail in the image

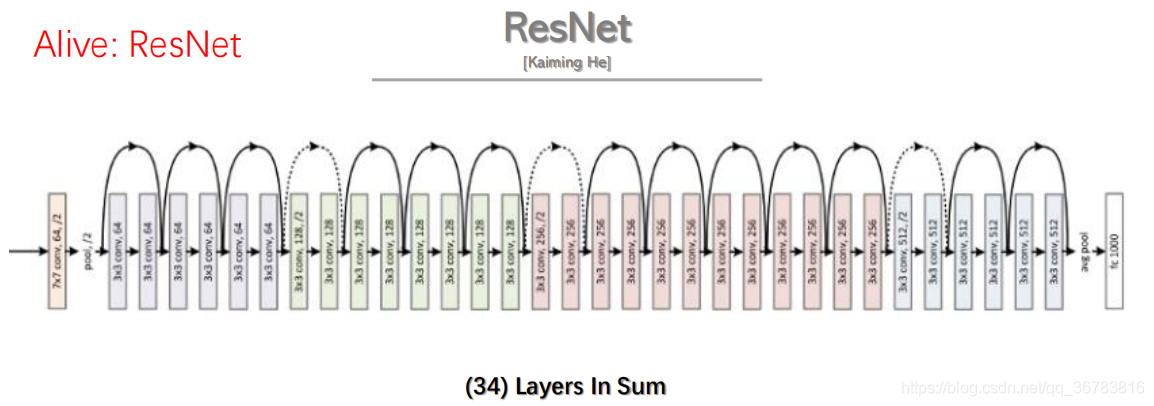

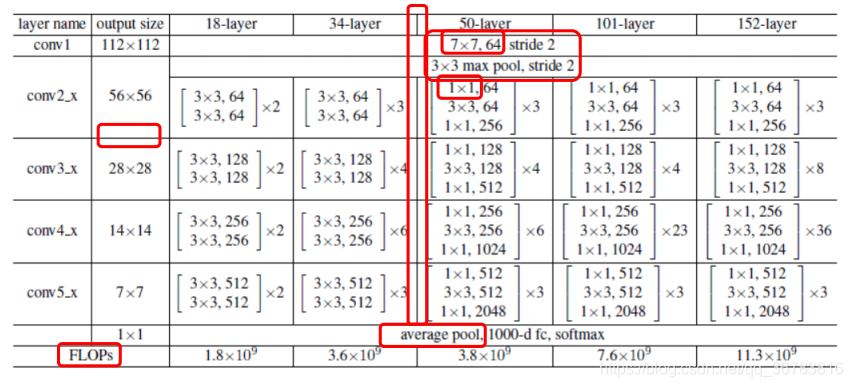

1.3 Structure: Revisit

50-layer: 49 conv layers + 1 fully connected layer

conv1: 7 * 7

Networks shallower than 50 layers use no 1 * 1 convs in their residual blocks

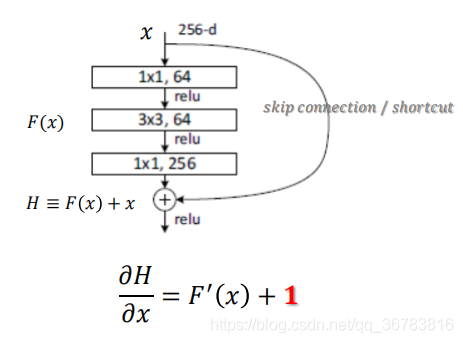

50-layer, number of channels within a block: few, few, many…
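A rough parameter count shows why the "few, few, many" channel pattern pays off. The sketch below assumes a block whose input and output have 256 channels with a 64-channel middle (the widths of the first bottleneck stage in ResNet-50) and compares it with two plain 3 * 3 convs at full width. The helper name `conv_params` is illustrative, and biases and batch norm are ignored.

```python
def conv_params(kernel, c_in, c_out):
    """Weights in a square conv layer (bias ignored)."""
    return kernel * kernel * c_in * c_out


# Bottleneck: 1*1 reduces channels, 3*3 works on few channels, 1*1 expands.
bottleneck = (
    conv_params(1, 256, 64)
    + conv_params(3, 64, 64)
    + conv_params(1, 64, 256)
)

# Alternative at the same width: two 3*3 convs on 256 channels.
two_wide_3x3 = 2 * conv_params(3, 256, 256)

print(f"bottleneck block: {bottleneck:,} weights")
print(f"two 3*3 at 256:   {two_wide_3x3:,} weights")
```

With these widths the bottleneck needs roughly 17 times fewer weights, which is what makes the deeper variants affordable.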

How do we add the left and right branches? Add a conv to the left branch so the shapes match…
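The shape problem can be traced with the standard conv output-size formula, `(size + 2 * padding - kernel) // stride + 1`. The sketch below assumes a downsampling block that takes a 64-channel 56 * 56 map to 128 channels, and a 1 * 1 stride-2 conv on the shortcut, which is one common choice. The function name `conv_out` and the `(channels, height, width)` tuples are illustrative only.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of a conv layer along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


in_channels, in_size = 64, 56

# Main branch: 3*3 stride-2 conv (64 -> 128), then 3*3 stride-1 conv.
main_size = conv_out(conv_out(in_size, 3, 2, 1), 3, 1, 1)
main_shape = (128, main_size, main_size)

# Shortcut left as identity: shape is unchanged, so the add would fail.
identity_shape = (in_channels, in_size, in_size)

# Shortcut with a 1*1 stride-2 conv (64 -> 128): shapes now agree.
proj_size = conv_out(in_size, 1, 2, 0)
projected_shape = (128, proj_size, proj_size)

print("main branch:       ", main_shape)
print("identity shortcut: ", identity_shape)
print("projected shortcut:", projected_shape)
```

The 1 * 1 conv changes the channel count and its stride halves the resolution, so the two branches can be added elementwise.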

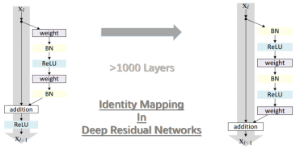

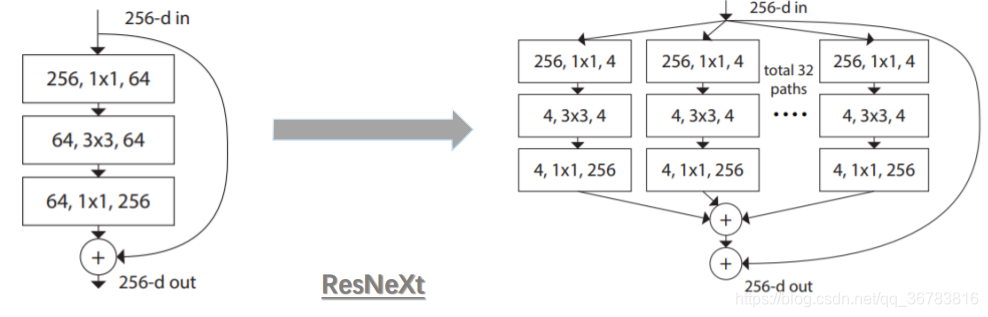

2 Structure: Advanced?

deeper

wider

3 Why better?

1 Shortcuts mitigate vanishing gradients

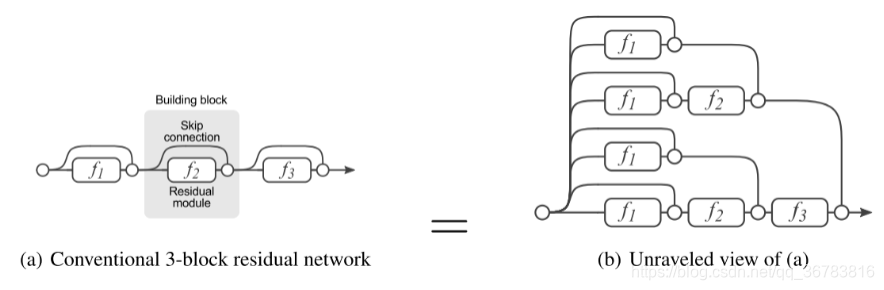
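A scalar toy example gives the intuition. If each layer computes `y = f(x)` with a small local derivative, the chain rule multiplies those small numbers together. With a shortcut, `y = x + f(x)`, each factor becomes `1 + f'(x)`, so the gradient always has a direct path. The sketch assumes every layer has the same local derivative of 0.1. Real networks have Jacobian matrices rather than scalars, so this is an illustration, not a proof.

```python
def chain_gradient(local_grad, depth, shortcut):
    """Gradient through `depth` stacked scalar layers via the chain rule."""
    grad = 1.0
    for _ in range(depth):
        # Plain layer: d/dx f(x) = f'(x)
        # Residual layer: d/dx (x + f(x)) = 1 + f'(x)
        grad *= (1.0 + local_grad) if shortcut else local_grad
    return grad


plain = chain_gradient(local_grad=0.1, depth=20, shortcut=False)
residual = chain_gradient(local_grad=0.1, depth=20, shortcut=True)

print(f"plain stack:    {plain:.3e}")
print(f"residual stack: {residual:.3e}")
```

The plain product collapses towards zero, while the residual product stays at or above 1 in this toy setting.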

2 Can be seen as an ensemble of models
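One way to read the ensemble view: at each residual block the signal can either pass through the block or skip it via the shortcut, so n blocks give 2^n possible paths of different lengths. The sketch below simply enumerates those choices for a hypothetical 3-block network. The function name `enumerate_paths` is illustrative, and the code counts paths only; it does not model how much each path contributes.

```python
from collections import Counter
from itertools import product


def enumerate_paths(num_blocks):
    """All skip (0) / pass-through (1) choices across the residual blocks."""
    return list(product((0, 1), repeat=num_blocks))


paths = enumerate_paths(3)

# Group paths by how many blocks they actually pass through.
paths_by_length = dict(sorted(Counter(sum(p) for p in paths).items()))

print(f"{len(paths)} paths through 3 blocks")
print("paths by number of blocks traversed:", paths_by_length)
```

Most paths are shorter than the full depth, which is why the network can be loosely compared to a collection of shallower models sharing weights.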