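Set the hostname on each node

Run the matching command on its own node: server1 becomes master, server2 becomes slave1, and server3 becomes slave2. The hostname command only lasts until the next reboot; on systemd-based systems, hostnamectl set-hostname makes the change persistent.

[root@server1 ~]$ hostname master

[root@server2 ~]$ hostname slave1

[root@server3 ~]$ hostname slave2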

Edit the hosts file (on all three nodes, so that every node can resolve the others by name)

[root@server1 ~]$ vim /etc/hosts

192.168.66.128 master

192.168.66.129 slave1

192.168.66.130 slave2

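Changes to /etc/hosts take effect as soon as the file is saved, so nothing needs to be reloaded (/etc/hosts is not a shell script and should not be sourced). Check that the names resolve:

[root@server1 ~]$ ping -c 1 slave1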
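
If the same three entries have to be added on every node, a small helper can keep the edit idempotent. The following is only a sketch: add_host_entry is a hypothetical helper name, not a standard tool, and the demo writes to a scratch file rather than /etc/hosts.

```shell
# add_host_entry FILE IP NAME (hypothetical helper)
# Append "IP NAME" to FILE unless NAME is already mapped on a non-comment line.
add_host_entry() {
    file=$1 ip=$2 name=$3
    if awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
        return 0    # already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demo against a scratch file; replace it with /etc/hosts to apply for real.
HOSTS_FILE=$(mktemp)
add_host_entry "$HOSTS_FILE" 192.168.66.128 master
add_host_entry "$HOSTS_FILE" 192.168.66.129 slave1
add_host_entry "$HOSTS_FILE" 192.168.66.130 slave2
cat "$HOSTS_FILE"
```

Running the helper a second time with the same arguments leaves the file unchanged, which makes it safe to repeat on nodes that were partly configured already.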

Configure passwordless SSH login from the Master to all Slaves

Generate a key pair on the Master node

[root@server1 ~]$ ssh-keygen -t rsa -P ''

Append id_rsa.pub to the authorized keys

[root@server1 ~]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

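Edit the SSH daemon configuration file "/etc/ssh/sshd_config"

[root@server1 ~]$ vim /etc/ssh/sshd_config

# Enable RSA authentication (SSH protocol 1 only; deprecated since OpenSSH 7.4)
RSAAuthentication yes

# Enable public-key authentication
PubkeyAuthentication yes

Restart the SSH service (the unit is named sshd on RHEL/CentOS and ssh on Debian/Ubuntu)

[root@server1 ~]$ systemctl restart sshd

Verify that passwordless login to the local machine works

[root@server1 ~]$ ssh master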

Copy the public key to all Slave machines

[root@server1 ~]$ scp -r /root/.ssh/id_rsa.pub root@slave1:/root/

[root@server1 ~]$ scp -r /root/.ssh/id_rsa.pub root@slave2:/root/

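Next, configure the Slave nodes. The following steps are performed on server2 and server3

Create the ".ssh" directory under "/root/"

[root@server2 ~]$ mkdir -p /root/.ssh && chmod 700 /root/.ssh

Append server1's public key to the "authorized_keys" file on server2 and server3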

[root@server2 ~]$ cat /root/id_rsa.pub >> /root/.ssh/authorized_keys
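
With its default StrictModes setting, sshd ignores authorized_keys when the file or the .ssh directory is writable by other users, and appending the same key twice only clutters the file. The sketch below wraps the append step with both safeguards. authorize_key is a hypothetical helper name, and the key in the demo is a placeholder, not a real key.

```shell
# authorize_key PUBKEY_FILE SSH_DIR (hypothetical helper)
# Add the key in PUBKEY_FILE to SSH_DIR/authorized_keys exactly once,
# with the restrictive permissions that sshd expects.
authorize_key() {
    pubkey_file=$1 ssh_dir=$2
    mkdir -p "$ssh_dir"
    chmod 700 "$ssh_dir"
    touch "$ssh_dir/authorized_keys"
    chmod 600 "$ssh_dir/authorized_keys"
    # -x matches whole lines, -F treats the key as a fixed string.
    if ! grep -qxF -- "$(cat "$pubkey_file")" "$ssh_dir/authorized_keys"; then
        cat "$pubkey_file" >> "$ssh_dir/authorized_keys"
    fi
}

# Demo in a scratch directory with a placeholder key.
demo=$(mktemp -d)
printf 'ssh-rsa AAAAexample root@master\n' > "$demo/id_rsa.pub"
authorize_key "$demo/id_rsa.pub" "$demo/.ssh"
authorize_key "$demo/id_rsa.pub" "$demo/.ssh"    # second call adds nothing
cat "$demo/.ssh/authorized_keys"
```

On a real node the call would be authorize_key /root/id_rsa.pub /root/.ssh.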

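Edit "/etc/ssh/sshd_config"

[root@server2 ~]$ vim /etc/ssh/sshd_config

# Enable RSA authentication (SSH protocol 1 only; deprecated since OpenSSH 7.4)
RSAAuthentication yes

# Enable public-key authentication
PubkeyAuthentication yes

Generate a key pair on server2 and server3 as well, and append each node's own public key to its "authorized_keys" file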

[root@server2 ~]$ ssh-keygen -t rsa -P ''

[root@server2 ~]$ cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

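Copy the public key "id_rsa.pub" of server2 and server3 to the "/root/" directory on server1, one node at a time, because each copy overwrites /root/id_rsa.pub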

[root@slave1 ~]$ scp -r /root/.ssh/id_rsa.pub root@master:/root/

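The following steps are performed on server1

Append the public keys of server2 and server3 to the Master's "authorized_keys" file.

[root@server1 ~]$ cat ~/id_rsa.pub >> ~/.ssh/authorized_keys

Delete the "id_rsa.pub" file copied over from the Slave, then repeat the copy, append, and delete steps for the other Slave

[root@server1 ~]$ rm -f /root/id_rsa.pub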

jdk-8u121 installation and deployment

Upload the archive and extract it

jdk-8u121-linux-x64.tar.gz

[root@server1 ~]$ tar xvf jdk-8u121-linux-x64.tar.gz

[root@server1 ~]$ mv jdk1.8.0_121/ /usr/local/

Add the Java environment variables to /etc/profile

[root@server1 ~]$ vim /etc/profile

export JAVA_HOME=/usr/local/jdk1.8.0_121

export PATH=$JAVA_HOME/bin:$PATH

export CLASSPATH=.:$JAVA_HOME/lib/rt.jar

export JAVA_HOME PATH CLASSPATH

Save the file, then reload the configuration

[root@localhost ~]$ source /etc/profile
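
A wrong JAVA_HOME tends to surface only later, when ZooKeeper or Kafka fails to start, so a quick check after reloading the profile can save time. This is a minimal sketch; check_java_home is a hypothetical helper name, and the fallback path is simply the install location used above.

```shell
# check_java_home DIR (hypothetical helper)
# Succeeds only if DIR/bin/java exists and is executable.
check_java_home() {
    java_home=$1
    if [ -x "$java_home/bin/java" ]; then
        echo "ok: $java_home/bin/java"
    else
        echo "missing or not executable: $java_home/bin/java" >&2
        return 1
    fi
}

# Falls back to the install path from this guide when JAVA_HOME is unset.
check_java_home "${JAVA_HOME:-/usr/local/jdk1.8.0_121}" || true
```

When the check passes, java -version should also report the 1.8.0_121 build.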

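Copy the master node's profile file to server2 and server3 (/etc/profile is a regular file, so -r is not needed), then run source /etc/profile on each of them

[root@localhost ~]$ scp /etc/profile root@slave1:/etc/

[root@localhost ~]$ scp /etc/profile root@slave2:/etc/

Copy the master node's jdk1.8.0_121 directory to server2 and server3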

[root@localhost ~]$ scp -r /usr/local/jdk1.8.0_121/ root@slave1:/usr/local/

[root@localhost ~]$ scp -r /usr/local/jdk1.8.0_121/ root@slave2:/usr/local/
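
The same copy-to-every-slave pattern recurs for the JDK, ZooKeeper, and Kafka directories, so it can be wrapped in a loop. The sketch below uses a hypothetical helper, push_to_slaves, and a DRY_RUN variable that is also an assumption of this example. With the default DRY_RUN=1 it only prints the scp commands it would run.

```shell
# push_to_slaves SRC DEST_DIR HOST... (hypothetical helper)
# Copy SRC recursively into DEST_DIR on every listed host as root.
push_to_slaves() {
    src=$1 dest=$2
    shift 2
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = 1 ]; then
            # Dry run: show the command instead of executing it.
            echo "scp -r $src root@$host:$dest"
        else
            scp -r "$src" "root@$host:$dest"
        fi
    done
}

push_to_slaves /usr/local/jdk1.8.0_121 /usr/local/ slave1 slave2
```

Setting DRY_RUN=0 before the call performs the actual copies; this relies on the passwordless SSH login configured earlier.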

zookeeper-3.4.14 installation and deployment

Upload the archive and extract it

zookeeper-3.4.14.tar.gz

[root@localhost ~]$ tar -zxvf zookeeper-3.4.14.tar.gz

[root@localhost ~]$ mv zookeeper-3.4.14 /opt/zookeeper

[root@localhost ~]$ cd /opt/zookeeper/conf/

Copy the template file

[root@localhost conf]$ cp zoo_sample.cfg zoo.cfg

Edit the configuration file and add the following lines

[root@localhost conf]$ vim zoo.cfg

server.1=192.168.66.128:2888:3888

server.2=192.168.66.129:2888:3888

server.3=192.168.66.130:2888:3888
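
Each server.N line pairs an ensemble member's ID with its address, the quorum port (2888), and the leader-election port (3888). As a sketch, the lines can be generated from an ordered address list so that the numbering stays consistent; zk_server_lines is a hypothetical helper name.

```shell
# zk_server_lines IP... (hypothetical helper)
# Print one "server.N=IP:2888:3888" line per address, numbering from 1.
zk_server_lines() {
    id=1
    for ip in "$@"; do
        printf 'server.%d=%s:2888:3888\n' "$id" "$ip"
        id=$((id + 1))
    done
}

zk_server_lines 192.168.66.128 192.168.66.129 192.168.66.130
```

The output can be appended to zoo.cfg with >> /opt/zookeeper/conf/zoo.cfg. The order of the addresses determines which myid each node must use later.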

Edit the profile file

[root@localhost ~]$ vim /etc/profile

export ZOOKEEPER_HOME=/opt/zookeeper/

export PATH=$ZOOKEEPER_HOME/bin:$PATH

Reload the profile

[root@localhost ~]$ source /etc/profile

Copy the master node's zookeeper directory to server2 and server3

[root@localhost zookeeper]$ scp -r /opt/zookeeper/ root@slave1:/opt/

[root@localhost zookeeper]$ scp -r /opt/zookeeper/ root@slave2:/opt/

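Create the myid file. ZooKeeper reads it from the directory that dataDir in zoo.cfg points to; the paths below assume dataDir=/opt/zookeeper (zoo_sample.cfg ships with dataDir=/tmp/zookeeper). The number must match the node's server.N line in zoo.cfg

On server1: echo "1" > /opt/zookeeper/myid

On server2: echo "2" > /opt/zookeeper/myid

On server3: echo "3" > /opt/zookeeper/myid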
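
Because the myid value has to agree with the server.N entry for the same machine, it can be derived from zoo.cfg instead of typed by hand. A minimal sketch, assuming the server lines use IP addresses as above; myid_for is a hypothetical helper name and the demo works on a scratch file.

```shell
# myid_for CFG IP (hypothetical helper)
# Print N for the "server.N=IP:..." line in CFG; prints nothing if IP is absent.
myid_for() {
    cfg=$1
    # Escape the dots so sed matches the address literally.
    ip_re=$(printf '%s' "$2" | sed 's/\./\\./g')
    sed -n "s/^server\.\([0-9][0-9]*\)=$ip_re:.*/\1/p" "$cfg"
}

# Demo with a scratch copy of the three server lines.
cfg=$(mktemp)
printf 'server.%s\n' \
    '1=192.168.66.128:2888:3888' \
    '2=192.168.66.129:2888:3888' \
    '3=192.168.66.130:2888:3888' > "$cfg"
myid_for "$cfg" 192.168.66.129    # prints 2
```

On server2, for example, the result could be written with myid_for /opt/zookeeper/conf/zoo.cfg 192.168.66.129 > /opt/zookeeper/myid.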

Start ZooKeeper (run this on all three nodes)

[root@localhost zookeeper]$ ./bin/zkServer.sh start

ZooKeeper JMX enabled by default

Using config: /opt/zookeeper/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

Check the status

[root@localhost zookeeper]$ ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/zookeeper/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: leader

[root@server1 zookeeper]$ jps

9941 Jps

9725 QuorumPeerMain
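
In a healthy three-node ensemble, one node reports Mode: leader and the other two report Mode: follower. When checking several nodes, it can help to reduce the status output to just that word. A small sketch; zk_mode is a hypothetical helper name, and the demo feeds it sample text in place of real zkServer.sh output.

```shell
# zk_mode (hypothetical helper)
# Read "zkServer.sh status" output on stdin and print only the mode.
zk_mode() {
    sed -n 's/^Mode: //p'
}

# Demo with sample status text piped in.
printf '%s\n' \
    'ZooKeeper JMX enabled by default' \
    'Using config: /opt/zookeeper/bin/../conf/zoo.cfg' \
    'Mode: leader' | zk_mode
```

On a live node the call would be /opt/zookeeper/bin/zkServer.sh status 2>&1 | zk_mode.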

kafka_2.11-2.2.0 installation and deployment

Upload the archive and extract it

kafka_2.11-2.2.0.tgz

[root@server1 ~]$ tar -zxvf kafka_2.11-2.2.0.tgz

[root@server1 ~]$ mv kafka_2.11-2.2.0 /opt/kafka

[root@server1 ~]$ cd /opt/kafka/config/

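Edit Kafka's main configuration file on server1, server2, and server3. The line numbers in the notes refer to positions in server.properties. Properties files do not support trailing comments, so the notes sit on their own lines. advertised.listeners must use a name that clients can resolve (here the names from /etc/hosts), and zookeeper.connect must list the ZooKeeper nodes configured above

[root@server1 config]$ vim server.properties

# line 20
broker.id=1

# line 35
advertised.listeners=PLAINTEXT://master:9092

# line 112
zookeeper.connect=192.168.66.128:2181,192.168.66.129:2181,192.168.66.130:2181/kafka

[root@server2 config]$ vim server.properties

# line 20
broker.id=2

# line 35
advertised.listeners=PLAINTEXT://slave1:9092

# line 112
zookeeper.connect=192.168.66.128:2181,192.168.66.129:2181,192.168.66.130:2181/kafka

[root@server3 config]$ vim server.properties

# line 20
broker.id=3

# line 35
advertised.listeners=PLAINTEXT://slave2:9092

# line 112
zookeeper.connect=192.168.66.128:2181,192.168.66.129:2181,192.168.66.130:2181/kafka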
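
Only broker.id and advertised.listeners differ between the three brokers, so the per-node edits can be scripted instead of made in vim. The sketch below uses a hypothetical helper, set_prop, that rewrites an uncommented KEY= line or appends one if none exists. The demo works on a scratch file, and values are assumed to contain no backslashes.

```shell
# set_prop FILE KEY VALUE (hypothetical helper)
# Rewrite every uncommented "KEY=" line in FILE; append the setting if none exists.
set_prop() {
    file=$1 key=$2 value=$3
    tmp=$(mktemp)
    awk -v k="$key" -v v="$value" '
        index($0, k "=") == 1 { print k "=" v; seen = 1; next }
        { print }
        END { if (!seen) print k "=" v }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    rm -f "$tmp"
}

# Demo on a scratch file that mimics the relevant stock lines.
props=$(mktemp)
printf '%s\n' \
    'broker.id=0' \
    '#advertised.listeners=PLAINTEXT://your.host.name:9092' > "$props"
set_prop "$props" broker.id 1
set_prop "$props" advertised.listeners PLAINTEXT://master:9092
cat "$props"
```

Commented-out template lines are left untouched and the new setting is appended after them, which avoids accidentally enabling a placeholder value.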

Start Kafka on all three nodes

[root@server1 bin]$ ./kafka-server-start.sh -daemon ../config/server.properties

[root@server2 bin]$ ./kafka-server-start.sh -daemon ../config/server.properties

[root@server3 bin]$ ./kafka-server-start.sh -daemon ../config/server.properties

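Create a topic

Create a topic named my-topic with 3 partitions and a replication factor of 2. The --zookeeper address must include the same /kafka chroot path that zookeeper.connect uses. On success the command prints a "Created topic" line

[root@kafka02 bin]$ /opt/kafka/bin/kafka-topics.sh --create --zookeeper 192.168.66.130:2181/kafka --replication-factor 2 --partitions 3 --topic my-topic

Created topic "my-topic".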

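Error: running the create command for a topic that already exists fails as follows (this log was captured for a topic named liangzg)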

Error while executing topic command : Topic "liangzg" already exists.

[2020-12-04 10:32:31,716] ERROR kafka.common.TopicExistsException: Topic "liangzg" already exists.

at kafka.admin.AdminUtils$.createOrUpdateTopicPartitionAssignmentPathInZK(AdminUtils.scala:420)

at kafka.admin.AdminUtils$.createTopic(AdminUtils.scala:404)

at kafka.admin.TopicCommand$.createTopic(TopicCommand.scala:110)

at kafka.admin.TopicCommand$.main(TopicCommand.scala:61)

at kafka.admin.TopicCommand.main(TopicCommand.scala)

(kafka.admin.TopicCommand$)

List the existing topics

[root@kafka02 bin]$ /opt/kafka/bin/kafka-topics.sh --list --zookeeper 192.168.66.130:2181/kafka

my-topic

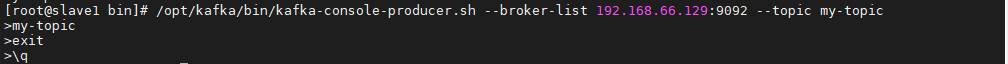

Simulate a producer

[root@kafka02 bin]$ /opt/kafka/bin/kafka-console-producer.sh --broker-list 192.168.66.129:9092 --topic my-topic

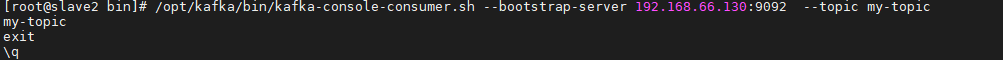

Simulate a consumer

[root@kafka03 bin]$ /opt/kafka/bin/kafka-console-consumer.sh --bootstrap-server 192.168.66.130:9092 --topic my-topic --from-beginning
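
Both console tools accept a comma-separated list of host:port pairs, so a client can still bootstrap when one broker is down. As a sketch, the list can be built from the broker addresses; bootstrap_servers is a hypothetical helper name.

```shell
# bootstrap_servers PORT HOST... (hypothetical helper)
# Join the hosts into a comma-separated "host:port" list.
bootstrap_servers() {
    port=$1
    shift
    list=
    for h in "$@"; do
        list="${list:+$list,}$h:$port"
    done
    printf '%s\n' "$list"
}

BROKERS=$(bootstrap_servers 9092 192.168.66.128 192.168.66.129 192.168.66.130)
echo "$BROKERS"
```

The result can be passed as --bootstrap-server "$BROKERS" to the consumer or as --broker-list "$BROKERS" to the producer.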