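Once a model has been converted to MNN format it can be used for inference, but the model file may still be large or slow to run.

This matters most on mobile and other edge devices, where compute and storage are limited, so compressing the model becomes a pressing task.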

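MNN ships with its own quantization tool. Setup is simple: once MNN has been compiled as described in the earlier article, the tool is ready to use.

The MNN model file used here is the one trained and converted in the previous article.

Before running the tool, create a JSON file with the following configuration: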

preprocessConfig.json

    {
        "format": "GRAY",
        "mean": [
            127.5
        ],
        "normal": [
            0.00784314
        ],
        "width": 28,
        "height": 28,
        "path": "FashionMNIST",
        "used_image_num": 50,
        "feature_quantize_method": "KL",
        "weight_quantize_method": "MAX_ABS"
    }

Note that the model from the earlier article was trained on single-channel images, so format is set to GRAY in the JSON file. The available options are "RGB", "BGR", "RGBA" and "GRAY"; choose the one that matches your own model.
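To make the mean and normal fields concrete, here is a minimal sketch of the per-pixel arithmetic they describe, assuming the usual MNN image preprocessing convention dst = (src - mean) * normal. With the values above, 8-bit pixels in [0, 255] map to roughly [-1, 1]. The function name preprocess and the sample pixel values are made up for illustration.

```python
import numpy as np

# Values copied from preprocessConfig.json
MEAN = 127.5
NORMAL = 0.00784314  # approximately 1 / 127.5


def preprocess(pixels: np.ndarray) -> np.ndarray:
    """Apply (pixel - mean) * normal to 8-bit grayscale pixel values."""
    return (pixels.astype(np.float32) - MEAN) * NORMAL


if __name__ == "__main__":
    # Made-up pixel values covering the darkest, middle and brightest cases
    sample = np.array([0, 127, 255], dtype=np.uint8)
    print(preprocess(sample))
```

These values should match whatever normalization was used when the model was trained; otherwise the calibration images will not look like real inputs to the network.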

For details, see the documentation: https://www.yuque.com/mnn/cn/tool_quantize

With the preparation done, all that remains is to run the quantization:

    /opt/MNN/build/quantized.out FashionMNIST.mnn quan.mnn preprocessConfig.json

Quantization output:

    [15:54:55] /opt/MNN/tools/quantization/quantized.cpp:21: >>> modelFile: FashionMNIST.mnn
    [15:54:55] /opt/MNN/tools/quantization/quantized.cpp:22: >>> preTreatConfig: preprocessConfig.json
    [15:54:55] /opt/MNN/tools/quantization/quantized.cpp:23: >>> dstFile: quan.mnn
    [15:54:55] /opt/MNN/tools/quantization/quantized.cpp:50: Calibrate the feature and quantize model...
    [15:54:55] /opt/MNN/tools/quantization/calibration.cpp:121: Use feature quantization method: KL
    [15:54:55] /opt/MNN/tools/quantization/calibration.cpp:122: Use weight quantization method: MAX_ABS
    [15:54:55] /opt/MNN/tools/quantization/Helper.cpp:100: used image num: 50
    ComputeFeatureRange: 100.00 %
    CollectFeatureDistribution: 100.00 %
    [15:54:55] /opt/MNN/tools/quantization/quantized.cpp:54: Quantize model done!

The model file shrank from 389K to 108K.
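Going from 389K to 108K is a reduction of about 3.6x, which is roughly what one would expect when 4-byte float32 weights are stored as 1-byte int8 values and a little non-weight data stays unchanged. The sketch below illustrates the general idea behind a max-abs weight scheme in NumPy. It is not MNN's actual implementation, which may differ in details such as per-channel scales; the helper names and the random weights are made up for illustration.

```python
import numpy as np


def quantize_max_abs(weights: np.ndarray):
    """Symmetric int8 quantization with scale = max(|w|) / 127."""
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Map int8 values back to approximate float32 weights."""
    return q.astype(np.float32) * scale


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.normal(size=(64, 32)).astype(np.float32)  # made-up weights
    q, scale = quantize_max_abs(w)
    print("float32 bytes:", w.nbytes, "int8 bytes:", q.nbytes)
    print("max round-trip error:", np.max(np.abs(w - dequantize(q, scale))))
```

The round-trip error is bounded by half a quantization step, which is the source of the accuracy loss that quantization introduces.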

Next, compare the running speed and the output of the quantized model against the unquantized one.

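    import time

    import MNN
    import numpy as np

    if __name__ == '__main__':
        # Dummy all-ones input shaped (batch, channel, height, width)
        x = np.ones([1, 1, 28, 28]).astype(np.float32)

        # Quantized MNN model; the timer covers loading, session creation and inference
        start = time.time()
        interpreter = MNN.Interpreter("quan.mnn")
        print("quan mnn load")
        mnn_session = interpreter.createSession()
        input_tensor = interpreter.getSessionInput(mnn_session)

        # Wrap the NumPy data in a host tensor. The dimension type is kept as in the
        # original; NCHW data normally corresponds to MNN.Tensor_DimensionType_Caffe.
        tmp_input = MNN.Tensor((1, 1, 28, 28), MNN.Halide_Type_Float,
                               x[0], MNN.Tensor_DimensionType_Tensorflow)
        # Copy the data into the session input; without this step the input is never filled
        input_tensor.copyFrom(tmp_input)

        interpreter.runSession(mnn_session)
        output_tensor = interpreter.getSessionOutput(mnn_session, 'output')
        output_data = np.array(output_tensor.getData())
        print('quan mnn result is:', output_data)
        print('quan mnn run time is ', time.time() - start)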

Output:

    quan mnn load
    quan mnn result is: [ 0.5922392 -0.40196353 0.32656723 0.13848761 0.01854512 -1.11787963
     0.99948055 -0.32638997 0.92734373 -0.93912888]
    quan mnn run time is 0.0015997886657714844
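The run time printed above includes model loading and session creation, because the timer starts before the interpreter is created. To time inference alone, one option is a small helper like the sketch below, which warms up first and then averages several calls. The name time_call and its parameters are made up for illustration, and the workload in the example is only a placeholder for the real inference call.

```python
import time


def time_call(fn, warmup: int = 3, repeats: int = 10) -> float:
    """Return the mean wall-clock time of fn() in milliseconds."""
    for _ in range(warmup):
        fn()  # warm-up runs are not timed
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    return (time.perf_counter() - start) / repeats * 1000.0


if __name__ == "__main__":
    # Placeholder workload; in the script above this would be something like
    # lambda: interpreter.runSession(mnn_session)
    ms = time_call(lambda: sum(range(10_000)))
    print(f"mean time: {ms:.4f} ms")
```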

The result differs somewhat from the earlier one. Quantization inevitably costs some accuracy, and this deserves close attention.

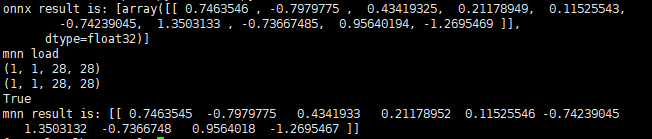

The earlier result is shown above for comparison.
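Rather than comparing the two outputs by eye, the difference can be quantified. The following is a minimal sketch, assuming both outputs are available as arrays of the same length. The function name compare_outputs and the sample vectors are made up for illustration and are not the article's actual results.

```python
import numpy as np


def compare_outputs(reference, quantized) -> dict:
    """Summarize how far a quantized output drifts from a reference output."""
    ref = np.asarray(reference, dtype=np.float64).ravel()
    qnt = np.asarray(quantized, dtype=np.float64).ravel()
    cosine = float(ref @ qnt / (np.linalg.norm(ref) * np.linalg.norm(qnt)))
    return {
        "max_abs_diff": float(np.max(np.abs(ref - qnt))),
        "cosine_similarity": cosine,
        "same_top1": bool(np.argmax(ref) == np.argmax(qnt)),
    }


if __name__ == "__main__":
    # Made-up logits for illustration only
    float_out = [0.60, -0.42, 0.30, 1.05]
    quant_out = [0.59, -0.40, 0.33, 1.00]
    print(compare_outputs(float_out, quant_out))
```

For a classifier, same_top1 is often the figure that matters most: small shifts in the logits are harmless as long as the predicted class does not change. A single dummy input says little, though, so it is worth checking this over a real validation set.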

Next, run the timing tool that ships with MNN:

    /opt/MNN/build/timeProfile.out quan.mnn 10 0

Output:

    Use extra forward type: 0
    Open Model quan.mnn
    Sort by node name !
    Node Name                                      Op Type      Avg(ms)   %          Flops Rate
    11                                             ConvInt8     0.572700  64.814415  5.521849
    13                                             Pooling      0.015200  1.720236   0.585116
    13___FloatToInt8___0                           FloatToInt8  0.011900  1.346764   0.146279
    14                                             ConvInt8     0.066800  7.559983   44.174789
    16                                             Pooling      0.010500  1.188321   0.292558
    16___FloatToInt8___0                           FloatToInt8  0.007600  0.860118   0.073140
    17                                             ConvInt8     0.048200  5.454958   44.174789
    19                                             Pooling      0.008000  0.905387   0.107470
    23                                             BinaryOp     0.007800  0.882753   0.005971
    MatMul15                                       ConvInt8     0.004400  0.497963   3.606106
    MatMul19                                       ConvInt8     0.001000  0.113173   0.062606
    Raster12                                       Raster       0.014000  1.584428   0.026868
    Raster16                                       Raster       0.009200  1.041195   0.005971
    Raster18                                       Raster       0.006700  0.758262   0.005971
    Raster22                                       Raster       0.006900  0.780897   0.000466
    Reshape14___tr4MatMul15___FloatToInt8___0      FloatToInt8  0.009000  1.018561   0.026868
    Reshape19___tr4MatMul19___FloatToInt8___0      FloatToInt8  0.006600  0.746944   0.005971
    ___Int8ToFloat___For_130                       Int8ToFloat  0.021800  2.467180   0.585116
    ___Int8ToFloat___For_160                       Int8ToFloat  0.007400  0.837483   0.292558
    ___Int8ToFloat___For_190                       Int8ToFloat  0.008200  0.928022   0.146279
    ___Int8ToFloat___For_MatMul15___tr4Reshape160  Int8ToFloat  0.019600  2.218199   0.005971
    ___Int8ToFloat___For_MatMul19___tr4Reshape210  Int8ToFloat  0.010800  1.222273   0.000560
    input___FloatToInt8___0                        FloatToInt8  0.004100  0.464011   0.146279
    output                                         BinaryOp     0.005200  0.588502   0.000466
    Sort by time cost !
    Node Type    Avg(ms)   %          Called times  Flops Rate
    BinaryOp     0.013000  1.471254   2.000000      0.006437
    Pooling      0.033700  3.813944   3.000000      0.985145
    Raster       0.036800  4.164783   4.000000      0.039275
    FloatToInt8  0.039200  4.436398   5.000000      0.398536
    Int8ToFloat  0.067800  7.673157   5.000000      1.030484
    ConvInt8     0.693100  78.440491  5.000000      97.540123
    total time : 0.883600 ms, total mflops : 2.044533
    main, 113, cost time: 13.603001 ms

Timing for the unquantized model:

    /opt/MNN/build/timeProfile.out FashionMNIST.mnn 10 0

Output:

    Use extra forward type: 0
    Open Model FashionMNIST.mnn
    Sort by node name !
    Node Name  Op Type      Avg(ms)   %          Flops Rate
    11         Convolution  0.485900  54.638489  5.601901
    13         Pooling      0.017400  1.956595   0.593599
    14         Convolution  0.089500  10.064097  44.815208
    16         Pooling      0.012000  1.349376   0.296799
    17         Convolution  0.048400  5.442484   44.815208
    19         Pooling      0.153200  17.227037  0.109028
    23         BinaryOp     0.006300  0.708422   0.006057
    MatMul15   Convolution  0.025700  2.889914   3.658385
    MatMul19   Convolution  0.009000  1.012032   0.063514
    Raster10   Raster       0.006400  0.719667   0.006057
    Raster12   Raster       0.005400  0.607219   0.000473
    Raster6    Raster       0.014200  1.596762   0.027257
    Raster8    Raster       0.009700  1.090746   0.006057
    output     BinaryOp     0.006200  0.697178   0.000473
    Sort by time cost !
    Node Type    Avg(ms)   %          Called times  Flops Rate
    BinaryOp     0.012500  1.405600   2.000000      0.006530
    Raster       0.035700  4.014394   4.000000      0.039845
    Pooling      0.182600  20.533010  3.000000      0.999427
    Convolution  0.658500  74.047020  5.000000      98.954201
    total time : 0.889300 ms, total mflops : 2.015316
    main, 113, cost time: 12.360001 ms
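The two profiles are easier to read side by side. The sketch below simply tabulates the per-op-type averages copied from the two "Sort by time cost" sections above. The dictionary and function names are made up for illustration. On these single runs the totals are nearly identical (0.8836 ms against 0.8893 ms), and the quantized model spends about 0.107 ms converting between float and int8, so for a model this small the main gain appears to be file size rather than speed.

```python
# Per-op-type average times in ms, copied from the two timeProfile.out runs above
QUANTIZED = {
    "BinaryOp": 0.013000,
    "Pooling": 0.033700,
    "Raster": 0.036800,
    "FloatToInt8": 0.039200,
    "Int8ToFloat": 0.067800,
    "ConvInt8": 0.693100,
}
FLOAT = {
    "BinaryOp": 0.012500,
    "Raster": 0.035700,
    "Pooling": 0.182600,
    "Convolution": 0.658500,
}


def conversion_overhead(profile: dict) -> float:
    """Time spent converting between float and int8, in ms."""
    return profile.get("FloatToInt8", 0.0) + profile.get("Int8ToFloat", 0.0)


if __name__ == "__main__":
    total_q = sum(QUANTIZED.values())
    total_f = sum(FLOAT.values())
    print(f"quantized total: {total_q:.4f} ms")
    print(f"float total:     {total_f:.4f} ms")
    print(f"conversion overhead in quantized model: {conversion_overhead(QUANTIZED):.4f} ms")
    print(f"convolution: {QUANTIZED['ConvInt8']:.4f} ms (int8) vs {FLOAT['Convolution']:.4f} ms (float)")
```

Timings this small are noisy, so the comparison is indicative only; a larger model or more iterations would give a clearer picture.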