
Page analysis

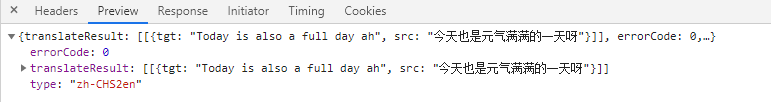

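Open Youdao Translate, translate something, and press F12 to open the Network panel. You will see many GET and POST requests; find the one that carries the text being translated, as shown in the figure.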

A quick look at the request headers

Two ways to add a header

1) Pass a dict through the headers parameter when creating the Request

2) Call the add_header() method on the Request object
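
As a minimal sketch of the two options above, the snippet below builds the same request both ways without sending anything. The URL and the shortened User-Agent string are placeholders chosen for illustration. Note that urllib stores the header name in capitalized form, so it is read back as 'User-agent'.

```python
import urllib.request as req

# Placeholder values for illustration only; no request is sent.
URL = 'http://example.com/translate'
UA = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64)'

# Way 1: pass a dict through the headers parameter of Request
request_1 = req.Request(URL, headers={'User-Agent': UA})

# Way 2: create the Request first, then call add_header()
request_2 = req.Request(URL)
request_2.add_header('User-Agent', UA)

# Both requests now carry the same header (stored as 'User-agent')
print(request_1.get_header('User-agent') == request_2.get_header('User-agent'))
```

Either way works; add_header() is handy when the Request object already exists and only one header needs to change.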

Extract the translation from the response
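
A small sketch of this step, assuming the response body is JSON whose translateResult field is a nested list with the translated text under 'tgt' (the same path the implementation uses). The helper name extract_translation and the sample string are made up for illustration; the sample is hand-written, not real server output.

```python
import json


def extract_translation(body):
    """Return the 'tgt' text of the first segment in translateResult."""
    result = json.loads(body)
    if 'translateResult' not in result:
        # The server answered with something other than a translation
        raise ValueError('no translateResult in response: ' + body)
    return result['translateResult'][0][0]['tgt']


# Hand-written sample in the assumed response shape
sample = '{"translateResult": [[{"tgt": "Hello"}]]}'
print(extract_translation(sample))
```

Checking for the key first gives a readable error instead of a bare KeyError when the response does not contain a translation.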

Implementation

    import json
    import urllib.parse
    import urllib.request as req

    # Request URL of the translation endpoint
    url = 'http://fanyi.youdao.com/translate?smartresult=dict&smartresult=rule&sessionFrom='

    # Browser User-Agent sent with every request
    user_agent = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/87.0.4280.88 Safari/537.36'

    while True:
        content = input("Enter the text to translate (type !q to quit): ")
        if content == '!q':
            break

        # Form fields copied from the request seen in the Network panel,
        # written as a dict; 'i' is the text to translate.
        data = {
            'i': content,  # e.g. '今天也是元气满满的一天呀'
            'from': 'AUTO',
            'to': 'AUTO',
            'smartresult': 'dict',
            'client': 'fanyideskweb',
            'salt': '16099982906531',
            'sign': 'a9d35d5d61ef0421160b9d53b6dff04f',
            'lts': '1609998290653',
            'bv': '4f7ca50d9eda878f3f40fb696cce4d6d',
            'doctype': 'json',
            'version': '2.1',
            'keyfrom': 'fanyi.web',
            'action': 'FY_BY_CLICKBUTTION',
        }
        # urlopen() expects the POST body as bytes
        data = urllib.parse.urlencode(data).encode('utf-8')

        # Way 1 of adding a header (not used here): pass a dict to Request
        # head = {'User-Agent': user_agent}
        # request = req.Request(url, data, head)
        request = req.Request(url, data)

        # Way 2 of adding a header: call add_header() on the Request
        request.add_header('User-Agent', user_agent)

        response = req.urlopen(request)
        html = response.read().decode('utf-8')

        # Extract the translation from the JSON response
        target = json.loads(html)
        target = target['translateResult'][0][0]['tgt']
        print(target)
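
The request-building part of the code above can be checked without touching the network. The sketch below uses a hypothetical helper build_request, a placeholder URL, and a reduced form dict, all chosen for illustration. It shows that urlencode() followed by encode() yields the bytes body, and that passing data turns the Request into a POST.

```python
import urllib.parse
import urllib.request as req


def build_request(url, form):
    """Encode the form dict and wrap it in a Request (hypothetical helper)."""
    body = urllib.parse.urlencode(form).encode('utf-8')
    return req.Request(url, data=body)


# Placeholder URL and a reduced form, for illustration only
request = build_request('http://example.com/translate',
                        {'i': 'hello world', 'doctype': 'json'})

print(request.get_method())  # POST, because data is not None
print(request.data)          # b'i=hello+world&doctype=json'
```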

Test result:

Differences between the requests library and the urllib library