When we export an ONNX model with the code below and open it in Netron, the result looks like this:

import torch
import torch.onnx
import torchvision.models as models
from collections import OrderedDict

device = torch.device("cpu")

def convert():
    model = models.inception_v3(pretrained=False)
    pthfile = './inception_v3_google-1a9a5a14.pth'
    # Load the checkpoint onto the CPU regardless of where it was saved.
    state_dict = torch.load(pthfile, map_location=device)

    # Strip the "module." prefix that wrappers such as nn.DataParallel add to parameter names.
    new_state_dict = OrderedDict()
    for k, v in state_dict.items():
        name = k[7:] if k.startswith("module.") else k
        new_state_dict[name] = v
    model.load_state_dict(new_state_dict)
    # Switch to inference mode before exporting (disables dropout and the auxiliary classifier output).
    model.eval()
    print(model)

    input_names = ["actual_input_1"]
    output_names = ["output1"]
    dummy_input = torch.randn(16, 3, 224, 224)
    # Mark the batch dimension (axis 0) of the input and the output as dynamic.
    dynamic_axes = {'actual_input_1': {0: '-1'}, 'output1': {0: '-1'}}
    torch.onnx.export(model, dummy_input, "inception_v3.onnx",
                      input_names=input_names, output_names=output_names,
                      dynamic_axes=dynamic_axes, opset_version=11)

if __name__ == "__main__":
    convert()

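Sometimes, however, we want to see the output shape of each operator, and the graph above does not show it. In that case we can use shape_inference from the onnx library to infer the shapes and write them back into the model: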

import onnx
from onnx import shape_inference

path = "..."  # path to your ONNX model

# Infer the shape of every intermediate tensor and overwrite the model file with the annotated graph.
onnx.save(shape_inference.infer_shapes(onnx.load(path)), path)

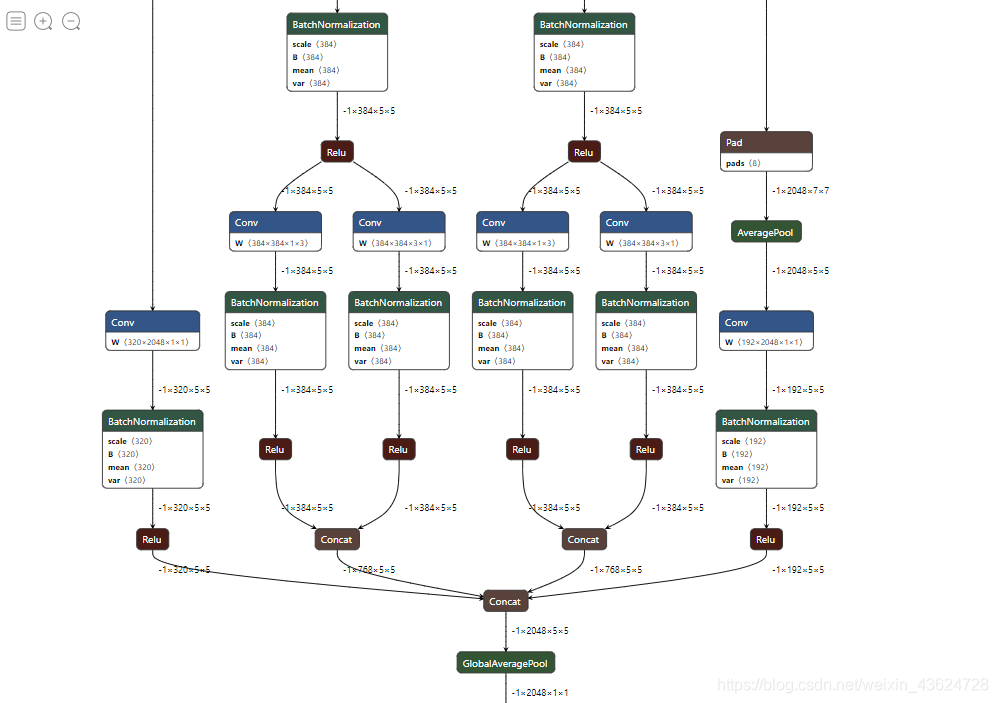

使用Netron查看保存的模型结果:

That is exactly the result we wanted!