Classic CV Networks Explained

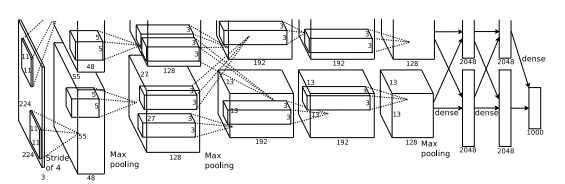

AlexNet

AlexNet paper: ImageNet Classification with Deep Convolutional Neural Networks

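import torch
from torch import nn, optim
import torchvision

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')


class AlexNet(nn.Module):
    def __init__(self):
        super(AlexNet, self).__init__()
        self.conv = nn.Sequential(
            # Unlike the paper, the network is not split across devices with
            # model parallelism; it is treated as a single whole.
            # Layer 1
            nn.Conv2d(1, 96, 11, 4),  # in_channels, out_channels, kernel_size, stride (padding defaults to 0)
            nn.ReLU(),
            nn.MaxPool2d(3, 2),  # kernel_size, stride
            # Layer 2
            nn.Conv2d(96, 256, 5, 1, 2),  # in_channels, out_channels, kernel_size, stride, padding
            nn.ReLU(),
            nn.MaxPool2d(3, 2),
            # Layer 3
            nn.Conv2d(256, 384, 3, 1, 1),
            nn.ReLU(),
            # Layer 4
            nn.Conv2d(384, 384, 3, 1, 1),
            nn.ReLU(),
            # Layer 5
            nn.Conv2d(384, 256, 3, 1, 1),
            nn.ReLU(),
            nn.MaxPool2d(3, 2),
        )
        # Fully connected layers
        self.fc = nn.Sequential(
            # Following the explanation above, the paper's input size here is 256*6*6.
            # However, for a 224x224 input the first convolution gives
            # (224 - 11) / 4 + 1 = 54.25, which is not an integer.
            # The paper uses 55, which leads to 256*6*6 at the end.
            # PyTorch rounds down to 54, so the final feature map is 5x5;
            # writing 256*6*6 here raises a size mismatch error.
            nn.Linear(256*5*5, 4096),
            nn.ReLU(),
            nn.Dropout(0.5),
            nn.Linear(4096, 4096),
            nn.ReLU(),
            nn.Dropout(0.5),
            # Output layer. Fashion-MNIST is used here, so there are 10 classes
            # rather than the 1000 in the paper.
            nn.Linear(4096, 10),
        )

    def forward(self, img):
        feature = self.conv(img)
        print(feature.shape)  # debug output: shape of the conv feature map
        # Flatten to (batch_size, channels * height * width) before the fully connected layers
        output = self.fc(feature.view(img.shape[0], -1))
        return output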
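The size mismatch described in the comments can be checked by hand. Below is a minimal sketch in plain Python, assuming a 224x224 input (the size implied by the 54.25 figure). The names `out_size`, `LAYERS` and `trace` are illustrative helpers and are not part of the original code. Each convolution and max-pooling layer maps a spatial size n to floor((n + 2 * padding - kernel_size) / stride) + 1, which is how PyTorch computes it with default settings.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Spatial output size of a conv or pooling layer, rounding down as PyTorch does by default."""
    return (n + 2 * padding - kernel) // stride + 1


# (kernel_size, stride, padding) of every conv and max-pool layer in self.conv, in order
LAYERS = [
    (11, 4, 0),  # conv1
    (3, 2, 0),   # pool1
    (5, 1, 2),   # conv2
    (3, 2, 0),   # pool2
    (3, 1, 1),   # conv3
    (3, 1, 1),   # conv4
    (3, 1, 1),   # conv5
    (3, 2, 0),   # pool3
]


def trace(n, first=None):
    """Return the feature-map size after each layer; `first` overrides the conv1 output."""
    sizes = []
    for i, (k, s, p) in enumerate(LAYERS):
        if i == 0 and first is not None:
            n = first
        else:
            n = out_size(n, k, s, p)
        sizes.append(n)
    return sizes


pytorch_sizes = trace(224)          # conv1: 54.25 is floored to 54
paper_sizes = trace(224, first=55)  # conv1 taken as 55, as in the paper

print(pytorch_sizes)                 # [54, 26, 26, 12, 12, 12, 12, 5]
print(paper_sizes)                   # [55, 27, 27, 13, 13, 13, 13, 6]
print(256 * pytorch_sizes[-1] ** 2)  # 6400 = 256*5*5
print(256 * paper_sizes[-1] ** 2)    # 9216 = 256*6*6
```

The one-pixel difference after the first convolution carries through all three pooling layers, which is why the first `nn.Linear` layer needs 256*5*5 input features here instead of the paper's 256*6*6.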

————————————————

Original code: https://blog.csdn.net/codeswarrior/article/details/107219813