Preface

This article uses RNN, GRU, and LSTM models in TensorFlow to perform sentiment analysis on text data.


I. TensorFlow

TensorFlow: applying RNN, GRU, and LSTM to sentiment analysis.

II. Steps

1. Import libraries

The code is as follows (example):

import pickle

import jieba
import numpy as np
import pandas as pd
from tensorflow.keras import Sequential, layers
from tensorflow.keras.callbacks import EarlyStopping
from tensorflow.keras.models import load_model
# Take the preprocessing helpers from tensorflow.keras too, instead of mixing in the standalone keras package.
# This assumes a TensorFlow 2.x release that still ships these (deprecated) modules.
from tensorflow.keras.preprocessing.sequence import pad_sequences
from tensorflow.keras.preprocessing.text import Tokenizer
from tensorflow.keras.utils import to_categorical

2. Load the data

The code is as follows (example):

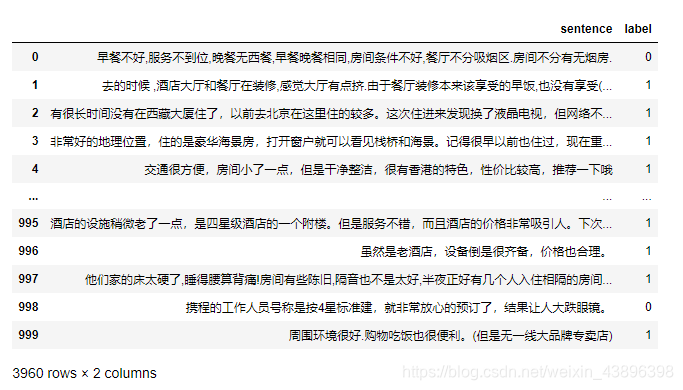

train = pd.read_csv('C:\\Users\\kkk\\Desktop\\kk\\train.tsv', sep='\t')
test = pd.read_csv('C:\\Users\\kkk\\Desktop\\kk\\dev.tsv', sep='\t')
# Concatenate the two sets so that the shared preprocessing steps are written only once
data = pd.concat([train, test], axis=0)

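3. Define parameters

vocab_size = 15000  # vocabulary size: how many distinct jieba tokens to keep; usually chosen by hand
max_review_len = 200  # sequence length in jieba tokens: longer sentences are truncated to 200, shorter ones are padded with 0
embedding_len = 100  # each token is represented by a 100-dimensional vector
batch_size = 32  # the weights are updated once per batch of 32 samples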
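To make the role of `max_review_len` concrete, here is a minimal pure-Python sketch of truncating and zero-padding a list of token ids to a fixed length. The helper name `pad_or_truncate` is hypothetical and is not part of Keras. It pads at the end and, as an assumption chosen to match the default `truncating='pre'` behaviour of `pad_sequences`, drops ids from the front when a sequence is too long.

```python
def pad_or_truncate(seq, maxlen, pad_value=0):
    """Return a list of exactly `maxlen` ids (hypothetical helper, not a Keras API)."""
    if len(seq) > maxlen:
        # Keep the last `maxlen` ids, i.e. truncate from the front.
        return list(seq[len(seq) - maxlen:])
    # Append the padding value at the end until the target length is reached.
    return list(seq) + [pad_value] * (maxlen - len(seq))


short = pad_or_truncate([5, 8, 2], maxlen=5)
long = pad_or_truncate([1, 2, 3, 4, 5, 6, 7], maxlen=5)
print(short)  # [5, 8, 2, 0, 0]
print(long)   # [3, 4, 5, 6, 7]
```

Every sequence ends up with the same length, which is what allows a batch of sentences to be stacked into one rectangular array.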

4. Preprocess the data

t = Tokenizer(num_words=vocab_size)  # num_words makes the tokenizer drop low-frequency words when vectorizing
data['sentence'] = data['sentence'].apply(jieba.lcut)  # tokenize every sentence with jieba
t.fit_on_texts(data['sentence'])  # fit on all reviews to collect word statistics
word_index = t.word_index  # word-to-index mapping; not limited by vocab_size
train_data = data.iloc[:2960]  # split back: the first 2960 rows are the training set
dev_data = data.iloc[2960:]
v_train_x = t.texts_to_sequences(train_data['sentence'])  # limited by vocab_size
v_dev_x = t.texts_to_sequences(dev_data['sentence'])  # limited by vocab_size
pad_X_train = pad_sequences(v_train_x, maxlen=max_review_len, padding='post')  # truncate or zero-pad to max_review_len
pad_X_dev = pad_sequences(v_dev_x, maxlen=max_review_len, padding='post')
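The difference between `word_index` (not limited by `vocab_size`) and `texts_to_sequences` (limited by it) can be illustrated with a small pure-Python imitation. This is a simplified sketch of the idea, not Keras's actual implementation; `build_word_index` and `encode` are hypothetical names and the three-sentence corpus is made up. It assumes, as the Keras `Tokenizer` does when no out-of-vocabulary token is set, that words whose index is at or beyond `num_words` are silently dropped.

```python
from collections import Counter


def build_word_index(token_lists):
    """Rank words by frequency; the most frequent word gets index 1."""
    counts = Counter(tok for toks in token_lists for tok in toks)
    # most_common() sorts by descending count; index 0 is left free for padding.
    return {word: i + 1 for i, (word, _) in enumerate(counts.most_common())}


def encode(token_lists, word_index, num_words):
    """Map tokens to ids, dropping words whose index is >= num_words."""
    return [
        [word_index[t] for t in toks if t in word_index and word_index[t] < num_words]
        for toks in token_lists
    ]


corpus = [["好", "电影"], ["好", "故事"], ["好", "电影", "推荐"]]
word_index = build_word_index(corpus)
encoded = encode(corpus, word_index, num_words=3)
print(len(word_index))  # the full vocabulary is kept in the mapping
print(encoded)          # only the most frequent words survive encoding
```

Rare words still appear in the mapping but vanish from the encoded sequences, so a small `vocab_size` shortens sentences that contain many infrequent tokens.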

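5. Train the model

random_indexs = np.random.permutation(len(pad_X_train))
X_train = pad_X_train[random_indexs]  # shuffle the training set before training
Y_train = train_data['label'].to_numpy()[random_indexs]  # index positionally so the labels stay aligned with X_train
one_hot_label = to_categorical(Y_train)  # only needed when the last Dense layer has 2 outputs: the labels must then have 2 columns too, e.g. 1 -> [0, 1]
callbacks_list = [EarlyStopping(monitor='val_accuracy', patience=3)]  # early stopping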
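Indexing both the features and the labels with the same permutation is what keeps each sample paired with its label after shuffling. If the labels live in a pandas Series, converting them to a NumPy array first makes the indexing positional. A minimal NumPy sketch with made-up toy arrays (not the article's data):

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

# Toy data: row i is [i, i] and its label is i, so pairs are easy to check.
features = np.arange(6).repeat(2).reshape(6, 2)
labels = np.arange(6)

perm = rng.permutation(len(features))  # a random ordering of 0..5
shuffled_x = features[perm]
shuffled_y = labels[perm]

# Every shuffled row still matches its label.
still_aligned = bool((shuffled_x[:, 0] == shuffled_y).all())
print(still_aligned)
```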

model = Sequential([
    layers.Embedding(vocab_size, embedding_len),
    layers.Bidirectional(layers.LSTM(embedding_len)),  # LSTM can be swapped for GRU or SimpleRNN to train the other variants
    layers.Dense(64, activation='relu'),
    layers.Dense(1, activation='sigmoid')
])
# Loss options: binary_crossentropy (used here) or categorical_crossentropy
model.compile(loss='binary_crossentropy', optimizer='adam',
              metrics=['accuracy'])
history1 = model.fit(X_train, Y_train, epochs=3, batch_size=batch_size,
                     validation_data=(pad_X_dev, dev_data['label']),
                     callbacks=callbacks_list)
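The patience rule behind early stopping can be sketched in plain Python. This is a simplified model of the behaviour, written under the assumption that a higher monitored value is better; `stopped_epoch` is a hypothetical helper, not the Keras callback itself, and the validation scores are invented. It also suggests that with `patience=3` a run of only three epochs finishes before the rule can trigger.

```python
def stopped_epoch(val_scores, patience):
    """Return the 1-based epoch at which training would stop, or None."""
    best = float("-inf")
    wait = 0  # epochs since the last improvement
    for epoch, score in enumerate(val_scores, start=1):
        if score > best:
            best, wait = score, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return None


three_epochs = stopped_epoch([0.80, 0.79, 0.78], patience=3)
six_epochs = stopped_epoch([0.80, 0.79, 0.78, 0.77, 0.76, 0.75], patience=3)
print(three_epochs)  # None: the run ends before patience is exhausted
print(six_epochs)    # 4: three epochs without improvement after epoch 1
```

To let early stopping actually take effect, the number of epochs would need to be larger than the patience value.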

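6. Save the model, reload it, and predict

model_save_path = 'C:/Users/lwb/Desktop/dataSET/LSTM_0.8960.h5'
model.save(model_save_path)  # save the model
# Load the model
model_GRU = load_model(model_save_path)
# If training and prediction run in separate scripts, the fitted tokenizer must be saved as well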

'''
# Save the tokenizer
with open('./Model_vocab/tokenizer.pickle', 'wb') as handle:
    pickle.dump(t, handle, protocol=pickle.HIGHEST_PROTOCOL)
# Load the tokenizer
with open('./app/Lstm_Model/Model_vocab/tokenizer.pickle', 'rb') as handle:
    tokenizer = pickle.load(handle)
'''

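# Tokenize with jieba first, exactly as for the training data; the result is limited by vocab_size
predict_text = t.texts_to_sequences([jieba.lcut('照亮你的心,有多远的距离')])
pad_predict_text = pad_sequences(predict_text, maxlen=max_review_len, padding='post')
prob = model_GRU.predict(pad_predict_text)[0, 0]  # sigmoid output: predicted probability of label 1
if prob > 0.5:
    print('Predicted label:', 1)
else:
    print('Predicted label:', 0)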
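Saving and restoring the fitted vocabulary follows the usual `pickle` round trip. The sketch below uses a plain dictionary as a stand-in for the tokenizer and a temporary directory instead of the paths used in this section; both are assumptions made only so that the example runs without TensorFlow.

```python
import os
import pickle
import tempfile

# Stand-in for a fitted tokenizer: a word-to-index mapping (illustrative only).
vocab = {"好": 1, "电影": 2}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "tokenizer.pickle")

    # Save at training time.
    with open(path, "wb") as handle:
        pickle.dump(vocab, handle, protocol=pickle.HIGHEST_PROTOCOL)

    # Load at prediction time.
    with open(path, "rb") as handle:
        restored = pickle.load(handle)

print(restored == vocab)
```

The restored object is an equal but separate copy, so the prediction script sees the same word-to-index mapping that was used during training.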

Summary

Defining the loss function for multi-class classification in TensorFlow:

from tensorflow.keras import Sequential, layers

model = Sequential([
    layers.Embedding(vocab_size, embedding_len),
    layers.Bidirectional(layers.GRU(embedding_len)),
    layers.Dense(64, activation='relu'),
    layers.Dense(2, activation='softmax')  # use softmax for two or more classes; important: the labels must then be one-hot encoded as shown further down
])
# Loss options: binary_crossentropy or categorical_crossentropy
model.compile(loss='categorical_crossentropy', optimizer='adam',  # cross-entropy loss
              metrics=['accuracy'])
history1 = model.fit(X_train, one_hot_label, epochs=3,
                     validation_data=(pad_X_dev, to_categorical(dev_data['label'])))  # the dev labels must be one-hot encoded too

For binary classification there are generally two ways to define the model. With the approach above, the labels must be converted to the following format:

from tensorflow.keras.utils import to_categorical
one_hot_label = to_categorical(Y_train)
one_hot_label

array([[1., 0.],
       [1., 0.],
       [1., 0.],
       ...,
       [0., 1.],
       [0., 1.],
       [1., 0.]], dtype=float32)
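For integer class labels starting at 0, the same one-hot layout can be reproduced with plain NumPy by indexing an identity matrix. This is a sketch with made-up labels, shown only to make the format explicit:

```python
import numpy as np

labels = np.array([0, 0, 1, 0])  # toy labels, not the article's data
num_classes = 2

# Row k of the identity matrix is the one-hot vector for class k.
one_hot = np.eye(num_classes, dtype=np.float32)[labels]
print(one_hot)

# argmax along the class axis recovers the original integer labels.
recovered = one_hot.argmax(axis=1)
print(recovered)
```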

The other way: since binary classification essentially outputs a single binary value, you can use:

from tensorflow.keras import Sequential, layers

model = Sequential([
    layers.Embedding(vocab_size, embedding_len),
    layers.Bidirectional(layers.GRU(embedding_len)),
    layers.Dense(64, activation='relu'),
    layers.Dense(1, activation='sigmoid')  # a single output; sigmoid turns it into a probability
])
# Loss options: binary_crossentropy or categorical_crossentropy
model.compile(loss='binary_crossentropy', optimizer='adam',  # binary cross-entropy loss
              metrics=['accuracy'])
history1 = model.fit(X_train, Y_train, epochs=3,
                     validation_data=(pad_X_dev, dev_data['label']))
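The two formulations are closely related: a two-class softmax over the logits `[0, z]` assigns class 1 exactly the probability `sigmoid(z)`, and the corresponding cross-entropy losses coincide. A small standard-library check, with an arbitrary logit and label chosen purely for illustration:

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


z = 1.3  # arbitrary logit
p_sigmoid = sigmoid(z)
p_softmax = softmax([0.0, z])[1]

y = 1  # true label
binary_ce = -(y * math.log(p_sigmoid) + (1 - y) * math.log(1 - p_sigmoid))
categorical_ce = -math.log(softmax([0.0, z])[y])

print(math.isclose(p_sigmoid, p_softmax))
print(math.isclose(binary_ce, categorical_ce))
```

In practice the single-output sigmoid version is the more compact choice for two classes, while the softmax version extends unchanged to three or more classes.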