KCF认为目标追踪的一个主要任务是一个区分分类器,用于区分周围环境和目标。

论文里面涉及到循环矩阵、傅里叶变换、频域时域转换,不做公式推到的介绍。

KCF的大致流程:

1.在上一帧检测到的目标周围扩大2.5倍取图像;

2.对图像进行循环平移生成许多样本,每个样本对应的是为正的置信度,偏移的像素个数越少,置信度越高;

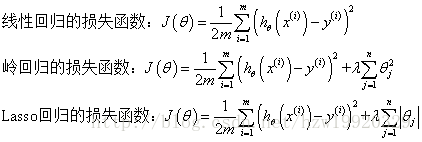

3.使用岭回归公式求取系数w,然后将下一幅图像带入求取置信度最高的位置。(岭回归就是带有正则化项的最小二乘的线性回归)

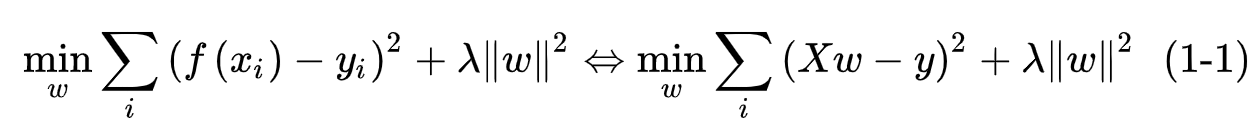

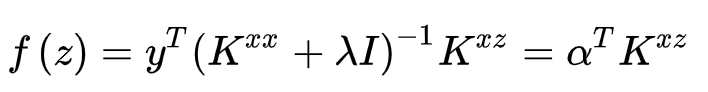

岭回归的公式如下:

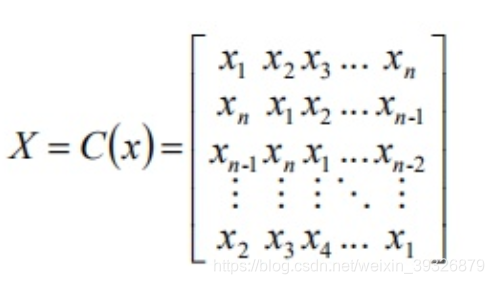

其中X 为特征矩阵,w 为权值,y 为样本标签/响应,其中每一项都采用了L2 范数的平方,即矩阵内所有元素的平方和。因此,该优化的关键在于求最优的 w,求解方法则是使用了最直接的拉格朗日乘子法:

我们假设当前的权重W和输出y都是一维向量,则矩阵的求导公式满足:

![[公式]](https://img-blog.csdnimg.cn/20200703113725923.png)

不过,由于后面要引入复频域空间,所以我们这里做一些微调:

![[公式]](https://img-blog.csdnimg.cn/20200703113740869.png)

其中,H 代表共轭转置,即在转置的同时将矩阵内所有元素变为其共轭形式,原因很简单:

![[公式]](https://img-blog.csdnimg.cn/20200703113803776.png)

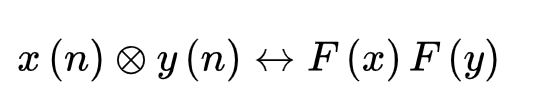

由以上w的求解公式可以看出,计算量较大的是循环矩阵和循环矩阵的转置相乘,也就是矩阵的卷积,(因为跟循环矩阵相乘我们可以看作是跟卷积核卷积)为了节省计算量,我们将时域卷积转到频域,变成相乘,大大减少计算量。

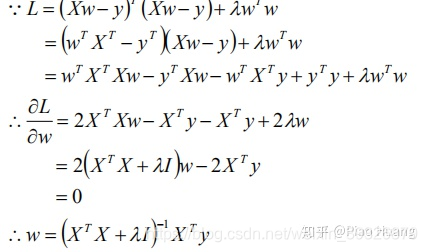

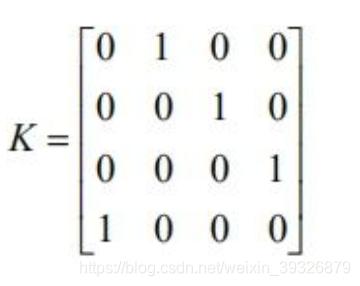

首先我们要求循环矩阵变到频域。

以一维矩阵为例:

矩阵的每一行相对上一行都向右移动了一位,这里举这个矩阵例子是有用意的,通过该矩阵的n阶形式,我们可以轻松的实现任意矩阵 X 的右移 [公式] 或者下移 [公式] ,如:

下面我们对循环矩阵进行傅里叶变换:

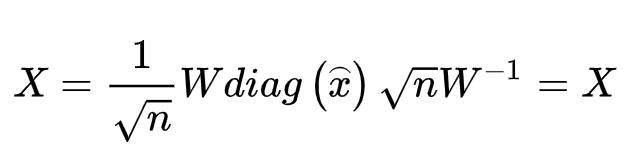

然后对循环矩阵求取特征值和特征向量,循环矩阵可以表达为:

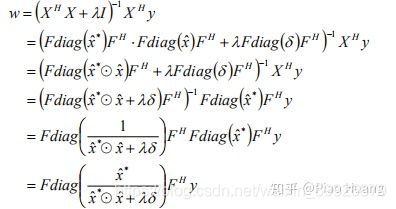

将傅里叶变换后的循环矩阵带入岭回归的求解公式中:

可以看出,原本的卷积变成了矩阵相乘:本质上是将时域卷积变成了频域相乘:

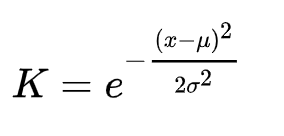

可以从之前岭回归的公式看出,岭回归本质上是一个单层的线性结构(类似于单层全卷积层),那我们如果要增加映射函数的非线性,就要引入核函数,文章选用的是高斯核函数:

KCF用在目标追踪中的场景有:

1.当前帧与下一帧的运动变化不大,且光照变化、模糊程度等差异不大

2.每次追踪之后都需要更新模板,求解出w,为下一帧求解出响应最高的位置做准备;

以下为KCF流程的代码解读:

/*

Tracker based on Kernelized Correlation Filter (KCF) [1] and Circulant Structure with Kernels (CSK) [2].

CSK is implemented by using raw gray level features, since it is a single-channel filter.

KCF is implemented by using HOG features (the default), since it extends CSK to multiple channels.

[1] J. F. Henriques, R. Caseiro, P. Martins, J. Batista,

"High-Speed Tracking with Kernelized Correlation Filters", TPAMI 2015.

[2] J. F. Henriques, R. Caseiro, P. Martins, J. Batista,

"Exploiting the Circulant Structure of Tracking-by-detection with Kernels", ECCV 2012.

Authors: Joao Faro, Christian Bailer, Joao F. Henriques

Contacts: joaopfaro@gmail.com, Christian.Bailer@dfki.de, henriques@isr.uc.pt

Institute of Systems and Robotics - University of Coimbra / Department Augmented Vision DFKI

Constructor parameters, all boolean:

hog: use HOG features (default), otherwise use raw pixels

fixed_window: fix window size (default), otherwise use ROI size (slower but more accurate)

multiscale: use multi-scale tracking (default; cannot be used with fixed_window = true)

Default values are set for all properties of the tracker depending on the above choices.

Their values can be customized further before calling init():

interp_factor: linear interpolation factor for adaptation

sigma: gaussian kernel bandwidth

lambda: regularization

cell_size: HOG cell size

padding: area surrounding the target, relative to its size

output_sigma_factor: bandwidth of gaussian target

template_size: template size in pixels, 0 to use ROI size

scale_step: scale step for multi-scale estimation, 1 to disable it

scale_weight: to downweight detection scores of other scales for added stability

For speed, the value (template_size/cell_size) should be a power of 2 or a product of small prime numbers.

Inputs to init():

image is the initial frame.

roi is a cv::Rect with the target positions in the initial frame

Inputs to update():

image is the current frame.

Outputs of update():

cv::Rect with target positions for the current frame

By downloading, copying, installing or using the software you agree to this license.

If you do not agree to this license, do not download, install,

copy or use the software.

License Agreement

For Open Source Computer Vision Library

(3-clause BSD License)

Redistribution and use in source and binary forms, with or without modification,

are permitted provided that the following conditions are met:

* Redistributions of source code must retain the above copyright notice,

this list of conditions and the following disclaimer.

* Redistributions in binary form must reproduce the above copyright notice,

this list of conditions and the following disclaimer in the documentation

and/or other materials provided with the distribution.

* Neither the names of the copyright holders nor the names of the contributors

may be used to endorse or promote products derived from this software

without specific prior written permission.

This software is provided by the copyright holders and contributors "as is" and

any express or implied warranties, including, but not limited to, the implied

warranties of merchantability and fitness for a particular purpose are disclaimed.

In no event shall copyright holders or contributors be liable for any direct,

indirect, incidental, special, exemplary, or consequential damages

(including, but not limited to, procurement of substitute goods or services;

loss of use, data, or profits; or business interruption) however caused

and on any theory of liability, whether in contract, strict liability,

or tort (including negligence or otherwise) arising in any way out of

the use of this software, even if advised of the possibility of such damage.

*/

#ifndef _KCFTRACKER_HEADERS

#include "kcftracker.hpp"

#include "ffttools.hpp"

#include "recttools.hpp"

#include "fhog.hpp"

#include "labdata.hpp"

#endif

// Constructor

RVKCFTracker::RVKCFTracker(bool hog, bool fixed_window, bool multiscale, bool lab)

{

// Parameters equal in all cases

lambda = 0.0001;

padding = 2.5;

//output_sigma_factor = 0.1;

output_sigma_factor = 0.125;

if (hog) {

// HOG

// VOT

interp_factor = 0.012;

sigma = 0.6;

// TPAMI

//interp_factor = 0.02;

//sigma = 0.5;

cell_size = 4;

_hogfeatures = true;

if (lab) {

interp_factor = 0.005;

sigma = 0.4;

//output_sigma_factor = 0.025;

output_sigma_factor = 0.1;

_labfeatures = true;

_labCentroids = cv::Mat(nClusters, 3, CV_32FC1, &data);

cell_sizeQ = cell_size*cell_size;

}

else{

_labfeatures = false;

}

}

else {

// RAW

interp_factor = 0.075;

sigma = 0.2;

cell_size = 1;

_hogfeatures = false;

if (lab) {

printf("Lab features are only used with HOG features.\n");

_labfeatures = false;

}

}

if (multiscale) {

// multiscale

template_size = 96;

//template_size = 100;

scale_step = 1.05;

scale_weight = 0.95;

if (!fixed_window) {

//printf("Multiscale does not support non-fixed window.\n");

fixed_window = true;

}

}

else if (fixed_window) {

// fit correction without multiscale

template_size = 96;

//template_size = 100;

scale_step = 1;

}

else {

template_size = 1;

scale_step = 1;

}

}

// Initialize tracker

void RVKCFTracker::init(const cv::Rect &roi, cv::Mat image)

{

_roi = roi;

assert(roi.width >= 0 && roi.height >= 0);

//roi扩充2.5倍,计算HOG特征

_tmpl = getFeatures(image, 1);

//根据HOG划分出cell_size的大小造高斯核

_prob = createGaussianPeak(size_patch[0], size_patch[1]);

_alphaf = cv::Mat(size_patch[0], size_patch[1], CV_32FC2, float(0));

//_num = cv::Mat(size_patch[0], size_patch[1], CV_32FC2, float(0));

//_den = cv::Mat(size_patch[0], size_patch[1], CV_32FC2, float(0));

train(_tmpl, 1.0); // train with initial frame

}

// Update position based on the new frame

cv::Rect RVKCFTracker::update(cv::Mat image)

{

if (_roi.x + _roi.width <= 0) _roi.x = -_roi.width + 1;

if (_roi.y + _roi.height <= 0) _roi.y = -_roi.height + 1;

if (_roi.x >= image.cols - 1) _roi.x = image.cols - 2;

if (_roi.y >= image.rows - 1) _roi.y = image.rows - 2;

float cx = _roi.x + _roi.width / 2.0f;

float cy = _roi.y + _roi.height / 2.0f;

float peak_value;

cv::Point2f res = detect(_tmpl, getFeatures(image, 0, 1.0f), peak_value);

if (scale_step != 1) {

// Test at a smaller _scale

float new_peak_value;

cv::Point2f new_res = detect(_tmpl, getFeatures(image, 0, 1.0f / scale_step), new_peak_value);

if (scale_weight * new_peak_value > peak_value) {

res = new_res;

peak_value = new_peak_value;

_scale /= scale_step;

_roi.width /= scale_step;

_roi.height /= scale_step;

}

// Test at a bigger _scale

new_res = detect(_tmpl, getFeatures(image, 0, scale_step), new_peak_value);

if (scale_weight * new_peak_value > peak_value) {

res = new_res;

peak_value = new_peak_value;

_scale *= scale_step;

_roi.width *= scale_step;

_roi.height *= scale_step;

}

}

// Adjust by cell size and _scale

_roi.x = cx - _roi.width / 2.0f + ((float) res.x * cell_size * _scale);

_roi.y = cy - _roi.height / 2.0f + ((float) res.y * cell_size * _scale);

if (_roi.x >= image.cols - 1) _roi.x = image.cols - 1;

if (_roi.y >= image.rows - 1) _roi.y = image.rows - 1;

if (_roi.x + _roi.width <= 0) _roi.x = -_roi.width + 2;

if (_roi.y + _roi.height <= 0) _roi.y = -_roi.height + 2;

assert(_roi.width >= 0 && _roi.height >= 0);

cv::Mat x = getFeatures(image, 0);

train(x, interp_factor);

return _roi;

}

// Detect object in the current frame.

cv::Point2f RVKCFTracker::detect(cv::Mat z, cv::Mat x, float &peak_value)

{

using namespace FFTTools;

cv::Mat k = gaussianCorrelation(x, z);

cv::Mat res = (real(fftd(complexMultiplication(_alphaf, fftd(k)), true)));

//minMaxLoc only accepts doubles for the peak, and integer points for the coordinates

cv::Point2i pi;

double pv;

cv::minMaxLoc(res, NULL, &pv, NULL, &pi);

peak_value = (float) pv;

//subpixel peak estimation, coordinates will be non-integer

cv::Point2f p((float)pi.x, (float)pi.y);

if (pi.x > 0 && pi.x < res.cols-1) {

p.x += subPixelPeak(res.at<float>(pi.y, pi.x-1), peak_value, res.at<float>(pi.y, pi.x+1));

}

if (pi.y > 0 && pi.y < res.rows-1) {

p.y += subPixelPeak(res.at<float>(pi.y-1, pi.x), peak_value, res.at<float>(pi.y+1, pi.x));

}

p.x -= (res.cols) / 2;

p.y -= (res.rows) / 2;

return p;

}

// train tracker with a single image

void RVKCFTracker::train(cv::Mat x, float train_interp_factor)

{

using namespace FFTTools;

//gaussianCorrelation时域卷积,频域相乘

//

cv::Mat k = gaussianCorrelation(x, x);

cv::Mat alphaf = complexDivision(_prob, (fftd(k) + lambda));

_tmpl = (1 - train_interp_factor) * _tmpl + (train_interp_factor) * x;

_alphaf = (1 - train_interp_factor) * _alphaf + (train_interp_factor) * alphaf;

}

// Evaluates a Gaussian kernel with bandwidth SIGMA for all relative shifts between input images X and Y, which must both be MxN. They must also be periodic (ie., pre-processed with a cosine window).

cv::Mat RVKCFTracker::gaussianCorrelation(cv::Mat x1, cv::Mat x2)

{

using namespace FFTTools;

cv::Mat c = cv::Mat( cv::Size(size_patch[1], size_patch[0]), CV_32F, cv::Scalar(0) );

// HOG features

if (_hogfeatures) {

cv::Mat caux;

cv::Mat x1aux;

cv::Mat x2aux;

for (int i = 0; i < size_patch[2]; i++) {

x1aux = x1.row(i); // Procedure do deal with cv::Mat multichannel bug

x1aux = x1aux.reshape(1, size_patch[0]);

x2aux = x2.row(i).reshape(1, size_patch[0]);

cv::mulSpectrums(fftd(x1aux), fftd(x2aux), caux, 0, true);

caux = fftd(caux, true);

rearrange(caux);

caux.convertTo(caux,CV_32F);

c = c + real(caux);

}

}

// Gray features

else {

cv::mulSpectrums(fftd(x1), fftd(x2), c, 0, true);

c = fftd(c, true);

rearrange(c);

c = real(c);

}

cv::Mat d;

cv::max(( (cv::sum(x1.mul(x1))[0] + cv::sum(x2.mul(x2))[0])- 2. * c) / (size_patch[0]*size_patch[1]*size_patch[2]) , 0, d);

cv::Mat k;

cv::exp((-d / (sigma * sigma)), k);

return k;

}

// Create Gaussian Peak. Function called only in the first frame.

//根据最终的HOG特征的大小生成高斯响应

// y(i,j) = exp(output_sigma × [ (i-w/2+1)^2 + (j-h/2+1)^2])

cv::Mat RVKCFTracker::createGaussianPeak(int sizey, int sizex)

{

cv::Mat_<float> res(sizey, sizex);

int syh = (sizey) / 2;

int sxh = (sizex) / 2;

float output_sigma = std::sqrt((float) sizex * sizey) / padding * output_sigma_factor;

float mult = -0.5 / (output_sigma * output_sigma);

for (int i = 0; i < sizey; i++)

for (int j = 0; j < sizex; j++)

{

int ih = i - syh;

int jh = j - sxh;

res(i, j) = std::exp(mult * (float) (ih * ih + jh * jh));

}

return FFTTools::fftd(res);

}

// Obtain sub-window from image, with replication-padding and extract features

cv::Mat RVKCFTracker::getFeatures(const cv::Mat & image, bool inithann, float scale_adjust)

{

cv::Rect extracted_roi;

//cx,cy为中心点的位置

float cx = _roi.x + _roi.width / 2;

float cy = _roi.y + _roi.height / 2;

if (inithann) {

//将长、宽在该roi区域内扩大padding倍数(默认值2.5)

int padded_w = _roi.width * padding;

int padded_h = _roi.height * padding;

//如果给定了模板大小,就用模板大小替换roi大小,这里后面应该用不到

if (template_size > 1) {

// Fit largest dimension to the given template size

if (padded_w >= padded_h) //fit to width

_scale = padded_w / (float) template_size;

else

_scale = padded_h / (float) template_size;

_tmpl_sz.width = padded_w / _scale;

_tmpl_sz.height = padded_h / _scale;

}

else {

//No template size given, use ROI size

_tmpl_sz.width = padded_w;

_tmpl_sz.height = padded_h;

_scale = 1;

// original code from paper:

/*if (sqrt(padded_w * padded_h) >= 100) {

//Normal size

_tmpl_sz.width = padded_w;

_tmpl_sz.height = padded_h;

_scale = 1;

}

else {

//ROI is too big, track at half size

_tmpl_sz.width = padded_w / 2;

_tmpl_sz.height = padded_h / 2;

_scale = 2;

}*/

}

//如果用hog特征的话,

if (_hogfeatures) {

// Round to cell size and also make it even

//让template的大小是hog_cell_size的整数且偶数倍

_tmpl_sz.width = ( ( (int)(_tmpl_sz.width / (2 * cell_size)) ) * 2 * cell_size ) + cell_size*2;

_tmpl_sz.height = ( ( (int)(_tmpl_sz.height / (2 * cell_size)) ) * 2 * cell_size ) + cell_size*2;

}

else {

//Make number of pixels even (helps with some logic involving half-dimensions)

_tmpl_sz.width = (_tmpl_sz.width / 2) * 2;

_tmpl_sz.height = (_tmpl_sz.height / 2) * 2;

}

}

extracted_roi.width = scale_adjust * _scale * _tmpl_sz.width;

extracted_roi.height = scale_adjust * _scale * _tmpl_sz.height;

// center roi with new size

extracted_roi.x = cx - extracted_roi.width / 2;

extracted_roi.y = cy - extracted_roi.height / 2;

cv::Mat FeaturesMap;

//把roi提取出来

cv::Mat z = RectTools::subwindow(image, extracted_roi, cv::BORDER_REPLICATE);

//将扩大2.5倍截取的roi最后缩放到固定的template_size=96的大小

if (z.cols != _tmpl_sz.width || z.rows != _tmpl_sz.height) {

cv::resize(z, z, _tmpl_sz);

}

//这里计算HOG特征,具体过程不表,计算的结果放到FeaturesMap中;

// HOG features

if (_hogfeatures) {

IplImage z_ipl = z;

CvLSVMFeatureMapCaskade *map;

getFeatureMaps(&z_ipl, cell_size, &map);

normalizeAndTruncate(map,0.2f);

PCAFeatureMaps(map);

size_patch[0] = map->sizeY;

size_patch[1] = map->sizeX;

size_patch[2] = map->numFeatures;

FeaturesMap = cv::Mat(cv::Size(map->numFeatures,map->sizeX*map->sizeY), CV_32F, map->map); // Procedure do deal with cv::Mat multichannel bug

FeaturesMap = FeaturesMap.t();

freeFeatureMapObject(&map);

// Lab features

if (_labfeatures) {

cv::Mat imgLab;

cvtColor(z, imgLab, CV_BGR2Lab);

unsigned char *input = (unsigned char*)(imgLab.data);

// Sparse output vector

cv::Mat outputLab = cv::Mat(_labCentroids.rows, size_patch[0]*size_patch[1], CV_32F, float(0));

int cntCell = 0;

// Iterate through each cell

for (int cY = cell_size; cY < z.rows-cell_size; cY+=cell_size){

for (int cX = cell_size; cX < z.cols-cell_size; cX+=cell_size){

// Iterate through each pixel of cell (cX,cY)

for(int y = cY; y < cY+cell_size; ++y){

for(int x = cX; x < cX+cell_size; ++x){

// Lab components for each pixel

float l = (float)input[(z.cols * y + x) * 3];

float a = (float)input[(z.cols * y + x) * 3 + 1];

float b = (float)input[(z.cols * y + x) * 3 + 2];

// Iterate trough each centroid

float minDist = FLT_MAX;

int minIdx = 0;

float *inputCentroid = (float*)(_labCentroids.data);

for(int k = 0; k < _labCentroids.rows; ++k){

float dist = ( (l - inputCentroid[3*k]) * (l - inputCentroid[3*k]) )

+ ( (a - inputCentroid[3*k+1]) * (a - inputCentroid[3*k+1]) )

+ ( (b - inputCentroid[3*k+2]) * (b - inputCentroid[3*k+2]) );

if(dist < minDist){

minDist = dist;

minIdx = k;

}

}

// Store result at output

outputLab.at<float>(minIdx, cntCell) += 1.0 / cell_sizeQ;

//((float*) outputLab.data)[minIdx * (size_patch[0]*size_patch[1]) + cntCell] += 1.0 / cell_sizeQ;

}

}

cntCell++;

}

}

// Update size_patch[2] and add features to FeaturesMap

size_patch[2] += _labCentroids.rows;

FeaturesMap.push_back(outputLab);

}

}

else {

FeaturesMap = RectTools::getGrayImage(z);

FeaturesMap -= (float) 0.5; // In Paper;

size_patch[0] = z.rows;

size_patch[1] = z.cols;

size_patch[2] = 1;

}

if (inithann) {

createHanningMats();

}

//用hanming窗乘以特征图

FeaturesMap = hann.mul(FeaturesMap);

return FeaturesMap;

}

// Initialize Hanning window. Function called only in the first frame.

//初始化hamming窗口;是为了对不同样本赋予不同的权重

/*

目的是采样时为不同的样本分配不同的权重,

kcf算法实际是采样了一个样本作为base样本,

别的样本都是通过对base样本的平移得到,虚拟出来的样本。

一般对样本都采用非正即负的方法来标记训练样本,

即正样本标签为1,负样本为0。

这样标记样本的方法不能很好的反应每个负样本的权重,

即对离中心目标远的样本和离中心目标近的样本等同看待。

但是在实际中我们需要对不同的负样本不同的看待,

就有了根据样本中心离目标的远近分别赋值 [0,1]范数的数。

离目标越近,值越趋向于1,离目标越远,

值越趋向于0.

kcf就是根据汉宁窗来对不同样本分配不同的权重

*/

void RVKCFTracker::createHanningMats()

{

cv::Mat hann1t = cv::Mat(cv::Size(size_patch[1],1), CV_32F, cv::Scalar(0));

cv::Mat hann2t = cv::Mat(cv::Size(1,size_patch[0]), CV_32F, cv::Scalar(0));

for (int i = 0; i < hann1t.cols; i++)

hann1t.at<float > (0, i) = 0.5 * (1 - std::cos(2 * 3.14159265358979323846 * i / (hann1t.cols - 1)));

for (int i = 0; i < hann2t.rows; i++)

hann2t.at<float > (i, 0) = 0.5 * (1 - std::cos(2 * 3.14159265358979323846 * i / (hann2t.rows - 1)));

cv::Mat hann2d = hann2t * hann1t;

// HOG features

if (_hogfeatures) {

cv::Mat hann1d = hann2d.reshape(1,1); // Procedure do deal with cv::Mat multichannel bug

hann = cv::Mat(cv::Size(size_patch[0]*size_patch[1], size_patch[2]), CV_32F, cv::Scalar(0));

for (int i = 0; i < size_patch[2]; i++) {

for (int j = 0; j<size_patch[0]*size_patch[1]; j++) {

hann.at<float>(i,j) = hann1d.at<float>(0,j);

}

}

}

// Gray features

else {

hann = hann2d;

}

}

// Calculate sub-pixel peak for one dimension

float RVKCFTracker::subPixelPeak(float left, float center, float right)

{

float divisor = 2 * center - right - left;

if (divisor == 0)

return 0;

return 0.5 * (right - left) / divisor;

}