
Basic Principles

How PyTorch Does It

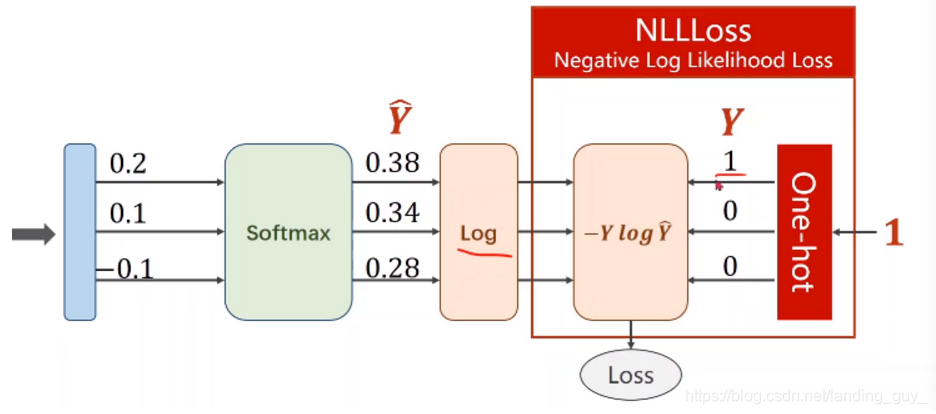

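- Before looking at cross entropy, first look at NLLLoss, i.e. Negative Log Likelihood Loss.

- Its input is the log of the softmax output (log-probabilities). Mathematically, the target y can be written either as a one-hot vector or as plain class indices such as [2, 3, 4]. In PyTorch, however, nn.NLLLoss expects class indices (a LongTensor of shape (batch,)), while nn.CrossEntropyLoss also accepts class probabilities such as one-hot float targets (PyTorch 1.10 and later).

- CrossEntropyLoss merges the Softmax–Log–NLLLoss steps above into a single step (internally it applies log-softmax and then NLLLoss). In other words, the raw output of the final linear layer (the logits) can be passed straight to CrossEntropyLoss together with the labels. Do not apply softmax yourself first.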
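The equivalence described above can be checked numerically. This is a minimal sketch on a made-up batch of 3 samples and 5 classes (random, purely illustrative values). It computes four losses that should all agree: log_softmax followed by NLLLoss, a hand-written negative log-likelihood, CrossEntropyLoss on the raw logits, and CrossEntropyLoss with one-hot targets. The one-hot case assumes PyTorch 1.10 or later.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Hypothetical toy batch: 3 samples, 5 classes, raw linear-layer outputs (logits)
logits = torch.randn(3, 5)
labels = torch.tensor([2, 3, 4])  # plain class indices, as in the text

# 1) Softmax -> Log -> NLLLoss, done step by step
log_probs = F.log_softmax(logits, dim=1)  # log(softmax(x)), computed stably
nll = nn.NLLLoss()(log_probs, labels)

# 2) Same loss by hand: mean of -log p(true class) over the batch
manual = -log_probs[torch.arange(len(labels)), labels].mean()

# 3) CrossEntropyLoss applied directly to the logits
ce = nn.CrossEntropyLoss()(logits, labels)

# 4) One-hot (probability) targets; accepted by CrossEntropyLoss in PyTorch >= 1.10
one_hot = F.one_hot(labels, num_classes=5).float()
ce_one_hot = nn.CrossEntropyLoss()(logits, one_hot)

print(nll.item(), manual.item(), ce.item(), ce_one_hot.item())  # all four agree
```

F.log_softmax is used instead of torch.log(torch.softmax(...)) because it is more numerically stable. The four values match up to floating-point rounding.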

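Points to note in the program below:

1. In the accuracy computation, note max versus argmax. scores.max(1) returns two things: first the maximum values, then their indices. argmax returns only the indices. The dim argument selects the dimension being reduced: dim=0 reduces down each column (one result per column), and dim=1 reduces across each row (one result per row). After max(1) the result has shape (batch,), i.e. one prediction per row (sample).

2. The idiom (pred==labels).sum() is worth learning. The comparison produces a boolean tensor, and sum() counts the correct predictions.

3. How a DataLoader is iterated: each dataset item is an (image, label) tuple, and each batch comes back as an [images, labels] pair that can be unpacked directly in the for loop.

4. In a CNN, as the feature maps get smaller, the number of channels is usually increased.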
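Points 1 to 3 can be tried on a tiny hand-made example. This is a minimal sketch. The scores, labels and batch size are made up for illustration, and TensorDataset stands in for MNIST so nothing needs to be downloaded.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Hypothetical scores for a batch of 4 samples over 3 classes
scores = torch.tensor([[0.1, 2.0, 0.3],
                       [1.5, 0.2, 0.1],
                       [0.0, 0.1, 3.0],
                       [0.4, 0.9, 0.2]])
labels = torch.tensor([1, 0, 2, 2])

# max(dim) returns (values, indices); dim=1 reduces within each row (over the classes)
values, pred = scores.max(1)
print(values)  # largest score in each row, shape (batch,)
print(pred)    # tensor([1, 0, 2, 1]), shape (batch,)

# argmax returns only the indices, so it gives the same predictions
assert torch.equal(pred, scores.argmax(dim=1))

# dim=0 reduces within each column instead: one index per class, shape (3,)
print(scores.max(0).indices)  # tensor([1, 0, 2])

# (pred == labels) is a boolean tensor; .sum() counts the True entries
num_correct = (pred == labels).sum().item()
print(num_correct)  # 3

# Iterating a DataLoader: each batch is an [inputs, targets] pair that unpacks directly
loader = DataLoader(TensorDataset(scores, labels), batch_size=2, shuffle=False)
batches = []
for x, y in loader:
    print(x.shape, y)  # torch.Size([2, 3]) and the 2 labels of this batch
    batches.append((x, y))
```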

```python
import torch
import torchvision
import torch.nn as nn

# Data-loading and training settings
batch_size = 128
num_workers = 2
learning_rate = 0.001
num_epoches = 200

train_data = torchvision.datasets.MNIST(root='./dataset/mnist', train=True, download=True,
                                        transform=torchvision.transforms.ToTensor())
train_loader = torch.utils.data.DataLoader(train_data, batch_size=batch_size, shuffle=True,
                                           num_workers=num_workers)
test_data = torchvision.datasets.MNIST(root='./dataset/mnist', train=False, download=True,
                                       transform=torchvision.transforms.ToTensor())
test_loader = torch.utils.data.DataLoader(test_data, batch_size=batch_size, shuffle=False,
                                          num_workers=num_workers)


class NN(nn.Module):
    def __init__(self, input_dim, output_dim):
        super(NN, self).__init__()
        self.linear = nn.Sequential(
            nn.Linear(input_dim, 50),
            nn.ReLU(),
            nn.Linear(50, output_dim)  # raw logits: CrossEntropyLoss applies log-softmax itself
        )

    def forward(self, x):
        x = x.reshape(x.shape[0], -1)  # flatten (batch, 1, 28, 28) -> (batch, 784)
        x = self.linear(x)
        return x


Linear_model = NN(784, 10)
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(Linear_model.parameters(), lr=learning_rate)


def accuracy(epoch, loader, model):  # overall accuracy on the given loader
    num_correct = 0
    num_samples = 0
    model.eval()
    with torch.no_grad():  # no gradients are needed for evaluation
        for x, y in loader:
            scores = model(x)
            _, pred = scores.max(1)  # max returns (values, indices); argmax returns only indices
            num_correct += (pred == y).sum().item()
            num_samples += pred.shape[0]
    correct = num_correct / num_samples * 100
    print('epoch: {}, accuracy: {:.2f}%'.format(epoch, correct))
    model.train()


if __name__ == '__main__':  # required when num_workers > 0 on Windows/macOS
    for epoch in range(num_epoches):
        for index, (image, labels) in enumerate(train_loader):
            y_pre = Linear_model(image)
            loss = criterion(y_pre, labels)

            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        accuracy(epoch, test_loader, Linear_model)  # evaluate once per epoch
```
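Point 4 in the list above concerns CNNs, while the script uses a fully connected network. The following is a minimal sketch of a hypothetical CNN class (not from the original post) for the same 1×28×28 MNIST input. Each max-pooling layer halves the height and width, while the channel count grows from 1 to 16 to 32. The channel counts and kernel sizes are illustrative assumptions.

```python
import torch
import torch.nn as nn


class CNN(nn.Module):
    """Hypothetical small CNN for 1x28x28 MNIST images (illustrative only)."""

    def __init__(self, in_channels=1, num_classes=10):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(in_channels, 16, kernel_size=3, padding=1),  # 1x28x28 -> 16x28x28
            nn.ReLU(),
            nn.MaxPool2d(2),                                       # 16x28x28 -> 16x14x14
            nn.Conv2d(16, 32, kernel_size=3, padding=1),           # 16x14x14 -> 32x14x14
            nn.ReLU(),
            nn.MaxPool2d(2),                                       # 32x14x14 -> 32x7x7
        )
        # Outputs raw logits, so it can be trained with nn.CrossEntropyLoss as above
        self.classifier = nn.Linear(32 * 7 * 7, num_classes)

    def forward(self, x):
        x = self.features(x)
        x = x.reshape(x.shape[0], -1)  # flatten everything except the batch dimension
        return self.classifier(x)


model = CNN()
dummy = torch.randn(8, 1, 28, 28)  # fake batch of 8 single-channel images
out = model(dummy)
print(out.shape)  # torch.Size([8, 10])
```

Because it takes the (batch, 1, 28, 28) images directly and returns 10 logits per sample, CNN() could replace NN(784, 10) in the training loop without other changes.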