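TensorFlow represents a computation as a data flow graph: the nodes are operations, and the data flowing along the edges takes the form of tensors.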

Workflow:

1. Build the graph
2. Feed in the data
3. Execute the graph in the direction of its edges
4. Output the results
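The four steps can be illustrated without TensorFlow. The plain-Python sketch below builds a graph whose edges point from each input to the operation that consumes it. It then feeds values into the placeholder nodes, evaluates every node after its inputs (following the edge direction) and reads the output. The `Node` class and `run` function are hypothetical illustrations, not TensorFlow's API, and the recursive evaluation assumes the graph has no cycles.

```python
from typing import Callable, Dict, List, Optional


class Node:
    """One vertex of a data flow graph: an operation plus its input nodes."""

    def __init__(self, name: str, op: Optional[Callable[..., float]] = None,
                 inputs: Optional[List["Node"]] = None):
        self.name = name
        self.op = op                  # None marks a placeholder fed at run time
        self.inputs = inputs or []    # edges point from these inputs to this node


def run(target: Node, feed: Dict[Node, float]) -> float:
    """Evaluate `target` by first evaluating every node it depends on."""
    cache: Dict[Node, float] = {}

    def evaluate(node: Node) -> float:
        if node in cache:
            return cache[node]
        if node.op is None:           # placeholder: the value must come from the feed
            value = feed[node]
        else:                         # operation: evaluate inputs first, then apply
            value = node.op(*(evaluate(n) for n in node.inputs))
        cache[node] = value
        return value

    return evaluate(target)


# 1. Build the graph: out = (a + b) * b
a = Node("a")
b = Node("b")
total = Node("add", lambda x, y: x + y, [a, b])
out = Node("mul", lambda x, y: x * y, [total, b])

# 2-4. Feed the data, execute along the edges, output the result
print(run(out, {a: 2.0, b: 3.0}))  # (2 + 3) * 3 = 15.0
```

Nothing is computed when the nodes are created. Work happens only inside `run`, which mirrors how the TensorFlow 1.x examples in this note define OPs first and execute them later through a session.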

Different problems call for differently structured graphs.

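Depending on whether they contain a cycle, graphs are divided into directed acyclic graphs (DAGs) and directed cyclic graphs.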
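A topological sort can tell you whether a graph is acyclic. In a DAG you can order the nodes so that every node comes after all of its inputs, and in a cyclic graph you cannot. Below is a plain-Python sketch using Kahn's algorithm. The function name and the dict-of-lists representation are assumptions for illustration, not TensorFlow internals.

```python
from collections import deque
from typing import Dict, List, Optional


def topological_order(edges: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return an execution order for the graph, or None if it contains a cycle.

    `edges` maps each node to the nodes it sends data to (Kahn's algorithm).
    """
    nodes = set(edges)
    for targets in edges.values():
        nodes.update(targets)
    in_degree = {n: 0 for n in nodes}
    for targets in edges.values():
        for t in targets:
            in_degree[t] += 1

    # Start from nodes with no inputs; sorted() only makes the order deterministic
    ready = deque(sorted(n for n in nodes if in_degree[n] == 0))
    order: List[str] = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for t in edges.get(node, []):
            in_degree[t] -= 1
            if in_degree[t] == 0:
                ready.append(t)

    # Nodes left unvisited sit on a cycle, so no complete order exists
    return order if len(order) == len(nodes) else None


acyclic = {"a": ["add"], "b": ["add", "mul"], "add": ["mul"]}
cyclic = {"i": ["add"], "add": ["i"]}  # i feeds add, and add writes back to i
print(topological_order(acyclic))  # ['a', 'b', 'add', 'mul']
print(topological_order(cyclic))   # None
```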

First install it: pip install tensorflow==<version>

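Each TensorFlow release supports only certain Python versions, so pick a release that matches your Python. The code in this note uses the TensorFlow 1.x API (tf.Session, tf.placeholder, tf.global_variables_initializer). Under TensorFlow 2.x these are available as tf.compat.v1, with eager execution disabled via tf.compat.v1.disable_eager_execution().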

Creating a graph:

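import tensorflow as tf

# Create a data flow graph
graph = tf.Graph()
with graph.as_default():
    # Add concrete operations (OPs) to the graph
    var = tf.Variable(42, name='foo')         # create a variable
    init = tf.global_variables_initializer()  # variable-initialization OP
    assign_op = var.assign(13)                # assignment OP

with tf.Session(graph=graph) as sess:  # open a session on this specific graph
    sess.run(init)       # if the graph has variables, always run the initializer first
    sess.run(assign_op)  # run the assignment OP
    print(sess.run(var))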

This prints 13.

Addition with assignment, then multiplication:

a = tf.Variable(0, name='a') # 常量

b = tf.constant(1) # 变量

# 矩阵相加

value = tf.add(a, b)

# 结果赋值给a

updataop = tf.assign(a, value)

# 初始化变量

init = tf.global_variables_initializer()

with tf.Session() as sess:

sess.run(init)

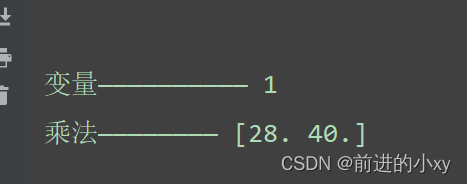

print("变量——————————", sess.run(updataop))

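# Define placeholders, filled in through feed_dict at run time
input1 = tf.placeholder(tf.float32)
input2 = tf.placeholder(tf.float32)
# Element-wise multiplication (tf.multiply; matrix multiplication would be tf.matmul)
out_value = tf.multiply(input1, input2)

with tf.Session() as sess:
    print("multiply:", sess.run(out_value, feed_dict={input1: [4, 5], input2: [7, 8]}))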

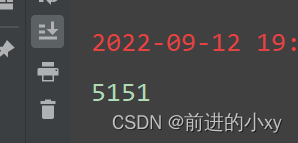
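Feeding [4, 5] and [7, 8] to tf.multiply gives the element-wise products [28, 40], not a matrix product. The plain-Python sketch below contrasts the two operations on the same numbers. Both helper functions are hypothetical and written only for illustration.

```python
from typing import List


def elementwise_multiply(u: List[float], v: List[float]) -> List[float]:
    """Multiply matching positions, as tf.multiply does for equal-shaped inputs."""
    return [x * y for x, y in zip(u, v)]


def matmul(m: List[List[float]], n: List[List[float]]) -> List[List[float]]:
    """Row-by-column matrix product, the operation tf.matmul performs."""
    return [[sum(m[i][k] * n[k][j] for k in range(len(n)))
             for j in range(len(n[0]))]
            for i in range(len(m))]


print(elementwise_multiply([4, 5], [7, 8]))  # [28, 40]
print(matmul([[4, 5]], [[7], [8]]))          # [[68]], i.e. 4*7 + 5*8
```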

Summing 1 to 100

Approach:

1. int i = 0
2. int sum = 0
3. compute i + 1 (add OP)
4. i = i + 1 (assign OP)
5. compute sum + i (add OP)
6. sum = sum + i (assign OP)
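Before building the graph, the six steps can be checked in plain Python. This sketch follows them literally and compares the result with the closed form n(n + 1)/2. The function name is hypothetical.

```python
def sum_one_to(n: int) -> int:
    """Follow the six steps literally: increment i, then add it to sum."""
    i = 0            # step 1
    total = 0        # step 2 (named total to avoid shadowing the built-in sum)
    while i < n:
        i = i + 1            # steps 3-4: compute i + 1 and assign it to i
        total = total + i    # steps 5-6: compute sum + i and assign it to sum
    return total


print(sum_one_to(100))   # 5050
print(100 * 101 // 2)    # 5050, the closed form n(n + 1) / 2
```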

import tensorflow as tf

# Create variables
i_var = tf.Variable(0, name='i')
sum_var = tf.Variable(0, name='sum')
# Create a constant
b = tf.constant(1)
# Addition OPs
update_op = tf.add(i_var, b)          # i + 1
sum_op = tf.add(sum_var, update_op)   # sum + (i + 1)
# Variable initializer
init = tf.global_variables_initializer()
# Assignment OPs
assign_up = tf.assign(i_var, update_op)   # i = i + 1
assign_sum = tf.assign(sum_var, sum_op)   # sum = sum + (i + 1)
# Create a tensor (not used below)
# tensor = tf.ones((2, 2), dtype=tf.float32)

with tf.Session() as sess:
    sess.run(init)
    for _ in range(100):
        sess.run(assign_sum)  # sum = sum + (i + 1), using the current i
        sess.run(assign_up)   # i = i + 1
    # Read sum_var directly: running assign_sum again would add another 101
    print(sess.run(sum_var))  # 5050

Fitting the parameters w and b of the equation $y = \frac{1}{1 + e^{-(wx + b)}}$:
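For each fed sample (x, y), the TensorFlow code builds the prediction and cost below, with N = num_sample. Using σ'(z) = σ(z)(1 - σ(z)), the gradients from which Adam builds its updates work out as:

$$
\hat{y} = \sigma(wx + b) = \frac{1}{1 + e^{-(wx + b)}}, \qquad
J(w, b) = \frac{1}{N}\,(\hat{y} - y)^2
$$

$$
\frac{\partial J}{\partial w} = \frac{2}{N}\,(\hat{y} - y)\,\hat{y}\,(1 - \hat{y})\,x, \qquad
\frac{\partial J}{\partial b} = \frac{2}{N}\,(\hat{y} - y)\,\hat{y}\,(1 - \hat{y})
$$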

# 创建占位符 x输入的数据

x = tf.placeholder(np.float32)

y = tf.placeholder(np.float32)

# 创建变量

w = tf.Variable(tf.random_normal([1],name='weight'))

b = tf.Variable(tf.random_normal([1],name='bias'))

# OP准备

y_predict = tf.sigmoid(tf.add(tf.multiply(x,w),b))

# 构建损失函数

num_sample = 400 # 数据样本

# 误差函数 求误差 |y-y1| (y-Y1)^2

cost = tf.reduce_sum(tf.pow(y_predict-y,2.0))/num_sample

# 梯度下降算法 误差最小

Optimizer = tf.train.AdamOptimizer().minimize(cost)

# 创建数据 测试

xs = np.linspace(-5,5,num_sample)

ys = np.sign(xs) # 保证数据在-1~1之间

init = tf.global_variables_initializer()

num_train = 500

cost_pre = 0

with tf.Session() as sess:

sess.run(init)

# 解方程

for i in range(num_train):

# 从xs ys取出准备数据,喂Optimizer,存到占位符

for xa,ya in zip(xs,ys):

sess.run(Optimizer,feed_dict={x:xa,y:ya})

train_cost = sess.run(cost,feed_dict={x:xa,y:ya})

if np.abs(train_cost-cost_pre)<1e-5:

break

cost_pre = train_cost

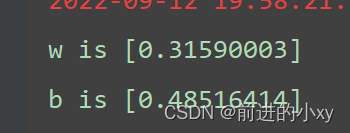

print('w is',sess.run(w,feed_dict={x:xa,y:ya}))

print('b is', sess.run(b, feed_dict={x: xa, y: ya}))

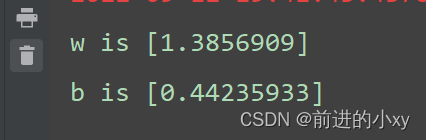

Result:

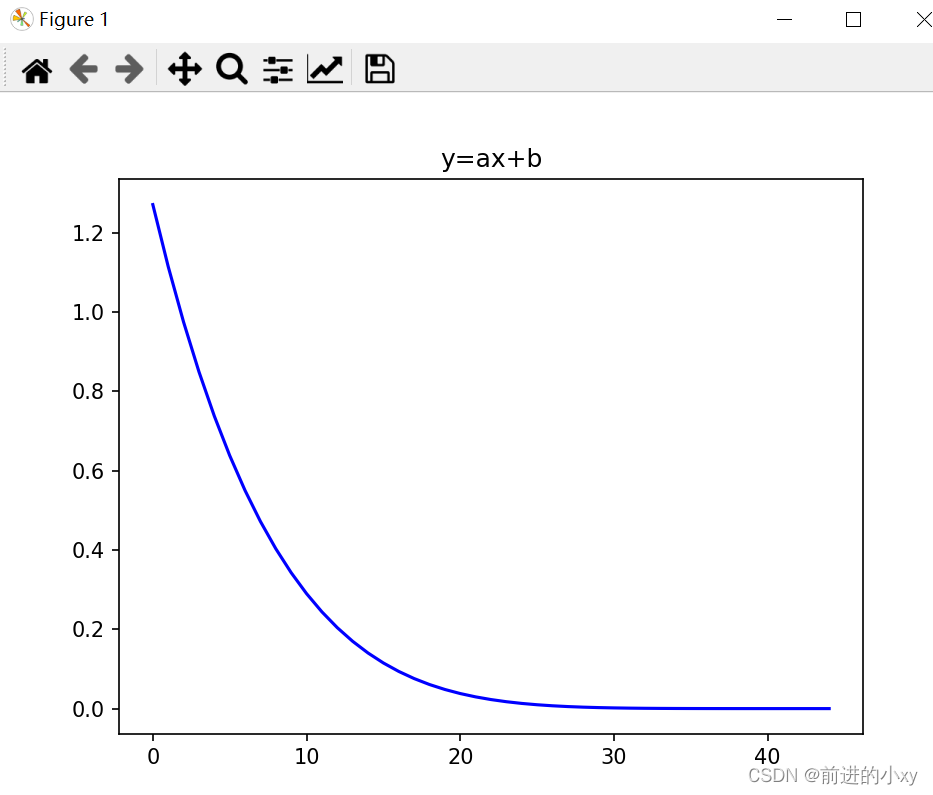
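To see what the training loop does without TensorFlow, here is a plain-Python sketch of the same model and data. It swaps Adam for plain per-sample gradient descent, and it assumes a start at w = b = 0, a learning rate of 0.1, 20 epochs and no 1/N factor in the per-sample step. These choices are for illustration only, so the numbers will not match the screenshots. Because the sigmoid never goes below 0, every sample labelled -1 costs at least 1, and the mean cost cannot drop below 0.5.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    """Numerically stable logistic function 1 / (1 + e^-z)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def mean_cost(w: float, b: float, xs: List[float], ys: List[float]) -> float:
    """Mean squared error of sigmoid(w*x + b) against the labels."""
    return sum((sigmoid(w * x + b) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(xs: List[float], ys: List[float],
          epochs: int = 20, lr: float = 0.1) -> Tuple[float, float]:
    """Per-sample gradient descent (plain SGD, not Adam) from w = b = 0."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, y in zip(xs, ys):
            p = sigmoid(w * x + b)
            # d(p - y)^2 / dz, using sigmoid'(z) = p * (1 - p)
            grad = 2.0 * (p - y) * p * (1.0 - p)
            w -= lr * grad * x
            b -= lr * grad
    return w, b


# Same data as the TensorFlow version: 400 points in [-5, 5], labels sign(x)
n = 400
xs = [-5.0 + 10.0 * i / (n - 1) for i in range(n)]
ys = [1.0 if x > 0 else (-1.0 if x < 0 else 0.0) for x in xs]

print(mean_cost(0.0, 0.0, xs, ys))  # 1.25 at the starting point
w, b = train(xs, ys)
print('w is', w, 'b is', b, 'cost', mean_cost(w, b, xs, ys))
```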

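Fitting the linear function y = ax + b, where the data is generated with a = 0.3 and b = 0.5 (in the code, a is the variable w):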

import numpy as np
import matplotlib.pyplot as plt
import tensorflow as tf

# Data for y = a*x + b with a = 0.3, b = 0.5
x_data = np.random.randn(100).astype(np.float32)
y_data = x_data * 0.3 + 0.5
# Placeholders, filled with data at run time
x = tf.placeholder(tf.float32)
y = tf.placeholder(tf.float32)
w = tf.Variable(tf.random_normal([1]), name='weight')
b = tf.Variable(tf.random_normal([1]), name='bias')
y_predict = tf.add(tf.multiply(x, w), b)
# Mean squared error
cost = tf.reduce_mean(tf.square(y - y_predict))
Optimizer = tf.train.AdamOptimizer().minimize(cost)
init = tf.global_variables_initializer()
cost_num = []
cost_pre = 0

with tf.Session() as sess:  # uses the default graph
    sess.run(init)
    for step in range(500):
        for xa, ya in zip(x_data, y_data):
            sess.run(Optimizer, feed_dict={x: xa, y: ya})
        train_cost = sess.run(cost, feed_dict={x: xa, y: ya})
        cost_num.append(train_cost)
        if np.abs(train_cost - cost_pre) < 1e-6:
            break
        cost_pre = train_cost
    print('w is', sess.run(w))  # expected to approach 0.3
    print('b is', sess.run(b))  # expected to approach 0.5

# Plot the cost per epoch
plt.plot(range(len(cost_num)), cost_num, 'blue')
plt.title("y=ax+b")
plt.xlabel("epoch")  # call the function; plt.xlabel = (...) would overwrite it
plt.ylabel("cost")
plt.show()
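Because the model is linear, the minimum of the mean squared error can also be computed in closed form (ordinary least squares). That gives a reference value to compare with the w and b that Adam converges to. Below is a plain-Python sketch. The function name and the six sample points are made up for illustration.

```python
from typing import List, Tuple


def least_squares(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Closed-form fit of y = a*x + b minimizing the mean squared error."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b


xs = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [0.3 * x + 0.5 for x in xs]  # noise-free data, as in the example above
a, b = least_squares(xs, ys)
print(round(a, 6), round(b, 6))  # 0.3 0.5
```

On noise-free data the closed form recovers a and b up to rounding. An iterative optimizer such as Adam only approaches them, which is why the fitted w and b are close to, but not exactly, 0.3 and 0.5.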