Contributing a Model to Open Model Zoo (2)

mobilenet-yolov4-syg.yml

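Purpose of the file

launchers: what the model requires on each framework (tf and dlsdk)

datasets: annotation conversion, preprocessing and postprocessing for the dataset

The file holds the parameter configuration for the four tests listed above.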

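YAML format requirements

Basic rules:

- Everything is case-sensitive;

- Indentation expresses the hierarchy;

- Indent with spaces only, never tabs. The number of spaces does not matter as long as items at the same level are left-aligned (usually 2 or 4 spaces).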

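Objects (mappings)

Written as key: value, with one space after the colon.

Arrays (sequences)

Each array item is written as a hyphen followed by a space.

Scalars

Integers, floats, strings, null, dates, booleans and times.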
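The two rules that break most often in a hand-edited accuracy-check.yml are tab indentation and a missing space after a key's colon. The sketch below catches both with a line-by-line heuristic. `lint_yaml_basics` is a hypothetical helper and is not part of accuracy checker. It can report false positives, for example on a list item that is a bare URL. A real YAML parser remains the authoritative check.

```python
import re

# Heuristic: a mapping key at the start of a line (optionally after "- ")
# that is immediately followed by ":" and then a non-space character.
KEY_WITHOUT_SPACE = re.compile(r"^\s*(?:-\s+)?[A-Za-z_][\w.-]*:(?=\S)")


def lint_yaml_basics(text):
    """Return (line_number, message) pairs for two basic YAML rules:
    spaces (not tabs) for indentation, and a space after a key's colon."""
    problems = []
    for number, line in enumerate(text.splitlines(), start=1):
        # Leading whitespace of the line, tabs included.
        indent = line[: len(line) - len(line.lstrip(" \t"))]
        if "\t" in indent:
            problems.append((number, "tab used for indentation"))
        if KEY_WITHOUT_SPACE.match(line):
            problems.append((number, "missing space after ':'"))
    return problems
```

Usage: pass it the file contents, for example `lint_yaml_basics(open("accuracy-check.yml", encoding="utf-8").read())`. An empty list means neither rule was violated.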

(1) launchers parameters

How to configure the OpenVINO launcher

framework

TensorFlow: tf (not used for now; dlsdk is the main launcher from here on)

OpenVINO: dlsdk

tags: FP16 and FP32 (one list item each). These tags mark the data precision of the model files.

model, weights

mobilenet-yolo-syg.xml

mobilenet-yolo-syg.bin

The names must match model.yml. For a TensorFlow model use tf_model instead (.pb, .pb.frozen, .pbtxt).

device: CPU

Supported: CPU, GPU, FPGA, MYRIAD, HDDL

batch: 1

(2) adapter parameters

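An adapter is a function that converts the network's inference output into a metric-specific format. There are two ways to set the adapter for a topology. The first is a string holding only the adapter name. The second, used here, is a dictionary whose type key names the adapter and whose other keys are its parameters.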

type: yolo_v3

converting output of YOLO v3 family models to DetectionPrediction representation

classes: 9

The model has 9 classes; see model_data/car_classes.txt for their order and names.

anchors: "12,16, 19,36, 40,28, 36,75, 76,55, 72,146, 142,110, 192,243, 459,401"

The default value works; see model_data/yolo_anchors.txt for details.

coords: 4

number of bbox coordinates (default 4)

num: 3

num parameter from DarkNet configuration file (default 3)

anchor_masks: [[0, 1, 2], [3, 4, 5], [6, 7, 8]]

The masks only need to be listed in the same order as outputs. Check that order by opening the .xml file in Netron.
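To see which anchor pairs each output actually receives, the anchors string can be split into (width, height) pairs and indexed by each mask. This is an illustrative sketch, not accuracy checker code. Pairing `anchor_masks[i]` with `outputs[i]` by list position is an assumption made for illustration. In YOLOv3-style models the largest anchors normally belong to the coarsest (stride-32) output, so confirm in Netron which output has the smallest feature map.

```python
def parse_anchors(anchor_string):
    """Turn "w1,h1, w2,h2, ..." into a list of (w, h) integer pairs."""
    values = [int(v) for v in anchor_string.replace(" ", "").split(",") if v]
    if len(values) % 2:
        raise ValueError("anchors must contain an even number of values")
    return list(zip(values[0::2], values[1::2]))


def anchors_per_output(anchor_string, anchor_masks, output_names):
    """Map each output layer to the anchor pairs its mask selects.
    Assumption: anchor_masks[i] belongs to output_names[i]."""
    anchors = parse_anchors(anchor_string)
    if len(anchor_masks) != len(output_names):
        raise ValueError("need exactly one mask per output")
    return {name: [anchors[i] for i in mask]
            for name, mask in zip(output_names, anchor_masks)}
```

With the values from this config, mask [6, 7, 8] selects the three largest pairs: (142, 110), (192, 243) and (459, 401).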

threshold: 0.001

minimal objectness score value for valid detections (default 0.001)

outputs:

separable_conv2d_22/BiasAdd/YoloRegion

separable_conv2d_30/BiasAdd/YoloRegion

separable_conv2d_38/BiasAdd/YoloRegion

Read the output names by opening the .xml file in Netron.
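When checking the outputs in Netron, it helps to know what shapes to expect. For YOLO v3-style region outputs, each output carries num * (coords + 1 + classes) channels. For every anchor, that is the box coordinates, one objectness score and one score per class. With this config the count is 3 * (4 + 1 + 9) = 42. The sketch below also assumes strides of 32, 16 and 8 for a 416 input, which is typical for YOLO v3 heads but not stated in the source.

```python
def expected_yolo_shapes(input_size=416, classes=9, coords=4, num=3,
                         strides=(32, 16, 8)):
    """Expected NCHW shapes of YOLO v3-style region outputs.

    channels = num * (coords + 1 + classes); the grid size of each output
    is input_size // stride. The default strides are an assumption.
    """
    channels = num * (coords + 1 + classes)
    return [(1, channels, input_size // s, input_size // s) for s in strides]
```

If the shapes shown in Netron disagree, that usually points to a wrong classes, coords or num value.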

raw_output: True

Enables additional preprocessing for the raw YOLO output format (default False).

Preparing the input files

VOCdevkit

Any dataset in VOC2007/2012 format works.

Dataset download link and extraction parameters

Add the dataset archive to model.yml; it is extracted in a later step.

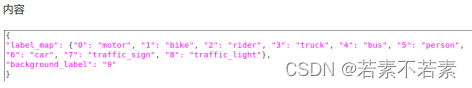

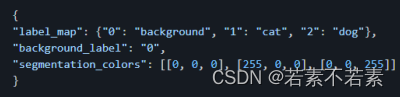

voc_label.json

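Purpose

Lists the classes the model can detect, in the same order as model_data/car_classes.txt. Accuracy checker uses this file during inference, where it is passed as the dataset meta file (dataset_meta_file).

Format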

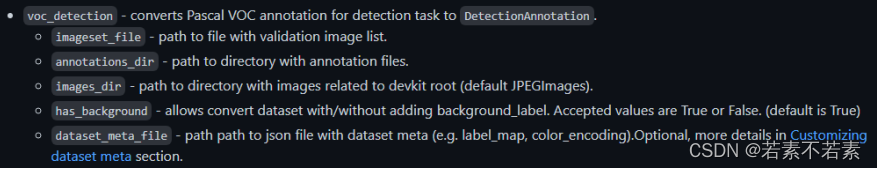
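Because the order must follow model_data/car_classes.txt exactly, generating voc_label.json from that file avoids ordering mistakes. This is a minimal sketch. It assumes the label_map layout, a mapping from class id to class name, which accuracy checker dataset meta files accept. If the format shown in the screenshot above differs, follow the screenshot. The class names in the usage line are hypothetical.

```python
import json


def build_label_meta(class_names):
    """Build a dataset_meta dict from class names, keeping their order.
    Assumed layout: {"label_map": {"0": name0, "1": name1, ...}}."""
    names = [n.strip() for n in class_names if n.strip()]
    if len(set(names)) != len(names):
        raise ValueError("duplicate class names")
    return {"label_map": {str(i): name for i, name in enumerate(names)}}


def write_label_meta(classes_txt, output_json):
    """Read one class per line (e.g. model_data/car_classes.txt), write JSON."""
    with open(classes_txt, encoding="utf-8") as src:
        meta = build_label_meta(src.read().splitlines())
    with open(output_json, "w", encoding="utf-8") as dst:
        json.dump(meta, dst, indent=2, ensure_ascii=False)
    return meta
```

For example, `build_label_meta(["car", "bus", "motor"])` numbers the hypothetical classes 0, 1 and 2 in file order. Blank lines are skipped, and a duplicated name raises an error instead of silently shifting the ids.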

(3) datasets parameters

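Two ways to set the annotation parameters (the second one produces an error)

Option 1: annotation conversion was already done with the command, so relative paths are enough: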

- name: VOCdevkit

data_source: VOCdevkit/VOC2007/JPEGImages

annotation: voc_detection.pickle

dataset_meta: voc_detection.json

Option 2: no prior conversion (annotation_conversion inside the config), absolute paths:

- name: VOCdevkit

data_source: VOCdevkit/VOC2007/JPEGImages

annotation_conversion:

converter: voc_detection

imageset_file: /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007/ImageSets/Main/val.txt

annotations_dir: /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007/Annotations

images_dir: /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007/JPEGImages

dataset_meta_file: /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/voc_label.json

Error: no AP value is reported for the class motor.
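The source does not diagnose this error, so what follows is only an assumption. One plausible cause is that the validation images listed in val.txt contain no non-difficult ground-truth box for that class. Another is that the class name in voc_label.json does not exactly match the name tags in the VOC XML files. The hypothetical helpers below count ground-truth objects per class and list the classes that have none. They read the standard VOC layout (ImageSets/Main/val.txt and Annotations/<id>.xml).

```python
import xml.etree.ElementTree as ET
from collections import Counter
from pathlib import Path


def count_gt_objects(xml_texts, ignore_difficult=True):
    """Count ground-truth objects per class name in VOC annotation XML strings."""
    counts = Counter()
    for text in xml_texts:
        root = ET.fromstring(text)
        for obj in root.iter("object"):
            difficult = obj.findtext("difficult", default="0").strip() == "1"
            if ignore_difficult and difficult:
                continue  # mirrors ignore_difficult: true in the map metric
            counts[obj.findtext("name", default="").strip()] += 1
    return counts


def classes_without_gt(label_names, xml_texts, ignore_difficult=True):
    """Return the label names that have no (non-difficult) ground-truth box."""
    counts = count_gt_objects(xml_texts, ignore_difficult)
    return [name for name in label_names if counts[name] == 0]


def load_val_annotations(voc_year_dir):
    """Read Annotations/<id>.xml for every id in ImageSets/Main/val.txt."""
    root = Path(voc_year_dir)
    ids = (root / "ImageSets" / "Main" / "val.txt").read_text().split()
    return [(root / "Annotations" / f"{i}.xml").read_text() for i in ids]
```

Usage: `classes_without_gt(names, load_val_annotations("VOCdevkit/VOC2007"))`, where `names` is the class list from voc_label.json.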

(3) dataset preprocessing parameters

Purpose

Processes input data before model inference.

- type: bgr_to_rgb

Reverses the image channels, converting a BGR image to RGB.

from PIL import Image

Image.open() ----- RGB mode

import cv2

cv2.imread() ----- BGR order (cv2.imshow() also expects BGR)

- type: resize

size: 416

Resizes the image to a new width and height (size sets both, so 416 x 416 here).
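The two preprocessing steps above can be illustrated on a tiny image stored as nested lists (rows of pixels, each pixel [b, g, r]). This pure-Python sketch only shows what the steps do to the data. The nearest-neighbour resize is a stand-in chosen for simplicity. It stretches the image without keeping the aspect ratio and is not meant to reproduce the interpolation accuracy checker applies.

```python
def bgr_to_rgb(image):
    """Reverse the channel order of an H x W x 3 image stored as nested lists."""
    return [[pixel[::-1] for pixel in row] for row in image]


def resize_nearest(image, width, height):
    """Nearest-neighbour resize to width x height (aspect ratio not kept)."""
    src_h, src_w = len(image), len(image[0])
    return [[image[y * src_h // height][x * src_w // width]
             for x in range(width)]
            for y in range(height)]
```

For example, a BGR pixel [0, 0, 255] (pure red) becomes [255, 0, 0] after `bgr_to_rgb`, and `resize_nearest(image, 416, 416)` returns a 416 x 416 grid.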

postprocessing: processes prediction and/or annotation data after model inference and before metric calculation

- type: resize_prediction_boxes

Rescales normalized detection boxes to the image size.

- type: filter

apply_to: prediction

remove_filtered: true

min_confidence: 0.001

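Filters data by the given parameters.

apply_to: which boxes to process (annotation for ground-truth boxes, prediction for detection results, all for both)

remove_filtered: remove the filtered data. By default, annotations support ignoring filtered data without removing it; in other cases filtered data is removed automatically.

min_confidence: minimum confidence

- type: nms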

overlap: 0.5

Non-maximum suppression.

overlap: the overlap (IoU) threshold above which detections are merged

- type: clip_boxes

apply_to: prediction

Clips detection bounding boxes to the image boundaries.

apply_to: which boxes to process (annotation for ground-truth boxes, prediction for detection results, all for both)
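The postprocessing chain above can be sketched in plain Python to show what each step does to the predictions. The steps are assumed to run in the order they are listed in the config. This is an illustration, not accuracy checker's source. The box tuple layout is hypothetical, and the NMS is a simplified greedy, class-agnostic version.

```python
# A box is a hypothetical tuple: (x_min, y_min, x_max, y_max, score, label).


def resize_prediction_boxes(boxes, width, height):
    """Scale normalized [0, 1] coordinates to pixels of the original image."""
    return [(x0 * width, y0 * height, x1 * width, y1 * height, score, label)
            for x0, y0, x1, y1, score, label in boxes]


def filter_low_confidence(boxes, min_confidence=0.001):
    """Drop predictions scoring below min_confidence (remove_filtered: true)."""
    return [box for box in boxes if box[4] >= min_confidence]


def iou(a, b):
    """Intersection over union of two boxes (only the first 4 fields are used)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def nms(boxes, overlap=0.5):
    """Greedy NMS: visit boxes by descending score and keep a box only if its
    IoU with every box kept so far is at most `overlap`."""
    kept = []
    for box in sorted(boxes, key=lambda b: b[4], reverse=True):
        if all(iou(box, k) <= overlap for k in kept):
            kept.append(box)
    return kept


def clip_boxes(boxes, width, height):
    """Clip coordinates to the image borders."""
    def clamp(value, upper):
        return min(max(value, 0), upper)
    return [(clamp(x0, width), clamp(y0, height),
             clamp(x1, width), clamp(y1, height), score, label)
            for x0, y0, x1, y1, score, label in boxes]


def postprocess(boxes, width, height, min_confidence=0.001, overlap=0.5):
    """resize_prediction_boxes -> filter -> nms -> clip_boxes."""
    boxes = resize_prediction_boxes(boxes, width, height)
    boxes = filter_low_confidence(boxes, min_confidence)
    boxes = nms(boxes, overlap)
    return clip_boxes(boxes, width, height)
```

Filtering before NMS keeps the near-zero-score boxes (threshold 0.001) from being compared at all. Clipping last means boxes that the adapter predicted partly outside the image still count, but with coordinates inside it.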

Other parameters

resize_realization: which library performs the resize: opencv, pillow or tf (opencv by default)

normalization: the model already performs normalization itself; see /nets/yolov4.py

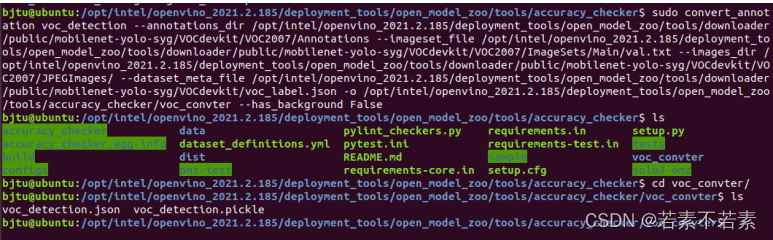

Testing the Annotation Converter from the command line

Purpose

An annotation converter converts an annotation file into a format suitable for metric evaluation.

Ways to run it

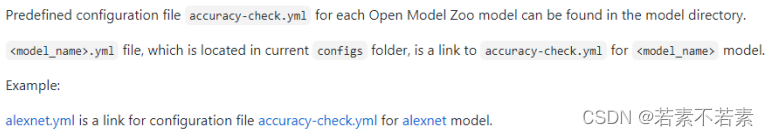

configuration file: accuracy-check.yml

command line

File locations

Input dataset:

downloader/public/mobilenet-yolo-syg/VOCdevkit

voc_label.json (the dataset_meta_file argument of the command):

tools/accuracy_checker/voc_convert/voc_label.json

command

sudo mkdir voc_convert

sudo convert_annotation voc_detection --annotations_dir /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007/Annotations --imageset_file /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007/ImageSets/Main/val.txt --images_dir /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007/JPEGImages/ --dataset_meta_file /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/ -o /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/accuracy_checker/voc_convert --has_background False

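Parameter descriptions

converter name: voc_detection

--annotations_dir: location of the dataset annotation files

--imageset_file: list of image IDs to convert (here val.txt)

--images_dir: location of the dataset images

--dataset_meta_file: location of the label file used for conversion (voc_label.json)

--has_background: whether the labels include a background class

-o: directory where the generated .json and .pickle files are written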
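Long absolute paths typed by hand are easy to break, as a stray line break or a dropped hyphen shows. One way to avoid this is to build the convert_annotation arguments from a single VOC root. The sketch uses only the flags shown in the command above. `voc_convert_command` is a hypothetical helper, and the example paths assume voc_label.json sits in tools/accuracy_checker/voc_convert. Note that --dataset_meta_file expects the path of the JSON file itself, not its directory.

```python
import shlex
from pathlib import Path


def voc_convert_command(voc_year_dir, dataset_meta_file, output_dir,
                        has_background=False):
    """Assemble the convert_annotation voc_detection call as an argv list."""
    voc = Path(voc_year_dir)
    return [
        "convert_annotation", "voc_detection",
        "--annotations_dir", str(voc / "Annotations"),
        "--imageset_file", str(voc / "ImageSets" / "Main" / "val.txt"),
        "--images_dir", str(voc / "JPEGImages"),
        "--dataset_meta_file", str(dataset_meta_file),
        "-o", str(output_dir),
        "--has_background", str(has_background),
    ]


if __name__ == "__main__":
    tools = Path("/opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools")
    cmd = voc_convert_command(
        tools / "downloader/public/mobilenet-yolo-syg/VOCdevkit/VOC2007",
        tools / "accuracy_checker/voc_convert/voc_label.json",
        tools / "accuracy_checker/voc_convert",
    )
    # Print a copy-pasteable shell line; subprocess.run(cmd, check=True) would run it.
    print(shlex.join(cmd))
```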

result

command for coco dataset

sudo mkdir coco_convert

sudo convert_annotation mscoco_detection --annotation_file yolo3-omz/instances_val2017.json -a yolo3-omz/mscoco_detection.pickle -m yolo3-omz/mscoco_detection.json -o yolo3-omz/coco_convert/

(4) metrics parameters

Purpose

Each metric requires a specific representation format to work correctly.

- type: map

integral: 11point

ignore_difficult: true

presenter: print_scalar

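integral: integral type for the average precision computation; Pascal VOC 11point and max are available

ignore_difficult: allows difficult annotation boxes to be ignored in the metric computation

presenter: how the result is presented (print_scalar here)

- type: coco_precision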

max_detections: 100

threshold: 0.5

presenter: print_vector

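coco_precision is the mean average precision (mAP).

max_detections: maximum number of predictions per image

threshold: IoU threshold; either a single value or a comma-separated range of values can be given.

Precomputed values for the standard COCO thresholds are supported (.5, .75, .5:.05:.95); for the VOC dataset the threshold is 0.5.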
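The integral: 11point option refers to the PASCAL VOC 11-point interpolated average precision. At each recall level 0.0, 0.1, ..., 1.0, take the highest precision reached at any recall greater than or equal to that level, then average the 11 values. mAP is the mean of this AP over the classes. The sketch below is a plain-Python rendering of that standard formula, not accuracy checker's code. It also expands the COCO .5:.05:.95 threshold range.

```python
def voc_11point_ap(recalls, precisions):
    """PASCAL VOC 11-point interpolated AP from matching recall/precision lists."""
    if len(recalls) != len(precisions):
        raise ValueError("recalls and precisions must have the same length")
    total = 0.0
    for step in range(11):
        level = step / 10
        # Best precision at any recall >= level (0 if that recall is never reached).
        candidates = [p for r, p in zip(recalls, precisions) if r >= level]
        total += max(candidates, default=0.0)
    return total / 11


def coco_thresholds():
    """The standard COCO IoU range .5:.05:.95, i.e. 10 thresholds."""
    return [round(0.5 + 0.05 * i, 2) for i in range(10)]
```

For a curve that reaches precision 1.0 at recall 0.5 and precision 0.5 at recall 1.0, six levels score 1.0 and five score 0.5, so AP = 8.5 / 11 ≈ 0.773.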

Representation notes: Object Detection

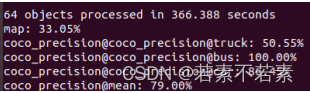

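Testing accuracy checker

Installation

Deep learning accuracy validation framework

OpenVINO documentation on the dataset conversion and accuracy checking tools used before Int8 quantization

Run the sample to verify that the installation works.

Testing

File location

/opt/intel/openvino_/deployment_tools/open_model_zoo/models/public/mobilenet-yolo-syg/accuracy-check.yml

In addition, the accuracy-checker/configs directory contains, for each model, a link named model_name that points to its accuracy-check.yml.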

command

cd /opt/intel/openvino_/deployment_tools/open_model_zoo/tools/accuracy_checker

Annotation conversion already done with the command:

accuracy_check -c /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/models/public/mobilenet-yolo-syg/accuracy-check.yml -m /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg/FP16 -s /opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools/downloader/public/mobilenet-yolo-syg -a …/downloader/public/mobilenet-yolo-syg/voc_convert/ -td cpu

Without prior annotation conversion: did not pass.

Parameter explanations

-m: models, in the downloader test directory

-s: images of the VOCdevkit dataset, in the downloader test directory

-a: annotations of the dataset in pickle format, in the accuracy checker test directory
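Before a run, a quick pre-flight check can catch the most common failure: the -m or -a directory does not contain the files accuracy_check needs. This sketch is a hypothetical helper, not part of accuracy checker. The expected file names are assumptions taken from this note: an IR pair named mobilenet-yolo-syg.xml/.bin, and voc_detection.pickle/.json from the converter.

```python
from pathlib import Path

# Assumed converter outputs, as referenced in the datasets config above.
ANNOTATION_FILES = {"voc_detection.pickle", "voc_detection.json"}


def missing_inputs(model_files, annotation_files, model_name="mobilenet-yolo-syg"):
    """Return expected file names absent from the -m and -a directory listings."""
    expected_model = {f"{model_name}.xml", f"{model_name}.bin"}
    missing = sorted(expected_model - set(model_files))
    missing += sorted(ANNOTATION_FILES - set(annotation_files))
    return missing


def _names_in(directory):
    """File names in a directory, or an empty list if it does not exist."""
    path = Path(directory)
    return [p.name for p in path.iterdir()] if path.is_dir() else []


def check_dirs(model_dir, annotation_dir, model_name="mobilenet-yolo-syg"):
    """Filesystem wrapper around missing_inputs()."""
    return missing_inputs(_names_in(model_dir), _names_in(annotation_dir), model_name)


if __name__ == "__main__":
    tools = "/opt/intel/openvino_2021.2.185/deployment_tools/open_model_zoo/tools"
    print(check_dirs(f"{tools}/downloader/public/mobilenet-yolo-syg/FP16",
                     f"{tools}/accuracy_checker/voc_convert"))
```

An empty list means the directories look complete. Any name it prints is a file to regenerate or a path to correct before calling accuracy_check.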

result

map: 35.38%

coco_precision@mean: 75.36%