Preface

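This post walks through image_demo. It covers the part of mmdetection that runs detection inference on an image, processes the detection results, and visualizes them.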

The code discussed in this post comes from: https://github.com/open-mmlab/mmdetection

Main script path: demo/image_demo.py

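1. Main workflow

The main steps are:

(1) Initialize the model, load the trained weights into it, and switch it to eval() mode.

(2) Run inference on the input image.

(3) Visualize the prediction results.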

    from mmdet.apis import inference_detector, init_detector, show_result_pyplot


    def main(args):
        # build the model from a config file and a checkpoint file
        model = init_detector(args.config, args.checkpoint, device=args.device)
        # test a single image
        result = inference_detector(model, args.img)
        # show the results
        show_result_pyplot(model, args.img, result, score_thr=args.score_thr)
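main() only needs an object that exposes `img`, `config`, `checkpoint`, `device` and `score_thr`. The sketch below builds such an object with argparse. The argument names are taken from the attributes main() reads. The defaults (`cuda:0`, `0.3`) are assumptions and are not copied from image_demo.py's actual parser.

```python
import argparse


def parse_args(argv=None):
    # Hypothetical parser: names mirror what main() reads; defaults are assumptions.
    parser = argparse.ArgumentParser(description='Single-image detection demo')
    parser.add_argument('img', help='path to the input image')
    parser.add_argument('config', help='path to the model config file')
    parser.add_argument('checkpoint', help='path to the trained weights')
    parser.add_argument('--device', default='cuda:0',
                        help='device used for inference, e.g. cpu or cuda:0')
    # argparse stores '--score-thr' under the attribute name 'score_thr'
    parser.add_argument('--score-thr', type=float, default=0.3,
                        help='minimum score for a box to be drawn')
    return parser.parse_args(argv)
```

With a parser like this, the demo would be started with something like `python demo/image_demo.py <image> <config> <checkpoint> --device cpu`.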

Initializing the model

    import warnings

    import mmcv
    from mmcv.runner import load_checkpoint

    from mmdet.core import get_classes
    from mmdet.models import build_detector


    def init_detector(config, checkpoint=None, device='cuda:0', cfg_options=None):
        if isinstance(config, str):
            config = mmcv.Config.fromfile(config)  # load the config file
        elif not isinstance(config, mmcv.Config):
            raise TypeError('config must be a filename or Config object, '
                            f'but got {type(config)}')
        if cfg_options is not None:
            config.merge_from_dict(cfg_options)
        config.model.pretrained = None
        config.model.train_cfg = None
        model = build_detector(config.model, test_cfg=config.get('test_cfg'))  # build the model
        if checkpoint is not None:
            # load the trained weights
            checkpoint = load_checkpoint(model, checkpoint, map_location='cpu')
            if 'CLASSES' in checkpoint.get('meta', {}):
                model.CLASSES = checkpoint['meta']['CLASSES']
            else:
                warnings.simplefilter('once')
                warnings.warn('Class names are not saved in the checkpoint\'s '
                              'meta data, use COCO classes by default.')
                model.CLASSES = get_classes('coco')
        model.cfg = config  # save the config in the model for convenience
        model.to(device)
        model.eval()  # inference stage: switch the model to eval mode
        return model
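The class-name handling in init_detector works like this: use the names stored in the checkpoint's `meta` dict if they are present, and otherwise warn and fall back to a default list. The sketch below isolates that logic in plain Python. `resolve_classes` and the three-name `DEFAULT_CLASSES` tuple are hypothetical stand-ins for the real code path and for `get_classes('coco')`.

```python
import warnings

# Hypothetical stand-in for get_classes('coco'); only a few COCO names are listed.
DEFAULT_CLASSES = ('person', 'bicycle', 'car')


def resolve_classes(checkpoint, default_classes=DEFAULT_CLASSES):
    """Return the class names stored in checkpoint['meta'], or a default tuple."""
    meta = checkpoint.get('meta', {})  # a checkpoint may carry no meta at all
    if 'CLASSES' in meta:
        return meta['CLASSES']
    warnings.warn("Class names are not saved in the checkpoint's meta data, "
                  'falling back to the default classes.')
    return default_classes
```

If a model was trained on a custom dataset and its checkpoint lacks `meta['CLASSES']`, the boxes would be labeled with the fallback names. That is why the real code emits a warning.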

Inference

    import numpy as np
    import torch
    from mmcv.ops import RoIPool
    from mmcv.parallel import collate, scatter

    from mmdet.datasets import replace_ImageToTensor
    from mmdet.datasets.pipelines import Compose


    def inference_detector(model, imgs):
        if isinstance(imgs, (list, tuple)):  # a list/tuple of inputs means batch inference
            is_batch = True
        else:
            imgs = [imgs]  # wrap a single input in a list; imgs: image path(s) or arrays
            is_batch = False

        cfg = model.cfg
        device = next(model.parameters()).device  # model device

        if isinstance(imgs[0], np.ndarray):  # the input is already-loaded image data
            cfg = cfg.copy()
            # set loading pipeline type
            cfg.data.test.pipeline[0].type = 'LoadImageFromWebcam'

        # replace ImageToTensor with DefaultFormatBundle so that batch inference works
        cfg.data.test.pipeline = replace_ImageToTensor(cfg.data.test.pipeline)
        test_pipeline = Compose(cfg.data.test.pipeline)  # build the data-processing pipeline

        datas = []
        for img in imgs:
            # prepare data
            if isinstance(img, np.ndarray):
                # directly add img
                data = dict(img=img)
            else:
                # add information into dict
                data = dict(img_info=dict(filename=img), img_prefix=None)
            # build the data pipeline
            data = test_pipeline(data)  # run the preprocessing
            datas.append(data)

        # Puts each data field into a tensor/DataContainer with outer dimension batch size
        data = collate(datas, samples_per_gpu=len(imgs))
        # just get the actual data from DataContainer
        data['img_metas'] = [img_metas.data[0] for img_metas in data['img_metas']]
        data['img'] = [img.data[0] for img in data['img']]
        if next(model.parameters()).is_cuda:
            # scatter to specified GPU
            data = scatter(data, [device])[0]
        else:
            for m in model.modules():
                assert not isinstance(
                    m, RoIPool
                ), 'CPU inference with RoIPool is not supported currently.'

        # forward the model
        with torch.no_grad():
            results = model(return_loss=False, rescale=True, **data)

        if not is_batch:
            return results[0]
        else:
            return results
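inference_detector accepts either one input or a list of inputs. It wraps a single input into a list, stacks everything into a batch, and unwraps the result at the end. The NumPy sketch below reproduces only that single/batch pattern. `model_fn` is a hypothetical callable, and `np.stack` is a simplified analogue of `collate()` that assumes all images have the same shape.

```python
import numpy as np


def run_batched(model_fn, imgs):
    """Sketch of the single/batch handling in inference_detector.

    model_fn is a hypothetical callable that takes a batch array of shape
    (N, H, W, C) and returns a list with one result per image.
    """
    is_batch = isinstance(imgs, (list, tuple))
    if not is_batch:
        imgs = [imgs]  # wrap a single image so the rest of the code sees a list
    batch = np.stack(imgs, axis=0)  # simplified collate(): add an outer batch dimension
    results = model_fn(batch)
    return results if is_batch else results[0]  # unwrap for a single input
```

Callers therefore get a single result for a single image and a list for a list of images, without a branch of their own.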

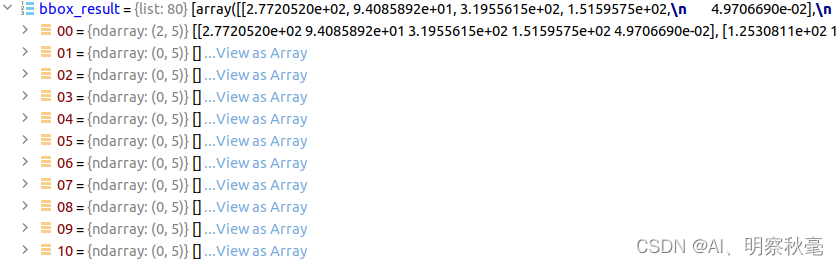

The det_bboxes and det_labels produced by inference still need a conversion step that matches each label value to its predicted box in det_bboxes.

I covered the inference output in an earlier post, so I won't repeat it here: https://editor.csdn.net/md/?articleId=126086285

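Here the focus is on how the label values are matched with the classification scores stored in the predicted det_bboxes. This matching lets each box display the predicted score of its class above it: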

    bbox_results = [
        bbox2result(det_bboxes, det_labels, self.bbox_head.num_classes)
        for det_bboxes, det_labels in results_list  # match each label with its predicted det_bboxes
    ]

The bbox2result function:

    import numpy as np
    import torch


    def bbox2result(bboxes, labels, num_classes):
        if bboxes.shape[0] == 0:
            return [np.zeros((0, 5), dtype=np.float32) for i in range(num_classes)]
        else:
            if isinstance(bboxes, torch.Tensor):
                # detach from the autograd graph, move to the CPU and convert to np.ndarray
                bboxes = bboxes.detach().cpu().numpy()
                labels = labels.detach().cpu().numpy()
            # group the boxes by label: entry i holds all boxes predicted as class i
            return [bboxes[labels == i, :] for i in range(num_classes)]
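To make the grouping concrete, here is a NumPy-only copy of bbox2result with the torch branch removed, applied to three made-up boxes. Each row is `[x1, y1, x2, y2, score]`. `bbox2result_np` is a hypothetical name used only for this illustration.

```python
import numpy as np


def bbox2result_np(bboxes, labels, num_classes):
    """NumPy-only sketch of bbox2result (the torch.Tensor branch is omitted).

    bboxes: (N, 5) array of [x1, y1, x2, y2, score]; labels: (N,) class ids.
    Returns a list of num_classes arrays; entry i holds the boxes of class i.
    """
    if bboxes.shape[0] == 0:
        return [np.zeros((0, 5), dtype=np.float32) for _ in range(num_classes)]
    # boolean mask per class; a class with no boxes yields a (0, 5) array
    return [bboxes[labels == i, :] for i in range(num_classes)]


bboxes = np.array([[10, 10, 50, 50, 0.9],
                   [20, 20, 60, 60, 0.4],
                   [5, 5, 30, 30, 0.8]], dtype=np.float32)
labels = np.array([0, 2, 0])
per_class = bbox2result_np(bboxes, labels, num_classes=3)
print([r.shape for r in per_class])  # [(2, 5), (0, 5), (1, 5)]
```

The list always has `num_classes` entries, even for classes without detections. Because of this, the list index itself can later serve as the label.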

It returns a list ordered by class index, and the length of that list equals the number of classes.

In the earlier figure, `00` indicates that class 0 has 2 predicted boxes with their scores.


Visualizing the prediction results

Data conversion

    def show_result(self,
                    img,
                    result,
                    score_thr=0.3,
                    bbox_color=(72, 101, 241),
                    text_color=(72, 101, 241),
                    mask_color=None,
                    thickness=2,
                    font_size=13,
                    win_name='',
                    show=False,
                    wait_time=0,
                    out_file=None):
        """Draw `result` over `img`."""
        img = mmcv.imread(img)  # read the image (accepts a path or an ndarray)
        img = img.copy()
        if isinstance(result, tuple):
            bbox_result, segm_result = result
            if isinstance(segm_result, tuple):
                segm_result = segm_result[0]  # ms rcnn
        else:
            bbox_result, segm_result = result, None
        bboxes = np.vstack(bbox_result)  # stack the per-class list into one (N, 5) ndarray
        labels = [  # bbox_result[i] holds the boxes of class i
            np.full(bbox.shape[0], i, dtype=np.int32)  # bbox.shape[0]: number of boxes of class i
            for i, bbox in enumerate(bbox_result)  # fill with the class index
        ]
        labels = np.concatenate(labels)  # one flat 1-D ndarray of labels
        # draw segmentation masks
        segms = None
        if segm_result is not None and len(labels) > 0:  # non empty
            segms = mmcv.concat_list(segm_result)
            if isinstance(segms[0], torch.Tensor):
                segms = torch.stack(segms, dim=0).detach().cpu().numpy()
            else:
                segms = np.stack(segms, axis=0)
        # if out_file specified, do not show image in window
        if out_file is not None:
            show = False
        # draw bounding boxes
        img = imshow_det_bboxes(
            img,
            bboxes,
            labels,
            segms,
            class_names=self.CLASSES,
            score_thr=score_thr,
            bbox_color=bbox_color,
            text_color=text_color,
            mask_color=mask_color,
            thickness=thickness,
            font_size=font_size,
            win_name=win_name,
            show=show,
            wait_time=wait_time,
            out_file=out_file)

        if not (show or out_file):
            return img

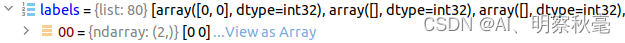

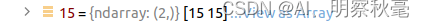

How the labels above are generated:

    labels = [
        np.full(bbox.shape[0], i, dtype=np.int32)  # bbox_result holds one array per class
        for i, bbox in enumerate(bbox_result)  # fill with the class index
    ]
    labels = np.concatenate(labels)  # concatenate into one 1-D ndarray
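This step undoes bbox2result. It stacks the per-class list back into a single `(N, 5)` box array and rebuilds a parallel label array from the list indices. The self-contained NumPy sketch below shows the step. `flatten_result` is a hypothetical helper name, and the input list is made-up data that includes an empty class.

```python
import numpy as np


def flatten_result(bbox_result):
    """Turn a per-class list (as returned by bbox2result) back into flat arrays.

    Returns bboxes of shape (N, 5) and labels of shape (N,), where each label
    is the index of the list entry the box came from.
    """
    bboxes = np.vstack(bbox_result)  # empty (0, 5) entries contribute no rows
    labels = np.concatenate([
        np.full(bbox.shape[0], i, dtype=np.int32)  # one label per box of class i
        for i, bbox in enumerate(bbox_result)
    ])
    return bboxes, labels


bbox_result = [
    np.array([[10, 10, 50, 50, 0.9], [5, 5, 30, 30, 0.8]], dtype=np.float32),
    np.zeros((0, 5), dtype=np.float32),  # class 1 has no detections
    np.array([[20, 20, 60, 60, 0.4]], dtype=np.float32),
]
bboxes, labels = flatten_result(bbox_result)
print(bboxes.shape, labels)  # (3, 5) [0 0 2]
```

After flattening, the boxes are grouped by class rather than kept in their original detection order. Row `k` of `bboxes` still corresponds to `labels[k]`.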

Converted to an ndarray:

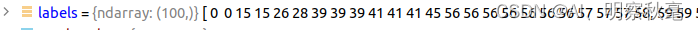

Drawing:

Step 1: filter the boxes by the given score threshold

    if score_thr > 0:
        assert bboxes.shape[1] == 5  # bboxes: (N, 5), e.g. (100, 5)
        scores = bboxes[:, -1]
        inds = scores > score_thr  # boolean mask of boxes scoring above the threshold (usually 0.3)
        bboxes = bboxes[inds, :]
        labels = labels[inds]
        if segms is not None:
            segms = segms[inds, ...]
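The same boolean mask filters the boxes and the labels, which keeps the two arrays aligned. A runnable NumPy sketch of this step on made-up data follows. `filter_by_score` is a hypothetical helper, and the mask handling is omitted.

```python
import numpy as np


def filter_by_score(bboxes, labels, score_thr=0.3):
    """Keep only detections whose score (last column) exceeds score_thr."""
    if score_thr > 0:
        assert bboxes.shape[1] == 5, 'expected [x1, y1, x2, y2, score] rows'
        inds = bboxes[:, -1] > score_thr  # boolean mask, one entry per box
        bboxes, labels = bboxes[inds, :], labels[inds]  # same mask keeps them aligned
    return bboxes, labels


bboxes = np.array([[10, 10, 50, 50, 0.9],
                   [20, 20, 60, 60, 0.2],
                   [5, 5, 30, 30, 0.35]], dtype=np.float32)
labels = np.array([0, 1, 2], dtype=np.int32)
kept_boxes, kept_labels = filter_by_score(bboxes, labels, score_thr=0.3)
print(kept_labels)  # [0 2]
```

The comparison is strict (`>`), so a box whose score equals the threshold exactly is dropped. A threshold of 0 skips filtering entirely.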

Step 2: convert the image from the BGR channel order used by OpenCV to RGB


    img = mmcv.bgr2rgb(img)
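For a 3-channel image, converting BGR to RGB simply reverses the channel axis. The NumPy sketch below is a stand-in that performs the same channel swap as `mmcv.bgr2rgb` for 3-channel images. It is not mmcv's implementation, and `bgr2rgb_np` is a hypothetical name.

```python
import numpy as np


def bgr2rgb_np(img):
    """Reverse the channel axis of an (H, W, 3) image: BGR -> RGB.

    NumPy stand-in for mmcv.bgr2rgb; .copy() makes the result contiguous.
    """
    return img[..., ::-1].copy()


bgr = np.zeros((2, 2, 3), dtype=np.uint8)
bgr[..., 0] = 255  # pure blue in OpenCV's BGR order
rgb = bgr2rgb_np(bgr)
print(rgb[0, 0].tolist())  # [0, 0, 255]
```

Without this swap, matplotlib would draw the image with red and blue exchanged, because it expects RGB input.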

Step 3: convert each label to its class-name text and draw it on the image

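    # polygons and color are lists created earlier in the drawing function
    for i, (bbox, label) in enumerate(zip(bboxes, labels)):
        bbox_int = bbox.astype(np.int32)
        # the four corners of the box, in order: top-left, bottom-left, bottom-right, top-right
        poly = [[bbox_int[0], bbox_int[1]], [bbox_int[0], bbox_int[3]],
                [bbox_int[2], bbox_int[3]], [bbox_int[2], bbox_int[1]]]
        np_poly = np.array(poly).reshape((4, 2))
        polygons.append(Polygon(np_poly))  # matplotlib.patches.Polygon
        color.append(bbox_color)
        # convert the label to its class-name text
        label_text = class_names[
            label] if class_names is not None else f'class {label}'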
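The per-box work in this loop can be tried without matplotlib. The steps are to truncate the coordinates to integers, build the four polygon corners, and choose the caption text. This post's stated goal is to show the class score above each box, so the sketch also appends the score to the caption. The `'|0.90'` format is an assumption here, because the excerpt above stops before that point. `box_to_polygon_and_text` is a hypothetical helper.

```python
import numpy as np


def box_to_polygon_and_text(bbox, label, class_names=None):
    """Build the 4 polygon corners and the caption text for one detection.

    bbox is [x1, y1, x2, y2] or [x1, y1, x2, y2, score]. Appending the score
    as '|0.90' is an assumed format, not taken from the excerpt above.
    """
    bbox_int = bbox.astype(np.int32)  # truncates toward zero
    poly = np.array([[bbox_int[0], bbox_int[1]], [bbox_int[0], bbox_int[3]],
                     [bbox_int[2], bbox_int[3]], [bbox_int[2], bbox_int[1]]])
    if class_names is not None:
        label_text = class_names[label]
    else:
        label_text = f'class {label}'  # fallback when no class names are known
    if len(bbox) > 4:  # a fifth column means a score is available
        label_text += f'|{bbox[-1]:.02f}'
    return poly, label_text


poly, text = box_to_polygon_and_text(
    np.array([10.7, 20.2, 50.0, 60.9, 0.9]), 1, class_names=('cat', 'dog'))
print(poly.tolist(), text)  # [[10, 20], [10, 60], [50, 60], [50, 20]] dog|0.90
```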