Preface

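For an introduction to the KIND environment and how to start it with the default network plugin disabled, see 《KIND网络插件实验:使用bridge作为cni插件》 ("KIND network plugin experiment: using bridge as the CNI plugin").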

The previous article showed how to use bridge as KIND's network plugin; this article uses flannel instead.

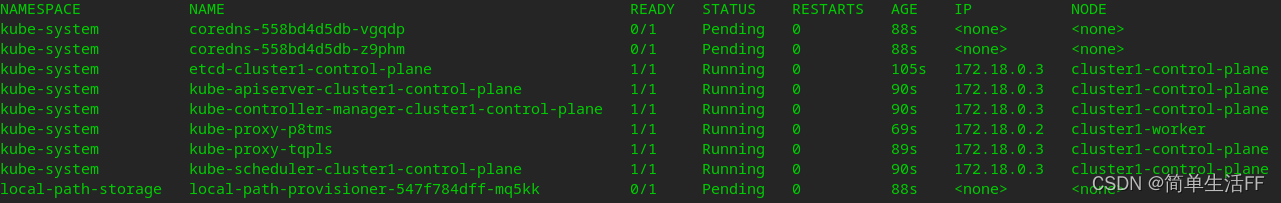

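After KIND starts with the default network plugin disabled, there is no network plugin at all, so coredns cannot be assigned an IP address and does not come up. The screenshot shows the pod status in this state.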

Deploying flannel

```shell
kubectl apply -f flannel-v0.14.0.yaml
```

The YAML file can be obtained from the official flannel project:

```yaml
# flannel-v0.14.0.yaml
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.14.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.14.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq=false
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: true
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
```

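After deployment, flannel's CNI configuration file appears in /etc/cni/net.d on each node. The install-cni init container copies it there from the ConfigMap: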

```
/etc/cni/net.d# cat 10-flannel.conflist
{
  "name": "cbr0",
  "cniVersion": "0.3.1",
  "plugins": [
    {
      "type": "flannel",
      "delegate": {
        "hairpinMode": true,
        "isDefaultGateway": true
      }
    },
    {
      "type": "portmap",
      "capabilities": {
        "portMappings": true
      }
    }
  ]
}
```

The node's subnet information is written to /run/flannel/subnet.env on the node:

```
root@cluster1-control-plane:/run/flannel# cat subnet.env
FLANNEL_NETWORK=10.244.0.0/16
FLANNEL_SUBNET=10.244.0.1/24
FLANNEL_MTU=1450
FLANNEL_IPMASQ=false
```
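The flannel CNI plugin reads this file whenever a pod is created. It then hands a generated configuration to a delegate plugin, which is the bridge plugin by default. The bridge plugin names its bridge cni0 by default, and that is where the cni0 device seen later comes from.

Below is a minimal Python sketch of that idea. It assumes the field names of the standard bridge and host-local plugins and is a simplification for illustration, not flannel's actual code. `parse_subnet_env` and `build_bridge_delegate` are hypothetical helpers.

```python
import ipaddress


def parse_subnet_env(text: str) -> dict:
    """Parse KEY=VALUE lines such as those in /run/flannel/subnet.env."""
    env = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        env[key] = value
    return env


def build_bridge_delegate(env: dict) -> dict:
    """Approximate delegate config derived from subnet.env (illustrative, not flannel's code)."""
    network = ipaddress.ip_network(env["FLANNEL_NETWORK"])
    # FLANNEL_SUBNET is in interface form (gateway IP + prefix), e.g. 10.244.0.1/24;
    # .network yields the node's /24 block itself.
    node_if = ipaddress.ip_interface(env["FLANNEL_SUBNET"])
    if not node_if.network.subnet_of(network):
        raise ValueError("node subnet lies outside the cluster network")
    return {
        "type": "bridge",        # delegate plugin; its bridge is named cni0 by default
        "isGateway": True,       # cni0 gets the gateway address (10.244.x.1)
        "mtu": int(env["FLANNEL_MTU"]),
        # Assumption: if flanneld does not masquerade, the bridge plugin is asked to.
        "ipMasq": env["FLANNEL_IPMASQ"] != "true",
        "ipam": {
            "type": "host-local",                 # allocates pod IPs from the node subnet
            "subnet": str(node_if.network),
            "routes": [{"dst": str(network)}],    # the 10.244.0.0/16 route seen inside pods
        },
    }


if __name__ == "__main__":
    sample = "FLANNEL_NETWORK=10.244.0.0/16\nFLANNEL_SUBNET=10.244.0.1/24\nFLANNEL_MTU=1450\nFLANNEL_IPMASQ=false"
    delegate = build_bridge_delegate(parse_subnet_env(sample))
    print(delegate["ipam"]["subnet"], delegate["mtu"], delegate["ipMasq"])  # 10.244.0.0/24 1450 True
```

The `routes` entry matches what the pod routing table shows later: traffic for 10.244.0.0/16 leaves the pod via the cni0 gateway.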

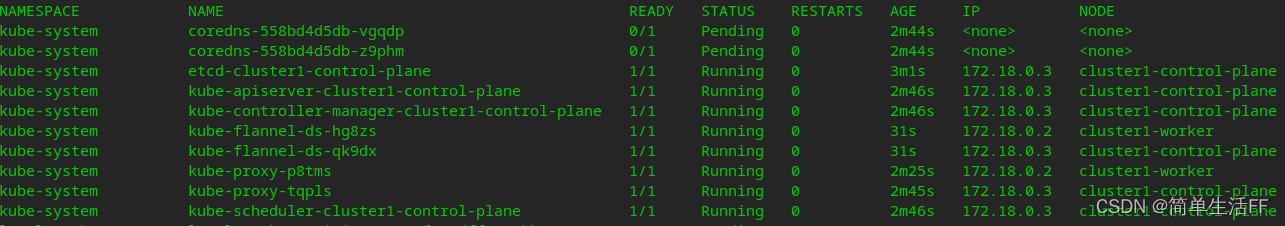

After deployment, kube-flannel runs as a DaemonSet on both the master and the worker nodes, but coredns and some other pods still fail.

`kubectl describe` shows:

```
… failed to find plugin "flannel" in path [/opt/cni/bin]
Warning FailedCreatePodSandBox 10s kubelet, cluster1-worker Failed to create pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox "d9d1f4c592c10514313dec335bc24c6eb9c89903e59fa3db3a7f49696e1dd757": failed to find plugin "flannel" in path [/opt/cni/bin]
```
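The error comes from the container runtime. For every `type` in the `.conflist`, it looks for an executable of that name in the CNI binary directory, which is /opt/cni/bin here. The KIND node image ships only a few plugins: the `ls` output in the next step lists host-local, loopback, portmap and ptp. So `flannel` is missing.

A small Python sketch of that check follows. `missing_plugins` is a hypothetical helper, and the temporary directory stands in for /opt/cni/bin.

```python
import json
import os
import tempfile
from typing import List


def missing_plugins(conflist_text: str, bin_dirs: List[str]) -> List[str]:
    """Return the plugin types in a CNI .conflist that have no executable in bin_dirs."""
    conf = json.loads(conflist_text)
    missing = []
    for plugin in conf.get("plugins", []):
        name = plugin["type"]
        # A plugin is usable if some bin dir holds an executable file with that name.
        found = any(
            os.path.isfile(os.path.join(d, name)) and os.access(os.path.join(d, name), os.X_OK)
            for d in bin_dirs
        )
        if not found:
            missing.append(name)
    return missing


if __name__ == "__main__":
    # Plugin chain from 10-flannel.conflist (delegate details omitted).
    conflist = '{"name": "cbr0", "cniVersion": "0.3.1", "plugins": [{"type": "flannel"}, {"type": "portmap"}]}'
    with tempfile.TemporaryDirectory() as bin_dir:
        # Simulate /opt/cni/bin before the v0.8.7 archive is extracted.
        for name in ["host-local", "loopback", "portmap", "ptp"]:
            path = os.path.join(bin_dir, name)
            with open(path, "w") as f:
                f.write("#!/bin/sh\n")
            os.chmod(path, 0o755)
        print(missing_plugins(conflist, [bin_dir]))  # ['flannel']
```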

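The fix is to download the CNI plugins, CNI Plugins v0.8.7. The author originally asked why CNI Plugins has no flannel after v1.0.0. The answer is that the flannel plugin was removed from containernetworking/plugins in v1.0.0 and is now maintained by the flannel project in its own repository, flannel-io/cni-plugin.

Copy the archive into /opt/cni/bin on both the master and the worker, then extract it: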

```
# Copy the plugin archive into a node container (shown for the worker; repeat for the master)
# The arm64 archive matches this host; pick the one for your CPU architecture
sudo docker cp '/home/cni-plugins-linux-arm64-v0.8.7.tgz' aa7c807c9c4a:/opt/cni/bin/
sudo docker exec -it aa7c807c9c4a /bin/bash
root@cluster1-worker:/# cd /opt/cni/bin/
root@cluster1-worker:/opt/cni/bin# ls
cni-plugins-linux-arm64-v0.8.7.tgz host-local loopback portmap ptp
root@cluster1-worker:/opt/cni/bin# tar -xzvf cni-plugins-linux-arm64-v0.8.7.tgz
./
./macvlan
./flannel
./static
./vlan
./portmap
./host-local
./bridge
./tuning
./firewall
./host-device
./sbr
./loopback
./dhcp
./ptp
./ipvlan
./bandwidth
```

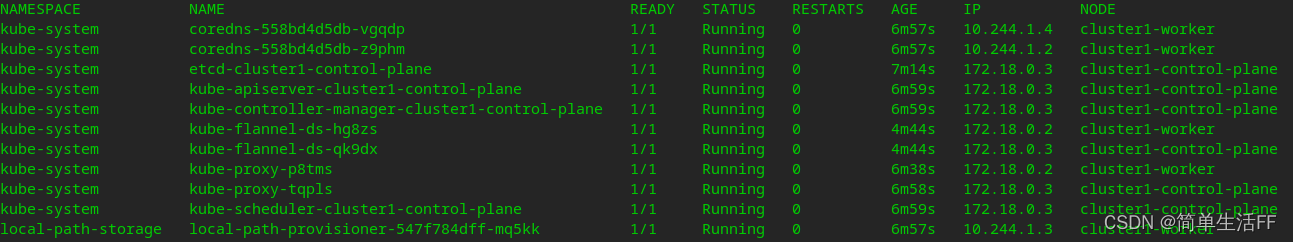

Once this is done, all pods in the cluster run normally.

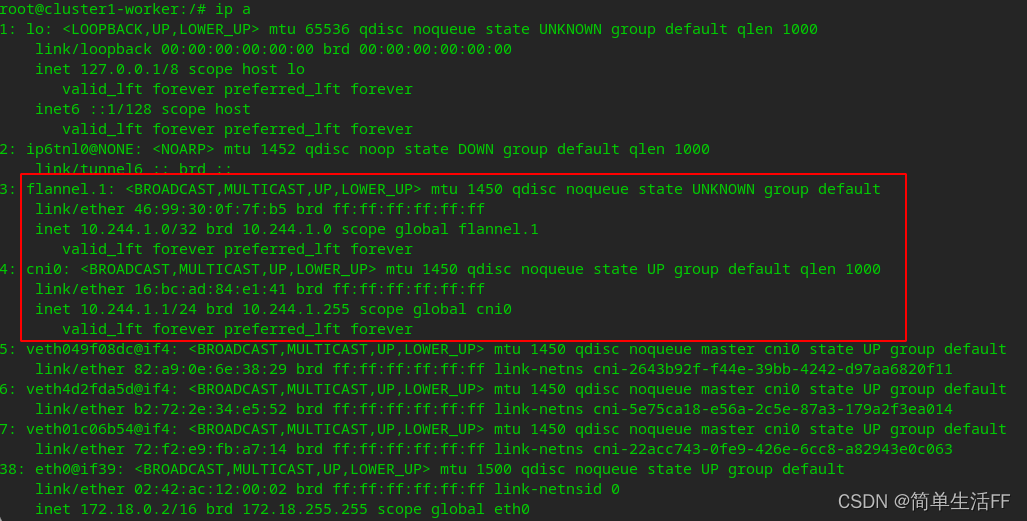

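On the worker node you can see the cni0 bridge and the VTEP device flannel.1. The VTEP device is named flannel.[VNI], which is flannel.1 by default.

The MTU of 1450 shows that nodes communicate over VXLAN. Of the usual 1500-byte MTU, 50 bytes are reserved for the encapsulation headers.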
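The 50 bytes break down as follows. This tiny Python helper assumes the KIND node's eth0 has the common 1500-byte MTU; `vxlan_mtu` is a hypothetical name.

```python
# VXLAN-over-IPv4 overhead, in bytes, added to every pod packet.
INNER_ETHERNET = 14  # the pod's own Ethernet header, carried inside the tunnel
OUTER_IPV4 = 20      # outer IP header between the two node IPs
OUTER_UDP = 8        # outer UDP header
VXLAN_HEADER = 8     # VXLAN header carrying the VNI


def vxlan_mtu(underlay_mtu: int) -> int:
    """Largest inner IP packet that still fits in one underlay packet after encapsulation."""
    return underlay_mtu - (INNER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER)


print(vxlan_mtu(1500))  # 1450, the same value as FLANNEL_MTU
```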

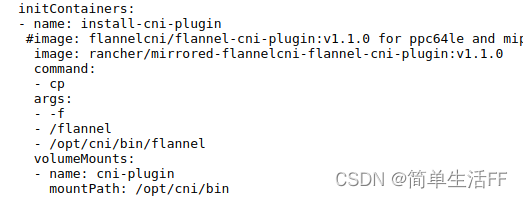

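Update 2022-06-01: a reader pointed out in the comments that newer flannel releases add a step to the deployment YAML. This step copies the flannel binary into /opt/cni/bin on the host, so the manual copy above should no longer be necessary. See the official YAML for details. The corresponding snippet of the YAML file is as follows: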

Verification

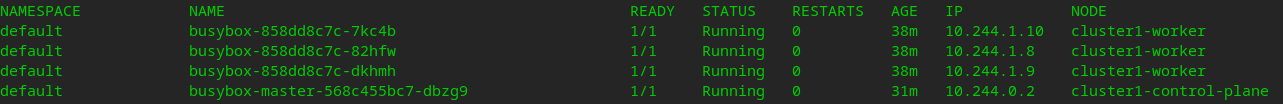

After the deployment above, the KIND (k8s) network looks like this:

| Host | Host IP | Pod subnet |
|---|---|---|
| master node | 172.18.0.3 | 10.244.0.0/24 |
| worker node | 172.18.0.2 | 10.244.1.0/24 |
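Each node gets its own /24 out of the 10.244.0.0/16 cluster network, so a pod IP alone tells you which node hosts the pod. This is a minimal sketch using Python's `ipaddress` module. The node-to-subnet map is copied from the table, with node names taken from the shell prompts in this article. `owning_node` is a hypothetical helper.

```python
import ipaddress
from typing import Optional

CLUSTER_NETWORK = ipaddress.ip_network("10.244.0.0/16")  # "Network" in net-conf.json

# Pod subnet per node, from the table above.
POD_SUBNETS = {
    "cluster1-control-plane": ipaddress.ip_network("10.244.0.0/24"),
    "cluster1-worker": ipaddress.ip_network("10.244.1.0/24"),
}


def owning_node(pod_ip: str) -> Optional[str]:
    """Return the node whose pod subnet contains pod_ip, or None if no node owns it."""
    addr = ipaddress.ip_address(pod_ip)
    for node, subnet in POD_SUBNETS.items():
        if addr in subnet:
            return node
    return None


# Every node subnet must lie inside the cluster network.
assert all(s.subnet_of(CLUSTER_NETWORK) for s in POD_SUBNETS.values())

print(owning_node("10.244.0.2"))   # cluster1-control-plane
print(owning_node("10.244.1.10"))  # cluster1-worker
```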

Deploy a test Deployment. Pod IPs are allocated correctly.

From the pod on the master (10.244.0.2), ping the pod on the worker (10.244.1.10):

```
/ # ping 10.244.1.10
PING 10.244.1.10 (10.244.1.10): 56 data bytes
64 bytes from 10.244.1.10: seq=0 ttl=62 time=0.516 ms
64 bytes from 10.244.1.10: seq=1 ttl=62 time=0.480 ms
^C
--- 10.244.1.10 ping statistics ---
2 packets transmitted, 2 packets received, 0% packet loss
round-trip min/avg/max = 0.480/0.498/0.516 ms
/ # traceroute 10.244.1.10
traceroute to 10.244.1.10 (10.244.1.10), 30 hops max, 46 byte packets
 1  10.244.0.1 (10.244.0.1)  0.057 ms  0.029 ms  0.024 ms
 2  10.244.1.0 (10.244.1.0)  0.025 ms  0.021 ms  0.017 ms
 3  10.244.1.10 (10.244.1.10)  0.015 ms  0.013 ms  0.008 ms
```

The three traceroute hops are:

1. cni0 on the master (10.244.0.1).
2. flannel.1 on the worker (10.244.1.0).
3. The target pod.

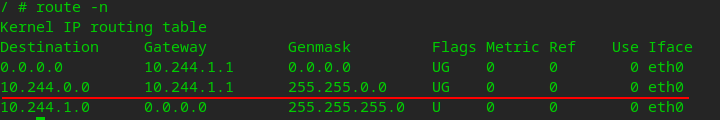

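Inside the pod on the worker, look at the routing table:

Packets destined for 10.244.0.0/16 (the k8s pod network) are sent to the gateway 10.244.1.1. This is the address of the cni0 bridge on the worker node.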

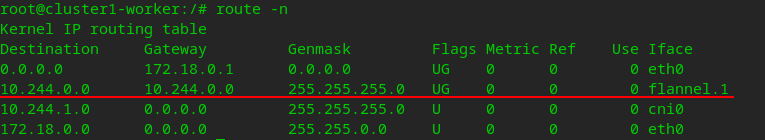

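Next, the routing table on the worker node itself:

Packets destined for 10.244.0.0/24 (the pod subnet on the master) are sent through the local flannel.1 to the master's VTEP device flannel.1, which has the address 10.244.0.0.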
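Both lookups are ordinary longest-prefix matches. Below is a Python sketch of the worker node's table as described above, plus the connected cni0 route for local pods.

The default route via 172.18.0.1 is an assumption (the usual gateway of KIND's Docker network). It is included only to show that the more specific pod routes win. `Route` and `lookup` are hypothetical names.

```python
import ipaddress
from typing import List, NamedTuple, Optional


class Route(NamedTuple):
    dst: ipaddress.IPv4Network
    via: Optional[str]  # next hop; None for a directly connected subnet
    dev: str


def lookup(routes: List[Route], dst_ip: str) -> Route:
    """Return the matching route with the longest prefix, as a single kernel routing table would."""
    addr = ipaddress.ip_address(dst_ip)
    matches = [r for r in routes if addr in r.dst]
    if not matches:
        raise LookupError(f"no route to {dst_ip}")
    return max(matches, key=lambda r: r.dst.prefixlen)


WORKER_NODE_ROUTES = [
    Route(ipaddress.ip_network("0.0.0.0/0"), "172.18.0.1", "eth0"),          # assumed default route
    Route(ipaddress.ip_network("10.244.0.0/24"), "10.244.0.0", "flannel.1"),  # master's pods, via its VTEP
    Route(ipaddress.ip_network("10.244.1.0/24"), None, "cni0"),               # local pods on the bridge
]

r = lookup(WORKER_NODE_ROUTES, "10.244.0.2")
print(r.via, r.dev)  # 10.244.0.0 flannel.1
r = lookup(WORKER_NODE_ROUTES, "10.244.1.10")
print(r.via, r.dev)  # None cni0
```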

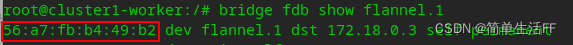

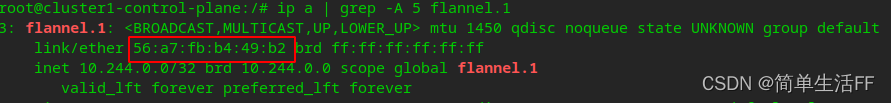

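The MAC address of the master's flannel.1 (10.244.0.0) is already recorded in the FDB of the worker's flannel.1. In the screenshot above, 56:a7:fb:b4:49:b2 is that MAC address.

The FDB entry maps this MAC to the master node's IP (172.18.0.3). That mapping tells the worker which host to send the VXLAN-encapsulated packet to.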
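Sending from the worker to a pod on the master takes three lookups:

1. The route picks next hop 10.244.0.0 on flannel.1.
2. The neighbor (ARP) entry turns that next hop into the master VTEP's MAC.
3. The FDB turns that MAC into the master node IP used for the outer UDP packet.

Below is a Python sketch with the values from this article. Only the MAC is visible in the screenshot, so the neighbor entry and the FDB destination are assumptions about what flannel programs for the master. VNI 1 comes from the flanneld log at the end of this article. 8472 is the Linux kernel's default VXLAN port, which flannel uses when its log shows Port=0. `encapsulate` is a hypothetical helper.

```python
# Worker-side state for reaching the master's flannel.1 (values from this article).
NEIGHBORS = {"10.244.0.0": "56:a7:fb:b4:49:b2"}  # ip neigh on flannel.1: VTEP IP -> VTEP MAC (assumed entry)
FDB = {"56:a7:fb:b4:49:b2": "172.18.0.3"}        # bridge fdb on flannel.1: VTEP MAC -> node IP (assumed entry)
LOCAL_NODE_IP = "172.18.0.2"                      # the worker node
VNI = 1                                           # "VXLAN config: VNI=1" in the flanneld log
VXLAN_PORT = 8472                                 # Linux kernel default VXLAN UDP port


def encapsulate(next_hop: str) -> dict:
    """Resolve the outer headers for an inner packet routed 'via next_hop dev flannel.1'."""
    vtep_mac = NEIGHBORS[next_hop]  # becomes the inner destination MAC
    remote_node = FDB[vtep_mac]     # becomes the outer destination IP
    return {
        "inner_dst_mac": vtep_mac,
        "outer_src_ip": LOCAL_NODE_IP,
        "outer_dst_ip": remote_node,
        "udp_dst_port": VXLAN_PORT,
        "vni": VNI,
    }


print(encapsulate("10.244.0.0"))
```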

Summary

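The conclusion of the article "k8s网络之Flannel网络" (Kubernetes networking: the Flannel network) explains this well, so I quote it directly:

Overall, flannel is best seen as an extension of the classic bridge mode. In bridge mode, the containers on each host use a default subnet, and containers can talk to each other and to the host.

Now suppose we could manually configure each host's subnet so that none of them conflict. Then we find a way to deliver traffic destined for containers on other hosts to the right host:

- If all hosts in the cluster are in the same subnet, add a route to forward it.
- If they are not, set up a tunnel to forward it.

That solves cross-host container communication. What flannel does is simply automate this work.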
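The quote's "route if on the same subnet, tunnel otherwise" idea can be sketched in a few lines of Python. This illustrates the idea and is not flannel's code. `plan_routes` is hypothetical, the underlay subnet 172.18.0.0/16 is an assumed KIND Docker network, and eth0 is taken from the flanneld log.

In flannel itself, the direct-route branch corresponds to the host-gw backend, or to vxlan with DirectRouting enabled. The flanneld log later shows DirectRouting=false, which is why this cluster tunnels even between nodes on the same subnet.

```python
import ipaddress
from typing import Dict, List, Tuple


def plan_routes(local: str, nodes: Dict[str, Tuple[str, str]],
                underlay_cidr: str, direct_routing: bool = True) -> List[str]:
    """Routes `local` needs to reach every other node's pod subnet, in `ip route` syntax.

    nodes maps node name -> (node IP, pod subnet).
    """
    underlay = ipaddress.ip_network(underlay_cidr)
    local_ip = ipaddress.ip_address(nodes[local][0])
    routes = []
    for name, (node_ip, pod_subnet) in sorted(nodes.items()):
        if name == local:
            continue
        same_subnet = local_ip in underlay and ipaddress.ip_address(node_ip) in underlay
        if direct_routing and same_subnet:
            # Peer is directly reachable: forward its pod subnet straight to its node IP.
            routes.append(f"{pod_subnet} via {node_ip} dev eth0")
        else:
            # Otherwise tunnel: the next hop is the peer's VTEP, which uses its
            # subnet's network address (10.244.0.0 for the master).
            vtep = ipaddress.ip_network(pod_subnet).network_address
            routes.append(f"{pod_subnet} via {vtep} dev flannel.1 onlink")
    return routes


NODES = {
    "cluster1-control-plane": ("172.18.0.3", "10.244.0.0/24"),
    "cluster1-worker": ("172.18.0.2", "10.244.1.0/24"),
}
print(plan_routes("cluster1-worker", NODES, "172.18.0.0/16"))
# ['10.244.0.0/24 via 172.18.0.3 dev eth0']
print(plan_routes("cluster1-worker", NODES, "172.18.0.0/16", direct_routing=False))
# ['10.244.0.0/24 via 10.244.0.0 dev flannel.1 onlink']
```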

Follow-up

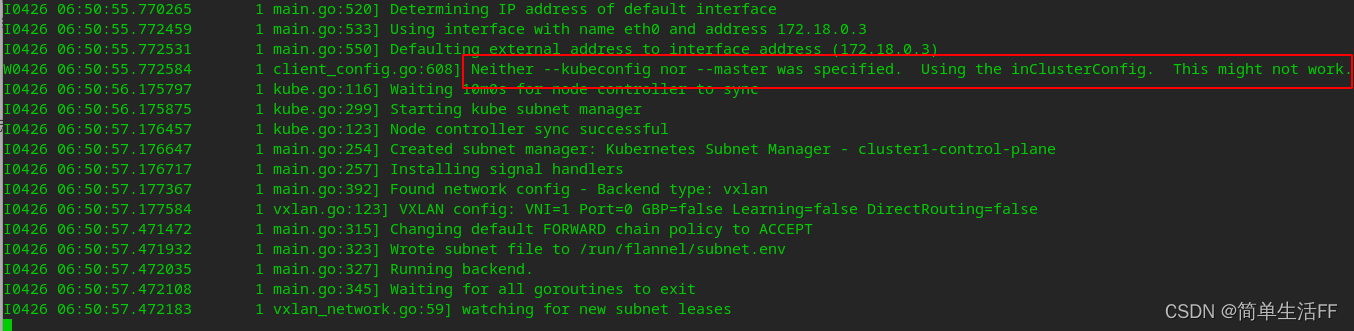

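The flannel log contains this warning:

```
client_config.go:608] Neither --kubeconfig nor --master was specified. Using the inClusterConfig. This might not work.
```

It does not seem to cause any problems. flanneld runs in a pod under the flannel ServiceAccount, so the in-cluster config it falls back to works.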
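"In-cluster config" means client-go builds its connection settings from what Kubernetes injects into every pod. That is the `KUBERNETES_SERVICE_HOST`/`KUBERNETES_SERVICE_PORT` environment variables, plus the ServiceAccount token and CA certificate mounted under /var/run/secrets/kubernetes.io/serviceaccount.

Below is a simplified Python sketch of that fallback. The helper name is hypothetical, and client-go's own implementation differs in detail.

```python
import os
from typing import Dict, Mapping

SA_DIR = "/var/run/secrets/kubernetes.io/serviceaccount"


def in_cluster_config(env: Mapping[str, str] = os.environ, sa_dir: str = SA_DIR) -> Dict[str, str]:
    """Build API server connection settings from a pod's injected env vars and ServiceAccount files."""
    host = env.get("KUBERNETES_SERVICE_HOST")
    port = env.get("KUBERNETES_SERVICE_PORT")
    if not host or not port:
        raise RuntimeError("not running in a pod: KUBERNETES_SERVICE_HOST/PORT are unset")
    if ":" in host:  # bracket IPv6 addresses in the URL
        host = f"[{host}]"
    with open(os.path.join(sa_dir, "token")) as f:
        token = f.read().strip()
    return {
        "server": f"https://{host}:{port}",
        "token": token,
        "ca_file": os.path.join(sa_dir, "ca.crt"),
    }
```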

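To pass a kubeconfig explicitly instead, add flanneld's `--kubeconfig-file` startup argument. The warning uses client-go's flag name `--kubeconfig`, but flanneld's flag is `--kubeconfig-file`. The steps are:

```shell
# On the master node
# Note: in this cluster the apiserver address is 172.18.0.3 and the cluster name is cluster1
# Change to the directory where the config file should be generated, e.g. /usr/local/flannel-wf/ here
kubectl config set-cluster cluster1 --kubeconfig=flannel.conf --embed-certs --server=https://172.18.0.3:6443 --certificate-authority=/etc/kubernetes/pki/ca.crt
kubectl config set-credentials flannel --kubeconfig=flannel.conf --token=$(kubectl get sa -n kube-system flannel -o jsonpath='{.secrets[0].name}' | xargs kubectl get secret -n kube-system -o jsonpath='{.data.token}' | base64 -d)
kubectl config set-context cluster1 --kubeconfig=flannel.conf --user=flannel --cluster=cluster1
# Copy the generated flannel.conf to the same directory on the other nodes (the DaemonSet mounts it via hostPath)
```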

```
# cat flannel.conf
apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUM1ekNDQWMrZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFWTVJNd0VRWURWUVFERXdwcmRXSmwKY201bGRHVnpNQjRYRFRJeU1EUXlOVEEyTURjd01Wb1hEVE15TURReU1qQTJNRGN3TVZvd0ZURVRNQkVHQTFVRQpBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKS29aSWh2Y05BUUVCQlFBRGdnRVBBRENDQVFvQ2dnRUJBTUFYCjNONHUzR2xya1NNdXNDZjRTZkdSQzUzSGMwRDYvb29xeTBGZnIvUURYTmpnYVdXNzVMOTlaZ1JnRG1qSTVhZ1UKMlVxOTRQWFJ5NHNjSy9xZmlNQjdjS0NhSkVJSTJ1YUowcTlKdWlSUkd1REwwZEFSbTk4dU1LMlIzcHF4MXhMMAoyQUcybk0wcnorMGpMRkZ5UkxHQmFhL1hsNzFiRy9nK3FVVUpuMFRiNjNXb1d0c3BMT1pIVDArS1A3dm41TFZNCjNzYUdjWDRxSGswOWZPZEFCYzlrZGFKRUd3dU9ZQ0NpNmUzVnM0T3I1WFNobFl1RWc0OW9oeXZZQU5JQzdCa3gKbVNveXVQM09neEhHSyttS3hRYUxnK1JEZTc3UmgydEQ5WDNZb0haaHVWMk44QWs5Z1NzWHdVZ25NOHQzQWpQNwo5d2xmcmhQZG1iYWZwN1JIYWZVQ0F3RUFBYU5DTUVBd0RnWURWUjBQQVFIL0JBUURBZ0trTUE4R0ExVWRFd0VCCi93UUZNQU1CQWY4d0hRWURWUjBPQkJZRUZKNENmNjFQOTlPY2czbEprc3JjOU1TZlp6aUlNQTBHQ1NxR1NJYjMKRFFFQkN3VUFBNElCQVFDdGFVaEpnaTRXQis4NlEyTUN3WTdRZ2pUc2hPZ2t1UWlrZmRJZ3lDTjFyZ0ZiUTUyVQo3VHBHRS9aa09HdVRLOVRQUkgvUTJNWGNJejhiZHJoL245bFcveEViNklXUnBvaWF4U25tVTkrNHlxZ0pISkdlClVTbUZhZExIQjdaRHFlNnZZdmV6aWRPOWZ1OWRtNXFKUnJOdllxc0s4MVkvM3Y4b0NYbmQ1Uk12eFRoVjlhbFAKR2dHYTk5OGFXT1B6OHFEdHFIL3NkVnJ0UmpWOWVTSVJGNVg1ZE04bDlyTkNNZE43eGt4VGY5TG82V21kQXhseApMR3FYR2hnb1BHbHdIQnhPUGtLanBqZzRTZHpCZmFVZ1J0a1ZJUERsdW1Icm94RVNtZXlQNkc4UUVVY1AzVkJRCkJ3anRzb21aYnp4RWRhUCsydXJ5a05TMXY4cmFONGFXQkRlSAotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==
    server: https://172.18.0.3:6443
  name: cluster1
contexts:
- context:
    cluster: cluster1
    user: flannel
  name: cluster1
current-context: ""
kind: Config
preferences: {}
users:
- name: flannel
  user:
    token: eyJhbGciOiJSUzI1NiIsImtpZCI6ImNPbkpfSkFlVTBWa2RfVjVqZ1d6LXNPUDk1VldSY2NVb1M0empJS3hQQ1EifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJmbGFubmVsLXRva2VuLXM0ajI5Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImZsYW5uZWwiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI2NzFmNDNiZS0zZjYzLTQwMjktOTQ4Ny1lYWZjZDhmMmQ5ZmIiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06Zmxhbm5lbCJ9.kHo7v-sKNDiRtOceIcngXzpY83Tp1Fnnyo6ueaDoSffAe3dBqW_W2ExU6F99DpujM6mnmlIUXXAq0Nbv6eANagK4fwGVD20so-mM9bVsOVJP74UufQh9oYhT77BHMMfryW7n3Q-ujerSswSvq5XFCax1WAOhQXYitIETIkD3ePKf5_HteRLLjvCTC2yuFNuYK3ZwpcOdypfvm28p_K6Ly0YW1EjOcN7UJ0FGMTAqJ71jeAT2OlVNxstuhNuMNOIfgyhYZ8lppxPuqh073EjTi0Ss59fRpFJCzbaEsEiIAwiel_hVw8xkNR7zCRvKQMlL5lmF-juMCXeoHeBKPcXwLQ
```
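The token is a JWT whose middle segment is base64url-encoded JSON. That means you can check which ServiceAccount it belongs to without contacting the cluster. Below is a minimal Python sketch using a hypothetical helper. It only decodes the payload and does not verify the signature.

```python
import base64
import json


def jwt_claims(token: str) -> dict:
    """Decode the payload (second segment) of a JWT without verifying its signature."""
    payload = token.split(".")[1]
    payload += "=" * (-len(payload) % 4)  # JWTs strip base64 padding; restore it before decoding
    return json.loads(base64.urlsafe_b64decode(payload))
```

Applied to the token in flannel.conf above, `jwt_claims(token)["sub"]` should be `system:serviceaccount:kube-system:flannel`.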

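Modify the flannel deployment YAML, changing only the DaemonSet. Add the `--kubeconfig-file` startup argument, and mount the config file from a host directory into the pod.

The ClusterRole, ServiceAccount, ConfigMap and other objects stay unchanged.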

```yaml
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.14.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.14.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq=false
        - --kube-subnet-mgr
        - --kubeconfig-file=/usr/local/flannel-wf/flannel.conf  # added startup argument
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: true
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: kube-cfg                    # added
          mountPath: /usr/local/flannel-wf  # mounted from the host
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      # added
      - name: kube-cfg
        hostPath:
          path: /usr/local/flannel-wf  # directory on the host
```

After redeploying, the startup log no longer contains that warning:

```
$ sudo kubectl logs -f kube-flannel-ds-dn59l -n kube-system
I0426 07:06:52.970794 1 main.go:520] Determining IP address of default interface
I0426 07:06:52.971228 1 main.go:533] Using interface with name eth0 and address 172.18.0.3
I0426 07:06:52.971252 1 main.go:550] Defaulting external address to interface address (172.18.0.3)
I0426 07:06:53.676497 1 kube.go:116] Waiting 10m0s for node controller to sync
I0426 07:06:53.676660 1 kube.go:299] Starting kube subnet manager
I0426 07:06:54.676663 1 kube.go:123] Node controller sync successful
I0426 07:06:54.676715 1 main.go:254] Created subnet manager: Kubernetes Subnet Manager - cluster1-control-plane
I0426 07:06:54.676724 1 main.go:257] Installing signal handlers
I0426 07:06:54.676988 1 main.go:392] Found network config - Backend type: vxlan
I0426 07:06:54.677234 1 vxlan.go:123] VXLAN config: VNI=1 Port=0 GBP=false Learning=false DirectRouting=false
I0426 07:06:54.873344 1 main.go:315] Changing default FORWARD chain policy to ACCEPT
I0426 07:06:54.873695 1 main.go:323] Wrote subnet file to /run/flannel/subnet.env
I0426 07:06:54.873720 1 main.go:327] Running backend.
I0426 07:06:54.873758 1 main.go:345] Waiting for all goroutines to exit
I0426 07:06:54.873809 1 vxlan_network.go:59] watching for new subnet leases
```