Implementation

- 平台

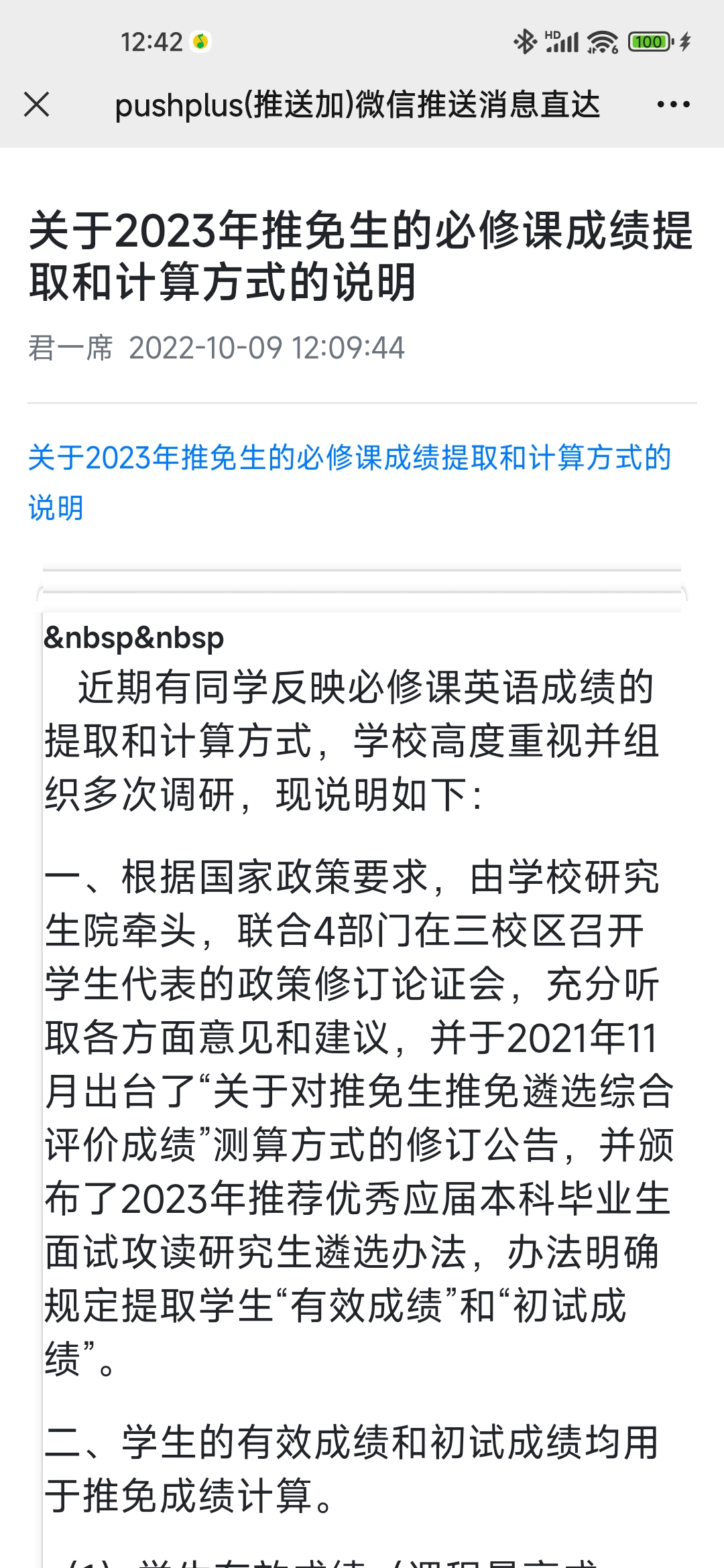

pushplus这个平台直接调用api接口就行了

- Target site

Inspecting the page source shows that each notice is a table row matched by the following XPath:

/html/body/form/div/div[2]/table/tbody/tr

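After extracting the URL of each notice, we put the URLs into a set and save them as JSON. After a set interval the list is crawled again. We take the union of the previous and current sets and then subtract the previous set, which leaves only the URLs that are new. If the result is not empty, we fetch the article content of each new URL, page by page, and then call the platform API to send the message.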
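The comparison step described above can be sketched in isolation. This is a minimal illustration, not the post's exact code: the helper names `new_urls`, `dump_urls`, and `load_urls` and the sample URLs are hypothetical. It also shows that the union followed by a difference is the same as a plain set difference.

```python
import json


def new_urls(previous, current):
    """Return URLs present in `current` but not in `previous`."""
    return current - previous


def dump_urls(urls):
    """Serialize a set as JSON; sets are not JSON types, so use a sorted list."""
    return json.dumps(sorted(urls))


def load_urls(text):
    """Rebuild the set from its JSON list form."""
    return set(json.loads(text))


# Hypothetical sample data standing in for two consecutive crawls.
previous = load_urls('["a.asp?id=1", "a.asp?id=2"]')
current = {"a.asp?id=2", "a.asp?id=3"}
fresh = new_urls(previous, current)
```

Sorting before dumping is only a convenience: it keeps the saved file stable between runs, which makes it easier to inspect by hand.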

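To automate everything, the script can be deployed on a server and run on a schedule. Note, however, that the academic affairs site shuts down in the early hours of every day.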
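One way to account for the nightly shutdown is to skip scheduled runs that fall inside the closed window. The sketch below is an assumption-laden illustration: the function `site_is_open` is hypothetical, and the window of 00:00 to 06:00 is a placeholder, since the post does not state the real hours.

```python
from datetime import datetime, time

# Hypothetical closed window; the actual hours are not given in this post.
CLOSED_FROM = time(0, 0)
CLOSED_UNTIL = time(6, 0)


def site_is_open(now):
    """Return True when `now` falls outside the assumed closed window."""
    return not (CLOSED_FROM <= now.time() < CLOSED_UNTIL)
```

A scheduled job could call `site_is_open(datetime.now())` first and exit quietly when it returns `False`, so that a closed site is not reported as a crawl failure.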

Code

from lxml import etree
import requests
import json

def get_page(url):
    """Fetch one notice page and return (title nodes, HTML for the push message)."""
    header = {
        "accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9",
        # "br" is left out: requests only decodes Brotli when an extra package is installed.
        "accept-encoding": "gzip, deflate",
        "accept-language": "zh-CN,zh;q=0.9,en;q=0.8,en-GB;q=0.7,en-US;q=0.6",
        "referer": "https://jiaowu.sicau.edu.cn/web/web/web/index.asp",
        "sec-ch-ua": '"Chromium";v="106", "Microsoft Edge";v="106", "Not;A=Brand";v="99"',
        "sec-ch-ua-mobile": "?0",
        "sec-ch-ua-platform": '"Windows"',
        "sec-fetch-dest": "document",
        "sec-fetch-mode": "navigate",
        "sec-fetch-site": "same-origin",
        "sec-fetch-user": "?1",
        "upgrade-insecure-requests": "1",
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36 Edg/106.0.1370.37"
    }
    s = requests.get(url, headers=header)
    # The response bytes are decoded as GBK before parsing.
    html = etree.HTML(s.content.decode('gbk'))
    title = html.xpath("/html/body/div[@class='page-title-2']/text()")
    txt = html.xpath("//table[@align='center']/tbody/tr/td/table")
    # The third matching table is taken as the article body and serialized back to HTML.
    txt = etree.tostring(txt[2], encoding='utf-8').decode('utf-8')
    # A <base> tag lets relative links and images in the body resolve against the notice URL.
    txt = "<head><base href=\"" + url + "\" /></head><p><a href=\"" + url + "\">" + title[0] + "</a></p>" + txt
    return title, txt

def vx_msg(title, content):
    """Send one message through the pushplus API and report the result."""
    token = 'your pushplus token'
    url = 'http://www.pushplus.plus/send'
    data = {
        "token": token,
        "title": title,
        "content": content
    }
    body = json.dumps(data).encode(encoding='utf-8')
    headers = {'Content-Type': 'application/json'}
    r = requests.post(url, data=body, headers=headers)
    j = r.json()
    if j["code"] == 200:
        print("Sent successfully")
    else:
        print("Send failed")

def get_data():
    """Return the set of notice URLs currently shown on the list page."""
    header = {
        "accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9",
        # "br" is left out: requests only decodes Brotli when an extra package is installed.
        "accept-encoding": "gzip, deflate",
        "accept-language": "zh-CN,zh;q=0.9,en;q=0.8,en-GB;q=0.7,en-US;q=0.6",
        "referer": "https://jiaowu.sicau.edu.cn/web/web/web/index.asp",
        "sec-ch-ua": '"Chromium";v="106", "Microsoft Edge";v="106", "Not;A=Brand";v="99"',
        "sec-ch-ua-mobile": "?0",
        "sec-ch-ua-platform": '"Windows"',
        "sec-fetch-dest": "document",
        "sec-fetch-mode": "navigate",
        "sec-fetch-site": "same-origin",
        "sec-fetch-user": "?1",
        "upgrade-insecure-requests": "1",
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36 Edg/106.0.1370.37"
    }
    try:
        s = requests.get("https://jiaowu.sicau.edu.cn/web/web/web/gwmore.asp", headers=header)
        html = etree.HTML(s.content.decode('gbk'))
        result = html.xpath("/html/body/form/div/div[2]/table/tbody/tr")
        ls = set()
        for i in result:
            # date = i.xpath("./td[4]/text()")
            href = "".join(i.xpath("./td[3]/div/a/@href"))
            # title = i.xpath("./td[3]/div/a/font/text()")
            if not href:
                # Skip rows without a link so the bare base URL never enters the set.
                continue
            ls.add("https://jiaowu.sicau.edu.cn/web/web/web/" + href)
        return ls
    except Exception:
        print("Failed to fetch the notice list")
        # Re-raise so the caller does not continue with a missing result.
        raise

def send(url):
    title, content = get_page(url)
    vx_msg(title[0], content)

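def read():
    """Load the URLs saved by the previous run; the first run starts from an empty set."""
    try:
        with open('data.json', 'r') as file:
            return set(json.loads(file.read()))
    except FileNotFoundError:
        # No saved state yet, so every notice on the list counts as new.
        return set()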

def save(data):
    """Write the current URL list to data.json for the next run."""
    with open('data.json', 'w') as file:
        file.write(json.dumps(data))

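try:
    ls = get_data()
    data = read()
    # Union, then subtract the previous set: URLs present now but absent last time.
    s = (data | ls) - data
    if len(s) != 0:
        for i in s:
            print(i)
            send(i)
        save(list(ls))
    else:
        print("No new notices")
except Exception:
    print("Crawl failed")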
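The list scraper above builds absolute URLs by concatenating a fixed prefix with each `href`. As a possible alternative, the standard library's `urljoin` resolves an `href` against the list page URL and also handles `../` and root-relative links. The helper name `absolute` and the sample `href` values are hypothetical; the real `href` format on the site is not shown in this post.

```python
from urllib.parse import urljoin

# URL of the notice list page, as used in get_data().
LIST_URL = "https://jiaowu.sicau.edu.cn/web/web/web/gwmore.asp"


def absolute(href):
    """Resolve a possibly relative href against the list page URL."""
    return urljoin(LIST_URL, href)
```

For a plain relative `href` the result matches the string concatenation used in `get_data()`, so switching would not change the URLs already stored in `data.json`.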