The HashMap described in this article is explained against the JDK 1.8 implementation.

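Preface: HashMap is one of the most frequently used classes of the Java Collections Framework. It is a hash-table-based implementation of the Map interface and stores its data as key-value pairs.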

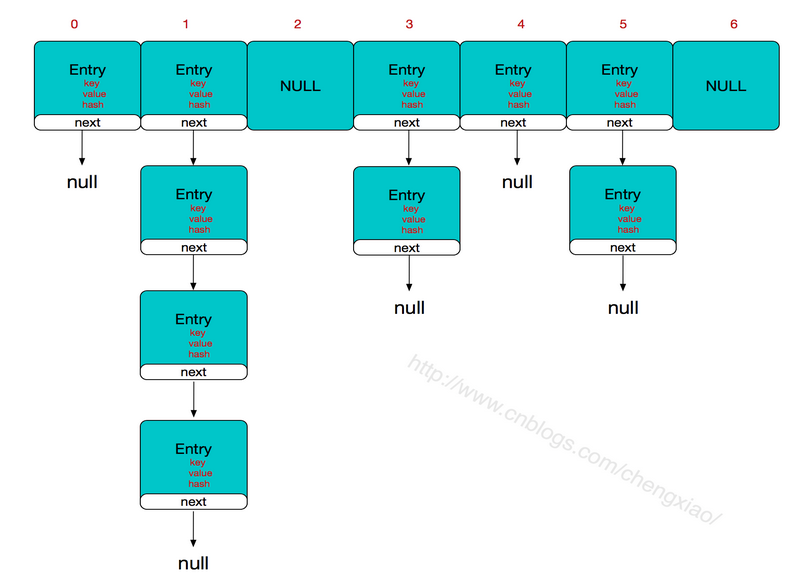

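HashMap data structure

HashMap stores its data as an array plus linked lists (the source code below shows this). Since JDK 1.8 a long linked list can also be converted to a red-black tree.

Array trade-offs: lookup by index is fast, but inserting or deleting an element in the middle of an array is expensive.

Linked-list trade-offs: finding an element requires traversing the list, while insertion and deletion are fast. A linked list therefore suits workloads with frequent inserts and deletes and is a poor fit for lookups.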

    /**
     * The table, initialized on first use, and resized as
     * necessary. When allocated, length is always a power of two.
     * (We also tolerate length zero in some operations to allow
     * bootstrapping mechanics that are currently not needed.)
     */
    transient Node<K,V>[] table;

    /**
     * Basic hash bin node, used for most entries. (See below for
     * TreeNode subclass, and in LinkedHashMap for its Entry subclass.)
     */
    static class Node<K,V> implements Map.Entry<K,V> {
        final int hash;    // hash of the key
        final K key;       // key of the key-value pair
        V value;           // value of the key-value pair
        Node<K,V> next;    // next node in the same bucket's linked list

        Node(int hash, K key, V value, Node<K,V> next) {
            this.hash = hash;
            this.key = key;
            this.value = value;
            this.next = next;
        }
        // ... remaining methods omitted
    }

Only the key parts of the Node class are shown here.

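Key facts about HashMap

The default initial capacity is 16.

The default load factor is 0.75 (once the number of entries exceeds capacity × load factor, which is 12 for the defaults, the HashMap is resized).

Each resize doubles the capacity of the HashMap.

A bucket's linked list holds at most 8 nodes. When it grows beyond 8, the list is converted to a red-black tree (new in JDK 1.8), provided the array length is at least 64; for a smaller array the map is resized instead.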
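The relationship between capacity, load factor and resize threshold can be worked through numerically. The following is a minimal sketch, not the JDK code: the class name ThresholdSketch and the helper threshold are made up for illustration, and the helper simply multiplies capacity by load factor as described above.

```java
class ThresholdSketch {
    // Hypothetical helper: the entry count above which a resize is triggered.
    static int threshold(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    public static void main(String[] args) {
        int capacity = 16;         // default initial capacity
        float loadFactor = 0.75f;  // default load factor
        for (int i = 0; i < 4; i++) {
            System.out.println("capacity=" + capacity
                    + " threshold=" + threshold(capacity, loadFactor));
            capacity <<= 1;        // each resize doubles the capacity
        }
    }
}
```

With the defaults, the 13th entry is the one that pushes the size above the threshold of 12.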

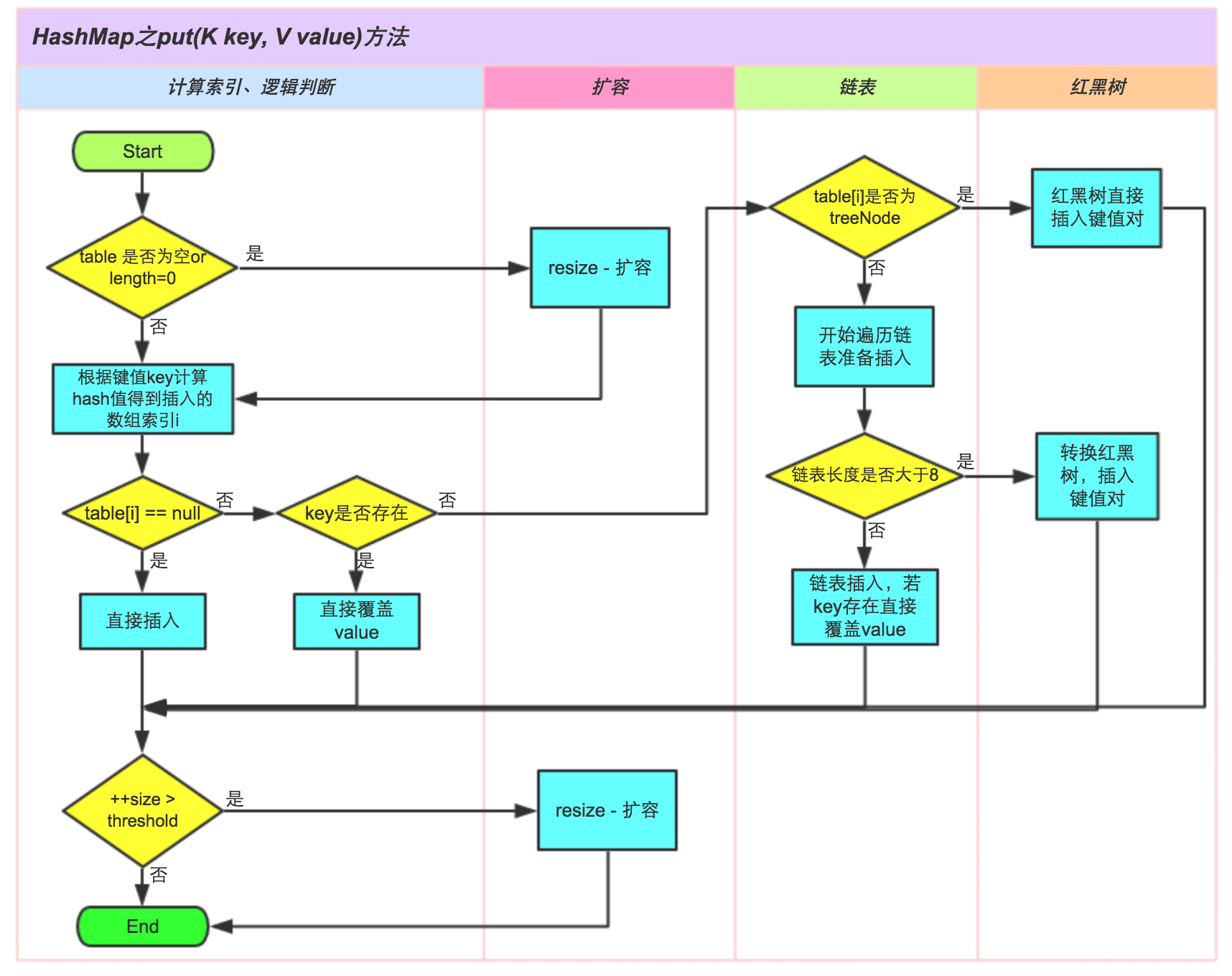

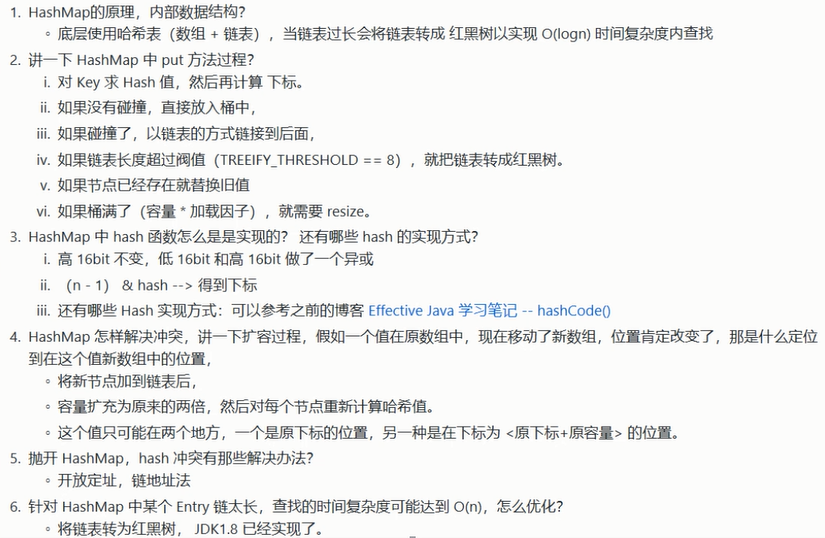

What happens behind the scenes when HashMap put is called

Data is stored in a HashMap with map.put(key, value).

put first calls the hash method to compute the hash of the key.

    /**
     * Associates the specified value with the specified key in this map.
     * If the map previously contained a mapping for the key, the old
     * value is replaced.
     *
     * @param key key with which the specified value is to be associated
     * @param value value to be associated with the specified key
     * @return the previous value associated with <tt>key</tt>, or
     *         <tt>null</tt> if there was no mapping for <tt>key</tt>.
     *         (A <tt>null</tt> return can also indicate that the map
     *         previously associated <tt>null</tt> with <tt>key</tt>.)
     */
    public V put(K key, V value) {
        return putVal(hash(key), key, value, false, true);
    }
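The return value described in the Javadoc above is easy to misread, so here is a small usage sketch. The class name PutReturnSketch and the method run are illustrative only; the calls themselves are the standard java.util.Map API.

```java
import java.util.HashMap;
import java.util.Map;

class PutReturnSketch {
    // Returns the three values handed back by put, plus the final value for "a".
    static Integer[] run() {
        Map<String, Integer> map = new HashMap<>();
        Integer first = map.put("a", 1);   // no previous mapping: returns null
        Integer second = map.put("a", 2);  // overwrites 1 and returns it
        map.put("b", null);                // HashMap permits null values
        Integer third = map.put("b", 3);   // previous value was null: returns null
        return new Integer[] { first, second, third, map.get("a") };
    }

    public static void main(String[] args) {
        for (Integer value : run()) {
            System.out.println(value);
        }
    }
}
```

A null return therefore does not prove the key was absent; containsKey is the call to use when that distinction matters.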

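What the hash method does: the high 16 bits stay unchanged, and the low 16 bits are XORed with the high 16 bits. The high bits thereby take part in choosing the array index, which lowers the probability of collisions.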

    /**
     * Computes key.hashCode() and spreads (XORs) higher bits of hash
     * to lower. Because the table uses power-of-two masking, sets of
     * hashes that vary only in bits above the current mask will
     * always collide. (Among known examples are sets of Float keys
     * holding consecutive whole numbers in small tables.) So we
     * apply a transform that spreads the impact of higher bits
     * downward. There is a tradeoff between speed, utility, and
     * quality of bit-spreading. Because many common sets of hashes
     * are already reasonably distributed (so don't benefit from
     * spreading), and because we use trees to handle large sets of
     * collisions in bins, we just XOR some shifted bits in the
     * cheapest possible way to reduce systematic lossage, as well as
     * to incorporate impact of the highest bits that would otherwise
     * never be used in index calculations because of table bounds.
     */
    static final int hash(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }
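To see the effect of the XOR, consider two hash codes that differ only in their high 16 bits. The sketch below is an illustration under assumptions: the class HashSpreadSketch and its helpers spread and index are invented names that apply the same transform and the same mask to raw int values, and the two sample hash codes are chosen by hand.

```java
class HashSpreadSketch {
    // Same transform as HashMap.hash(), applied to a raw hashCode value.
    static int spread(int h) {
        return h ^ (h >>> 16);
    }

    // Bucket index for an array of length n (n must be a power of two).
    static int index(int hash, int n) {
        return (n - 1) & hash;
    }

    public static void main(String[] args) {
        int n = 16;
        int h1 = 0x00010000;  // differs from h2 only above bit 15
        int h2 = 0x00020000;
        // Without spreading, both land in bucket 0.
        System.out.println(index(h1, n) + " " + index(h2, n));
        // With spreading, the high bits reach the index: buckets 1 and 2.
        System.out.println(index(spread(h1), n) + " " + index(spread(h2), n));
    }
}
```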

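Next comes the method that does the real work of put.

Its second step uses the key's hash to work out which array index the entry belongs at.

It ANDs the key's hash with the current array length minus one: (n - 1) & hash. The & operation compares the two numbers bit by bit in binary, and a result bit is 1 only when both corresponding bits are 1; otherwise it is 0. Because the array length n is always a power of two, n - 1 is a mask whose low bits are all 1, so the result always falls between 0 and n - 1 and equals hash modulo n at a lower cost. Combined with the bit spreading in hash(), this distributes nodes evenly and lowers the collision rate; a high collision rate would inevitably produce overly long linked lists under individual array indexes.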

    final V putVal(int hash, K key, V value, boolean onlyIfAbsent,
                   boolean evict) {
        Node<K,V>[] tab; Node<K,V> p; int n, i;
        // create the table on first use
        if ((tab = table) == null || (n = tab.length) == 0)
            n = (tab = resize()).length;
        // compute the index; if the bucket is empty, place a new node there
        if ((p = tab[i = (n - 1) & hash]) == null)
            tab[i] = newNode(hash, key, value, null);
        else {
            Node<K,V> e; K k;
            // the first node already holds this key: its value is overwritten below
            if (p.hash == hash &&
                ((k = p.key) == key || (key != null && key.equals(k))))
                e = p;
            // the bin is a red-black tree
            else if (p instanceof TreeNode)
                e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);
            // the bin is a linked list
            else {
                for (int binCount = 0; ; ++binCount) {
                    if ((e = p.next) == null) {
                        p.next = newNode(hash, key, value, null);
                        // the list now holds more than 8 nodes: convert it to a red-black tree
                        if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st
                            treeifyBin(tab, hash);
                        break;
                    }
                    // the key already exists in the list: its value is overwritten below
                    if (e.hash == hash &&
                        ((k = e.key) == key || (key != null && key.equals(k))))
                        break;
                    p = e;
                }
            }
            if (e != null) { // existing mapping for key
                V oldValue = e.value;
                if (!onlyIfAbsent || oldValue == null)
                    e.value = value;
                afterNodeAccess(e);
                return oldValue;
            }
        }
        ++modCount;
        // resize once size exceeds the threshold (capacity * load factor)
        if (++size > threshold)
            resize();
        afterNodeInsertion(evict);
        return null;
    }
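The index step in putVal, (n - 1) & hash, behaves like a modulo only when n is a power of two. The following sketch tries to make that concrete; IndexMaskSketch, maskIndex and distinctIndexes are hypothetical names, and the length 10 is used purely as a counterexample (HashMap itself never allocates such an array).

```java
class IndexMaskSketch {
    // The same expression putVal uses to pick a bucket.
    static int maskIndex(int hash, int n) {
        return (n - 1) & hash;
    }

    // Counts how many different buckets the hashes 0..1023 can reach.
    static int distinctIndexes(int n) {
        boolean[] seen = new boolean[n];
        int count = 0;
        for (int h = 0; h < 1024; h++) {
            int i = maskIndex(h, n);
            if (!seen[i]) {
                seen[i] = true;
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        int[] hashes = { 5, 21, 37, -1, 123456789 };
        for (int h : hashes) {
            // For n = 16 the mask agrees with floorMod, even for negative hashes.
            System.out.println(h + " -> " + maskIndex(h, 16)
                    + " (floorMod " + Math.floorMod(h, 16) + ")");
        }
        System.out.println("reachable buckets, n=16: " + distinctIndexes(16));
        System.out.println("reachable buckets, n=10: " + distinctIndexes(10));
    }
}
```

With n = 10 the mask is binary 1001, so only buckets 0, 1, 8 and 9 are ever used; this is the reason the array length is kept at a power of two.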


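HashMap resize()

HashMap always grows by doubling. Why double rather than grow by 1.5× or some other factor? The hashing and & masking that put applies to the key exist to reduce collisions, and the & step in particular only works when the array length is a power of two. Doubling a power of two yields another power of two; any other factor would throw that work away.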

    else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&
             oldCap >= DEFAULT_INITIAL_CAPACITY)
        newThr = oldThr << 1; // double threshold

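After a resize, every existing entry is redistributed into the new, larger array. Why? Because the index computed from a key's hash depends on the array length, so the same hash can map to a different index once the length changes. In JDK 1.8 the hash stored in each node is reused rather than recomputed: a node either stays at its old index or moves to old index + old capacity, depending on whether (hash & oldCap) is 0.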
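A few hand-picked hashes show how the index changes when the array doubles from 16 to 32. This is a sketch under assumptions, not the resize() source: ResizeSplitSketch, index and indexAfterDoubling are made-up names, and indexAfterDoubling only mirrors the (e.hash & oldCap) == 0 test that JDK 1.8 uses to split each bin in two.

```java
class ResizeSplitSketch {
    // Bucket index for an array of length n (a power of two).
    static int index(int hash, int n) {
        return (n - 1) & hash;
    }

    // New index after doubling, derived from the old index and a single hash bit.
    static int indexAfterDoubling(int hash, int oldCap) {
        int oldIndex = index(hash, oldCap);
        return (hash & oldCap) == 0 ? oldIndex : oldIndex + oldCap;
    }

    public static void main(String[] args) {
        int oldCap = 16;
        int[] hashes = { 5, 21, 37, 53 };  // all share bucket 5 while the length is 16
        for (int h : hashes) {
            System.out.println("hash " + h
                    + ": old index " + index(h, oldCap)
                    + ", new index " + index(h, oldCap * 2)
                    + ", via split rule " + indexAfterDoubling(h, oldCap));
        }
    }
}
```

Hashes 5 and 37 stay in bucket 5, while 21 and 53 move to bucket 21 (5 + 16), so one crowded bucket is split across two.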

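HashMap and multithreading

HashMap is not thread-safe.

When several threads detect at the same time that the entry count exceeds the threshold, they all call resize. Each builds its own new array, rehashes into it and assigns it to the map's underlying table. Only the array from the last thread to assign survives, and whatever the other threads placed in theirs is lost. In addition, if some threads have already assigned their array while others are only starting, the latecomers use the freshly assigned table as their source array, which causes further problems.

If the map is structurally modified while an iterator is in use, whether by another thread or by the same thread through anything other than the iterator's own remove, a ConcurrentModificationException is thrown. This is the so-called fail-fast strategy. The source implements it through the modCount field. As the name suggests, modCount is a modification count: every structural modification of the HashMap (adding or removing an entry, but not overwriting the value of an existing key) increments it. When an iterator is created it copies this value into its expectedModCount. During iteration it checks whether modCount still equals expectedModCount; if not, the map has been modified behind the iterator's back.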

    abstract class HashIterator {
        Node<K,V> next;        // next entry to return
        Node<K,V> current;     // current entry
        int expectedModCount;  // for fast-fail
        int index;             // current slot

        HashIterator() {
            expectedModCount = modCount;
            Node<K,V>[] t = table;
            current = next = null;
            index = 0;
            if (t != null && size > 0) { // advance to first entry
                do {} while (index < t.length && (next = t[index++]) == null);
            }
        }

        public final boolean hasNext() {
            return next != null;
        }

        final Node<K,V> nextNode() {
            Node<K,V>[] t;
            Node<K,V> e = next;
            if (modCount != expectedModCount)
                throw new ConcurrentModificationException();
            if (e == null)
                throw new NoSuchElementException();
            if ((next = (current = e).next) == null && (t = table) != null) {
                do {} while (index < t.length && (next = t[index++]) == null);
            }
            return e;
        }
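The modCount check in nextNode() can be triggered from a single thread, which makes the fail-fast behaviour easy to reproduce. The sketch below is only an illustration: FailFastSketch and its methods are invented names, and the three-entry sample map is arbitrary. It contrasts removing through the map (which changes modCount behind the iterator's back) with removing through the iterator (which keeps expectedModCount in sync).

```java
import java.util.ConcurrentModificationException;
import java.util.HashMap;
import java.util.Iterator;
import java.util.Map;

class FailFastSketch {
    static Map<String, Integer> sample() {
        Map<String, Integer> map = new HashMap<>();
        map.put("a", 1);
        map.put("b", 2);
        map.put("c", 3);
        return map;
    }

    // Removes through the map during iteration; reports whether the iterator failed fast.
    static boolean removeViaMapThrows() {
        Map<String, Integer> map = sample();
        try {
            for (String key : map.keySet()) {
                map.remove(key);  // structural change the iterator does not know about
            }
        } catch (ConcurrentModificationException e) {
            return true;
        }
        return false;
    }

    // Removes odd values through the iterator; returns the remaining size.
    static int removeViaIterator() {
        Map<String, Integer> map = sample();
        Iterator<Map.Entry<String, Integer>> it = map.entrySet().iterator();
        while (it.hasNext()) {
            if (it.next().getValue() % 2 == 1) {
                it.remove();  // updates expectedModCount together with modCount
            }
        }
        return map.size();
    }

    public static void main(String[] args) {
        System.out.println("remove via map throws: " + removeViaMapThrows());
        System.out.println("size after iterator removal: " + removeViaIterator());
    }
}
```

The check is a best-effort bug detector rather than a synchronization mechanism, so it should not be relied on to make concurrent access safe.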