import tensorflow as tf

import numpy as np

BATCH_SIZE=8

SEED=23455

#基于seed产生随机数

rdm=np.random.RandomState(SEED)

#从X这个32行2列的矩阵中 取出一行 判断如果和小于1 给Y赋值1 如果和不小于1 给Y赋值0

#作为输入数据集的标签(正确答案)

X=rdm.rand(32,2)

#给标签加上-0.05~+0.05的随机噪声 rdm.rand()-->0~1-->除以10-->0~0.1-->0.05-->-0.05~0.05

Y_=[[x0+x1+(rdm.rand() /10.0-0.05)] for (x0,x1) in X]

print ("X:\n",X)

print ("Y_:\n",Y_)

x=tf.placeholder(tf.float32,shape=(None,2))

y_=tf.placeholder(tf.float32,shape=(None,1))

w1=tf.Variable(tf.random_normal([2,1],stddev=1,seed=1))

#预测值

y=tf.matmul(x,w1)

loss=tf.reduce_mean(tf.square(y_-y))

train_step=tf.train.GradientDescentOptimizer(0.001).minimize(loss)

with tf.Session() as sess:

init=tf.global_variables_initializer()

sess.run(init)

print('w1:\n', sess.run(w1))

#训练模型

step=30000

for i in range(step):

start=(i*BATCH_SIZE)%32

end=start+BATCH_SIZE

sess.run(train_step,feed_dict={x:X[start:end],y_:Y_[start:end]})

if i%1000==0:

total_loss=sess.run(loss,feed_dict={x:X,y_:Y_})

print("After %d training steps, w1 is :"%(i))

print('w1:\n', sess.run(w1))

print('final w1 is: \n',sess.run(w1))

'''

X:

[[ 0.83494319 0.11482951]

[ 0.66899751 0.46594987]

[ 0.60181666 0.58838408]

[ 0.31836656 0.20502072]

[ 0.87043944 0.02679395]

[ 0.41539811 0.43938369]

[ 0.68635684 0.24833404]

[ 0.97315228 0.68541849]

[ 0.03081617 0.89479913]

[ 0.24665715 0.28584862]

[ 0.31375667 0.47718349]

[ 0.56689254 0.77079148]

[ 0.7321604 0.35828963]

[ 0.15724842 0.94294584]

[ 0.34933722 0.84634483]

[ 0.50304053 0.81299619]

[ 0.23869886 0.9895604 ]

[ 0.4636501 0.32531094]

[ 0.36510487 0.97365522]

[ 0.73350238 0.83833013]

[ 0.61810158 0.12580353]

[ 0.59274817 0.18779828]

[ 0.87150299 0.34679501]

[ 0.25883219 0.50002932]

[ 0.75690948 0.83429824]

[ 0.29316649 0.05646578]

[ 0.10409134 0.88235166]

[ 0.06727785 0.57784761]

[ 0.38492705 0.48384792]

[ 0.69234428 0.19687348]

[ 0.42783492 0.73416985]

[ 0.09696069 0.04883936]]

Y_:

[[0.969797861054287], [1.1634604857835003], [1.1942714411690643], [0.53844884486018385], [0.86327606020616487], [0.83393219491487269], [0.92808933540244687], [1.6879345369421652], [0.90366745057004794], [0.51295653519175899], [0.78442523759738858], [1.299175094270699], [1.0919817282657285], [1.0880495166868347], [1.1734589741814216], [1.3098158421478576], [1.2387201482616108], [0.82896799389366127], [1.3550486329517144], [1.5786661754924429], [0.75243054841650525], [0.73263188683810321], [1.2449966435046544], [0.788097599402105], [1.5577488607336392], [0.38892569979304559], [1.0277860551407527], [0.61040422778909775], [0.85948088233563036], [0.88107574300613067], [1.1456401959033111], [0.1907476486033659]]

w1:

[[-0.81131822]

[ 1.48459876]]

After 0 training steps, w1 is :

w1:

[[-0.80974597]

[ 1.48529029]]

After 1000 training steps, w1 is :

w1:

[[-0.21939856]

[ 1.69847655]]

After 2000 training steps, w1 is :

w1:

[[ 0.08942621]

[ 1.67332804]]

After 3000 training steps, w1 is :

w1:

[[ 0.28375748]

[ 1.58544338]]

After 4000 training steps, w1 is :

w1:

[[ 0.42332521]

[ 1.49073923]]

After 5000 training steps, w1 is :

w1:

[[ 0.5311361 ]

[ 1.40545344]]

After 6000 training steps, w1 is :

w1:

[[ 0.6173259 ]

[ 1.33294022]]

After 7000 training steps, w1 is :

w1:

[[ 0.68726856]

[ 1.27260184]]

After 8000 training steps, w1 is :

w1:

[[ 0.74438614]

[ 1.22281957]]

After 9000 training steps, w1 is :

w1:

[[ 0.79115146]

[ 1.18188882]]

After 10000 training steps, w1 is :

w1:

[[ 0.82948142]

[ 1.14828289]]

After 11000 training steps, w1 is :

w1:

[[ 0.8609128 ]

[ 1.12070608]]

After 12000 training steps, w1 is :

w1:

[[ 0.88669145]

[ 1.09808242]]

After 13000 training steps, w1 is :

w1:

[[ 0.90783483]

[ 1.07952428]]

After 14000 training steps, w1 is :

w1:

[[ 0.92517716]

[ 1.06430185]]

After 15000 training steps, w1 is :

w1:

[[ 0.93940228]

[ 1.05181527]]

After 16000 training steps, w1 is :

w1:

[[ 0.95107025]

[ 1.04157281]]

After 17000 training steps, w1 is :

w1:

[[ 0.96064115]

[ 1.03317142]]

After 18000 training steps, w1 is :

w1:

[[ 0.96849167]

[ 1.02628016]]

After 19000 training steps, w1 is :

w1:

[[ 0.974931 ]

[ 1.02062762]]

After 20000 training steps, w1 is :

w1:

[[ 0.98021317]

[ 1.01599193]]

After 21000 training steps, w1 is :

w1:

[[ 0.98454493]

[ 1.01218843]]

After 22000 training steps, w1 is :

w1:

[[ 0.98809904]

[ 1.00906873]]

After 23000 training steps, w1 is :

w1:

[[ 0.99101412]

[ 1.00651038]]

After 24000 training steps, w1 is :

w1:

[[ 0.99340498]

[ 1.00441146]]

After 25000 training steps, w1 is :

w1:

[[ 0.99536628]

[ 1.00269127]]

After 26000 training steps, w1 is :

w1:

[[ 0.99697423]

[ 1.00127912]]

After 27000 training steps, w1 is :

w1:

[[ 0.99829453]

[ 1.00012016]]

After 28000 training steps, w1 is :

w1:

[[ 0.99937749]

[ 0.99917036]]

After 29000 training steps, w1 is :

w1:

[[ 1.00025988]

[ 0.99839056]]

final w1 is:

[[ 1.00097299]

[ 0.99774671]]

'''

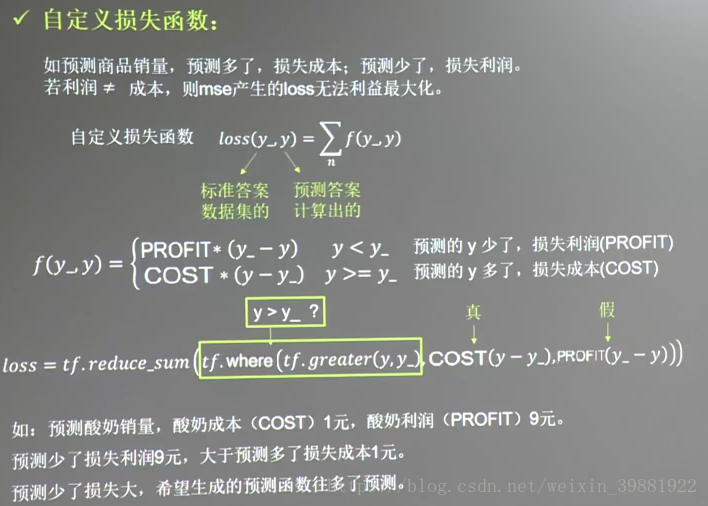

import tensorflow as tf

import numpy as np

'''

酸奶成本1元,酸奶利润9元

预测少了赚的少,损失大,故希望不要预测少,尽量使模型往多了预测

'''

BATCH_SIZE=8

SEED=23455

COST=1

PROFIT=9

#基于seed产生随机数

rdm=np.random.RandomState(SEED)

#从X这个32行2列的矩阵中 取出一行 x0和x1为决定酸奶销量的两个特征,例如:味道酸度,包装绚丽度

#作为输入数据集的标签(正确答案)

X=rdm.rand(32,2)

#给标签加上-0.05~+0.05的随机噪声 rdm.rand()-->0~1-->除以10-->0~0.1-->0.05-->-0.05~0.05

#酸奶销量

Y_=[[x0+x1+(rdm.rand() /10.0-0.05)] for (x0,x1) in X]

print ("X:\n",X)

print ("Y_:\n",Y_)

x=tf.placeholder(tf.float32,shape=(None,2))

#实际统计销量

y_=tf.placeholder(tf.float32,shape=(None,1))

w1=tf.Variable(tf.random_normal([2,1],stddev=1,seed=1))

#预测值

y=tf.matmul(x,w1)

#如果预测多了,预测的酸奶数量大于了真实的销量,那么损失=多出来的酸奶个数*酸奶成本;

#如果本来可以多卖,但预测少了,供不应求,那么损失=预测少了的数量*每瓶酸奶的利润

#由于每瓶酸奶的利润大于每瓶酸奶的成本,要使loss尽量小,所以模型应该是向偏多的方向预测

loss=tf.reduce_sum(tf.where(tf.greater(y,y_),(y-y_)*COST,(y_-y)*PROFIT))

train_step=tf.train.GradientDescentOptimizer(0.001).minimize(loss)

with tf.Session() as sess:

init=tf.global_variables_initializer()

sess.run(init)

print('w1:\n', sess.run(w1))

#训练模型

step=30000

for i in range(step):

start=(i*BATCH_SIZE)%32

end=start+BATCH_SIZE

sess.run(train_step,feed_dict={x:X[start:end],y_:Y_[start:end]})

if i%5000==0:

total_loss=sess.run(loss,feed_dict={x:X,y_:Y_})

print("After %d training steps, w1 is :"%(i))

print('w1:\n', sess.run(w1))

print('final w1 is: \n',sess.run(w1))

'''

w1:

[[-0.81131822]

[ 1.48459876]]

After 0 training steps, w1 is :

w1:

[[-0.76299298]

[ 1.50956583]]

After 5000 training steps, w1 is :

w1:

[[ 1.01956224]

[ 1.04744005]]

After 10000 training steps, w1 is :

w1:

[[ 1.01756001]

[ 1.03981125]]

After 15000 training steps, w1 is :

w1:

[[ 1.01894653]

[ 1.04037356]]

After 20000 training steps, w1 is :

w1:

[[ 1.01714945]

[ 1.03888571]]

After 25000 training steps, w1 is :

w1:

[[ 1.01853597]

[ 1.03944802]]

final w1 is:

[[ 1.02314925]

[ 1.04955781]]

可见两个参数均大于1

'''

将成本改为9,利润改为1,再试试?此时,我们希望模型尽量往少了预测,因为成本比利润要高的多,生产的越多亏的越多,所以:

import tensorflow as tf

import numpy as np

'''

酸奶成本1元,酸奶利润9元

预测少了赚的少,损失大,故希望不要预测少,尽量使模型往多了预测

'''

BATCH_SIZE=8

SEED=23455

COST=9

PROFIT=1

#基于seed产生随机数

rdm=np.random.RandomState(SEED)

#从X这个32行2列的矩阵中 取出一行 x0和x1为决定酸奶销量的两个特征,例如:味道酸度,包装绚丽度

#作为输入数据集的标签(正确答案)

X=rdm.rand(32,2)

#给标签加上-0.05~+0.05的随机噪声 rdm.rand()-->0~1-->除以10-->0~0.1-->0.05-->-0.05~0.05

#酸奶销量

Y_=[[x0+x1+(rdm.rand() /10.0-0.05)] for (x0,x1) in X]

print ("X:\n",X)

print ("Y_:\n",Y_)

x=tf.placeholder(tf.float32,shape=(None,2))

#实际统计销量

y_=tf.placeholder(tf.float32,shape=(None,1))

w1=tf.Variable(tf.random_normal([2,1],stddev=1,seed=1))

#预测值

y=tf.matmul(x,w1)

#如果预测多了,预测的酸奶数量大于了真实的销量,那么损失=多出来的酸奶个数*酸奶成本;

#如果本来可以多卖,但预测少了,供不应求,那么损失=预测少了的数量*每瓶酸奶的利润

#由于每瓶酸奶的利润小于每瓶酸奶的成本,要使loss尽量小,所以模型应该是向偏少的方向预测,尽量少预测,保守销售,这样不会生成多了,卖不出去,亏得更多

loss=tf.reduce_sum(tf.where(tf.greater(y,y_),(y-y_)*COST,(y_-y)*PROFIT))

train_step=tf.train.GradientDescentOptimizer(0.001).minimize(loss)

with tf.Session() as sess:

init=tf.global_variables_initializer()

sess.run(init)

print('w1:\n', sess.run(w1))

#训练模型

step=30000

for i in range(step):

start=(i*BATCH_SIZE)%32

end=start+BATCH_SIZE

sess.run(train_step,feed_dict={x:X[start:end],y_:Y_[start:end]})

if i%5000==0:

total_loss=sess.run(loss,feed_dict={x:X,y_:Y_})

print("After %d training steps, w1 is :"%(i))

print('w1:\n', sess.run(w1))

print('final w1 is: \n',sess.run(w1))

'''

w1:

[[-0.81131822]

[ 1.48459876]]

After 0 training steps, w1 is :

w1:

[[-0.80594873]

[ 1.48737288]]

After 5000 training steps, w1 is :

w1:

[[ 0.95934498]

[ 0.97470313]]

After 10000 training steps, w1 is :

w1:

[[ 0.95873684]

[ 0.97448874]]

After 15000 training steps, w1 is :

w1:

[[ 0.96216297]

[ 0.96877819]]

After 20000 training steps, w1 is :

w1:

[[ 0.96286178]

[ 0.97945559]]

After 25000 training steps, w1 is :

w1:

[[ 0.96628791]

[ 0.97374505]]

final w1 is:

[[ 0.96130908]

[ 0.97270036]]

可见两个参数均小于1

'''

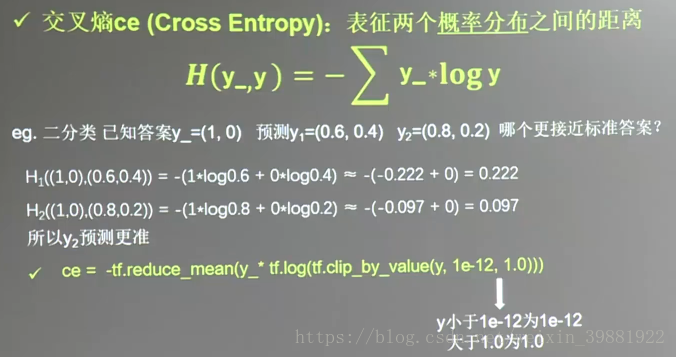

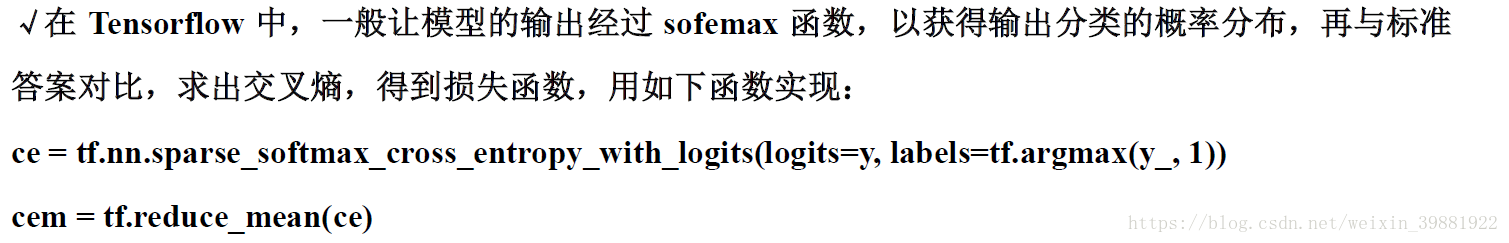

交叉熵越大,两个概率分布越远,交叉熵越小,两个概率分布越近

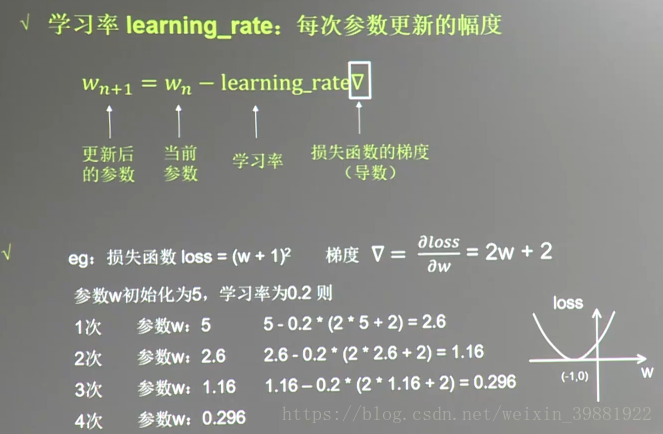

在训练过程中, 参数的更新向着损失函梯度下降方向

'''设损失函数loss=(w+1)^2,另w的初值是常数5,反向传播就是求最优的w值,使得对应的loss最小'''

import tensorflow as tf

w=tf.Variable(tf.constant(5,dtype=tf.float32))

loss=tf.square(w+1)

#反向传播

train_step=tf.train.GradientDescentOptimizer(0.001).minimize(loss)

#生成会话,迭代训练

with tf.Session() as sess:

init=tf.global_variables_initializer()

sess.run(init)

for i in range(6000):

sess.run(train_step)

train_w=sess.run(w)

train_loss=sess.run(loss)

if i%500==0:

print('After %s steps: w is %f, loss is %f'%(i,train_w,train_loss))

'''

After 0 steps: w is 4.988000, loss is 35.856144

After 500 steps: w is 1.200658, loss is 4.842895

After 1000 steps: w is -0.191233, loss is 0.654103

After 1500 steps: w is -0.702769, loss is 0.088346

After 2000 steps: w is -0.890764, loss is 0.011932

After 2500 steps: w is -0.959855, loss is 0.001612

After 3000 steps: w is -0.985246, loss is 0.000218

After 3500 steps: w is -0.994578, loss is 0.000029

After 4000 steps: w is -0.998007, loss is 0.000004

After 4500 steps: w is -0.999268, loss is 0.000001

After 5000 steps: w is -0.999731, loss is 0.000000

After 5500 steps: w is -0.999901, loss is 0.000000

'''由结果可知, 随着损失函数值的减小w无限趋近于 -1,模型 计算推测出 最优参数 w = -1。

√学习率的设置

学习率过大,会导致待优化的参数在最小值附近波动,不收敛;学习率过小,会导致待优化的参数收敛缓慢。

例如:① 对于上例的损失函数 los s = (w + 1) 2。则将上述代码中学习率修改为 1,,其余内容不变。

'''设损失函数loss=(w+1)^2,另w的初值是常数5,反向传播就是求最优的w值,使得对应的loss最小'''

import tensorflow as tf

w=tf.Variable(tf.constant(5,dtype=tf.float32))

loss=tf.square(w+1)

#反向传播

train_step=tf.train.GradientDescentOptimizer(1).minimize(loss)

#生成会话,迭代训练

with tf.Session() as sess:

init=tf.global_variables_initializer()

sess.run(init)

for i in range(40):

sess.run(train_step)

train_w=sess.run(w)

train_loss=sess.run(loss)

print('After %s steps: w is %f, loss is %f'%(i,train_w,train_loss))

'''

After 0 steps: w is -7.000000, loss is 36.000000

After 1 steps: w is 5.000000, loss is 36.000000

After 2 steps: w is -7.000000, loss is 36.000000

After 3 steps: w is 5.000000, loss is 36.000000

After 4 steps: w is -7.000000, loss is 36.000000

After 5 steps: w is 5.000000, loss is 36.000000

After 6 steps: w is -7.000000, loss is 36.000000

After 7 steps: w is 5.000000, loss is 36.000000

After 8 steps: w is -7.000000, loss is 36.000000

After 9 steps: w is 5.000000, loss is 36.000000

After 10 steps: w is -7.000000, loss is 36.000000

After 11 steps: w is 5.000000, loss is 36.000000

After 12 steps: w is -7.000000, loss is 36.000000

After 13 steps: w is 5.000000, loss is 36.000000

After 14 steps: w is -7.000000, loss is 36.000000

After 15 steps: w is 5.000000, loss is 36.000000

After 16 steps: w is -7.000000, loss is 36.000000

After 17 steps: w is 5.000000, loss is 36.000000

After 18 steps: w is -7.000000, loss is 36.000000

After 19 steps: w is 5.000000, loss is 36.000000

After 20 steps: w is -7.000000, loss is 36.000000

After 21 steps: w is 5.000000, loss is 36.000000

After 22 steps: w is -7.000000, loss is 36.000000

After 23 steps: w is 5.000000, loss is 36.000000

After 24 steps: w is -7.000000, loss is 36.000000

After 25 steps: w is 5.000000, loss is 36.000000

After 26 steps: w is -7.000000, loss is 36.000000

After 27 steps: w is 5.000000, loss is 36.000000

After 28 steps: w is -7.000000, loss is 36.000000

After 29 steps: w is 5.000000, loss is 36.000000

After 30 steps: w is -7.000000, loss is 36.000000

After 31 steps: w is 5.000000, loss is 36.000000

After 32 steps: w is -7.000000, loss is 36.000000

After 33 steps: w is 5.000000, loss is 36.000000

After 34 steps: w is -7.000000, loss is 36.000000

After 35 steps: w is 5.000000, loss is 36.000000

After 36 steps: w is -7.000000, loss is 36.000000

After 37 steps: w is 5.000000, loss is 36.000000

After 38 steps: w is -7.000000, loss is 36.000000

After 39 steps: w is 5.000000, loss is 36.000000

'''

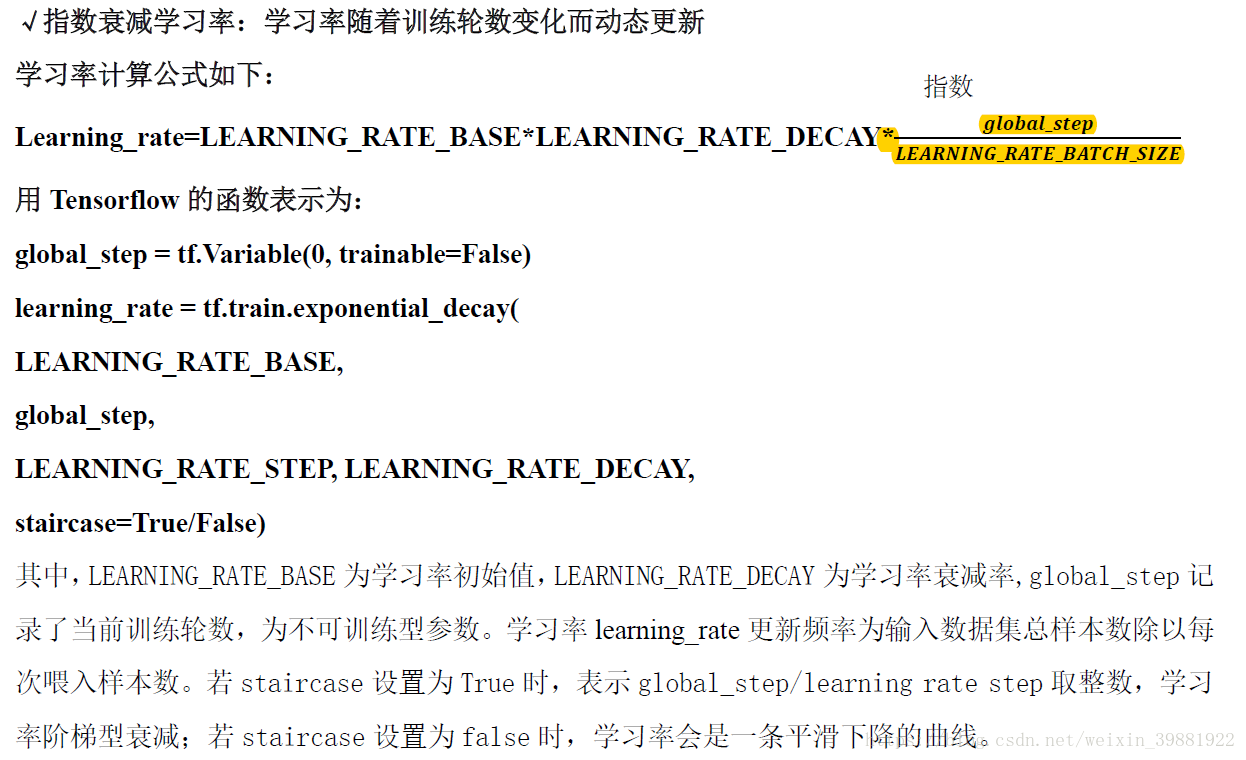

'''

设损失函数loss=(w+1)^2,另w的初值是常数5,反向传播就是求最优的w值,使得对应的loss最小

使用指数衰减的学习率,在迭代初期的到较高的下降速度,可以在较小的轮数下取得更快的收敛度

'''

import tensorflow as tf

#最初的学习率

LEARNING_RATE_BASE=0.1

#学习率衰减率

LEARNING_RATE_DECAY=0.99

#喂入多少轮batchsize后,更新一次学习率,一般设置为总样本数/BATCH_SIZE

LEARNING_RATE_STEP=4

#运行了几轮BATCH_SIZE的计数器,初始值为0,设置不被训练

global_step=tf.Variable(0,trainable=False)

''''

定义指数下降学习率,在本例中,模型训练过程不设定固的学习率使用指数衰减进行。

其初值置为 0.1 ,学习率衰减设置为 0.99 ,BATCH_SIZE 设置为 1。'''

learning_rate=tf.train.exponential_decay(LEARNING_RATE_BASE,global_step,LEARNING_RATE_STEP,LEARNING_RATE_DECAY,staircase=True)

w=tf.Variable(tf.constant(5,dtype=tf.float32))

loss=tf.square(w+1)

#反向传播

train_step=tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

#生成会话,迭代训练

with tf.Session() as sess:

init=tf.global_variables_initializer()

sess.run(init)

for i in range(40):

sess.run(train_step)

learning_rate_val=sess.run(learning_rate)

global_step_val=sess.run(global_step)

w_val=sess.run(w)

loss_val=sess.run(loss)

print("After %s steps: global_step is %f, w is %f, learning rate is %f, loss is %f" % (i, global_step_val, w_val, learning_rate_val, loss_val))

'''

After 0 steps: global_step is 1.000000, w is 3.800000, learning rate is 0.099000, loss is 23.040001

After 1 steps: global_step is 2.000000, w is 2.849600, learning rate is 0.098010, loss is 14.819419

After 2 steps: global_step is 3.000000, w is 2.095001, learning rate is 0.097030, loss is 9.579033

After 3 steps: global_step is 4.000000, w is 1.494386, learning rate is 0.096060, loss is 6.221960

After 4 steps: global_step is 5.000000, w is 1.015166, learning rate is 0.095099, loss is 4.060895

After 5 steps: global_step is 6.000000, w is 0.631886, learning rate is 0.094148, loss is 2.663051

After 6 steps: global_step is 7.000000, w is 0.324608, learning rate is 0.093207, loss is 1.754587

After 7 steps: global_step is 8.000000, w is 0.077684, learning rate is 0.092274, loss is 1.161402

After 8 steps: global_step is 9.000000, w is -0.121202, learning rate is 0.091352, loss is 0.772287

After 9 steps: global_step is 10.000000, w is -0.281761, learning rate is 0.090438, loss is 0.515867

After 10 steps: global_step is 11.000000, w is -0.411674, learning rate is 0.089534, loss is 0.346128

After 11 steps: global_step is 12.000000, w is -0.517024, learning rate is 0.088638, loss is 0.233266

After 12 steps: global_step is 13.000000, w is -0.602644, learning rate is 0.087752, loss is 0.157891

After 13 steps: global_step is 14.000000, w is -0.672382, learning rate is 0.086875, loss is 0.107334

After 14 steps: global_step is 15.000000, w is -0.729305, learning rate is 0.086006, loss is 0.073276

After 15 steps: global_step is 16.000000, w is -0.775868, learning rate is 0.085146, loss is 0.050235

After 16 steps: global_step is 17.000000, w is -0.814036, learning rate is 0.084294, loss is 0.034583

After 17 steps: global_step is 18.000000, w is -0.845387, learning rate is 0.083451, loss is 0.023905

After 18 steps: global_step is 19.000000, w is -0.871193, learning rate is 0.082617, loss is 0.016591

After 19 steps: global_step is 20.000000, w is -0.892476, learning rate is 0.081791, loss is 0.011561

After 20 steps: global_step is 21.000000, w is -0.910065, learning rate is 0.080973, loss is 0.008088

After 21 steps: global_step is 22.000000, w is -0.924629, learning rate is 0.080163, loss is 0.005681

After 22 steps: global_step is 23.000000, w is -0.936713, learning rate is 0.079361, loss is 0.004005

After 23 steps: global_step is 24.000000, w is -0.946758, learning rate is 0.078568, loss is 0.002835

After 24 steps: global_step is 25.000000, w is -0.955125, learning rate is 0.077782, loss is 0.002014

After 25 steps: global_step is 26.000000, w is -0.962106, learning rate is 0.077004, loss is 0.001436

After 26 steps: global_step is 27.000000, w is -0.967942, learning rate is 0.076234, loss is 0.001028

After 27 steps: global_step is 28.000000, w is -0.972830, learning rate is 0.075472, loss is 0.000738

After 28 steps: global_step is 29.000000, w is -0.976931, learning rate is 0.074717, loss is 0.000532

After 29 steps: global_step is 30.000000, w is -0.980378, learning rate is 0.073970, loss is 0.000385

After 30 steps: global_step is 31.000000, w is -0.983281, learning rate is 0.073230, loss is 0.000280

After 31 steps: global_step is 32.000000, w is -0.985730, learning rate is 0.072498, loss is 0.000204

After 32 steps: global_step is 33.000000, w is -0.987799, learning rate is 0.071773, loss is 0.000149

After 33 steps: global_step is 34.000000, w is -0.989550, learning rate is 0.071055, loss is 0.000109

After 34 steps: global_step is 35.000000, w is -0.991035, learning rate is 0.070345, loss is 0.000080

After 35 steps: global_step is 36.000000, w is -0.992297, learning rate is 0.069641, loss is 0.000059

After 36 steps: global_step is 37.000000, w is -0.993369, learning rate is 0.068945, loss is 0.000044

After 37 steps: global_step is 38.000000, w is -0.994284, learning rate is 0.068255, loss is 0.000033

After 38 steps: global_step is 39.000000, w is -0.995064, learning rate is 0.067573, loss is 0.000024

After 39 steps: global_step is 40.000000, w is -0.995731, learning rate is 0.066897, loss is 0.000018

'''

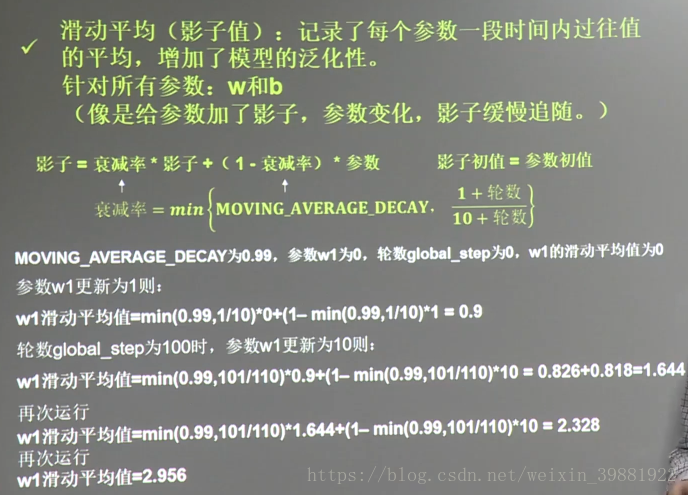

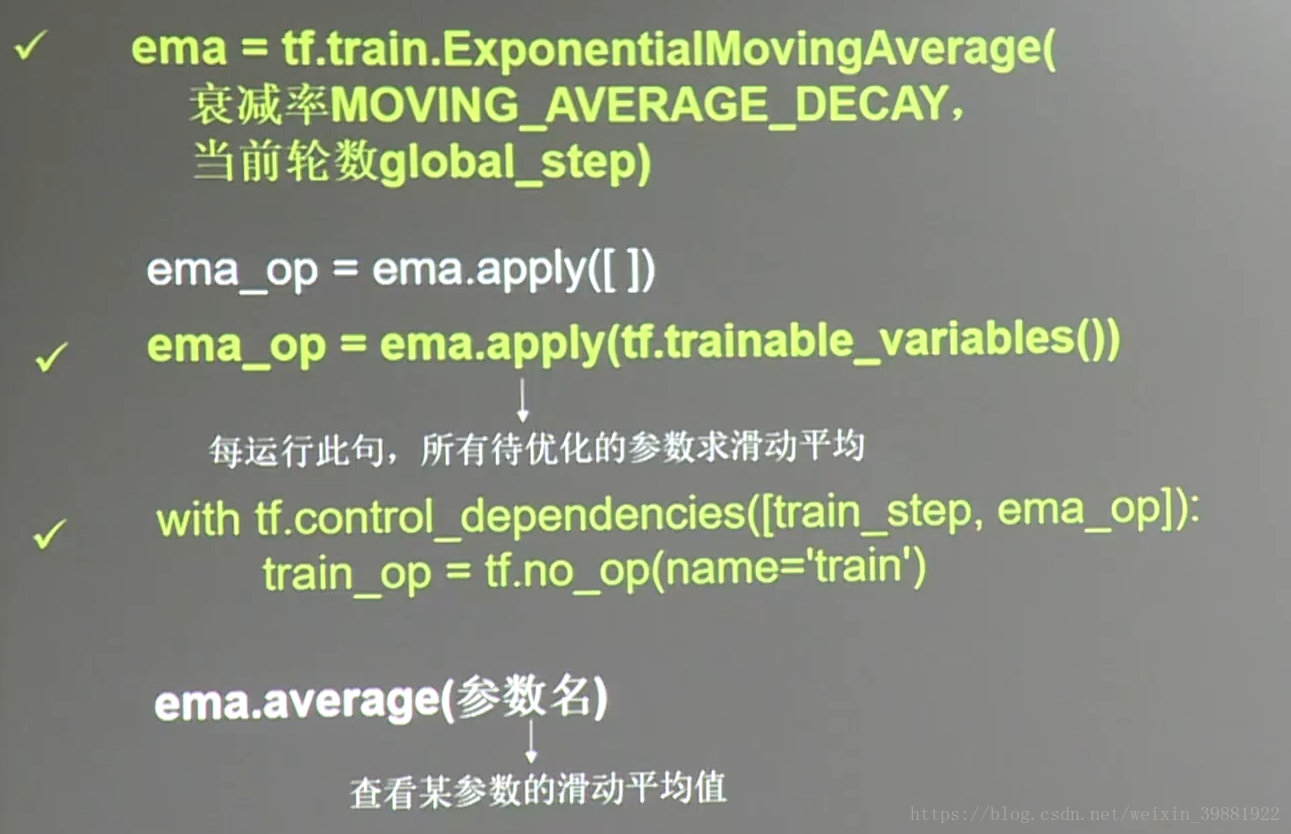

import tensorflow as tf

#定义变量和滑动平均

#定义一个32位的浮点型变量,初始值为0.0,这个代码就是不断地更新w1参数,优化w1参数,滑动平均做了个w1的影子

w1=tf.Variable(tf.constant(0.0),dtype=tf.float32)

global_step=tf.Variable(0,trainable=False)

#实例化滑动平均类,衰减率为0.99,当前轮数为global_step

MOVING_AVERAGE_DECAY=0.99

ema=tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY,global_step)

#ema.apply后的括号里是更新列表,每次运行sess.run(ema_op)时,对更新列表中的元素求滑动平均值。

#在实际应用中会使用tf.trainable_variables()自动将所有待训练的参数汇总为列表

#ema_op = ema.apply([w1])

ema_op=ema.apply(tf.trainable_variables())

with tf.Session() as sess:

init = tf.global_variables_initializer()

sess.run(init)

# 用ema.average(w1)获取w1滑动平均值 (要运行多个节点,作为列表中的元素列出,写在sess.run中)

# 打印出当前参数w1和w1滑动平均值

print("current global_step:", sess.run(global_step))

print("current w1", sess.run([w1, ema.average(w1)]))

# 更新global_step和w1的值,模拟出轮数为100时,参数w1变为10, 以下代码global_step保持为100,每次执行滑动平均操作,影子值会更新

sess.run(tf.assign(global_step, 100))

sess.run(tf.assign(w1, 10))

sess.run(ema_op)

print("current global_step:", sess.run(global_step))

print("current w1", sess.run([w1, ema.average(w1)]))

# 每次sess.run会更新一次w1的滑动平均值

sess.run(ema_op)

print("current global_step:", sess.run(global_step))

print("current w1", sess.run([w1, ema.average(w1)]))

sess.run(ema_op)

print("current global_step:", sess.run(global_step))

print("current w1", sess.run([w1, ema.average(w1)]))

sess.run(ema_op)

print("current global_step:", sess.run(global_step))

print("current w1", sess.run([w1, ema.average(w1)]))

sess.run(ema_op)

print("current global_step:", sess.run(global_step))

print("current w1", sess.run([w1, ema.average(w1)]))

'''

current global_step: 0

current w1 [0.0, 0.0]

current global_step: 100

current w1 [10.0, 0.81818163]

current global_step: 100

current w1 [10.0, 1.5694211]

current global_step: 100

current w1 [10.0, 2.2591956]

current global_step: 100

current w1 [10.0, 2.892534]

current global_step: 100

current w1 [10.0, 3.4740539]

'''