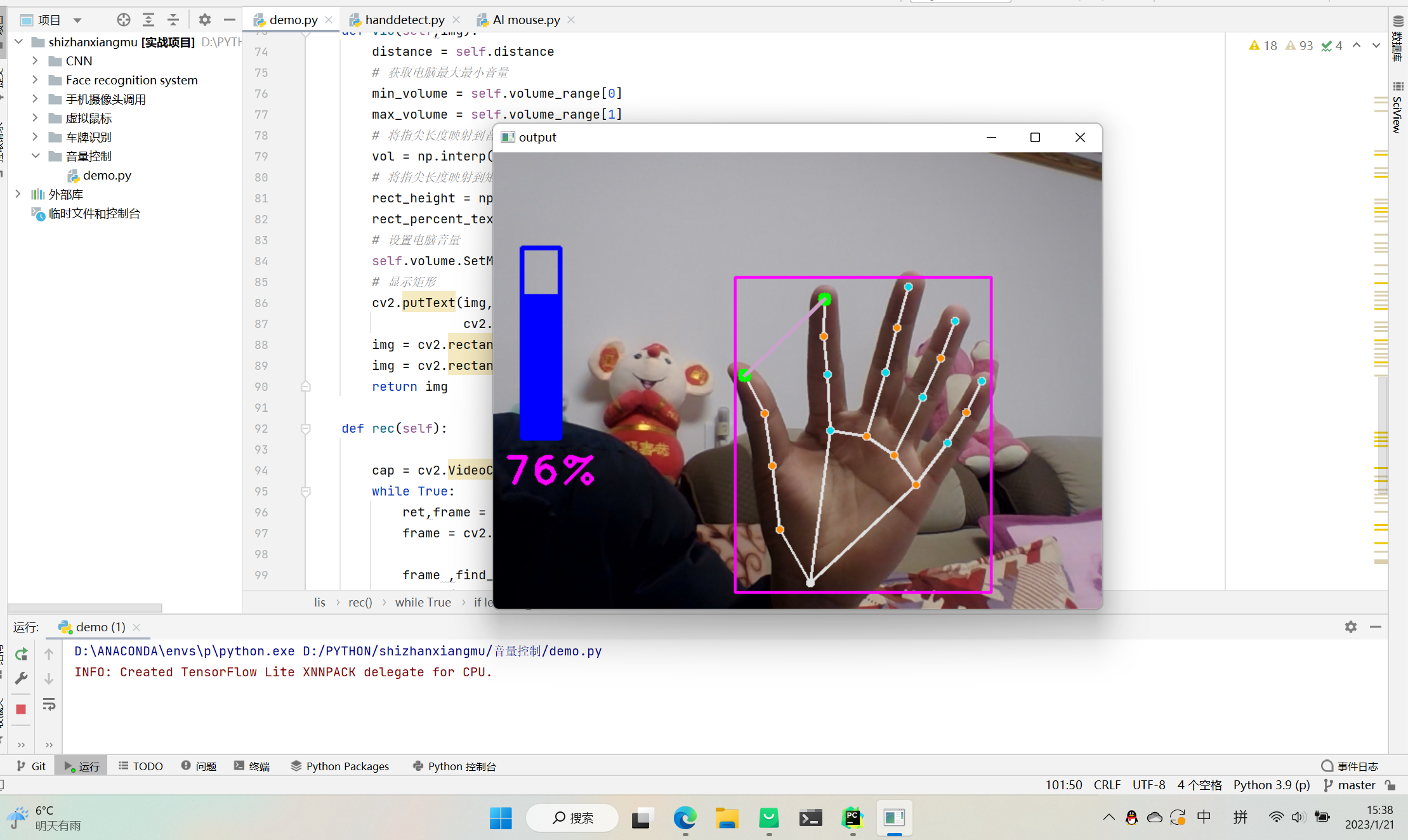

基于opencv和mediapipe进行音量的控制

首先,先利用mediapipe检测出手,获取到相应的坐标,再取出相应点的坐标值基于opencv进行绘制和距离测量等等,最后通过手指关键点的坐标值与电脑的音量进行映射,从而控制电脑的电脑音量。

import cv2

import mediapipe as mp

import numpy as np

import math

# 导入电脑音量控制模块

from ctypes import cast, POINTER

from comtypes import CLSCTX_ALL

from pycaw.pycaw import AudioUtilities, IAudioEndpointVolume

class lis():

def __init__(self,min_detection_confidence=0.7):

self.mpmode = mp.solutions.hands

self.mphand = self.mpmode.Hands() #调用mediapipe

self.min_det_con = min_detection_confidence

self.draw = mp.solutions.drawing_utils

# 获取电脑音量范围

devices = AudioUtilities.GetSpeakers()

interface = devices.Activate(

IAudioEndpointVolume._iid_, CLSCTX_ALL, None)

self.volume = cast(interface, POINTER(IAudioEndpointVolume))

self.volume_range = self.volume.GetVolumeRange()

#手指识别

def findhand(self,img):

RGB_img = cv2.cvtColor(img,(cv2.COLOR_BGR2RGB))

results = self.mphand.process(RGB_img)

x_list = []

y_list = []

self.find_list = []

#!!!!!!!

if (results.multi_hand_landmarks):######需要判断是否存在返回的值,不然会报错

for hand_landmarks in results.multi_hand_landmarks:

self.draw.draw_landmarks(img,hand_landmarks,mp.solutions.hands.HAND_CONNECTIONS,

mp.solutions.drawing_styles.get_default_pose_landmarks_style ( )

)

for id,landmark in enumerate(hand_landmarks.landmark): #获取id以及手指信息

H,W,_ = img.shape #需要获取图片宽度进行比例变换

x = int(landmark.x *W)

y = int(landmark.y *H)

x_list.append(x)

y_list.append(y)

self.find_list.append([id,x,y])

x_min , y_min = min(x_list),min(y_list)

x_max, y_max = max(x_list), max(y_list)

cv2.rectangle(img,(x_min-10,y_min-10),(x_max+10,y_max+10),(255,0,255),2)

return img,self.find_list

#手指画点

def finger_draw(self,img,id):

x,y = self.find_list[id][1:]

cv2.circle(img,(x,y),2,(0,255,0),10)

return img

#手指画线

def finger_line(self,img,p1,p2,):

x ,y = self.find_list[p1][1:]

x1, y1 = self.find_list[p2][1:]

cv2.line(img,(x,y),(x1,y1),(202,162,201),3)

self.distance = math.hypot(x1-x,y1-y)

if self.distance<100:

x3= int((x1+x)/2)

y3= int((y1+y)/2)

cv2.circle(img, (x3, y3), 2, (151, 118, 52), 10)

return img,self.distance

def vio(self,img):

distance = self.distance

# 获取电脑最大最小音量

min_volume = self.volume_range[0]

max_volume = self.volume_range[1]

# 将指尖长度映射到音量上

vol = np.interp(distance, [10, 150], [min_volume, max_volume])

# 将指尖长度映射到矩形显示上

rect_height = np.interp(distance, [10, 150], [0, 200])

rect_percent_text = np.interp(distance, [10, 150], [0, 100])

# 设置电脑音量

self.volume.SetMasterVolumeLevel(vol, None)

# 显示矩形

cv2.putText(img, str(math.ceil(rect_percent_text)) + "%", (10, 350),

cv2.FONT_HERSHEY_PLAIN, 3, (255, 0, 255), 3)

img = cv2.rectangle(img, (30, 100), (70, 300), (255, 0, 0), 3)

img = cv2.rectangle(img, (30, math.ceil(300 - rect_height)), (70, 300), (255, 0, 0), -1)

return img

def rec(self):

cap = cv2.VideoCapture(0)

while True:

ret,frame = cap.read()

frame = cv2.flip(frame,1)

frame ,find_list = self.findhand(frame)

if len(find_list) !=0:

frame = self.finger_draw(frame,8)

frame = self.finger_draw(frame,4)

frame ,a = self.finger_line(frame,8,4)

# print(a)

frame = self.vio(frame)

cv2.imshow('output',frame)

if ord('q') == cv2.waitKey(1):

break

cap.release()

cv2.destroyAllWindows()

s=lis()

s.rec()