前面的话:

目前maven仓库中没有支持cdh的相关依赖。cloudera自己建立了一个相关的仓库。要想利用maven添加相关依赖,则必须单独添加cloudera仓库。

一、项目pom.xml, 添加仓库配置

<repositories>

<repository>

<id>cloudera</id>

<url>https://repository.cloudera.com/artifactory/cloudera-repos/</url>

</repository>

<releases>

<enabled>true</enabled>

</releases>

<snapshots>

<enabled>true</enabled>

</snapshots>

</repositories>二、 添加cdh依赖,如hbase-client:

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>1.2.0-cdh5.12.1</version>

</dependency>三、在国内使用maven中央仓库一般会有网络问题,所以大部分人会使用aliyun仓库或者其他开源的仓库。所以需要修改setting.xml (以下配置中 *,!cloudera 表示除了aliyun仓库还使用cloudera仓库)

<mirror>

<id>nexus-aliyun</id>

<mirrorOf>*,!cloudera</mirrorOf>

<name>Nexus aliyun</name>

<url>

http://maven.aliyun.com/nexus/content/groups/public

</url>

</mirror>四、若未设置自动更新maven项目,则需更新maven项目,然后等待下载相关依赖。完成之后便可以使用cdh进行开发啦 ^_^。

附上官网相关说明地址:

https://www.cloudera.com/documentation/enterprise/release-notes/topics/cdh_vd_cdh5_maven_repo.html

import java.sql.SQLException; import java.sql.Connection; import java.sql.ResultSet; import java.sql.Statement; import java.sql.DriverManager; public class HiveJDBCTest { private static String driverName ="org.apache.hive.jdbc.HiveDriver"; public static void main(String[] args) throws SQLException { try { Class.forName(driverName); } catch (ClassNotFoundException e) { e.printStackTrace(); System.exit(1); } //注意默认端口号为10000 Connection con = DriverManager.getConnection("jdbc:hive2://10.131.11.37:10000/default", "root", ""); Statement stmt = con.createStatement(); String tableName = "wyphao"; stmt.execute("drop table if exists " + tableName); stmt.execute("create table " + tableName + " (key int, value string)"); System.out.println("Create table success!"); // show tables String sql = "show tables '" + tableName + "'"; System.out.println("Running: " + sql); ResultSet res = stmt.executeQuery(sql); if (res.next()) { System.out.println(res.getString(1)); } // describe table sql = "describe " + tableName; System.out.println("Running: " + sql); res = stmt.executeQuery(sql); while (res.next()) { System.out.println(res.getString(1) + "\t" + res.getString(2)); } sql = "select * from " + tableName; res = stmt.executeQuery(sql); while (res.next()) { System.out.println(String.valueOf(res.getInt(1)) + "\t" + res.getString(2)); } sql = "select count(1) from " + tableName; System.out.println("Running: " + sql); res = stmt.executeQuery(sql); while (res.next()) { System.out.println(res.getString(1)); } } }pom.xml文件<?xml version="1.0" encoding="UTF-8"?> <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <groupId>HHHHHHHHHHH</groupId> <artifactId>BBBBB</artifactId> <version>1.0-SNAPSHOT</version> <repositories> <repository> <id>cloudera</id> <url>https://repository.cloudera.com/artifactory/cloudera-repos/</url> <releases> <enabled>true</enabled> </releases> <snapshots> <enabled>false</enabled> </snapshots> </repository> </repositories> <dependencies> <!--<dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-common</artifactId> <version>2.6.0</version> </dependency>--> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-common</artifactId> <version>2.6.0-cdh5.12.1</version> </dependency> <!--dependency> <groupId>org.apache.hive</groupId> <artifactId>hive-jdbc</artifactId> <version>1.1.0-cdh5.5.0</version> </dependency>--> <dependency> <groupId>org.apache.hive</groupId> <artifactId>hive-jdbc</artifactId> <version>1.1.0-cdh5.12.1</version> </dependency> <!-- https://mvnrepository.com/artifact/org.apache.hive/hive-jdbc --> <!--<dependency> <groupId>org.apache.hive</groupId> <artifactId>hive-jdbc</artifactId> <version>1.1.0</version> </dependency>--> <dependency> <groupId>com.alibaba</groupId> <artifactId>fastjson</artifactId> <version>1.2.32</version> </dependency> <dependency> <groupId>org.apache.hive</groupId> <artifactId>hive-exec</artifactId> <version>1.1.0-cdh5.12.1</version> </dependency> <!-- https://mvnrepository.com/artifact/org.apache.hive/hive-metastore --> <dependency> <groupId>org.apache.hive</groupId> <artifactId>hive-metastore</artifactId> <version>1.1.0-cdh5.12.1</version> </dependency> <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-mapreduce-client-core --> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-mapreduce-client-core</artifactId> <version>2.6.0-cdh5.12.1</version> </dependency> </dependencies> <build> <pluginManagement> <plugins> <plugin> <groupId>net.alchim31.maven</groupId> <artifactId>scala-maven-plugin</artifactId> <version>3.2.2</version> </plugin> <plugin> <groupId>org.apache.maven.plugins</groupId> <artifactId>maven-compiler-plugin</artifactId> <version>3.5.1</version> </plugin> </plugins> </pluginManagement> <plugins> <plugin> <groupId>net.alchim31.maven</groupId> <artifactId>scala-maven-plugin</artifactId> <executions> <execution> <id>scala-compile-first</id> <phase>process-resources</phase> <goals> <goal>add-source</goal> <goal>compile</goal> </goals> </execution> <execution> <id>scala-test-compile</id> <phase>process-test-resources</phase> <goals> <goal>testCompile</goal> </goals> </execution> </executions> </plugin> <plugin> <groupId>org.apache.maven.plugins</groupId> <artifactId>maven-compiler-plugin</artifactId> <executions> <execution> <phase>compile</phase> <goals> <goal>compile</goal> </goals> </execution> </executions> </plugin> <plugin> <groupId>org.apache.maven.plugins</groupId> <artifactId>maven-shade-plugin</artifactId> <version>2.4.3</version> <executions> <execution> <phase>package</phase> <goals> <goal>shade</goal> </goals> <configuration> <filters> <filter> <artifact>*:*</artifact> <excludes> <exclude>META-INF/*.SF</exclude> <exclude>META-INF/*.DSA</exclude> <exclude>META-INF/*.RSA</exclude> </excludes> </filter> </filters> </configuration> </execution> </executions> </plugin> </plugins> </build> </project>最后连接成功后出现新的问题:我执行 select * from yuanshuai ; 这个 没问题然后我执行select count(*) from yuanshuai;就开始跑MR任务 就开始出现问题

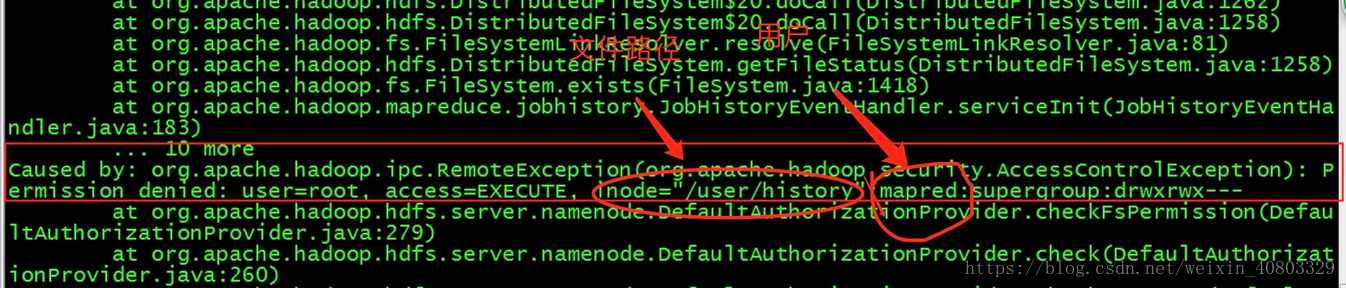

这个就开始跑mR任务 但是就报错

yarn logs -applicationId application_1530238297006_0010 #下执行 最后通过这个命令 查到了原因 此命令非常重要

然后 发现是权限问题 这时去修改指定目录的权限

我开始选择的是 hadoop fs -chmod 777 /user/history 就不对 就会报错

后来切换到hdfs 用户下

su -u hdfs

hdfs dfs -chmod 777 /user/history

大功告成!!!!!!!