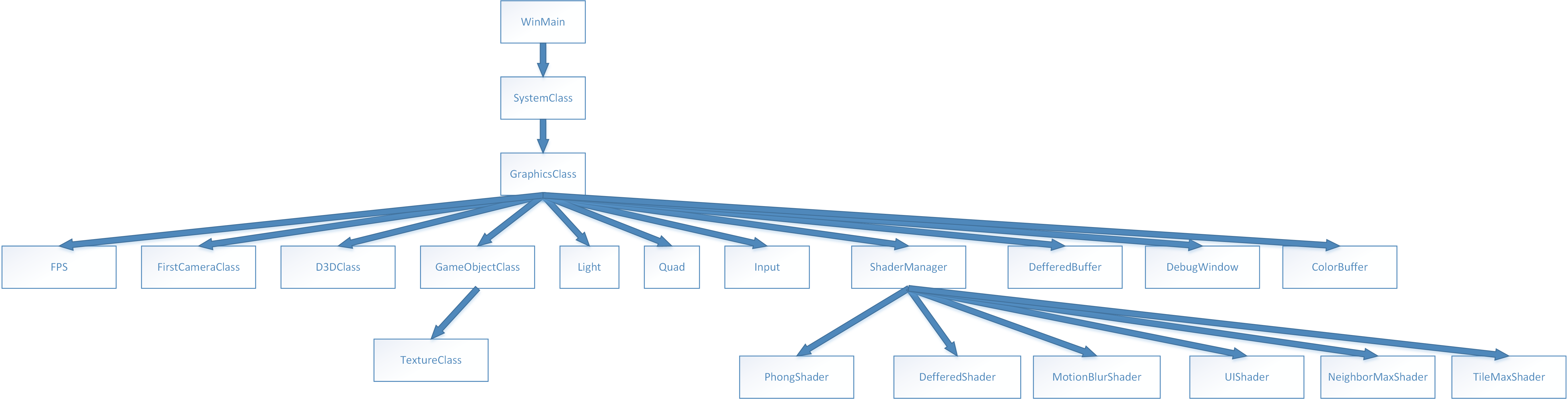

Program structure:

MotionBlur implementation:

The effect is split into four passes:

(1) RenderGbufferPass, which produces colorRT, velocityBufferRT, and DepthRT

Texture2D ShaderTexture:register(t0); // texture resource

SamplerState SampleType:register(s0); // sampler state

static const float EPSILON1 = 0.01f;

static const float2 VONE = float2(1.0f, 1.0f);

static const float2 VHALF = float2(0.5f, 0.5f);

//VertexShader

cbuffer CBCurMatrix:register(b0)

{

matrix curWorld;

matrix curView;

matrix Proj;

matrix WorldInvTranspose;

};

cbuffer CBPreMatrix:register(b1)

{

matrix preWorld;

matrix preView;

};

cbuffer CBOther:register(b2)

{

float halfExpore; // half exposure, 0.5f

float halfExporeXFrame; // half exposure multiplied by the frame rate (half of the FPS)

float c_k;

float pad;

}

struct VertexIn

{

float3 Pos:POSITION;

float3 Normal:NORMAL;

float2 Tex:TEXCOORD; // for multi-texturing, other semantic indices can be used

};

struct VertexOut

{

float4 Pos:SV_POSITION;

float2 Tex:TEXCOORD1;

float4 curClipSpacePos:TEXCOORD2;

float4 preClipSpacePos:TEXCOORD3;

};

struct PixelOut

{

float4 color:SV_Target0;

float4 velocity:SV_Target1;

};

float2 writeBiasScale(float2 v)

{

return (v + VONE) * VHALF;

}

VertexOut VS(VertexIn ina)

{

VertexOut outa;

// transform the position into homogeneous clip space

float4 curClipSpacePos = mul(float4(ina.Pos, 1.0f), curWorld);

curClipSpacePos = mul(curClipSpacePos, curView);

curClipSpacePos = mul(curClipSpacePos, Proj);

outa.Pos = curClipSpacePos;

// clip-space position of the current frame

outa.curClipSpacePos = curClipSpacePos;

// clip-space position of the previous frame

float4 preClipSpacePos = mul(float4(ina.Pos, 1.0f), preWorld);

preClipSpacePos = mul(preClipSpacePos, preView);

preClipSpacePos = mul(preClipSpacePos, Proj);

outa.preClipSpacePos = preClipSpacePos;

outa.Tex= ina.Tex;

return outa;

}

/* Pixel shader of the G-buffer pass: outputs the unprocessed scene color and the per-pixel screen-space velocity */

PixelOut PS(VertexOut outa)

{

PixelOut pout;

// first, sample the color of the pixel

pout.color = ShaderTexture.Sample(SampleType, outa.Tex);

float3 curNDCPos = outa.curClipSpacePos.xyz / outa.curClipSpacePos.w;

float3 preNDCPos = outa.preClipSpacePos.xyz / outa.preClipSpacePos.w;

// per-frame displacement in NDC space (range [-1,1]) scaled by halfExporeXFrame

float2 vQX = (curNDCPos - preNDCPos).xy * halfExporeXFrame;

float fLenQX = length(vQX);

float fWeight = max(0.5, min(fLenQX, c_k));

fWeight /= (fLenQX + EPSILON1);

// keep the velocity magnitude within the range [0.5, c_k]

vQX *= fWeight;

pout.velocity = float4(writeBiasScale(vQX),0.5f, 1.0f);

return pout;

}
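To make the velocity handling of this pass easier to check, here is a minimal CPU-side sketch in C++ of the same arithmetic: the length clamp applied to `vQX` and the bias/scale pair used to store a signed velocity in a render target. `Vec2` and `clampVelocity` are names made up for this sketch; only `writeBiasScale` and `readBiasScale` mirror functions from the shaders. The sketch assumes the render target stores the encoded value without any further clamping or quantization.

```cpp
#include <algorithm>
#include <cmath>

struct Vec2 { float x, y; };

// Mirrors writeBiasScale in the shader: maps [-1, 1] to [0, 1].
Vec2 writeBiasScale(Vec2 v) { return Vec2{(v.x + 1.0f) * 0.5f, (v.y + 1.0f) * 0.5f}; }

// Mirrors readBiasScale: the inverse mapping, [0, 1] back to [-1, 1].
Vec2 readBiasScale(Vec2 v) { return Vec2{v.x * 2.0f - 1.0f, v.y * 2.0f - 1.0f}; }

// Hypothetical helper: rescales v so that its length is roughly
// clamp(|v|, 0.5, k), the way the pixel shader does with fWeight.
// A zero vector stays zero because it is multiplied, not normalized.
Vec2 clampVelocity(Vec2 v, float k) {
    const float eps = 0.01f;  // EPSILON1 in the shader
    float len = std::sqrt(v.x * v.x + v.y * v.y);
    float weight = std::max(0.5f, std::min(len, k)) / (len + eps);
    return Vec2{v.x * weight, v.y * weight};
}
```

Because a zero velocity stays zero after the clamp, static pixels encode to (0.5, 0.5) in the velocity buffer.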

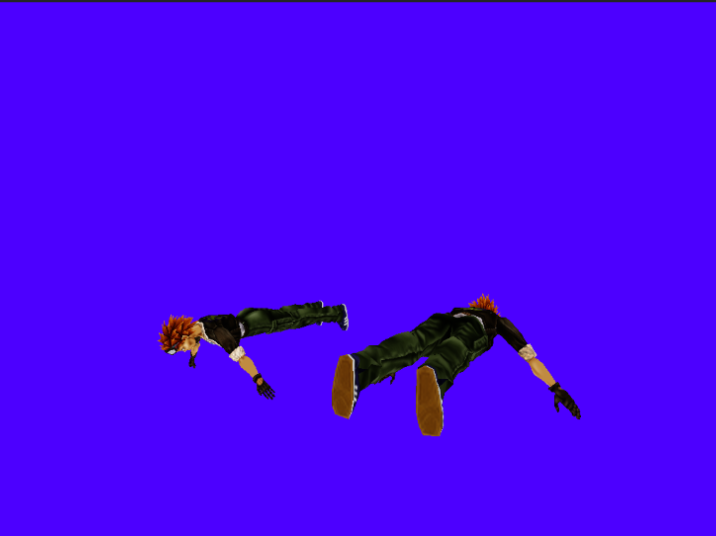

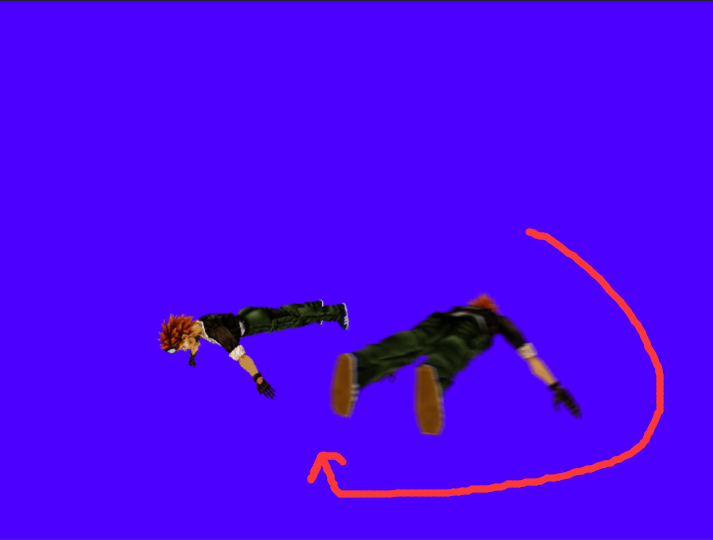

colorRT:

VelocityBufferRT:

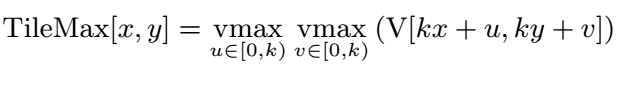

(2) RenderTileMaxPass, which produces tileMaxVelocityRT
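The shader listing for this pass keeps, for every output pixel, the largest velocity found in a k × k block of the velocity buffer. A minimal CPU sketch of that reduction in C++ follows. It assumes the output target is 1/k the size of the velocity buffer in each dimension (the post does not state the target size) and that the velocities are already decoded to signed values; `Vec2` and `tileMax` are hypothetical names.

```cpp
#include <cstddef>
#include <vector>

struct Vec2 { float x, y; };

// For each k x k tile of a row-major velocity image, keep the velocity
// with the largest squared magnitude. Tiles with no motion stay at zero,
// which corresponds to the GRAY default in the shader.
std::vector<Vec2> tileMax(const std::vector<Vec2>& velocity,
                          int width, int height, int k) {
    const int tilesX = width / k;
    const int tilesY = height / k;
    std::vector<Vec2> out(static_cast<std::size_t>(tilesX) * tilesY, Vec2{0.0f, 0.0f});
    for (int ty = 0; ty < tilesY; ++ty) {
        for (int tx = 0; tx < tilesX; ++tx) {
            float best = 0.0f;
            for (int t = 0; t < k; ++t) {
                for (int s = 0; s < k; ++s) {
                    const Vec2& v = velocity[(ty * k + t) * width + (tx * k + s)];
                    const float mag = v.x * v.x + v.y * v.y;
                    if (best < mag) {
                        best = mag;
                        out[ty * tilesX + tx] = v;
                    }
                }
            }
        }
    }
    return out;
}
```

Comparing squared magnitudes avoids a square root per sample, which is also what the shader does with `dot(velocity, velocity)`.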

Texture2D velocityRT:register(t0); // texture resource

SamplerState SamplePointClamp:register(s0); // sampler state

cbuffer CBEveryFrame:register(b0)

{

float c_k;

float3 pad;

};

struct VertexIn

{

float3 Pos:POSITION;

float2 Tex:TEXCOORD; // for multi-texturing, other semantic indices can be used

};

struct VertexOut

{

float4 Pos:SV_POSITION;

float2 Tex:TEXCOORD0;

};

static const float4 GRAY = float4(0.5f, 0.5f, 0.5f, 1.0f);

static const float2 VONE = float2(1.0f, 1.0f);

static const float2 VTWO = float2(2.0f, 2.0f);

static const float2 VHALF = float2(0.5f, 0.5f);

float2 textureSize(Texture2D tex)

{

uint width, height;

tex.GetDimensions(width, height);

return float2(width, height);

}

float2 readBiasScale(float2 v)

{

return (v * VTWO) - VONE;

}

float2 writeBiasScale(float2 v)

{

return (v + VONE) * VHALF;

}

VertexOut VS(VertexIn ina)

{

VertexOut outa;

outa.Pos = float4(ina.Pos, 1.0f);

outa.Tex= ina.Tex;

return outa;

}

float4 PS(VertexOut outa) : SV_Target

{

float4 color = GRAY;

float2 texCoordBase = outa.Tex;

float2 texCoordIncrement = float2(1.0f, 1.0f) / textureSize(velocityRT);

float fMaxManitudeSquared = 0.0f;

//实现一个像素存储的速度为临近像素存储速度的最大值

for (int s = 0; s < c_k; ++s)

{

for (int t = 0; t < c_k; ++t)

{

float2 texCoords = texCoordBase + float2(s, t) * texCoordIncrement;

float2 texLookup = velocityRT.SampleLevel(SamplePointClamp, texCoords, 0).xy;

float2 velocity = readBiasScale(texLookup);

float fMagitudeSpuard = dot(velocity, velocity);

if (fMaxManitudeSquared < fMagitudeSpuard)

{

color.xy = writeBiasScale(velocity);

fMaxManitudeSquared = fMagitudeSpuard;

}

}

}

return color;

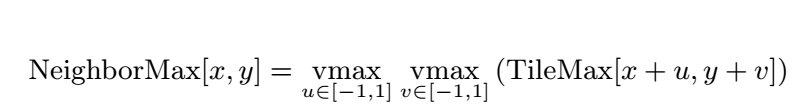

}(3)RenderNeigborMaxPass,得到NeighborMaxVelocityRT:
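This pass takes the largest tile velocity in the 3 × 3 tile neighborhood, but an off-center tile only qualifies under a direction test: an axis neighbor needs a nonzero velocity component along that axis, and a diagonal neighbor needs a velocity that runs along the diagonal through the center tile. A minimal CPU sketch of that test in C++, as read from the shader listing of this pass, follows. `Vec2`, `sgn`, `neighbourCanContribute`, and `neighborMax` are hypothetical names, and the sketch returns a zero velocity when no tile qualifies, which is an assumption of the sketch.

```cpp
#include <algorithm>
#include <cstdlib>
#include <vector>

struct Vec2 { float x, y; };

// Sign of a float as -1, 0, or +1 (same result as HLSL sign()).
static int sgn(float v) { return (v > 0.0f) - (v < 0.0f); }

// True if the tile at offset (s, t) from the center may contribute velocity v.
// The center tile (0, 0) always passes.
bool neighbourCanContribute(int s, int t, Vec2 v) {
    const int displacement = std::abs(s) + std::abs(t);
    const int orientation = sgn(s * v.x) + sgn(t * v.y);
    return std::abs(orientation) == displacement;
}

// Largest qualifying velocity in the 3 x 3 neighborhood of tile (x, y).
// Out-of-range neighbors are clamped to the border, like a clamp sampler.
Vec2 neighborMax(const std::vector<Vec2>& tiles, int tilesX, int tilesY, int x, int y) {
    Vec2 best{0.0f, 0.0f};
    float bestMag = 0.0f;
    for (int s = -1; s <= 1; ++s) {
        for (int t = -1; t <= 1; ++t) {
            const int nx = std::max(0, std::min(x + s, tilesX - 1));
            const int ny = std::max(0, std::min(y + t, tilesY - 1));
            const Vec2 v = tiles[ny * tilesX + nx];
            const float mag = v.x * v.x + v.y * v.y;
            if (bestMag < mag && neighbourCanContribute(s, t, v)) {
                best = v;
                bestMag = mag;
            }
        }
    }
    return best;
}
```

The direction test keeps a fast diagonal tile from widening the blur of the center tile when its motion does not lie on the line through the center.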

Texture2D velocityTileMaxRT:register(t0); // texture resource

SamplerState SamplePointClamp:register(s0); // sampler state

static const float2 VONE = float2(1.0f, 1.0f);

static const float2 VTWO = float2(2.0f, 2.0f);

static const float2 VHALF = float2(0.5f, 0.5f);

struct VertexIn

{

float3 Pos:POSITION;

float2 Tex:TEXCOORD; // for multi-texturing, other semantic indices can be used

};

struct VertexOut

{

float4 Pos:SV_POSITION;

float2 Tex:TEXCOORD0;

};

float2 textureSize(Texture2D tex)

{

uint width, height;

tex.GetDimensions(width, height);

return float2(width, height);

}

float2 readBiasScale(float2 v)

{

return (v * VTWO) - VONE;

}

float2 writeBiasScale(float2 v)

{

return (v + VONE) * VHALF;

}

VertexOut VS(VertexIn ina)

{

VertexOut outa;

outa.Pos = float4(ina.Pos, 1.0f);

outa.Tex= ina.Tex;

return outa;

}

float4 PS(VertexOut outa) : SV_Target

{

float4 color = float4(0.5f, 0.5f, 1.0f, 1.0f); // xy = 0.5 decodes to zero velocity when no tile qualifies

float2 texcoordBase = outa.Tex;

float2 texCoordIncrement = float2(1.0f, 1.0f) / textureSize(velocityTileMaxRT);

float fMaxMagnitideSpuared = 0.0f;

for (int s = -1; s <= 1; ++s)

{

for (int t = -1; t <= 1; ++t)

{

float2 texCoords = texcoordBase + float2(s, t) * texCoordIncrement;

float2 texLookup = velocityTileMaxRT.SampleLevel(SamplePointClamp, texCoords, 0).xy;

float2 velocity = readBiasScale(texLookup);

float fMagitudeSpuard = dot(velocity, velocity);

if (fMaxMagnitideSpuared < fMagitudeSpuard)

{

float fDisplacement = abs(float(s)) + abs(float(t));

float2 vOrientation = sign(float2(s, t)* velocity);

float distance = vOrientation.x + vOrientation.y;

if (abs(distance) == fDisplacement)

{

color.xy = writeBiasScale(velocity);

fMaxMagnitideSpuared = fMagitudeSpuard;

}

}

}

}

return color;

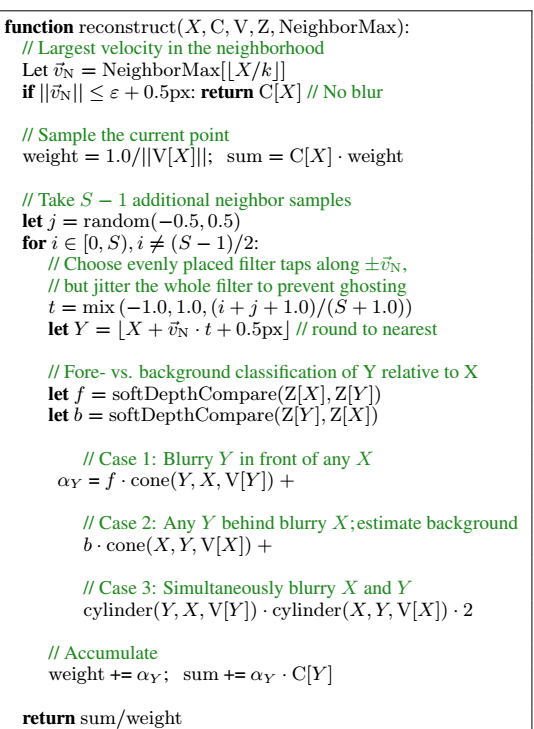

}(4)运用多张RT(colorRT,depthRT,VelocityRT,randomRT,neightborMaxRt)运用随机采样重构法,计算移动模糊的像素:
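The per-sample weight of this pass is the sum of three terms: the sample is in front of the current pixel and blurs over it, the current pixel blurs over a sample behind it, and both blur over each other. A minimal CPU sketch in C++ of those weights and of the jittered sample distance follows. `saturate`, `smoothstep`, `sampleWeight`, and `sampleOffset` are written here for illustration; the constants are copied from the shader. The sketch clamps `cone` to [0, 1], following the definition in the McGuire et al. paper listed in the references; treat that as an assumption if your shader leaves it unclamped.

```cpp
#include <algorithm>
#include <cmath>

const float SOFT_Z_EXTENT = 0.10f;
const float CYLINDER_CORNER_1 = 0.95f;
const float CYLINDER_CORNER_2 = 1.05f;

float saturate(float v) { return std::min(1.0f, std::max(0.0f, v)); }

// Same cubic as the HLSL smoothstep intrinsic.
float smoothstep(float lo, float hi, float x) {
    const float t = saturate((x - lo) / (hi - lo));
    return t * t * (3.0f - 2.0f * t);
}

// 1 when za is at or below zb, falling linearly to 0 over SOFT_Z_EXTENT.
float softDepthCompare(float za, float zb) {
    return saturate(1.0f - (za - zb) / SOFT_Z_EXTENT);
}

// Linear falloff with distance, reaching 0 at one velocity length.
float cone(float dist, float speed) {
    return saturate(1.0f - std::fabs(dist) / speed);
}

// Close to 1 inside one velocity length, with a soft edge around it.
float cylinder(float dist, float speed) {
    return 1.0f - smoothstep(CYLINDER_CORNER_1 * speed, CYLINDER_CORNER_2 * speed,
                             std::fabs(dist));
}

// Weight of one sample (alphaY in the shader). Depths are the negated
// depth-buffer values, as returned by GetDepth in the shader.
float sampleWeight(float depth, float sampleDepth, float dist,
                   float speed, float sampleSpeed) {
    return softDepthCompare(depth, sampleDepth) * cone(dist, sampleSpeed)
         + softDepthCompare(sampleDepth, depth) * cone(dist, speed)
         + cylinder(dist, sampleSpeed) * cylinder(dist, speed) * 2.0f;
}

// Signed distance T of sample i, jittered by r in [-0.5, 0.5].
float sampleOffset(int i, float r, float sampleCount, float maxDistance) {
    const float t = (float(i) + r + 1.0f) / (sampleCount + 1.0f);
    return -maxDistance + t * (2.0f * maxDistance);  // lerp(-max, max, t)
}
```

With 15 samples and no jitter, `sampleOffset(7, 0.0f, 15.0f, d)` is exactly 0, which is why the shader skips index `(c_s - 1) / 2` and adds the current pixel separately.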

Texture2D colorRT:register(t0);

Texture2D velocityRT:register(t1);

Texture2D neighborMaxRT:register(t2);

Texture2D depthRT:register(t3);

Texture2D randomRT:register(t4);

static const float EPSILON1 = 0.01f;

static const float HALF_VELOCITY_CUTOFF = 0.25f;

static const float VARIANCE_THRESHOLD = 1.5f;

static const float WEIGHT_CORRECTION_FACTOR = 60.0f;

static const float SOFT_Z_EXTENT = 0.10f;

static const float CYLINDER_CORNER_1 = 0.95f;

static const float CYLINDER_CORNER_2 = 1.05f;

static const float2 VHALF = float2(0.5f, 0.5f);

static const float2 VONE = float2(1.0f, 1.0f);

static const float2 VTWO = float2(2.0f, 2.0f);

SamplerState SamPointWarp:register(s0); // sampler state

SamplerState SamLinearClamp:register(s1); // sampler state

SamplerState SamPointClamp:register(s2); // sampler state

cbuffer CBEveryFrame:register(b0)

{

float c_half_exposure;

float c_k;

float c_s;

float c_max_sample_tab_distance;

};

struct VertexIn

{

float3 Pos:POSITION;

float2 Tex:TEXCOORD; // for multi-texturing, other semantic indices can be used

};

struct VertexOut

{

float4 Pos:SV_POSITION;

float2 Tex:TEXCOORD0;

};

float2 readBiasScale(float2 v)

{

return (v * VTWO) - VONE;

};

float GetDepth(float2 uv)

{

return -(depthRT.SampleLevel(SamPointClamp, uv, 0).r);

};

float psseudoRandom(float2 uv)

{

return randomRT.SampleLevel(SamPointWarp, uv, 0).r - 0.5f;

};

float2 textureSize(Texture2D tex)

{

uint width, height;

tex.GetDimensions(width, height);

return float2(width, height);

};

float softDepthCompare(float za, float zb)

{

return clamp((1.0 - (za - zb) / SOFT_Z_EXTENT), 0.0, 1.0);

};

float cone(float magDiff, float magV)

{

return saturate(1.0f - abs(magDiff) / magV); // clamped to [0, 1] so distant samples cannot contribute a negative weight

};

float cylinder(float magDiff, float magV)

{

return 1.0 - smoothstep(CYLINDER_CORNER_1 * magV, CYLINDER_CORNER_2 * magV, abs(magDiff));

};

VertexOut VS(VertexIn ina)

{

VertexOut outa;

outa.Pos = float4(ina.Pos, 1.0f);

outa.Tex = ina.Tex;

return outa;

}

float4 PS(VertexOut outa) : SV_Target

{

float2 texSize = textureSize(colorRT);

float2 texcoordBase = outa.Tex;

float4 texColor = colorRT.SampleLevel(SamLinearClamp, texcoordBase, 0);

// neighbor max velocity

float2 nVelocity = readBiasScale(neighborMaxRT.Sample(SamPointClamp, texcoordBase).xy);

float nVelocityLen = length(nVelocity);

float TempNeiVelocity = nVelocityLen * c_half_exposure;

bool flagNVelocity = (TempNeiVelocity >= EPSILON1);

TempNeiVelocity = clamp(TempNeiVelocity, 0.1f, c_k);

// if the velocity is too small, return the unblurred color directly

if (TempNeiVelocity < HALF_VELOCITY_CUTOFF)

{

return texColor;

}

// rescale to the clamped half velocity

if (flagNVelocity)

{

nVelocity *= (TempNeiVelocity / nVelocityLen);

nVelocityLen = length(nVelocity);

}

// velocity of the current pixel

float2 velocity = readBiasScale(velocityRT.Sample(SamPointClamp, texcoordBase).xy);

float velocityLen = length(velocity);

float TempVelocity = velocityLen * c_half_exposure;

bool flagVelocity = (TempVelocity >= EPSILON1);

TempVelocity = clamp(TempVelocity, 0.1f, c_k);

if (flagVelocity)

{

velocity *= (TempVelocity / velocityLen);

velocityLen = length(velocity);

}

// random value in [-0.5f, 0.5f]

float r = psseudoRandom(texcoordBase);

// read the depth buffer value

float depth = GetDepth(texcoordBase);

// if the current fragment's velocity is too small, use the direction of the neighbor max velocity

float2 CorrectedVelocity = (velocityLen < VARIANCE_THRESHOLD) ?

normalize(nVelocity) : normalize(velocity);

// initial weight

float weight = c_s / WEIGHT_CORRECTION_FACTOR / TempVelocity;

// running sum

float3 sum = texColor.xyz * weight;

// index of the sample that coincides with the current fragment

int selfIndex = (c_s - 1) / 2;

float max_sample_tab_distance = c_max_sample_tab_distance / texSize.x;

float2 half_texel = VHALF / texSize.x;

// reconstruction sampling loop

for (int i = 0; i < c_s; ++i)

{

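// skip the sample that coincides with the current fragment

if (i == selfIndex) { continue; }

// T is the signed distance between the current fragment and the sample

// samples are not limited to adjacent fragments; they reach farther out

float lerpAmount = (float(i) + r + 1.0f) / (c_s + 1.0f);

float T = lerp(-max_sample_tab_distance, max_sample_tab_distance, lerpAmount);

// alternate between the corrected velocity and the neighbor max velocity

float2 Switch = ((i & 1) == 1) ? CorrectedVelocity : nVelocity;

// texture coordinate of the current sample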

float2 newSampleTexcoord = float2(texcoordBase + float2(Switch * T + half_texel));

float2 newVelocity = readBiasScale(velocityRT.SampleLevel(SamPointClamp, newSampleTexcoord, 0).xy);

float newVelocityLen = length(newVelocity);

// correct and clamp the half velocity

float TempNewVelocity = newVelocityLen * c_half_exposure;

bool flagNewVelocity = (TempNewVelocity > EPSILON1);

TempNewVelocity = clamp(TempNewVelocity, 0.1f, c_k);

if (flagNewVelocity)

{

newVelocity *= (TempNewVelocity / newVelocityLen);

newVelocityLen = length(newVelocity);

}

// depth at the new sample position

float newDepth = GetDepth(newSampleTexcoord);

float alphaY = softDepthCompare(depth, newDepth) * cone(T, TempNewVelocity)+

softDepthCompare(newDepth, depth) * cone(T, TempVelocity)+ cylinder(T, TempNewVelocity) * cylinder(T, TempVelocity) * 2.0;

weight += alphaY;

sum += (alphaY * colorRT.SampleLevel(SamLinearClamp, newSampleTexcoord, 0)).xyz;

}

return float4(sum / weight, 1.0f);

}

References:

[1] NVIDIA SDK, AdvancedMotionBlur sample

[2] McGuire M., Hennessy P., Bukowski M., Osman B.: A reconstruction filter for plausible motion blur. In I3D (2012), pp. 135-142. Reading this paper is highly recommended.