Preface

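FFmpeg is written mostly in C, so to use the library functions it provides you need to build it into dynamic link libraries: so files on Linux, dll files on Windows. Android is built on the Linux kernel, so using FFmpeg's library functions on Android means building so files. Building them requires a shell script in the FFmpeg source directory, that is, a script of commands that a Linux shell can run (the counterpart of a bat file on Windows). This post describes how to integrate FFmpeg through JNI. If you also want to learn how to integrate FFmpeg with CMake, see "Integrating a JNI development environment with CMake on Android" and "Calling between Java and C/C++ with JNI on Android, and generating and using so libraries (calling the Meitu Xiuxiu so through JNI)". The source code for integrating FFmpeg on Android and for its applications is on GitHub; a star is appreciated. This blog is updated regularly with posts on Android FFmpeg, OpenGL, OpenCV, and OpenSL, so follow it on CSDN if you are interested.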

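This post proceeds in the following steps:

- Compile the FFmpeg source code on Windows to obtain the so library files

- Integrate the so libraries into an Android project using JNI

- Call FFmpeg library functions from a C++ file through native methods

Let's look at the concrete steps.

Compiling FFmpeg to obtain the so library files

To compile FFmpeg on Windows, you need to download the FFmpeg source code and the MinGW toolchain; the build script below also relies on the Android NDK.

Once the FFmpeg source code and MinGW are downloaded, you can start compiling FFmpeg.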

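First, the configure file in the source tree needs to be modified. By default the version number in the generated shared library file names comes after .so (for example "libavcodec.so.5.100.1"), and the Android platform does not recognize such file names, so the naming scheme must be changed. Open the configure file in the FFmpeg folder and find the following lines: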

SLIBNAME_WITH_MAJOR='$(SLIBNAME).$(LIBMAJOR)'

LIB_INSTALL_EXTRA_CMD='$$(RANLIB) "$(LIBDIR)/$(LIBNAME)"'

SLIB_INSTALL_NAME='$(SLIBNAME_WITH_VERSION)'

SLIB_INSTALL_LINKS='$(SLIBNAME_WITH_MAJOR) $(SLIBNAME)'

Change them to:

SLIBNAME_WITH_MAJOR='$(SLIBPREF)$(FULLNAME)-$(LIBMAJOR)$(SLIBSUF)'

LIB_INSTALL_EXTRA_CMD='$$(RANLIB) "$(LIBDIR)/$(LIBNAME)"'

SLIB_INSTALL_NAME='$(SLIBNAME_WITH_MAJOR)'

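SLIB_INSTALL_LINKS='$(SLIBNAME)'

Then create a build_android.sh file. Note: set the first four variables (TMPDIR, NDK, SYSROOT, TOOLCHAIN) to match your environment, and make sure no line ends with trailing whitespace.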

#!/bin/bash

export TMPDIR="C:/Users/Jacket/Desktop/ff"

NDK=C:/Users/Jacket/AppData/Local/Android/sdk/ndk-bundle

SYSROOT=$NDK/platforms/android-21/arch-arm/

TOOLCHAIN=$NDK/toolchains/arm-linux-androideabi-4.9/prebuilt/windows-x86_64

function build_one {

./configure \

--prefix=$PREFIX \

--enable-shared \

--disable-static \

--disable-doc \

--disable-ffmpeg \

--disable-ffplay \

--disable-ffprobe \

--disable-ffserver \

--disable-avdevice \

--disable-symver \

--cross-prefix=$TOOLCHAIN/bin/arm-linux-androideabi- \

--target-os=linux \

--arch=arm \

--enable-cross-compile \

--sysroot=$SYSROOT \

--extra-cflags="-Os -fpic $ADDI_CFLAGS" \

--extra-ldflags="$ADDI_LDFLAGS" \

$ADDITIONAL_CONFIGURE_FLAG

make clean

make

make install

}

CPU=arm

PREFIX=$(pwd)/android/$CPU

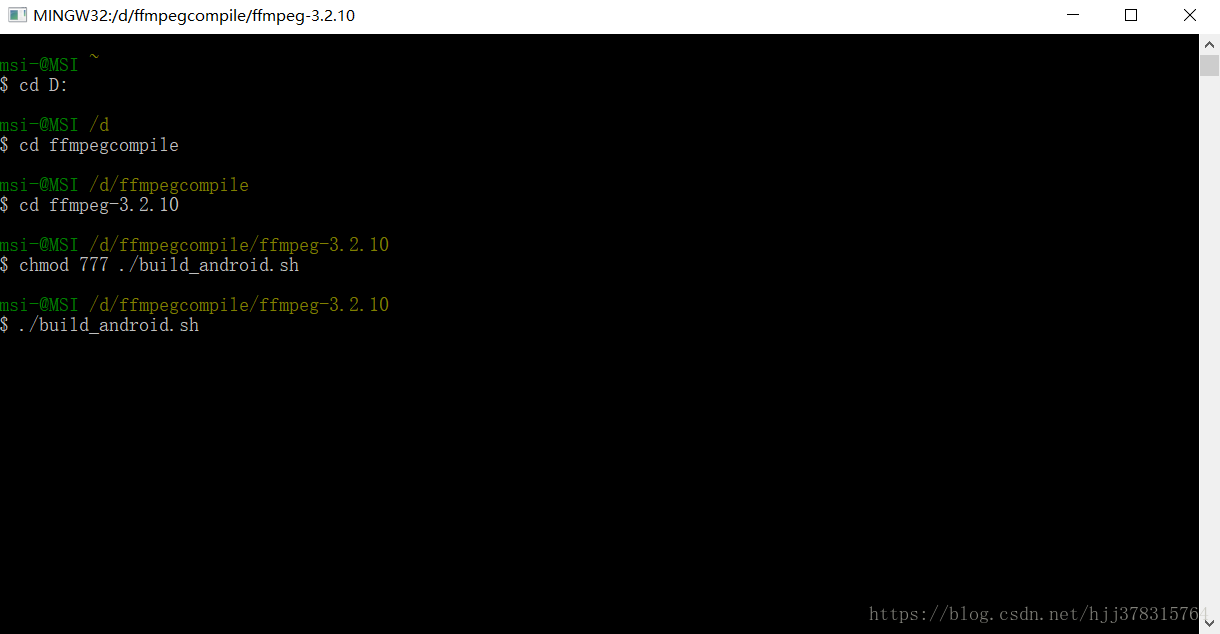

ADDI_CFLAGS="

Launch MinGW, change to the FFmpeg source directory, and run the script:

chmod +x ./build_android.sh

./build_android.sh

The build then starts.
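To make the effect of the configure change concrete, here is a minimal C++ sketch of the two naming schemes. It assumes that SLIBPREF is "lib" and SLIBSUF is ".so", which matches the libavcodec-57.so style names referenced later in CMakeLists.txt. Both helper functions are hypothetical and exist only for illustration.

```cpp
#include <string>

// Hypothetical helper: the default scheme, $(SLIBNAME).$(LIBMAJOR),
// where SLIBNAME is assumed to expand to lib<name>.so.
std::string default_lib_name_with_major(const std::string& name, int major) {
    return "lib" + name + ".so." + std::to_string(major);
}

// Hypothetical helper: the modified scheme,
// $(SLIBPREF)$(FULLNAME)-$(LIBMAJOR)$(SLIBSUF),
// assuming SLIBPREF="lib" and SLIBSUF=".so".
std::string android_lib_name(const std::string& name, int major) {
    return "lib" + name + "-" + std::to_string(major) + ".so";
}
```

With these assumptions, avcodec at major version 57 goes from libavcodec.so.57 to libavcodec-57.so, which keeps the .so extension at the end of the file name.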

Next, port the libraries to Android Studio.

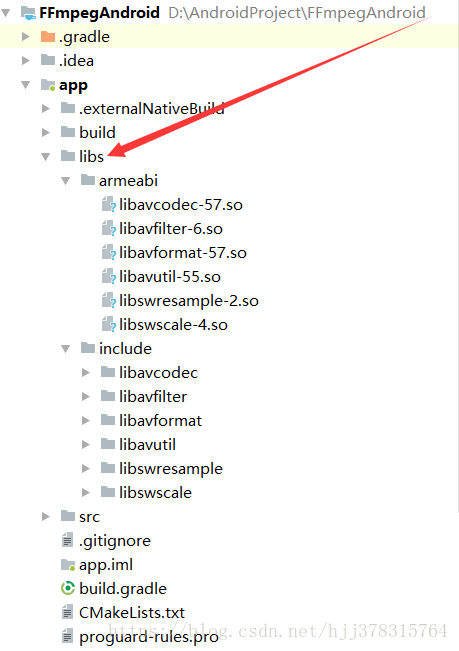

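After obtaining the so library files, create armeabi and include folders under the libs directory. Copy the so files from the android output folder produced by the build into libs/armeabi, and the C header files from its include folder into libs/include, as shown in the figure.

Write the CMakeLists.txt file and specify the FFmpeg library directory in it: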

# For more information about using CMake with Android Studio, read the

# documentation: https://d.android.com/studio/projects/add-native-code.html

# Sets the minimum version of CMake required to build the native library.

cmake_minimum_required(VERSION 3.4.1)

# Creates and names a library, sets it as either STATIC

# or SHARED, and provides the relative paths to its source code.

# You can define multiple libraries, and CMake builds them for you.

# Gradle automatically packages shared libraries with your APK.

add_library( # Sets the name of the library.

native-lib

# Sets the library as a shared library.

SHARED

# Provides a relative path to your source file(s).

src/main/cpp/native-lib.cpp )

# Searches for a specified prebuilt library and stores the path as a

# variable. Because CMake includes system libraries in the search path by

# default, you only need to specify the name of the public NDK library

# you want to add. CMake verifies that the library exists before

# completing its build.

find_library( # Sets the name of the path variable.

log-lib

# Specifies the name of the NDK library that

# you want CMake to locate.

log )

# Specifies libraries CMake should link to your target library. You

# can link multiple libraries, such as libraries you define in this

# build script, prebuilt third-party libraries, or system libraries.

set(distribution_DIR ../../../../libs)

add_library( avcodec-57

SHARED

IMPORTED)

set_target_properties( avcodec-57

PROPERTIES IMPORTED_LOCATION

${distribution_DIR}/armeabi/libavcodec-57.so)

add_library( avfilter-6

SHARED

IMPORTED)

set_target_properties( avfilter-6

PROPERTIES IMPORTED_LOCATION

${distribution_DIR}/armeabi/libavfilter-6.so)

add_library( avformat-57

SHARED

IMPORTED)

set_target_properties( avformat-57

PROPERTIES IMPORTED_LOCATION

${distribution_DIR}/armeabi/libavformat-57.so)

add_library( avutil-55

SHARED

IMPORTED)

set_target_properties( avutil-55

PROPERTIES IMPORTED_LOCATION

${distribution_DIR}/armeabi/libavutil-55.so)

add_library( swresample-2

SHARED

IMPORTED)

set_target_properties( swresample-2

PROPERTIES IMPORTED_LOCATION

${distribution_DIR}/armeabi/libswresample-2.so)

add_library( swscale-4

SHARED

IMPORTED)

set_target_properties( swscale-4

PROPERTIES IMPORTED_LOCATION

${distribution_DIR}/armeabi/libswscale-4.so)

include_directories(libs/include)

target_link_libraries( # Specifies the target library.

native-lib

avcodec-57

avfilter-6

avformat-57

avutil-55

swresample-2

swscale-4

# Links the target library to the log library

# included in the NDK.

${log-lib}

)

3. Write the native methods and call the functions provided by the FFmpeg libraries from the C/C++ file

#include <jni.h>

#include <string>

#include <android/log.h>

extern "C" {

//encoding and decoding

#include "libavcodec/avcodec.h"

//container format handling

#include "libavformat/avformat.h"

//pixel processing

#include "libswscale/swscale.h"

//video filters

#include "libavfilter/avfilter.h"

}

#define FFLOGI(FORMAT,...) __android_log_print(ANDROID_LOG_INFO,"ffmpeg",FORMAT,##__VA_ARGS__);

#define FFLOGE(FORMAT,...) __android_log_print(ANDROID_LOG_ERROR,"ffmpeg",FORMAT,##__VA_ARGS__);

extern "C"

JNIEXPORT jstring JNICALL

Java_com_jacket_ffmpeg_MainActivity_stringFromJNI(

JNIEnv *env,

jobject /* this */) {

// std::string hello = "Hello from C++";

// return env->NewStringUTF(hello.c_str());

// return env->NewStringUTF(av_version_info());

return env->NewStringUTF(avcodec_configuration());

}

extern "C"

JNIEXPORT void JNICALL

Java_com_jacket_ffmpeg_MainActivity_decode(JNIEnv *env, jobject /* this */, jstring input_,

jstring output_) {

//get the input and output file names

const char *input = env->GetStringUTFChars(input_, 0);

const char *output = env->GetStringUTFChars(output_, 0);

//1. register all components

av_register_all();

//container format context: the top-level struct that holds information about the file's container format

AVFormatContext *pFormatCtx = avformat_alloc_context();

//2. open the input video file

if (avformat_open_input(&pFormatCtx, input, NULL, NULL) != 0)

{

FFLOGE("%s","Cannot open the input video file");

return;

}

//3. read the stream information of the video file

if (avformat_find_stream_info(pFormatCtx,NULL) < 0)

{

FFLOGE("%s","Cannot read the video file information");

return;

}

//find the index of the video stream

//iterate over all streams (audio, video, subtitle) and pick the video stream

int v_stream_idx = -1;

int i = 0;

//number of streams

for (; i < pFormatCtx->nb_streams; i++)

{

//stream type

if (pFormatCtx->streams[i]->codec->codec_type == AVMEDIA_TYPE_VIDEO)

{

v_stream_idx = i;

break;

}

}

if (v_stream_idx == -1)

{

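FFLOGE("%s","Cannot find a video stream\n");

return;

}

//the decoder can only be located once the codec of the video is known

//get the codec context of the video stream

AVCodecContext *pCodecCtx = pFormatCtx->streams[v_stream_idx]->codec;

//4. find the decoder that matches the codec id in the codec context

AVCodec *pCodec = avcodec_find_decoder(pCodecCtx->codec_id);

if (pCodec == NULL)

{

FFLOGE("%s","Cannot find a decoder\n");

return;

}

//5. open the decoder

if (avcodec_open2(pCodecCtx,pCodec,NULL)<0)

{

FFLOGE("%s","Cannot open the decoder\n");

return;

}

//print video information

FFLOGI("Container format: %s",pFormatCtx->iformat->name);

//duration is an int64_t in microseconds, so print it as long long

FFLOGI("Duration (s): %lld", (long long)((pFormatCtx->duration)/1000000));

FFLOGI("Width and height: %d,%d",pCodecCtx->width,pCodecCtx->height);

FFLOGI("Decoder name: %s",pCodec->name);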

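//prepare for reading

//AVPacket holds the compressed data of one frame at a time (H264)

//allocate the packet

AVPacket *packet = (AVPacket*)av_malloc(sizeof(AVPacket));

//AVFrame holds the decoded pixel data (YUV)

//allocate the frame

AVFrame *pFrame = av_frame_alloc();

//YUV420

AVFrame *pFrameYUV = av_frame_alloc();

//memory can only be allocated once the AVFrame's pixel format and picture size are known

//allocate the buffer

uint8_t *out_buffer = (uint8_t *)av_malloc(avpicture_get_size(AV_PIX_FMT_YUV420P, pCodecCtx->width, pCodecCtx->height));

//attach the buffer to the frame

avpicture_fill((AVPicture *)pFrameYUV, out_buffer, AV_PIX_FMT_YUV420P, pCodecCtx->width, pCodecCtx->height);

//parameters for the conversion (scaling): source width and height, destination width and height, pixel formats, and so on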

struct SwsContext *sws_ctx = sws_getContext(pCodecCtx->width,pCodecCtx->height,pCodecCtx->pix_fmt,

pCodecCtx->width, pCodecCtx->height, AV_PIX_FMT_YUV420P,

SWS_BICUBIC, NULL, NULL, NULL);

int got_picture, ret;

FILE *fp_yuv = fopen(output, "wb+");

int frame_count = 0;

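//6. read the compressed data frame by frame

while (av_read_frame(pFormatCtx, packet) >= 0)

{

//only handle video packets (checked by stream index)

if (packet->stream_index == v_stream_idx)

{

//7. decode one packet of compressed video data into pixel data

ret = avcodec_decode_video2(pCodecCtx, pFrame, &got_picture, packet);

if (ret < 0)

{

FFLOGE("%s","Decoding error");

return;

}

//got_picture is nonzero when a frame was decoded, zero when no frame is available yet

if (got_picture)

{

//convert the AVFrame to the YUV420 pixel format at the given width and height

//arguments 2 and 6: input and output data

//arguments 3 and 7: bytes per row of the input and output pictures (the conversion works row by row)

//argument 4: first row of the input to convert, starting at 0

//argument 5: height of the input picture

sws_scale(sws_ctx, pFrame->data, pFrame->linesize, 0, pCodecCtx->height,

pFrameYUV->data, pFrameYUV->linesize);

//write to the YUV file

//write the AVFrame pixel data to the file

//data holds the decoded image pixel data (or audio samples)

//Y is luma, UV is chroma (subsampled); the eye is more sensitive to luma

//U and V each have 1/4 as many samples as Y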

int y_size = pCodecCtx->width * pCodecCtx->height;

fwrite(pFrameYUV->data[0], 1, y_size, fp_yuv);

fwrite(pFrameYUV->data[1], 1, y_size / 4, fp_yuv);

fwrite(pFrameYUV->data[2], 1, y_size / 4, fp_yuv);

frame_count++;

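FFLOGI("Decoded frame %d",frame_count);

}

}

//release the data held by the packet

av_free_packet(packet);

}

fclose(fp_yuv);

av_frame_free(&pFrame);

//also release the converted frame, its buffer, the scaler and the packet struct

av_frame_free(&pFrameYUV);

av_free(out_buffer);

sws_freeContext(sws_ctx);

av_free(packet);

avcodec_close(pCodecCtx);

//a context opened with avformat_open_input is closed with avformat_close_input

avformat_close_input(&pFormatCtx);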

env->ReleaseStringUTFChars(input_, input);

env->ReleaseStringUTFChars(output_, output);

}

extern "C"

JNIEXPORT jstring JNICALL

Java_com_jacket_ffmpeg_MainActivity_avfilterinfo(

JNIEnv * env,jobject){

char info[40000] = {0};

avfilter_register_all();

AVFilter *f_temp = (AVFilter *)avfilter_next(NULL);

while (f_temp != NULL){

sprintf(info,"%s%s\n",info,f_temp->name);

f_temp = f_temp->next;

}

return env->NewStringUTF(info);

}

extern "C"

JNIEXPORT jstring JNICALL

Java_com_jacket_ffmpeg_MainActivity_avcodecinfo(

JNIEnv * env,jobject){

char info[40000] = {0};

av_register_all();

AVCodec *c_temp = av_codec_next(NULL);

while(c_temp != NULL){

if(c_temp->decode != NULL){

sprintf(info,"%sdecode:",info);

} else{

sprintf(info,"%sencode:",info);

}

switch(c_temp->type){

case AVMEDIA_TYPE_VIDEO:

sprintf(info,"%s(video):",info);

break;

case AVMEDIA_TYPE_AUDIO:

sprintf(info,"%s(audio):",info);

break;

default:

sprintf(info,"%s(other):",info);

break;

}

sprintf(info,"%s[%10s]\n",info,c_temp->name);

c_temp = c_temp->next;

}

return env->NewStringUTF(info);

}

extern "C"

JNIEXPORT jstring JNICALL

Java_com_jacket_ffmpeg_MainActivity_avformatinfo(

JNIEnv * env,jobject){

char info[40000] = {0};

av_register_all();

AVInputFormat *if_temp = av_iformat_next(NULL);

AVOutputFormat *of_temp = av_oformat_next(NULL);

while (if_temp != NULL){

sprintf(info,"%sInput: %s\n",info,if_temp->name);

if_temp = if_temp->next;

}

while (of_temp != NULL){

sprintf(info,"%sOutput: %s\n",info,of_temp->name);

of_temp = of_temp->next;

}

return env->NewStringUTF(info);

}

extern "C"

JNIEXPORT jstring JNICALL

Java_com_jacket_ffmpeg_MainActivity_urlprotocolinfo(

JNIEnv * env,jobject){

char info[40000] = {0};

av_register_all();

struct URLProtocol *pup = NULL;

struct URLProtocol **p_temp = &pup;

avio_enum_protocols((void **)p_temp,0);

while ((*p_temp) != NULL){

sprintf(info,"%sInput: %s\n",info,avio_enum_protocols((void **)p_temp,0));

}

pup = NULL;

avio_enum_protocols((void **)p_temp,1);

while ((*p_temp) != NULL){

sprintf(info,"%sOutput: %s\n",info,avio_enum_protocols((void **)p_temp,1));

}

return env->NewStringUTF(info);

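}

As you can see, the default Java_com_jacket_ffmpeg_MainActivity_stringFromJNI() simply returns a string (see the commented-out lines). Note that a C char[] does not map directly to Java's String type (jstring); converting a char[] to a String requires JNIEnv's NewStringUTF() function. The source of Java_com_jacket_ffmpeg_MainActivity_stringFromJNI, modified to call FFmpeg, is as follows: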

extern "C"

JNIEXPORT jstring JNICALL

Java_com_jacket_ffmpeg_MainActivity_stringFromJNI(

JNIEnv *env,

jobject /* this */) {

return env->NewStringUTF(avcodec_configuration());

}

native-lib.cpp calls libavcodec's avcodec_configuration() function to obtain FFmpeg's configuration information. The program outputs the following information about the FFmpeg libraries:

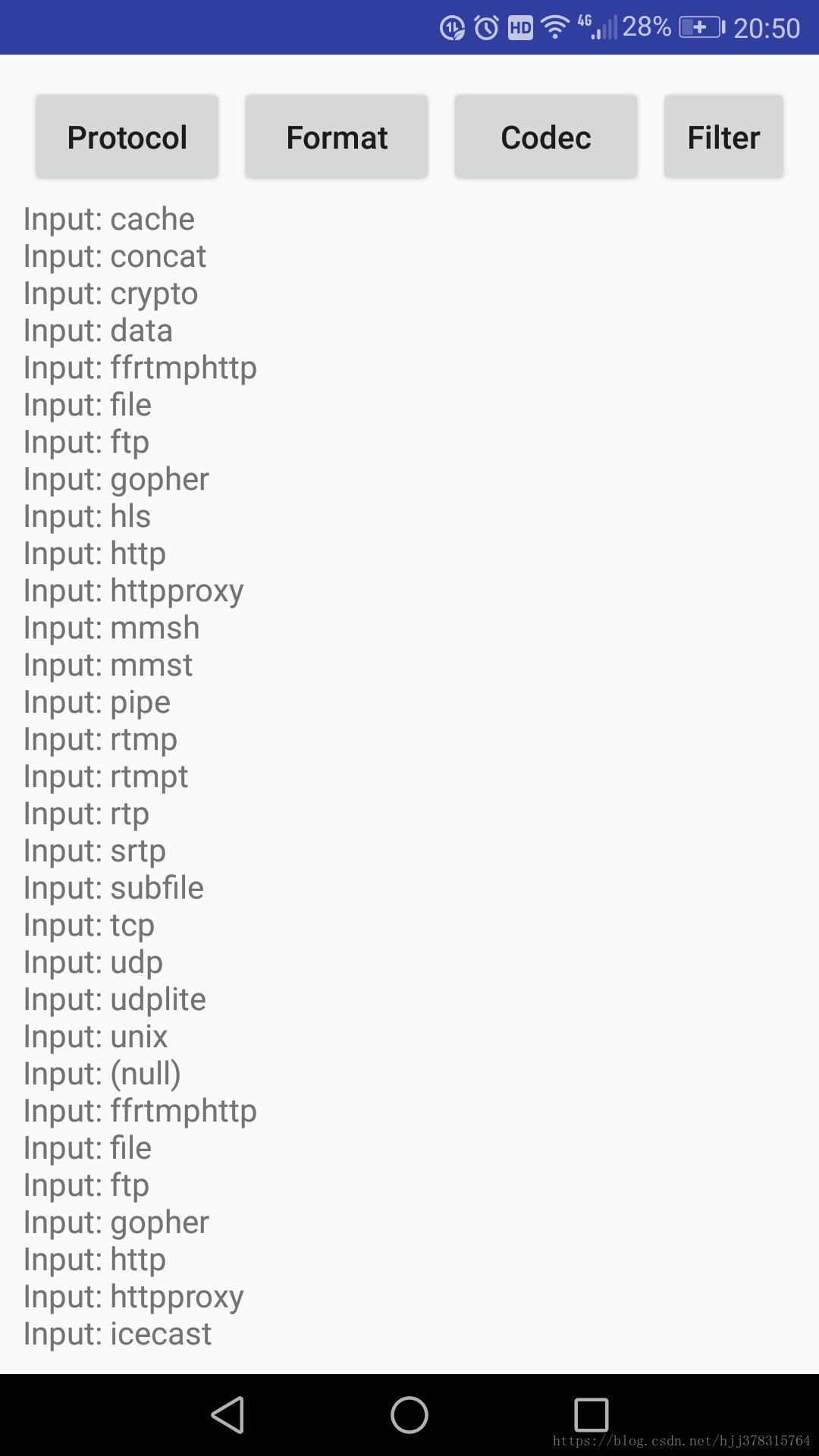

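- Protocol: the protocols supported by the FFmpeg libraries

- AVFormat: the container formats supported by the FFmpeg libraries

- AVCodec: the codecs supported by the FFmpeg libraries

- AVFilter: the filters supported by the FFmpeg libraries

- Configure: the configuration information of the FFmpeg libraries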
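The native function names in native-lib.cpp follow the JNI naming convention: Java_, then the fully qualified class name with dots replaced by underscores, then the method name. Below is a minimal sketch of that mapping. It assumes the class is com.jacket.ffmpeg.MainActivity (inferred from the function names above) and handles only the "." and "_" escapes; jni_mangle and jni_function_name are hypothetical helpers, not part of JNI.

```cpp
#include <string>

// Hypothetical helper: escapes one name component.
// Only two JNI escapes are handled here: '.' or '/' becomes "_",
// and a literal '_' becomes "_1". Other escapes (';', '[', non-ASCII
// characters) are deliberately left out of this sketch.
std::string jni_mangle(const std::string& s) {
    std::string out;
    for (char c : s) {
        if (c == '.' || c == '/') {
            out += '_';
        } else if (c == '_') {
            out += "_1";
        } else {
            out += c;
        }
    }
    return out;
}

// Hypothetical helper: builds the C symbol name the JVM looks up for a
// non-overloaded native method of the given class.
std::string jni_function_name(const std::string& fq_class,
                              const std::string& method) {
    return "Java_" + jni_mangle(fq_class) + "_" + jni_mangle(method);
}
```

If the exported symbol does not match the name derived from the Java declaration, the call fails at runtime with an UnsatisfiedLinkError, so the package name, class name, and method name all have to line up.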

Finally, call the C/C++ functions from MainActivity through the native methods:

public class MainActivity extends AppCompatActivity implements View.OnClickListener

{

// Used to load the 'native-lib' library on application startup.

static

{

System.loadLibrary("native-lib");

// System.loadLibrary("avcodec-57");

// System.loadLibrary("avfilter-6");

// System.loadLibrary("avformat-57");

// System.loadLibrary("avutil-55");

// System.loadLibrary("swresample-2");

// System.loadLibrary("swscale-4");

}

private Button protocol,format,codec,filter;

private TextView tv_info;

@Override

protected void onCreate(Bundle savedInstanceState)

{

super.onCreate(savedInstanceState);

setContentView(R.layout.activity_main);

init();

}

private void init() {

protocol = (Button) findViewById(R.id.btn_protocol);

format = (Button) findViewById(R.id.btn_format);

codec = (Button) findViewById(R.id.btn_codec);

filter = (Button) findViewById(R.id.btn_filter);

tv_info = (TextView) findViewById(R.id.tv_info);

protocol.setOnClickListener(this);

format.setOnClickListener(this);

codec.setOnClickListener(this);

filter.setOnClickListener(this);

}

@Override

public void onClick(View view) {

switch (view.getId()) {

case R.id.btn_protocol:

tv_info.setText(urlprotocolinfo());

break;

case R.id.btn_format:

tv_info.setText(avformatinfo());

break;

case R.id.btn_codec:

tv_info.setText(avcodecinfo());

break;

case R.id.btn_filter:

tv_info.setText(avfilterinfo());

break;

default:

break;

}

}

@Override

public boolean onCreateOptionsMenu(Menu menu)

{

// Inflate the menu; this adds items to the action bar if it is present.

getMenuInflater().inflate(R.menu.menu_main, menu);

return true;

}

@Override

public boolean onOptionsItemSelected(MenuItem item)

{

// Handle action bar item clicks here. The action bar will

// automatically handle clicks on the Home/Up button, so long

// as you specify a parent activity in AndroidManifest.xml.

int id = item.getItemId();

//noinspection SimplifiableIfStatement

if (id == R.id.action_settings)

{

return true;

}

return super.onOptionsItemSelected(item);

}

/**

* A native method that is implemented by the 'native-lib' native library,

* which is packaged with this application.

*/

public native String stringFromJNI();

public native void decode(String input, String output);

public native String avfilterinfo();

public native String avcodecinfo();

public native String avformatinfo();

public native String urlprotocolinfo();

}

The result of running the app is shown below:

Finally, the code for this post is available on GitHub.
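A closing note on the info functions in native-lib.cpp: they build their result with calls of the form sprintf(info, "%s...", info, ...), where the destination buffer is also passed as a source argument. The C standard leaves copying between overlapping objects undefined, and nothing limits the output to the 40000-byte buffer. One possible alternative is sketched below; append_format is a hypothetical helper, not an FFmpeg or JNI function.

```cpp
#include <cstdarg>
#include <cstddef>
#include <cstdio>
#include <cstring>

// Hypothetical helper: appends formatted text to the end of a
// NUL-terminated buffer of capacity cap, never writing past it.
// Returns false if the text was truncated or formatting failed.
bool append_format(char* buf, std::size_t cap, const char* fmt, ...) {
    std::size_t used = std::strlen(buf);
    if (used >= cap) {
        return false;  // buffer is not a valid NUL-terminated string of this capacity
    }
    va_list args;
    va_start(args, fmt);
    // Write only into the unused tail, so source and destination never overlap.
    int n = std::vsnprintf(buf + used, cap - used, fmt, args);
    va_end(args);
    return n >= 0 && static_cast<std::size_t>(n) < cap - used;
}
```

In avfilterinfo(), for example, the loop body could then read append_format(info, sizeof(info), "%s\n", f_temp->name); and the other info functions could be adapted the same way.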