Let's start with the no-argument constructor of ConcurrentHashMap.

public ConcurrentHashMap() {

}

As you can see, the constructor does not allocate the hash table at all.

Next, let's look at the put method.

public V put(K key, V value) {

return putVal(key, value, false);

}

It simply delegates to putVal.

final V putVal(K key, V value, boolean onlyIfAbsent) {

if (key == null || value == null) throw new NullPointerException(); // neither key nor value may be null

int hash = spread(key.hashCode()); // compute the spread hash of the key

int binCount = 0;

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

if (tab == null || (n = tab.length) == 0)

tab = initTable(); // the first put lands here and initializes the table

else if ((f = tabAt(tab, i = (n - 1) & hash)) == null) { // the bin is empty: try to CAS the new node straight in

if (casTabAt(tab, i, null,

new Node<K,V>(hash, key, value, null)))

break; // no lock when adding to empty bin

}

else if ((fh = f.hash) == MOVED) // the head is a ForwardingNode, so a resize is in progress: help with it

tab = helpTransfer(tab, f);

else { // otherwise there is a hash collision in this bin

V oldVal = null;

synchronized (f) {

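if (tabAt(tab, i) == f) { // recheck that f is still the head of the bin now that we hold its lock

if (fh >= 0) { // a non-negative hash means a linked list: walk it, update the value if the key already exists, otherwise append a new node at the tail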

binCount = 1;

for (Node<K,V> e = f;; ++binCount) {

K ek;

if (e.hash == hash &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

oldVal = e.val;

if (!onlyIfAbsent)

e.val = value;

break;

}

Node<K,V> pred = e;

if ((e = e.next) == null) {

pred.next = new Node<K,V>(hash, key,

value, null);

break;

}

}

}

else if (f instanceof TreeBin) { // the bin is a red-black tree

Node<K,V> p;

binCount = 2;

if ((p = ((TreeBin<K,V>)f).putTreeVal(hash, key,

value)) != null) {

oldVal = p.val;

if (!onlyIfAbsent)

p.val = value;

}

}

}

}

if (binCount != 0) { // check whether the bin should be converted to a red-black tree

if (binCount >= TREEIFY_THRESHOLD)

treeifyBin(tab, i);

if (oldVal != null)

return oldVal;

break;

}

}

}

addCount(1L, binCount); // increment the element count (and check whether a resize is needed)

return null;

}

Now let's look at how the key's hash is computed.

static final int spread(int h) {

return (h ^ (h >>> 16)) & HASH_BITS; // XOR the hashCode with itself shifted right by 16 bits, then AND the result with HASH_BITS (0x7fffffff)

}

Next, the initialization method initTable.
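Before stepping into initTable, it may help to try the spread step in isolation. The sketch below copies the spread expression and the `(n - 1) & hash` index computation from putVal into a standalone class. The class name `SpreadDemo` and the helper `indexFor` are made up for illustration and are not part of the JDK.

```java
final class SpreadDemo {
    // Same value as in the listing: a mask that clears the sign bit.
    static final int HASH_BITS = 0x7fffffff;

    // Mirrors spread(): fold the high 16 bits into the low 16 with XOR,
    // then clear the sign bit so that ordinary nodes never have a negative hash.
    static int spread(int h) {
        return (h ^ (h >>> 16)) & HASH_BITS;
    }

    // Hypothetical helper: bucket index for a table whose length n is a power of two.
    static int indexFor(int hash, int n) {
        return (n - 1) & hash;
    }

    public static void main(String[] args) {
        int n = 16;
        int a = 0x00010000, b = 0x00020000; // differ only in the high 16 bits

        // Without spreading, both land in bucket 0.
        System.out.println(indexFor(a, n) + " " + indexFor(b, n));                 // prints "0 0"
        // With spreading, the high bits influence the index.
        System.out.println(indexFor(spread(a), n) + " " + indexFor(spread(b), n)); // prints "1 2"
        // The sign bit is always cleared.
        System.out.println(spread(-1) >= 0);                                       // prints "true"
    }
}
```

Clearing the sign bit matters because negative hash values are reserved for special nodes, such as the MOVED marker checked in putVal.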

private final Node<K,V>[] initTable() {

Node<K,V>[] tab; int sc;

while ((tab = table) == null || tab.length == 0) {

if ((sc = sizeCtl) < 0) // another thread is already initializing the table; only one thread may do so, so the others yield and spin instead of blocking

Thread.yield(); // lost initialization race; just spin

else if (U.compareAndSwapInt(this, SIZECTL, sc, -1)) { // CAS sizeCtl to -1 to claim the initialization for this thread

try {

if ((tab = table) == null || tab.length == 0) {

int n = (sc > 0) ? sc : DEFAULT_CAPACITY; // if sc > 0 the table gets sc slots; otherwise DEFAULT_CAPACITY = 16

@SuppressWarnings("unchecked")

Node<K,V>[] nt = (Node<K,V>[])new Node<?,?>[n];

table = tab = nt;

sc = n - (n >>> 2); // sc = 0.75 * n

}

} finally {

sizeCtl = sc; // 0.75 * n, which serves as the resize threshold

}

break;

}

}

return tab;

}

Now let's look at the addCount method.
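Before moving on to addCount, here is a simplified sketch of the initialization race in initTable. It swaps the Unsafe CAS on the `sizeCtl` field for a `java.util.concurrent.atomic.AtomicInteger`, so it illustrates the pattern rather than reproducing the JDK implementation. The class `LazyTable` and its `threshold()` accessor are hypothetical names.

```java
import java.util.concurrent.atomic.AtomicInteger;

final class LazyTable {
    static final int DEFAULT_CAPACITY = 16;

    private volatile Object[] table;
    // Before init: requested capacity (0 means default). During init: -1.
    // After init: the resize threshold, 0.75 * n.
    private final AtomicInteger sizeCtl;

    LazyTable(int initialCapacity) {
        sizeCtl = new AtomicInteger(initialCapacity);
    }

    Object[] initTable() {
        Object[] tab;
        int sc;
        while ((tab = table) == null) {
            if ((sc = sizeCtl.get()) < 0) {
                Thread.yield();                 // lost the race: spin until the winner publishes the table
            } else if (sizeCtl.compareAndSet(sc, -1)) {
                try {
                    if ((tab = table) == null) { // recheck after winning the CAS
                        int n = (sc > 0) ? sc : DEFAULT_CAPACITY;
                        table = tab = new Object[n];
                        sc = n - (n >>> 2);      // 0.75 * n
                    }
                } finally {
                    sizeCtl.set(sc);             // publish the threshold (or restore the old value)
                }
                break;
            }
        }
        return tab;
    }

    int threshold() {
        return sizeCtl.get();
    }

    public static void main(String[] args) throws InterruptedException {
        LazyTable t = new LazyTable(0);
        Object[][] seen = new Object[8][];
        Thread[] threads = new Thread[8];
        for (int i = 0; i < threads.length; i++) {
            final int id = i;
            threads[i] = new Thread(() -> seen[id] = t.initTable());
            threads[i].start();
        }
        for (Thread th : threads) th.join();

        boolean same = true;
        for (Object[] s : seen) same &= (s == seen[0]);
        // Every thread sees the one array that the CAS winner allocated.
        System.out.println(same + " " + seen[0].length + " " + t.threshold()); // prints "true 16 12"
    }
}
```

The recheck of `table` inside the try block is what keeps a late thread, one that wins the CAS after the table has already been published, from allocating a second array.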

private final void addCount(long x, int check) {

CounterCell[] as; long b, s;

if ((as = counterCells) != null || // add x to the element count

!U.compareAndSwapLong(this, BASECOUNT, b = baseCount, s = b + x)) {

CounterCell a; long v; int m;

boolean uncontended = true;

if (as == null || (m = as.length - 1) < 0 ||

(a = as[ThreadLocalRandom.getProbe() & m]) == null ||

!(uncontended =

U.compareAndSwapLong(a, CELLVALUE, v = a.value, v + x))) {

fullAddCount(x, uncontended);

return;

}

if (check <= 1)

return;

s = sumCount();

}

if (check >= 0) {

Node<K,V>[] tab, nt; int n, sc;

while (s >= (long)(sc = sizeCtl) && (tab = table) != null &&

(n = tab.length) < MAXIMUM_CAPACITY) {

int rs = resizeStamp(n);

if (sc < 0) {

if ((sc >>> RESIZE_STAMP_SHIFT) != rs || sc == rs + 1 ||

sc == rs + MAX_RESIZERS || (nt = nextTable) == null ||

transferIndex <= 0)

break;

if (U.compareAndSwapInt(this, SIZECTL, sc, sc + 1))

transfer(tab, nt);

}

else if (U.compareAndSwapInt(this, SIZECTL, sc,

(rs << RESIZE_STAMP_SHIFT) + 2))

transfer(tab, null); // start a resize

s = sumCount();

}

}

}

The resize itself is carried out by the transfer method.

private final void transfer(Node<K,V>[] tab, Node<K,V>[] nextTab) {

int n = tab.length, stride;

if ((stride = (NCPU > 1) ? (n >>> 3) / NCPU : n) < MIN_TRANSFER_STRIDE)

stride = MIN_TRANSFER_STRIDE; // subdivide range

if (nextTab == null) { // initiating

try {

@SuppressWarnings("unchecked")

Node<K,V>[] nt = (Node<K,V>[])new Node<?,?>[n << 1]; // the new table is twice the size of the old one, i.e. 2 * n

nextTab = nt;

} catch (Throwable ex) { // try to cope with OOME

sizeCtl = Integer.MAX_VALUE;

return;

}

nextTable = nextTab;

transferIndex = n; // transferIndex is where the next thread that joins the resize starts migrating; it begins at the end of the old table

}

int nextn = nextTab.length;

ForwardingNode<K,V> fwd = new ForwardingNode<K,V>(nextTab); // a bin that holds fwd has already been migrated

boolean advance = true;

boolean finishing = false; // to ensure sweep before committing nextTab

for (int i = 0, bound = 0;;) {

Node<K,V> f; int fh;

while (advance) { // keep walking backwards from the end of the old table

int nextIndex, nextBound;

if (--i >= bound || finishing)

advance = false;

else if ((nextIndex = transferIndex) <= 0) {

i = -1;

advance = false;

}

else if (U.compareAndSwapInt

(this, TRANSFERINDEX, nextIndex,

nextBound = (nextIndex > stride ?

nextIndex - stride : 0))) {

bound = nextBound;

i = nextIndex - 1;

advance = false;

}

}

if (i < 0 || i >= n || i + n >= nextn) {

int sc;

if (finishing) {

nextTable = null;

table = nextTab;

sizeCtl = (n << 1) - (n >>> 1);

return;

}

if (U.compareAndSwapInt(this, SIZECTL, sc = sizeCtl, sc - 1)) {

if ((sc - 2) != resizeStamp(n) << RESIZE_STAMP_SHIFT)

return;

finishing = advance = true;

i = n; // recheck before commit

}

}

else if ((f = tabAt(tab, i)) == null) // an empty bin in the old table is simply marked with fwd

advance = casTabAt(tab, i, null, fwd);

else if ((fh = f.hash) == MOVED) // already fwd: this bin has been migrated

advance = true; // already processed

else {

synchronized (f) {

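if (tabAt(tab, i) == f) { // recheck the bin head now that we hold its lock

Node<K,V> ln, hn; // the bin is split into two lists: low (ln) and high (hn)

if (fh >= 0) { // linked list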

int runBit = fh & n;

Node<K,V> lastRun = f;

for (Node<K,V> p = f.next; p != null; p = p.next) {

int b = p.hash & n;

if (b != runBit) {

runBit = b;

lastRun = p;

}

}

if (runBit == 0) {

ln = lastRun;

hn = null;

}

else {

hn = lastRun;

ln = null;

}

for (Node<K,V> p = f; p != lastRun; p = p.next) {

int ph = p.hash; K pk = p.key; V pv = p.val;

if ((ph & n) == 0)

ln = new Node<K,V>(ph, pk, pv, ln);

else

hn = new Node<K,V>(ph, pk, pv, hn);

}

setTabAt(nextTab, i, ln); // the low list goes to index i

setTabAt(nextTab, i + n, hn); // the high list goes to index i + n

setTabAt(tab, i, fwd); // mark the old bin with fwd so that any other thread reaching it skips it

advance = true;

}

else if (f instanceof TreeBin) { // red-black tree

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> lo = null, loTail = null;

TreeNode<K,V> hi = null, hiTail = null;

int lc = 0, hc = 0;

for (Node<K,V> e = t.first; e != null; e = e.next) {

int h = e.hash;

TreeNode<K,V> p = new TreeNode<K,V>

(h, e.key, e.val, null, null);

if ((h & n) == 0) {

if ((p.prev = loTail) == null)

lo = p;

else

loTail.next = p;

loTail = p;

++lc;

}

else {

if ((p.prev = hiTail) == null)

hi = p;

else

hiTail.next = p;

hiTail = p;

++hc;

}

}

ln = (lc <= UNTREEIFY_THRESHOLD) ? untreeify(lo) :

(hc != 0) ? new TreeBin<K,V>(lo) : t;

hn = (hc <= UNTREEIFY_THRESHOLD) ? untreeify(hi) :

(lc != 0) ? new TreeBin<K,V>(hi) : t;

setTabAt(nextTab, i, ln);

setTabAt(nextTab, i + n, hn);

setTabAt(tab, i, fwd);

advance = true;

}

}

}

}

}

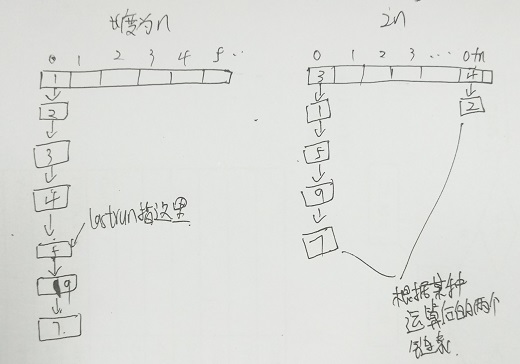

}这里画一张图,帮助理解上面的两个链表的特点和原链表的区别。

这里可以看出,lastrun后面的结点是和原链表一样的,前面的都被逆序了。可以看一下Node的构造方法,有助于理解。
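The list-splitting step can also be traced in a small standalone program. The sketch below copies the lastRun logic from transfer onto a stripped-down node type that keeps only `hash` and `next`. The names `LastRunSplit`, `listOf`, and `hashes` are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;

final class LastRunSplit {
    // Stripped-down stand-in for Node<K,V>: only the fields the split needs.
    static final class Node {
        final int hash;
        final Node next;
        Node(int hash, Node next) { this.hash = hash; this.next = next; }
    }

    // Splits the non-empty list starting at f for an old table of length n.
    // Returns {ln, hn}: the list for index i and the list for index i + n.
    static Node[] split(Node f, int n) {
        int runBit = f.hash & n;
        Node lastRun = f;
        // Find the start of the longest tail whose nodes all go to the same list.
        for (Node p = f.next; p != null; p = p.next) {
            int b = p.hash & n;
            if (b != runBit) { runBit = b; lastRun = p; }
        }
        Node ln = null, hn = null;
        if (runBit == 0) ln = lastRun; else hn = lastRun; // reuse the tail without copying
        // Copy every node before lastRun, prepending it to its list (hence the reversal).
        for (Node p = f; p != lastRun; p = p.next) {
            if ((p.hash & n) == 0) ln = new Node(p.hash, ln);
            else                   hn = new Node(p.hash, hn);
        }
        return new Node[] { ln, hn };
    }

    // Hypothetical helpers for building and printing lists.
    static Node listOf(int... hashes) {
        Node head = null;
        for (int i = hashes.length - 1; i >= 0; i--) head = new Node(hashes[i], head);
        return head;
    }

    static List<Integer> hashes(Node head) {
        List<Integer> out = new ArrayList<>();
        for (Node p = head; p != null; p = p.next) out.add(p.hash);
        return out;
    }

    public static void main(String[] args) {
        // All of these hashes map to bucket 1 in a table of length 16.
        Node head = listOf(1, 17, 33, 49, 65, 97, 129);
        Node[] r = split(head, 16);
        System.out.println(hashes(r[0])); // prints "[33, 1, 65, 97, 129]"
        System.out.println(hashes(r[1])); // prints "[49, 17]"
    }
}
```

In this run lastRun is the node with hash 65, so the tail 65, 97, 129 is shared with the old list in its original order, while 1 and 33 (low list) and 17 and 49 (high list) are copies that appear reversed.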

Once every bin of the old table has been set to fwd, the migration is complete.

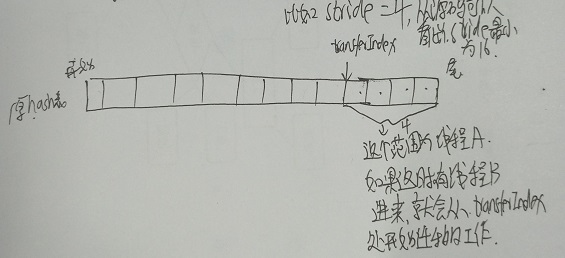

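Here stride is the step size: each thread is responsible for migrating a range of stride bins at a time. transferIndex marks the position where the next thread starts migrating.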
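To make stride and transferIndex more concrete, the sketch below reproduces the stride formula from the top of transfer and the range-claiming CAS from its `while (advance)` loop. It uses an `AtomicInteger` in place of the Unsafe CAS on the `transferIndex` field and passes the CPU count as a parameter instead of reading NCPU. `StrideDemo` and `claim` are hypothetical names.

```java
import java.util.concurrent.atomic.AtomicInteger;

final class StrideDemo {
    static final int MIN_TRANSFER_STRIDE = 16;

    // Same formula as in transfer(): split n/8 bins across the CPUs,
    // but never hand out fewer than MIN_TRANSFER_STRIDE bins at a time.
    static int stride(int n, int ncpu) {
        int stride = (ncpu > 1) ? (n >>> 3) / ncpu : n;
        return (stride < MIN_TRANSFER_STRIDE) ? MIN_TRANSFER_STRIDE : stride;
    }

    // Claims the next range of bins, walking backwards from the end of the table.
    // Returns {bound, i}: the thread migrates bins i, i-1, ..., bound.
    // Returns null when every range has already been claimed.
    static int[] claim(AtomicInteger transferIndex, int stride) {
        for (;;) {
            int nextIndex = transferIndex.get();
            if (nextIndex <= 0) return null;
            int nextBound = (nextIndex > stride) ? nextIndex - stride : 0;
            if (transferIndex.compareAndSet(nextIndex, nextBound))
                return new int[] { nextBound, nextIndex - 1 };
            // CAS failed: another thread claimed a range first, so retry.
        }
    }

    public static void main(String[] args) {
        int n = 64;
        int stride = stride(n, 4);                    // (64 >>> 3) / 4 = 2, raised to 16
        AtomicInteger transferIndex = new AtomicInteger(n);
        int[] range;
        while ((range = claim(transferIndex, stride)) != null)
            System.out.println(range[0] + ".." + range[1]);
        // prints "48..63", "32..47", "16..31", "0..15", one per line
    }
}
```

Because each claim is a single CAS on a shared index, threads that join the resize late pick up whatever ranges are left without coordinating with each other directly.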