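The main function in ovs-vswitchd.c eventually enters a while loop that runs until the daemon is asked to exit. Inside this loop, the two most important functions are bridge_run() and netdev_run().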

bridge_init(remote);
free(remote);

exiting = false;
while (!exiting) { /* loop until asked to exit */
    memory_run();
    if (memory_should_report()) {
        struct simap usage;

        simap_init(&usage);
        bridge_get_memory_usage(&usage);
        memory_report(&usage);
        simap_destroy(&usage);
    }
    bridge_run(); /* handles OVSDB configuration changes (e.g. from ovs-vsctl), controller interaction, and ovs-ofctl commands */
    unixctl_server_run(unixctl); /* handles ovs-appctl commands */
    netdev_run();

    memory_wait();
    bridge_wait();
    unixctl_server_wait(unixctl);
    netdev_wait();
    if (exiting) {
        poll_immediate_wake();
    }
    poll_block(); /* blocks here when there is nothing to process */
    if (should_service_stop()) {
        exiting = true;
    }
}
bridge_exit();
unixctl_server_destroy(unixctl);
service_stop();

Open vSwitch mainly manages two kinds of devices: the virtual bridges that have been created, and the devices attached to those bridges.

Of these, bridge_run is what initializes the virtual bridges already recorded in the database.
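The main loop follows a run, wait, block rhythm: each module first does whatever work is ready (the *_run() calls), then registers what it wants to be woken up for (the *_wait() calls), and poll_block() finally sleeps until one of those events fires. A minimal sketch of that rhythm on top of plain POSIX poll() follows. The mini_loop type and its helper functions are hypothetical names invented for illustration; they are not the OVS poll-loop API.

```c
#include <assert.h>
#include <poll.h>
#include <unistd.h>

#define MINI_LOOP_MAX_FDS 8

/* Hypothetical miniature poll loop (illustration only, not OVS code). */
struct mini_loop {
    struct pollfd fds[MINI_LOOP_MAX_FDS];
    int n_fds;
    int timeout_ms;     /* -1: sleep until an fd is ready; 0: do not sleep. */
};

/* Forgets every registered wakeup condition. */
static void
mini_loop_reset(struct mini_loop *loop)
{
    loop->n_fds = 0;
    loop->timeout_ms = -1;
}

/* "wait" step: ask to be woken when 'fd' becomes readable.
 * Returns 0 on success, -1 if the table is full. */
static int
mini_loop_fd_wait(struct mini_loop *loop, int fd)
{
    if (loop->n_fds >= MINI_LOOP_MAX_FDS) {
        return -1;
    }
    loop->fds[loop->n_fds].fd = fd;
    loop->fds[loop->n_fds].events = POLLIN;
    loop->fds[loop->n_fds].revents = 0;
    loop->n_fds++;
    return 0;
}

/* Plays the role of poll_immediate_wake(): the next block returns at once. */
static void
mini_loop_immediate_wake(struct mini_loop *loop)
{
    loop->timeout_ms = 0;
}

/* "block" step: sleep until a registered condition fires, then clear the
 * registrations so the next iteration starts fresh.
 * Returns the number of ready file descriptors. */
static int
mini_loop_block(struct mini_loop *loop)
{
    int n_ready = poll(loop->fds, loop->n_fds, loop->timeout_ms);

    mini_loop_reset(loop);
    return n_ready;
}
```

Because the registrations are cleared on every block, each module has to re-register its wakeup conditions on every iteration, which is why the real loop calls every *_wait() function each time around.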

Before walking through the call chain, it helps to review the OVS code architecture:

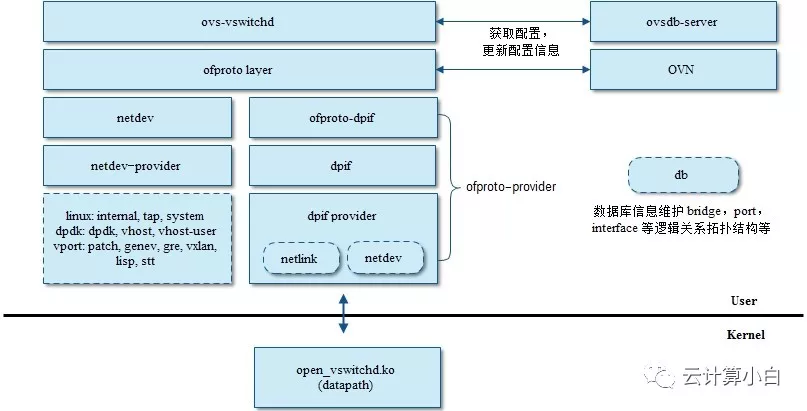

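ovs-vswitchd is the core component of OVS; all OpenFlow-related logic is implemented in vswitchd. OVS is generally divided into three parts: the datapath, vswitchd, and ovsdb. The datapath is tied to a specific data-plane platform, such as a white-box switch or the Linux kernel. ovsdb stores the vswitch's own configuration, such as ports, topology, and rules. vswitchd itself is layered. The top layer, the daemon, mainly talks to ovsdb to push and update configuration. The middle layer, ofproto, talks to OpenFlow controllers and exposes the ofproto provider interface through ofproto_class (currently only one ofproto provider is supported, ofproto-dpif). Platform-specific OpenFlow implementations are unified behind ofproto_class.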

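The OVS bridge creation flow:

1. ovs-vsctl creates the bridge by sending the creation parameters to ovsdb-server, which writes them to the database.

2. ovs-vswitchd reads the bridge creation information from ovsdb-server and creates a bridge structure at the ovs-vswitchd layer.

3. The bridge information is then applied to the ofproto layer, where ofproto_create creates the bridge.

ofproto_create(br->name, br->type, &br->ofproto) creates a switch according to the configuration, where type is the switch type. The resulting ofproto object talks to the OpenFlow controller on one side and works with the lower layers through netdev and ofproto-dpif on the other. The ofproto structure (which also represents a bridge) gets its provider-private state from the ofproto provider's constructor (ofproto_class is defined in ofproto-provider.h, and its implementation is ofproto_dpif_class in ofproto-dpif.c).

Role of ofproto_class: it abstracts different switching forms (software switching, hardware acceleration). Currently there is only one, software switching.

4. ofproto-dpif is associated with a dpif through its backer. struct dpif_class is the factory interface for datapath interface implementations and is used to interact with the actual datapath (for example openvswitch.ko or the userspace datapath). The two existing dpif implementations are dpif-netlink and dpif-netdev. The former is based on the kernel datapath (Linux), the latter on the userspace datapath (commonly paired with DPDK):

1) lib/dpif-netlink.c: a Linux-specific dpif that communicates with the Open vSwitch kernel module. The kernel module does all the switching and sends packets that find no match in the kernel up to userspace. The dpif wraps the calls into the kernel interface.

2) lib/dpif-netdev.c: a generic dpif implementation. It is Open vSwitch's userspace datapath, so packet switching does not go through the kernel.

Role of dpif_class: it abstracts different forwarding planes (userspace, kernel).

Userspace datapath: based on DPDK, forwards by polling, suited to NFV.

Kernel datapath: switches in the kernel using flow tables, suited to the management plane or IT environments.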
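The provider pattern behind dpif_class boils down to a table of function pointers selected by a type string. The sketch below is a toy model, not the real struct dpif_class: every toy_ name and the port numbers returned are invented for illustration. The two type strings, "system" for the kernel datapath and "netdev" for the userspace datapath, are the datapath types Open vSwitch uses.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Toy stand-in for struct dpif_class: one callback instead of dozens. */
struct toy_dpif_class {
    const char *type;
    int (*port_add)(const char *devname, int *port_nop);
};

/* Pretend kernel-datapath implementation (hypothetical). */
static int
toy_netlink_port_add(const char *devname, int *port_nop)
{
    (void) devname;
    *port_nop = 1;      /* Made-up port number. */
    return 0;
}

/* Pretend userspace-datapath implementation (hypothetical). */
static int
toy_netdev_port_add(const char *devname, int *port_nop)
{
    (void) devname;
    *port_nop = 100;    /* Made-up port number. */
    return 0;
}

static const struct toy_dpif_class toy_dpif_classes[] = {
    { "system", toy_netlink_port_add },
    { "netdev", toy_netdev_port_add },
};

/* Finds the implementation registered for 'type', or NULL if none. */
static const struct toy_dpif_class *
toy_dpif_lookup(const char *type)
{
    size_t i;

    for (i = 0; i < sizeof toy_dpif_classes / sizeof toy_dpif_classes[0]; i++) {
        if (!strcmp(toy_dpif_classes[i].type, type)) {
            return &toy_dpif_classes[i];
        }
    }
    return NULL;
}

/* Generic layer: callers never name a concrete implementation.
 * Returns 0 on success, -1 if 'type' is unknown. */
static int
toy_dpif_port_add(const char *type, const char *devname, int *port_nop)
{
    const struct toy_dpif_class *cls = toy_dpif_lookup(type);

    return cls ? cls->port_add(devname, port_nop) : -1;
}
```

The upper layer only ever calls toy_dpif_port_add, so adding a third forwarding plane would mean adding one table entry rather than touching any caller.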

I. Virtual bridge initialization: bridge_run

bridge_run calls bridge_run__, whose most important job is to call ofproto_run for every bridge:

static void
bridge_run__(void)
{
    struct bridge *br;

    /* Let each bridge do the work that it needs to do. */
    HMAP_FOR_EACH (br, node, &all_bridges) {
        ofproto_run(br->ofproto);
    }
}

int ofproto_run(struct ofproto *p) in turn calls error = p->ofproto_class->run(p);

ofproto_class is defined in ofproto-provider.h, and its implementation is ofproto_dpif_class in ofproto-dpif.c:

const struct ofproto_class ofproto_dpif_class = {
    init,
    enumerate_types,
    enumerate_names,
    del,
    port_open_type,
    type_run,
    type_wait,
    alloc,
    construct,
    destruct,
    dealloc,
    run,
    wait,
    NULL,                       /* get_memory_usage. */
    type_get_memory_usage,
    flush,
    query_tables,
    set_tables_version,
    port_alloc,
    port_construct,
    port_destruct,
    port_dealloc,
    port_modified,
    port_reconfigured,
    port_query_by_name,
    port_add,
    port_del,
    port_get_stats,
    port_dump_start,
    port_dump_next,
    port_dump_done,
    port_poll,
    port_poll_wait,
    port_is_lacp_current,
    port_get_lacp_stats,
    NULL,                       /* rule_choose_table */
    rule_alloc,
    rule_construct,
    rule_insert,
    rule_delete,
    rule_destruct,
    rule_dealloc,
    rule_get_stats,
    rule_execute,
    set_frag_handling,
    packet_out,
    set_netflow,
    get_netflow_ids,
    set_sflow,
    set_ipfix,
    set_cfm,
    cfm_status_changed,
    get_cfm_status,
    set_lldp,
    get_lldp_status,
    set_aa,
    aa_mapping_set,
    aa_mapping_unset,
    aa_vlan_get_queued,
    aa_vlan_get_queue_size,
    set_bfd,
    bfd_status_changed,
    get_bfd_status,
    set_stp,
    get_stp_status,
    set_stp_port,
    get_stp_port_status,
    get_stp_port_stats,
    set_rstp,
    get_rstp_status,
    set_rstp_port,
    get_rstp_port_status,
    set_queues,
    bundle_set,
    bundle_remove,
    mirror_set__,
    mirror_get_stats__,
    set_flood_vlans,
    is_mirror_output_bundle,
    forward_bpdu_changed,
    set_mac_table_config,
    set_mcast_snooping,
    set_mcast_snooping_port,
    set_realdev,
    NULL,                       /* meter_get_features */
    NULL,                       /* meter_set */
    NULL,                       /* meter_get */
    NULL,                       /* meter_del */
    group_alloc,                /* group_alloc */
    group_construct,            /* group_construct */
    group_destruct,             /* group_destruct */
    group_dealloc,              /* group_dealloc */
    group_modify,               /* group_modify */
    group_get_stats,            /* group_get_stats */
    get_datapath_version,       /* get_datapath_version */
};

According to the comments in ofproto-provider.h, four kinds of data structures are defined here:

struct ofproto represents a switch

struct ofport represents a port on the switch

struct rule represents a flow rule on the switch

struct ofgroup represents an OpenFlow group

As noted above, startup calls ofproto_class->run, that is, the function static int run(struct ofproto *ofproto_) in ofproto-dpif.c. This function carries out a series of operations, including initializing NetFlow, sFlow, IPFIX, STP, RSTP, and MAC address learning.

bridge_run also calls static void bridge_reconfigure(const struct ovsrec_open_vswitch *ovs_cfg), where ovs_cfg is the configuration read from ovsdb-server.

This function includes the following calls:

/* ofproto_create creates a switch according to the configuration. */
ofproto_create(br->name, br->type, &br->ofproto)
    ↓
ofproto->ofproto_class->construct(ofproto)

/* Set the configuration. */
ofproto_bridge_set_config

/* For each bridge, add its network interfaces: */
HMAP_FOR_EACH (br, node, &all_bridges) {
    bridge_add_ports(br, &br->wanted_ports);
    shash_destroy(&br->wanted_ports);
}

static void
bridge_add_ports(struct bridge *br, const struct shash *wanted_ports)
{
    /* First add interfaces that request a particular port number. */
    bridge_add_ports__(br, wanted_ports, true);

    /* Then add interfaces that want automatic port number assignment.
     * We add these afterward to avoid accidentally taking a specifically
     * requested port number. */
    bridge_add_ports__(br, wanted_ports, false);
}

The following functions are then called in turn:

static void bridge_add_ports__(struct bridge *br, const struct shash *wanted_ports, bool with_requested_port)

↓

static bool iface_create(struct bridge *br, const struct ovsrec_interface *iface_cfg, const struct ovsrec_port *port_cfg)

↓

static int iface_do_create(const struct bridge *br, const struct ovsrec_interface *iface_cfg, const struct ovsrec_port *port_cfg, ofp_port_t *ofp_portp, struct netdev **netdevp, char **errp)

↓

int ofproto_port_add(struct ofproto *ofproto, struct netdev *netdev, ofp_port_t *ofp_portp)

↓

error = ofproto->ofproto_class->port_add(ofproto, netdev);

Then the port_add function of ofproto_dpif_class in ofproto-dpif.c goes through dpif_port_add to call:

error = dpif->dpif_class->port_add(dpif, netdev, &port_no);

The userspace datapath calls dpif_netdev_port_add from dpif_netdev_class. The kernel datapath calls dpif_netlink_port_add from dpif_netlink_class, which calls dpif_netlink_port_add__. That function uses the netlink API with the command OVS_VPORT_CMD_NEW:

const char *name = netdev_vport_get_dpif_port(netdev,
                                              namebuf, sizeof namebuf);
struct dpif_netlink_vport request, reply;
struct nl_sock **socksp = NULL;

if (dpif->handlers) {
    socksp = vport_create_socksp(dpif, &error);
    if (!socksp) {
        return error;
    }
}

dpif_netlink_vport_init(&request);
request.cmd = OVS_VPORT_CMD_NEW;
request.dp_ifindex = dpif->dp_ifindex;
request.type = netdev_to_ovs_vport_type(netdev);
request.name = name;

upcall_pids = vport_socksp_to_pids(socksp, dpif->n_handlers);
request.n_upcall_pids = socksp ? dpif->n_handlers : 1;
request.upcall_pids = upcall_pids;

error = dpif_netlink_vport_transact(&request, &reply, &buf);

This calls into the kernel module openvswitch.ko, which adds the virtual port in the kernel.
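Stepping back to bridge_add_ports: the reason for its two passes is easy to see in a toy model. If automatic assignment ran first, it could hand out a port number that a later interface explicitly requests. The sketch below uses hypothetical toy_ types and a simple lowest-free-number allocator, both assumptions made for illustration; the real allocation logic in ofproto is more involved.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define TOY_MAX_PORTS 16

/* Hypothetical interface record (illustration only). */
struct toy_iface {
    const char *name;
    int requested;      /* Requested port number, or 0 for automatic. */
    int assigned;       /* Result; stays 0 if no number could be assigned. */
};

static bool toy_port_in_use[TOY_MAX_PORTS + 1];

/* Claims 'requested' if it is positive and free; otherwise, for an automatic
 * request (0), claims the lowest free number.  Returns 0 on failure. */
static int
toy_alloc_port(int requested)
{
    int port;

    if (requested > 0) {
        if (requested > TOY_MAX_PORTS || toy_port_in_use[requested]) {
            return 0;
        }
        toy_port_in_use[requested] = true;
        return requested;
    }
    for (port = 1; port <= TOY_MAX_PORTS; port++) {
        if (!toy_port_in_use[port]) {
            toy_port_in_use[port] = true;
            return port;
        }
    }
    return 0;
}

/* One pass: handles only the interfaces whose "has a requested port"
 * property equals 'with_requested_port'. */
static void
toy_add_ports__(struct toy_iface *ifaces, size_t n, bool with_requested_port)
{
    size_t i;

    for (i = 0; i < n; i++) {
        if ((ifaces[i].requested > 0) == with_requested_port) {
            ifaces[i].assigned = toy_alloc_port(ifaces[i].requested);
        }
    }
}

/* Two passes, in the same order as bridge_add_ports. */
static void
toy_add_ports(struct toy_iface *ifaces, size_t n)
{
    toy_add_ports__(ifaces, n, true);   /* Explicit requests first. */
    toy_add_ports__(ifaces, n, false);  /* Automatic assignment second. */
}
```

With the interfaces auto0 (automatic), fixed1 (requests port 1), and auto1 (automatic), in that order, fixed1 gets port 1 and the automatic ones take 2 and 3. A single pass in list order with this allocator would have given port 1 to auto0 and left fixed1's request unsatisfiable.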

II. Virtual NIC initialization: netdev_run()

void
netdev_run(void)
    OVS_EXCLUDED(netdev_class_mutex, netdev_mutex)
{
    struct netdev_registered_class *rc;

    netdev_initialize();
    ovs_mutex_lock(&netdev_class_mutex);
    HMAP_FOR_EACH (rc, hmap_node, &netdev_classes) {
        if (rc->class->run) {
            rc->class->run();
        }
    }
    ovs_mutex_unlock(&netdev_class_mutex);
}

This loops over netdev_classes and calls the run function of each class that provides one.

Each type of virtual NIC has a corresponding netdev_class.
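The loop in netdev_run can be pictured as a registry of classes, each with an optional run callback. The sketch below is a simplified model under stated assumptions: the toy_ names are invented, the registry is a fixed array rather than an hmap, and locking is left out.

```c
#include <assert.h>
#include <stddef.h>

/* Toy stand-in for struct netdev_class: a type name and an optional hook. */
struct toy_netdev_class {
    const char *type;
    void (*run)(void);          /* May be NULL when there is nothing to do. */
};

static int toy_linux_run_count;

/* Shared by every Linux-style class in this sketch. */
static void
toy_linux_run(void)
{
    toy_linux_run_count++;
}

static const struct toy_netdev_class toy_system_class = { "system", toy_linux_run };
static const struct toy_netdev_class toy_tap_class = { "tap", toy_linux_run };
static const struct toy_netdev_class toy_dpdk_class = { "dpdk", NULL };

static const struct toy_netdev_class *toy_netdev_classes[] = {
    &toy_system_class,
    &toy_tap_class,
    &toy_dpdk_class,
};

/* Calls every registered run hook that is not NULL.
 * Returns the number of hooks invoked. */
static int
toy_netdev_run(void)
{
    int n_called = 0;
    size_t i;

    for (i = 0; i < sizeof toy_netdev_classes / sizeof toy_netdev_classes[0];
         i++) {
        if (toy_netdev_classes[i]->run) {
            toy_netdev_classes[i]->run();
            n_called++;
        }
    }
    return n_called;
}
```

The NULL check is what lets a class opt out of periodic work entirely, as the toy "dpdk" entry does here.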

For example, the class for DPDK NICs is:

static const struct netdev_class dpdk_class =
    NETDEV_DPDK_CLASS(
        "dpdk",
        NULL,
        netdev_dpdk_construct,
        netdev_dpdk_destruct,
        netdev_dpdk_set_multiq,
        netdev_dpdk_eth_send,
        netdev_dpdk_get_carrier,
        netdev_dpdk_get_stats,
        netdev_dpdk_get_features,
        netdev_dpdk_get_status,
        netdev_dpdk_rxq_recv);

Physical NICs likewise need a corresponding netdev_class:

const struct netdev_class netdev_linux_class =
    NETDEV_LINUX_CLASS(
        "system",
        netdev_linux_construct,
        netdev_linux_get_stats,
        netdev_linux_get_features,
        netdev_linux_get_status);

For tap NICs connected to KVM:

const struct netdev_class netdev_tap_class =
    NETDEV_LINUX_CLASS(
        "tap",
        netdev_linux_construct_tap,
        netdev_tap_get_stats,
        netdev_linux_get_features,
        netdev_linux_get_status);

For virtual software NICs of type internal, such as a bridge's own local port (a veth pair, by contrast, is an ordinary "system" device):

const struct netdev_class netdev_internal_class =
    NETDEV_LINUX_CLASS(
        "internal",
        netdev_linux_construct,
        netdev_internal_get_stats,
        NULL,                   /* get_features */
        netdev_internal_get_status);

NETDEV_LINUX_CLASS is a macro, so each use only has to supply the few parameters that differ between classes.

#define NETDEV_LINUX_CLASS(NAME, CONSTRUCT, GET_STATS,          \
                           GET_FEATURES, GET_STATUS)            \
{                                                               \
    NAME,                                                       \
                                                                \
    NULL,                                                       \
    netdev_linux_run,                                           \
    netdev_linux_wait,                                          \
                                                                \
    netdev_linux_alloc,                                         \
    CONSTRUCT,                                                  \
    netdev_linux_destruct,                                      \
    netdev_linux_dealloc,                                       \
    NULL,                       /* get_config */                \
    NULL,                       /* set_config */                \
    NULL,                       /* get_tunnel_config */         \
    NULL,                       /* build header */              \
    NULL,                       /* push header */               \
    NULL,                       /* pop header */                \
    NULL,                       /* get_numa_id */               \
    NULL,                       /* set_multiq */                \
                                                                \
    netdev_linux_send,                                          \
    netdev_linux_send_wait,                                     \
                                                                \
    netdev_linux_set_etheraddr,                                 \
    netdev_linux_get_etheraddr,                                 \
    netdev_linux_get_mtu,                                       \
    netdev_linux_set_mtu,                                       \
    netdev_linux_get_ifindex,                                   \
    netdev_linux_get_carrier,                                   \
    netdev_linux_get_carrier_resets,                            \
    netdev_linux_set_miimon_interval,                           \
    GET_STATS,                                                  \
                                                                \
    GET_FEATURES,                                               \
    netdev_linux_set_advertisements,                            \
                                                                \
    netdev_linux_set_policing,                                  \
    netdev_linux_get_qos_types,                                 \
    netdev_linux_get_qos_capabilities,                          \
    netdev_linux_get_qos,                                       \
    netdev_linux_set_qos,                                       \
    netdev_linux_get_queue,                                     \
    netdev_linux_set_queue,                                     \
    netdev_linux_delete_queue,                                  \
    netdev_linux_get_queue_stats,                               \
    netdev_linux_queue_dump_start,                              \
    netdev_linux_queue_dump_next,                               \
    netdev_linux_queue_dump_done,                               \
    netdev_linux_dump_queue_stats,                              \
                                                                \
    netdev_linux_get_in4,                                       \
    netdev_linux_set_in4,                                       \
    netdev_linux_get_in6,                                       \
    netdev_linux_add_router,                                    \
    netdev_linux_get_next_hop,                                  \
    GET_STATUS,                                                 \
    netdev_linux_arp_lookup,                                    \
                                                                \
    netdev_linux_update_flags,                                  \
                                                                \
    netdev_linux_rxq_alloc,                                     \
    netdev_linux_rxq_construct,                                 \
    netdev_linux_rxq_destruct,                                  \
    netdev_linux_rxq_dealloc,                                   \
    netdev_linux_rxq_recv,                                      \
    netdev_linux_rxq_wait,                                      \
    netdev_linux_rxq_drain,                                     \
}

For these classes, rc->class->run() therefore calls netdev_linux_run in netdev-linux.c.

netdev_linux_run reads from a netlink socket to learn about changes in the state of the virtual NICs, and updates the cached state accordingly:

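error = nl_sock_recv(sock, &buf, false);
if (!error) {
    struct rtnetlink_change change;

    if (rtnetlink_parse(&buf, &change)) {
        struct netdev *netdev_ = netdev_from_name(change.ifname);
        if (netdev_ && is_netdev_linux_class(netdev_->netdev_class)) {
            struct netdev_linux *netdev = netdev_linux_cast(netdev_);

            ovs_mutex_lock(&netdev->mutex);
            netdev_linux_update(netdev, &change);
            ovs_mutex_unlock(&netdev->mutex);
        }
        netdev_close(netdev_);
    }
}

Summary:

bridge_run__ repeatedly performs the necessary periodic processing, such as STP, RSTP, and mcast handling; OpenFlow messages sent by ovs-ofctl are also handled here. bridge_reconfigure creates, updates, and deletes the necessary bridges, interfaces, ports, and other protocol configuration, based on the database contents and the current state of the process. These operations are ultimately applied to the ofproto layer and the ofproto-dpif layer, while run_stats_update, run_status_update, and run_system_stats update the status information in the Open vSwitch database. netdev_run monitors NIC state and keeps it up to date.

Addendum:

In the OVS+DPDK case, the corresponding netdev_dpdk_run is NULL. As described earlier, the userspace datapath calls dpif_netdev_port_add from dpif_netdev_class, which starts the PMD threads. These receive and send packets by synchronous polling rather than by interrupts, which improves packet-processing efficiency.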
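The polling style of receive can be illustrated with a toy receive ring. This is a sketch only: toy_ring and its functions are hypothetical stand-ins, not DPDK or OVS APIs, and a real PMD thread polls hardware queues in an endless loop rather than for a fixed number of rounds.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_RING_SIZE 64
#define TOY_BURST 32

/* Hypothetical receive queue holding integer "packets". */
struct toy_ring {
    int pkts[TOY_RING_SIZE];
    size_t head;                /* Count of packets consumed so far. */
    size_t tail;                /* Count of packets produced so far. */
};

/* Producer side.  Returns 0 on success, -1 if the ring is full. */
static int
toy_ring_put(struct toy_ring *r, int pkt)
{
    if (r->tail - r->head == TOY_RING_SIZE) {
        return -1;
    }
    r->pkts[r->tail % TOY_RING_SIZE] = pkt;
    r->tail++;
    return 0;
}

/* Non-blocking burst receive: returns at once with 0..max packets. */
static size_t
toy_rx_burst(struct toy_ring *r, int *out, size_t max)
{
    size_t n = 0;

    while (n < max && r->head != r->tail) {
        out[n++] = r->pkts[r->head % TOY_RING_SIZE];
        r->head++;
    }
    return n;
}

/* Polls the ring for 'iterations' rounds without ever sleeping.
 * Returns the total number of packets picked up. */
static size_t
toy_pmd_poll(struct toy_ring *r, int iterations)
{
    int batch[TOY_BURST];
    size_t total = 0;
    int i;

    for (i = 0; i < iterations; i++) {
        total += toy_rx_burst(r, batch, TOY_BURST);
    }
    return total;
}
```

The polling loop never waits for a notification: an empty ring simply costs one cheap check per round, which trades CPU time for lower and more predictable latency than waking up on an interrupt.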