More than 20,000 records have already been scraped, and the format turns out to be wrong. What now?

The scraped result looks like this:

[{"title": "工艺:", "content": ["油爆"]}, {"title": "口味:", "content": ["咸鲜味"]}, {"title": "菜系:", "content": ["福建菜"]}, {"title": "功效:", "content": ["福建菜", "通乳调理", "气血双补调理", "营养不良调理"]}, {"title": "主料:", "content": ["河虾250克"]}, {"title": "辅料:", "content": ["竹笋35克", "香菇(鲜)10克", "青椒20克", "红萝卜25克"]}, {"title": "调料:", "content": ["大葱10克 鸡蛋清10克 大蒜5克 淀粉(豌豆)12克 白砂糖5克 盐3克 味精1克 料酒3克 胡椒1克 植物油75克 各适量"]}]

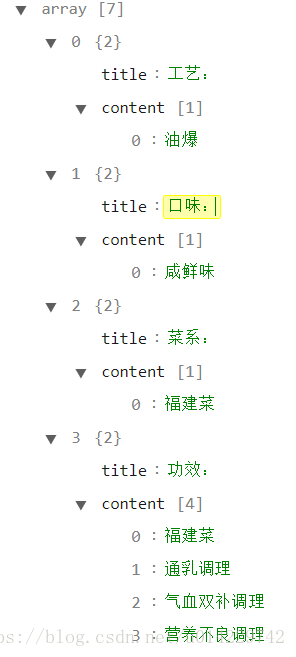

JSon在线解析后结果如下:

Looking at the parsed output, we find entries such as:

{"title": "功效:", "content": ["福建菜", "通乳调理", "气血双补调理", "营养不良调理"]}

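The value of content is still wrapped in a list, and we need to pull it out into a plain string. There are two ways to deal with this: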

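(1) Tidy the data in the crawler code itself, at scrape time. With a small data set you can fix the code and run the crawl again, but with this much data a re-run is not practical.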

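(2) Tidy the data with a pipeline-style cleanup step that works on what is already stored. This approach comes up often in data cleaning. The method is as follows: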

# This script can be run from any folder, as long as the environment is set up correctly.
import json

import pymysql.cursors

# Connect to the database.
connection = pymysql.connect(host='localhost',
                             user='root',
                             password='123456',
                             db='baikemy.com',
                             cursorclass=pymysql.cursors.DictCursor,
                             autocommit=True)

try:
    with connection.cursor() as cursor:
        sql = "SELECT `id`, `gongyi` FROM `total_copy1`"
        cursor.execute(sql)  # run the query
        result = cursor.fetchall()  # fetch every row returned by the query

    for r in result:
        g = json.loads(r['gongyi'])  # parse the JSON string into a list of dicts
        # Once parsed, each value is available by key, e.g. g2['title'].
        d = []
        for g2 in g:
            a = g2['title']  # field name
            b = g2['content']  # b is a list of strings
            strx = ' '.join(b)  # merge the list into one space-separated string
            d.append({"title": a, "content": strx})  # new gongyi entry

        with connection.cursor() as cursor2:
            # json.dumps emits valid JSON even when a value contains a quote character;
            # ensure_ascii=False keeps the Chinese text readable.
            d2 = json.dumps(d, ensure_ascii=False)
            print(d2)
            sql = "UPDATE `total_copy1` SET `gongyi`=%s WHERE `id`=%s"  # write back the new gongyi value
            cursor2.execute(sql, (d2, r['id']))  # update the row with this id
            print(r['id'])
finally:
    connection.close()
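With more than 20,000 rows, a cleanup script like this may get interrupted and run a second time. A row that has already been converted holds a string in content, and joining a string character by character would scatter spaces through it. The sketch below pulls the conversion into a standalone function that skips already-flattened entries, so it can be tried on a sample record without touching the database. The name `flatten_gongyi` is hypothetical and not part of the original script; it assumes each row has the same title/content shape as the sample shown earlier.

```python
import json


def flatten_gongyi(raw):
    """Return the JSON string with every "content" list joined into one string."""
    entries = json.loads(raw)
    flattened = []
    for entry in entries:
        content = entry["content"]
        # Rows converted on an earlier run already hold a plain string;
        # leave those untouched so the function is safe to apply twice.
        if isinstance(content, list):
            content = " ".join(content)
        flattened.append({"title": entry["title"], "content": content})
    # ensure_ascii=False keeps the Chinese text readable in the stored value.
    return json.dumps(flattened, ensure_ascii=False)


# Try it on one entry taken from the scraped sample.
sample = '[{"title": "功效:", "content": ["福建菜", "通乳调理", "气血双补调理", "营养不良调理"]}]'
once = flatten_gongyi(sample)
twice = flatten_gongyi(once)  # applying it again changes nothing
print(once)
```

In the database loop, the value written back by the UPDATE statement could then be `flatten_gongyi(r['gongyi'])`, which keeps the SQL handling and the data transformation separate.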