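1.5 Completion Callback Handling for Block Device Requests

This section continues to refine the code from section 1.4. There we implemented the filtering and forwarding of requests. But what path does a request take on its way back once the disk has finished processing it? With that question in mind we extend the driver once more. By the end of this section we will have covered the whole cycle: request handling, filtering and forwarding, and callback handling on completion.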

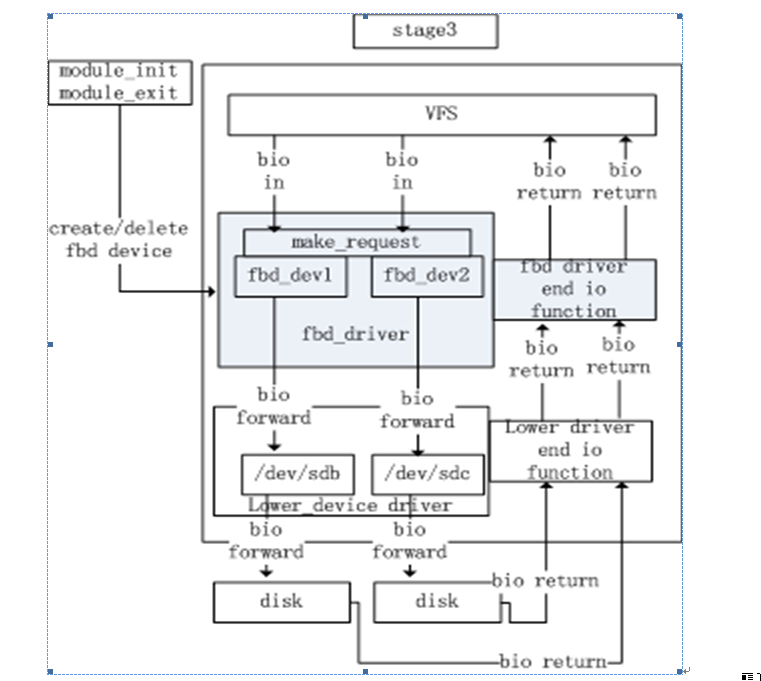

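The figure above shows the updated I/O architecture. Compare it with the diagram at the end of section 1.4:

Compared with section 1.4, an "fbd_driver end io function" module has been added on the right side of the fbd_driver box. Once a request sent to the underlying device sd# completes, it returns into fbd_driver's completion callback. The updated code follows, fbd_driver.h first and then fbd_driver.c.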

1 #ifndef _FBD_DRIVER_H

2 #define _FBD_DRIVER_H

3 #include <linux/init.h>

4 #include <linux/module.h>

5 #include <linux/blkdev.h>

6 #include <linux/bio.h>

7 #include <linux/genhd.h>

8 #include <linux/slab.h> /* kmalloc, kfree */

9 #define SECTOR_BITS (9)

10 #define DEV_NAME_LEN 32

11

12 #define DRIVER_NAME "filter driver"

13

14 #define DEVICE1_NAME "fbd1_dev"

15 #define DEVICE1_MINOR 0

16 #define DEVICE2_NAME "fbd2_dev"

17 #define DEVICE2_MINOR 1

18

19 struct fbd_dev {

20 struct request_queue *queue;

21 struct gendisk *disk;

22 sector_t size; /* device size in bytes */

23 char lower_dev_name[DEV_NAME_LEN];

24 struct block_device *lower_bdev;

25 };

26

27 struct bio_context {

28 void *old_private;

29 void *old_callback;

30 };

31 #endif

1 /**

2 * fbd-driver - filter block device driver

3 * Author: Talk@studio

4 **/

5 #include "fbd_driver.h"

6

7 static int fbd_driver_major = 0;

8

9 static struct fbd_dev fbd_dev1 =

10 {

11 .queue = NULL,

12 .disk = NULL,

13 .lower_dev_name = "/dev/sdb",

14 .lower_bdev = NULL,

15 .size = 0

16 };

17

18 static struct fbd_dev fbd_dev2 =

19 {

20 .queue = NULL,

21 .disk = NULL,

22 .lower_dev_name = "/dev/sdc",

23 .lower_bdev = NULL,

24 .size = 0

25 };

26

27 static int fbddev_open(struct inode *inode, struct file *file);

28 static int fbddev_close(struct inode *inode, struct file *file);

29

30 static struct block_device_operations disk_fops = {

31 .open = fbddev_open,

32 .release = fbddev_close,

33 .owner = THIS_MODULE,

34 };

35

36 static int fbddev_open(struct inode *inode, struct file *file)

37 {

38 printk("device is opened by:[%s]\n", current->comm);

39 return 0;

40 }

41

42 static int fbddev_close(struct inode *inode, struct file *file)

43 {

44 printk("device is closed by:[%s]\n", current->comm);

45 return 0;

46 }

47

48 static int fbd_io_callback(struct bio *bio, unsigned int bytes_done, int error)

49 {

50 struct bio_context *ctx = bio->bi_private;

51

52 bio->bi_private = ctx->old_private;

53 bio->bi_end_io = ctx->old_callback;

54 kfree(ctx);

55

56 printk("returned [%s] io request, end on sector %llu!\n",

57 bio_data_dir(bio) == READ ? "read" : "write",

58 (unsigned long long)bio->bi_sector); /* sector_t width varies; cast to match %llu */

59

60 if (bio->bi_end_io) {

61 bio->bi_end_io(bio, bytes_done, error);

62 }

63

64 return 0;

65 }

66

67 static int make_request(struct request_queue *q, struct bio *bio)

68 {

69 struct fbd_dev *dev = (struct fbd_dev *)q->queuedata;

70 struct bio_context *ctx;

71

72 printk("device [%s] received [%s] io request, "

73 "access on dev sector[%llu], length is [%u] sectors.\n",

74 dev->disk->disk_name,

75 bio_data_dir(bio) == READ ? "read" : "write",

76 (unsigned long long)bio->bi_sector, /* cast to match %llu */

77 bio_sectors(bio));

78

79 ctx = kmalloc(sizeof(struct bio_context), GFP_KERNEL);

80 if (!ctx) {

81 printk("alloc memory for bio_context failed!\n");

82 bio_endio(bio, bio->bi_size, -ENOMEM);

83 goto out;

84 }

85 memset(ctx, 0, sizeof(struct bio_context));

86

87 ctx->old_private = bio->bi_private;

88 ctx->old_callback = bio->bi_end_io;

89 bio->bi_private = ctx;

90 bio->bi_end_io = fbd_io_callback;

91

92 bio->bi_bdev = dev->lower_bdev;

93 submit_bio(bio_rw(bio), bio);

94 out:

95 return 0;

96 }

97

98 static int dev_create(struct fbd_dev *dev, char *dev_name, int major, int minor)

99 {

100 int ret = 0;

101

102 /* init fbd_dev */

103 dev->disk = alloc_disk(1);

104 if (!dev->disk) {

105 printk("alloc disk error\n");

106 ret = -ENOMEM;

107 goto err_out1;

108 }

109

110 dev->queue = blk_alloc_queue(GFP_KERNEL);

111 if (!dev->queue) {

112 printk("alloc queue error\n");

113 ret = -ENOMEM;

114 goto err_out2;

115 }

116

117 /* init queue */

118 blk_queue_make_request(dev->queue, make_request);

119 dev->queue->queuedata = dev;

120

121 /* init gendisk */

122 strncpy(dev->disk->disk_name, dev_name, DEV_NAME_LEN);

123 dev->disk->major = major;

124 dev->disk->first_minor = minor;

125 dev->disk->fops = &disk_fops;

126

127 dev->lower_bdev = open_bdev_excl(dev->lower_dev_name, FMODE_WRITE | FMODE_READ, dev->lower_bdev);

128 if (IS_ERR(dev->lower_bdev)) {

129 printk("Open the device[%s]'s lower dev [%s] failed!\n", dev_name, dev->lower_dev_name);

130 ret = -ENOENT;

131 goto err_out3;

132 }

133

134 dev->size = get_capacity(dev->lower_bdev->bd_disk) << SECTOR_BITS;

135

136 set_capacity(dev->disk, (dev->size >> SECTOR_BITS));

137

138 /* bind queue to disk */

139 dev->disk->queue = dev->queue;

140

141 /* add disk to kernel */

142 add_disk(dev->disk);

143 return 0;

144 err_out3:

145 blk_cleanup_queue(dev->queue);

146 err_out2:

147 put_disk(dev->disk);

148 err_out1:

149 return ret;

150 }

151

152 static void dev_delete(struct fbd_dev *dev, char *name)

153 {

154 printk("delete the device[%s]!\n", name);

155 /* remove the disk first so no new I/O arrives, then release the rest */

156 del_gendisk(dev->disk);

157 blk_cleanup_queue(dev->queue);

158 put_disk(dev->disk);

159 close_bdev_excl(dev->lower_bdev);

160 }

161

162 static int __init fbd_driver_init(void)

163 {

164 int ret;

165

166 /* register fbd driver, get the driver major number */

167 fbd_driver_major = register_blkdev(fbd_driver_major, DRIVER_NAME);

168 if (fbd_driver_major < 0) {

169 printk("get major fail\n");

170 ret = -EIO;

171 goto err_out1;

172 }

173

174 /* create the first device */

175 ret = dev_create(&fbd_dev1, DEVICE1_NAME, fbd_driver_major, DEVICE1_MINOR);

176 if (ret) {

177 printk("create device[%s] failed!\n", DEVICE1_NAME);

178 goto err_out2;

179 }

180

181 /* create the second device */

182 ret = dev_create(&fbd_dev2, DEVICE2_NAME, fbd_driver_major, DEVICE2_MINOR);

183 if (ret) {

184 printk("create device[%s] failed!\n", DEVICE2_NAME);

185 goto err_out3;

186 }

187 return ret;

188 err_out3:

189 dev_delete(&fbd_dev1, DEVICE1_NAME);

190 err_out2:

191 unregister_blkdev(fbd_driver_major, DRIVER_NAME);

192 err_out1:

193 return ret;

194 }

195

196 static void __exit fbd_driver_exit(void)

197 {

198 /* delete the two devices */

199 dev_delete(&fbd_dev2, DEVICE2_NAME);

200 dev_delete(&fbd_dev1, DEVICE1_NAME);

201

202 /* unregister fbd driver */

203 unregister_blkdev(fbd_driver_major, DRIVER_NAME);

204 printk("block device driver exit successfully!\n");

205 }

206

207 module_init(fbd_driver_init);

208 module_exit(fbd_driver_exit);

209 MODULE_LICENSE("GPL");

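Start with the header. Lines 27-30 define a new data structure, bio_context. As the name suggests, it describes the context of a bio request. It has two members, both pointers: old_private saves the bio's bi_private pointer, and old_callback saves the bio's original completion callback pointer.

Now for fbd_driver.c. We go straight to the make_request function, lines 67-96.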
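One detail worth noting: old_callback is declared as `void *` although it stores a function pointer. GCC accepts that conversion, but ISO C does not guarantee it, and the kernel's `<linux/bio.h>` already provides the `bio_end_io_t` function type for this purpose. The following user-space sketch shows what a typed variant could look like. It is an illustration under assumptions, not the driver's code: `sketch_bio`, `sketch_end_io_t`, `typed_bio_context`, `save_context`, `sample_end_io` and `demo_typed_context` are all hypothetical names.

```c
#include <assert.h>
#include <stddef.h>

struct sketch_bio; /* hypothetical stand-in for struct bio */

/* Function type of a completion callback, modelled on the listing's signature. */
typedef int (sketch_end_io_t)(struct sketch_bio *bio,
                              unsigned int bytes_done, int error);

struct sketch_bio {
    void            *bi_private;
    sketch_end_io_t *bi_end_io;
};

/* Same two members as bio_context, but the callback keeps its real type. */
struct typed_bio_context {
    void            *old_private;
    sketch_end_io_t *old_callback;
};

/* Sample callback: simply reports how many bytes were completed. */
static int sample_end_io(struct sketch_bio *bio,
                         unsigned int bytes_done, int error)
{
    (void)bio;
    (void)error;
    return (int)bytes_done;
}

/* Copy both fields out of the bio; no cast is needed in either direction. */
void save_context(struct typed_bio_context *ctx, const struct sketch_bio *bio)
{
    ctx->old_private = bio->bi_private;
    ctx->old_callback = bio->bi_end_io;
}

/* Saves a bio's fields and calls the saved callback through the typed member. */
int demo_typed_context(void)
{
    struct sketch_bio bio = { NULL, sample_end_io };
    struct typed_bio_context ctx;

    save_context(&ctx, &bio);
    return ctx.old_callback(&bio, 512, 0);
}
```

With the typed member the compiler checks every assignment to and from bi_end_io, so a callback with the wrong signature is reported at build time instead of failing at run time.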

67 static int make_request(struct request_queue *q, struct bio *bio)

68 {

69 struct fbd_dev *dev = (struct fbd_dev *)q->queuedata;

70 struct bio_context *ctx;

71

72 printk("device [%s] received [%s] io request, "

73 "access on dev sector[%llu], length is [%u] sectors.\n",

74 dev->disk->disk_name,

75 bio_data_dir(bio) == READ ? "read" : "write",

76 (unsigned long long)bio->bi_sector, /* cast to match %llu */

77 bio_sectors(bio));

78

79 ctx = kmalloc(sizeof(struct bio_context), GFP_KERNEL);

80 if (!ctx) {

81 printk("alloc memory for bio_context failed!\n");

82 bio_endio(bio, bio->bi_size, -ENOMEM);

83 goto out;

84 }

85 memset(ctx, 0, sizeof(struct bio_context));

86

87 ctx->old_private = bio->bi_private;

88 ctx->old_callback = bio->bi_end_io;

89 bio->bi_private = ctx;

90 bio->bi_end_io = fbd_io_callback;

91

92 bio->bi_bdev = dev->lower_bdev;

93 submit_bio(bio_rw(bio), bio);

94 out:

95 return 0;

96 }

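Line 70 declares a bio_context pointer, ctx. Line 79 allocates memory for it with kmalloc, which gives us the context structure for this bio, and line 85 zeroes it with memset. Lines 87-88 save the bio's bi_private pointer and bi_end_io callback pointer into ctx. Lines 89-90 then overwrite both fields in the bio: bi_private now points to ctx, and bi_end_io now points to fbd_io_callback. That completes our reworking of the bio request. To see why we do this, let us look at fbd_io_callback right away.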

48 static int fbd_io_callback(struct bio *bio, unsigned int bytes_done, int error)

49 {

50 struct bio_context *ctx = bio->bi_private;

51

52 bio->bi_private = ctx->old_private;

53 bio->bi_end_io = ctx->old_callback;

54 kfree(ctx);

55

56 printk("returned [%s] io request, end on sector %llu!\n",

57 bio_data_dir(bio) == READ ? "read" : "write",

58 (unsigned long long)bio->bi_sector); /* sector_t width varies; cast to match %llu */

59

60 if (bio->bi_end_io) {

61 bio->bi_end_io(bio, bytes_done, error);

62 }

63

64 return 0;

65 }

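The parameters are the bio pointer, the number of bytes completed, and an error code. Because line 89 stored the ctx pointer in the bio's bi_private, line 50 can retrieve ctx again. Lines 52-53 restore the bio's original bi_private and bi_end_io values, and line 54 frees ctx: the pointers it held have been restored, so it is no longer needed and its memory can be released. Finally, lines 60-62 call the bi_end_io callback that was originally registered on the bio, which performs the real end-of-request processing.

This completes the whole path: request handling, filtering and forwarding, and the completion callback.

P.S.: This section does not dig into the hard interrupt -> softirq -> bio callback path. For now it is enough to know that the request comes back through the callback we registered; the operating-system details will be examined later.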
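The save, hook, restore and chain sequence described above can be tried out in user space. The following is a minimal sketch under stated assumptions, not kernel code: `mock_bio`, `mock_ctx`, `filter_hook`, `filter_end_io`, `owner_end_io`, `mock_submit` and `demo_roundtrip` are hypothetical names, malloc/free stand in for kmalloc/kfree, and the pretend lower device completes the request immediately instead of through an interrupt.

```c
#include <assert.h>
#include <stdlib.h>

struct mock_bio;
typedef int (mock_end_io_t)(struct mock_bio *bio,
                            unsigned int bytes_done, int error);

/* Hypothetical stand-in for the two struct bio fields the filter touches. */
struct mock_bio {
    void          *bi_private;
    mock_end_io_t *bi_end_io;
};

/* Plays the role of bio_context from fbd_driver.h. */
struct mock_ctx {
    void          *old_private;
    mock_end_io_t *old_callback;
};

static int owner_calls; /* how many times the original callback ran */

/* Callback installed by whoever created the request. */
static int owner_end_io(struct mock_bio *bio,
                        unsigned int bytes_done, int error)
{
    (void)bytes_done;
    /* The owner must see its own private pointer, not the filter's ctx. */
    if (error == 0 && bio->bi_private == (void *)&owner_calls)
        owner_calls++;
    return 0;
}

/* Completion path: restore the saved fields, free ctx, chain to the owner. */
static int filter_end_io(struct mock_bio *bio,
                         unsigned int bytes_done, int error)
{
    struct mock_ctx *ctx = bio->bi_private;

    bio->bi_private = ctx->old_private;
    bio->bi_end_io = ctx->old_callback;
    free(ctx);

    if (bio->bi_end_io)
        return bio->bi_end_io(bio, bytes_done, error);
    return 0;
}

/* Submission path: save the owner's fields and install the filter's own. */
static int filter_hook(struct mock_bio *bio)
{
    struct mock_ctx *ctx = calloc(1, sizeof(*ctx));

    if (!ctx)
        return -1;
    ctx->old_private = bio->bi_private;
    ctx->old_callback = bio->bi_end_io;
    bio->bi_private = ctx;
    bio->bi_end_io = filter_end_io;
    return 0;
}

/* Pretend lower device: completes the request at once, without error. */
static void mock_submit(struct mock_bio *bio, unsigned int bytes)
{
    bio->bi_end_io(bio, bytes, 0);
}

/* Sends one request through the filter; returns the owner's call count. */
int demo_roundtrip(void)
{
    struct mock_bio bio = { &owner_calls, owner_end_io };

    owner_calls = 0;
    if (filter_hook(&bio) != 0)
        return -1;
    mock_submit(&bio, 4096);
    return owner_calls;
}
```

Calling `demo_roundtrip()` from a `main` function sends one request through the filter. The owner's callback runs exactly once and sees its own private pointer, which is the property the restore step in fbd_io_callback exists to guarantee.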