In [ ]:

import os

import numpy as np

import pandas as pd

import tensorflow as tf

# read data from file

data = pd.read_csv('data/train.csv')

data.info()  # info() prints the summary itself and returns None

In [ ]:

# fill nan values with 0

data = data.fillna(0)

# convert ['male', 'female'] values of Sex to [1, 0]

data['Sex'] = data['Sex'].apply(lambda s: 1 if s == 'male' else 0)

# 'Survived' is the label of one class,

# add 'Deceased' as the other class

data['Deceased'] = data['Survived'].apply(lambda s: 1 - s)

# select features and labels for training

dataset_X = data[['Sex', 'Age', 'Pclass', 'SibSp', 'Parch', 'Fare']]

dataset_Y = data[['Deceased', 'Survived']]

print(dataset_X)

print(dataset_Y)

In [ ]:

from sklearn.model_selection import train_test_split

# split the data into a training set and a validation set
# (.values is used because DataFrame.as_matrix() was removed in pandas 1.0)
X_train, X_val, y_train, y_val = train_test_split(dataset_X.values, dataset_Y.values,
                                                  test_size=0.2,
                                                  random_state=42)

In [ ]:

# Declare placeholders for the input data

# The first element of shape is None, so any number of records can be fed in at once

X = tf.placeholder(tf.float32, shape=[None, 6], name='input')

y = tf.placeholder(tf.float32, shape=[None, 2], name='label')

In [ ]:

# Declare the variables

weights = tf.Variable(tf.random_normal([6, 2]), name='weights')

bias = tf.Variable(tf.zeros([2]), name='bias')

In [ ]:

y_pred = tf.nn.softmax(tf.matmul(X, weights) + bias)
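To make the cell above concrete, here is a minimal NumPy sketch of the same forward computation, softmax(XW + b), on random toy data. The names `softmax`, `X_toy`, `W_toy` and `b_toy` are hypothetical and not part of the notebook. Subtracting the row maximum is a common stability trick added here as an assumption; it does not change the result.

```python
import numpy as np

def softmax(logits):
    # Subtract the per-row maximum before exponentiating; the result is
    # mathematically unchanged but large logits no longer overflow.
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

rng = np.random.default_rng(0)
X_toy = rng.normal(size=(4, 6))   # 4 records, 6 features (toy data)
W_toy = rng.normal(size=(6, 2))   # same shape as `weights` in the notebook
b_toy = np.zeros(2)               # same shape as `bias` in the notebook

logits = X_toy @ W_toy + b_toy
probs = softmax(logits)           # one row of class probabilities per record
print(probs)
```

Each row of `probs` sums to 1. With only two classes, the second column equals the logistic sigmoid of the difference between the two logits, which is why this model is a form of logistic regression.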

In [ ]:

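# Use cross entropy as the cost function
# (axis=1 replaces the deprecated reduction_indices=1 argument)
cross_entropy = - tf.reduce_sum(y * tf.log(y_pred + 1e-10),
                                axis=1)

# The cost of a batch is the mean cross entropy over all of its samples
cost = tf.reduce_mean(cross_entropy)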

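NOTE

When computing the cross entropy, a very small value (1e-10 in the code above) is added to the model output y_pred. The reason is that when y_pred gets very close to the true value y_true, that is, when its entries get very close to 0 or 1, the logarithm can evaluate to -inf. The output then becomes invalid, the gradient can no longer be computed, and the iteration breaks down. There are three ways to deal with this:

- Add a tiny value directly in the computation so that it stays valid. This avoids computing log(0), but the drawback is that after the addition y_pred can effectively exceed 1. The example code uses this approach;

- Use a clip() function: when y_pred approaches 0, set it to the tiny value. In other words, confine y_pred to a range that is bounded away from 0;

- When the cross entropy computation produces nan, explicitly set cost to 0. This approach does not fix the log computation itself; it handles the error on the final cost function instead.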
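The three options in the note can be compared on a toy example. The following is a minimal NumPy sketch, not the notebook's TensorFlow code; the function names and the constant `EPS` are hypothetical, and the first row of `p` is a deliberately saturated prediction.

```python
import numpy as np

EPS = 1e-10  # same small constant as in the notebook cell

def ce_naive(y, p):
    # Unprotected version: 0 * log(0) evaluates to nan.
    with np.errstate(divide='ignore', invalid='ignore'):
        return -np.sum(y * np.log(p), axis=1)

def ce_add_eps(y, p):
    # Option 1: add a tiny value inside the log (the notebook's choice).
    return -np.sum(y * np.log(p + EPS), axis=1)

def ce_clip(y, p):
    # Option 2: clip the prediction away from 0 before taking the log.
    return -np.sum(y * np.log(np.clip(p, EPS, 1.0)), axis=1)

def cost_nan_to_zero(y, p):
    # Option 3: leave the log alone and replace a nan cost by 0.
    cost = np.mean(ce_naive(y, p))
    return 0.0 if np.isnan(cost) else cost

y = np.array([[1.0, 0.0], [0.0, 1.0]])
p = np.array([[1.0, 0.0], [0.3, 0.7]])  # first row is saturated at 0 and 1

print(ce_naive(y, p))          # first entry is nan
print(ce_add_eps(y, p))        # finite, first entry slightly below 0
print(ce_clip(y, p))           # finite, first entry exactly 0
print(cost_nan_to_zero(y, p))  # 0.0
```

The slightly negative first entry of `ce_add_eps` is the drawback mentioned in the note: the shifted prediction exceeds 1. Option 3 returns 0 for the whole batch, so the valid second sample contributes nothing to the cost either.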

In [ ]:

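# Minimize the cost with the (stochastic) gradient descent optimizer; TensorFlow builds the backpropagation part of the computation graph automatically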

train_op = tf.train.GradientDescentOptimizer(0.001).minimize(cost)
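As an illustration of what one gradient descent update amounts to for this model, here is a minimal NumPy sketch with the gradient written out by hand. It is an assumption-laden stand-in, not TensorFlow's implementation: the names are hypothetical, the data is random, and the 1e-10 term of the notebook's cost is omitted so that the gradient with respect to the logits takes the simple form (probs - Y) / n.

```python
import numpy as np

def softmax(logits):
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def mean_cross_entropy(X, Y, W, b):
    probs = softmax(X @ W + b)
    return -np.mean(np.sum(Y * np.log(probs), axis=1))

def sgd_step(X, Y, W, b, lr):
    # For one-hot labels, d(mean cross entropy)/d(logits) = (probs - Y) / n.
    probs = softmax(X @ W + b)
    grad_logits = (probs - Y) / X.shape[0]
    grad_W = X.T @ grad_logits          # chain rule through X @ W
    grad_b = grad_logits.sum(axis=0)    # chain rule through + b
    return W - lr * grad_W, b - lr * grad_b

rng = np.random.default_rng(0)
X_toy = rng.normal(size=(8, 6))                  # toy features
Y_toy = np.eye(2)[rng.integers(0, 2, size=8)]    # toy one-hot labels
W_toy = rng.normal(size=(6, 2))
b_toy = np.zeros(2)

loss_before = mean_cross_entropy(X_toy, Y_toy, W_toy, b_toy)
W_new, b_new = sgd_step(X_toy, Y_toy, W_toy, b_toy, lr=0.05)
loss_after = mean_cross_entropy(X_toy, Y_toy, W_new, b_new)
print(loss_before, loss_after)
```

In the notebook the optimizer derives the corresponding gradient ops from the graph, including the 1e-10 term, so nothing like `sgd_step` has to be written by hand.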

In [ ]:

# Compute the accuracy

correct_pred = tf.equal(tf.argmax(y, 1), tf.argmax(y_pred, 1))

acc_op = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

In [ ]:

with tf.Session() as sess:
    # variables have to be initialized first
    tf.global_variables_initializer().run()

    # training loop
    for epoch in range(10):
        total_loss = 0.
        for i in range(len(X_train)):
            # prepare feed data and run (one sample per step)
            feed_dict = {X: [X_train[i]], y: [y_train[i]]}
            _, loss = sess.run([train_op, cost], feed_dict=feed_dict)
            total_loss += loss
        # display loss per epoch
        print('Epoch: %04d, total loss=%.9f' % (epoch + 1, total_loss))

    print('Training complete!')

    # Accuracy calculated by TensorFlow
    accuracy = sess.run(acc_op, feed_dict={X: X_val, y: y_val})
    print("Accuracy on validation set: %.9f" % accuracy)

    # Accuracy calculated by NumPy
    pred = sess.run(y_pred, feed_dict={X: X_val})
    correct = np.equal(np.argmax(pred, 1), np.argmax(y_val, 1))
    numpy_accuracy = np.mean(correct.astype(np.float32))
    print("Accuracy on validation set (numpy): %.9f" % numpy_accuracy)

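Variables are saved and loaded through the tf.train.Saver class. When a Saver object is constructed, it adds ops for saving and restoring variables to the computation graph, and a constructor argument can specify which variables are stored. The save() and restore() methods of the Saver object are the entry points that trigger these ops.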

In [ ]:

# Counter for the training progress (not trainable)
global_step = tf.Variable(0, name='global_step', trainable=False)

# Entry point for checkpointing
saver = tf.train.Saver()

# Variables defined after the Saver is created will not be stored
# non_storable_variable = tf.Variable(777)

ckpt_dir = './ckpt_dir'
if not os.path.exists(ckpt_dir):
    os.makedirs(ckpt_dir)

with tf.Session() as sess:
    tf.global_variables_initializer().run()

    # Load the checkpoint if one exists
    ckpt = tf.train.get_checkpoint_state(ckpt_dir)
    if ckpt and ckpt.model_checkpoint_path:
        print('Restoring from checkpoint: %s' % ckpt.model_checkpoint_path)
        saver.restore(sess, ckpt.model_checkpoint_path)

    start = global_step.eval()
    for epoch in range(start, start + 10):
        total_loss = 0.
        for i in range(0, len(X_train)):
            feed_dict = {
                X: [X_train[i]],
                y: [y_train[i]]
            }
            _, loss = sess.run([train_op, cost], feed_dict=feed_dict)
            total_loss += loss
        print('Epoch: %04d, loss=%.9f' % (epoch + 1, total_loss))

        # Save a checkpoint. global_step holds the number of completed epochs
        # (epoch + 1), so a restored run does not repeat the last epoch index.
        global_step.assign(epoch + 1).eval()
        saver.save(sess, ckpt_dir + '/logistic.ckpt',
                   global_step=global_step)
    print('Training complete!')

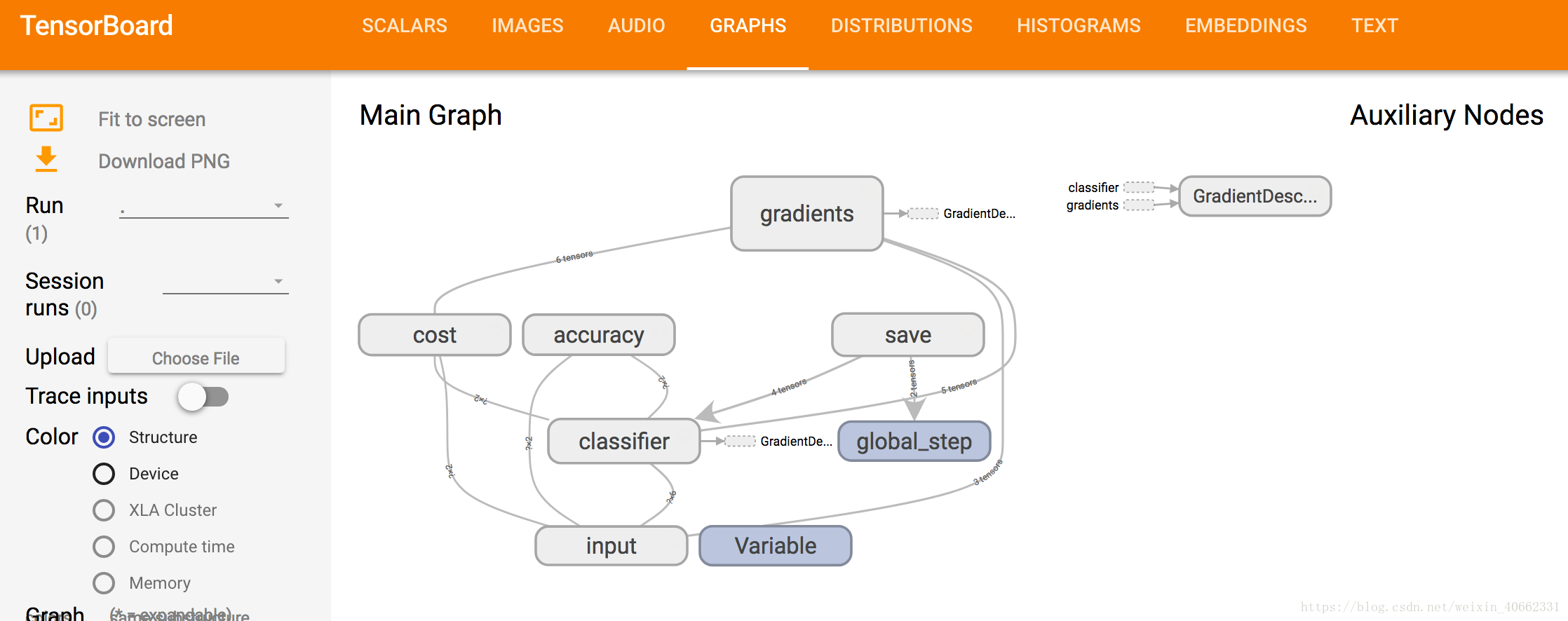

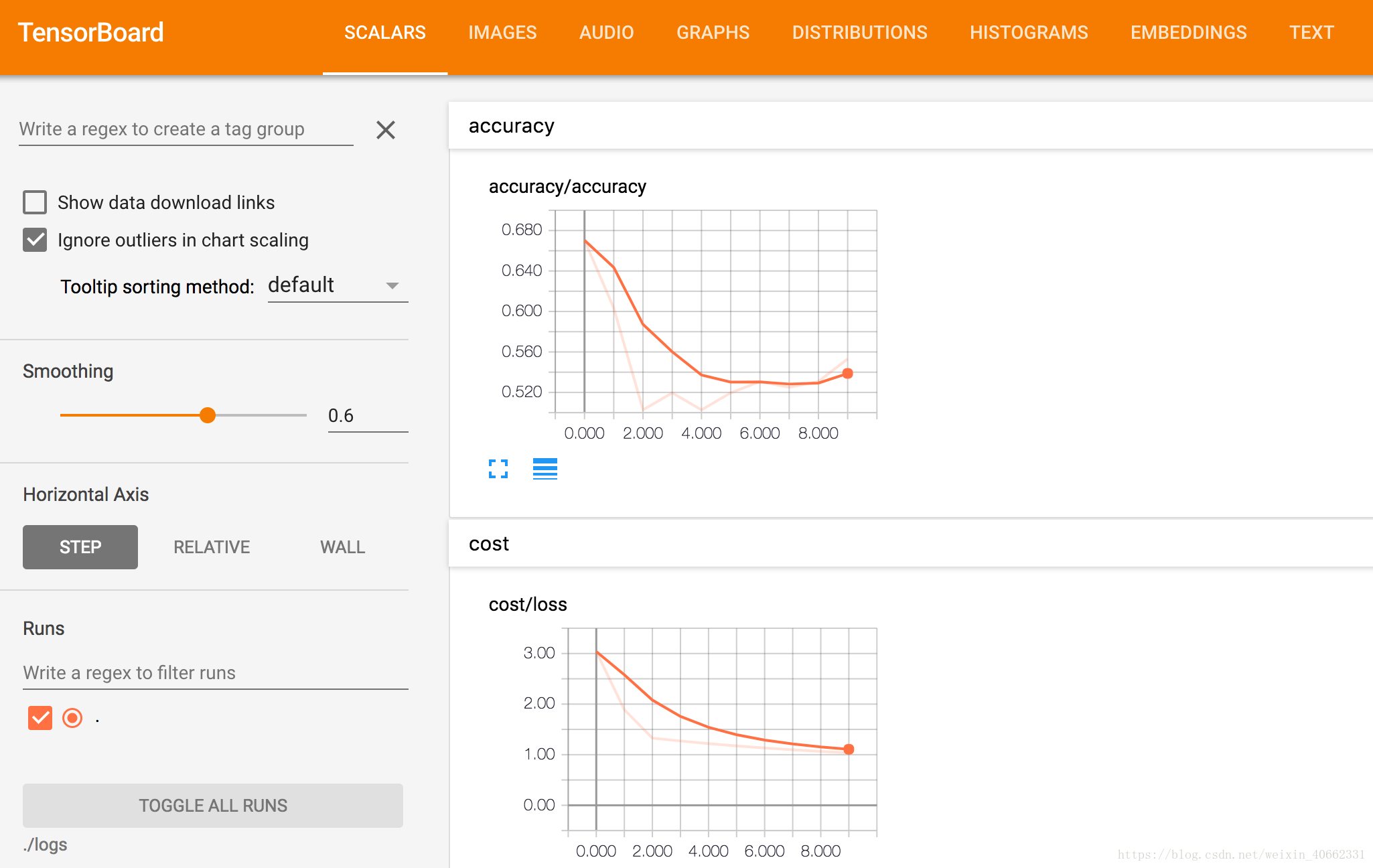

TensorBoard

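TensorBoard is the visualization tool that ships with TensorFlow. It helps in understanding complex models and in checking an implementation for errors.

TensorBoard works by starting a web server. The server process reads summary data from the event files written while a TensorFlow program runs, and renders that data as charts on a web page. Summary data mainly falls into the following categories:

- Scalar data, such as accuracy and loss values, recorded by adding ops with tf.summary.scalar;

- Parameter data, such as the weight matrix weights and the bias bias, usually recorded with tf.summary.histogram;

- Image data, recorded by adding ops with tf.summary.image;

- Audio data, recorded by adding ops with tf.summary.audio;

- The structure of the computation graph, recorded automatically when the tf.summary.FileWriter object is created with the graph.

A complete program whose results can be displayed in TensorBoard: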

In [ ]:

################################

# Constructing Dataflow Graph

################################

# arguments that can be set in command line

tf.app.flags.DEFINE_integer('epochs', 10, 'Training epochs')

tf.app.flags.DEFINE_integer('batch_size', 10, 'size of mini-batch')

FLAGS = tf.app.flags.FLAGS

with tf.name_scope('input'):
    # create symbolic variables
    X = tf.placeholder(tf.float32, shape=[None, 6])
    y_true = tf.placeholder(tf.float32, shape=[None, 2])

with tf.name_scope('classifier'):
    # weights and bias are the variables to be trained
    weights = tf.Variable(tf.random_normal([6, 2]))
    bias = tf.Variable(tf.zeros([2]))
    y_pred = tf.nn.softmax(tf.matmul(X, weights) + bias)

    # add histogram summaries for weights and bias, viewable in TensorBoard
    tf.summary.histogram('weights', weights)
    tf.summary.histogram('bias', bias)

# Minimise cost using cross entropy
# NOTE: add an epsilon (1e-10) when calculating log(y_pred),
# otherwise the result can be -inf
with tf.name_scope('cost'):
    cross_entropy = - tf.reduce_sum(y_true * tf.log(y_pred + 1e-10),
                                    axis=1)
    cost = tf.reduce_mean(cross_entropy)
    tf.summary.scalar('loss', cost)

# use gradient descent optimizer to minimize cost
train_op = tf.train.GradientDescentOptimizer(0.001).minimize(cost)

with tf.name_scope('accuracy'):
    correct_pred = tf.equal(tf.argmax(y_true, 1), tf.argmax(y_pred, 1))
    acc_op = tf.reduce_mean(tf.cast(correct_pred, tf.float32))
    # add scalar summary for accuracy
    tf.summary.scalar('accuracy', acc_op)

global_step = tf.Variable(0, name='global_step', trainable=False)

# use saver to save and restore model
saver = tf.train.Saver()

# this variable won't be stored, since it is declared after tf.train.Saver()
non_storable_variable = tf.Variable(777)

ckpt_dir = './ckpt_dir'
if not os.path.exists(ckpt_dir):
    os.makedirs(ckpt_dir)

################################

# Training the model

################################

# use session to run the calculation
with tf.Session() as sess:
    # create a log writer. run 'tensorboard --logdir=./logs'
    writer = tf.summary.FileWriter('./logs', sess.graph)
    merged = tf.summary.merge_all()

    # variables have to be initialized first
    tf.global_variables_initializer().run()

    # restore variables from the checkpoint if one exists
    ckpt = tf.train.get_checkpoint_state(ckpt_dir)
    if ckpt and ckpt.model_checkpoint_path:
        print('Restoring from checkpoint: %s' % ckpt.model_checkpoint_path)
        saver.restore(sess, ckpt.model_checkpoint_path)

    start = global_step.eval()

    # training loop
    for epoch in range(start, start + FLAGS.epochs):
        total_loss = 0.
        for i in range(0, len(X_train), FLAGS.batch_size):
            # train with mini-batch
            feed_dict = {
                X: X_train[i: i + FLAGS.batch_size],
                y_true: y_train[i: i + FLAGS.batch_size]
            }
            _, loss = sess.run([train_op, cost], feed_dict=feed_dict)
            total_loss += loss
        # display loss per epoch
        print('Epoch: %04d, loss=%.9f' % (epoch + 1, total_loss))

        summary, accuracy = sess.run([merged, acc_op],
                                     feed_dict={X: X_val, y_true: y_val})
        writer.add_summary(summary, epoch)  # write summary
        print('Accuracy on validation set: %.9f' % accuracy)

        # store the number of completed epochs (epoch + 1) in global_step,
        # so a restored run does not repeat the last epoch index
        global_step.assign(epoch + 1).eval()
        saver.save(sess, ckpt_dir + '/logistic.ckpt',
                   global_step=global_step)
    print('Training complete!')
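The mini-batch loop in the program above steps through the training set with `range(0, len(X_train), batch_size)` and slices each batch out of the arrays. A minimal NumPy sketch of that slicing pattern follows; the helper `iterate_minibatches` and the toy arrays are hypothetical and only illustrate how the last batch comes out shorter when the set size is not a multiple of the batch size.

```python
import numpy as np

def iterate_minibatches(X, Y, batch_size):
    # Step through the data in strides of batch_size; slicing past the end
    # of an array is allowed, so the last batch is simply shorter.
    for start in range(0, len(X), batch_size):
        yield X[start:start + batch_size], Y[start:start + batch_size]

X_toy = np.arange(25 * 6, dtype=np.float32).reshape(25, 6)  # 25 toy records
Y_toy = np.zeros((25, 2), dtype=np.float32)

batch_sizes = [len(xb) for xb, _ in iterate_minibatches(X_toy, Y_toy, 10)]
print(batch_sizes)  # [10, 10, 5]
```

Because `cost` is a mean over the batch, the `total_loss` printed per epoch is a sum of per-batch means. Its magnitude therefore depends on the number of batches, so totals from runs with different batch sizes are not directly comparable.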

In [ ]: