版权声明:个人博客网址 https://29dch.github.io/ 博主GitHub网址 https://github.com/29DCH,欢迎大家前来交流探讨和fork! https://blog.csdn.net/CowBoySoBusy/article/details/82933871

一部分内容见我的上一篇博客基于MapReduce的词频统计程序WordCount2App(二)

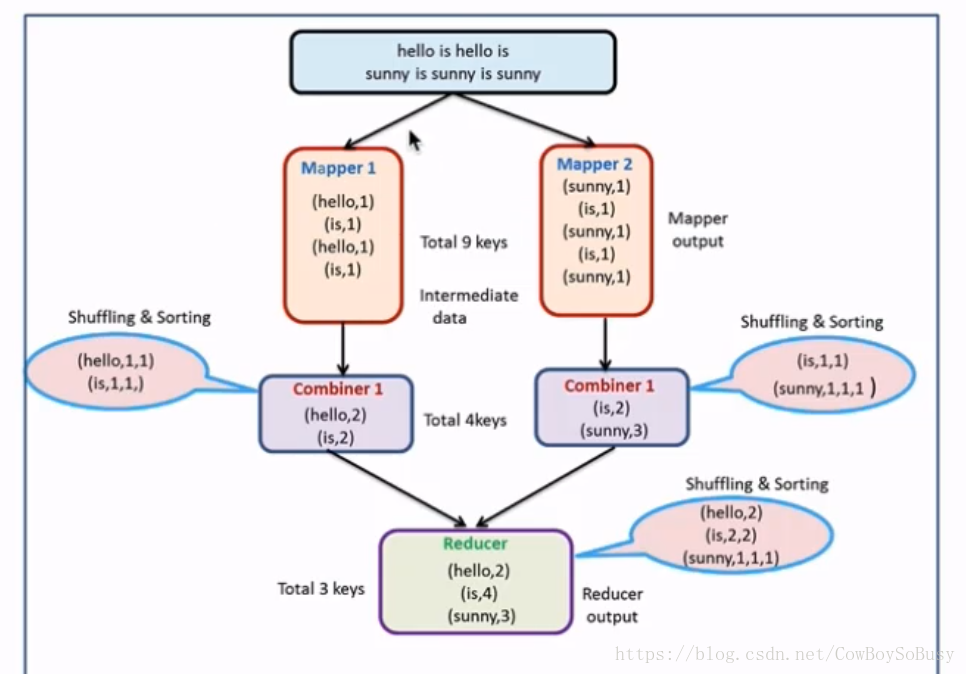

Combiner

可以理解为本地的reducer,减少了Map Tasks输出的数据量以及数据网络传输量

编译运行:

hadoop jar /home/zq/lib/HDFS_Test-1.0-SNAPSHOT.jar MapReduce.CombinerApp hdfs://zq:8020/hello.txt hdfs://zq:8020/output/wc

和前一篇博客的代码是差不多的,只是多出这句核心代码:

//通过job设置combiner处理类,其实逻辑上和我们的reduce是一模一样的

job.setCombinerClass(MyReducer.class);

详细代码如下

CombinerApp.java

package MapReduce;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* 使用MapReduce开发WordCount应用程序

*/

public class CombinerApp {

/**

* Map:读取输入的文件

*/

public static class MyMapper extends Mapper<LongWritable, Text, Text, LongWritable>{

LongWritable one = new LongWritable(1);

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// 接收到的每一行数据

String line = value.toString();

//按照指定分隔符进行拆分

String[] words = line.split(" ");

for(String word : words) {

// 通过上下文把map的处理结果输出

context.write(new Text(word), one);

}

}

}

/**

* Reduce:归并操作

*/

public static class MyReducer extends Reducer<Text, LongWritable, Text, LongWritable> {

@Override

protected void reduce(Text key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException {

long sum = 0;

for(LongWritable value : values) {

// 求key出现的次数总和

sum += value.get();

}

// 最终统计结果的输出

context.write(key, new LongWritable(sum));

}

}

/**

* 定义Driver:封装了MapReduce作业的所有信息

*/

public static void main(String[] args) throws Exception{

//创建Configuration

Configuration configuration = new Configuration();

// 准备清理已存在的输出目录

Path outputPath = new Path(args[1]);

FileSystem fileSystem = FileSystem.get(configuration);

if(fileSystem.exists(outputPath)){

fileSystem.delete(outputPath, true);

System.out.println("output file exists, but is has deleted");

}

//创建Job

Job job = Job.getInstance(configuration, "wordcount");

//设置job的处理类

job.setJarByClass(CombinerApp.class);

//设置作业处理的输入路径

FileInputFormat.setInputPaths(job, new Path(args[0]));

//设置map相关参数

job.setMapperClass(MyMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(LongWritable.class);

//设置reduce相关参数

job.setReducerClass(MyReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(LongWritable.class);

//通过job设置combiner处理类,其实逻辑上和我们的reduce是一模一样的

job.setCombinerClass(MyReducer.class);

//设置作业处理的输出路径

FileOutputFormat.setOutputPath(job, new Path(args[1]));

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

注意:

使用场景:

求和、次数(局部相加起来就是总和)等是适用的

求平均数(局部平均数的平均数不是总体平均数)不适用

更多代码以及详细信息见我的github相关项目

https://github.com/29DCH/Hadoop-MapReduce-Examples