Reference: 《OpenStack设计与实现》 (OpenStack Design and Implementation)

https://blog.apporc.org/2016/04/nova-%E5%88%9B%E5%BB%BA%E8%99%9A%E6%8B%9F%E6%9C%BA%E6%B5%81%E7%A8%8B/

https://github.com/Moonlight-shadow/openstack-workflow

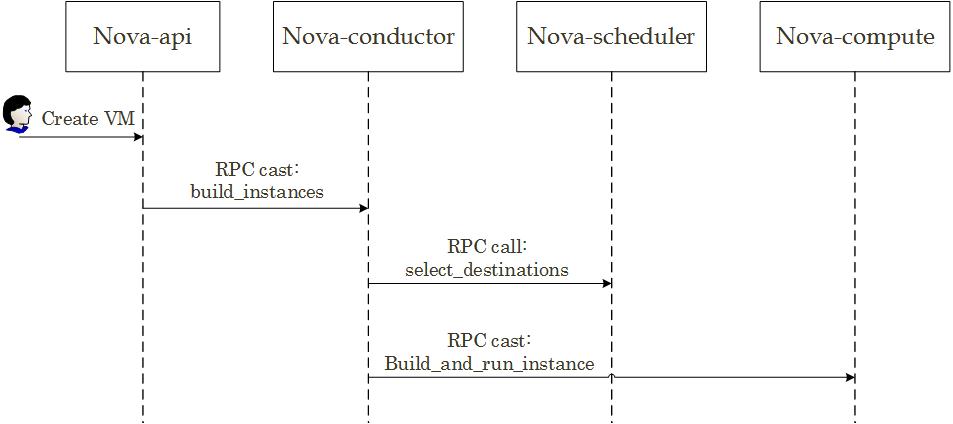

Figure 1-1 shows the virtual machine creation process.

Figure 1-1

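As the figure shows, after nova-api receives the client's HTTP request to create a virtual machine, it calls the build_instances() method of nova.conductor.manager.ComputeTaskManager over RPC. Inside build_instances(), nova-conductor builds the request_spec dictionary, which holds the detailed description of the virtual machine. nova-scheduler uses this information to select the most suitable host, and nova-conductor then calls nova-compute over RPC to create the virtual machine. Creating a virtual machine is therefore a chain of RPC calls between the Nova components.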
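The chain of calls described above can be sketched with a few toy classes. This is only an illustrative model, not Nova code: the class names `Api`, `Conductor`, `Scheduler` and `Compute`, the `free_ram_mb` map and the "most free RAM wins" rule are all assumptions made for the example, and the calls are plain in-process method calls rather than RPC.

```python
"""Toy model of the boot path: api -> conductor -> scheduler -> compute."""


class Scheduler:
    """Stands in for nova-scheduler: picks a host for a request_spec."""

    def __init__(self, free_ram_mb):
        # Hypothetical inventory: host name -> free RAM in MB.
        self.free_ram_mb = free_ram_mb

    def select_destination(self, request_spec):
        needed = request_spec["memory_mb"]
        # Keep only hosts that can fit the request, then take the roomiest.
        candidates = {h: ram for h, ram in self.free_ram_mb.items()
                      if ram >= needed}
        if not candidates:
            raise RuntimeError("no valid host found")
        return max(candidates, key=candidates.get)


class Compute:
    """Stands in for one nova-compute service."""

    def __init__(self, host):
        self.host = host

    def build_and_run_instance(self, instance):
        instance["host"] = self.host
        instance["vm_state"] = "active"


class Conductor:
    """Stands in for nova-conductor: builds request_spec and orchestrates."""

    def __init__(self, scheduler, computes):
        self.scheduler = scheduler
        self.computes = computes

    def build_instances(self, instances, flavor):
        request_spec = {"memory_mb": flavor["memory_mb"],
                        "num_instances": len(instances)}
        for instance in instances:
            host = self.scheduler.select_destination(request_spec)
            self.computes[host].build_and_run_instance(instance)


class Api:
    """Stands in for nova-api: accepts the request and hands it on."""

    def __init__(self, conductor):
        self.conductor = conductor

    def create(self, name, flavor):
        instance = {"name": name, "vm_state": "building", "host": None}
        self.conductor.build_instances([instance], flavor)
        return instance


if __name__ == "__main__":
    computes = {"node1": Compute("node1"), "node2": Compute("node2")}
    scheduler = Scheduler({"node1": 2048, "node2": 8192})
    api = Api(Conductor(scheduler, computes))
    print(api.create("demo", {"memory_mb": 4096}))
```

In real Nova each arrow in this chain crosses a message queue, which is what the code walkthrough in the rest of this post follows.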

Code analysis

After nova-api receives the HTTP request to create a virtual machine, it calls the create method of compute.API to create it.

# nova/api/openstack/compute/servers.py

from nova import compute

class ServersController(wsgi.Controller):

    def __init__(self, **kwargs):
        ...
        super(ServersController, self).__init__(**kwargs)
        # Instantiate compute.API
        self.compute_api = compute.API(skip_policy_check=True)

    def create(self, req, body):
        # Create a virtual machine for the requesting user
        context = req.environ['nova.context']
        server_dict = body['server']
        password = self._get_server_admin_password(server_dict)
        name = common.normalize_name(server_dict['name'])
        # Parameters of the instance to be created
        create_kwargs = {}
        try:
            inst_type = flavors.get_flavor_by_flavor_id(
                    flavor_id, ctxt=context, read_deleted="no")
            # Call compute.API's create method to create the virtual machine
            (instances, resv_id) = self.compute_api.create(context,
                    inst_type,
                    image_uuid,
                    display_name=name,
                    display_description=name,
                    metadata=server_dict.get('metadata', {}),
                    admin_password=password,
                    requested_networks=requested_networks,
                    check_server_group_quota=True,
                    **create_kwargs)
        ...

The create method of compute.API returns the result of calling the API's _create_instance() method:

# nova/compute/api.py

class API(base.Base):

    @property
    def compute_task_api(self):
        if self._compute_task_api is None:
            # TODO(alaski): Remove calls into here from conductor manager so
            # that this isn't necessary. #1180540
            # Use conductor's ComputeTaskAPI class
            from nova import conductor
            self._compute_task_api = conductor.ComputeTaskAPI()
        return self._compute_task_api

    def _create_instance(self, context, instance_type,
               image_href, kernel_id, ramdisk_id,
               min_count, max_count,
               display_name, display_description,
               key_name, key_data, security_groups,
               availability_zone, user_data, metadata,
               injected_files, admin_password,
               access_ip_v4, access_ip_v6,
               requested_networks, config_drive,
               block_device_mapping, auto_disk_config,
               reservation_id=None, scheduler_hints=None,
               legacy_bdm=True, shutdown_terminate=False,
               check_server_group_quota=False):
        ...
        # self.compute_task_api is a conductor.ComputeTaskAPI();
        # call its build_instances method
        self.compute_task_api.build_instances(context,
                instances=instances, image=boot_meta,
                filter_properties=filter_properties,
                admin_password=admin_password,
                injected_files=injected_files,
                requested_networks=requested_networks,
                security_groups=security_groups,
                block_device_mapping=block_device_mapping,
                legacy_bdm=False)

        return (instances, reservation_id)

Implementation of the build_instances method in ComputeTaskAPI:

# nova/conductor/api.py

class ComputeTaskAPI(object):
    """ComputeTask API that queues up compute tasks for nova-conductor."""

    def __init__(self):
        self.conductor_compute_rpcapi = rpcapi.ComputeTaskAPI()

    # Resize an instance
    def resize_instance(self, context, instance, extra_instance_updates,
                        scheduler_hint, flavor, reservations,
                        clean_shutdown=True):
        # NOTE(comstud): 'extra_instance_updates' is not used here but is
        # needed for compatibility with the cells_rpcapi version of this
        # method.
        self.conductor_compute_rpcapi.migrate_server(
            context, instance, scheduler_hint, live=False, rebuild=False,
            flavor=flavor, block_migration=None, disk_over_commit=None,
            reservations=reservations, clean_shutdown=clean_shutdown)

    # Live-migrate an instance (RPC call)
    def live_migrate_instance(self, context, instance, host_name,
                              block_migration, disk_over_commit):
        scheduler_hint = {'host': host_name}
        self.conductor_compute_rpcapi.migrate_server(
            context, instance, scheduler_hint, True, False, None,
            block_migration, disk_over_commit, None)

    # Create instances by calling build_instances on rpcapi.ComputeTaskAPI()
    def build_instances(self, context, instances, image, filter_properties,
            admin_password, injected_files, requested_networks,
            security_groups, block_device_mapping, legacy_bdm=True):
        self.conductor_compute_rpcapi.build_instances(context,
                instances=instances, image=image,
                filter_properties=filter_properties,
                admin_password=admin_password, injected_files=injected_files,
                requested_networks=requested_networks,
                security_groups=security_groups,
                block_device_mapping=block_device_mapping,
                legacy_bdm=legacy_bdm)

    def unshelve_instance(self, context, instance):
        self.conductor_compute_rpcapi.unshelve_instance(context,
                instance=instance)

    # Rebuild an instance
    def rebuild_instance(self, context, instance, orig_image_ref, image_ref,
                         injected_files, new_pass, orig_sys_metadata,
                         bdms, recreate=False, on_shared_storage=False,
                         preserve_ephemeral=False, host=None, kwargs=None):
        # kwargs unused but required for cell compatibility
        self.conductor_compute_rpcapi.rebuild_instance(context,
                instance=instance,
                new_pass=new_pass,
                injected_files=injected_files,
                image_ref=image_ref,
                orig_image_ref=orig_image_ref,
                orig_sys_metadata=orig_sys_metadata,
                bdms=bdms,
                recreate=recreate,
                on_shared_storage=on_shared_storage,
                preserve_ephemeral=preserve_ephemeral,
                host=host)

This brings us to conductor.rpcapi:

# nova/conductor/rpcapi.py

class ComputeTaskAPI(object):
    """Client side of the conductor 'compute' namespaced RPC API"""

    def build_instances(self, context, instances, image, filter_properties,
            admin_password, injected_files, requested_networks,
            security_groups, block_device_mapping, legacy_bdm=True):
        image_p = jsonutils.to_primitive(image)
        version = '1.10'
        if not self.client.can_send_version(version):
            version = '1.9'
            if 'instance_type' in filter_properties:
                flavor = filter_properties['instance_type']
                flavor_p = objects_base.obj_to_primitive(flavor)
                filter_properties = dict(filter_properties,
                                         instance_type=flavor_p)
        kw = {'instances': instances, 'image': image_p,
               'filter_properties': filter_properties,
               'admin_password': admin_password,
               'injected_files': injected_files,
               'requested_networks': requested_networks,
               'security_groups': security_groups}
        if not self.client.can_send_version(version):
            version = '1.8'
            kw['requested_networks'] = kw['requested_networks'].as_tuples()
        if not self.client.can_send_version('1.7'):
            version = '1.5'
            bdm_p = objects_base.obj_to_primitive(block_device_mapping)
            kw.update({'block_device_mapping': bdm_p,
                       'legacy_bdm': legacy_bdm})

        cctxt = self.client.prepare(version=version)
        # RPC cast is used for asynchronous work. Here it creates a virtual
        # machine, which may take a long time, so a blocking RPC call would
        # clearly hurt performance. The second argument to cast() is the name
        # of the remote function; the remaining arguments are passed on to
        # that function.
        # On synchronous vs. asynchronous and blocking vs. non-blocking, see
        # http://blog.csdn.net/moolight_shadow/article/details/70212874
        # Invoke build_instances over RPC, which places the create request
        # on the message queue.
        cctxt.cast(context, 'build_instances', **kw)

An RPC request sent with cast() gets no reply, so the create request returns directly from _create_instance(). At that point the virtual machine has not been built yet, but the request has already been sent to nova-conductor. From then on, progress is reflected in the virtual machine's state.
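To make the difference between cast() and call() concrete, here is a small stand-alone simulation built only on the standard library. It is a sketch under loud assumptions: `ToyRpcServer` and `ToyRpcClient` are hypothetical names, a `queue.Queue` plays the role of the message broker, and none of this is the oslo.messaging API that Nova actually uses.

```python
import queue
import threading
import time


class ToyRpcServer:
    """Hypothetical service that consumes requests from a queue."""

    def __init__(self):
        self.inbox = queue.Queue()  # stands in for the message broker
        self.built = []
        worker = threading.Thread(target=self._serve, daemon=True)
        worker.start()

    def _serve(self):
        while True:
            method, kwargs, reply_box = self.inbox.get()
            result = getattr(self, method)(**kwargs)
            # Only a call() supplies a reply box; a cast() gets no answer.
            if reply_box is not None:
                reply_box.put(result)
            self.inbox.task_done()

    def build_instances(self, names):
        time.sleep(0.2)  # pretend that building takes a while
        self.built.extend(names)
        return len(names)


class ToyRpcClient:
    """Hypothetical client offering cast (async) and call (sync)."""

    def __init__(self, server):
        self.server = server

    def cast(self, method, **kwargs):
        # Fire and forget: enqueue the message and return immediately.
        self.server.inbox.put((method, kwargs, None))

    def call(self, method, **kwargs):
        # Enqueue the message, then block until the server replies.
        reply_box = queue.Queue(maxsize=1)
        self.server.inbox.put((method, kwargs, reply_box))
        return reply_box.get()


if __name__ == "__main__":
    server = ToyRpcServer()
    client = ToyRpcClient(server)

    started = time.time()
    client.cast("build_instances", names=["vm-1"])
    print("cast returned after %.3fs" % (time.time() - started))

    started = time.time()
    count = client.call("build_instances", names=["vm-2"])
    print("call returned %d after %.3fs" % (count, time.time() - started))
```

The cast returns before any instance exists, which mirrors why the API can answer the user right away and leave the rest to the instance state.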

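nova-conductor exposes build_instances() as an RPC method, so it keeps a close watch on the RabbitMQ message queue. When a request addressed to it arrives, it picks the request up and runs build_instances(). The code lives in nova/conductor/manager.py, and what it does is straightforward: it sends a request to nova-scheduler, gets back a selected host that runs nova-compute, and then forwards the request to that nova-compute.

The request is handled inside the nova-conductor process: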

class ComputeTaskManager(base.Base):

    def build_instances(self, context, instances, image, filter_properties,
            admin_password, injected_files, requested_networks,
            security_groups, block_device_mapping=None, legacy_bdm=True):
        # TODO(ndipanov): Remove block_device_mapping and legacy_bdm in version
        #                 2.0 of the RPC API.
        request_spec = scheduler_utils.build_request_spec(context, image,
                                                          instances)
        # ... (the request to nova-scheduler that produces `hosts` is
        # omitted from this excerpt)

        for (instance, host) in itertools.izip(instances, hosts):
            try:
                instance.refresh()
            except (exception.InstanceNotFound,
                    exception.InstanceInfoCacheNotFound):
                LOG.debug('Instance deleted during build', instance=instance)
                continue
            local_filter_props = copy.deepcopy(filter_properties)
            scheduler_utils.populate_filter_properties(local_filter_props,
                host)
            # The block_device_mapping passed from the api doesn't contain
            # instance specific information
            bdms = objects.BlockDeviceMappingList.get_by_instance_uuid(
                    context, instance.uuid)

            self.compute_rpcapi.build_and_run_instance(context,
                    instance=instance, host=host['host'], image=image,
                    request_spec=request_spec,
                    filter_properties=local_filter_props,
                    admin_password=admin_password,
                    injected_files=injected_files,
                    requested_networks=requested_networks,
                    security_groups=security_groups,
                    block_device_mapping=bdms, node=host['nodename'],
                    limits=host['limits'])
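The dispatch loop at the end of build_instances() pairs each instance with the host selected for it and gives every instance its own copy of the filter properties. A minimal stand-alone sketch of that pattern follows. The function `dispatch_builds`, the `deleted` flag, the `send_build` callback and the way the copy is filled in are assumptions for illustration only; Python 3's `zip` replaces the Python 2 `itertools.izip` seen in the excerpt.

```python
import copy


def dispatch_builds(instances, hosts, filter_properties, send_build):
    """Pair each instance with its selected host and hand it to send_build.

    send_build is a hypothetical callback that stands in for the RPC cast
    to nova-compute.
    """
    dispatched = []
    for instance, host in zip(instances, hosts):
        # Skip instances that disappeared while the build was in flight
        # (modelled here by a simple flag, not by a database refresh).
        if instance.get("deleted"):
            continue
        # Deep-copy so per-host details never leak between instances
        # or back into the caller's dictionary.
        local_props = copy.deepcopy(filter_properties)
        local_props["limits"] = host["limits"]
        send_build(instance=instance, host=host["host"],
                   node=host["nodename"], filter_properties=local_props)
        dispatched.append(instance["uuid"])
    return dispatched


if __name__ == "__main__":
    sent = []
    instances = [{"uuid": "a"}, {"uuid": "b", "deleted": True}]
    hosts = [
        {"host": "h1", "nodename": "n1", "limits": {"memory_mb": 1024}},
        {"host": "h2", "nodename": "n2", "limits": {"memory_mb": 2048}},
    ]
    print(dispatch_builds(instances, hosts, {"retry": {}},
                          lambda **kw: sent.append(kw)))
```

Copying the properties per instance is what lets the loop attach host-specific data without one instance's build seeing another's.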