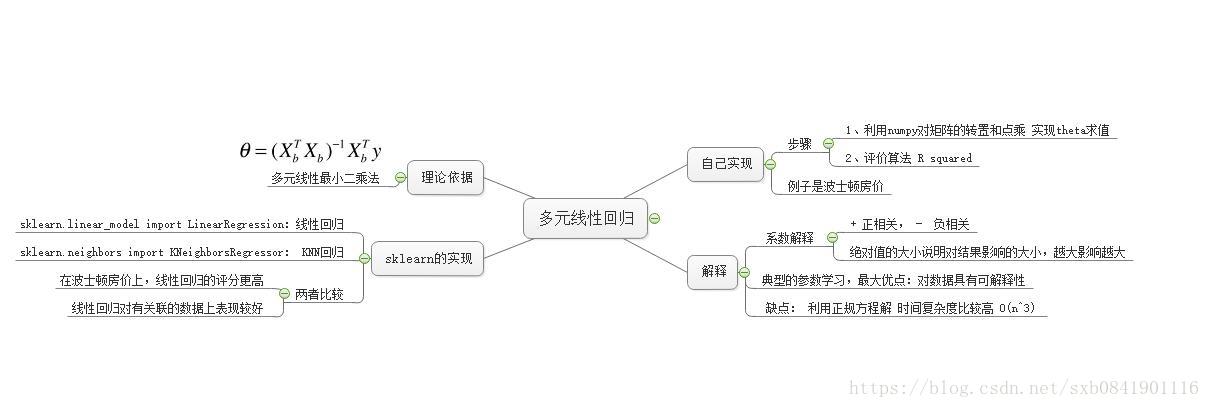

Mind-map study notes

My own implementation, following teacher BoBo's course lectures:

    # -*- coding: utf-8 -*-
    import numpy as np
    from metrics import r2_score


    class LinearRegression(object):

        def __init__(self):
            self.coef_ = None  # coefficients
            self.intercept_ = None  # intercept
            self._theta = None  # intermediate result, not meant to be exposed

        def fit(self, X_train, y_train):
            """Train the Linear Regression model on the training set X_train, y_train."""
            assert X_train is not None and y_train is not None, "training X and y must not be empty"
            assert X_train.shape[0] == y_train.shape[0], "training X and y must have the same number of samples"
            # np.linalg.inv(X) computes the inverse of matrix X
            # Don't forget to prepend a column to X; the first column is all 1s
            ones = np.ones(shape=(len(X_train), 1))
            X_train = np.hstack((ones, X_train))
            self._theta = np.linalg.inv(X_train.T.dot(X_train)).dot(X_train.T).dot(y_train)
            self.intercept_ = self._theta[0]
            self.coef_ = self._theta[1:]

        def _predict(self, X):
            # Prediction for a single sample: coefficients dot features, plus intercept
            return X.dot(self.coef_.T) + self.intercept_

        def predict1(self, X_test):
            """Given the data set X_test, return the vector of predictions (one sample at a time)."""
            assert X_test.shape[1] == self.coef_.shape[0], 'test X has the wrong number of features'
            return np.array([self._predict(X) for X in X_test])

        def predict(self, X_test):
            """Given the data set X_test, return the vector of predictions (vectorized, via theta)."""
            assert X_test.shape[1] == self.coef_.shape[0], 'test X has the wrong number of features'
            ones = np.ones(shape=(len(X_test), 1))
            X_test = np.hstack((ones, X_test))
            return X_test.dot(self._theta)

        def scores(self, X_test, y_test):
            """Compute the model's R^2 score on the test set X_test, y_test."""
            assert X_test.shape[0] == y_test.shape[0], 'test X and y have different numbers of samples'
            return r2_score(y_test, self.predict1(X_test))
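For reference, the line in fit that computes `_theta` is the standard closed-form least-squares solution (the normal equation). Written out, with $X_b$ denoting the training matrix after the column of ones has been prepended (the symbol $X_b$ is my notation, not a name from the code):

```latex
X_b = \begin{bmatrix} \mathbf{1} & X \end{bmatrix}, \qquad
\hat{\theta} = \left( X_b^{\top} X_b \right)^{-1} X_b^{\top} y, \qquad
\hat{\theta} = (\theta_0, \theta_1, \dots, \theta_n)^{\top}
```

Here $\theta_0$ is stored as `intercept_`, $(\theta_1, \dots, \theta_n)$ as `coef_`, and `predict` returns $X_b \hat{\theta}$ for the test matrix.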

Test script:

    # -*- encoding: utf-8 -*-
    # Note: load_boston was removed in scikit-learn 1.2, so this script needs an older release.
    from sklearn.datasets import load_boston
    from model_selection import train_test_split
    from linearregression import LinearRegression

    boston = load_boston()
    X = boston.data
    y = boston.target

    # Drop the samples whose target is capped at 50
    X = X[y < 50]
    y = y[y < 50]

    X_train, X_test, y_train, y_test = train_test_split(X, y, seed=666)

    lrg = LinearRegression()
    lrg.fit(X_train, y_train)

    # Why is the resulting theta.shape == (13L,)?
    print(lrg.coef_)
    print(lrg.intercept_)
    print(lrg.scores(X_test, y_test))

Output:

    [-1.18919477e-01 3.63991462e-02 -3.56494193e-02 5.66737830e-02
    -1.16195486e+01 3.42022185e+00 -2.31470282e-02 -1.19509560e+00
    2.59339091e-01 -1.40112724e-02 -8.36521175e-01 7.92283639e-03
    -3.81966137e-01]
    34.16143549624022
    0.8129802602658537
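The last number printed is the R² value returned by `scores`. The `metrics` module that provides `r2_score` is not shown in these notes, so the following is only a minimal sketch of what such a function might look like, assuming the usual definition R² = 1 - SS_res / SS_tot; the body and the demo variable names are my assumptions, not the course's code.

```python
import numpy as np


def r2_score(y_true, y_predict):
    """Hypothetical stand-in for metrics.r2_score: R^2 = 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_predict = np.asarray(y_predict, dtype=float)
    ss_res = np.sum((y_true - y_predict) ** 2)      # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
    return 1.0 - ss_res / ss_tot


y_demo = np.array([1.0, 2.0, 3.0, 4.0])
perfect = r2_score(y_demo, y_demo)                           # predictions equal the targets
baseline = r2_score(y_demo, np.full(4, y_demo.mean()))       # always predict the mean
print(perfect, baseline)
```

A perfect prediction gives 1, and always predicting the mean of the targets gives 0, which is why a value around 0.81 reads as "explains about 81% of the variance" rather than as a classification accuracy.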

Implementation summary:

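1. The score above is an R² of about 0.813 (roughly 81.3%); it is the value returned by scores, not a classification accuracy.

2. While implementing fit, I forgot to add the column of ones to the training set X_train, which made the computed coefficients wrong.

3. predict can be implemented in two ways: use the intermediate theta directly, or compute X_test.dot(coefficients) + intercept.

4. np.hstack takes a single tuple of arrays as its argument, and the order matters: an array that comes earlier in the tuple ends up further left in the stacked matrix.

5. In numpy, the inverse of a matrix is computed with np.linalg.inv.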
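Points 2 to 4 can be checked on a small example. This is a minimal sketch on synthetic, noise-free data (the data, the helper name `normal_equation`, and the variable names are made up for illustration): it shows that both prediction forms agree, and that the position of the ones column in the `np.hstack` tuple decides where the intercept lands in theta.

```python
import numpy as np


def normal_equation(X_b, y):
    """Closed-form least squares, same expression as in fit above."""
    return np.linalg.inv(X_b.T.dot(X_b)).dot(X_b.T).dot(y)


rng = np.random.RandomState(0)
X = rng.rand(50, 3)
y = X.dot(np.array([2.0, -1.0, 0.5])) + 4.0  # true intercept is 4.0, no noise

ones = np.ones(shape=(len(X), 1))

# Ones column first: theta[0] is the intercept, theta[1:] the coefficients
X_b = np.hstack((ones, X))
theta = normal_equation(X_b, y)
intercept, coef = theta[0], theta[1:]

# The two prediction styles give the same result
pred_with_theta = X_b.dot(theta)
pred_with_coef = X.dot(coef) + intercept

# Ones column last: the intercept moves to the end of theta instead
theta_swapped = normal_equation(np.hstack((X, ones)), y)

print(intercept, coef)
print(theta_swapped)
```

If the ones column is left out entirely, the model is forced through the origin, which is the kind of wrong coefficients described in point 2.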

Regression with sklearn

    # -*- encoding: utf-8 -*-
    # Note: load_boston was removed in scikit-learn 1.2, so this script needs an older release.
    from sklearn.datasets import load_boston
    from sklearn.neighbors import KNeighborsRegressor
    from sklearn.linear_model import LinearRegression
    from sklearn.model_selection import train_test_split, GridSearchCV


    def test_regression():
        boston = load_boston()
        X = boston.data
        y = boston.target
        X = X[y < 50]
        y = y[y < 50]

        # No random_state is set, so the split (and the scores) change from run to run
        X_train, X_test, y_train, y_test = train_test_split(X, y)

        # Linear regression with sklearn's LinearRegression
        lrg = LinearRegression()
        lrg.fit(X_train, y_train)
        print('LinearRegression:', lrg.score(X_test, y_test))

        # KNN regression
        knnrg = KNeighborsRegressor()

        # Use a grid search to find the best KNN parameters
        param_grid = [
            {
                'weights': ['uniform'],
                'n_neighbors': [i for i in range(1, 11)]
            },
            {
                'weights': ['distance'],
                'n_neighbors': [i for i in range(1, 11)],
                'p': [i for i in range(1, 6)]
            }
        ]

        grid_search = GridSearchCV(knnrg, param_grid, n_jobs=-1, verbose=1)
        grid_search.fit(X_train, y_train)
        print('GridSearchCV:', grid_search.best_estimator_.score(X_test, y_test))
        print('GridSearchCV Best Params:', grid_search.best_params_)


    if __name__ == '__main__':
        test_regression()

Output:

    LinearRegression: 0.7068690903842936
    Fitting 3 folds for each of 60 candidates, totalling 180 fits
    [Parallel(n_jobs=-1)]: Done 180 out of 180 | elapsed: 1.3s finished
    GridSearchCV: 0.6994655719704079
    GridSearchCV Best Params: {'n_neighbors': 10, 'weights': 'distance', 'p': 1}

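From the sklearn run above, linear regression still comes out slightly ahead of KNN regression on this split (about 0.707 vs. 0.699). Also note that after a grid search I score with grid_search.best_estimator_.score rather than grid_search.score(): grid_search.score() uses the scorer passed through the scoring parameter when one is given and only falls back to the estimator's own score otherwise, so the two are not always the same thing.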
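A minimal sketch for comparing `grid_search.score` with `grid_search.best_estimator_.score`, run on synthetic data from `make_regression` rather than the Boston set (the data, the small parameter grid, and the variable names are my assumptions). No `scoring` argument is passed here, and in that default case the two calls are expected to return the same R².

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.neighbors import KNeighborsRegressor

# Synthetic regression data, fixed seeds so the run is repeatable
X, y = make_regression(n_samples=200, n_features=5, noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Small grid, default scoring (the estimator's own score, i.e. R^2)
grid_search = GridSearchCV(KNeighborsRegressor(), {'n_neighbors': [3, 5, 7]}, cv=3)
grid_search.fit(X_train, y_train)

score_via_grid = grid_search.score(X_test, y_test)
score_via_best = grid_search.best_estimator_.score(X_test, y_test)
same = bool(np.isclose(score_via_grid, score_via_best))

print(score_via_grid, score_via_best, same)
```

If a `scoring` argument such as `'neg_mean_squared_error'` were passed to `GridSearchCV`, `grid_search.score` would report that metric instead, while `best_estimator_.score` would still return R²; calling `best_estimator_.score` makes the metric explicit either way.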
