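The dataset contains millions of pairs of patient records:

Download the data from http://bit.ly/1Aoywaq (a VPN or proxy is needed to get past the firewall).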

Unzip the archive:

unzip donation.zip

Then unzip the nested block archives:

unzip 'block_*.zip'

Create a directory on HDFS:

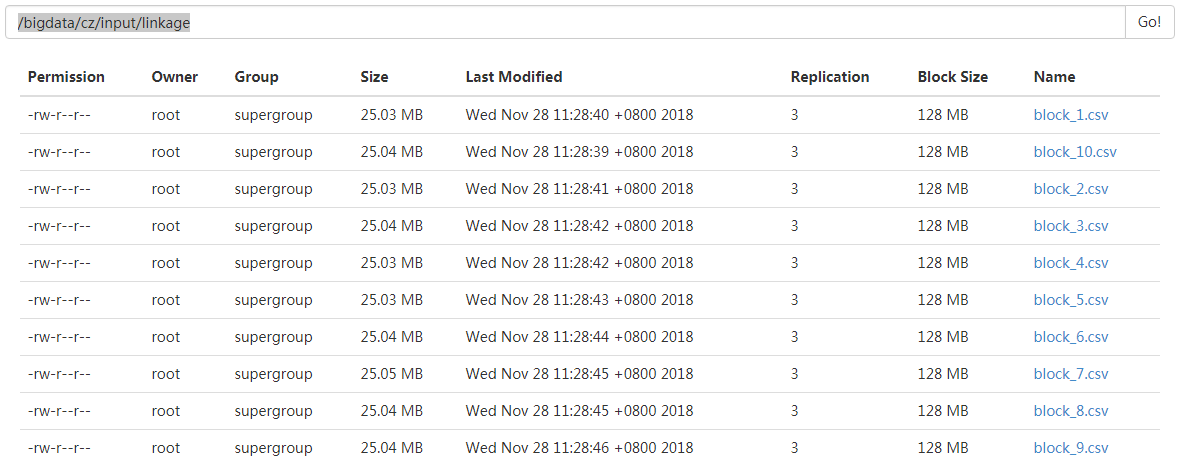

hdfs dfs -mkdir /bigdata/cz/input/linkage

Upload the files:

hdfs dfs -put block_*.csv /bigdata/cz/input/linkage/

Now use Spark to work with the data:

The data is stored on HDFS under /bigdata/cz/input/linkage.

Spark code:

// Load the files from HDFS as an RDD of lines

val blocks = sc.textFile("/bigdata/cz/input/linkage")

// Take the first 10 lines to see what the data looks like

val head = blocks.take(10)

// Data format (column names)

//Array("id_1","id_2","cmp_fname_c1","cmp_fname_c2","cmp_lname_c1","cmp_lname_c2","cmp_sex","cmp_bd","cmp_bm","cmp_by","cmp_plz","is_match")

// Define a method that tests whether a line contains the string "id_1"; the function body is what follows the equals sign

def isHeader(line:String):Boolean={

line.contains("id_1")

}
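As a side note on the syntax, the same method can be written in a few equivalent ways. The sketch below is meant for the Scala REPL or spark-shell; the names `isHeaderShort`, `isHeaderInferred`, `headerLine` and `dataLine` are made up for illustration and do not appear in the original code.

```scala
// Explicit return type with a block body (the form used above).
def isHeader(line: String): Boolean = {
  line.contains("id_1")
}

// Same logic on one line; braces are optional for a single expression.
def isHeaderShort(line: String): Boolean = line.contains("id_1")

// Return type omitted: the compiler infers Boolean from the body.
def isHeaderInferred(line: String) = line.contains("id_1")

// Hypothetical sample lines, used only to check that the definitions agree.
val headerLine = "\"id_1\",\"id_2\",\"cmp_fname_c1\""
val dataLine = "39086,47614,1,?,1,?,1,1,1,1,1,TRUE"

println(isHeader(headerLine))          // true
println(isHeaderShort(dataLine))       // false
println(isHeaderInferred(headerLine))  // true
```

Writing the return type explicitly is usually the safer habit for methods that other code will call, since it documents the intent and catches accidental type changes.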

// Pass the function to filter to keep only the header line

head.filter(isHeader).foreach(println)

// Output:

//"id_1","id_2","cmp_fname_c1","cmp_fname_c2","cmp_lname_c1","cmp_lname_c2","cmp_sex","cmp_bd","cmp_bm","cmp_by","cmp_plz","is_match"

// Pass the function to filterNot to keep everything except the header line

head.filterNot(isHeader).foreach(println)

// Output:

/*

39086,47614,1,?,1,?,1,1,1,1,1,TRUE

70031,70237,1,?,1,?,1,1,1,1,1,TRUE

84795,97439,1,?,1,?,1,1,1,1,1,TRUE

36950,42116,1,?,1,1,1,1,1,1,1,TRUE

42413,48491,1,?,1,?,1,1,1,1,1,TRUE

25965,64753,1,?,1,?,1,1,1,1,1,TRUE

49451,90407,1,?,1,?,1,1,1,1,0,TRUE

39932,40902,1,?,1,?,1,1,1,1,1,TRUE

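*/

A large part of Spark's strength comes from its lineage mechanism: without leaving the Spark shell, you can operate on the entire dataset. You can work out the logic on a small sample first and then run the same code against the full data.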
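Moving from the local sample to the full dataset could look roughly like the following. This is a minimal sketch that assumes it is pasted into spark-shell (where `sc` already exists) and that the files were uploaded to the HDFS path used earlier; the name `noHeader` is chosen here for illustration.

```scala
// Assumes spark-shell, where `sc` is the SparkContext created by the shell.
val blocks = sc.textFile("/bigdata/cz/input/linkage")

// Same predicate that was tried out on the local Array[String] sample.
def isHeader(line: String): Boolean = line.contains("id_1")

// RDDs provide filter but no filterNot, so the predicate is negated explicitly.
// Every block_*.csv file has its own header line; this drops all of them.
val noHeader = blocks.filter(line => !isHeader(line))

// Transformations are lazy: first() is the action that actually runs a job.
println(noHeader.first())
```

The only change from the local version is the collection being filtered: `head` is an `Array[String]` on the driver, while `blocks` is an RDD distributed across the cluster.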