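# Two requirements: (1) real-time Weibo posts for a search keyword, and (2) real-time Weibo posts under related topics (both obtained by analyzing the API endpoints used by the mobile site)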

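1. Real-time posts under related topics

Pattern: first fetch the topic list, then follow each topic to its real-time feed and iterate over the posts there.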

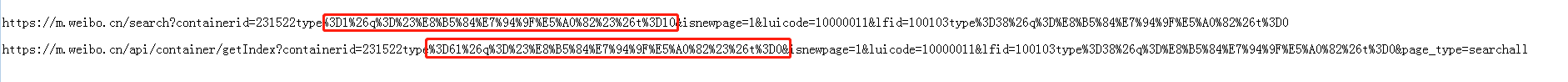

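(Note that the links in the topic list are not real-time links; each one has to be converted into a real-time link before it is traversed. The conversion follows a pattern around the `3D` markers in the containerid: a `6` is added right after `type%3D`, and the trailing `3D10` becomes `3D0`.)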
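The rewrite rule can be isolated in a small helper. This is only a minimal sketch: the function name `to_realtime_containerid` and the sample `scheme` value are illustrative assumptions and do not come from a real API response. Only the `231522type%3D…3D10` pattern is taken from the script in this post.

```python
import re

# Pattern used in this post: capture whatever lies between
# "231522type%3D" and the first following "3D10".
_SCHEME_RE = re.compile(r"231522type%3D(.*?)3D10", re.S)


def to_realtime_containerid(scheme):
    """Return the real-time containerid for a topic scheme, or None if it does not match."""
    match = _SCHEME_RE.search(scheme)
    if match is None:
        return None
    # Add a "6" after "type%3D" and turn the trailing "3D10" into "3D0".
    return "231522type%3D6" + match.group(1) + "3D0"


# Hypothetical scheme value, made up for illustration only.
sample = (
    "https://m.weibo.cn/p/index?"
    "containerid=231522type%3D1%26q%3D%23demo%23%26t%3D10&extparam=x"
)
print(to_realtime_containerid(sample))
```

Returning `None` for a non-matching scheme lets the caller skip that topic instead of failing on an empty `re.findall` result.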

Result:

Code:

import csv
import re

import requests

# Request headers copied from the mobile site (m.weibo.cn).
HEADERS = {
    "Accept": "application/json, text/plain, */*",
    "MWeibo-Pwa": "1",
    "Referer": "https://m.weibo.cn/search?containerid=100103type%3D1%26q%3D%E8%B5%84%E7%94%9F%E5%A0%82",
    "User-Agent": "Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.92 Safari/537.36",
    "X-Requested-With": "XMLHttpRequest",
}


def download(url):
    """Fetch a URL and return the decoded JSON body."""
    response = requests.get(url, headers=HEADERS, timeout=10)
    return response.json()


# Real-time posts per topic
topic_lists = []
topic = download("https://m.weibo.cn/api/container/getIndex?containerid=100103type%3D38%26q%3D%E8%B5%84%E7%94%9F%E5%A0%82%26t%3D0&page_type=searchall")

# Look at no more than the first 10 topics in the list.
for card in topic["data"]["cards"][0]["card_group"][:10]:
    topic_desc2 = card["desc2"]
    topic_title_sub = card["title_sub"]
    topic_scheme = card["scheme"]
    # Capture the part of the containerid between "231522type%3D" and "3D10".
    matches = re.findall("231522type%3D(.*?)3D10", topic_scheme, re.S)
    if not matches:
        continue
    typeid = matches[0]
    for k in range(1, 5):
        # Real-time URL: "6" added after "type%3D", trailing "3D10" replaced by "3D0".
        new_url = "https://m.weibo.cn/api/container/getIndex?containerid=231522type%3D6" + typeid + "3D0&isnewpage=1&luicode=10000011&lfid=100103type%3D38%26q%3D%E8%B5%84%E7%94%9F%E5%A0%82%26t%3D0&page_type=searchall&page={}".format(k)
        print(new_url)
        try:
            topic_detail = download(new_url)  # request each page only once
            for item in topic_detail["data"]["cards"][0]["card_group"]:
                mblog = item["mblog"]
                topic_lists.append([
                    topic_title_sub,
                    topic_desc2,
                    mblog["user"]["screen_name"],
                    mblog["source"],
                    mblog["text"],
                ])
        except (requests.RequestException, ValueError, KeyError, IndexError, TypeError):
            # Skip pages that fail to load or lack the expected structure.
            continue

print(topic_lists)

with open("weibo222.csv", "w", encoding="utf-8", newline="") as f:
    writer = csv.writer(f, dialect="excel")
    writer.writerow(["Topic", "Topic stats", "User", "Source", "Content"])
    writer.writerows(topic_lists)

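2. Real-time posts for a search keyword

This one is simpler, but the code has to allow for responses of different sizes, because different requests may return a different number of items.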
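One way to cope with varying item counts is to iterate over whatever `card_group` contains instead of assuming a fixed length. The following is a minimal sketch, assuming the response layout used in this post (`data` → `cards[0]` → `card_group` → `mblog`). The function name `extract_posts` and the `fake` payload are made up for illustration.

```python
def extract_posts(payload):
    """Collect [user, source, text] rows from one response, whatever its item count."""
    rows = []
    cards = payload.get("data", {}).get("cards", [])
    if not cards:
        return rows
    for card in cards[0].get("card_group", []):
        mblog = card.get("mblog")
        if not mblog:
            continue  # skip entries that do not carry a post
        rows.append([mblog["user"]["screen_name"], mblog["source"], mblog["text"]])
    return rows


# Hand-made payload for illustration; not a real API response.
fake = {
    "data": {
        "cards": [
            {
                "card_group": [
                    {"mblog": {"text": "hello", "source": "iPhone",
                               "user": {"screen_name": "alice"}}},
                    {"card_type": 42},  # an entry without "mblog"
                ]
            }
        ]
    }
}
print(extract_posts(fake))
```

Because the loop follows the actual list, a page with 9 entries and a page with 10 entries are handled by the same code path.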

Result:

Code:

import csv

import requests

# Request headers copied from the mobile site (m.weibo.cn).
HEADERS = {
    "Accept": "application/json, text/plain, */*",
    "MWeibo-Pwa": "1",
    "Referer": "https://m.weibo.cn/search?containerid=100103type%3D1%26q%3D%E8%B5%84%E7%94%9F%E5%A0%82",
    "User-Agent": "Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.92 Safari/537.36",
    "X-Requested-With": "XMLHttpRequest",
}


def download(url):
    """Fetch a URL and return the decoded JSON body."""
    response = requests.get(url, headers=HEADERS, timeout=10)
    return response.json()


# Real-time posts for the search keyword
wb_lists = []

for i in range(1, 10):
    url = "https://m.weibo.cn/api/container/getIndex?containerid=100103type%3D61%26q%3D%E8%B5%84%E7%94%9F%E5%A0%82%26t%3D0&page_type=searchall&page={}".format(i)
    try:
        wb_content = download(url)
        # A page may hold 9 or 10 entries, so iterate over whatever came back.
        for item in wb_content["data"]["cards"][0]["card_group"]:
            mblog = item["mblog"]
            wb_lists.append([mblog["user"]["screen_name"], mblog["source"], mblog["text"]])
    except (requests.RequestException, ValueError, KeyError, IndexError, TypeError):
        # Skip pages that fail to load or lack the expected structure.
        continue

with open("weibo111.csv", "w", encoding="utf-8", newline="") as f:
    writer = csv.writer(f, dialect="excel")
    writer.writerow(["User", "Source", "Content"])
    writer.writerows(wb_lists)

print(wb_lists)
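Both scripts hard-code the percent-encoded keyword 资生堂 in their URLs. As a hedged sketch of how the same URLs could be built for any keyword, the helper below percent-encodes the whole containerid with `urllib.parse.quote`. The name `search_url` and its parameters are assumptions introduced here; the `100103type=…&q=…&t=0` layout is simply what the URLs in this post decode to.

```python
from urllib.parse import quote

API = "https://m.weibo.cn/api/container/getIndex"


def search_url(keyword, search_type, page=None):
    """Build a search URL; search_type is 38 (topic list) or 61 (real-time posts) in this post."""
    # safe="" makes quote() escape "=" and "&" as well, matching the URLs above.
    containerid = quote("100103type={}&q={}&t=0".format(search_type, keyword), safe="")
    url = "{}?containerid={}&page_type=searchall".format(API, containerid)
    if page is not None:
        url += "&page={}".format(page)
    return url


print(search_url("资生堂", 38))
print(search_url("资生堂", 61, page=1))
```

For the keyword used in this post, the two printed URLs are identical to the hard-coded topic-list URL and to page 1 of the real-time search URL.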