Copyright notice: this is an original article by the blogger and may not be reproduced without the blogger's permission. https://blog.csdn.net/u012219371/article/details/85233683

The site to crawl is:

Creating a project

scrapy startproject tutorial

This creates a tutorial directory with the following contents:

tutorial/
    scrapy.cfg            # deploy configuration file
    tutorial/             # project's Python module, you'll import your code from here
        __init__.py
        items.py          # project items definition file
        middlewares.py    # project middlewares file
        pipelines.py      # project pipelines file
        settings.py       # project settings file
        spiders/          # a directory where you'll later put your spiders
            __init__.py

Writing the first spider

A custom spider must subclass scrapy.Spider. Create a file named quotes_spider.py in the tutorial/tutorial/spiders directory with the following contents:

import scrapy


class QuotesSpider(scrapy.Spider):
    name = "quotes"

    def start_requests(self):
        urls = [
            'http://quotes.toscrape.com/page/1/',
            'http://quotes.toscrape.com/page/2/',
        ]
        for url in urls:
            yield scrapy.Request(url=url, callback=self.parse)

    def parse(self, response):
        page = response.url.split("/")[-2]
        filename = 'quotes-%s.html' % page
        with open(filename, 'wb') as f:
            f.write(response.body)
        self.log('Saved file %s' % filename)

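Notes on the code:

name: the spider's name. It must be unique within the project; two spiders cannot share a name.

start_requests(): issues the initial requests. It must return an iterable of Request objects (a list, or a generator as here). The callback argument of Request registers the method that will handle the response.

parse(): Scrapy's default callback for handling responses. The response argument is an instance of TextResponse. This method is also where new URLs are discovered and turned into new Requests.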
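
The file name in parse() comes from splitting the URL on "/". The sketch below isolates that step in plain Python so it can be tried without running a crawl; the helper name filename_for is made up for this illustration and is not part of Scrapy.

```python
def filename_for(url):
    """Build the output file name the same way parse() does above."""
    # 'http://quotes.toscrape.com/page/1/'.split('/') gives
    # ['http:', '', 'quotes.toscrape.com', 'page', '1', ''],
    # so the second-to-last element is the page number.
    page = url.split("/")[-2]
    return 'quotes-%s.html' % page


name_1 = filename_for('http://quotes.toscrape.com/page/1/')
name_2 = filename_for('http://quotes.toscrape.com/page/2/')
```

Note that this relies on the trailing slash: without it, the second-to-last element would be 'page' rather than the number.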

Running the spider

Go to the project's root directory and run:

scrapy crawl quotes

When the run finishes you will find two files: quotes-1.html and quotes-2.html.

A shorter alternative to start_requests

import scrapy


class QuotesSpider(scrapy.Spider):
    name = "quotes"
    start_urls = [
        'http://quotes.toscrape.com/page/1/',
        'http://quotes.toscrape.com/page/2/',
    ]

    def parse(self, response):
        page = response.url.split("/")[-2]
        filename = 'quotes-%s.html' % page
        with open(filename, 'wb') as f:
            f.write(response.body)

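Just list the URLs to visit in start_urls; there is no need to define start_requests yourself. The default start_requests builds the requests from start_urls and uses parse as their callback.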
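
To make the equivalence concrete, here is a toy model in plain Python. It is only a sketch of the idea, not Scrapy's real implementation: ToySpider and ToyQuotesSpider are hypothetical classes, and a "request" is reduced to a (url, callback) pair.

```python
class ToySpider:
    """Toy stand-in for scrapy.Spider (an assumption for illustration)."""
    start_urls = []

    def start_requests(self):
        # Default behaviour being modelled: one request per start URL,
        # with parse registered as the callback.
        for url in self.start_urls:
            yield (url, self.parse)

    def parse(self, response):
        raise NotImplementedError


class ToyQuotesSpider(ToySpider):
    start_urls = [
        'http://quotes.toscrape.com/page/1/',
        'http://quotes.toscrape.com/page/2/',
    ]

    def parse(self, response):
        return 'parsed %s' % response


requests = list(ToyQuotesSpider().start_requests())
urls = [url for url, callback in requests]
```

The subclass only declares start_urls and parse; the inherited start_requests does the rest, which mirrors the shorter spider above.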

Extracting data

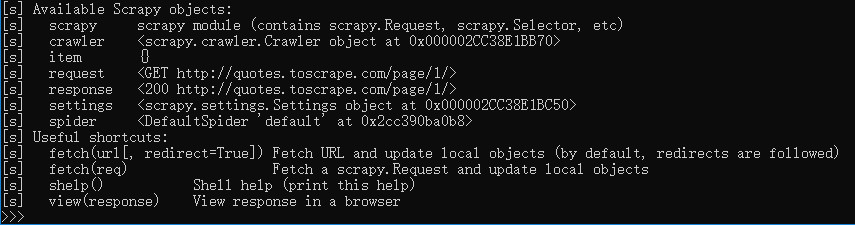

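We use the Scrapy shell to demonstrate. Enter the following command:

# Put the URL in quotes; on Windows, use double quotes

scrapy shell 'http://quotes.toscrape.com/page/1/'

or

scrapy shell "http://quotes.toscrape.com/page/1/"

This drops you into the Scrapy shell, as shown in the screenshot.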

Extracting data with CSS selectors

>>> response.css('title')  # returns a SelectorList, i.e. a list of Selector objects
[<Selector xpath='descendant-or-self::title' data='<title>Quotes to Scrape</title>'>]
>>> response.css('title::text').extract()  # extract only the text inside title
['Quotes to Scrape']
>>> response.css('title').extract()
['<title>Quotes to Scrape</title>']
>>> response.css('title::text').extract_first()  # avoids IndexError; returns None when nothing matches
'Quotes to Scrape'
>>> response.css('title::text')[0].extract()
'Quotes to Scrape'
>>> response.css('title::text').re(r'Quotes.*')  # extract with a regular expression
['Quotes to Scrape']
>>> response.css('title::text').re(r'Q\w+')
['Quotes']
>>> response.css('title::text').re(r'(\w+) to (\w+)')
['Quotes', 'Scrape']
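
The three .re() results above can be reproduced with Python's own re module. The sketch below is an approximation of what the selector does with the pattern, not its actual code; re_like_selector is a hypothetical helper, and the only assumption is that capture groups are flattened into one list of strings, as the last shell output suggests.

```python
import re


def re_like_selector(pattern, text):
    """Approximate SelectorList.re() on a single string."""
    flat = []
    for match in re.findall(pattern, text):
        if isinstance(match, tuple):
            # Several capture groups: findall returns tuples, flatten them.
            flat.extend(match)
        else:
            # No groups, or one group: findall returns a plain string.
            flat.append(match)
    return flat


title = 'Quotes to Scrape'
whole = re_like_selector(r'Quotes.*', title)
word = re_like_selector(r'Q\w+', title)
groups = re_like_selector(r'(\w+) to (\w+)', title)
```

Without capture groups the whole match is returned; with groups, only the captured parts are.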

Extracting data with XPath

>>> response.xpath('//title')
[<Selector xpath='//title' data='<title>Quotes to Scrape</title>'>]
>>> response.xpath('//title/text()').extract_first()
'Quotes to Scrape'

Extracting data in a spider

import scrapy


class QuotesSpider(scrapy.Spider):
    name = "quotes"
    start_urls = [
        'http://quotes.toscrape.com/page/1/',
        'http://quotes.toscrape.com/page/2/',
    ]

    def parse(self, response):
        for quote in response.css('div.quote'):
            yield {
                'text': quote.css('span.text::text').extract_first(),
                'author': quote.css('small.author::text').extract_first(),
                'tags': quote.css('div.tags a.tag::text').extract(),
            }
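
The pattern in parse() is: select each quote block, then run the field selectors relative to that block. The sketch below shows the same pattern offline using only the standard library, with xml.etree.ElementTree standing in for Scrapy's selectors. The SAMPLE markup and its contents are invented for this illustration and only loosely imitate the page structure implied by the selectors above.

```python
import xml.etree.ElementTree as ET

# Invented, well-formed sample markup (not copied from the real site).
SAMPLE = """
<html><body>
  <div class="quote">
    <span class="text">Quote one</span>
    <span>by <small class="author">Author A</small></span>
    <div class="tags"><a class="tag" href="/tag/x/">x</a><a class="tag" href="/tag/y/">y</a></div>
  </div>
  <div class="quote">
    <span class="text">Quote two</span>
    <span>by <small class="author">Author B</small></span>
    <div class="tags"></div>
  </div>
</body></html>
"""


def parse_quotes(markup):
    root = ET.fromstring(markup.strip())
    # Outer loop: one element per quote block.
    for quote in root.iterfind(".//div[@class='quote']"):
        # Inner lookups are relative to the current quote, not the page.
        yield {
            'text': quote.findtext("span[@class='text']"),
            'author': quote.findtext(".//small[@class='author']"),
            'tags': [a.text for a in quote.iterfind("div[@class='tags']/a[@class='tag']")],
        }


items = list(parse_quotes(SAMPLE))
```

Each yielded dict corresponds to one scraped item; a quote without tags simply gets an empty list.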

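Storing the scraped data

scrapy crawl quotes -o quotes.json  # saves as JSON; running it again appends to the file instead of overwriting it, which leaves broken JSON

scrapy crawl quotes -o quotes.jl  # saves as JSON Lines, one record per line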
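
The difference between the two formats when a file is appended to can be checked with the json module alone. This is a small sketch with made-up records, simulating two runs whose output ends up in the same file.

```python
import json

# Made-up records standing in for scraped items.
items = [{"author": "Author A"}, {"author": "Author B"}]

# JSON: each run writes one complete array; two runs give two arrays in a row.
one_run_json = json.dumps(items)
appended_json = one_run_json + "\n" + one_run_json
try:
    json.loads(appended_json)
    json_still_valid = True
except json.JSONDecodeError:
    json_still_valid = False

# JSON Lines: each record is its own line, so appended output still parses.
one_run_jl = "".join(json.dumps(item) + "\n" for item in items)
appended_jl = one_run_jl + one_run_jl
records = [json.loads(line) for line in appended_jl.splitlines()]
```

Because every line of a .jl file is an independent JSON document, appending never invalidates what was already written.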

Following the next page

import scrapy


class QuotesSpider(scrapy.Spider):
    name = "quotes"
    start_urls = [
        'http://quotes.toscrape.com/page/1/',
    ]

    def parse(self, response):
        for quote in response.css('div.quote'):
            yield {
                'text': quote.css('span.text::text').extract_first(),
                'author': quote.css('small.author::text').extract_first(),
                'tags': quote.css('div.tags a.tag::text').extract(),
            }

        next_page = response.css('li.next a::attr(href)').extract_first()
        if next_page is not None:
            next_page = response.urljoin(next_page)  # urljoin builds an absolute URL
            yield scrapy.Request(next_page, callback=self.parse)
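
The control flow here is a loop: emit the items on the current page, resolve the "next" link against the current URL, and repeat until there is no link. The sketch below models that with the standard library's urljoin, which behaves roughly like response.urljoin with the response URL as the base. FAKE_SITE and its contents are invented stand-ins for the real pages.

```python
from urllib.parse import urljoin

# Invented stand-in for the site:
# page URL -> (quotes on that page, href of the "next" link or None)
FAKE_SITE = {
    'http://quotes.toscrape.com/page/1/': (['quote 1', 'quote 2'], '/page/2/'),
    'http://quotes.toscrape.com/page/2/': (['quote 3'], '/page/3/'),
    'http://quotes.toscrape.com/page/3/': (['quote 4'], None),
}


def crawl(start_url):
    url = start_url
    while url is not None:
        quotes, next_href = FAKE_SITE[url]
        yield from quotes
        # Relative href + current page URL -> absolute URL of the next page.
        url = urljoin(url, next_href) if next_href is not None else None


collected = list(crawl('http://quotes.toscrape.com/page/1/'))
resolved = urljoin('http://quotes.toscrape.com/page/1/', '/page/2/')
```

In the real spider the loop is implicit: each yielded Request schedules another call to parse, and the crawl ends when a page has no li.next link.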

A shortcut for creating Requests

import scrapy


class QuotesSpider(scrapy.Spider):
    name = "quotes"
    start_urls = [
        'http://quotes.toscrape.com/page/1/',
    ]

    def parse(self, response):
        for quote in response.css('div.quote'):
            yield {
                'text': quote.css('span.text::text').extract_first(),
                'author': quote.css('span small::text').extract_first(),
                'tags': quote.css('div.tags a.tag::text').extract(),
            }

        next_page = response.css('li.next a::attr(href)').extract_first()
        if next_page is not None:
            yield response.follow(next_page, callback=self.parse)  # follow accepts relative URLs

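response.follow also accepts a selector instead of a string, for example:

for href in response.css('li.next a::attr(href)'):
    yield response.follow(href, callback=self.parse)

# for <a> elements, follow uses their href attribute automatically
for a in response.css('li.next a'):
    yield response.follow(a, callback=self.parse)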

Scraping author information

import scrapy


class AuthorSpider(scrapy.Spider):
    name = 'author'
    start_urls = ['http://quotes.toscrape.com/']

    def parse(self, response):
        # follow links to author pages
        for href in response.css('.author + a::attr(href)'):
            yield response.follow(href, self.parse_author)

        # follow pagination links
        for href in response.css('li.next a::attr(href)'):
            yield response.follow(href, self.parse)

    def parse_author(self, response):
        def extract_with_css(query):
            # default='' keeps .strip() from failing when nothing matches
            return response.css(query).extract_first(default='').strip()

        yield {
            'name': extract_with_css('h3.author-title::text'),
            'birthdate': extract_with_css('.author-born-date::text'),
            'bio': extract_with_css('.author-description::text'),
        }
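
One thing to watch in a helper like extract_with_css: extract_first() returns None when the selector matches nothing, and calling .strip() on None raises AttributeError. The sketch below shows a None-safe variant of the same idea on plain lists of strings; first_stripped is a hypothetical name, and the lists stand in for what .extract() would return.

```python
def first_stripped(values, default=''):
    """Return the first string with surrounding whitespace removed.

    Falls back to default when values is empty, instead of failing.
    """
    for value in values:
        return value.strip()
    return default


found = first_stripped(['\n    Author A\n  ', 'ignored'])
missing = first_stripped([])
custom = first_stripped([], default='unknown')
```

Whether an empty string or some other default is the right fallback depends on how the scraped items are used later.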

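Passing arguments to a spider

Use the -a option, for example:

scrapy crawl quotes -o quotes-humor.json -a tag=humor

The arguments are passed to the spider's __init__ method and by default become attributes of the spider.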

import scrapy


class QuotesSpider(scrapy.Spider):
    name = "quotes"

    def start_requests(self):
        url = 'http://quotes.toscrape.com/'
        tag = getattr(self, 'tag', None)
        if tag is not None:
            url = url + 'tag/' + tag
        yield scrapy.Request(url, self.parse)

    def parse(self, response):
        for quote in response.css('div.quote'):
            yield {
                'text': quote.css('span.text::text').extract_first(),
                'author': quote.css('small.author::text').extract_first(),
            }

        next_page = response.css('li.next a::attr(href)').extract_first()
        if next_page is not None:
            yield response.follow(next_page, self.parse)
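
The getattr(self, 'tag', None) call is what makes the argument optional. The sketch below models this in plain Python, assuming each -a name=value pair reaches __init__ as a keyword argument and is stored as an attribute; ToySpider and its start_url method are hypothetical and exist only for this illustration.

```python
class ToySpider:
    """Toy stand-in for a spider that receives -a arguments."""

    def __init__(self, **kwargs):
        # Assumption: keyword arguments simply become instance attributes.
        self.__dict__.update(kwargs)

    def start_url(self):
        url = 'http://quotes.toscrape.com/'
        # getattr with a default avoids AttributeError when -a tag=... is omitted.
        tag = getattr(self, 'tag', None)
        if tag is not None:
            url = url + 'tag/' + tag
        return url


with_tag = ToySpider(tag='humor').start_url()
without_tag = ToySpider().start_url()
```

Values given on the command line arrive as strings, so a numeric argument would need an explicit conversion such as int() before use.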