使用通用自编码器的时候,首先将输入encoder压缩为一个小的 form,然后将其decoder转换成输出的一个估计。如果目标是简单的重现输入效果很好,但是若想生成新的对象就不太可行了,因为其实我们根本不知道这个网络所生成的编码具体是什么。虽然我们可以通过结果去对比不同的对象,但是要理解它内部的工作方式几乎是不可能的,甚至有时候可能连输入应该是什么样子的都不知道。

解决方法是用相反的方法使用变分自编码器(Variational Autoencoder,VAE),即不去关注隐含向量所服从的分布,只需要告诉网络我们想让这个分布转换为什么样子就行了。VAE对隐层的输出增加了长约束,而在对隐层的采样过程也能起到和一般 dropout 效果类似的正则化作用。而至于它的名字变分推理(Variational Inference,VI)的思想是最大化与数据点x相关联的变分下界来训练,即寻找一个容易处理的分布 q(z),使得 q(z) 与目标分布p(z|x) 尽量接近以便用q(z) 来代替 p(z|x),分布之间的‘接近’度量采用 Kullback–Leibler divergence(KL 散度)。

下面用一个简单的github上的代码实现(原地址:https://github.com/FelixMohr/Deep-learning-with-Python/blob/master/VAE.ipynb)来理解:

这段代码目的是生成和MNIST中不一样的手写图像,而首先要做的先对MNIST中的数据进行编码,然后定义一个正态分布便于解码时得出我们期望生成的结果,即在解码时从该分布中随机采样得到“伪造”的图像。

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data')#28*28的单色道图像数据

tf.reset_default_graph()

batch_size = 64

X_in = tf.placeholder(dtype=tf.float32, shape=[None, 28, 28], name='X')

Y = tf.placeholder(dtype=tf.float32, shape=[None, 28, 28], name='Y')

Y_flat = tf.reshape(Y, shape=[-1, 28 * 28])#用于计算损失函数

keep_prob = tf.placeholder(dtype=tf.float32, shape=(), name='keep_prob')#dropout比率

dec_in_channels = 1

n_latent = 8

reshaped_dim = [-1, 7, 7, dec_in_channels]

inputs_decoder = 49 * dec_in_channels // 2

def lrelu(x, alpha=0.3):#自定义Leaky ReLU函数使效果更好

return tf.maximum(x, tf.multiply(x, alpha))

#编码

def encoder(X_in, keep_prob):

activation = lrelu

with tf.variable_scope("encoder", reuse=None):

X = tf.reshape(X_in, shape=[-1, 28, 28, 1])

x = tf.layers.conv2d(X, filters=64, kernel_size=4, strides=2, padding='same', activation=activation)

x = tf.nn.dropout(x, keep_prob)

x = tf.layers.conv2d(x, filters=64, kernel_size=4, strides=2, padding='same', activation=activation)

x = tf.nn.dropout(x, keep_prob)

x = tf.layers.conv2d(x, filters=64, kernel_size=4, strides=1, padding='same', activation=activation)

x = tf.nn.dropout(x, keep_prob)

x = tf.contrib.layers.flatten(x)

mn = tf.layers.dense(x, units=n_latent)#means

sd = 0.5 * tf.layers.dense(x, units=n_latent)#standard deviations

epsilon = tf.random_normal(tf.stack([tf.shape(x)[0], n_latent])) #从正态分布中采样

z = mn + tf.multiply(epsilon, tf.exp(sd))

return z, mn, sd

#解码

def decoder(sampled_z, keep_prob):

with tf.variable_scope("decoder", reuse=None):

x = tf.layers.dense(sampled_z, units=inputs_decoder, activation=lrelu)

x = tf.layers.dense(x, units=inputs_decoder * 2 + 1, activation=lrelu)

x = tf.reshape(x, reshaped_dim)

x = tf.layers.conv2d_transpose(x, filters=64, kernel_size=4, strides=2, padding='same', activation=tf.nn.relu)

x = tf.nn.dropout(x, keep_prob)

x = tf.layers.conv2d_transpose(x, filters=64, kernel_size=4, strides=1, padding='same', activation=tf.nn.relu)

x = tf.nn.dropout(x, keep_prob)

x = tf.layers.conv2d_transpose(x, filters=64, kernel_size=4, strides=1, padding='same', activation=tf.nn.relu)

x = tf.contrib.layers.flatten(x)

x = tf.layers.dense(x, units=28*28, activation=tf.nn.sigmoid)

img = tf.reshape(x, shape=[-1, 28, 28])

return img

#结合

sampled, mn, sd = encoder(X_in, keep_prob)

dec = decoder(sampled, keep_prob)

#损失函数

unreshaped = tf.reshape(dec, [-1, 28*28])

img_loss = tf.reduce_sum(tf.squared_difference(unreshaped, Y_flat), 1)

latent_loss = -0.5 * tf.reduce_sum(1.0 + 2.0 * sd - tf.square(mn) - tf.exp(2.0 * sd), 1)

loss = tf.reduce_mean(img_loss + latent_loss)

optimizer = tf.train.AdamOptimizer(0.0005).minimize(loss)

sess = tf.Session()

sess.run(tf.global_variables_initializer())

for i in range(30000):#开始训练

batch = [np.reshape(b, [28, 28]) for b in mnist.train.next_batch(batch_size=batch_size)[0]]

sess.run(optimizer, feed_dict = {X_in: batch, Y: batch, keep_prob: 0.8})

if not i % 200:

ls, d, i_ls, d_ls, mu, sigm = sess.run([loss, dec, img_loss, latent_loss, mn, sd], feed_dict = {X_in: batch, Y: batch, keep_prob: 1.0})

plt.imshow(np.reshape(batch[0], [28, 28]), cmap='gray')

plt.show()

plt.imshow(d[0], cmap='gray')

plt.show()

print(i, ls, np.mean(i_ls), np.mean(d_ls))

#生成新的字符

randoms = [np.random.normal(0, 1, n_latent) for _ in range(10)]

imgs = sess.run(dec, feed_dict = {sampled: randoms, keep_prob: 1.0})

imgs = [np.reshape(imgs[i], [28, 28]) for i in range(len(imgs))]

for img in imgs:

plt.figure(figsize=(1,1))

plt.axis('off')

plt.imshow(img, cmap='gray')

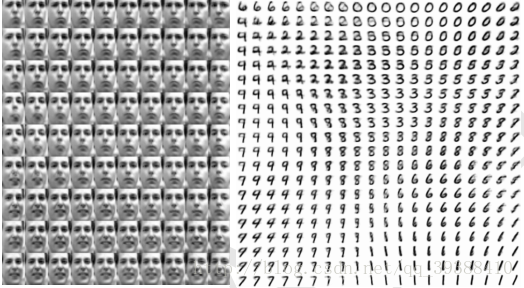

另外它还有非常好的特性是同时训练参数编码器与生成器网络的组合迫使模型学习编码器可以捕获可预测的坐标系,这使其成为一个优秀的流形学习算法,如下图展示的是由变分自动编码器学到的低维流形的例子,可以看出它发现了两个存在于面部图像的因素:旋转角和情绪表达。

而VAE的主要缺点是从在图像上训练的变分自动编码器中采样的样本往往有些模糊,而且原因尚不清楚,其中一种可能性是因为最小化KL散度而由于模糊性是最大似然的固有效应产生的。

主要参考:

bengio deep learning