Series index

- See section 4.1 of the blog post 《Paper》: the 【Keras】Classification in CIFAR-10 series

Learning resources

- GitHub: BIGBALLON/cifar-10-cnn

- Zhihu column: 写给妹子的深度学习教程

- Inception-resnet-v2 Caffe code: https://github.com/soeaver/caffe-model/blob/master/cls/inception/deploy_inception-resnet-v2.prototxt

- Inception-resnet-v1 / v2 Keras code: https://github.com/titu1994/Inception-v4

- GoogleNet network walkthrough and Keras implementation: https://blog.csdn.net/qq_25491201/article/details/78367696

References

Code download

- Link: https://pan.baidu.com/s/19rIn_o1C-z8othmNSD-Ykg

Extraction code: 0qyz

Hardware

- TITAN XP


1 Theoretical background

See 【Inception-v4】《Inception-v4, Inception-ResNet and the Impact of Residual Connections on Learning》

2 Inception-resnet-v1 code implementation

[D] Why aren’t Inception-style networks successful on CIFAR-10/100?

Image source: https://www.zhihu.com/question/50370954

2.1 Inception_resnet_v1

1) Import libraries and set hyperparameters

import os

os.environ["CUDA_DEVICE_ORDER"]="PCI_BUS_ID"

os.environ["CUDA_VISIBLE_DEVICES"]="3"

import keras

import numpy as np

import math

from keras.datasets import cifar10

from keras.layers import Conv2D, MaxPooling2D, AveragePooling2D, ZeroPadding2D, GlobalAveragePooling2D

from keras.layers import Flatten, Dense, Dropout, BatchNormalization, Activation, Convolution2D, Lambda, Add, add

from keras.models import Model

from keras.layers import Input, concatenate

from keras import optimizers, regularizers

from keras.preprocessing.image import ImageDataGenerator

from keras.initializers import he_normal

from keras.callbacks import LearningRateScheduler, TensorBoard, ModelCheckpoint

num_classes = 10

batch_size = 64 # 64 or 32 or other

epochs = 300

iterations = 782

USE_BN=True

DROPOUT=0.2 # keep 80%

CONCAT_AXIS=3

weight_decay=1e-4

DATA_FORMAT='channels_last' # Theano:'channels_first' Tensorflow:'channels_last'

log_filepath = './inception_resnet_v1'
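The value iterations = 782 corresponds to one full pass over the training set at batch_size = 64. A minimal sketch of that arithmetic, assuming the standard 50,000-image CIFAR-10 training split (the helper name steps_per_epoch is only illustrative and is not part of the original code):

```python
import math

# Assumption of this sketch: the standard CIFAR-10 training split has 50,000 images.
CIFAR10_TRAIN_SAMPLES = 50000


def steps_per_epoch(num_samples, batch_size):
    """Batches needed to see every sample once; the last batch may be partial."""
    return math.ceil(num_samples / batch_size)


if __name__ == "__main__":
    for bs in (32, 64, 128):
        print("batch_size", bs, "->", steps_per_epoch(CIFAR10_TRAIN_SAMPLES, bs), "iterations")
```

If batch_size is changed to 32 or another value, iterations should be recomputed the same way so that one epoch still covers the whole training set.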

2) Preprocess the data and set the learning schedule

def color_preprocessing(x_train,x_test):
    x_train = x_train.astype('float32')
    x_test = x_test.astype('float32')
    mean = [125.307, 122.95, 113.865]
    std = [62.9932, 62.0887, 66.7048]
    for i in range(3):
        x_train[:,:,:,i] = (x_train[:,:,:,i] - mean[i]) / std[i]
        x_test[:,:,:,i] = (x_test[:,:,:,i] - mean[i]) / std[i]
    return x_train, x_test

def scheduler(epoch):
    if epoch < 100:
        return 0.01
    if epoch < 200:
        return 0.001
    return 0.0001

# load data

(x_train, y_train), (x_test, y_test) = cifar10.load_data()

y_train = keras.utils.to_categorical(y_train, num_classes)

y_test = keras.utils.to_categorical(y_test, num_classes)

x_train, x_test = color_preprocessing(x_train, x_test)
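The scheduler above is a piecewise-constant schedule with boundaries at epochs 100 and 200. As a sketch, the same mapping can be written as a table lookup, which makes the boundaries easier to change; the function name step_schedule and its default arguments are illustrative only:

```python
from bisect import bisect_right


def step_schedule(epoch, boundaries=(100, 200), rates=(0.01, 0.001, 0.0001)):
    """Return the learning rate for a 0-based epoch index.

    boundaries[i] is the first epoch that uses rates[i + 1].
    """
    return rates[bisect_right(boundaries, epoch)]


if __name__ == "__main__":
    for epoch in (0, 99, 100, 199, 200, 299):
        print(epoch, step_schedule(epoch))
```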

3) Define the network structure

- Factorization into smaller convolutions

e.g. 3×3 → 3×1 + 1×3

def conv_block(x, nb_filter, nb_row, nb_col, border_mode='same', subsample=(1,1), bias=False):
    # Conv -> BN -> ReLU, written with Keras 2 argument names
    x = Conv2D(nb_filter, kernel_size=(nb_row, nb_col), strides=subsample, padding=border_mode, use_bias=bias,
               kernel_initializer="he_normal", data_format='channels_last',
               kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = BatchNormalization(momentum=0.9, epsilon=1e-5)(x)
    x = Activation('relu')(x)
    return x
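To see why factorizing a k×k convolution into 1×k followed by k×1 is attractive, it helps to count weights. The sketch below ignores biases and assumes the same channel width before and after each convolution (128 here, matching the inception_B branches used later); the helper names are illustrative:

```python
def conv_weights(kernel_h, kernel_w, c_in, c_out):
    """Number of kernel weights in one convolution (bias not counted)."""
    return kernel_h * kernel_w * c_in * c_out


def full_vs_factorized(k, channels):
    """Weights of a k x k convolution vs. a 1 x k followed by a k x 1 convolution."""
    full = conv_weights(k, k, channels, channels)
    factorized = conv_weights(1, k, channels, channels) + conv_weights(k, 1, channels, channels)
    return full, factorized


if __name__ == "__main__":
    for k in (3, 7):
        full, fact = full_vs_factorized(k, 128)
        print("%dx%d: full=%d factorized=%d" % (k, k, full, fact))
```

The factorized pair needs 2k instead of k² weights per input/output channel pair, so the saving grows with the kernel size.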

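- stem (right side of Figure 6 in the paper)

In the figure above, V marks 'valid' padding and unmarked layers use 'same'. Because the dataset resolution differs from ImageNet, the code below uses 'same' everywhere: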

def create_stem(img_input,concat_axis):
    x = Conv2D(32,kernel_size=(3,3),strides=(2,2),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(img_input)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(32,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(64,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = MaxPooling2D(pool_size=(3,3),strides=2,padding='same',data_format=DATA_FORMAT)(x)
    x = Conv2D(80,kernel_size=(1,1),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(192,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(256,kernel_size=(3,3),strides=(2,2),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    return x
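With padding='same', each layer's output size is ceil(input / stride), so the stem's effect on a 32×32 CIFAR-10 image can be traced by hand. The sketch below does that in plain Python; STEM_STRIDES is read off the stem code above (conv, conv, conv, max-pool, conv, conv, conv) and the function names are illustrative:

```python
import math

# Strides of the seven stem layers, in order: three convs, max-pool, three convs.
STEM_STRIDES = [2, 1, 1, 2, 1, 1, 2]


def same_padding_output(size, stride):
    """Spatial output size of a conv/pool layer that uses 'same' padding."""
    return math.ceil(size / stride)


def trace_sizes(input_size, strides):
    """Return the feature-map size after each layer."""
    sizes = []
    size = input_size
    for stride in strides:
        size = same_padding_output(size, stride)
        sizes.append(size)
    return sizes


if __name__ == "__main__":
    print(trace_sizes(32, STEM_STRIDES))
```

The three stride-2 layers each halve the resolution, so the inception modules that follow start from a 4×4 feature map.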

- inception module A (left side of Figure 4 in the paper)

def inception_A(x,params,concat_axis,padding='same',data_format=DATA_FORMAT,scale_residual=False,use_bias=True,kernel_initializer="he_normal",bias_initializer='zeros',kernel_regularizer=None,bias_regularizer=None,activity_regularizer=None,kernel_constraint=None,bias_constraint=None,weight_decay=weight_decay):

(branch1,branch2,branch3,branch4)=params

if weight_decay:

kernel_regularizer=regularizers.l2(weight_decay)

bias_regularizer=regularizers.l2(weight_decay)

else:

kernel_regularizer=None

bias_regularizer=None

#1x1

pathway1=Conv2D(filters=branch1[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway1 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway1))

#1x1->3x3

pathway2=Conv2D(filters=branch2[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

pathway2=Conv2D(filters=branch2[1],kernel_size=(3,3),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway2)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

#1x1->3x3->3x3

pathway3=Conv2D(filters=branch3[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway3 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway3))

pathway3=Conv2D(filters=branch3[1],kernel_size=(3,3),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway3)

pathway3 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway3))

pathway3=Conv2D(filters=branch3[2],kernel_size=(3,3),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway3)

pathway3 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway3))

# concatenate

pathway_123 = concatenate([pathway1,pathway2,pathway3],axis=concat_axis)

pathway_123 = Conv2D(branch4[0],kernel_size=(1,1),strides=(1,1),padding='same',activation = 'linear',

kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(pathway_123)

if scale_residual:

x = Lambda(lambda p: p * 0.1)(x)

return add([x,pathway_123])

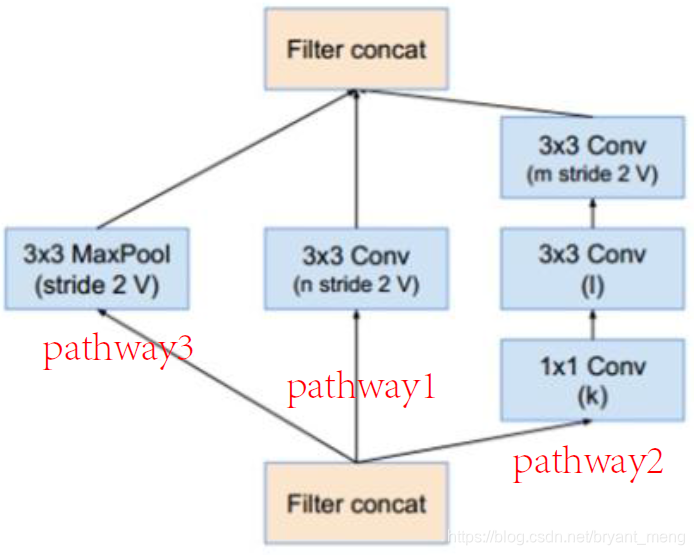

- reduction A (same as in inception v4)

Image source: https://www.zhihu.com/question/50370954

def reduce_A(x,params,concat_axis,padding='same',data_format=DATA_FORMAT,use_bias=True,kernel_initializer="he_normal",bias_initializer='zeros',kernel_regularizer=None,bias_regularizer=None,activity_regularizer=None,kernel_constraint=None,bias_constraint=None,weight_decay=weight_decay):

(branch1,branch2)=params

if weight_decay:

kernel_regularizer=regularizers.l2(weight_decay)

bias_regularizer=regularizers.l2(weight_decay)

else:

kernel_regularizer=None

bias_regularizer=None

#3x3, stride 2

pathway1 = Conv2D(filters=branch1[0],kernel_size=(3,3),strides=2,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway1 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway1))

#1x1->3x3->3x3

pathway2 = Conv2D(filters=branch2[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

pathway2 = Conv2D(filters=branch2[1],kernel_size=(3,3),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway2)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

pathway2 = Conv2D(filters=branch2[2],kernel_size=(3,3),strides=2,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway2)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

# max pooling

pathway3 = MaxPooling2D(pool_size=(3,3),strides=2,padding=padding,data_format=DATA_FORMAT)(x)

return concatenate([pathway1,pathway2,pathway3],axis=concat_axis)

- inception module B (middle of Figure 4 in the paper)

def inception_B(x,params,concat_axis,padding='same',data_format=DATA_FORMAT,scale_residual=False,use_bias=True,kernel_initializer="he_normal",bias_initializer='zeros',kernel_regularizer=None,bias_regularizer=None,activity_regularizer=None,kernel_constraint=None,bias_constraint=None,weight_decay=weight_decay):

(branch1,branch2,branch3)=params

if weight_decay:

kernel_regularizer=regularizers.l2(weight_decay)

bias_regularizer=regularizers.l2(weight_decay)

else:

kernel_regularizer=None

bias_regularizer=None

#1x1

pathway1=Conv2D(filters=branch1[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway1 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway1))

#1x1->1x7->7x1

pathway2=Conv2D(filters=branch2[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

pathway2 = conv_block(pathway2,branch2[1],1,7)

pathway2 = conv_block(pathway2,branch2[2],7,1)

# concatenate

pathway_12 = concatenate([pathway1,pathway2],axis=concat_axis)

pathway_12 = Conv2D(branch3[0],kernel_size=(1,1),strides=(1,1),padding='same',activation = 'linear',

kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(pathway_12)

if scale_residual:

x = Lambda(lambda p: p * 0.1)(x)

return add([x,pathway_12])

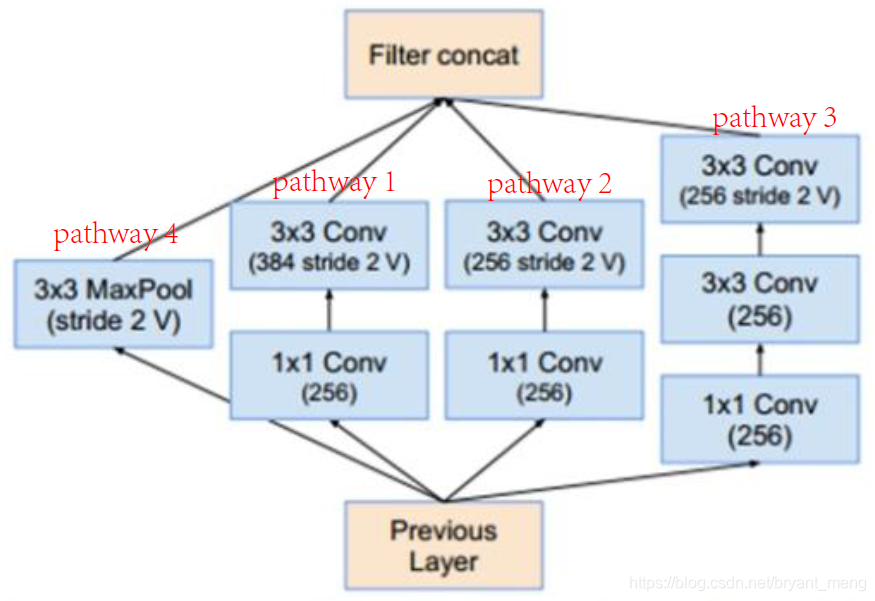

- reduction B

def reduce_B(x,params,concat_axis,padding='same',data_format=DATA_FORMAT,use_bias=True,kernel_initializer="he_normal",bias_initializer='zeros',kernel_regularizer=None,bias_regularizer=None,activity_regularizer=None,kernel_constraint=None,bias_constraint=None,weight_decay=weight_decay):

(branch1,branch2,branch3)=params

if weight_decay:

kernel_regularizer=regularizers.l2(weight_decay)

bias_regularizer=regularizers.l2(weight_decay)

else:

kernel_regularizer=None

bias_regularizer=None

#1x1->3x3

pathway1 = Conv2D(filters=branch1[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway1 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway1))

pathway1 = Conv2D(filters=branch1[1],kernel_size=(3,3),strides=2,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway1)

pathway1 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway1))

#1x1->3x3

pathway2 = Conv2D(filters=branch2[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

pathway2 = Conv2D(filters=branch2[1],kernel_size=(3,3),strides=2,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway2)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

#1x1->3x3->3x3

pathway3 = Conv2D(filters=branch3[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway3 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway3))

pathway3 = Conv2D(filters=branch3[1],kernel_size=(3,3),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway3)

pathway3 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway3))

pathway3 = Conv2D(filters=branch3[2],kernel_size=(3,3),strides=2,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(pathway3)

pathway3 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway3))

# max pooling

pathway4 = MaxPooling2D(pool_size=(3,3),strides=2,padding=padding,data_format=DATA_FORMAT)(x)

return concatenate([pathway1,pathway2,pathway3,pathway4],axis=concat_axis)

- inception module C (right side of Figure 4 in the paper)

def inception_C(x,params,concat_axis,padding='same',data_format=DATA_FORMAT,scale_residual=False,use_bias=True,kernel_initializer="he_normal",bias_initializer='zeros',kernel_regularizer=None,bias_regularizer=None,activity_regularizer=None,kernel_constraint=None,bias_constraint=None,weight_decay=weight_decay):

(branch1,branch2,branch3)=params

if weight_decay:

kernel_regularizer=regularizers.l2(weight_decay)

bias_regularizer=regularizers.l2(weight_decay)

else:

kernel_regularizer=None

bias_regularizer=None

#1x1

pathway1=Conv2D(filters=branch1[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway1 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway1))

#1x1->1x3->3x1

pathway2=Conv2D(filters=branch2[0],kernel_size=(1,1),strides=1,padding=padding,data_format=data_format,use_bias=use_bias,kernel_initializer=kernel_initializer,bias_initializer=bias_initializer,kernel_regularizer=kernel_regularizer,bias_regularizer=bias_regularizer,activity_regularizer=activity_regularizer,kernel_constraint=kernel_constraint,bias_constraint=bias_constraint)(x)

pathway2 = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(pathway2))

pathway2 = conv_block(pathway2,branch2[1],1,3)

pathway2 = conv_block(pathway2,branch2[2],3,1)

# concatenate

pathway_12 = concatenate([pathway1,pathway2],axis=concat_axis)

pathway_12 = Conv2D(branch3[0],kernel_size=(1,1),strides=(1,1),padding='same',activation = 'linear',

kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(pathway_12)

if scale_residual:

x = Lambda(lambda p: p * 0.1)(x)

return add([x,pathway_12])

4) Build the network

Assemble the network from the modules designed in 3)

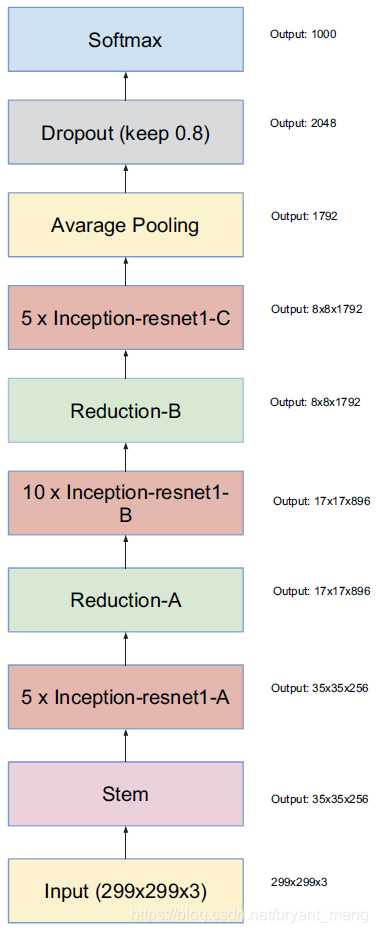

Overall structure (left side of Figure 6 in the paper)

def create_model(img_input):
    # stem
    x = create_stem(img_input,concat_axis=CONCAT_AXIS)
    # 5 x inception_A
    for _ in range(5):
        x=inception_A(x,params=[(32,),(32,32),(32,32,32),(256,)],concat_axis=CONCAT_AXIS)
    # reduce A
    x=reduce_A(x,params=[(384,),(192,224,256)],concat_axis=CONCAT_AXIS) # 384 + 256 + 256 = 896
    # 10 x inception_B
    for _ in range(10):
        x=inception_B(x,params=[(128,),(128,128,128),(896,)],concat_axis=CONCAT_AXIS)
    # reduce B
    x=reduce_B(x,params=[(256,384),(256,256),(256,256,256)],concat_axis=CONCAT_AXIS) # 384 + 256 + 256 + 896 = 1792
    # 5 x inception_C
    for _ in range(5):
        x=inception_C(x,params=[(192,),(192,192,192),(1792,)],concat_axis=CONCAT_AXIS)
    x=GlobalAveragePooling2D()(x)
    x=Dropout(DROPOUT)(x)
    x = Dense(num_classes,activation='softmax',kernel_initializer="he_normal",
              kernel_regularizer=regularizers.l2(weight_decay))(x)
    return x
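Because every residual module ends with add([x, ...]), the 1×1 projection at the end of each module must output exactly as many channels as the module's input. A quick bookkeeping sketch of the channel counts, using the params passed in create_model (the helper names are illustrative; each reduction module concatenates the last convolution of every branch with the max-pooled input):

```python
STEM_OUT = 256  # channels produced by the last stem convolution


def reduce_a_channels(c_in, branch1=(384,), branch2=(192, 224, 256)):
    """Concatenation of: 3x3 stride-2 conv, 1x1->3x3->3x3 branch, max-pooled input."""
    return branch1[-1] + branch2[-1] + c_in


def reduce_b_channels(c_in, branch1=(256, 384), branch2=(256, 256), branch3=(256, 256, 256)):
    """Concatenation of three conv branches plus the max-pooled input."""
    return branch1[-1] + branch2[-1] + branch3[-1] + c_in


after_reduce_a = reduce_a_channels(STEM_OUT)
after_reduce_b = reduce_b_channels(after_reduce_a)

if __name__ == "__main__":
    print("inception_A projection must be", STEM_OUT)
    print("inception_B projection must be", after_reduce_a)
    print("inception_C projection must be", after_reduce_b)
```

These are the values that appear as the last tuple in the params of inception_A, inception_B and inception_C.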

5) Build the model

img_input=Input(shape=(32,32,3))

output = create_model(img_input)

model=Model(img_input,output)

model.summary()

The parameter counts are:

Total params: 21,146,522

Trainable params: 21,119,418

Non-trainable params: 27,104

This is close to the parameter count of inception_v3:

Total params: 23,397,866

Trainable params: 23,359,978

Non-trainable params: 37,888
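The non-trainable parameters of the Inception-resnet-v1 model come from BatchNormalization: each BN layer keeps a moving mean and a moving variance per channel, and neither is updated by gradient descent. As a sanity check, the sketch below sums the BN channel widths read off the module code above and reproduces the 27,104 figure; the dictionaries are a hand transcription and the function name is illustrative:

```python
# Channel width of every BatchNormalization layer in one instance of each module.
# The final 1x1 linear projection of each residual module has no BatchNormalization.
BN_CHANNELS = {
    "stem": [32, 32, 64, 80, 192, 256],
    "inception_A": [32, 32, 32, 32, 32, 32],
    "reduce_A": [384, 192, 224, 256],
    "inception_B": [128, 128, 128, 128],
    "reduce_B": [256, 384, 256, 256, 256, 256, 256],
    "inception_C": [192, 192, 192, 192],
}

# How many times each module is instantiated in create_model.
REPEATS = {
    "stem": 1,
    "inception_A": 5,
    "reduce_A": 1,
    "inception_B": 10,
    "reduce_B": 1,
    "inception_C": 5,
}


def non_trainable_params(bn_channels, repeats):
    """Two non-trainable values (moving mean, moving variance) per BN channel."""
    return 2 * sum(repeats[name] * sum(widths) for name, widths in bn_channels.items())


if __name__ == "__main__":
    print(non_trainable_params(BN_CHANNELS, REPEATS))
```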

6) Start training

# set optimizer

sgd = optimizers.SGD(lr=.1, momentum=0.9, nesterov=True)

model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

# set callback

tb_cb = TensorBoard(log_dir=log_filepath, histogram_freq=0)

change_lr = LearningRateScheduler(scheduler)

cbks = [change_lr,tb_cb]

# set data augmentation

datagen = ImageDataGenerator(horizontal_flip=True,

width_shift_range=0.125,

height_shift_range=0.125,

fill_mode='constant',cval=0.)

datagen.fit(x_train)

# start training

model.fit_generator(datagen.flow(x_train, y_train,batch_size=batch_size),

steps_per_epoch=iterations,

epochs=epochs,

callbacks=cbks,

validation_data=(x_test, y_test))

model.save('inception_resnet_v1.h5')

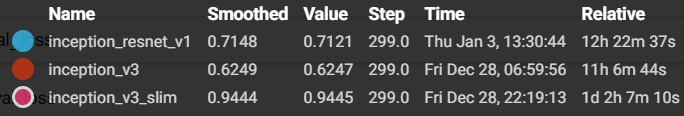

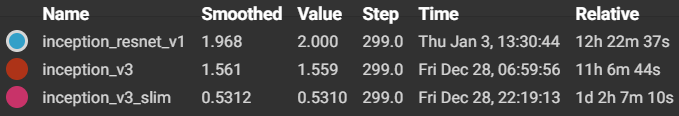

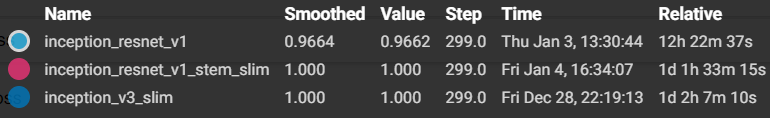

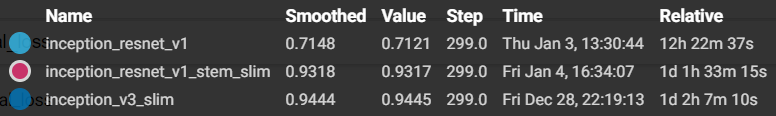

7) Result analysis

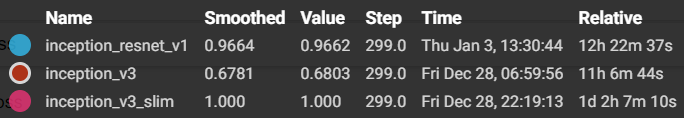

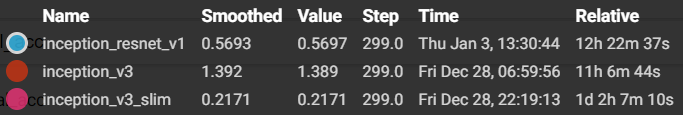

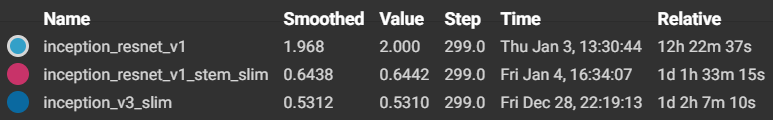

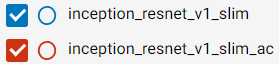

training accuracy and training loss

- accuracy

- loss

Results on the training set are decent, better than inception-v3

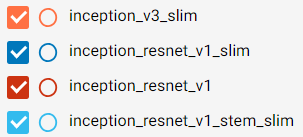

test accuracy and test loss

- accuracy

- loss

…………

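The result is unsurprising: the stem reduces the feature-map resolution to 4 (32 / 2^3 = 4), so the 1x7 and 7x1 convolutions in the later inception modules end up mostly processing padding. The test result is poor, very poor.

Don't panic, it is not a big problem. With the hard-won experience from inception_v1 to inception_v4, everything is under control.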
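To put a number on "mostly processing padding": a 1×7 convolution with 'same' padding reads 7 positions per output, and any position that falls outside the feature map is zero padding. The sketch below counts those taps along one row. It assumes the widths follow from the code above: the inception_B modules sit after reduce_A, so they see a width of 2 with the original stem, and a width of 16 if the stem did not downsample at all. The function name is illustrative:

```python
from fractions import Fraction


def padding_fraction(width, kernel):
    """Fraction of kernel taps that land on zero padding along one row.

    Assumes an odd kernel size, stride 1 and 'same' padding.
    """
    half = kernel // 2
    total = width * kernel
    valid = sum(
        1
        for center in range(width)
        for tap in range(center - half, center + half + 1)
        if 0 <= tap < width
    )
    return Fraction(total - valid, total)


if __name__ == "__main__":
    for width in (2, 4, 16, 32):
        print("width", width, "->", padding_fraction(width, 7))
```

At width 2, five of every seven taps read padding; at width 16 the share drops to roughly one in nine, which is the motivation for keeping the resolution higher on CIFAR-10.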

2.2 Inception_resnet_v1_stem_slim

Modify the stem function def create_stem(……) from section 2.1 as follows:

Change every stride-2 convolution and pooling to stride 1 to preserve the feature-map resolution, so that the 1×7 and 7×1 convolutions have real content to work on

def create_stem(img_input,concat_axis):
    x = Conv2D(32,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(img_input)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(32,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(64,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = MaxPooling2D(pool_size=(3,3),strides=1,padding='same',data_format=DATA_FORMAT)(x)
    x = Conv2D(80,kernel_size=(1,1),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(192,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    x = Conv2D(256,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(x)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    return x

The rest of the code is the same as inception_resnet_v1. The parameter count is unchanged:

Total params: 21,146,522

Trainable params: 21,119,418

Non-trainable params: 27,104

- Inception_resnet_v1: Total params: 21,146,522

- inception_v3: Total params: 23,397,866

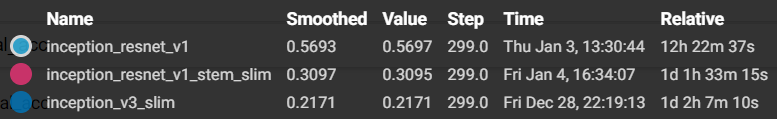

Result analysis:

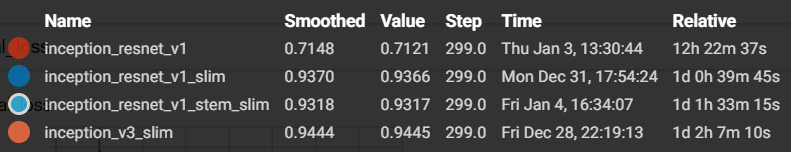

training accuracy and training loss

- accuracy

- loss

test accuracy and test loss

- accuracy

- loss

The result is decent: test accuracy reaches 93%+

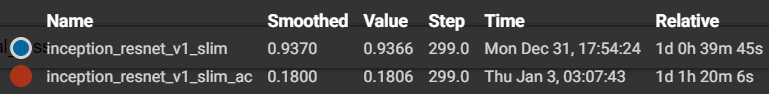

2.3 Inception_resnet_v1_slim

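Replace the stem of Inception_resnet_v1 with a single convolution and leave the inception modules unchanged. The stem reduces the input to 1/8 of its original resolution, which is fine for ImageNet but too aggressive for CIFAR-10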

def create_stem(img_input,concat_axis):
    x = Conv2D(256,kernel_size=(3,3),strides=(1,1),padding='same',
               kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(img_input)
    x = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(x))
    return x

The rest of the code is the same as inception_resnet_v1

The parameter count drops slightly:

Total params: 20,537,194

Trainable params: 20,510,890

Non-trainable params: 26,304

- Inception_resnet_v1: Total params: 21,146,522

- inception_resnet_v1_stem_slim: Total params: 21,146,522

- inception_v3: Total params: 23,397,866
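The drop from 21,146,522 to 20,537,194 parameters can be checked by hand, since only the stem changed. The sketch below counts convolution parameters (with bias, as Conv2D uses by default) plus four BatchNormalization parameters per channel for both stems; the layer lists are transcribed from the two create_stem versions and the helper names are illustrative:

```python
def conv_params(kernel_h, kernel_w, c_in, c_out, use_bias=True):
    """Weights plus optional biases of one convolution layer."""
    return kernel_h * kernel_w * c_in * c_out + (c_out if use_bias else 0)


def bn_params(channels):
    """gamma, beta, moving mean and moving variance: four values per channel."""
    return 4 * channels


def stem_params(layers, c_in=3):
    """Sum Conv2D + BatchNormalization parameters for (kernel_size, c_out) layers."""
    total = 0
    for kernel, c_out in layers:
        total += conv_params(kernel, kernel, c_in, c_out) + bn_params(c_out)
        c_in = c_out
    return total


# (kernel size, output channels) of each conv in the six-conv stem; pooling adds no parameters.
FULL_STEM = [(3, 32), (3, 32), (3, 64), (1, 80), (3, 192), (3, 256)]
# The single-convolution stem of this section.
SLIM_STEM = [(3, 256)]

if __name__ == "__main__":
    full, slim = stem_params(FULL_STEM), stem_params(SLIM_STEM)
    print("full stem:", full, "slim stem:", slim, "difference:", full - slim)
```

The difference equals the gap between the two Total params figures reported above.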

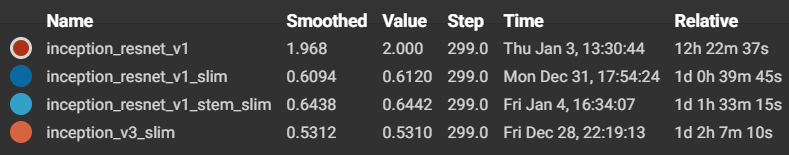

Result analysis:

test accuracy and test loss

- accuracy

- loss

A slight improvement, but still short of 94%

2.4 Inception_resnet_v1_slim_0.8

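I had made a mistake in the code earlier, so I was not confident about this dropout parameter and ran a dropout experiment: change DROPOUT=0.2 # keep 80% in the initial hyperparameter settings to 0.8. In tensorflow the value usually set is keep_prob = 0.8

Result analysis:

test accuracy and test loss

Haha, this is hindsight. At first inception_resnet_v1_slim performed very badly, which is why I ran this experiment. Later I found the bug in the code, and after fixing it the gap became obvious. So it is confirmed: dropout in keras is the fraction of units to drop.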
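The two conventions differ only in which side of the coin they name: Keras' Dropout(rate) takes the fraction to drop, while the keep_prob convention takes the fraction to keep, so rate = 1 - keep_prob. A plain-Python sketch of inverted dropout under the "rate" convention (the helper names are illustrative, and this mimics the behaviour rather than reproducing any library's implementation):

```python
import random


def rate_from_keep_prob(keep_prob):
    """Convert a keep probability into a drop rate."""
    return 1.0 - keep_prob


def dropout(values, rate, rng):
    """Zero each value with probability `rate`; scale survivors by 1 / (1 - rate)."""
    keep_prob = 1.0 - rate
    return [v / keep_prob if rng.random() >= rate else 0.0 for v in values]


if __name__ == "__main__":
    rng = random.Random(0)
    out = dropout([1.0] * 10000, rate=0.2, rng=rng)
    print("fraction dropped:", out.count(0.0) / len(out))
```

Passing 0.8 where a rate is expected therefore drops about 80% of the activations, which is a much stronger regularizer than intended.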

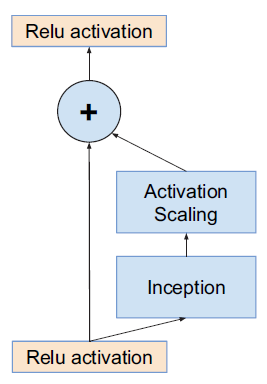

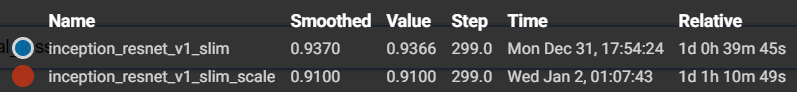

2.5 Inception_resnet_v1_slim_scale

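This is Figure 7 in the paper; the earlier inception_resnet_v1 code already contains this structure

Namely this part of the code:

if scale_residual:
    x = Lambda(lambda p: p * 0.1)(x)

Now enable it by passing scale_residual=True; the modified code is as follows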

def create_model(img_input):
    # stem
    x = create_stem(img_input,concat_axis=CONCAT_AXIS)
    # 5 x inception_A
    for _ in range(5):
        x=inception_A(x,params=[(32,),(32,32),(32,32,32),(256,)],concat_axis=CONCAT_AXIS,scale_residual=True)
    # reduce A
    x=reduce_A(x,params=[(384,),(192,224,256)],concat_axis=CONCAT_AXIS) # 384 + 256 + 256 = 896
    # 10 x inception_B
    for _ in range(10):
        x=inception_B(x,params=[(128,),(128,128,128),(896,)],concat_axis=CONCAT_AXIS,scale_residual=True)
    # reduce B
    x=reduce_B(x,params=[(256,384),(256,256),(256,256,256)],concat_axis=CONCAT_AXIS) # 384 + 256 + 256 + 896 = 1792
    # 5 x inception_C
    for _ in range(5):
        x=inception_C(x,params=[(192,),(192,192,192),(1792,)],concat_axis=CONCAT_AXIS,scale_residual=True)
    x=GlobalAveragePooling2D()(x)
    x=Dropout(DROPOUT)(x)
    x = Dense(num_classes,activation='softmax',kernel_initializer="he_normal",
              kernel_regularizer=regularizers.l2(weight_decay))(x)
    return x

The parameter count is unchanged:

Total params: 20,537,194

Trainable params: 20,510,890

Non-trainable params: 26,304

Result analysis:

test accuracy and test loss

- accuracy

Oops, not as good as before! The hyper-parameters may need tuning
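One thing worth checking before tuning hyper-parameters: the Inception-v4 paper describes scaling the residual branch (by a factor of about 0.1 to 0.3) before adding it to the shortcut, whereas the snippet in this section multiplies the block input x, i.e. the shortcut itself. The plain-Python sketch below contrasts the two on lists of numbers; both function names are illustrative, and which variant suits CIFAR-10 better is not something this sketch can tell:

```python
def add_scaled_residual(shortcut, residual, scale=0.1):
    """Paper-style: shortcut + scale * residual."""
    return [s + scale * r for s, r in zip(shortcut, residual)]


def add_scaled_shortcut(shortcut, residual, scale=0.1):
    """What `x = Lambda(lambda p: p * 0.1)(x); add([x, branch])` computes."""
    return [scale * s + r for s, r in zip(shortcut, residual)]


if __name__ == "__main__":
    shortcut = [1.0, 2.0]
    residual = [10.0, 20.0]
    print("paper-style :", add_scaled_residual(shortcut, residual))
    print("code above  :", add_scaled_shortcut(shortcut, residual))
```

In the inception_A/B/C functions the paper-style variant would mean applying the Lambda to pathway_123 or pathway_12 instead of x.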

2.6 Inception_resnet_v1_slim_ac

Add an auxiliary classifier; for details see 【Keras-Inception v1】CIFAR-10

The auxiliary classifier is inserted between inception module B and reduction B. The code changes as follows:

def create_model(img_input):
    # stem
    x = create_stem(img_input,concat_axis=CONCAT_AXIS)
    # 5 x inception_A
    for _ in range(5):
        x=inception_A(x,params=[(32,),(32,32),(32,32,32),(256,)],concat_axis=CONCAT_AXIS)
    # reduce A
    x=reduce_A(x,params=[(384,),(192,224,256)],concat_axis=CONCAT_AXIS) # 384 + 256 + 256 = 896
    # 10 x inception_B
    for _ in range(10):
        x=inception_B(x,params=[(128,),(128,128,128),(896,)],concat_axis=CONCAT_AXIS)
    # ac
    aux_out = AveragePooling2D(pool_size=(5,5),strides=3,padding='same',data_format=DATA_FORMAT)(x)
    aux_out = Conv2D(128,kernel_size=(1,1),strides=(1,1),padding='same',
                     kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(aux_out)
    aux_out = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(aux_out))
    aux_out = Conv2D(768,kernel_size=(5,5),strides=(1,1),padding='same',
                     kernel_initializer="he_normal",kernel_regularizer=regularizers.l2(weight_decay))(aux_out)
    aux_out = Activation('relu')(BatchNormalization(momentum=0.9, epsilon=1e-5)(aux_out))
    aux_out = Flatten()(aux_out)
    aux_out = Dense(num_classes,activation='softmax',kernel_initializer="he_normal",
                    kernel_regularizer=regularizers.l2(weight_decay))(aux_out)
    # reduce B
    x=reduce_B(x,params=[(256,384),(256,256),(256,256,256)],concat_axis=CONCAT_AXIS) # 384 + 256 + 256 + 896 = 1792
    # 5 x inception_C
    for _ in range(5):
        x=inception_C(x,params=[(192,),(192,192,192),(1792,)],concat_axis=CONCAT_AXIS)
    x=GlobalAveragePooling2D()(x)
    x=Dropout(DROPOUT)(x)
    x = Dense(num_classes,activation='softmax',kernel_initializer="he_normal",
              kernel_regularizer=regularizers.l2(weight_decay))(x)
    output = Add()([x,aux_out])
    return output

The parameter counts are:

Total params: 23,390,452

Trainable params: 23,362,356

Non-trainable params: 28,096

- Inception_resnet_v1: Total params: 21,146,522

- inception_resnet_v1_stem_slim: Total params: 21,146,522

- inception_resnet_v1_slim_scale: Total params: 20,537,194

- inception_resnet_v1_slim: Total params: 20,537,194

- inception_v3: Total params: 23,397,866
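The extra 2,853,258 parameters relative to inception_resnet_v1_slim all sit in the auxiliary head. The sketch below recounts them, assuming the single-convolution stem from section 2.3 (so the auxiliary head receives a 16×16×896 tensor) and Keras' default use_bias=True; the helper names are illustrative:

```python
import math


def conv_params(kernel_h, kernel_w, c_in, c_out, use_bias=True):
    """Weights plus optional biases of one convolution layer."""
    return kernel_h * kernel_w * c_in * c_out + (c_out if use_bias else 0)


def aux_head_params(c_in=896, spatial=16, num_classes=10):
    """Parameters of: avg-pool -> 1x1 conv(128)+BN -> 5x5 conv(768)+BN -> flatten -> dense."""
    pooled = math.ceil(spatial / 3)  # AveragePooling2D with stride 3 and 'same' padding
    total = conv_params(1, 1, c_in, 128) + 4 * 128      # 1x1 conv + BatchNormalization
    total += conv_params(5, 5, 128, 768) + 4 * 768      # 5x5 conv + BatchNormalization
    total += pooled * pooled * 768 * num_classes + num_classes  # dense on the flattened map
    return total


if __name__ == "__main__":
    print(aux_head_params())
```

Most of the cost is the 5×5 convolution from 128 to 768 channels, followed by the dense layer on the flattened 6×6×768 map.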

Result analysis:

test accuracy and test loss

- accuracy

Sorry to bother you; it looks like it collapsed outright!
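One possible factor: the model above merges the two heads with Add(), so the network output is the sum of two softmax vectors and each row sums to 2 rather than 1. A more common arrangement, used in the GoogLeNet paper with a weight of 0.3 on the auxiliary loss, keeps the heads separate and adds their losses; in Keras that would usually be a two-output Model compiled with loss_weights. The plain-Python sketch below only illustrates the arithmetic; the probabilities are made-up values and the helper names are hypothetical:

```python
import math


def cross_entropy(one_hot, probs):
    """Categorical cross-entropy for a single sample."""
    return -sum(t * math.log(p) for t, p in zip(one_hot, probs) if t > 0)


def combined_loss(one_hot, main_probs, aux_probs, aux_weight=0.3):
    """Main loss plus a down-weighted auxiliary loss (0.3 is the GoogLeNet choice)."""
    return cross_entropy(one_hot, main_probs) + aux_weight * cross_entropy(one_hot, aux_probs)


if __name__ == "__main__":
    target = [0.0, 1.0, 0.0]
    main = [0.1, 0.8, 0.1]   # made-up softmax output of the main head
    aux = [0.3, 0.4, 0.3]    # made-up softmax output of the auxiliary head
    summed = [m + a for m, a in zip(main, aux)]
    print("sum of Add()-merged outputs:", sum(summed))
    print("combined loss:", combined_loss(target, main, aux))
```

With separate outputs, only the main head would be used for prediction at test time, and the auxiliary head acts purely as a training-time regularizer.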

3 Summary

Model size