Copyright notice: This is an original article by the blog author and may not be reproduced without the author's permission. https://blog.csdn.net/someby/article/details/87929196

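Contents

This article shows how to create a Maven project in IntelliJ IDEA and how to write a few commonly used utility classes.

Creating the Maven project

Writing the utility classes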

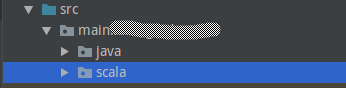

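Create two packages: one named java, which holds the Java source files, and one named scala, which holds the Spark code.

Under the java package, create a util package to hold the commonly used utility classes.

Next, create the utility classes inside the util package.

The full code follows.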

pom.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>XMU</groupId>
        <artifactId>BigDataGraduationProject</artifactId>
        <version>1.0-SNAPSHOT</version>

        <properties>
            <spark.version>2.3.1</spark.version>
            <scala.version>2.11</scala.version>
        </properties>

        <dependencies>
            <!-- Spark -->
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_${scala.version}</artifactId>
                <version>${spark.version}</version>
                <!-- Exclude the transitive snappy-java; an explicit version is declared below to fix the compression error -->
                <exclusions>
                    <exclusion>
                        <groupId>org.xerial.snappy</groupId>
                        <artifactId>snappy-java</artifactId>
                    </exclusion>
                </exclusions>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-streaming_${scala.version}</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-sql_${scala.version}</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-hive_${scala.version}</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-mllib_${scala.version}</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-examples_${scala.version}</artifactId>
                <version>${spark.version}</version>
            </dependency>

            <!-- Add the snappy-java jar to fix
                 "java.lang.UnsatisfiedLinkError: no snappyjava in java.library.path" -->
            <dependency>
                <groupId>org.xerial.snappy</groupId>
                <artifactId>snappy-java</artifactId>
                <version>1.1.2</version>
            </dependency>

            <!-- Hadoop -->
            <!-- Hadoop HDFS jar -->
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-hdfs</artifactId>
                <version>3.1.1</version>
            </dependency>
            <!-- Hadoop client jar -->
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-client</artifactId>
                <version>3.1.1</version>
            </dependency>
            <!-- Hadoop common jar -->
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-common</artifactId>
                <version>3.1.1</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-mapreduce</artifactId>
                <version>3.1.1</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-kafka</artifactId>
                <version>3.1.1</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-streaming</artifactId>
                <version>3.1.1</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-client-modules</artifactId>
                <version>3.1.1</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-resourceestimator</artifactId>
                <version>3.1.1</version>
            </dependency>

            <!-- Jackson -->
            <dependency>
                <groupId>com.fasterxml.jackson.core</groupId>
                <artifactId>jackson-core</artifactId>
                <version>2.9.8</version>
            </dependency>
            <dependency>
                <groupId>com.fasterxml.jackson.core</groupId>
                <artifactId>jackson-databind</artifactId>
                <version>2.9.8</version>
            </dependency>
            <dependency>
                <groupId>com.fasterxml.jackson.core</groupId>
                <artifactId>jackson-annotations</artifactId>
                <version>2.9.8</version>
            </dependency>

            <!-- com.alibaba:fastjson:1.2.55 -->
            <dependency>
                <groupId>com.alibaba</groupId>
                <artifactId>fastjson</artifactId>
                <version>1.2.55</version>
            </dependency>
        </dependencies>

        <build>
            <plugins>
                <plugin>
                    <groupId>org.scala-tools</groupId>
                    <artifactId>maven-scala-plugin</artifactId>
                    <version>2.15.2</version>
                    <executions>
                        <execution>
                            <goals>
                                <goal>compile</goal>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <version>3.6.0</version>
                    <configuration>
                        <source>1.8</source>
                        <target>1.8</target>
                    </configuration>
                </plugin>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-surefire-plugin</artifactId>
                    <version>2.19</version>
                    <configuration>
                        <skip>true</skip>
                    </configuration>
                </plugin>
            </plugins>
        </build>
    </project>

DateUtils.java

    package main.xxx.java.util;

    import java.text.SimpleDateFormat;
    import java.util.Calendar;
    import java.util.Date;

    /**
     * FileName: DateUtils
     * Author: hadoop
     * Email: [email protected]
     * Date: 19-2-25 7:09 PM
     * Description: date and time utilities
     */
    public class DateUtils {

        // Note: SimpleDateFormat is not thread-safe, so these shared
        // instances should not be used from several threads at once.
        public static final SimpleDateFormat TIME_FORMAT =
                new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
        public static final SimpleDateFormat DATE_FORMAT =
                new SimpleDateFormat("yyyy-MM-dd");

        /**
         * Checks whether one time is before another.
         * @param time1 the first time (yyyy-MM-dd HH:mm:ss)
         * @param time2 the second time (yyyy-MM-dd HH:mm:ss)
         * @return true if time1 is before time2; false otherwise or on a parse error
         */
        public static boolean before(String time1, String time2) {
            try {
                Date dateTime1 = TIME_FORMAT.parse(time1);
                Date dateTime2 = TIME_FORMAT.parse(time2);
                return dateTime1.before(dateTime2);
            } catch (Exception e) {
                e.printStackTrace();
            }
            return false;
        }

        /**
         * Checks whether time1 is after time2.
         * @param time1 the first time (yyyy-MM-dd HH:mm:ss)
         * @param time2 the second time (yyyy-MM-dd HH:mm:ss)
         * @return true if time1 is after time2; false otherwise or on a parse error
         */
        public static boolean after(String time1, String time2) {
            try {
                Date dateTime1 = TIME_FORMAT.parse(time1);
                Date dateTime2 = TIME_FORMAT.parse(time2);
                return dateTime1.after(dateTime2);
            } catch (Exception e) {
                e.printStackTrace();
            }
            return false;
        }

        /**
         * Computes the difference between two times, in seconds.
         * @param time1 time 1
         * @param time2 time 2
         * @return time1 minus time2 in seconds, or 0 on a parse error
         */
        public static int minus(String time1, String time2) {
            try {
                Date dateTime1 = TIME_FORMAT.parse(time1);
                Date dateTime2 = TIME_FORMAT.parse(time2);
                long millisecond = dateTime1.getTime() - dateTime2.getTime();
                // getTime() is in milliseconds; convert to the documented seconds.
                return (int) (millisecond / 1000);
            } catch (Exception e) {
                e.printStackTrace();
            }
            return 0;
        }

        /**
         * Extracts the date and hour from a timestamp.
         * @param datetime the input time (yyyy-MM-dd HH:mm:ss)
         * @return the result in the form yyyy-MM-dd_HH
         */
        public static String getDateHour(String datetime) {
            String date = datetime.split(" ")[0];
            String hourMinuteSecond = datetime.split(" ")[1];
            String hour = hourMinuteSecond.split(":")[0];
            return date + "_" + hour;
        }

        /**
         * Gets today's date (yyyy-MM-dd).
         * @return today's date
         */
        public static String getTodayDate() {
            return DATE_FORMAT.format(new Date());
        }

        /**
         * Gets yesterday's date (yyyy-MM-dd).
         * @return yesterday's date
         */
        public static String getYesterdayDate() {
            Calendar cal = Calendar.getInstance();
            cal.setTime(new Date());
            cal.add(Calendar.DAY_OF_YEAR, -1);
            Date date = cal.getTime();
            return DATE_FORMAT.format(date);
        }

        /**
         * Formats a date (yyyy-MM-dd).
         * @param date the Date object
         * @return the formatted date
         */
        public static String formatDate(Date date) {
            return DATE_FORMAT.format(date);
        }

        /**
         * Formats a time (yyyy-MM-dd HH:mm:ss).
         * @param date the Date object
         * @return the formatted time
         */
        public static String formateTime(Date date) {
            return TIME_FORMAT.format(date);
        }

        /*
        // Scratch test. To enable it, add: import java.text.ParseException;
        // Note that t1 and t2 are epoch seconds, which do not match TIME_FORMAT.
        public static void main(String[] args) {
            String t1 = "1551095261";
            String t2 = "1551095298";
            try {
                Date time1 = TIME_FORMAT.parse(t1);
                Date time2 = TIME_FORMAT.parse(t2);
                System.out.println("Time1: " + t1 + " time1:" + time1);
                System.out.println("Time2: " + t2 + " time2: " + time2);
            } catch (ParseException e) {
                e.printStackTrace();
            }
            boolean beforeResult = before(t2, t1);
            System.out.println("The result of before:" + beforeResult);
        }
        */
    }
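DateUtils keeps its SimpleDateFormat instances in static fields, and SimpleDateFormat is not thread-safe, which can matter once the helpers are called from Spark tasks running in parallel. As a possible alternative, here is a minimal sketch built on java.time, whose DateTimeFormatter is immutable and thread-safe. The class name JavaTimeDateSketch and the method name minusSeconds are hypothetical and not part of the original project.

```java
import java.time.Duration;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

// Hypothetical class for illustration only; not part of the original project.
final class JavaTimeDateSketch {

    // DateTimeFormatter is immutable, so sharing one instance is safe.
    static final DateTimeFormatter TIME_FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    /** Returns time1 minus time2 in whole seconds. */
    static long minusSeconds(String time1, String time2) {
        LocalDateTime t1 = LocalDateTime.parse(time1, TIME_FORMAT);
        LocalDateTime t2 = LocalDateTime.parse(time2, TIME_FORMAT);
        return Duration.between(t2, t1).getSeconds();
    }

    /** Returns true if time1 is strictly before time2. */
    static boolean before(String time1, String time2) {
        return LocalDateTime.parse(time1, TIME_FORMAT)
                .isBefore(LocalDateTime.parse(time2, TIME_FORMAT));
    }

    public static void main(String[] args) {
        // Sample timestamps are made up for the demonstration.
        System.out.println(minusSeconds("2019-02-25 19:10:00", "2019-02-25 19:09:00"));
        System.out.println(before("2019-02-25 19:09:00", "2019-02-25 19:10:00"));
    }
}
```

Unlike the try/catch style used in DateUtils, this sketch lets a malformed input surface as a DateTimeParseException instead of silently returning a default value; which behavior is preferable depends on the caller.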

NumberUtils.java

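    package main.xxx.java.util;

    import java.math.BigDecimal;
    import java.math.RoundingMode;

    /**
     * FileName: NumberUtils
     * Author: hadoop
     * Email: [email protected]
     * Date: 19-2-25 8:11 PM
     * Description: number formatting utilities
     */
    public class NumberUtils {

        /**
         * Rounds a decimal number.
         * @param num the number to round
         * @param scale the number of decimal places to keep (rounded half-up)
         * @return the rounded number
         */
        public static double formatDouble(double num, int scale) {
            // valueOf uses the double's canonical decimal string, avoiding the
            // binary expansion that new BigDecimal(double) would produce.
            BigDecimal bd = BigDecimal.valueOf(num);
            return bd.setScale(scale, RoundingMode.HALF_UP).doubleValue();
        }
    }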
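How the BigDecimal is built from the double matters when rounding half-up. The sketch below compares the two common ways of doing it on one value. The class and method names are hypothetical and exist only for this comparison.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

// Hypothetical class for illustration only; not part of the original project.
final class RoundingSketch {

    // new BigDecimal(double) captures the exact binary value of the double,
    // so 2.675 becomes 2.67499999999999982236431605997495353221893310546875.
    static double viaConstructor(double num, int scale) {
        return new BigDecimal(num).setScale(scale, RoundingMode.HALF_UP).doubleValue();
    }

    // BigDecimal.valueOf(double) goes through Double.toString, so 2.675 stays 2.675.
    static double viaValueOf(double num, int scale) {
        return BigDecimal.valueOf(num).setScale(scale, RoundingMode.HALF_UP).doubleValue();
    }

    public static void main(String[] args) {
        System.out.println(viaConstructor(2.675, 2)); // prints 2.67
        System.out.println(viaValueOf(2.675, 2));     // prints 2.68
    }
}
```

If the intent is "round what the user sees", valueOf is usually the closer match; the constructor form rounds the value the double actually stores.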

ParamUtils.java

    package main.xxx.java.util;

    import com.alibaba.fastjson.JSONArray;
    import com.alibaba.fastjson.JSONObject;

    /**
     * FileName: ParamUtils
     * Author: hadoop
     * Email: [email protected]
     * Date: 19-2-25 8:16 PM
     * Description: parameter utilities
     */
    public class ParamUtils {

        /**
         * Reads the task id from the command-line arguments.
         * @param args the command-line arguments
         * @return the task id, or null if it is missing or not a valid number
         */
        public static Long getTaskIdFromArgs(String[] args) {
            try {
                if (args != null && args.length > 0) {
                    return Long.valueOf(args[0]);
                }
            } catch (Exception e) {
                e.printStackTrace();
            }
            return null;
        }

        /**
         * Extracts a parameter from a JSON object.
         * @param jsonObject the JSON object
         * @param field the field name, whose value is expected to be a JSON array
         * @return the first element of the array, or null if it is absent or empty
         */
        public static String getParm(JSONObject jsonObject, String field) {
            JSONArray jsonArray = jsonObject.getJSONArray(field);
            if (jsonArray != null && jsonArray.size() > 0) {
                return jsonArray.getString(0);
            }
            return null;
        }
    }

StringUtils.java

    package main.xxx.java.util;

    /**
     * FileName: StringUtils
     * Author: hadoop
     * Email: [email protected]
     * Date: 19-2-25 8:40 PM
     * Description: string utilities
     */
    public class StringUtils {

        /**
         * Checks whether a string is empty.
         * @param str the string
         * @return true if the string is null or empty
         */
        public static boolean isEmpty(String str) {
            return str == null || "".equals(str);
        }

        /**
         * Checks whether a string is not empty.
         * @param str the string
         * @return true if the string is neither null nor empty
         */
        public static boolean isNotEmpty(String str) {
            return str != null && !"".equals(str);
        }

        /**
         * Strips one leading and one trailing comma from a string.
         * @param str the string
         * @return the trimmed string
         */
        public static String trimComma(String str) {
            if (str.startsWith(",")) {
                str = str.substring(1);
            }
            if (str.endsWith(",")) {
                str = str.substring(0, str.length() - 1);
            }
            return str;
        }

        /**
         * Pads a number string to two digits with a leading zero.
         * @param str the number string
         * @return the string unchanged if it already has two characters, otherwise "0" + str
         */
        public static String fulfuill(String str) {
            if (str.length() == 2) {
                return str;
            } else {
                return "0" + str;
            }
        }

        /**
         * Extracts a field from a concatenated string such as "k1=v1|k2=v2".
         * @param str the string
         * @param delimiter the delimiter (a regular expression, e.g. "\\|")
         * @param field the field name
         * @return the field value, or null if the field is absent or has no value
         */
        public static String getFieldFromConcatString(String str, String delimiter, String field) {
            String[] fields = str.split(delimiter);
            for (String concatField : fields) {
                String[] nameAndValue = concatField.split("=");
                // Skip entries such as "k=" that would otherwise throw
                // ArrayIndexOutOfBoundsException.
                if (nameAndValue.length == 2 && nameAndValue[0].equals(field)) {
                    return nameAndValue[1];
                }
            }
            return null;
        }

        /**
         * Sets the value of a field in a concatenated string.
         * @param str the string
         * @param delimiter the delimiter (a regular expression, e.g. "\\|")
         * @param field the field name
         * @param newFieldValue the new field value
         * @return the updated string
         */
        public static String setFieldInConcatString(String str, String delimiter,
                                                    String field, String newFieldValue) {
            String[] fields = str.split(delimiter);
            for (int i = 0; i < fields.length; i++) {
                String fieldName = fields[i].split("=")[0];
                if (fieldName.equals(field)) {
                    fields[i] = fieldName + "=" + newFieldValue;
                    break;
                }
            }
            // The fields are always rejoined with "|", regardless of the delimiter passed in.
            StringBuilder buffer = new StringBuilder();
            for (int i = 0; i < fields.length; i++) {
                buffer.append(fields[i]);
                if (i < fields.length - 1) {
                    buffer.append("|");
                }
            }
            return buffer.toString();
        }
    }

ValidUtils.java

    package main.xxx.java.util;

    /**
     * FileName: ValidUtils
     * Author: hadoop
     * Email: [email protected]
     * Date: 19-2-25 9:05 PM
     * Description: validation utilities
     */
    public class ValidUtils {

        /**
         * Checks whether the given data field lies within the given range.
         * @param data the data
         * @param dataField the data field
         * @param parameter the parameters
         * @param startParamField the parameter field holding the range start
         * @param endParamField the parameter field holding the range end
         * @return the check result (true if either bound is not specified)
         */
        public static boolean between(String data, String dataField, String parameter,
                                      String startParamField, String endParamField) {
            String startParamFieldStr = StringUtils.getFieldFromConcatString(
                    parameter, "\\|", startParamField);
            String endParamFieldStr = StringUtils.getFieldFromConcatString(
                    parameter, "\\|", endParamField);
            if (startParamFieldStr == null || endParamFieldStr == null) {
                return true;
            }

            int startParamFieldValue = Integer.parseInt(startParamFieldStr);
            int endParamFieldValue = Integer.parseInt(endParamFieldStr);

            String dataFieldStr = StringUtils.getFieldFromConcatString(
                    data, "\\|", dataField);
            if (dataFieldStr != null) {
                int dataFieldValue = Integer.parseInt(dataFieldStr);
                return dataFieldValue >= startParamFieldValue
                        && dataFieldValue <= endParamFieldValue;
            }
            return false;
        }

        /**
         * Checks whether any value of the given data field matches
         * any value of the parameter field (both comma-separated).
         * @param data the data
         * @param dataField the data field
         * @param parameter the parameters
         * @param paramField the parameter field
         * @return the check result (true if the parameter is not specified)
         */
        public static boolean in(String data, String dataField,
                                 String parameter, String paramField) {
            String paramFieldValue = StringUtils.getFieldFromConcatString(
                    parameter, "\\|", paramField);
            if (paramFieldValue == null) {
                return true;
            }
            String[] paramFieldValueSplited = paramFieldValue.split(",");

            String dataFieldValue = StringUtils.getFieldFromConcatString(
                    data, "\\|", dataField);
            if (dataFieldValue != null) {
                String[] dataFieldValueSplited = dataFieldValue.split(",");
                for (String singleDataFieldValue : dataFieldValueSplited) {
                    for (String singleParamFieldValue : paramFieldValueSplited) {
                        if (singleDataFieldValue.equals(singleParamFieldValue)) {
                            return true;
                        }
                    }
                }
            }
            return false;
        }

        /**
         * Checks whether the given data field equals the value of the parameter field.
         * @param data the data
         * @param dataField the data field
         * @param parameter the parameters
         * @param paramField the parameter field
         * @return the check result (true if the parameter is not specified)
         */
        public static boolean equal(String data, String dataField,
                                    String parameter, String paramField) {
            String paramFieldValue = StringUtils.getFieldFromConcatString(
                    parameter, "\\|", paramField);
            if (paramFieldValue == null) {
                return true;
            }

            String dataFieldValue = StringUtils.getFieldFromConcatString(
                    data, "\\|", dataField);
            if (dataFieldValue != null) {
                return dataFieldValue.equals(paramFieldValue);
            }
            return false;
        }
    }
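The validators all operate on strings of the form key=value|key=value, reading the filter bounds from one string and the value to test from another. To make that data format concrete, here is a minimal self-contained sketch of the range check. It reimplements the lookup inline so it can run on its own; the class name ConcatFieldSketch and the sample fields (age, city, startAge, endAge) are assumptions made up for the illustration.

```java
// Hypothetical class for illustration only; not part of the original project.
final class ConcatFieldSketch {

    /** Looks up a field in a string such as "k1=v1|k2=v2"; returns null if absent. */
    static String getField(String str, String delimiterRegex, String field) {
        for (String pair : str.split(delimiterRegex)) {
            String[] nameAndValue = pair.split("=");
            if (nameAndValue.length == 2 && nameAndValue[0].equals(field)) {
                return nameAndValue[1];
            }
        }
        return null;
    }

    /** Range check in the style of the between validator: missing bounds mean "no filter". */
    static boolean between(String data, String dataField, String parameter,
                           String startField, String endField) {
        String start = getField(parameter, "\\|", startField);
        String end = getField(parameter, "\\|", endField);
        if (start == null || end == null) {
            return true;
        }
        String value = getField(data, "\\|", dataField);
        if (value == null) {
            return false;
        }
        int v = Integer.parseInt(value);
        return v >= Integer.parseInt(start) && v <= Integer.parseInt(end);
    }

    public static void main(String[] args) {
        String data = "age=25|city=Xiamen"; // made-up sample record
        System.out.println(between(data, "age", "startAge=20|endAge=30", "startAge", "endAge"));
        System.out.println(between(data, "age", "startAge=30|endAge=40", "startAge", "endAge"));
        System.out.println(between(data, "age", "city=Xiamen", "startAge", "endAge"));
    }
}
```

The delimiter is passed as "\\|" because String.split takes a regular expression, and an unescaped | would be read as alternation.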

With that, the utility classes needed so far are complete.